mirror of

https://github.com/invoke-ai/InvokeAI

synced 2024-08-30 20:32:17 +00:00

Merge branch 'main' into feat/nodes/freeu

This commit is contained in:

2

.github/workflows/pypi-release.yml

vendored

2

.github/workflows/pypi-release.yml

vendored

@ -28,7 +28,7 @@ jobs:

|

|||||||

run: twine check dist/*

|

run: twine check dist/*

|

||||||

|

|

||||||

- name: check PyPI versions

|

- name: check PyPI versions

|

||||||

if: github.ref == 'refs/heads/main' || github.ref == 'refs/heads/v2.3'

|

if: github.ref == 'refs/heads/main' || startsWith(github.ref, 'refs/heads/release/')

|

||||||

run: |

|

run: |

|

||||||

pip install --upgrade requests

|

pip install --upgrade requests

|

||||||

python -c "\

|

python -c "\

|

||||||

|

|||||||

@ -8,28 +8,42 @@ To download a node, simply download the `.py` node file from the link and add it

|

|||||||

|

|

||||||

To use a community workflow, download the the `.json` node graph file and load it into Invoke AI via the **Load Workflow** button in the Workflow Editor.

|

To use a community workflow, download the the `.json` node graph file and load it into Invoke AI via the **Load Workflow** button in the Workflow Editor.

|

||||||

|

|

||||||

--------------------------------

|

- Community Nodes

|

||||||

|

+ [Depth Map from Wavefront OBJ](#depth-map-from-wavefront-obj)

|

||||||

|

+ [Film Grain](#film-grain)

|

||||||

|

+ [Generative Grammar-Based Prompt Nodes](#generative-grammar-based-prompt-nodes)

|

||||||

|

+ [GPT2RandomPromptMaker](#gpt2randompromptmaker)

|

||||||

|

+ [Grid to Gif](#grid-to-gif)

|

||||||

|

+ [Halftone](#halftone)

|

||||||

|

+ [Ideal Size](#ideal-size)

|

||||||

|

+ [Image and Mask Composition Pack](#image-and-mask-composition-pack)

|

||||||

|

+ [Image to Character Art Image Nodes](#image-to-character-art-image-nodes)

|

||||||

|

+ [Image Picker](#image-picker)

|

||||||

|

+ [Load Video Frame](#load-video-frame)

|

||||||

|

+ [Make 3D](#make-3d)

|

||||||

|

+ [Oobabooga](#oobabooga)

|

||||||

|

+ [Prompt Tools](#prompt-tools)

|

||||||

|

+ [Retroize](#retroize)

|

||||||

|

+ [Size Stepper Nodes](#size-stepper-nodes)

|

||||||

|

+ [Text font to Image](#text-font-to-image)

|

||||||

|

+ [Thresholding](#thresholding)

|

||||||

|

+ [XY Image to Grid and Images to Grids nodes](#xy-image-to-grid-and-images-to-grids-nodes)

|

||||||

|

- [Example Node Template](#example-node-template)

|

||||||

|

- [Disclaimer](#disclaimer)

|

||||||

|

- [Help](#help)

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

### Make 3D

|

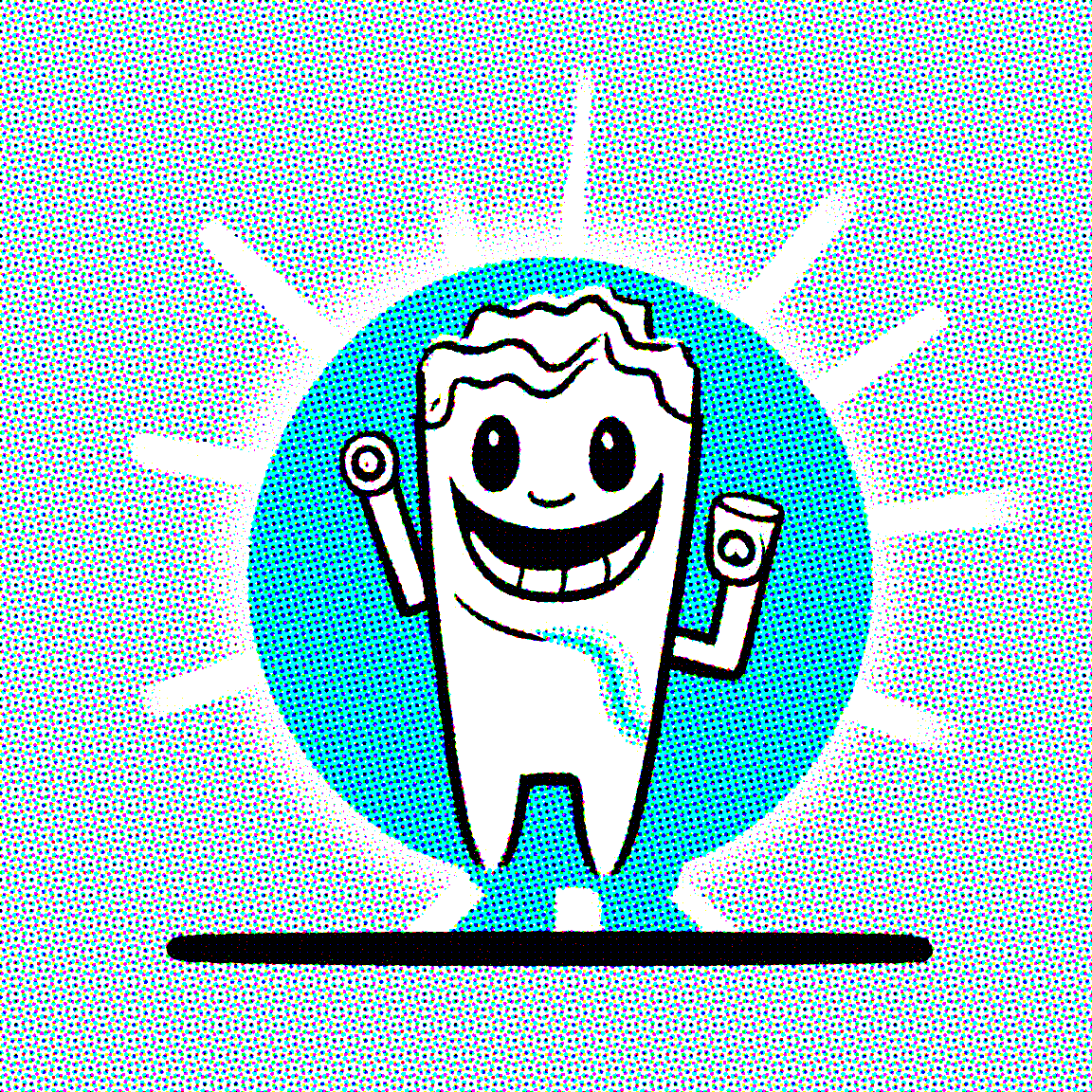

### Depth Map from Wavefront OBJ

|

||||||

|

|

||||||

**Description:** Create compelling 3D stereo images from 2D originals.

|

**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

|

||||||

|

|

||||||

**Node Link:** [https://gitlab.com/srcrr/shift3d/-/raw/main/make3d.py](https://gitlab.com/srcrr/shift3d)

|

To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.

|

||||||

|

|

||||||

**Example Node Graph:** https://gitlab.com/srcrr/shift3d/-/raw/main/example-workflow.json?ref_type=heads&inline=false

|

**Node Link:** https://github.com/dwringer/depth-from-obj-node

|

||||||

|

|

||||||

**Output Examples**

|

**Example Usage:**

|

||||||

|

</br><img src="https://raw.githubusercontent.com/dwringer/depth-from-obj-node/main/depth_from_obj_usage.jpg" width="500" />

|

||||||

{: style="height:512px;width:512px"}

|

|

||||||

{: style="height:512px;width:512px"}

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

### Ideal Size

|

|

||||||

|

|

||||||

**Description:** This node calculates an ideal image size for a first pass of a multi-pass upscaling. The aim is to avoid duplication that results from choosing a size larger than the model is capable of.

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/JPPhoto/ideal-size-node

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

### Film Grain

|

### Film Grain

|

||||||

@ -39,68 +53,19 @@ To use a community workflow, download the the `.json` node graph file and load i

|

|||||||

**Node Link:** https://github.com/JPPhoto/film-grain-node

|

**Node Link:** https://github.com/JPPhoto/film-grain-node

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

### Image Picker

|

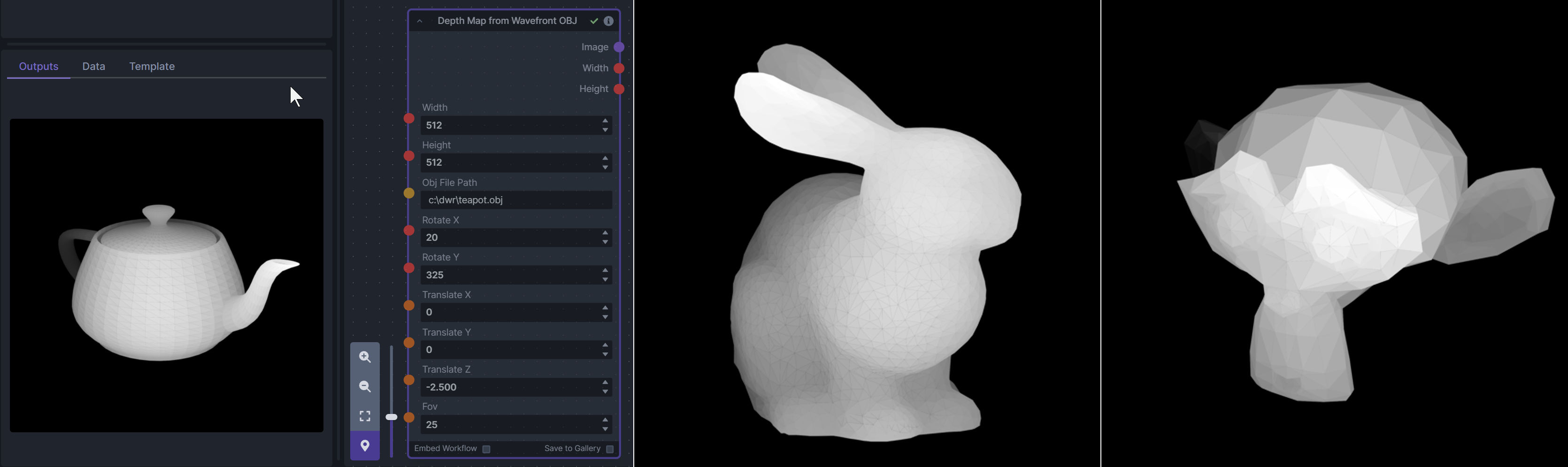

### Generative Grammar-Based Prompt Nodes

|

||||||

|

|

||||||

**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

|

**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no nonterminal terms remain in the string.

|

||||||

|

|

||||||

**Node Link:** https://github.com/JPPhoto/image-picker-node

|

This includes 3 Nodes:

|

||||||

|

- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

|

||||||

|

- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

|

||||||

|

- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.

|

||||||

|

|

||||||

--------------------------------

|

**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

|

||||||

### Thresholding

|

|

||||||

|

|

||||||

**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.

|

**Example Usage:**

|

||||||

|

</br><img src="https://raw.githubusercontent.com/dwringer/generative-grammar-prompt-nodes/main/lookuptables_usage.jpg" width="500" />

|

||||||

**Node Link:** https://github.com/JPPhoto/thresholding-node

|

|

||||||

|

|

||||||

**Examples**

|

|

||||||

|

|

||||||

Input:

|

|

||||||

|

|

||||||

{: style="height:512px;width:512px"}

|

|

||||||

|

|

||||||

Highlights/Midtones/Shadows:

|

|

||||||

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/727021c1-36ff-4ec8-90c8-105e00de986d" style="width: 30%" />

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0b721bfc-f051-404e-b905-2f16b824ddfe" style="width: 30%" />

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/04c1297f-1c88-42b6-a7df-dd090b976286" style="width: 30%" />

|

|

||||||

|

|

||||||

Highlights/Midtones/Shadows (with LUT blur enabled):

|

|

||||||

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/19aa718a-70c1-4668-8169-d68f4bd13771" style="width: 30%" />

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0a440e43-697f-4d17-82ee-f287467df0a5" style="width: 30%" />

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0701fd0f-2ca7-4fe2-8613-2b52547bafce" style="width: 30%" />

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

### Halftone

|

|

||||||

|

|

||||||

**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/JPPhoto/halftone-node

|

|

||||||

|

|

||||||

**Example**

|

|

||||||

|

|

||||||

Input:

|

|

||||||

|

|

||||||

{: style="height:512px;width:512px"}

|

|

||||||

|

|

||||||

Halftone Output:

|

|

||||||

|

|

||||||

{: style="height:512px;width:512px"}

|

|

||||||

|

|

||||||

CMYK Halftone Output:

|

|

||||||

|

|

||||||

{: style="height:512px;width:512px"}

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

### Retroize

|

|

||||||

|

|

||||||

**Description:** Retroize is a collection of nodes for InvokeAI to "Retroize" images. Any image can be given a fresh coat of retro paint with these nodes, either from your gallery or from within the graph itself. It includes nodes to pixelize, quantize, palettize, and ditherize images; as well as to retrieve palettes from existing images.

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/Ar7ific1al/invokeai-retroizeinode/

|

|

||||||

|

|

||||||

**Retroize Output Examples**

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

### GPT2RandomPromptMaker

|

### GPT2RandomPromptMaker

|

||||||

@ -113,76 +78,49 @@ CMYK Halftone Output:

|

|||||||

|

|

||||||

Generated Prompt: An enchanted weapon will be usable by any character regardless of their alignment.

|

Generated Prompt: An enchanted weapon will be usable by any character regardless of their alignment.

|

||||||

|

|

||||||

|

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/8496ba09-bcdd-4ff7-8076-ff213b6a1e4c" width="200" />

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

### Load Video Frame

|

### Grid to Gif

|

||||||

|

|

||||||

**Description:** This is a video frame image provider + indexer/video creation nodes for hooking up to iterators and ranges and ControlNets and such for invokeAI node experimentation. Think animation + ControlNet outputs.

|

**Description:** One node that turns a grid image into an image collection, one node that turns an image collection into a gif.

|

||||||

|

|

||||||

**Node Link:** https://github.com/helix4u/load_video_frame

|

**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

|

||||||

|

|

||||||

**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

|

**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

|

||||||

|

|

||||||

**Output Example:**

|

**Output Examples**

|

||||||

|

|

||||||

|

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/input.png" width="300" />

|

||||||

[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

|

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/output.gif" width="300" />

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

|

### Halftone

|

||||||

|

|

||||||

### Oobabooga

|

**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.

|

||||||

|

|

||||||

**Description:** asks a local LLM running in Oobabooga's Text-Generation-Webui to write a prompt based on the user input.

|

**Node Link:** https://github.com/JPPhoto/halftone-node

|

||||||

|

|

||||||

**Link:** https://github.com/sammyf/oobabooga-node

|

**Example**

|

||||||

|

|

||||||

|

Input:

|

||||||

|

|

||||||

**Example:**

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/fd5efb9f-4355-4409-a1c2-c1ca99e0cab4" width="300" />

|

||||||

|

|

||||||

"describe a new mystical creature in its natural environment"

|

Halftone Output:

|

||||||

|

|

||||||

*can return*

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/7e606f29-e68f-4d46-b3d5-97f799a4ec2f" width="300" />

|

||||||

|

|

||||||

"The mystical creature I am describing to you is called the "Glimmerwing". It is a majestic, iridescent being that inhabits the depths of the most enchanted forests and glimmering lakes. Its body is covered in shimmering scales that reflect every color of the rainbow, and it has delicate, translucent wings that sparkle like diamonds in the sunlight. The Glimmerwing's home is a crystal-clear lake, surrounded by towering trees with leaves that shimmer like jewels. In this serene environment, the Glimmerwing spends its days swimming gracefully through the water, chasing schools of glittering fish and playing with the gentle ripples of the lake's surface.

|

CMYK Halftone Output:

|

||||||

As the sun sets, the Glimmerwing perches on a branch of one of the trees, spreading its wings to catch the last rays of light. The creature's scales glow softly, casting a rainbow of colors across the forest floor. The Glimmerwing sings a haunting melody, its voice echoing through the stillness of the night air. Its song is said to have the power to heal the sick and bring peace to troubled souls. Those who are lucky enough to hear the Glimmerwing's song are forever changed by its beauty and grace."

|

|

||||||

|

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/c59c578f-db8e-4d66-8c66-2851752d75ea" width="300" />

|

||||||

|

|

||||||

**Requirement**

|

|

||||||

|

|

||||||

a Text-Generation-Webui instance (might work remotely too, but I never tried it) and obviously InvokeAI 3.x

|

|

||||||

|

|

||||||

**Note**

|

|

||||||

|

|

||||||

This node works best with SDXL models, especially as the style can be described independantly of the LLM's output.

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

### Depth Map from Wavefront OBJ

|

### Ideal Size

|

||||||

|

|

||||||

**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

|

**Description:** This node calculates an ideal image size for a first pass of a multi-pass upscaling. The aim is to avoid duplication that results from choosing a size larger than the model is capable of.

|

||||||

|

|

||||||

To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.

|

**Node Link:** https://github.com/JPPhoto/ideal-size-node

|

||||||

|

|

||||||

**Node Link:** https://github.com/dwringer/depth-from-obj-node

|

|

||||||

|

|

||||||

**Example Usage:**

|

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

### Generative Grammar-Based Prompt Nodes

|

|

||||||

|

|

||||||

**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no more nonterminal terms remain in the string.

|

|

||||||

|

|

||||||

This includes 3 Nodes:

|

|

||||||

- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

|

|

||||||

- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

|

|

||||||

- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

|

|

||||||

|

|

||||||

**Example Usage:**

|

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

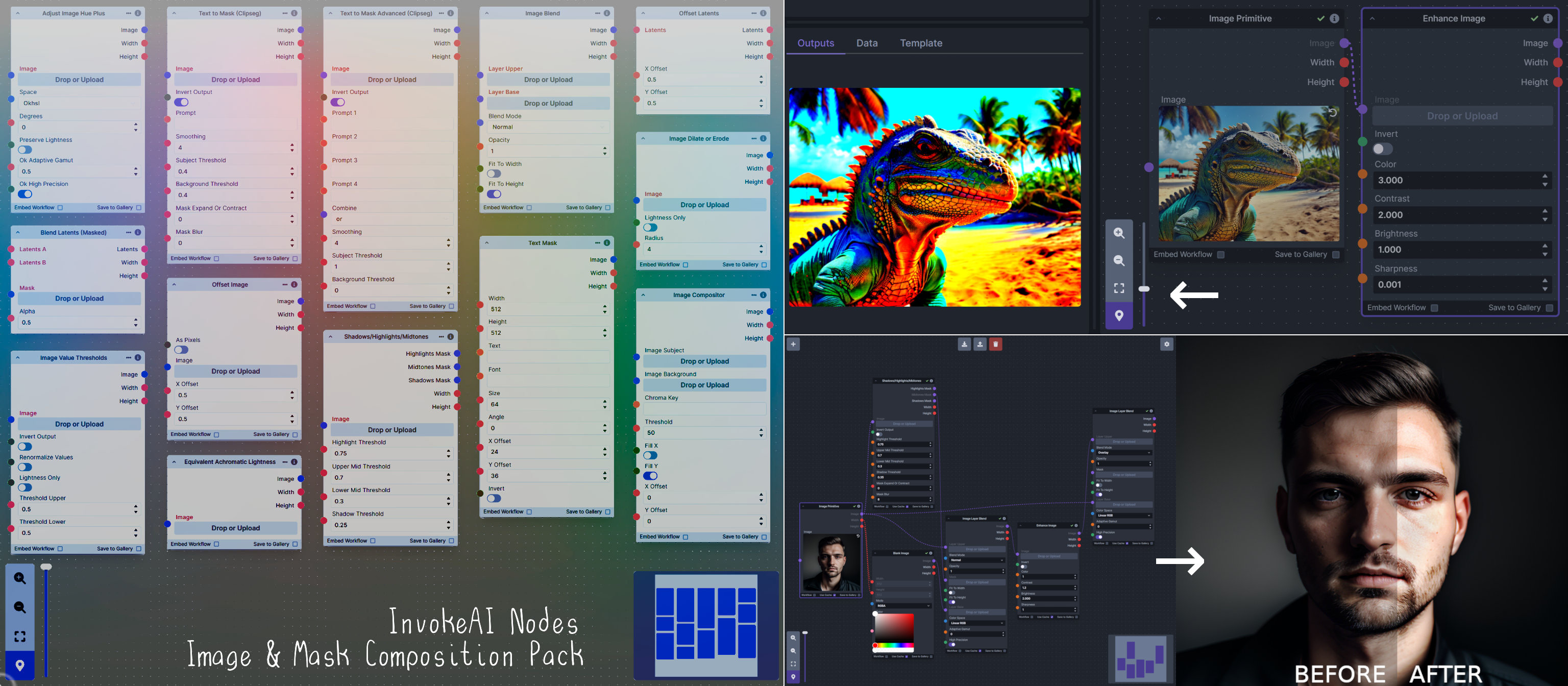

### Image and Mask Composition Pack

|

### Image and Mask Composition Pack

|

||||||

@ -209,44 +147,87 @@ This includes 15 Nodes:

|

|||||||

|

|

||||||

**Node Link:** https://github.com/dwringer/composition-nodes

|

**Node Link:** https://github.com/dwringer/composition-nodes

|

||||||

|

|

||||||

**Nodes and Output Examples:**

|

</br><img src="https://raw.githubusercontent.com/dwringer/composition-nodes/main/composition_pack_overview.jpg" width="500" />

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

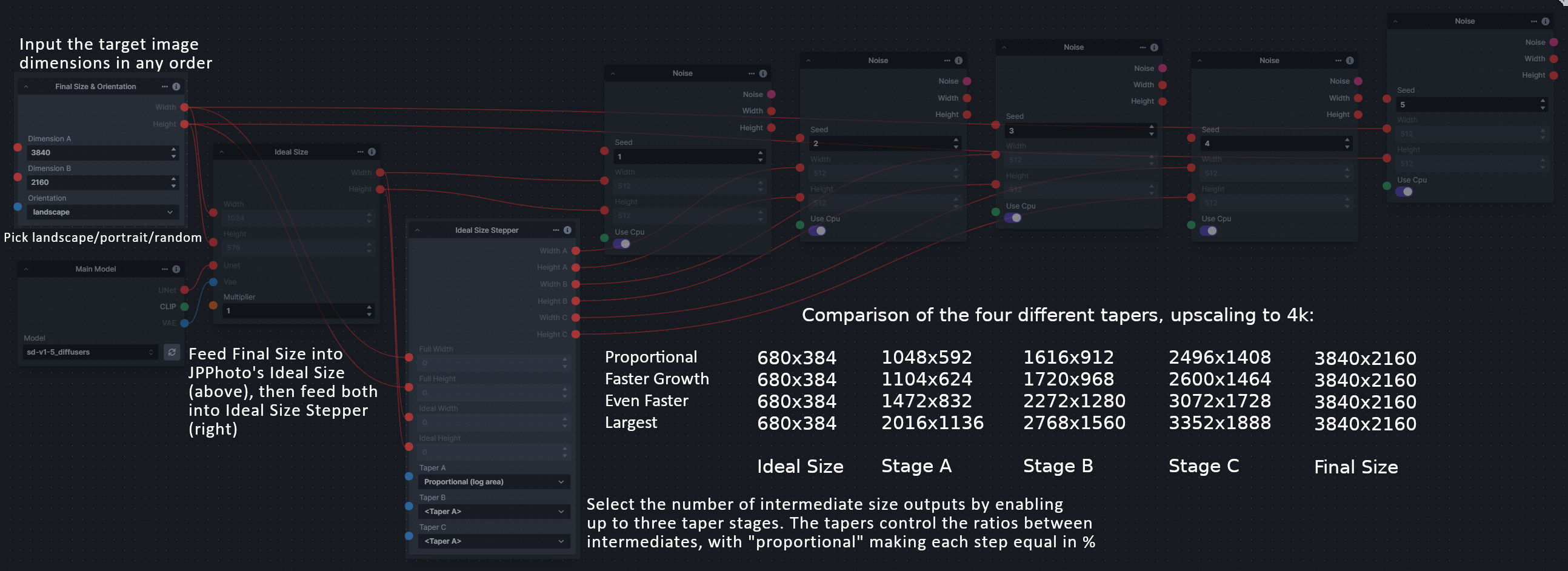

### Size Stepper Nodes

|

### Image to Character Art Image Nodes

|

||||||

|

|

||||||

**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

|

**Description:** Group of nodes to convert an input image into ascii/unicode art Image

|

||||||

|

|

||||||

A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.

|

**Node Link:** https://github.com/mickr777/imagetoasciiimage

|

||||||

|

|

||||||

**Node Link:** https://github.com/dwringer/size-stepper-nodes

|

|

||||||

|

|

||||||

**Example Usage:**

|

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

|

|

||||||

### Text font to Image

|

|

||||||

|

|

||||||

**Description:** text font to text image node for InvokeAI, download a font to use (or if in font cache uses it from there), the text is always resized to the image size, but can control that with padding, optional 2nd line

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/mickr777/textfontimage

|

|

||||||

|

|

||||||

**Output Examples**

|

**Output Examples**

|

||||||

|

|

||||||

|

<img src="https://user-images.githubusercontent.com/115216705/271817646-8e061fcc-9a2c-4fa9-bcc7-c0f7b01e9056.png" width="300" /><img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/3c4990eb-2f42-46b9-90f9-0088b939dc6a" width="300" /></br>

|

||||||

|

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/fee7f800-a4a8-41e2-a66b-c66e4343307e" width="300" />

|

||||||

Results after using the depth controlnet

|

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/1d9c1003-a45f-45c2-aac7-46470bb89330" width="300" />

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

|

|

||||||

|

### Image Picker

|

||||||

|

|

||||||

|

**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

|

||||||

|

|

||||||

|

**Node Link:** https://github.com/JPPhoto/image-picker-node

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

|

### Load Video Frame

|

||||||

|

|

||||||

|

**Description:** This is a video frame image provider + indexer/video creation nodes for hooking up to iterators and ranges and ControlNets and such for invokeAI node experimentation. Think animation + ControlNet outputs.

|

||||||

|

|

||||||

|

**Node Link:** https://github.com/helix4u/load_video_frame

|

||||||

|

|

||||||

|

**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

|

||||||

|

|

||||||

|

**Output Example:**

|

||||||

|

|

||||||

|

<img src="https://github.com/helix4u/load_video_frame/blob/main/testmp4_embed_converted.gif" width="500" />

|

||||||

|

[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

|

### Make 3D

|

||||||

|

|

||||||

|

**Description:** Create compelling 3D stereo images from 2D originals.

|

||||||

|

|

||||||

|

**Node Link:** [https://gitlab.com/srcrr/shift3d/-/raw/main/make3d.py](https://gitlab.com/srcrr/shift3d)

|

||||||

|

|

||||||

|

**Example Node Graph:** https://gitlab.com/srcrr/shift3d/-/raw/main/example-workflow.json?ref_type=heads&inline=false

|

||||||

|

|

||||||

|

**Output Examples**

|

||||||

|

|

||||||

|

<img src="https://gitlab.com/srcrr/shift3d/-/raw/main/example-1.png" width="300" />

|

||||||

|

<img src="https://gitlab.com/srcrr/shift3d/-/raw/main/example-2.png" width="300" />

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

|

### Oobabooga

|

||||||

|

|

||||||

|

**Description:** asks a local LLM running in Oobabooga's Text-Generation-Webui to write a prompt based on the user input.

|

||||||

|

|

||||||

|

**Link:** https://github.com/sammyf/oobabooga-node

|

||||||

|

|

||||||

|

**Example:**

|

||||||

|

|

||||||

|

"describe a new mystical creature in its natural environment"

|

||||||

|

|

||||||

|

*can return*

|

||||||

|

|

||||||

|

"The mystical creature I am describing to you is called the "Glimmerwing". It is a majestic, iridescent being that inhabits the depths of the most enchanted forests and glimmering lakes. Its body is covered in shimmering scales that reflect every color of the rainbow, and it has delicate, translucent wings that sparkle like diamonds in the sunlight. The Glimmerwing's home is a crystal-clear lake, surrounded by towering trees with leaves that shimmer like jewels. In this serene environment, the Glimmerwing spends its days swimming gracefully through the water, chasing schools of glittering fish and playing with the gentle ripples of the lake's surface.

|

||||||

|

As the sun sets, the Glimmerwing perches on a branch of one of the trees, spreading its wings to catch the last rays of light. The creature's scales glow softly, casting a rainbow of colors across the forest floor. The Glimmerwing sings a haunting melody, its voice echoing through the stillness of the night air. Its song is said to have the power to heal the sick and bring peace to troubled souls. Those who are lucky enough to hear the Glimmerwing's song are forever changed by its beauty and grace."

|

||||||

|

|

||||||

|

<img src="https://github.com/sammyf/oobabooga-node/assets/42468608/cecdd820-93dd-4c35-abbf-607e001fb2ed" width="300" />

|

||||||

|

|

||||||

|

**Requirement**

|

||||||

|

|

||||||

|

a Text-Generation-Webui instance (might work remotely too, but I never tried it) and obviously InvokeAI 3.x

|

||||||

|

|

||||||

|

**Note**

|

||||||

|

|

||||||

|

This node works best with SDXL models, especially as the style can be described independently of the LLM's output.

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

### Prompt Tools

|

### Prompt Tools

|

||||||

|

|

||||||

**Description:** A set of InvokeAI nodes that add general prompt manipulation tools. These where written to accompany the PromptsFromFile node and other prompt generation nodes.

|

**Description:** A set of InvokeAI nodes that add general prompt manipulation tools. These were written to accompany the PromptsFromFile node and other prompt generation nodes.

|

||||||

|

|

||||||

1. PromptJoin - Joins to prompts into one.

|

1. PromptJoin - Joins to prompts into one.

|

||||||

2. PromptReplace - performs a search and replace on a prompt. With the option of using regex.

|

2. PromptReplace - performs a search and replace on a prompt. With the option of using regex.

|

||||||

@ -263,51 +244,83 @@ See full docs here: https://github.com/skunkworxdark/Prompt-tools-nodes/edit/mai

|

|||||||

**Node Link:** https://github.com/skunkworxdark/Prompt-tools-nodes

|

**Node Link:** https://github.com/skunkworxdark/Prompt-tools-nodes

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

|

### Retroize

|

||||||

|

|

||||||

|

**Description:** Retroize is a collection of nodes for InvokeAI to "Retroize" images. Any image can be given a fresh coat of retro paint with these nodes, either from your gallery or from within the graph itself. It includes nodes to pixelize, quantize, palettize, and ditherize images; as well as to retrieve palettes from existing images.

|

||||||

|

|

||||||

|

**Node Link:** https://github.com/Ar7ific1al/invokeai-retroizeinode/

|

||||||

|

|

||||||

|

**Retroize Output Examples**

|

||||||

|

|

||||||

|

<img src="https://github.com/Ar7ific1al/InvokeAI_nodes_retroize/assets/2306586/de8b4fa6-324c-4c2d-b36c-297600c73974" width="500" />

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

|

### Size Stepper Nodes

|

||||||

|

|

||||||

|

**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

|

||||||

|

|

||||||

|

A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.

|

||||||

|

|

||||||

|

**Node Link:** https://github.com/dwringer/size-stepper-nodes

|

||||||

|

|

||||||

|

**Example Usage:**

|

||||||

|

</br><img src="https://raw.githubusercontent.com/dwringer/size-stepper-nodes/main/size_nodes_usage.jpg" width="500" />

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

|

### Text font to Image

|

||||||

|

|

||||||

|

**Description:** text font to text image node for InvokeAI, download a font to use (or if in font cache uses it from there), the text is always resized to the image size, but can control that with padding, optional 2nd line

|

||||||

|

|

||||||

|

**Node Link:** https://github.com/mickr777/textfontimage

|

||||||

|

|

||||||

|

**Output Examples**

|

||||||

|

|

||||||

|

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/c21b0af3-d9c6-4c16-9152-846a23effd36" width="300" />

|

||||||

|

|

||||||

|

Results after using the depth controlnet

|

||||||

|

|

||||||

|

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/915f1a53-968e-43eb-aa61-07cd8f1a733a" width="300" />

|

||||||

|

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/821ef89e-8a60-44f5-b94e-471a9d8690cc" width="300" />

|

||||||

|

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/2befcb6d-49f4-4bfd-b5fc-1fee19274f89" width="300" />

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

|

### Thresholding

|

||||||

|

|

||||||

|

**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.

|

||||||

|

|

||||||

|

**Node Link:** https://github.com/JPPhoto/thresholding-node

|

||||||

|

|

||||||

|

**Examples**

|

||||||

|

|

||||||

|

Input:

|

||||||

|

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/c88ada13-fb3d-484c-a4fe-947b44712632" width="300" />

|

||||||

|

|

||||||

|

Highlights/Midtones/Shadows:

|

||||||

|

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/727021c1-36ff-4ec8-90c8-105e00de986d" width="300" />

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0b721bfc-f051-404e-b905-2f16b824ddfe" width="300" />

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/04c1297f-1c88-42b6-a7df-dd090b976286" width="300" />

|

||||||

|

|

||||||

|

Highlights/Midtones/Shadows (with LUT blur enabled):

|

||||||

|

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/19aa718a-70c1-4668-8169-d68f4bd13771" width="300" />

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0a440e43-697f-4d17-82ee-f287467df0a5" width="300" />

|

||||||

|

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0701fd0f-2ca7-4fe2-8613-2b52547bafce" width="300" />

|

||||||

|

|

||||||

|

--------------------------------

|

||||||

### XY Image to Grid and Images to Grids nodes

|

### XY Image to Grid and Images to Grids nodes

|

||||||

|

|

||||||

**Description:** Image to grid nodes and supporting tools.

|

**Description:** Image to grid nodes and supporting tools.

|

||||||

|

|

||||||

1. "Images To Grids" node - Takes a collection of images and creates a grid(s) of images. If there are more images than the size of a single grid then mutilple grids will be created until it runs out of images.

|

1. "Images To Grids" node - Takes a collection of images and creates a grid(s) of images. If there are more images than the size of a single grid then multiple grids will be created until it runs out of images.

|

||||||

2. "XYImage To Grid" node - Converts a collection of XYImages into a labeled Grid of images. The XYImages collection has to be built using the supporoting nodes. See example node setups for more details.

|

2. "XYImage To Grid" node - Converts a collection of XYImages into a labeled Grid of images. The XYImages collection has to be built using the supporting nodes. See example node setups for more details.

|

||||||

|

|

||||||

|

|

||||||

See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/README.md

|

See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/README.md

|

||||||

|

|

||||||

**Node Link:** https://github.com/skunkworxdark/XYGrid_nodes

|

**Node Link:** https://github.com/skunkworxdark/XYGrid_nodes

|

||||||

|

|

||||||

--------------------------------

|

--------------------------------

|

||||||

|

|

||||||

### Image to Character Art Image Node's

|

|

||||||

|

|

||||||

**Description:** Group of nodes to convert an input image into ascii/unicode art Image

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/mickr777/imagetoasciiimage

|

|

||||||

|

|

||||||

**Output Examples**

|

|

||||||

|

|

||||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/115216705/8e061fcc-9a2c-4fa9-bcc7-c0f7b01e9056" width="300" />

|

|

||||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/3c4990eb-2f42-46b9-90f9-0088b939dc6a" width="300" /></br>

|

|

||||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/fee7f800-a4a8-41e2-a66b-c66e4343307e" width="300" />

|

|

||||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/1d9c1003-a45f-45c2-aac7-46470bb89330" width="300" />

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

|

|

||||||

### Grid to Gif

|

|

||||||

|

|

||||||

**Description:** One node that turns a grid image into an image colletion, one node that turns an image collection into a gif

|

|

||||||

|

|

||||||

**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

|

|

||||||

|

|

||||||

**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

|

|

||||||

|

|

||||||

**Output Examples**

|

|

||||||

|

|

||||||

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/input.png" width="300" />

|

|

||||||

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/output.gif" width="300" />

|

|

||||||

|

|

||||||

--------------------------------

|

|

||||||

|

|

||||||

### Example Node Template

|

### Example Node Template

|

||||||

|

|

||||||

**Description:** This node allows you to do super cool things with InvokeAI.

|

**Description:** This node allows you to do super cool things with InvokeAI.

|

||||||

@ -318,7 +331,7 @@ See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/READ

|

|||||||

|

|

||||||

**Output Examples**

|

**Output Examples**

|

||||||

|

|

||||||

{: style="height:115px;width:240px"}

|

</br><img src="https://invoke-ai.github.io/InvokeAI/assets/invoke_ai_banner.png" width="500" />

|

||||||

|

|

||||||

|

|

||||||

## Disclaimer

|

## Disclaimer

|

||||||

|

|||||||

@ -1,35 +1,35 @@

|

|||||||

# Copyright (c) 2022 Kyle Schouviller (https://github.com/kyle0654)

|

# Copyright (c) 2022 Kyle Schouviller (https://github.com/kyle0654)

|

||||||

|

|

||||||

import sqlite3

|

|

||||||

from logging import Logger

|

from logging import Logger

|

||||||

|

|

||||||

from invokeai.app.services.board_image_record_storage import SqliteBoardImageRecordStorage

|

|

||||||

from invokeai.app.services.board_images import BoardImagesService, BoardImagesServiceDependencies

|

|

||||||

from invokeai.app.services.board_record_storage import SqliteBoardRecordStorage

|

|

||||||

from invokeai.app.services.boards import BoardService, BoardServiceDependencies

|

|

||||||

from invokeai.app.services.config import InvokeAIAppConfig

|

|

||||||

from invokeai.app.services.image_record_storage import SqliteImageRecordStorage

|

|

||||||

from invokeai.app.services.images import ImageService, ImageServiceDependencies

|

|

||||||

from invokeai.app.services.invocation_cache.invocation_cache_memory import MemoryInvocationCache

|

|

||||||

from invokeai.app.services.resource_name import SimpleNameService

|

|

||||||

from invokeai.app.services.session_processor.session_processor_default import DefaultSessionProcessor

|

|

||||||

from invokeai.app.services.session_queue.session_queue_sqlite import SqliteSessionQueue

|

|

||||||

from invokeai.app.services.urls import LocalUrlService

|

|

||||||

from invokeai.backend.util.logging import InvokeAILogger

|

from invokeai.backend.util.logging import InvokeAILogger

|

||||||

from invokeai.version.invokeai_version import __version__

|

from invokeai.version.invokeai_version import __version__

|

||||||

|

|

||||||

from ..services.default_graphs import create_system_graphs

|

from ..services.board_image_records.board_image_records_sqlite import SqliteBoardImageRecordStorage

|

||||||

from ..services.graph import GraphExecutionState, LibraryGraph

|

from ..services.board_images.board_images_default import BoardImagesService

|

||||||

from ..services.image_file_storage import DiskImageFileStorage

|

from ..services.board_records.board_records_sqlite import SqliteBoardRecordStorage

|

||||||

from ..services.invocation_queue import MemoryInvocationQueue

|

from ..services.boards.boards_default import BoardService

|

||||||

|

from ..services.config import InvokeAIAppConfig

|

||||||

|

from ..services.image_files.image_files_disk import DiskImageFileStorage

|

||||||

|

from ..services.image_records.image_records_sqlite import SqliteImageRecordStorage

|

||||||

|

from ..services.images.images_default import ImageService

|

||||||

|

from ..services.invocation_cache.invocation_cache_memory import MemoryInvocationCache

|

||||||

|

from ..services.invocation_processor.invocation_processor_default import DefaultInvocationProcessor

|

||||||

|

from ..services.invocation_queue.invocation_queue_memory import MemoryInvocationQueue

|

||||||

from ..services.invocation_services import InvocationServices

|

from ..services.invocation_services import InvocationServices

|

||||||

from ..services.invocation_stats import InvocationStatsService

|

from ..services.invocation_stats.invocation_stats_default import InvocationStatsService

|

||||||

from ..services.invoker import Invoker

|

from ..services.invoker import Invoker

|

||||||

from ..services.latent_storage import DiskLatentsStorage, ForwardCacheLatentsStorage

|

from ..services.item_storage.item_storage_sqlite import SqliteItemStorage

|

||||||

from ..services.model_manager_service import ModelManagerService

|

from ..services.latents_storage.latents_storage_disk import DiskLatentsStorage

|

||||||

from ..services.processor import DefaultInvocationProcessor

|

from ..services.latents_storage.latents_storage_forward_cache import ForwardCacheLatentsStorage

|

||||||

from ..services.sqlite import SqliteItemStorage

|

from ..services.model_manager.model_manager_default import ModelManagerService

|

||||||

from ..services.thread import lock

|

from ..services.names.names_default import SimpleNameService

|

||||||

|

from ..services.session_processor.session_processor_default import DefaultSessionProcessor

|

||||||

|

from ..services.session_queue.session_queue_sqlite import SqliteSessionQueue

|

||||||

|

from ..services.shared.default_graphs import create_system_graphs

|

||||||

|

from ..services.shared.graph import GraphExecutionState, LibraryGraph

|

||||||

|

from ..services.shared.sqlite import SqliteDatabase

|

||||||

|

from ..services.urls.urls_default import LocalUrlService

|

||||||

from .events import FastAPIEventService

|

from .events import FastAPIEventService

|

||||||

|

|

||||||

|

|

||||||

@ -63,100 +63,64 @@ class ApiDependencies:

|

|||||||

logger.info(f"Root directory = {str(config.root_path)}")

|

logger.info(f"Root directory = {str(config.root_path)}")

|

||||||

logger.debug(f"Internet connectivity is {config.internet_available}")

|

logger.debug(f"Internet connectivity is {config.internet_available}")

|

||||||

|

|

||||||

events = FastAPIEventService(event_handler_id)

|

|

||||||

|

|

||||||

output_folder = config.output_path

|

output_folder = config.output_path

|

||||||

|

|

||||||

# TODO: build a file/path manager?

|

db = SqliteDatabase(config, logger)

|

||||||

if config.use_memory_db:

|

|

||||||

db_location = ":memory:"

|

|

||||||

else:

|

|

||||||

db_path = config.db_path

|

|

||||||

db_path.parent.mkdir(parents=True, exist_ok=True)

|

|

||||||

db_location = str(db_path)

|

|

||||||

|

|

||||||

logger.info(f"Using database at {db_location}")

|

configuration = config

|

||||||

db_conn = sqlite3.connect(db_location, check_same_thread=False) # TODO: figure out a better threading solution

|

logger = logger

|

||||||

|

|

||||||

if config.log_sql:

|

board_image_records = SqliteBoardImageRecordStorage(db=db)

|

||||||

db_conn.set_trace_callback(print)

|

board_images = BoardImagesService()

|

||||||

db_conn.execute("PRAGMA foreign_keys = ON;")

|

board_records = SqliteBoardRecordStorage(db=db)

|

||||||

|

boards = BoardService()

|

||||||

graph_execution_manager = SqliteItemStorage[GraphExecutionState](

|

events = FastAPIEventService(event_handler_id)

|

||||||

conn=db_conn, table_name="graph_executions", lock=lock

|

graph_execution_manager = SqliteItemStorage[GraphExecutionState](db=db, table_name="graph_executions")

|

||||||

)

|

graph_library = SqliteItemStorage[LibraryGraph](db=db, table_name="graphs")

|

||||||

|

image_files = DiskImageFileStorage(f"{output_folder}/images")

|

||||||

urls = LocalUrlService()

|

image_records = SqliteImageRecordStorage(db=db)

|

||||||

image_record_storage = SqliteImageRecordStorage(conn=db_conn, lock=lock)

|

images = ImageService()

|

||||||

image_file_storage = DiskImageFileStorage(f"{output_folder}/images")

|

invocation_cache = MemoryInvocationCache(max_cache_size=config.node_cache_size)

|

||||||

names = SimpleNameService()

|

|

||||||

latents = ForwardCacheLatentsStorage(DiskLatentsStorage(f"{output_folder}/latents"))

|

latents = ForwardCacheLatentsStorage(DiskLatentsStorage(f"{output_folder}/latents"))

|

||||||

|

model_manager = ModelManagerService(config, logger)

|

||||||

board_record_storage = SqliteBoardRecordStorage(conn=db_conn, lock=lock)

|

names = SimpleNameService()

|

||||||

board_image_record_storage = SqliteBoardImageRecordStorage(conn=db_conn, lock=lock)

|

performance_statistics = InvocationStatsService()

|

||||||

|

processor = DefaultInvocationProcessor()

|

||||||

boards = BoardService(

|

queue = MemoryInvocationQueue()

|

||||||

services=BoardServiceDependencies(

|

session_processor = DefaultSessionProcessor()

|

||||||

board_image_record_storage=board_image_record_storage,

|

session_queue = SqliteSessionQueue(db=db)

|

||||||

board_record_storage=board_record_storage,

|

urls = LocalUrlService()

|

||||||

image_record_storage=image_record_storage,

|

|

||||||

url=urls,

|

|

||||||

logger=logger,

|

|

||||||

)

|

|

||||||

)

|

|

||||||

|

|

||||||

board_images = BoardImagesService(

|

|

||||||

services=BoardImagesServiceDependencies(

|

|

||||||

board_image_record_storage=board_image_record_storage,

|

|

||||||

board_record_storage=board_record_storage,

|

|

||||||

image_record_storage=image_record_storage,

|

|

||||||

url=urls,

|

|

||||||

logger=logger,

|

|

||||||

)

|

|

||||||

)

|

|

||||||

|

|

||||||

images = ImageService(

|

|

||||||

services=ImageServiceDependencies(

|

|

||||||

board_image_record_storage=board_image_record_storage,

|

|

||||||

image_record_storage=image_record_storage,

|

|

||||||

image_file_storage=image_file_storage,

|

|

||||||

url=urls,

|

|

||||||

logger=logger,

|

|

||||||

names=names,

|

|

||||||

graph_execution_manager=graph_execution_manager,

|

|

||||||

)

|

|

||||||

)

|

|

||||||

|

|

||||||

services = InvocationServices(

|

services = InvocationServices(

|

||||||

model_manager=ModelManagerService(config, logger),

|

board_image_records=board_image_records,

|

||||||

events=events,

|

|

||||||

latents=latents,

|

|

||||||

images=images,

|

|

||||||

boards=boards,

|

|

||||||

board_images=board_images,

|

board_images=board_images,

|

||||||

queue=MemoryInvocationQueue(),

|

board_records=board_records,

|

||||||

graph_library=SqliteItemStorage[LibraryGraph](conn=db_conn, lock=lock, table_name="graphs"),

|

boards=boards,

|

||||||

|

configuration=configuration,

|

||||||

|

events=events,

|

||||||

graph_execution_manager=graph_execution_manager,

|

graph_execution_manager=graph_execution_manager,

|

||||||

processor=DefaultInvocationProcessor(),

|

graph_library=graph_library,

|

||||||

configuration=config,

|

image_files=image_files,

|

||||||

performance_statistics=InvocationStatsService(graph_execution_manager),

|

image_records=image_records,

|

||||||

|

images=images,

|

||||||

|

invocation_cache=invocation_cache,

|

||||||

|

latents=latents,

|

||||||

logger=logger,

|

logger=logger,

|

||||||

session_queue=SqliteSessionQueue(conn=db_conn, lock=lock),

|

model_manager=model_manager,

|

||||||

session_processor=DefaultSessionProcessor(),

|

names=names,

|

||||||

invocation_cache=MemoryInvocationCache(max_cache_size=config.node_cache_size),

|

performance_statistics=performance_statistics,

|

||||||

|

processor=processor,

|

||||||

|

queue=queue,

|

||||||

|

session_processor=session_processor,

|

||||||

|

session_queue=session_queue,

|

||||||

|

urls=urls,

|

||||||

)

|

)

|

||||||

|

|

||||||

create_system_graphs(services.graph_library)

|

create_system_graphs(services.graph_library)

|

||||||

|

|

||||||

ApiDependencies.invoker = Invoker(services)

|

ApiDependencies.invoker = Invoker(services)

|

||||||

|

|

||||||

try:

|

db.clean()

|

||||||

lock.acquire()

|

|

||||||

db_conn.execute("VACUUM;")

|

|

||||||

db_conn.commit()

|

|

||||||

logger.info("Cleaned database")

|

|

||||||

finally:

|

|

||||||

lock.release()

|

|

||||||

|

|

||||||

@staticmethod

|

@staticmethod

|

||||||

def shutdown():

|

def shutdown():

|

||||||

|

|||||||

@ -7,7 +7,7 @@ from typing import Any

|

|||||||

|

|

||||||

from fastapi_events.dispatcher import dispatch

|

from fastapi_events.dispatcher import dispatch

|

||||||

|

|

||||||

from ..services.events import EventServiceBase

|

from ..services.events.events_base import EventServiceBase

|

||||||

|

|

||||||

|

|

||||||

class FastAPIEventService(EventServiceBase):

|

class FastAPIEventService(EventServiceBase):

|

||||||

|

|||||||

@ -4,9 +4,9 @@ from fastapi import Body, HTTPException, Path, Query

|

|||||||

from fastapi.routing import APIRouter

|

from fastapi.routing import APIRouter

|

||||||

from pydantic import BaseModel, Field

|

from pydantic import BaseModel, Field

|

||||||

|

|

||||||

from invokeai.app.services.board_record_storage import BoardChanges

|

from invokeai.app.services.board_records.board_records_common import BoardChanges

|

||||||

from invokeai.app.services.image_record_storage import OffsetPaginatedResults

|

from invokeai.app.services.boards.boards_common import BoardDTO

|

||||||

from invokeai.app.services.models.board_record import BoardDTO

|

from invokeai.app.services.shared.pagination import OffsetPaginatedResults

|

||||||

|

|

||||||

from ..dependencies import ApiDependencies

|

from ..dependencies import ApiDependencies

|

||||||

|

|

||||||

|

|||||||

@ -8,9 +8,9 @@ from PIL import Image

|

|||||||

from pydantic import BaseModel, Field

|

from pydantic import BaseModel, Field

|

||||||

|

|

||||||

from invokeai.app.invocations.metadata import ImageMetadata

|

from invokeai.app.invocations.metadata import ImageMetadata

|

||||||

from invokeai.app.models.image import ImageCategory, ResourceOrigin

|

from invokeai.app.services.image_records.image_records_common import ImageCategory, ImageRecordChanges, ResourceOrigin

|

||||||

from invokeai.app.services.image_record_storage import OffsetPaginatedResults

|

from invokeai.app.services.images.images_common import ImageDTO, ImageUrlsDTO

|

||||||

from invokeai.app.services.models.image_record import ImageDTO, ImageRecordChanges, ImageUrlsDTO

|

from invokeai.app.services.shared.pagination import OffsetPaginatedResults

|

||||||

|

|

||||||

from ..dependencies import ApiDependencies

|

from ..dependencies import ApiDependencies

|

||||||

|

|

||||||

@ -42,7 +42,7 @@ async def upload_image(

|

|||||||

crop_visible: Optional[bool] = Query(default=False, description="Whether to crop the image"),

|

crop_visible: Optional[bool] = Query(default=False, description="Whether to crop the image"),

|

||||||

) -> ImageDTO:

|

) -> ImageDTO:

|

||||||

"""Uploads an image"""

|

"""Uploads an image"""

|

||||||

if not file.content_type.startswith("image"):

|

if not file.content_type or not file.content_type.startswith("image"):

|

||||||

raise HTTPException(status_code=415, detail="Not an image")

|

raise HTTPException(status_code=415, detail="Not an image")

|

||||||

|

|

||||||

contents = await file.read()

|

contents = await file.read()

|

||||||

@ -322,3 +322,20 @@ async def unstar_images_in_list(

|

|||||||

return ImagesUpdatedFromListResult(updated_image_names=updated_image_names)

|

return ImagesUpdatedFromListResult(updated_image_names=updated_image_names)

|

||||||

except Exception:

|

except Exception:

|

||||||

raise HTTPException(status_code=500, detail="Failed to unstar images")

|

raise HTTPException(status_code=500, detail="Failed to unstar images")

|

||||||

|

|

||||||

|

|

||||||

|

class ImagesDownloaded(BaseModel):

|

||||||

|

response: Optional[str] = Field(

|

||||||

|

description="If defined, the message to display to the user when images begin downloading"

|

||||||

|

)

|

||||||

|

|

||||||

|

|

||||||

|

@images_router.post("/download", operation_id="download_images_from_list", response_model=ImagesDownloaded)

|

||||||

|

async def download_images_from_list(

|

||||||

|

image_names: list[str] = Body(description="The list of names of images to download", embed=True),

|

||||||

|

board_id: Optional[str] = Body(

|

||||||

|

default=None, description="The board from which image should be downloaded from", embed=True

|

||||||

|

),

|

||||||

|

) -> ImagesDownloaded:

|

||||||

|

# return ImagesDownloaded(response="Your images are downloading")

|

||||||

|

raise HTTPException(status_code=501, detail="Endpoint is not yet implemented")

|

||||||

|

|||||||

@ -2,11 +2,11 @@

|

|||||||

|

|

||||||

|

|

||||||

import pathlib

|

import pathlib

|

||||||

from typing import List, Literal, Optional, Union

|

from typing import Annotated, List, Literal, Optional, Union

|

||||||

|

|

||||||

from fastapi import Body, Path, Query, Response

|

from fastapi import Body, Path, Query, Response

|

||||||

from fastapi.routing import APIRouter

|

from fastapi.routing import APIRouter

|

||||||

from pydantic import BaseModel, parse_obj_as

|

from pydantic import BaseModel, ConfigDict, Field, TypeAdapter

|

||||||

from starlette.exceptions import HTTPException

|

from starlette.exceptions import HTTPException

|

||||||

|

|

||||||

from invokeai.backend import BaseModelType, ModelType

|

from invokeai.backend import BaseModelType, ModelType

|

||||||

@ -23,8 +23,14 @@ from ..dependencies import ApiDependencies

|

|||||||

models_router = APIRouter(prefix="/v1/models", tags=["models"])

|

models_router = APIRouter(prefix="/v1/models", tags=["models"])

|

||||||

|

|

||||||

UpdateModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

UpdateModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

||||||

|

update_models_response_adapter = TypeAdapter(UpdateModelResponse)

|

||||||

|

|

||||||

ImportModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

ImportModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

||||||

|

import_models_response_adapter = TypeAdapter(ImportModelResponse)

|

||||||

|

|

||||||

ConvertModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

ConvertModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

||||||

|

convert_models_response_adapter = TypeAdapter(ConvertModelResponse)

|

||||||

|

|

||||||

MergeModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

MergeModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

||||||

ImportModelAttributes = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

ImportModelAttributes = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

||||||

|

|

||||||

@ -32,6 +38,11 @@ ImportModelAttributes = Union[tuple(OPENAPI_MODEL_CONFIGS)]

|

|||||||

class ModelsList(BaseModel):

|

class ModelsList(BaseModel):

|

||||||

models: list[Union[tuple(OPENAPI_MODEL_CONFIGS)]]

|

models: list[Union[tuple(OPENAPI_MODEL_CONFIGS)]]

|

||||||

|

|

||||||

|

model_config = ConfigDict(use_enum_values=True)

|

||||||

|

|

||||||

|

|

||||||

|

models_list_adapter = TypeAdapter(ModelsList)

|

||||||

|

|

||||||

|

|

||||||

@models_router.get(

|

@models_router.get(

|

||||||

"/",

|

"/",

|

||||||

@ -49,7 +60,7 @@ async def list_models(

|

|||||||

models_raw.extend(ApiDependencies.invoker.services.model_manager.list_models(base_model, model_type))

|

models_raw.extend(ApiDependencies.invoker.services.model_manager.list_models(base_model, model_type))

|

||||||

else:

|

else:

|

||||||

models_raw = ApiDependencies.invoker.services.model_manager.list_models(None, model_type)

|

models_raw = ApiDependencies.invoker.services.model_manager.list_models(None, model_type)

|

||||||

models = parse_obj_as(ModelsList, {"models": models_raw})

|

models = models_list_adapter.validate_python({"models": models_raw})

|

||||||

return models

|

return models

|

||||||

|

|

||||||

|

|

||||||

@ -105,11 +116,14 @@ async def update_model(

|

|||||||

info.path = new_info.get("path")

|

info.path = new_info.get("path")

|

||||||

|

|

||||||

# replace empty string values with None/null to avoid phenomenon of vae: ''

|

# replace empty string values with None/null to avoid phenomenon of vae: ''

|

||||||

info_dict = info.dict()

|

info_dict = info.model_dump()

|

||||||

info_dict = {x: info_dict[x] if info_dict[x] else None for x in info_dict.keys()}

|

info_dict = {x: info_dict[x] if info_dict[x] else None for x in info_dict.keys()}

|

||||||

|

|

||||||

ApiDependencies.invoker.services.model_manager.update_model(

|

ApiDependencies.invoker.services.model_manager.update_model(

|

||||||

model_name=model_name, base_model=base_model, model_type=model_type, model_attributes=info_dict

|

model_name=model_name,

|

||||||

|

base_model=base_model,

|

||||||

|

model_type=model_type,

|

||||||

|

model_attributes=info_dict,

|

||||||

)

|

)

|

||||||

|

|

||||||

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

||||||

@ -117,7 +131,7 @@ async def update_model(

|

|||||||

base_model=base_model,

|

base_model=base_model,

|

||||||

model_type=model_type,

|

model_type=model_type,

|

||||||

)

|

)

|

||||||

model_response = parse_obj_as(UpdateModelResponse, model_raw)

|

model_response = update_models_response_adapter.validate_python(model_raw)

|

||||||

except ModelNotFoundException as e:

|

except ModelNotFoundException as e:

|

||||||

raise HTTPException(status_code=404, detail=str(e))

|

raise HTTPException(status_code=404, detail=str(e))

|

||||||

except ValueError as e:

|

except ValueError as e:

|

||||||

@ -152,13 +166,15 @@ async def import_model(

|

|||||||

) -> ImportModelResponse:

|

) -> ImportModelResponse:

|

||||||

"""Add a model using its local path, repo_id, or remote URL. Model characteristics will be probed and configured automatically"""

|

"""Add a model using its local path, repo_id, or remote URL. Model characteristics will be probed and configured automatically"""

|

||||||

|

|

||||||

|

location = location.strip("\"' ")

|

||||||

items_to_import = {location}

|

items_to_import = {location}

|

||||||

prediction_types = {x.value: x for x in SchedulerPredictionType}

|

prediction_types = {x.value: x for x in SchedulerPredictionType}

|

||||||

logger = ApiDependencies.invoker.services.logger

|

logger = ApiDependencies.invoker.services.logger

|

||||||

|

|

||||||

try:

|

try:

|

||||||

installed_models = ApiDependencies.invoker.services.model_manager.heuristic_import(

|

installed_models = ApiDependencies.invoker.services.model_manager.heuristic_import(

|

||||||

items_to_import=items_to_import, prediction_type_helper=lambda x: prediction_types.get(prediction_type)

|

items_to_import=items_to_import,

|

||||||

|

prediction_type_helper=lambda x: prediction_types.get(prediction_type),

|

||||||

)

|

)

|

||||||

info = installed_models.get(location)

|

info = installed_models.get(location)

|

||||||

|

|

||||||

@ -170,7 +186,7 @@ async def import_model(

|

|||||||

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

||||||

model_name=info.name, base_model=info.base_model, model_type=info.model_type

|

model_name=info.name, base_model=info.base_model, model_type=info.model_type

|

||||||

)

|

)

|

||||||

return parse_obj_as(ImportModelResponse, model_raw)

|

return import_models_response_adapter.validate_python(model_raw)

|

||||||

|

|

||||||

except ModelNotFoundException as e:

|

except ModelNotFoundException as e:

|

||||||

logger.error(str(e))

|

logger.error(str(e))

|

||||||

@ -204,13 +220,18 @@ async def add_model(

|

|||||||

|

|

||||||

try:

|

try:

|

||||||

ApiDependencies.invoker.services.model_manager.add_model(

|

ApiDependencies.invoker.services.model_manager.add_model(

|

||||||

info.model_name, info.base_model, info.model_type, model_attributes=info.dict()

|

info.model_name,

|

||||||

|

info.base_model,

|

||||||

|

info.model_type,

|

||||||

|

model_attributes=info.model_dump(),

|

||||||

)

|

)

|

||||||

logger.info(f"Successfully added {info.model_name}")

|

logger.info(f"Successfully added {info.model_name}")

|

||||||

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

||||||

model_name=info.model_name, base_model=info.base_model, model_type=info.model_type

|

model_name=info.model_name,

|

||||||

|

base_model=info.base_model,

|

||||||

|

model_type=info.model_type,

|

||||||

)

|

)

|

||||||

return parse_obj_as(ImportModelResponse, model_raw)

|

return import_models_response_adapter.validate_python(model_raw)

|

||||||

except ModelNotFoundException as e:

|

except ModelNotFoundException as e:

|

||||||

logger.error(str(e))

|

logger.error(str(e))

|

||||||

raise HTTPException(status_code=404, detail=str(e))

|

raise HTTPException(status_code=404, detail=str(e))

|

||||||

@ -222,7 +243,10 @@ async def add_model(

|

|||||||

@models_router.delete(

|

@models_router.delete(

|

||||||

"/{base_model}/{model_type}/{model_name}",

|

"/{base_model}/{model_type}/{model_name}",

|

||||||

operation_id="del_model",

|

operation_id="del_model",

|

||||||

responses={204: {"description": "Model deleted successfully"}, 404: {"description": "Model not found"}},

|

responses={

|

||||||

|

204: {"description": "Model deleted successfully"},

|

||||||

|

404: {"description": "Model not found"},

|

||||||

|

},

|

||||||

status_code=204,

|

status_code=204,

|

||||||

response_model=None,

|

response_model=None,

|

||||||

)

|

)

|

||||||

@ -278,7 +302,7 @@ async def convert_model(

|

|||||||

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

|

||||||

model_name, base_model=base_model, model_type=model_type

|

model_name, base_model=base_model, model_type=model_type

|

||||||

)

|

)

|

||||||

response = parse_obj_as(ConvertModelResponse, model_raw)

|

response = convert_models_response_adapter.validate_python(model_raw)

|

||||||

except ModelNotFoundException as e:

|

except ModelNotFoundException as e:

|

||||||

raise HTTPException(status_code=404, detail=f"Model '{model_name}' not found: {str(e)}")

|

raise HTTPException(status_code=404, detail=f"Model '{model_name}' not found: {str(e)}")

|

||||||

except ValueError as e:

|

except ValueError as e:

|

||||||

@ -301,7 +325,8 @@ async def search_for_models(

|

|||||||

) -> List[pathlib.Path]:

|

) -> List[pathlib.Path]:

|

||||||

if not search_path.is_dir():

|

if not search_path.is_dir():

|

||||||

raise HTTPException(

|

raise HTTPException(

|

||||||

status_code=404, detail=f"The search path '{search_path}' does not exist or is not directory"

|

status_code=404,

|

||||||

|

detail=f"The search path '{search_path}' does not exist or is not directory",

|

||||||

)

|

)

|

||||||

return ApiDependencies.invoker.services.model_manager.search_for_models(search_path)

|

return ApiDependencies.invoker.services.model_manager.search_for_models(search_path)

|

||||||

|

|

||||||

@ -336,6 +361,26 @@ async def sync_to_config() -> bool:

|

|||||||

return True

|

return True

|

||||||

|

|

||||||

|

|

||||||

|

# There's some weird pydantic-fastapi behaviour that requires this to be a separate class

|

||||||

|

# TODO: After a few updates, see if it works inside the route operation handler?

|

||||||

|

class MergeModelsBody(BaseModel):

|

||||||

|

model_names: List[str] = Field(description="model name", min_length=2, max_length=3)

|

||||||

|

merged_model_name: Optional[str] = Field(description="Name of destination model")

|

||||||

|

alpha: Optional[float] = Field(description="Alpha weighting strength to apply to 2d and 3d models", default=0.5)

|

||||||

|

interp: Optional[MergeInterpolationMethod] = Field(description="Interpolation method")

|

||||||

|

force: Optional[bool] = Field(

|

||||||

|