mirror of

https://github.com/invoke-ai/InvokeAI

synced 2024-08-30 20:32:17 +00:00

more updates to many docs, including:

- better readability in dark mode since color change
- better looking changelog
- fix images which were not loading
- also center most of the images
- fix some syntax errors like
  - headlines ending with a colon
  - codeblocks with wrong fences
  - codeblocks without shell
- update conda prompts from ldm to invokeai
- ....


parent

8ea07f3bb0

commit

1c687d6d03

@ -4,7 +4,7 @@ title: Changelog

|

||||

|

||||

# :octicons-log-16: **Changelog**

|

||||

|

||||

- v2.0.0 (9 October 2022)

|

||||

## v2.0.0 <small>(9 October 2022)</small>

|

||||

|

||||

- `dream.py` script renamed `invoke.py`. A `dream.py` script wrapper remains

|

||||

for backward compatibility.

|

||||

@ -31,7 +31,7 @@ title: Changelog

|

||||

- Deprecated `--full_precision` / `-F`. Simply omit it and `invoke.py` will auto

|

||||

configure. To switch away from auto use the new flag like `--precision=float32`.

|

||||

|

||||

- v1.14 (11 September 2022)

|

||||

## v1.14 <small>(11 September 2022)</small>

|

||||

|

||||

- Memory optimizations for small-RAM cards. 512x512 now possible on 4 GB GPUs.

|

||||

- Full support for Apple hardware with M1 or M2 chips.

|

||||

@ -41,7 +41,7 @@ title: Changelog

|

||||

- Improved web server GUI.

|

||||

- Lots of code and documentation cleanups.

|

||||

|

||||

- v1.13 (3 September 2022

|

||||

## v1.13 <small>(3 September 2022)</small>

|

||||

|

||||

- Support image variations (see [VARIATIONS](features/VARIATIONS.md))

|

||||

([Kevin Gibbons](https://github.com/bakkot) and many contributors and reviewers)

|

||||

@ -61,7 +61,7 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.12 (28 August 2022)

|

||||

## v1.12 <small>(28 August 2022)</small>

|

||||

|

||||

- Improved file handling, including ability to read prompts from standard input.

|

||||

(kudos to [Yunsaki](https://github.com/yunsaki)

|

||||

@ -75,7 +75,7 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.11 (26 August 2022)

|

||||

## v1.11 <small>(26 August 2022)</small>

|

||||

|

||||

- NEW FEATURE: Support upscaling and face enhancement using the GFPGAN module. (kudos to [Oceanswave](https://github.com/Oceanswave))

|

||||

- You now can specify a seed of -1 to use the previous image's seed, -2 to use the seed for the image generated before that, etc.

|

||||

@ -88,13 +88,13 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.10 (25 August 2022)

|

||||

## v1.10 <small>(25 August 2022)</small>

|

||||

|

||||

- A barebones but fully functional interactive web server for online generation of txt2img and img2img.

|

||||

|

||||

---

|

||||

|

||||

## v1.09 (24 August 2022)

|

||||

## v1.09 <small>(24 August 2022)</small>

|

||||

|

||||

- A new -v option allows you to generate multiple variants of an initial image

|

||||

in img2img mode. (kudos to [Oceanswave](https://github.com/Oceanswave). [

|

||||

@ -104,7 +104,7 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.08 (24 August 2022)

|

||||

## v1.08 <small>(24 August 2022)</small>

|

||||

|

||||

- Escape single quotes on the invoke> command before trying to parse. This avoids

|

||||

parse errors.

|

||||

@ -115,7 +115,7 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.07 (23 August 2022)

|

||||

## v1.07 <small>(23 August 2022)</small>

|

||||

|

||||

- Image filenames will now never fill gaps in the sequence, but will be assigned the

|

||||

next higher name in the chosen directory. This ensures that the alphabetic and chronological

|

||||

@ -123,14 +123,14 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.06 (23 August 2022)

|

||||

## v1.06 <small>(23 August 2022)</small>

|

||||

|

||||

- Added weighted prompt support contributed by [xraxra](https://github.com/xraxra)

|

||||

- Example of using weighted prompts to tweak a demonic figure contributed by [bmaltais](https://github.com/bmaltais)

|

||||

|

||||

---

|

||||

|

||||

## v1.05 (22 August 2022 - after the drop)

|

||||

## v1.05 <small>(22 August 2022 - after the drop)</small>

|

||||

|

||||

- Filenames now use the following formats:

|

||||

000010.95183149.png -- Two files produced by the same command (e.g. -n2),

|

||||

@ -148,7 +148,7 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.04 (22 August 2022 - after the drop)

|

||||

## v1.04 <small>(22 August 2022 - after the drop)</small>

|

||||

|

||||

- Updated README to reflect installation of the released weights.

|

||||

- Suppressed very noisy and inconsequential warning when loading the frozen CLIP

|

||||

@ -156,14 +156,14 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.03 (22 August 2022)

|

||||

## v1.03 <small>(22 August 2022)</small>

|

||||

|

||||

- The original txt2img and img2img scripts from the CompVis repository have been moved into

|

||||

a subfolder named "orig_scripts", to reduce confusion.

|

||||

|

||||

---

|

||||

|

||||

## v1.02 (21 August 2022)

|

||||

## v1.02 <small>(21 August 2022)</small>

|

||||

|

||||

- A copy of the prompt and all of its switches and options is now stored in the corresponding

|

||||

image in a tEXt metadata field named "Dream". You can read the prompt using scripts/images2prompt.py,

|

||||

@ -172,7 +172,7 @@ title: Changelog

|

||||

|

||||

---

|

||||

|

||||

## v1.01 (21 August 2022)

|

||||

## v1.01 <small>(21 August 2022)</small>

|

||||

|

||||

- added k_lms sampling.

|

||||

**Please run "conda env update" to load the k_lms dependencies!!**

|

||||

|

||||

@ -34,7 +34,7 @@ The script is confirmed to work on Linux, Windows and Mac systems.

|

||||

currently rudimentary, but a much better replacement is on its way.

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ python3 ./scripts/invoke.py

|

||||

(invokeai) ~/stable-diffusion$ python3 ./scripts/invoke.py

|

||||

* Initializing, be patient...

|

||||

Loading model from models/ldm/text2img-large/model.ckpt

|

||||

(...more initialization messages...)

|

||||

@ -51,7 +51,7 @@ invoke> "there's a fly in my soup" -n6 -g

|

||||

invoke> q

|

||||

|

||||

# this shows how to retrieve the prompt stored in the saved image's metadata

|

||||

(ldm) ~/stable-diffusion$ python ./scripts/images2prompt.py outputs/img_samples/*.png

|

||||

(invokeai) ~/stable-diffusion$ python ./scripts/images2prompt.py outputs/img_samples/*.png

|

||||

00009.png: "ashley judd riding a camel" -s150 -S 416354203

|

||||

00010.png: "ashley judd riding a camel" -s150 -S 1362479620

|

||||

00011.png: "there's a fly in my soup" -n6 -g -S 2685670268

|

||||

|

||||

@ -50,28 +50,28 @@ We are hoping to get rid of the need for this workaround in an upcoming release.

|

||||

|

||||

1. Open image in Photoshop

|

||||

|

||||

|

||||

<div align="center" markdown></div>

|

||||

|

||||

2. Use any of the selection tools (Marquee, Lasso, or Wand) to select the area you desire to inpaint.

|

||||

|

||||

|

||||

<div align="center" markdown></div>

|

||||

|

||||

3. Because we'll be applying a mask over the area we want to preserve, you should now select the inverse by using the ++shift+ctrl+i++ shortcut, or right clicking and using the "Select Inverse" option.

|

||||

|

||||

4. You'll now create a mask by selecting the image layer, and Masking the selection. Make sure that you don't delete any of the underlying image, or your inpainting results will be dramatically impacted.

|

||||

|

||||

|

||||

<div align="center" markdown></div>

|

||||

|

||||

5. Make sure to hide any background layers that are present. You should see the mask applied to your image layer, and the image on your canvas should display the checkered background.

|

||||

|

||||

|

||||

<div align="center" markdown></div>

|

||||

|

||||

6. Save the image as a transparent PNG by using `File`-->`Save a Copy` from the menu bar, or by using the keyboard shortcut ++alt+ctrl+s++

|

||||

|

||||

|

||||

<div align="center" markdown></div>

|

||||

|

||||

7. After following the inpainting instructions above (either through the CLI or the Web UI), marvel at your newfound ability to selectively invoke. Lookin' good!

|

||||

|

||||

|

||||

<div align="center" markdown></div>

|

||||

|

||||

8. In the export dialogue, make sure the "Save colour values from transparent pixels" checkbox is selected.

|

||||

|

||||

@ -6,11 +6,13 @@ title: Others

|

||||

|

||||

## **Google Colab**

|

||||

|

||||

Stable Diffusion AI Notebook: [](https://colab.research.google.com/github/lstein/stable-diffusion/blob/main/notebooks/Stable_Diffusion_AI_Notebook.ipynb)

|

||||

[{ align="right" }](https://colab.research.google.com/github/lstein/stable-diffusion/blob/main/notebooks/Stable_Diffusion_AI_Notebook.ipynb)

|

||||

|

||||

Open and follow instructions to use an isolated environment running Dream.

|

||||

|

||||

Output Example:

|

||||

Output Example:

|

||||

|

||||

|

||||

|

||||

---

|

||||

|

||||

@ -29,12 +31,12 @@ invoke> "pond garden with lotus by claude monet" --seamless -s100 -n4

|

||||

## **Shortcuts: Reusing Seeds**

|

||||

|

||||

Since it is so common to reuse seeds while refining a prompt, there is now a shortcut as of version

|

||||

1.11. Provide a `**-S**` (or `**--seed**`) switch of `-1` to use the seed of the most recent image

|

||||

generated. If you produced multiple images with the `**-n**` switch, then you can go back further

|

||||

using -2, -3, etc. up to the first image generated by the previous command. Sorry, but you can't go

|

||||

1.11. Provide a `-S` (or `--seed`) switch of `-1` to use the seed of the most recent image

|

||||

generated. If you produced multiple images with the `-n` switch, then you can go back further

|

||||

using `-2`, `-3`, etc. up to the first image generated by the previous command. Sorry, but you can't go

|

||||

back further than one command.
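The lookup described here can be pictured as indexing backwards into the seeds of the previous command. The following is a minimal sketch, not the code in `invoke.py`; `resolve_seed` and `previous_seeds` are hypothetical names introduced only for illustration.

```python
from typing import List, Optional


def resolve_seed(requested: Optional[int], previous_seeds: List[int]) -> Optional[int]:
    """Map a negative -S value onto a seed from the previous command.

    previous_seeds holds the seeds of the images produced by the last
    command, oldest first. -1 selects the most recent one, -2 the one
    before it, and so on. Non-negative values pass through unchanged.
    """
    if requested is None or requested >= 0:
        return requested
    if -requested > len(previous_seeds):
        # Matches the documented limit: only the previous command is reachable.
        raise ValueError("cannot go back further than the previous command")
    return previous_seeds[requested]


# Hypothetical seeds from a previous command run with -n3.
seeds = [111, 222, 333]
print(resolve_seed(-1, seeds))  # 333, the most recent image
print(resolve_seed(-3, seeds))  # 111, the first image of that command
```

Keeping only the seeds of the last command is what makes `-4` an error in this example.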

|

||||

|

||||

Here's an example of using this to do a quick refinement. It also illustrates using the new `**-G**`

|

||||

Here's an example of using this to do a quick refinement. It also illustrates using the new `-G`

|

||||

switch to turn on upscaling and face enhancement (see previous section):

|

||||

|

||||

```bash

|

||||

@ -54,7 +56,7 @@ outputs/img-samples/000040.3498014304.png: "a cute child playing hopscotch" -G1.

|

||||

## **Weighted Prompts**

|

||||

|

||||

You may weight different sections of the prompt to tell the sampler to attach different levels of

|

||||

priority to them, by adding `:(number)` to the end of the section you wish to up- or downweight. For

|

||||

priority to them, by adding `:<percent>` to the end of the section you wish to up- or downweight. For

|

||||

example consider this prompt:

|

||||

|

||||

```bash

|

||||

@ -122,7 +124,7 @@ internet. In the following runs, it will load up the cached versions of the requ

|

||||

`.cache` directory of the system.

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ python3 ./scripts/preload_models.py

|

||||

(invokeai) ~/stable-diffusion$ python3 ./scripts/preload_models.py

|

||||

preloading bert tokenizer...

|

||||

Downloading: 100%|██████████████████████████████████| 28.0/28.0 [00:00<00:00, 49.3kB/s]

|

||||

Downloading: 100%|██████████████████████████████████| 226k/226k [00:00<00:00, 2.79MB/s]

|

||||

|

||||

@ -25,14 +25,16 @@ implementations.

|

||||

|

||||

Consider this image:

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

Pretty nice, but it's annoying that the top of her head is cut

|

||||

off. She's also a bit off center. Let's fix that!

|

||||

|

||||

~~~~

|

||||

```bash

|

||||

invoke> !fix images/curly.png --outcrop top 64 right 64

|

||||

~~~~

|

||||

```

|

||||

|

||||

This is saying to apply the `outcrop` extension by extending the top

|

||||

of the image by 64 pixels, and the right of the image by the same

|

||||

@ -42,7 +44,9 @@ specify any number of pixels to extend. You can also abbreviate

|

||||

|

||||

The result looks like this:

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

The new image is actually slightly larger than the original (576x576,

|

||||

because 64 pixels were added to the top and right sides.)
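The size arithmetic can be checked with a few lines of Python. This is only a sketch: `outcropped_size` is a hypothetical helper, and the 512x512 source size is an assumption inferred from the 576x576 result quoted above.

```python
from typing import Dict, Tuple


def outcropped_size(width: int, height: int, extend: Dict[str, int]) -> Tuple[int, int]:
    """Return the image size after extending the named sides by N pixels each."""
    new_width = width + extend.get("left", 0) + extend.get("right", 0)
    new_height = height + extend.get("top", 0) + extend.get("bottom", 0)
    return new_width, new_height


# "--outcrop top 64 right 64" applied to an assumed 512x512 image
print(outcropped_size(512, 512, {"top": 64, "right": 64}))  # (576, 576)
```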

|

||||

@ -66,33 +70,36 @@ The `outpaint` extension does the same thing, but with subtle

|

||||

differences. Starting with the same image, here is how we would add an

|

||||

additional 64 pixels to the top of the image:

|

||||

|

||||

~~~

|

||||

```bash

|

||||

invoke> !fix images/curly.png --out_direction top 64

|

||||

~~~

|

||||

```

|

||||

|

||||

(You can abbreviate `--out_direction` as `-D`.)

|

||||

|

||||

The result is shown here:

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

Although the effect is similar, there are significant differences from

|

||||

outcropping:

|

||||

|

||||

1. You can only specify one direction to extend at a time.

|

||||

2. The image is **not** resized. Instead, the image is shifted by the specified

|

||||

- You can only specify one direction to extend at a time.

|

||||

- The image is **not** resized. Instead, the image is shifted by the specified

|

||||

number of pixels. If you look carefully, you'll see that less of the lady's

|

||||

torso is visible in the image.

|

||||

3. Because the image dimensions remain the same, there's no rounding

|

||||

- Because the image dimensions remain the same, there's no rounding

|

||||

to multiples of 64.

|

||||

4. Attempting to outpaint larger areas will frequently give rise to ugly

|

||||

- Attempting to outpaint larger areas will frequently give rise to ugly

|

||||

ghosting effects.

|

||||

5. For best results, try increasing the step number.

|

||||

6. If you don't specify a pixel value in -D, it will default to half

|

||||

- For best results, try increasing the step number.

|

||||

- If you don't specify a pixel value in `-D`, it will default to half

|

||||

of the whole image, which is likely not what you want.

|

||||

|

||||

Neither `outpaint` nor `outcrop` are perfect, but we continue to tune

|

||||

and improve them. If one doesn't work, try the other. You may also

|

||||

wish to experiment with other `img2img` arguments, such as `-C`, `-f`

|

||||

and `-s`.

|

||||

!!! tip

|

||||

|

||||

Neither `outpaint` nor `outcrop` are perfect, but we continue to tune

|

||||

and improve them. If one doesn't work, try the other. You may also

|

||||

wish to experiment with other `img2img` arguments, such as `-C`, `-f`

|

||||

and `-s`.

|

||||

|

||||

@ -34,13 +34,13 @@ work. These are loaded when you run `scripts/preload_models.py`. If

|

||||

GFPGAN is failing with an error, please run the following from the

|

||||

InvokeAI directory:

|

||||

|

||||

~~~~

|

||||

```bash

|

||||

python scripts/preload_models.py

|

||||

~~~~

|

||||

```

|

||||

|

||||

If you do not run this script in advance, the GFPGAN module will attempt

|

||||

to download the model files the first time you try to perform facial

|

||||

reconstruction.

|

||||

reconstruction.

|

||||

|

||||

Alternatively, if you have GFPGAN installed elsewhere, or if you are

|

||||

using an earlier version of this package which asked you to install

|

||||

@ -89,13 +89,13 @@ too.

|

||||

### Example Usage

|

||||

|

||||

```bash

|

||||

invoke> superman dancing with a panda bear -U 2 0.6 -G 0.4

|

||||

invoke> "superman dancing with a panda bear" -U 2 0.6 -G 0.4

|

||||

```

|

||||

|

||||

This also works with img2img:

|

||||

|

||||

```bash

|

||||

invoke> a man wearing a pineapple hat -I path/to/your/file.png -U 2 0.5 -G 0.6

|

||||

invoke> "a man wearing a pineapple hat" -I path/to/your/file.png -U 2 0.5 -G 0.6

|

||||

```

|

||||

|

||||

!!! note

|

||||

@ -129,14 +129,14 @@ You can use `-ft` prompt argument to swap between CodeFormer and the

|

||||

default GFPGAN. The above mentioned `-G` prompt argument will allow

|

||||

you to control the strength of the restoration effect.

|

||||

|

||||

### Usage:

|

||||

### Usage

|

||||

|

||||

The following command will perform face restoration with CodeFormer instead of

|

||||

the default GFPGAN.

|

||||

|

||||

`<prompt> -G 0.8 -ft codeformer`

|

||||

|

||||

### Other Options:

|

||||

### Other Options

|

||||

|

||||

- `-cf` - cf or CodeFormer Fidelity takes values between `0` and `1`. 0 produces

|

||||

high quality results but low accuracy and 1 produces lower quality results but

|

||||

@ -162,7 +162,7 @@ previously-generated file. Just use the syntax `!fix path/to/file.png

|

||||

2X for a file named `./outputs/img-samples/000044.2945021133.png`,

|

||||

just run:

|

||||

|

||||

```

|

||||

```bash

|

||||

invoke> !fix ./outputs/img-samples/000044.2945021133.png -G 0.8 -U 2

|

||||

```

|

||||

|

||||

@ -170,7 +170,7 @@ A new file named `000044.2945021133.fixed.png` will be created in the output

|

||||

directory. Note that the `!fix` command does not replace the original file,

|

||||

unlike the behavior at generate time.
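The naming pattern for the new file can be sketched as follows. `fixed_name` is a hypothetical helper, not part of InvokeAI; it only illustrates how `.fixed` is placed before the extension so that the original file name stays free.

```python
from pathlib import Path


def fixed_name(original: str) -> Path:
    """Insert ".fixed" before the extension, leaving the original path untouched."""
    path = Path(original)
    return path.with_name(f"{path.stem}.fixed{path.suffix}")


print(fixed_name("./outputs/img-samples/000044.2945021133.png").name)
# 000044.2945021133.fixed.png
```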

|

||||

|

||||

### Disabling:

|

||||

### Disabling

|

||||

|

||||

If, for some reason, you do not wish to load the GFPGAN and/or ESRGAN libraries,

|

||||

you can disable them on the invoke.py command line with the `--no_restore` and

|

||||

|

||||

@ -2,7 +2,7 @@

|

||||

title: Prompting Features

|

||||

---

|

||||

|

||||

# :octicons-command-palette-24: Prompting Features

|

||||

# :octicons-command-palette-16: Prompting Features

|

||||

|

||||

## **Reading Prompts from a File**

|

||||

|

||||

@ -19,14 +19,15 @@ innovative packaging for a squid's dinner -S137038382

|

||||

Then pass this file's name to `invoke.py` when you invoke it:

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ python3 scripts/invoke.py --from_file "path/to/prompts.txt"

|

||||

(invokeai) ~/stable-diffusion$ python3 scripts/invoke.py --from_file "path/to/prompts.txt"

|

||||

```

|

||||

|

||||

You may read a series of prompts from standard input by providing a filename of `-`:

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ echo "a beautiful day" | python3 scripts/invoke.py --from_file -

|

||||

(invokeai) ~/stable-diffusion$ echo "a beautiful day" | python3 scripts/invoke.py --from_file -

|

||||

```
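The `-` convention shown here is a common command-line idiom. The sketch below shows one way to implement it. The function names are hypothetical, and skipping blank lines is an assumption rather than documented `invoke.py` behavior.

```python
import io
import sys
from typing import Iterator, TextIO


def open_prompt_source(name: str) -> TextIO:
    """Treat "-" as standard input, anything else as a file path."""
    return sys.stdin if name == "-" else open(name, encoding="utf-8")


def iter_prompts(stream: TextIO) -> Iterator[str]:
    """Yield one prompt per non-empty line (assumption: blank lines are skipped)."""
    for line in stream:
        line = line.strip()
        if line:
            yield line


# Demonstration with an in-memory stream instead of a real file or pipe.
demo = io.StringIO("a beautiful day\n\ninnovative packaging for a squid's dinner -S137038382\n")
for prompt in iter_prompts(demo):
    print(prompt)
```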

|

||||

|

||||

---

|

||||

|

||||

## **Negative and Unconditioned Prompts**

|

||||

@ -34,7 +35,7 @@ You may read a series of prompts from standard input by providing a filename of

|

||||

Any words between a pair of square brackets will instruct Stable

|

||||

Diffusion to attempt to ban the concept from the generated image.

|

||||

|

||||

```bash

|

||||

```text

|

||||

this is a test prompt [not really] to make you understand [cool] how this works.

|

||||

```
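To make the bracket syntax concrete, here is a small sketch that separates the bracketed terms from the rest of a prompt. It illustrates the notation only: `split_prompt` is a hypothetical helper, and the way InvokeAI actually parses and applies these terms may differ.

```python
import re
from typing import List, Tuple

# Matches one [...] group without nested brackets.
BRACKETED = re.compile(r"\[([^\[\]]*)\]")


def split_prompt(prompt: str) -> Tuple[str, List[str]]:
    """Return (prompt without bracketed parts, list of bracketed terms)."""
    negatives = [term.strip() for term in BRACKETED.findall(prompt) if term.strip()]
    positive = BRACKETED.sub(" ", prompt)
    positive = re.sub(r"\s+", " ", positive).strip()
    return positive, negatives


text = "this is a test prompt [not really] to make you understand [cool] how this works."
positive, negatives = split_prompt(text)
print(positive)   # this is a test prompt to make you understand how this works.
print(negatives)  # ['not really', 'cool']
```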

|

||||

|

||||

@ -46,25 +47,33 @@ original prompt:

|

||||

|

||||

`#!bash "A fantastical translucent poney made of water and foam, ethereal, radiant, hyperalism, scottish folklore, digital painting, artstation, concept art, smooth, 8 k frostbite 3 engine, ultra detailed, art by artgerm and greg rutkowski and magali villeneuve" -s 20 -W 512 -H 768 -C 7.5 -A k_euler_a -S 1654590180`

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

That image has a woman, so if we want the horse without a rider, we can influence the image not to have a woman by putting [woman] in the prompt, like this:

|

||||

|

||||

`#!bash "A fantastical translucent poney made of water and foam, ethereal, radiant, hyperalism, scottish folklore, digital painting, artstation, concept art, smooth, 8 k frostbite 3 engine, ultra detailed, art by artgerm and greg rutkowski and magali villeneuve [woman]" -s 20 -W 512 -H 768 -C 7.5 -A k_euler_a -S 1654590180`

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

That's nice - but say we also don't want the image to be quite so blue. We can add "blue" to the list of negative prompts, so it's now [woman blue]:

|

||||

|

||||

`#!bash "A fantastical translucent poney made of water and foam, ethereal, radiant, hyperalism, scottish folklore, digital painting, artstation, concept art, smooth, 8 k frostbite 3 engine, ultra detailed, art by artgerm and greg rutkowski and magali villeneuve [woman blue]" -s 20 -W 512 -H 768 -C 7.5 -A k_euler_a -S 1654590180`

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

Getting close - but there's no sense in having a saddle when our horse doesn't have a rider, so we'll add one more negative prompt: [woman blue saddle].

|

||||

|

||||

`#!bash "A fantastical translucent poney made of water and foam, ethereal, radiant, hyperalism, scottish folklore, digital painting, artstation, concept art, smooth, 8 k frostbite 3 engine, ultra detailed, art by artgerm and greg rutkowski and magali villeneuve [woman blue saddle]" -s 20 -W 512 -H 768 -C 7.5 -A k_euler_a -S 1654590180`

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

!!! notes "Notes about this feature:"

|

||||

|

||||

@ -101,44 +110,58 @@ illustrate, here are three images generated using various combinations

|

||||

of blend weights. As usual, unless you fix the seed, the prompts will give you

|

||||

different results each time you run them.

|

||||

|

||||

---

|

||||

|

||||

<div align="center" markdown>

|

||||

### "blue sphere, red cube, hybrid"

|

||||

</div>

|

||||

|

||||

This example doesn't use melding at all and represents the default way

|

||||

of mixing concepts.

|

||||

|

||||

<img src="../assets/prompt-blending/blue-sphere-red-cube-hybrid.png" width=256>

|

||||

<div align="center" markdown>

|

||||

|

||||

</div>

|

||||

|

||||

It's interesting to see how the AI expressed the concept of "cube" as

|

||||

the four quadrants of the enclosing frame. If you look closely, there

|

||||

is depth there, so the enclosing frame is actually a cube.

|

||||

|

||||

<div align="center" markdown>

|

||||

### "blue sphere:0.25 red cube:0.75 hybrid"

|

||||

|

||||

<img src="../assets/prompt-blending/blue-sphere-0.25-red-cube-0.75-hybrid.png" width=256>

|

||||

|

||||

</div>

|

||||

|

||||

Now that's interesting. We get neither a blue sphere nor a red cube,

|

||||

but a red sphere embedded in a brick wall, which represents a melding

|

||||

of concepts within the AI's "latent space" of semantic

|

||||

representations. Where is Ludwig Wittgenstein when you need him?

|

||||

|

||||

<div align="center" markdown>

|

||||

### "blue sphere:0.75 red cube:0.25 hybrid"

|

||||

|

||||

<img src="../assets/prompt-blending/blue-sphere-0.75-red-cube-0.25-hybrid.png" width=256>

|

||||

|

||||

</div>

|

||||

|

||||

Definitely more blue-spherey. The cube is gone entirely, but it's

|

||||

really cool abstract art.

|

||||

|

||||

<div align="center" markdown>

|

||||

### "blue sphere:0.5 red cube:0.5 hybrid"

|

||||

|

||||

<img src="../assets/prompt-blending/blue-sphere-0.5-red-cube-0.5-hybrid.png" width=256>

|

||||

|

||||

</div>

|

||||

|

||||

Whoa...! I see blue and red, but no spheres or cubes. Is the word

|

||||

"hybrid" summoning up the concept of some sort of scifi creature?

|

||||

Let's find out.

|

||||

|

||||

<div align="center" markdown>

|

||||

### "blue sphere:0.5 red cube:0.5"

|

||||

|

||||

<img src="../assets/prompt-blending/blue-sphere-0.5-red-cube-0.5.png" width=256>

|

||||

|

||||

</div>

|

||||

|

||||

Indeed, removing the word "hybrid" produces an image that is more like

|

||||

what we'd expect.

|

||||

@ -146,4 +169,3 @@ what we'd expect.

|

||||

In conclusion, prompt blending is great for exploring creative space,

|

||||

but can be difficult to direct. A forthcoming release of InvokeAI will

|

||||

feature more deterministic prompt weighting.
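The `text:weight` notation used in the examples above can be read mechanically, as the sketch below shows. It is a simplified illustration, not the InvokeAI parser. The regular expression, the default weight of 1.0 for unweighted trailing text, and the normalization step are all assumptions made for this example.

```python
import re
from typing import List, Tuple

# Some text, optionally followed by ":<number>"; unweighted text runs to the end.
SUBPROMPT = re.compile(r"\s*(?P<text>[^:]+?)\s*(?::(?P<weight>\d+(?:\.\d+)?)|$)")


def parse_weighted(prompt: str) -> List[Tuple[str, float]]:
    """Split a prompt into (subprompt, weight) pairs."""
    parts = []
    for match in SUBPROMPT.finditer(prompt):
        weight = match.group("weight")
        # Assumption: text without an explicit weight counts as 1.0.
        parts.append((match.group("text"), float(weight) if weight else 1.0))
    return parts


def normalize(parts: List[Tuple[str, float]]) -> List[Tuple[str, float]]:
    """Scale the weights so that they sum to 1 (an assumed convention)."""
    total = sum(weight for _, weight in parts)
    if total == 0:
        raise ValueError("weights must not sum to zero")
    return [(text, weight / total) for text, weight in parts]


print(parse_weighted("blue sphere:0.25 red cube:0.75 hybrid"))
# [('blue sphere', 0.25), ('red cube', 0.75), ('hybrid', 1.0)]
```

Under these assumptions the trailing word `hybrid` carries as much weight as the two weighted subprompts combined.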

|

||||

|

||||

|

||||

@ -23,13 +23,13 @@ As the default backend is not available on Windows, if you're using that

|

||||

platform, set the environment variable `PL_TORCH_DISTRIBUTED_BACKEND` to `gloo`

|

||||

|

||||

```bash

|

||||

python3 ./main.py --base ./configs/stable-diffusion/v1-finetune.yaml \

|

||||

--actual_resume ./models/ldm/stable-diffusion-v1/model.ckpt \

|

||||

-t \

|

||||

-n my_cat \

|

||||

--gpus 0 \

|

||||

--data_root D:/textual-inversion/my_cat \

|

||||

--init_word 'cat'

|

||||

python3 ./main.py -t \

|

||||

--base ./configs/stable-diffusion/v1-finetune.yaml \

|

||||

--actual_resume ./models/ldm/stable-diffusion-v1/model.ckpt \

|

||||

-n my_cat \

|

||||

--gpus 0 \

|

||||

--data_root D:/textual-inversion/my_cat \

|

||||

--init_word 'cat'

|

||||

```
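The `PL_TORCH_DISTRIBUTED_BACKEND` variable mentioned before the command can also be set from a small Python launcher instead of the shell. This is a sketch under assumptions: `ensure_gloo_backend` is a hypothetical helper, and it must run before the training code reads the variable.

```python
import os
import sys


def ensure_gloo_backend(environ=os.environ, platform=sys.platform):
    """Set PL_TORCH_DISTRIBUTED_BACKEND to "gloo" on Windows unless already set."""
    if platform.startswith("win"):
        # setdefault keeps any value the user has chosen explicitly.
        environ.setdefault("PL_TORCH_DISTRIBUTED_BACKEND", "gloo")
    return environ.get("PL_TORCH_DISTRIBUTED_BACKEND")


# Demonstration on a plain dict so the real environment is not modified.
print(ensure_gloo_backend(environ={}, platform="win32"))  # gloo
print(ensure_gloo_backend(environ={}, platform="linux"))  # None
```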

|

||||

|

||||

During the training process, files will be created in

|

||||

@ -59,7 +59,8 @@ Once the model is trained, specify the trained .pt or .bin file when starting

|

||||

invoke using

|

||||

|

||||

```bash

|

||||

python3 ./scripts/invoke.py --embedding_path /path/to/embedding.pt

|

||||

python3 ./scripts/invoke.py \

|

||||

--embedding_path /path/to/embedding.pt

|

||||

```

|

||||

|

||||

Then, to utilize your subject at the invoke prompt

|

||||

@ -80,9 +81,9 @@ LDM checkpoints using:

|

||||

|

||||

```bash

|

||||

python3 ./scripts/merge_embeddings.py \

|

||||

--manager_ckpts /path/to/first/embedding.pt \

|

||||

[</path/to/second/embedding.pt>,[...]] \

|

||||

--output_path /path/to/output/embedding.pt

|

||||

--manager_ckpts /path/to/first/embedding.pt \

|

||||

[</path/to/second/embedding.pt>,[...]] \

|

||||

--output_path /path/to/output/embedding.pt

|

||||

```

|

||||

|

||||

Credit goes to rinongal and the repository

|

||||

|

||||

@ -25,10 +25,11 @@ variations to create the desired image of Xena, Warrior Princess.

|

||||

|

||||

## Step 1 -- Find a base image that you like

|

||||

|

||||

The prompt we will use throughout is

|

||||

`lucy lawless as xena, warrior princess, character portrait, high resolution.`

|

||||

The prompt we will use throughout is:

|

||||

|

||||

This will be indicated as `prompt` in the examples below.

|

||||

`#!bash "lucy lawless as xena, warrior princess, character portrait, high resolution."`

|

||||

|

||||

This will be indicated as `#!bash "prompt"` in the examples below.

|

||||

|

||||

First we let SD create a series of images in the usual way, in this case

|

||||

requesting six iterations:

|

||||

@ -45,7 +46,10 @@ Outputs:

|

||||

./outputs/Xena/000001.3357757885.png: "prompt" -s50 -W512 -H512 -C7.5 -Ak_lms -S3357757885

|

||||

```

|

||||

|

||||

<figure markdown>

|

||||

|

||||

<figcaption> Seed 3357757885 looks nice </figcaption>

|

||||

</figure>

|

||||

|

||||

---

|

||||

|

||||

@ -77,9 +81,15 @@ used to generate it.

|

||||

This gives us a series of closely-related variations, including the two shown

|

||||

here.

|

||||

|

||||

<figure markdown>

|

||||

|

||||

<figcaption>subseed 3647897225</figcaption>

|

||||

</figure>

|

||||

|

||||

<figure markdown>

|

||||

|

||||

<figcaption>subseed 1614299449</figcaption>

|

||||

</figure>

|

||||

|

||||

I like the expression on Xena's face in the first one (subseed 3647897225), and

|

||||

the armor on her shoulder in the second one (subseed 1614299449). Can we combine

|

||||

@ -97,7 +107,10 @@ Outputs:

|

||||

Here we are providing equal weights (0.1 and 0.1) for both the subseeds. The

|

||||

resulting image is close, but not exactly what I wanted:

|

||||

|

||||

<figure markdown>

|

||||

|

||||

<figcaption> subseed 1614299449 </figcaption>

|

||||

</figure>

|

||||

|

||||

We could either try combining the images with different weights, or we can

|

||||

generate more variations around the almost-but-not-quite image. We do the

|

||||

@ -118,7 +131,10 @@ Outputs:

|

||||

This produces six images, all slight variations on the combination of the chosen

|

||||

two images. Here's the one I like best:

|

||||

|

||||

<figure markdown>

|

||||

|

||||

<figcaption> subseed 3747154981 </figcaption>

|

||||

</figure>

|

||||

|

||||

As you can see, this is a very powerful tool, which when combined with subprompt

|

||||

weighting, gives you great control over the content and quality of your

|

||||

|

||||

@ -9,7 +9,7 @@ server (see screenshot). To use it, run the `invoke.py` script by

|

||||

adding the `--web` option:

|

||||

|

||||

```bash

|

||||

(ldm) ~/InvokeAI$ python3 scripts/invoke.py --web

|

||||

(invokeai) ~/InvokeAI$ python3 scripts/invoke.py --web

|

||||

```

|

||||

|

||||

You can then connect to the server by pointing your web browser at

|

||||

@ -19,7 +19,7 @@ either the IP address of the host you are running it on, or the

|

||||

wildcard `0.0.0.0`. For example:

|

||||

|

||||

```bash

|

||||

(ldm) ~/InvokeAI$ python3 scripts/invoke.py --web --host 0.0.0.0

|

||||

(invokeai) ~/InvokeAI$ python3 scripts/invoke.py --web --host 0.0.0.0

|

||||

```

|

||||

|

||||

# Quick guided walkthrough of the WebGUI's features

|

||||

@ -55,7 +55,9 @@ There are also a series of icons to the left of the control panel (see

|

||||

highlighted area in the screenshot below) which select among a series

|

||||

of tabs for performing different types of operations.

|

||||

|

||||

<figure markdown>

|

||||

{:width="512px"}

|

||||

</figure>

|

||||

|

||||

From top to bottom, these are:

|

||||

|

||||

@ -88,51 +90,51 @@ using its IP address or domain name.

|

||||

|

||||

#### Basics

|

||||

|

||||

3. Generate an image by typing *strawberry sushi* into the large

|

||||

1. Generate an image by typing *strawberry sushi* into the large

|

||||

prompt field on the upper left and then clicking on the Invoke button

|

||||

(the one with the Camera icon). After a short wait, you'll see a large

|

||||

image of sushi in the image panel, and a new thumbnail in the gallery

|

||||

on the right.

|

||||

|

||||

If you need more room on the screen, you can turn the gallery off

|

||||

by clicking on the **x** to the right of "Your Invocations". You can

|

||||

turn it back on later by clicking the image icon that appears in the

|

||||

gallery's place.

|

||||

If you need more room on the screen, you can turn the gallery off

|

||||

by clicking on the **x** to the right of "Your Invocations". You can

|

||||

turn it back on later by clicking the image icon that appears in the

|

||||

gallery's place.

|

||||

|

||||

The images are written into the directory indicated by the `--outdir`

|

||||

option provided at script launch time. By default, this is

|

||||

`outputs/img-samples` under the InvokeAI directory.

|

||||

The images are written into the directory indicated by the `--outdir`

|

||||

option provided at script launch time. By default, this is

|

||||

`outputs/img-samples` under the InvokeAI directory.

|

||||

|

||||

4. Generate a bunch of strawberry sushi images by increasing the

|

||||

2. Generate a bunch of strawberry sushi images by increasing the

|

||||

number of requested images by adjusting the Images counter just below

|

||||

the Camera button. As each is generated, it will be added to the

|

||||

gallery. You can switch the active image by clicking on the gallery

|

||||

thumbnails.

|

||||

|

||||

5. Try playing with different settings, including image width and

|

||||

3. Try playing with different settings, including image width and

|

||||

height, the Sampler, the Steps and the CFG scale.

|

||||

|

||||

Image *Width* and *Height* do what you'd expect. However, be aware that

|

||||

larger images consume more VRAM and take longer to generate.

|

||||

Image *Width* and *Height* do what you'd expect. However, be aware that

|

||||

larger images consume more VRAM and take longer to generate.

|

||||

|

||||

The *Sampler* controls how the AI selects the image to display. Some

|

||||

samplers are more "creative" than others and will produce a wider

|

||||

range of variations (see next section). Some samplers run faster than

|

||||

others.

|

||||

The *Sampler* controls how the AI selects the image to display. Some

|

||||

samplers are more "creative" than others and will produce a wider

|

||||

range of variations (see next section). Some samplers run faster than

|

||||

others.

|

||||

|

||||

*Steps* controls how many noising/denoising/sampling steps the AI will

|

||||

take. The higher this value, the more refined the image will be, but

|

||||

the longer the image will take to generate. A typical strategy is to

|

||||

generate images with a low number of steps in order to select one to

|

||||

work on further, and then regenerate it using a higher number of

|

||||

steps.

|

||||

*Steps* controls how many noising/denoising/sampling steps the AI will

|

||||

take. The higher this value, the more refined the image will be, but

|

||||

the longer the image will take to generate. A typical strategy is to

|

||||

generate images with a low number of steps in order to select one to

|

||||

work on further, and then regenerate it using a higher number of

|

||||

steps.

|

||||

|

||||

The *CFG Scale* controls how hard the AI tries to match the generated

|

||||

image to the input prompt. You can go as high or low as you like, but

|

||||

generally values greater than 20 won't improve things much, and values

|

||||

lower than 5 will produce unexpected images. There are complex

|

||||

interactions between *Steps*, *CFG Scale* and the *Sampler*, so

|

||||

experiment to find out what works for you.

|

||||

The *CFG Scale* controls how hard the AI tries to match the generated

|

||||

image to the input prompt. You can go as high or low as you like, but

|

||||

generally values greater than 20 won't improve things much, and values

|

||||

lower than 5 will produce unexpected images. There are complex

|

||||

interactions between *Steps*, *CFG Scale* and the *Sampler*, so

|

||||

experiment to find out what works for you.

|

||||

|

||||

6. To regenerate a previously-generated image, select the image you

|

||||

want and click *Use All*. This loads the text prompt and other

|

||||

@ -140,8 +142,8 @@ original settings into the control panel. If you then press *Invoke*

|

||||

it will regenerate the image exactly. You can also selectively modify

|

||||

the prompt or other settings to tweak the image.

|

||||

|

||||

Alternatively, you may click on *Use Seed* to load just the image's

|

||||

seed, and leave other settings unchanged.

|

||||

Alternatively, you may click on *Use Seed* to load just the image's

|

||||

seed, and leave other settings unchanged.

|

||||

|

||||

7. To regenerate a Stable Diffusion image that was generated by

|

||||

another SD package, you need to know its text prompt and its

|

||||

@ -154,21 +156,21 @@ steps and dimensions, but it will (usually) be close.

|

||||

|

||||

#### Variations on a theme

|

||||

|

||||

5. Let's try generating some variations. Select your favorite sushi

|

||||

1. Let's try generating some variations. Select your favorite sushi

|

||||

image from the gallery to load it. Then select "Use All" from the list

|

||||

of buttons above. This will load up all the settings used to generate

|

||||

this image, including its unique seed.

|

||||

|

||||

Go down to the Variations section of the Control Panel and set the

|

||||

button to On. Set Variation Amount to 0.2 to generate a modest

|

||||

number of variations on the image, and also set the Image counter to

|

||||

4. Press the `invoke` button. This will generate a series of related

|

||||

images. To obtain smaller variations, just lower the Variation

|

||||

Amount. You may also experiment with changing the Sampler. Some

|

||||

samplers generate more variability than others. *k_euler_a* is

|

||||

particularly creative, while *ddim* is pretty conservative.

|

||||

Go down to the Variations section of the Control Panel and set the

|

||||

button to On. Set Variation Amount to 0.2 to generate a modest

|

||||

number of variations on the image, and also set the Image counter to

|

||||

`4`. Press the `invoke` button. This will generate a series of related

|

||||

images. To obtain smaller variations, just lower the Variation

|

||||

Amount. You may also experiment with changing the Sampler. Some

|

||||

samplers generate more variability than others. *k_euler_a* is

|

||||

particularly creative, while *ddim* is pretty conservative.

|

||||

|

||||

6. For even more variations, experiment with increasing the setting

|

||||

2. For even more variations, experiment with increasing the setting

|

||||

for *Perlin*. This adds a bit of noise to the image generation

|

||||

process. Note that values of Perlin noise greater than 0.15 produce

|

||||

poor images for several of the samplers.

|

||||

@ -181,7 +183,7 @@ particular issues with generating reallistic eyes. InvokeAI provides

|

||||

the ability to reconstruct faces using either the GFPGAN or CodeFormer

|

||||

libraries. For more information see [POSTPROCESS](POSTPROCESS.md).

|

||||

|

||||

7. Invoke a prompt that generates a mangled face. A prompt that often

|

||||

1. Invoke a prompt that generates a mangled face. A prompt that often

|

||||

gives this is "portrait of a lawyer, 3/4 shot" (this is not intended

|

||||

as a slur against lawyers!) Once you have an image that needs some

|

||||

touching up, load it into the Image panel, and press the button with

|

||||

@ -190,15 +192,16 @@ box will appear. Leave *Strength* at 0.8 and press *Restore Faces". If

|

||||

all goes well, the eyes and other aspects of the face will be improved

|

||||

(see the second screenshot)

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

The facial reconstruction *Strength* field adjusts how aggressively

|

||||

the face library will try to alter the face. It can be as high as 1.0,

|

||||

but be aware that this often softens the face airbrush style, losing

|

||||

some details. The default 0.8 is usually sufficient.

|

||||

|

||||

|

||||

8. "Upscaling" is the process of increasing the size of an image while

|

||||

The facial reconstruction *Strength* field adjusts how aggressively

|

||||

the face library will try to alter the face. It can be as high as 1.0,

|

||||

but be aware that this often softens the face airbrush style, losing

|

||||

some details. The default 0.8 is usually sufficient.

|

||||

|

||||

2. "Upscaling" is the process of increasing the size of an image while

|

||||

retaining the sharpness. InvokeAI uses an external library called

|

||||

"ESRGAN" to do this. To invoke upscaling, simply select an image and

|

||||

press the *HD* button above it. You can select between 2X and 4X

|

||||

@ -206,7 +209,7 @@ upscaling, and adjust the upscaling strength, which has much the same

|

||||

meaning as in facial reconstruction. Try running this on one of your

|

||||

previously-generated images.

|

||||

|

||||

9. Finally, you can run facial reconstruction and/or upscaling

|

||||

3. Finally, you can run facial reconstruction and/or upscaling

|

||||

automatically after each Invocation. Go to the Advanced Options

|

||||

section of the Control Panel and turn on *Restore Face* and/or

|

||||

*Upscale*.

|

||||

@ -224,28 +227,32 @@ and

|

||||

[Lincoln-and-Parrot-512-transparent.png](../assets/Lincoln-and-Parrot-512-transparent.png).

|

||||

Download these images to your local machine now to continue with the walkthrough.

|

||||

|

||||

10. Click on the *Image to Image* tab icon, which is the second icon

|

||||

1. Click on the *Image to Image* tab icon, which is the second icon

|

||||

from the top on the left-hand side of the screen:

|

||||

|

||||

|

||||

<figure markdown>

|

||||

|

||||

</figure>

|

||||

|

||||

This will bring you to a screen similar to the one shown here:

|

||||

This will bring you to a screen similar to the one shown here:

|

||||

|

||||

{:width="640px"}

|

||||

<figure markdown>

|

||||

{:width="640px"}

|

||||

</figure>

|

||||

|

||||

Drag-and-drop the Lincoln-and-Parrot image into the Image panel, or

|

||||

2. Drag-and-drop the Lincoln-and-Parrot image into the Image panel, or

|

||||

click the blank area to get an upload dialog. The image will load into

|

||||

an area marked *Initial Image*. (The WebGUI will also load the most

|

||||

recently-generated image from the gallery into a section on the left,

|

||||

but this image will be replaced in the next step.)

|

||||

|

||||

11. Go to the prompt box and type *old sea captain with raven on

|

||||

3. Go to the prompt box and type *old sea captain with raven on

|

||||

shoulder* and press Invoke. A derived image will appear to the right

|

||||

of the original one:

|

||||

|

||||

{:width="640px"}

|

||||

{:width="640px"}

|

||||

|

||||

12. Experiment with the different settings. The most influential one

|

||||

4. Experiment with the different settings. The most influential one

|

||||

in Image to Image is *Image to Image Strength* located about midway

|

||||

down the control panel. By default it is set to 0.75, but can range

|

||||

from 0.0 to 0.99. The higher the value, the more of the original image

|

||||

@ -255,7 +262,7 @@ the Sampler and CFG Scale also influence the final result. You can

|

||||

also generate variations in the same way as described in Text to

|

||||

Image.

|

||||

|

||||

13. What if we only want to change certain part(s) of the image and

|

||||

5. What if we only want to change certain part(s) of the image and

|

||||

leave the rest intact? This is called Inpainting, and a future version

|

||||

of the InvokeAI web server will provide an interactive painting canvas

|

||||

on which you can directly draw the areas you wish to Inpaint into. For

|

||||

@ -263,18 +270,20 @@ now, you can achieve this effect by using an external photoeditor tool

|

||||

to make one or more regions of the image transparent as described in

|

||||

[INPAINTING.md] and uploading that.

|

||||

|

||||

The file

|

||||

[Lincoln-and-Parrot-512-transparent.png](../assets/Lincoln-and-Parrot-512-transparent.png)

|

||||

is a version of the earlier image in which the area around the parrot

|

||||

has been replaced with transparency. Click on the "x" in the upper

|

||||

right of the Initial Image and upload the transparent version. Using

|

||||

the same prompt "old sea captain with raven on shoulder" try Invoking

|

||||

an image. This time, only the parrot will be replaced, leaving the

|

||||

rest of the original image intact:

|

||||

The file

|

||||

[Lincoln-and-Parrot-512-transparent.png](../assets/Lincoln-and-Parrot-512-transparent.png)

|

||||

is a version of the earlier image in which the area around the parrot

|

||||

has been replaced with transparency. Click on the "x" in the upper

|

||||

right of the Initial Image and upload the transparent version. Using

|

||||

the same prompt "old sea captain with raven on shoulder" try Invoking

|

||||

an image. This time, only the parrot will be replaced, leaving the

|

||||

rest of the original image intact:

|

||||

|

||||

<figure markdown>

|

||||

{:width="640px"}

|

||||

</figure>

|

||||

|

||||

14. Would you like to modify a previously-generated image using the

|

||||

6. Would you like to modify a previously-generated image using the

|

||||

Image to Image facility? Easy! While in the Image to Image panel,

|

||||

hover over any of the gallery images to see a little menu of icons pop

|

||||

up. Click the picture icon to instantly send the selected image to

|

||||

@ -299,19 +308,15 @@ updates!

|

||||

## Reference

|

||||

|

||||

### Additional Options

|

||||

`--web_develop` - Starts the web server in development mode.

|

||||

|

||||

`--web_verbose` - Enables verbose logging

|

||||

|

||||

`--cors [CORS ...]` - Additional allowed origins, comma-separated

|

||||

|

||||

`--host HOST` - Web server: Host or IP to listen on. Set to 0.0.0.0 to

|

||||

accept traffic from other devices on your network.

|

||||

|

||||

`--port PORT` - Web server: Port to listen on

|

||||

|

||||

`--gui` - Start InvokeAI GUI - This is the "desktop mode" version of the web app. It uses Flask

|

||||

to create a desktop app experience of the webserver.

|

||||

|

||||

parameter <img width=160 align="right"> | effect

|

||||

-- | --

|

||||

`--web_develop` | Starts the web server in development mode.

|

||||

`--web_verbose` | Enables verbose logging

|

||||

`--cors [CORS ...]` | Additional allowed origins, comma-separated

|

||||

`--host HOST` | Web server: Host or IP to listen on. Set to 0.0.0.0 to accept traffic from other devices on your network.

|

||||

`--port PORT` | Web server: Port to listen on

|

||||

`--gui` | Start InvokeAI GUI - This is the "desktop mode" version of the web app. It uses Flask to create a desktop app experience of the webserver.

|

||||

|

||||

### Web Specific Features

|

||||

|

||||

|

||||

@ -14,12 +14,12 @@ In this document, we will talk about sampler convergence.

|

||||

|

||||

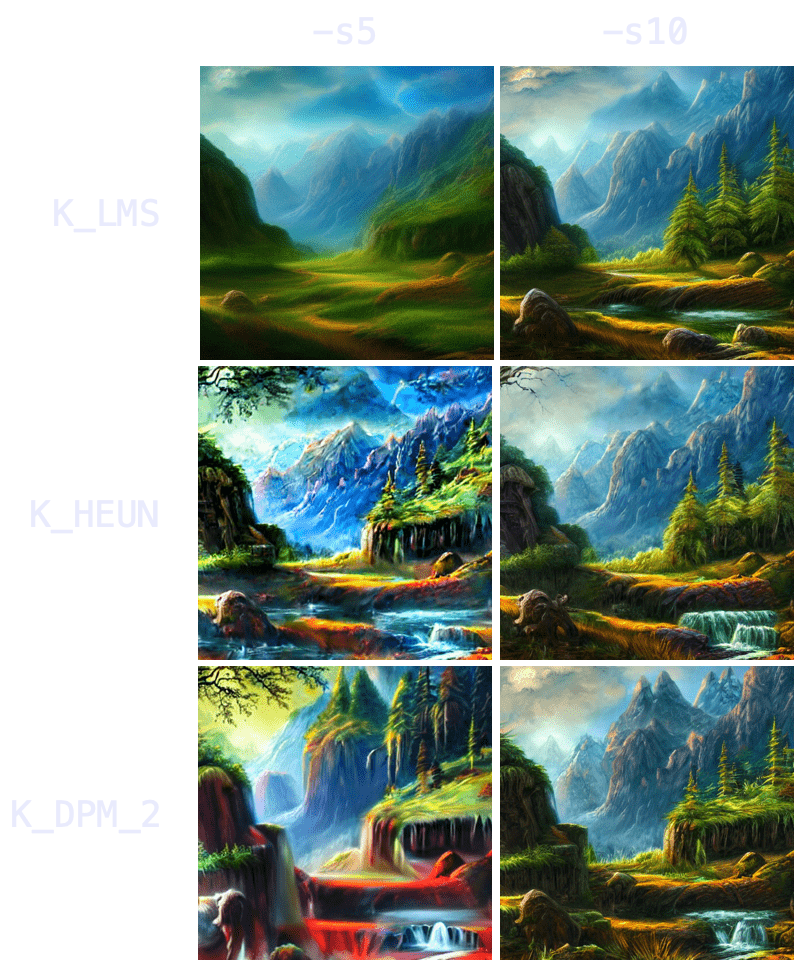

Looking for a short version? Here's a TL;DR in 3 tables.

|

||||

|

||||

| Remember |

|

||||

|:---|

|

||||

| Results converge as steps (`-s`) are increased (except for `K_DPM_2_A` and `K_EULER_A`), often at ≥ `-s100`, though some cases may require ≥ `-s700`. |

|

||||

| Producing a batch of candidate images at low (`-s8` to `-s30`) step counts can save you hours of computation. |

|

||||

| `K_HEUN` and `K_DPM_2` converge in fewer steps (but are slower). |

|

||||

| `K_DPM_2_A` and `K_EULER_A` incorporate a lot of creativity/variability. |

|

||||

!!! note "Remember"

|

||||

|

||||

- Results converge as steps (`-s`) are increased (except for `K_DPM_2_A` and `K_EULER_A`), often at ≥ `-s100`, though some cases may require ≥ `-s700`.

|

||||

- Producing a batch of candidate images at low (`-s8` to `-s30`) step counts can save you hours of computation.

|

||||

- `K_HEUN` and `K_DPM_2` converge in fewer steps (but are slower).

|

||||

- `K_DPM_2_A` and `K_EULER_A` incorporate a lot of creativity/variability.

|

||||

|

||||

<div align="center" markdown>

|

||||

|

||||

@ -36,10 +36,11 @@ Looking for a short version? Here's a TL;DR in 3 tables.

|

||||

|

||||

</div>

|

||||

|

||||

| Suggestions |

|

||||

|:---|

|

||||

| For most use cases, `K_LMS`, `K_HEUN` and `K_DPM_2` are the best choices (the latter 2 run 0.5x as quick, but tend to converge 2x as quick as `K_LMS`). At very low steps (≤ `-s8`), `K_HEUN` and `K_DPM_2` are not recommended. Use `K_LMS` instead.|

|

||||

| For variability, use `K_EULER_A` (runs 2x as quick as `K_DPM_2_A`). |

|

||||

!!! tip "suggestions"

|

||||

|

||||

For most use cases, `K_LMS`, `K_HEUN` and `K_DPM_2` are the best choices (the latter 2 run 0.5x as quick, but tend to converge 2x as quick as `K_LMS`). At very low steps (≤ `-s8`), `K_HEUN` and `K_DPM_2` are not recommended. Use `K_LMS` instead.

|

||||

|

||||

For variability, use `K_EULER_A` (runs 2x as quick as `K_DPM_2_A`).

|

||||

|

||||

---

|

||||

|

||||

@ -64,15 +65,15 @@ This realization is very useful because it means you don't need to create a batc

|

||||

You can produce the same 100 images at `-s10` to `-s30` using a K-sampler (since they converge faster), get a rough idea of the final result, choose your 2 or 3 favorite ones, and then run `-s100` on those images to polish some details.

|

||||

The latter technique is 3-8x as quick.

|

||||

|

||||

Example:

|

||||

!!! example

|

||||

|

||||

At 60s per 100 steps.

|

||||

At 60s per 100 steps.

|

||||

|

||||

(Option A) 60s * 100 images = 6000s (100 images at `-s100`, manually picking 3 favorites)

|

||||

A) 60s * 100 images = 6000s (100 images at `-s100`, manually picking 3 favorites)

|

||||

|

||||

(Option B) 6s * 100 images + 60s * 3 images = 780s (100 images at `-s10`, manually picking 3 favorites, and running those 3 at `-s100` to polish details)

|

||||

B) 6s * 100 images + 60s * 3 images = 780s (100 images at `-s10`, manually picking 3 favorites, and running those 3 at `-s100` to polish details)

|

||||

|

||||

The result is 1 hour and 40 minutes (Option A) vs 13 minutes (Option B).

|

||||

The result is __1 hour and 40 minutes__ for Variant A, vs __13 minutes__ for Variant B.
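The arithmetic behind the two variants can be checked with a short script. This is only a sketch: the 60 s per 100 steps figure comes from the example itself, while the helper name `batch_seconds` is made up for illustration and is not part of InvokeAI.

```python
# Timing figure from the example: 60 seconds per 100 sampler steps.
SECONDS_PER_100_STEPS = 60


def batch_seconds(images: int, steps: int) -> float:
    """Total seconds to render `images` images at `steps` steps each (hypothetical helper)."""
    return images * steps * SECONDS_PER_100_STEPS / 100


# Variant A: render all 100 candidates at -s100.
variant_a = batch_seconds(images=100, steps=100)

# Variant B: render 100 candidates at -s10, then polish 3 favorites at -s100.
variant_b = batch_seconds(images=100, steps=10) + batch_seconds(images=3, steps=100)

print(f"A: {variant_a:.0f}s, B: {variant_b:.0f}s, speed-up: {variant_a / variant_b:.1f}x")
```

With these inputs the script gives 6000 s for Variant A and 780 s for Variant B, which matches the totals quoted in the example.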

|

||||

|

||||

### *Topic convergence*

|

||||

|

||||

@ -114,9 +115,12 @@ Note also the point of convergence may not be the most desirable state (e.g. I p

|

||||

|

||||

Once we understand the concept of sampler convergence, we must look into the performance of each sampler in terms of steps (iterations) per second, as not all samplers run at the same speed.

|

||||

|

||||

On my M1 Max with 64GB of RAM, for a 512x512 image:

|

||||

| Sampler | (3 sample average) it/s |

|

||||

|---|---|

|

||||

<div align="center" markdown>

|

||||

|

||||

On my M1 Max with 64GB of RAM, for a 512x512 image

|

||||

|

||||

| Sampler | (3 sample average) it/s |

|

||||

| :--- | :--- |

|

||||

| `DDIM` | 1.89 |

|

||||

| `PLMS` | 1.86 |

|

||||

| `K_EULER` | 1.86 |

|

||||

@ -126,11 +130,13 @@ On my M1 Max with 64GB of RAM, for a 512x512 image:

|

||||

| `K_DPM_2_A` | 0.95 (slower) |

|

||||

| `K_EULER_A` | 1.86 |

|

||||

|

||||

</div>

|

||||

|

||||

Combining our results with the steps per second of each sampler, three choices come out on top: `K_LMS`, `K_HEUN` and `K_DPM_2` (where the latter two run 0.5x as quick but tend to converge 2x as quick as `K_LMS`). For creativity and a lot of variation between iterations, `K_EULER_A` can be a good choice (which runs 2x as quick as `K_DPM_2_A`).

|

||||

|

||||

Additionally, image generation at very low steps (≤ `-s8`) is not recommended for `K_HEUN` and `K_DPM_2`. Use `K_LMS` instead.
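To see how iterations per second translate into wall-clock time, the rough sketch below uses only the table rows visible in this excerpt (the measurements are specific to the author's M1 Max at 512x512). The function name `seconds_for` and the 50-step count are illustrative assumptions, not part of InvokeAI.

```python
# Iterations per second, copied from the visible rows of the table above.
# The remaining rows are not shown in this excerpt, so they are left out.
ITS_PER_SECOND = {
    "DDIM": 1.89,
    "PLMS": 1.86,
    "K_EULER": 1.86,
    "K_DPM_2_A": 0.95,
    "K_EULER_A": 1.86,
}


def seconds_for(sampler: str, steps: int) -> float:
    """Estimated seconds for one image at the given step count (hypothetical helper)."""
    return steps / ITS_PER_SECOND[sampler]


# How much faster K_EULER_A runs than K_DPM_2_A on this machine.
ratio = ITS_PER_SECOND["K_EULER_A"] / ITS_PER_SECOND["K_DPM_2_A"]
print(f"K_EULER_A runs about {ratio:.2f}x as fast as K_DPM_2_A")

for name in ITS_PER_SECOND:
    print(f"{name}: {seconds_for(name, 50):.1f}s for 50 steps")
```

The computed ratio is a little under 2, consistent with the "runs 2x as quick" rule of thumb in the text.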

|

||||

|

||||

<img width="397" alt="192044949-67d5d441-a0d5-4d5a-be30-5dda4fc28a00-min" src="https://user-images.githubusercontent.com/50542132/192046823-2714cb29-bbf3-4eb1-9213-e27a0963905c.png">

|

||||

{ width=600}

|

||||

|

||||

### *Three key points*

|

||||

|

||||

|

||||

@ -1,5 +1,7 @@

|

||||

---

|

||||

title: F.A.Q.

|

||||

hide:

|

||||

- toc

|

||||

---

|

||||

|

||||

# :material-frequently-asked-questions: F.A.Q.

|

||||

@ -63,7 +65,7 @@ Reinstall the stable diffusion modules. Enter the `stable-diffusion` directory a

|

||||

|

||||

### **QUESTION**

|

||||

|

||||

`invoke.py` dies, complaining of various missing modules, none of which starts with `ldm``.

|

||||

`invoke.py` dies, complaining of various missing modules, none of which starts with `ldm`.

|

||||

|

||||

### **SOLUTION**

|

||||

|

||||

@ -87,9 +89,7 @@ Usually this will be sufficient, but if you start to see errors about

|

||||

missing or incorrect modules, use the command `pip install -e .`

|

||||

and/or `conda env update` (These commands won't break anything.)

|

||||

|

||||

`pip install -e .` and/or

|

||||

|

||||

`conda env update -f environment.yaml`

|

||||

`pip install -e .` and/or `conda env update -f environment.yaml`

|

||||

|

||||

(These commands won't break anything.)

|

||||

|

||||

|

||||

@ -124,18 +124,18 @@ While that is downloading, open Terminal and run the following commands one at a

|

||||

conda activate invokeai

|

||||

|

||||

# This will download some bits and pieces and may take a while

|

||||

python scripts/preload_models.py

|

||||

(invokeai) python scripts/preload_models.py

|

||||

|

||||

# Run SD!

|

||||

python scripts/dream.py

|

||||

(invokeai) python scripts/dream.py

|

||||

|

||||

# or run the web interface!

|

||||

python scripts/invoke.py --web

|

||||

(invokeai) python scripts/invoke.py --web

|

||||

|

||||

# The original scripts should work as well.

|

||||

python scripts/orig_scripts/txt2img.py \

|

||||

--prompt "a photograph of an astronaut riding a horse" \

|

||||

--plms

|

||||

(invokeai) python scripts/orig_scripts/txt2img.py \

|

||||

--prompt "a photograph of an astronaut riding a horse" \

|

||||

--plms

|

||||

```

|

||||

!!! info

|

||||

|

||||

@ -207,7 +207,7 @@ conda update \

|

||||

There are several causes of these errors:

|

||||

|

||||

1. Did you remember to `conda activate ldm`? If your terminal prompt begins with

|

||||

"(ldm)" then you activated it. If it begins with "(base)" or something else

|

||||

"(invokeai)" then you activated it. If it begins with "(base)" or something else

|

||||

you haven't.

|

||||

|

||||

2. You might've run `./scripts/preload_models.py` or `./scripts/invoke.py`

|

||||

@ -224,7 +224,7 @@ There are several causes of these errors:

|

||||

conda env remove -n ldm

|

||||

conda env create -f environment-mac.yml

|

||||

```

|

||||

|

||||

|

||||

4. If you have activated the ldm virtual environment and tried rebuilding it,

|

||||

maybe the problem could be that I have something installed that you don't and

|

||||

you'll just need to manually install it. Make sure you activate the virtual

|

||||

@ -289,7 +289,7 @@ should actually be the _same python_, which you can verify by comparing the

|

||||

output of `python3 -V` and `python -V`.

|

||||

|

||||

```bash

|

||||

(ldm) % which python

|

||||

(invokeai) % which python

|

||||

/Users/name/miniforge3/envs/ldm/bin/python

|

||||

```

|

||||

|

||||

|

||||

@ -2,7 +2,7 @@

|

||||

site_name: Stable Diffusion Toolkit Docs

|

||||

site_url: https://invoke-ai.github.io/InvokeAI

|

||||

site_author: mauwii

|

||||

dev_addr: "127.0.0.1:8080"

|

||||

dev_addr: '127.0.0.1:8080'

|

||||

|

||||

# Repository

|

||||

repo_name: 'invoke-ai/InvokeAI'

|

||||

@ -26,6 +26,7 @@ theme:

|

||||

name: Switch to dark mode

|

||||

- media: '(prefers-color-scheme: dark)'

|

||||

scheme: slate

|

||||

primary: blue

|

||||

toggle:

|

||||

icon: material/lightbulb-outline

|

||||

name: Switch to light mode

|

||||

|

||||
