diff --git a/.github/workflows/pypi-release.yml b/.github/workflows/pypi-release.yml

index 9e58fb3ae0..5b7d2cd2fa 100644

--- a/.github/workflows/pypi-release.yml

+++ b/.github/workflows/pypi-release.yml

@@ -28,7 +28,7 @@ jobs:

run: twine check dist/*

- name: check PyPI versions

- if: github.ref == 'refs/heads/main' || github.ref == 'refs/heads/v2.3' || github.ref == 'refs/heads/v3.3.0post1'

+ if: github.ref == 'refs/heads/main' || startsWith(github.ref, 'refs/heads/release/')

run: |

pip install --upgrade requests

python -c "\

diff --git a/docs/installation/010_INSTALL_AUTOMATED.md b/docs/installation/010_INSTALL_AUTOMATED.md

index 0937c07bca..52192f33c0 100644

--- a/docs/installation/010_INSTALL_AUTOMATED.md

+++ b/docs/installation/010_INSTALL_AUTOMATED.md

@@ -40,7 +40,7 @@ experimental versions later.

this, open up a command-line window ("Terminal" on Linux and

Macintosh, "Command" or "Powershell" on Windows) and type `python

--version`. If Python is installed, it will print out the version

- number. If it is version `3.9.*`, `3.10.*` or `3.11.*` you meet

+ number. If it is version `3.10.*` or `3.11.*` you meet

requirements.

!!! warning "What to do if you have an unsupported version"

@@ -48,7 +48,7 @@ experimental versions later.

Go to [Python Downloads](https://www.python.org/downloads/)

and download the appropriate installer package for your

platform. We recommend [Version

- 3.10.9](https://www.python.org/downloads/release/python-3109/),

+ 3.10.12](https://www.python.org/downloads/release/python-31012/),

which has been extensively tested with InvokeAI.

_Please select your platform in the section below for platform-specific

diff --git a/docs/installation/020_INSTALL_MANUAL.md b/docs/installation/020_INSTALL_MANUAL.md

index a19992d266..27484c0ffd 100644

--- a/docs/installation/020_INSTALL_MANUAL.md

+++ b/docs/installation/020_INSTALL_MANUAL.md

@@ -32,7 +32,7 @@ gaming):

* **Python**

- version 3.9 through 3.11

+ version 3.10 through 3.11

* **CUDA Tools**

@@ -65,7 +65,7 @@ gaming):

To install InvokeAI with virtual environments and the PIP package

manager, please follow these steps:

-1. Please make sure you are using Python 3.9 through 3.11. The rest of the install

+1. Please make sure you are using Python 3.10 through 3.11. The rest of the install

procedure depends on this and will not work with other versions:

```bash

diff --git a/docs/installation/060_INSTALL_PATCHMATCH.md b/docs/installation/060_INSTALL_PATCHMATCH.md

index ccfd19d207..a9646f8b60 100644

--- a/docs/installation/060_INSTALL_PATCHMATCH.md

+++ b/docs/installation/060_INSTALL_PATCHMATCH.md

@@ -59,8 +59,7 @@ Prior to installing PyPatchMatch, you need to take the following steps:

`from patchmatch import patch_match`: It should look like the following:

```py

- Python 3.9.5 (default, Nov 23 2021, 15:27:38)

- [GCC 9.3.0] on linux

+ Python 3.10.12 (main, Jun 11 2023, 05:26:28) [GCC 11.4.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> from patchmatch import patch_match

Compiling and loading c extensions from "/home/lstein/Projects/InvokeAI/.invokeai-env/src/pypatchmatch/patchmatch".

diff --git a/docs/nodes/communityNodes.md b/docs/nodes/communityNodes.md

index 47ed407695..d5a5d5654f 100644

--- a/docs/nodes/communityNodes.md

+++ b/docs/nodes/communityNodes.md

@@ -8,28 +8,42 @@ To download a node, simply download the `.py` node file from the link and add it

To use a community workflow, download the the `.json` node graph file and load it into Invoke AI via the **Load Workflow** button in the Workflow Editor.

---------------------------------

+- Community Nodes

+ + [Depth Map from Wavefront OBJ](#depth-map-from-wavefront-obj)

+ + [Film Grain](#film-grain)

+ + [Generative Grammar-Based Prompt Nodes](#generative-grammar-based-prompt-nodes)

+ + [GPT2RandomPromptMaker](#gpt2randompromptmaker)

+ + [Grid to Gif](#grid-to-gif)

+ + [Halftone](#halftone)

+ + [Ideal Size](#ideal-size)

+ + [Image and Mask Composition Pack](#image-and-mask-composition-pack)

+ + [Image to Character Art Image Nodes](#image-to-character-art-image-nodes)

+ + [Image Picker](#image-picker)

+ + [Load Video Frame](#load-video-frame)

+ + [Make 3D](#make-3d)

+ + [Oobabooga](#oobabooga)

+ + [Prompt Tools](#prompt-tools)

+ + [Retroize](#retroize)

+ + [Size Stepper Nodes](#size-stepper-nodes)

+ + [Text font to Image](#text-font-to-image)

+ + [Thresholding](#thresholding)

+ + [XY Image to Grid and Images to Grids nodes](#xy-image-to-grid-and-images-to-grids-nodes)

+- [Example Node Template](#example-node-template)

+- [Disclaimer](#disclaimer)

+- [Help](#help)

+

--------------------------------

-### Make 3D

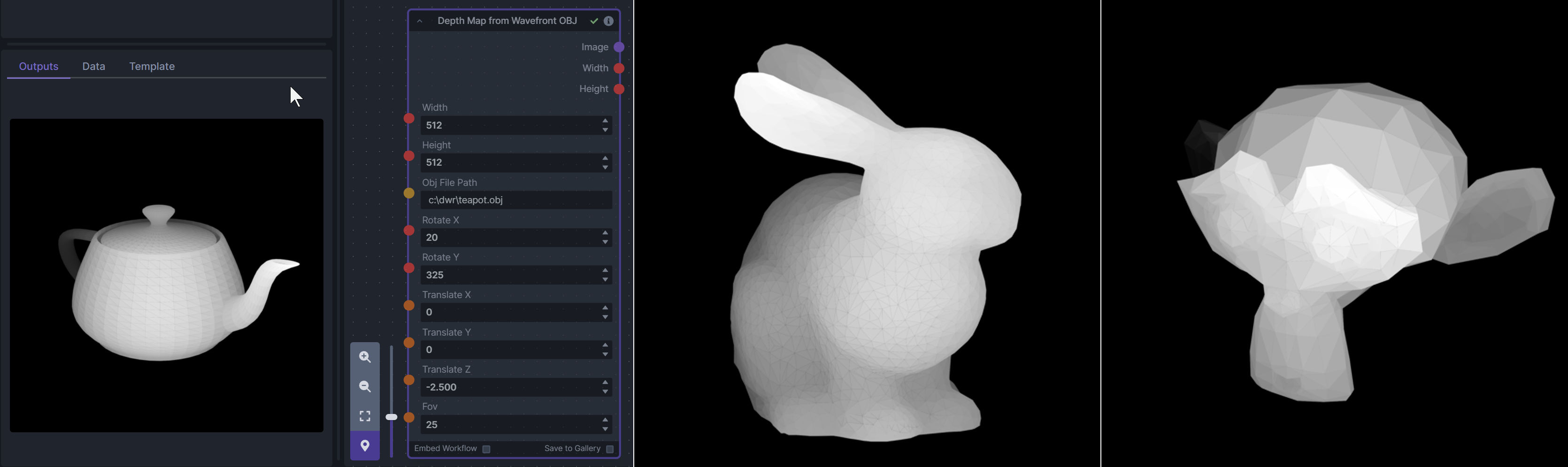

+### Depth Map from Wavefront OBJ

-**Description:** Create compelling 3D stereo images from 2D originals.

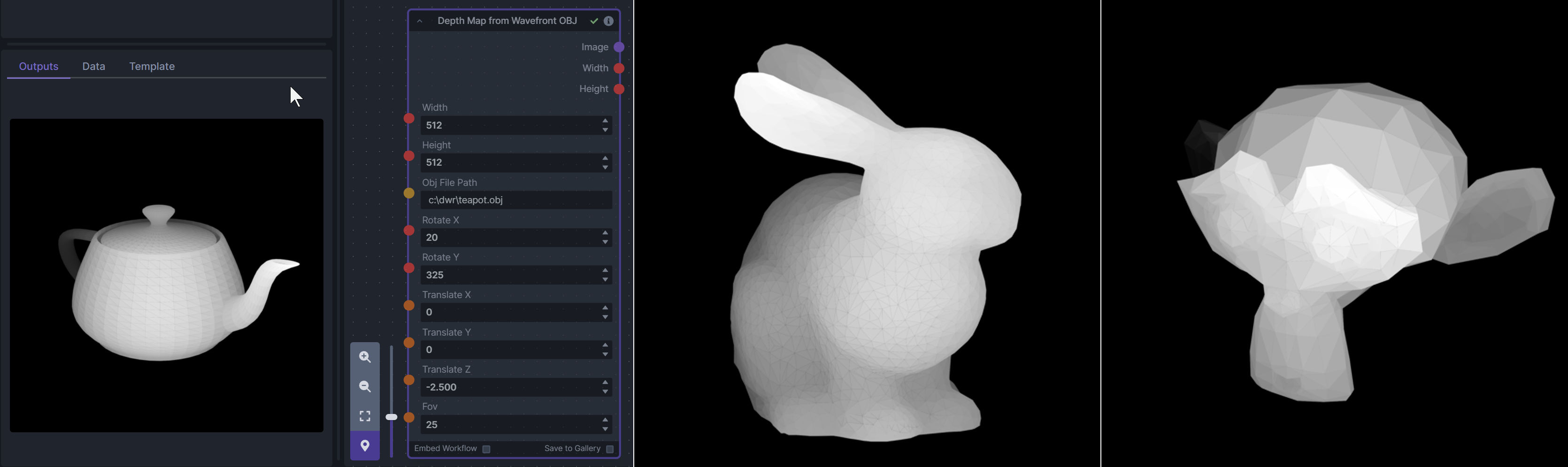

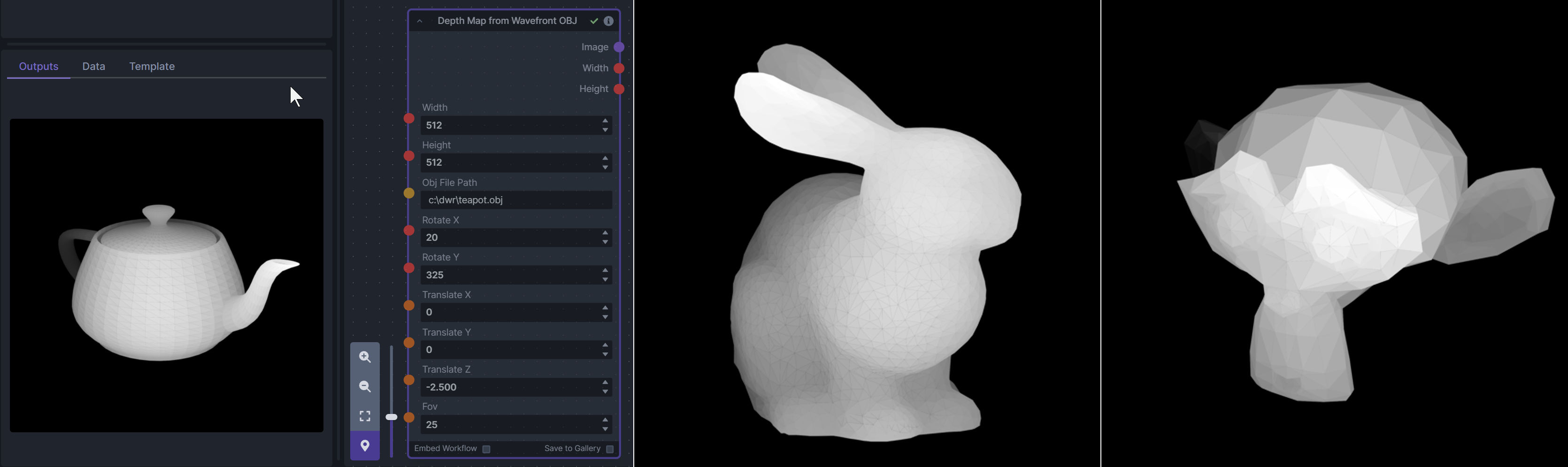

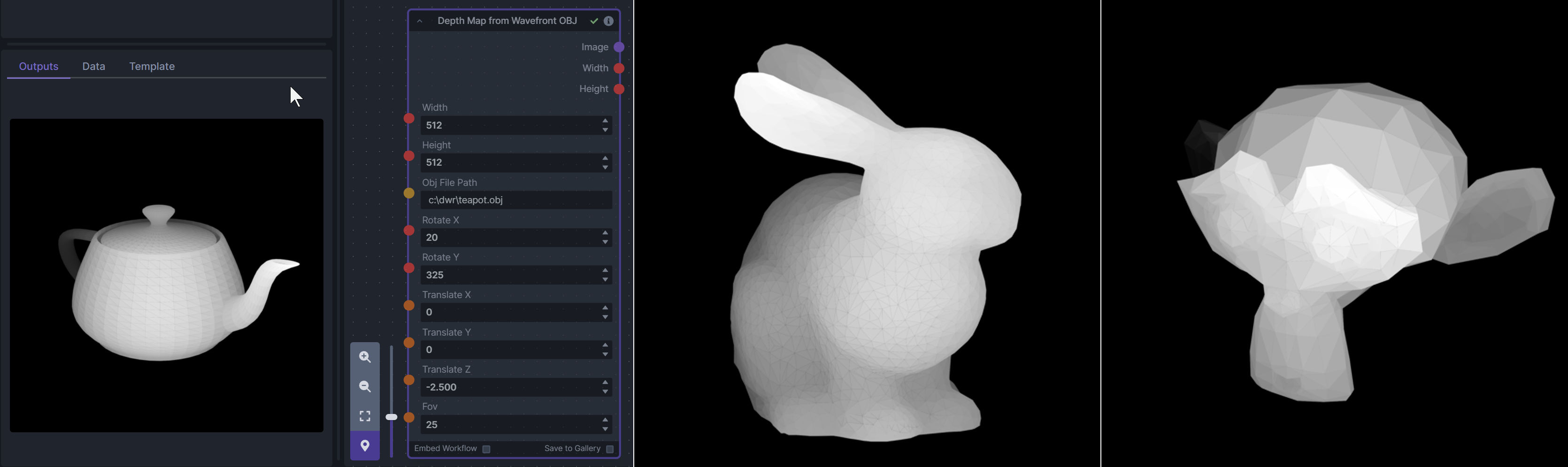

+**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

-**Node Link:** [https://gitlab.com/srcrr/shift3d/-/raw/main/make3d.py](https://gitlab.com/srcrr/shift3d)

+To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.

-**Example Node Graph:** https://gitlab.com/srcrr/shift3d/-/raw/main/example-workflow.json?ref_type=heads&inline=false

+**Node Link:** https://github.com/dwringer/depth-from-obj-node

-**Output Examples**

-

-{: style="height:512px;width:512px"}

-{: style="height:512px;width:512px"}

-

---------------------------------

-### Ideal Size

-

-**Description:** This node calculates an ideal image size for a first pass of a multi-pass upscaling. The aim is to avoid duplication that results from choosing a size larger than the model is capable of.

-

-**Node Link:** https://github.com/JPPhoto/ideal-size-node

+**Example Usage:**

+ --------------------------------

### Film Grain

@@ -39,68 +53,19 @@ To use a community workflow, download the the `.json` node graph file and load i

**Node Link:** https://github.com/JPPhoto/film-grain-node

--------------------------------

-### Image Picker

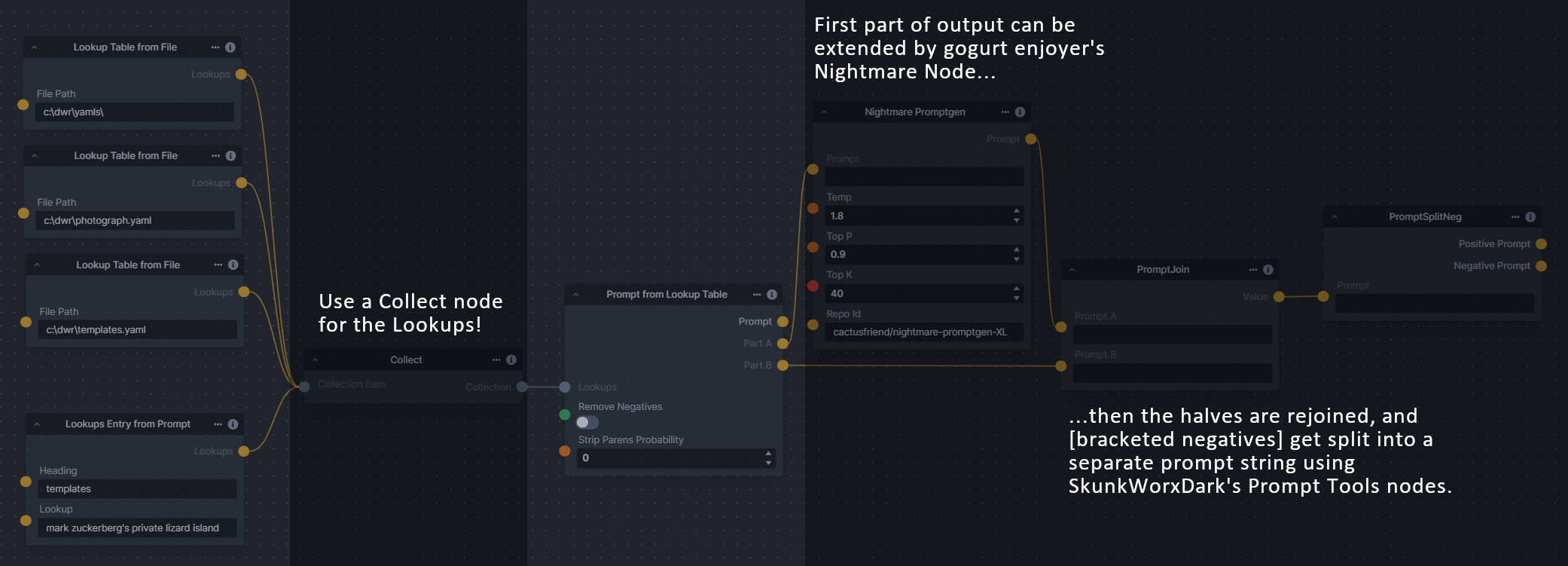

+### Generative Grammar-Based Prompt Nodes

-**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

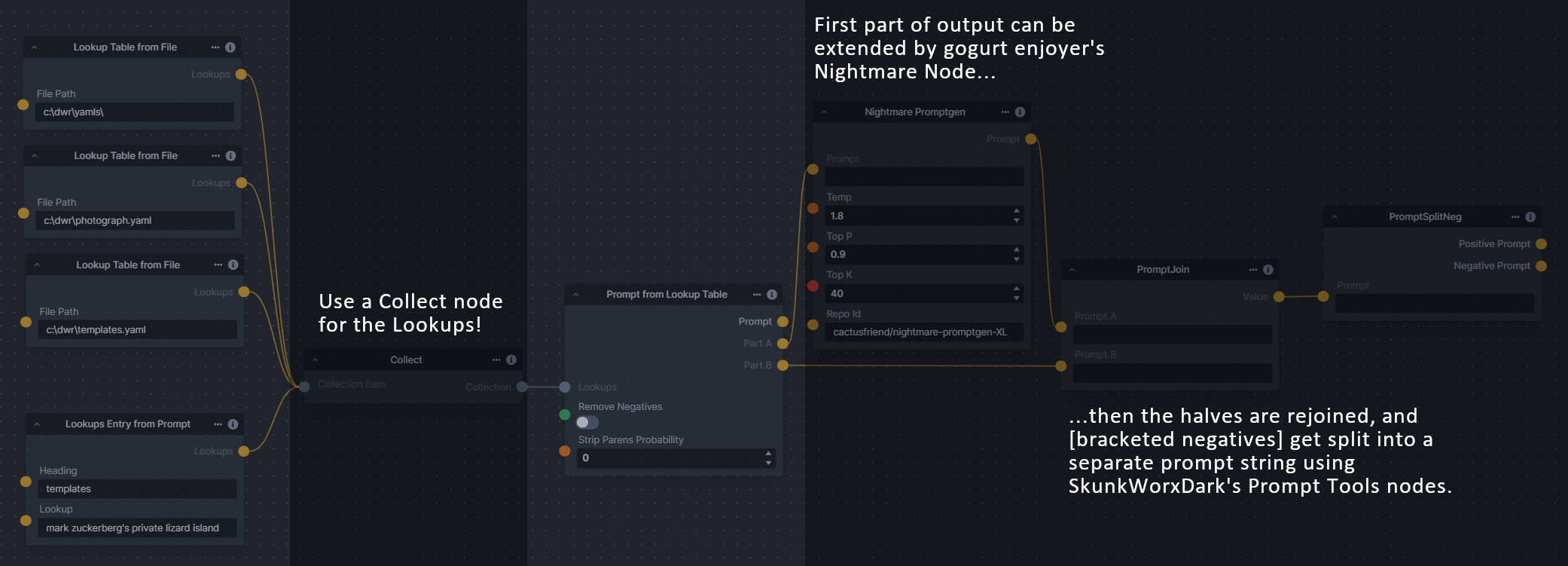

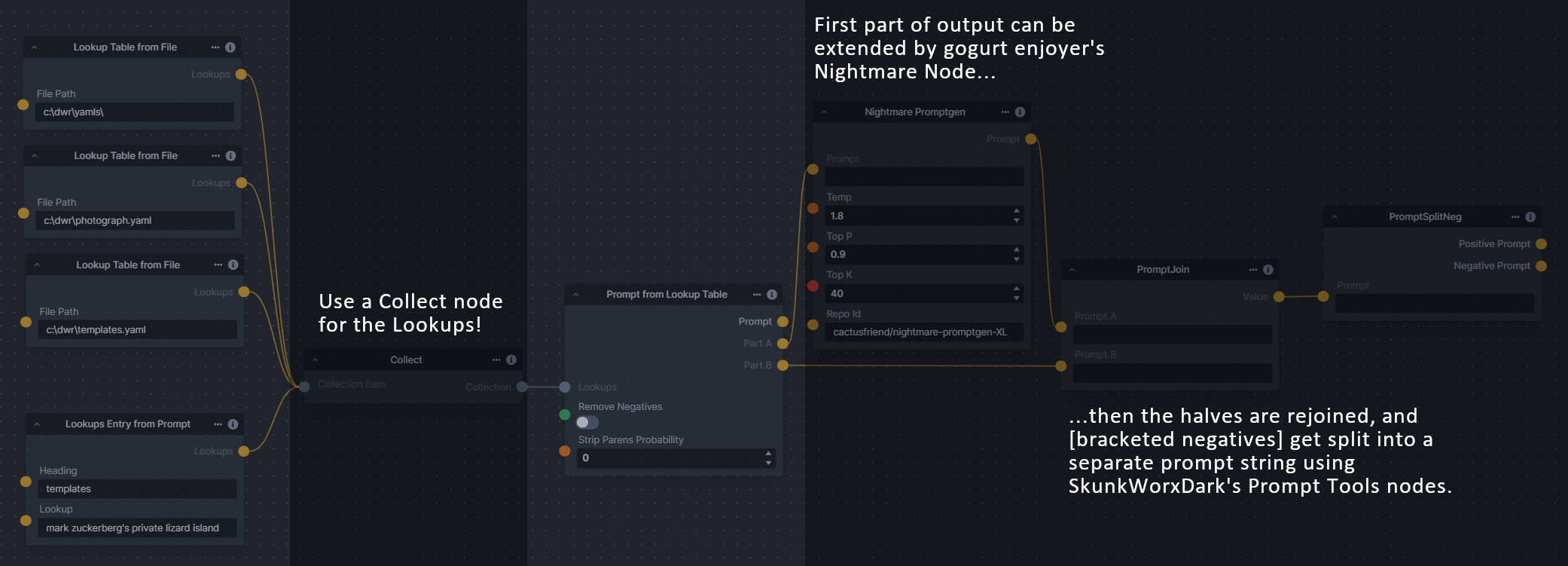

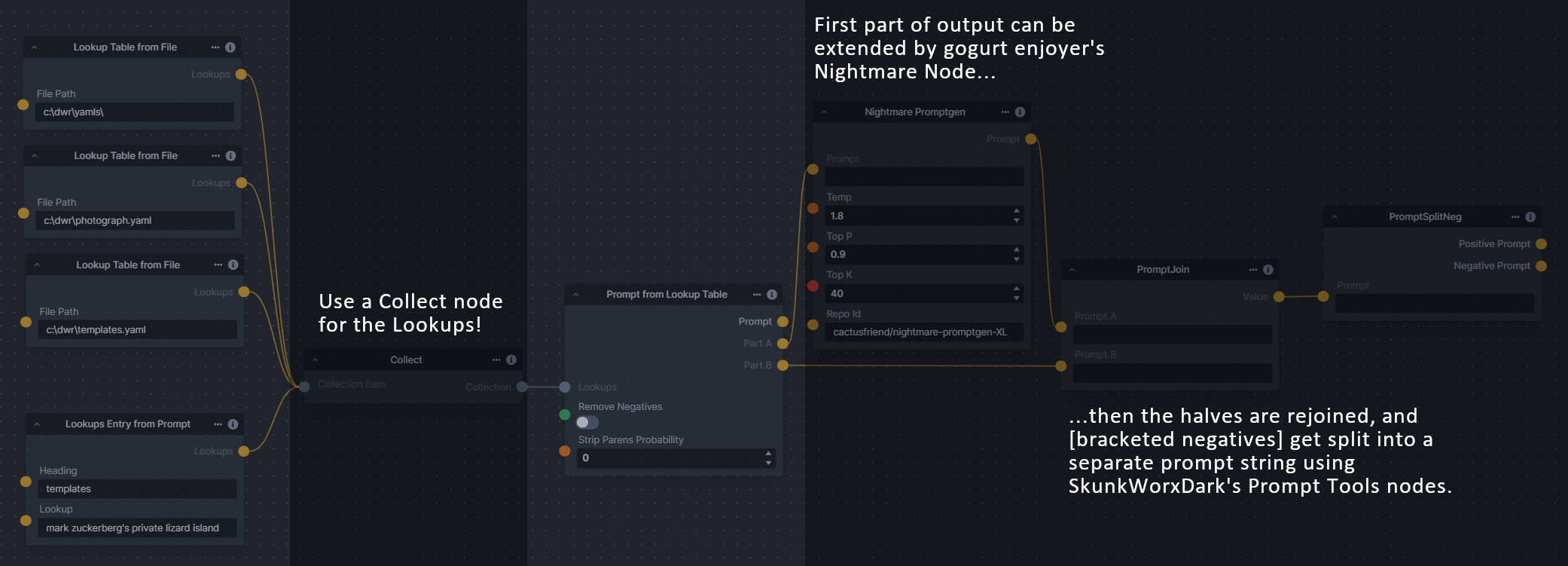

+**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no nonterminal terms remain in the string.

-**Node Link:** https://github.com/JPPhoto/image-picker-node

+This includes 3 Nodes:

+- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

+- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

+- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.

---------------------------------

-### Thresholding

+**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

-**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.

-

-**Node Link:** https://github.com/JPPhoto/thresholding-node

-

-**Examples**

-

-Input:

-

-{: style="height:512px;width:512px"}

-

-Highlights/Midtones/Shadows:

-

-

- -

- -

- -

-Highlights/Midtones/Shadows (with LUT blur enabled):

-

-

-

---------------------------------

-### Halftone

-

-**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.

-

-**Node Link:** https://github.com/JPPhoto/halftone-node

-

-**Example**

-

-Input:

-

-{: style="height:512px;width:512px"}

-

-Halftone Output:

-

-{: style="height:512px;width:512px"}

-

-CMYK Halftone Output:

-

-{: style="height:512px;width:512px"}

-

---------------------------------

-### Retroize

-

-**Description:** Retroize is a collection of nodes for InvokeAI to "Retroize" images. Any image can be given a fresh coat of retro paint with these nodes, either from your gallery or from within the graph itself. It includes nodes to pixelize, quantize, palettize, and ditherize images; as well as to retrieve palettes from existing images.

-

-**Node Link:** https://github.com/Ar7ific1al/invokeai-retroizeinode/

-

-**Retroize Output Examples**

-

-

+**Example Usage:**

+ --------------------------------

### GPT2RandomPromptMaker

@@ -113,76 +78,49 @@ CMYK Halftone Output:

Generated Prompt: An enchanted weapon will be usable by any character regardless of their alignment.

-

+ --------------------------------

-### Load Video Frame

+### Grid to Gif

-**Description:** This is a video frame image provider + indexer/video creation nodes for hooking up to iterators and ranges and ControlNets and such for invokeAI node experimentation. Think animation + ControlNet outputs.

+**Description:** One node that turns a grid image into an image collection, one node that turns an image collection into a gif.

-**Node Link:** https://github.com/helix4u/load_video_frame

+**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

-**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

+**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

-**Output Example:**

+**Output Examples**

-

-[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

+ --------------------------------

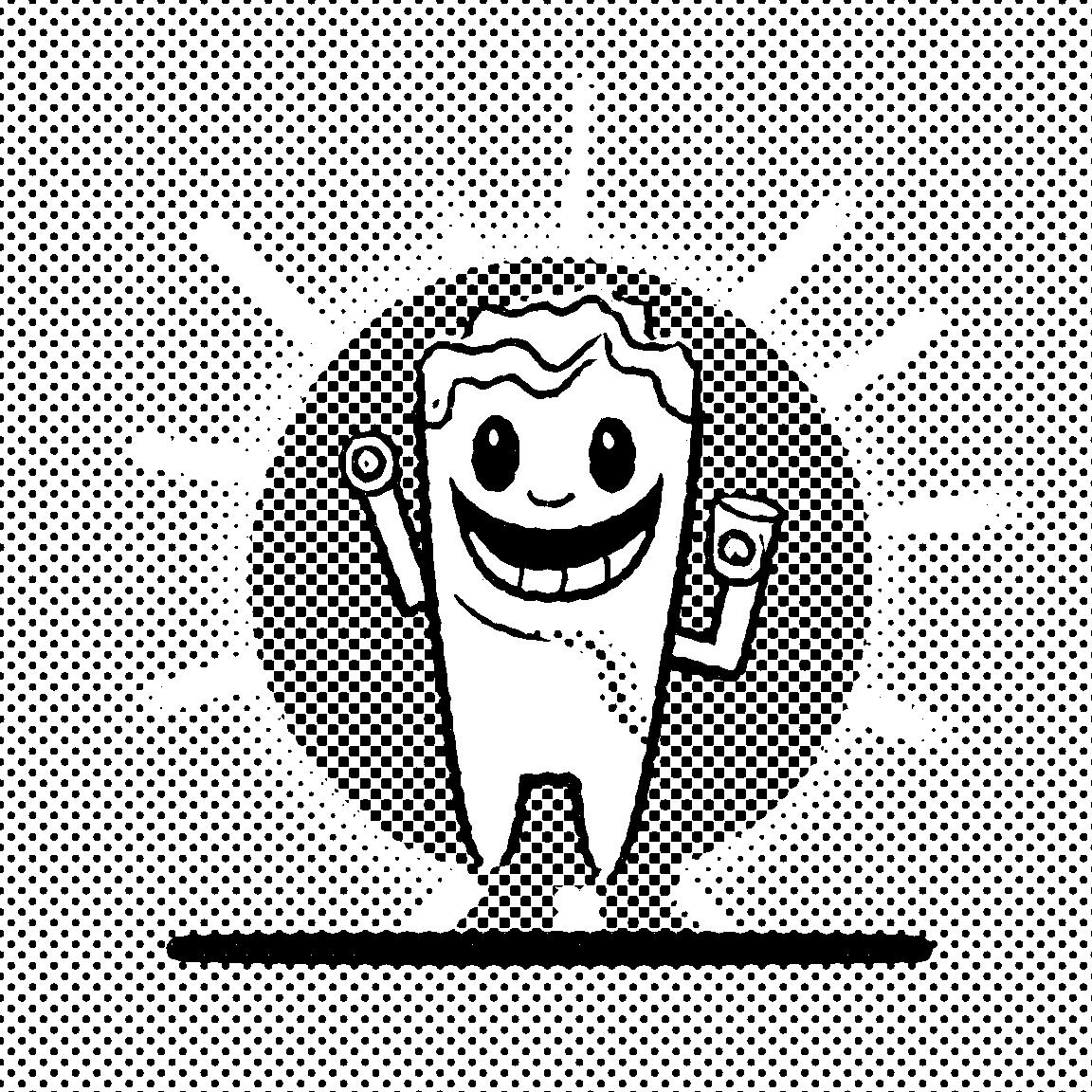

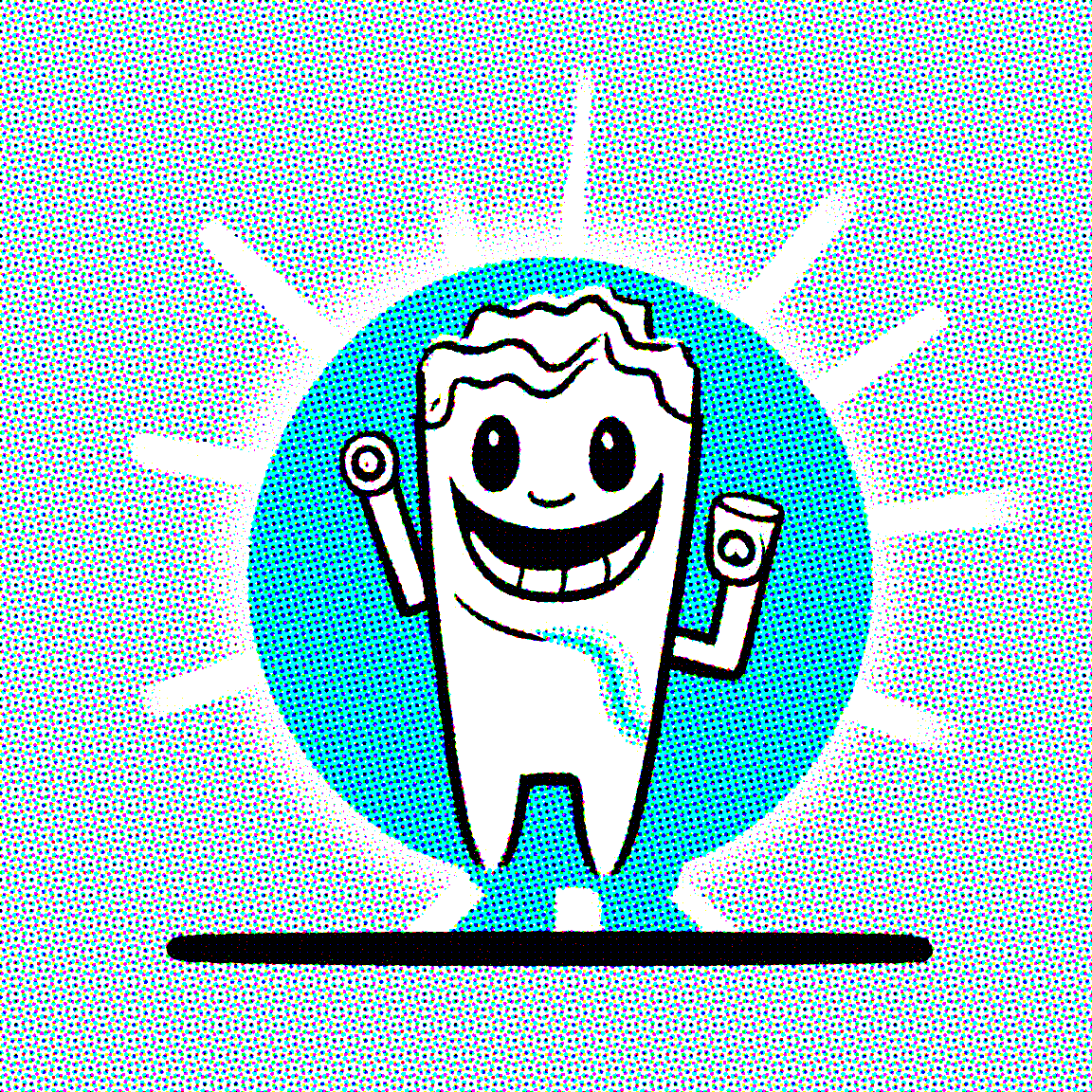

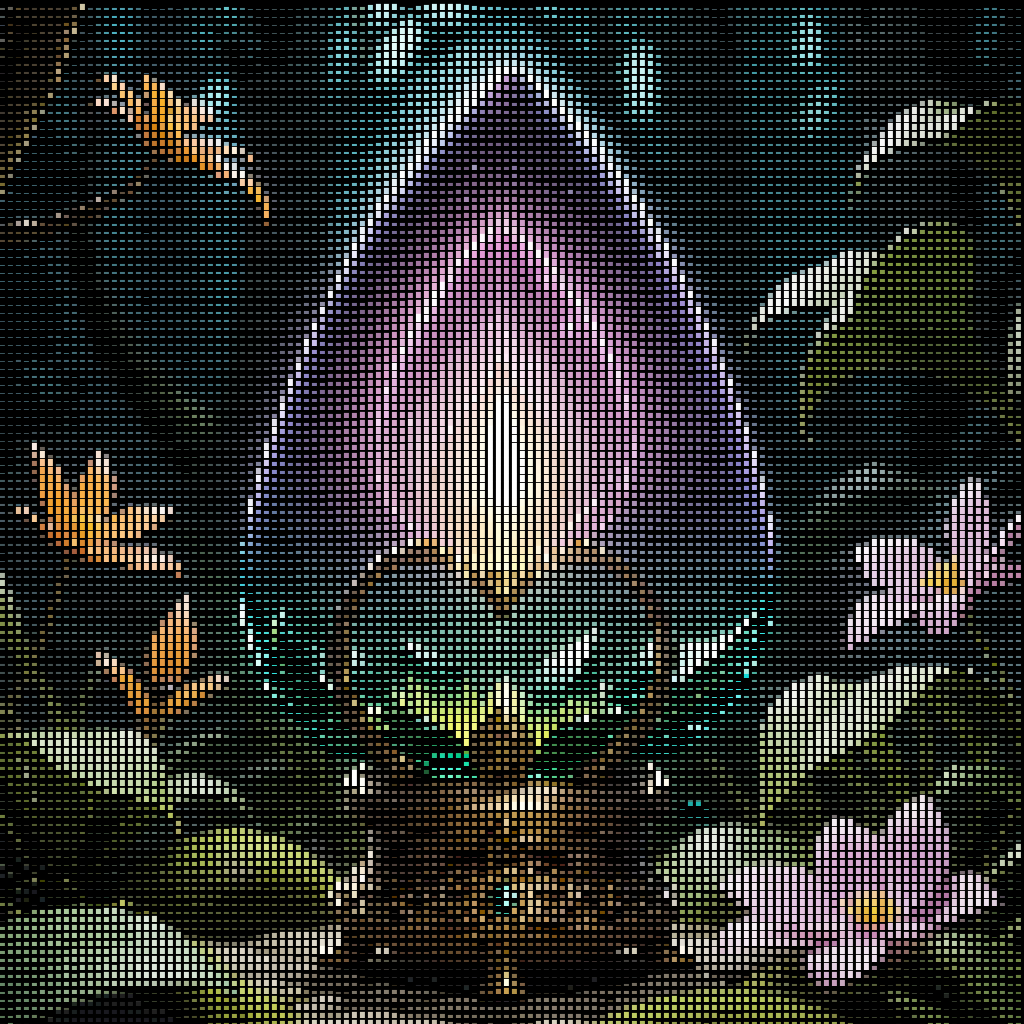

+### Halftone

-### Oobabooga

+**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.

-**Description:** asks a local LLM running in Oobabooga's Text-Generation-Webui to write a prompt based on the user input.

+**Node Link:** https://github.com/JPPhoto/halftone-node

-**Link:** https://github.com/sammyf/oobabooga-node

+**Example**

+Input:

-**Example:**

+ -"describe a new mystical creature in its natural environment"

+Halftone Output:

-*can return*

+ -"The mystical creature I am describing to you is called the "Glimmerwing". It is a majestic, iridescent being that inhabits the depths of the most enchanted forests and glimmering lakes. Its body is covered in shimmering scales that reflect every color of the rainbow, and it has delicate, translucent wings that sparkle like diamonds in the sunlight. The Glimmerwing's home is a crystal-clear lake, surrounded by towering trees with leaves that shimmer like jewels. In this serene environment, the Glimmerwing spends its days swimming gracefully through the water, chasing schools of glittering fish and playing with the gentle ripples of the lake's surface.

-As the sun sets, the Glimmerwing perches on a branch of one of the trees, spreading its wings to catch the last rays of light. The creature's scales glow softly, casting a rainbow of colors across the forest floor. The Glimmerwing sings a haunting melody, its voice echoing through the stillness of the night air. Its song is said to have the power to heal the sick and bring peace to troubled souls. Those who are lucky enough to hear the Glimmerwing's song are forever changed by its beauty and grace."

+CMYK Halftone Output:

-

-

-**Requirement**

-

-a Text-Generation-Webui instance (might work remotely too, but I never tried it) and obviously InvokeAI 3.x

-

-**Note**

-

-This node works best with SDXL models, especially as the style can be described independantly of the LLM's output.

+ --------------------------------

-### Depth Map from Wavefront OBJ

+### Ideal Size

-**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

+**Description:** This node calculates an ideal image size for a first pass of a multi-pass upscaling. The aim is to avoid duplication that results from choosing a size larger than the model is capable of.

-To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.

-

-**Node Link:** https://github.com/dwringer/depth-from-obj-node

-

-**Example Usage:**

-

-

---------------------------------

-### Generative Grammar-Based Prompt Nodes

-

-**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no more nonterminal terms remain in the string.

-

-This includes 3 Nodes:

-- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

-- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

-- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.

-

-**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

-

-**Example Usage:**

-

+**Node Link:** https://github.com/JPPhoto/ideal-size-node

--------------------------------

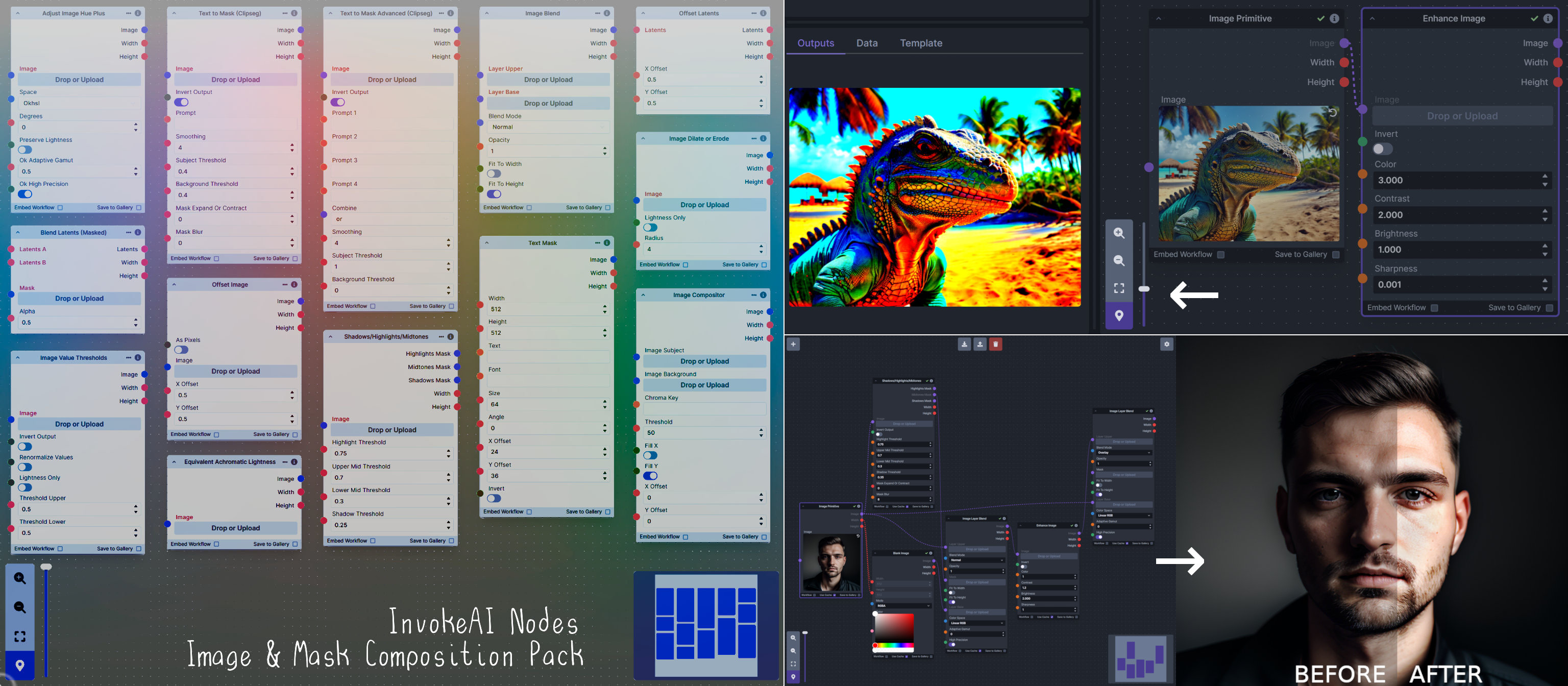

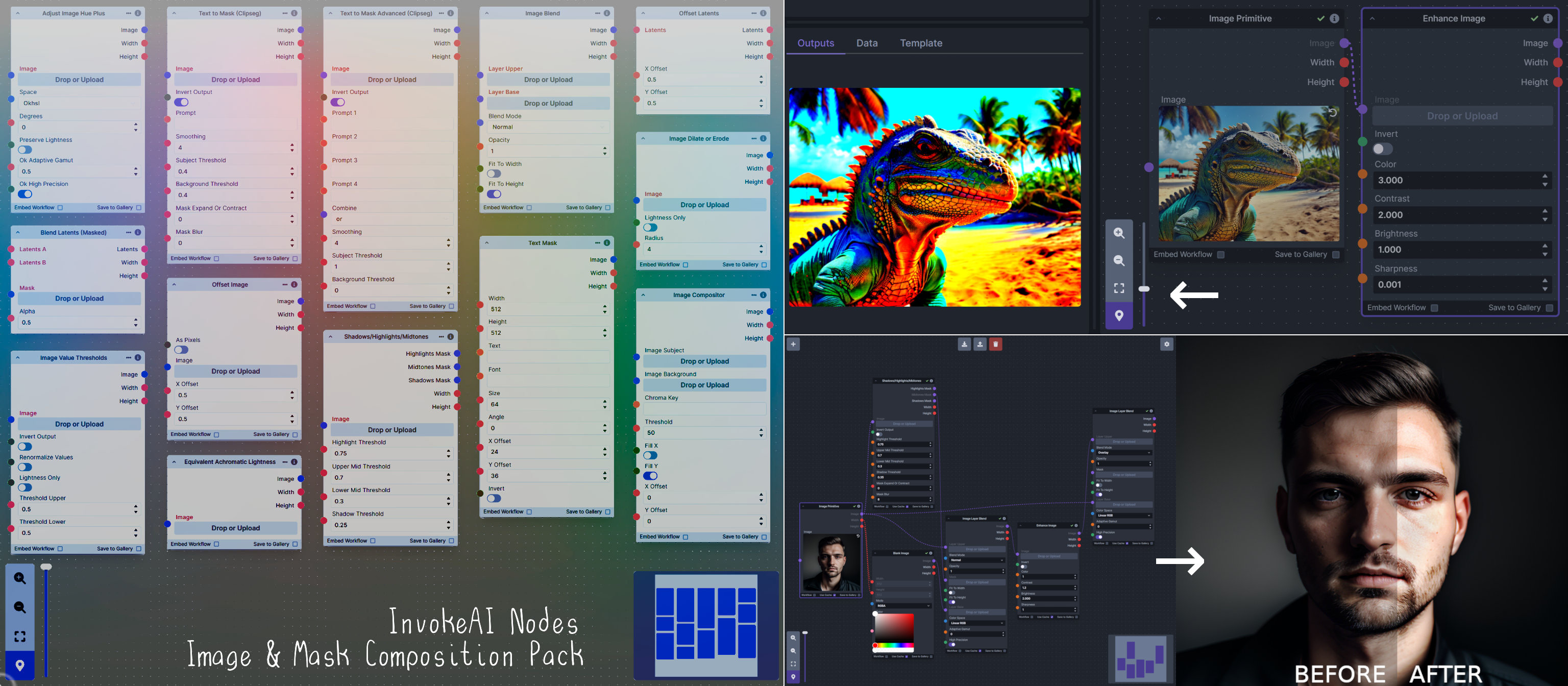

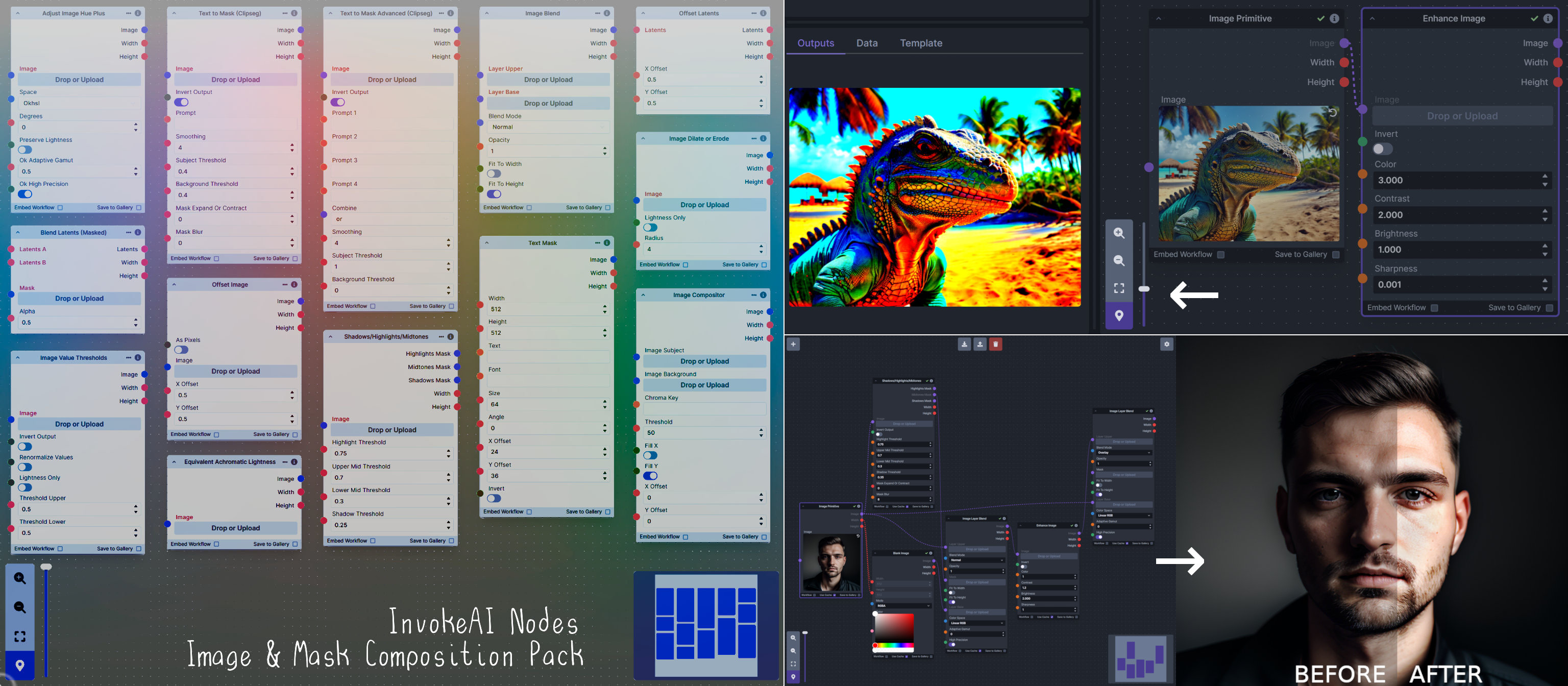

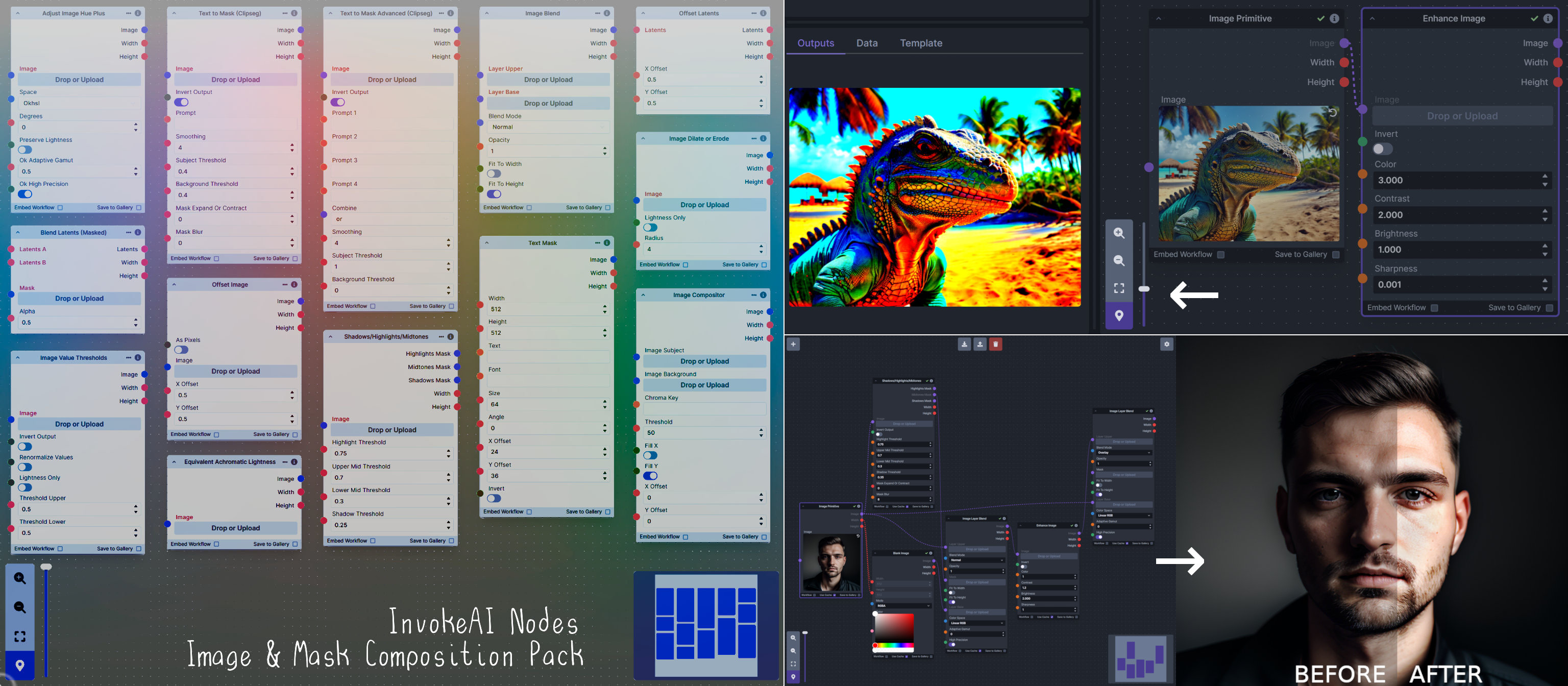

### Image and Mask Composition Pack

@@ -208,45 +146,88 @@ This includes 15 Nodes:

- *Text Mask (simple 2D)* - create and position a white on black (or black on white) line of text using any font locally available to Invoke.

**Node Link:** https://github.com/dwringer/composition-nodes

-

-**Nodes and Output Examples:**

-

+

+ --------------------------------

-### Size Stepper Nodes

+### Image to Character Art Image Nodes

-**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

+**Description:** A group of nodes that convert an input image into an ASCII/Unicode art image.

-A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.

-

-**Node Link:** https://github.com/dwringer/size-stepper-nodes

-

-**Example Usage:**

-

-

---------------------------------

-

-### Text font to Image

-

-**Description:** text font to text image node for InvokeAI, download a font to use (or if in font cache uses it from there), the text is always resized to the image size, but can control that with padding, optional 2nd line

-

-**Node Link:** https://github.com/mickr777/textfontimage

+**Node Link:** https://github.com/mickr777/imagetoasciiimage

**Output Examples**

-

-

-Results after using the depth controlnet

-

-

-

-

+ --------------------------------

+### Image Picker

+

+**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

+

+**Node Link:** https://github.com/JPPhoto/image-picker-node

+

+--------------------------------

+### Load Video Frame

+

+**Description:** This is a video frame image provider + indexer/video creation nodes for hooking up to iterators and ranges and ControlNets and such for invokeAI node experimentation. Think animation + ControlNet outputs.

+

+**Node Link:** https://github.com/helix4u/load_video_frame

+

+**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

+

+**Output Example:**

+

+ +[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

+

+--------------------------------

+### Make 3D

+

+**Description:** Create compelling 3D stereo images from 2D originals.

+

+**Node Link:** [https://gitlab.com/srcrr/shift3d/-/raw/main/make3d.py](https://gitlab.com/srcrr/shift3d)

+

+**Example Node Graph:** https://gitlab.com/srcrr/shift3d/-/raw/main/example-workflow.json?ref_type=heads&inline=false

+

+**Output Examples**

+

+

+--------------------------------

+### Oobabooga

+

+**Description:** asks a local LLM running in Oobabooga's Text-Generation-Webui to write a prompt based on the user input.

+

+**Link:** https://github.com/sammyf/oobabooga-node

+

+**Example:**

+

+"describe a new mystical creature in its natural environment"

+

+*can return*

+

+"The mystical creature I am describing to you is called the "Glimmerwing". It is a majestic, iridescent being that inhabits the depths of the most enchanted forests and glimmering lakes. Its body is covered in shimmering scales that reflect every color of the rainbow, and it has delicate, translucent wings that sparkle like diamonds in the sunlight. The Glimmerwing's home is a crystal-clear lake, surrounded by towering trees with leaves that shimmer like jewels. In this serene environment, the Glimmerwing spends its days swimming gracefully through the water, chasing schools of glittering fish and playing with the gentle ripples of the lake's surface.

+As the sun sets, the Glimmerwing perches on a branch of one of the trees, spreading its wings to catch the last rays of light. The creature's scales glow softly, casting a rainbow of colors across the forest floor. The Glimmerwing sings a haunting melody, its voice echoing through the stillness of the night air. Its song is said to have the power to heal the sick and bring peace to troubled souls. Those who are lucky enough to hear the Glimmerwing's song are forever changed by its beauty and grace."

+

+

+

+**Requirement**

+

+a Text-Generation-Webui instance (might work remotely too, but I never tried it) and obviously InvokeAI 3.x

+

+**Note**

+

+This node works best with SDXL models, especially as the style can be described independently of the LLM's output.

+

+--------------------------------

### Prompt Tools

-**Description:** A set of InvokeAI nodes that add general prompt manipulation tools. These where written to accompany the PromptsFromFile node and other prompt generation nodes.

+**Description:** A set of InvokeAI nodes that add general prompt manipulation tools. These were written to accompany the PromptsFromFile node and other prompt generation nodes.

1. PromptJoin - Joins to prompts into one.

2. PromptReplace - performs a search and replace on a prompt. With the option of using regex.

@@ -263,51 +244,83 @@ See full docs here: https://github.com/skunkworxdark/Prompt-tools-nodes/edit/mai

**Node Link:** https://github.com/skunkworxdark/Prompt-tools-nodes

--------------------------------

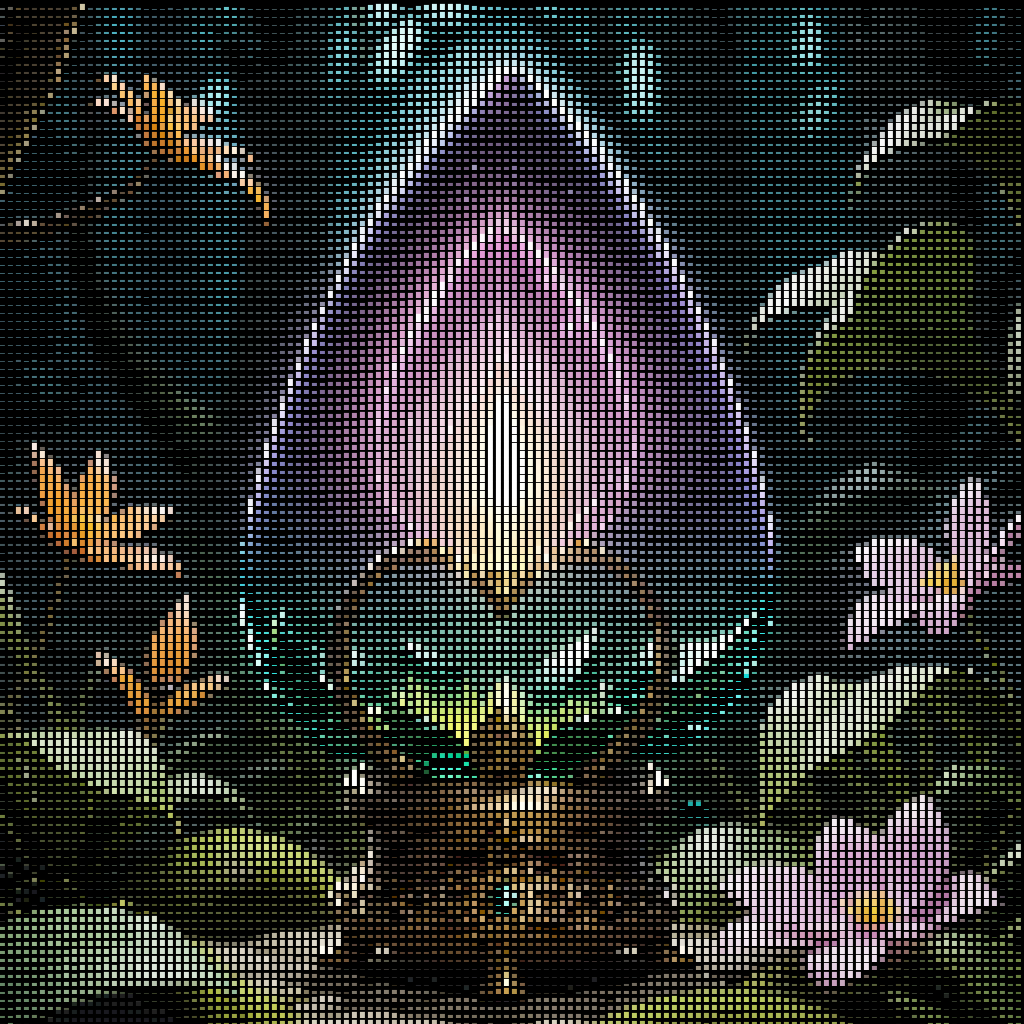

+### Retroize

+**Description:** Retroize is a collection of nodes for InvokeAI to "Retroize" images. Any image can be given a fresh coat of retro paint with these nodes, either from your gallery or from within the graph itself. It includes nodes to pixelize, quantize, palettize, and ditherize images; as well as to retrieve palettes from existing images.

+

+**Node Link:** https://github.com/Ar7ific1al/invokeai-retroizeinode/

+

+**Retroize Output Examples**

+

+

+

+--------------------------------

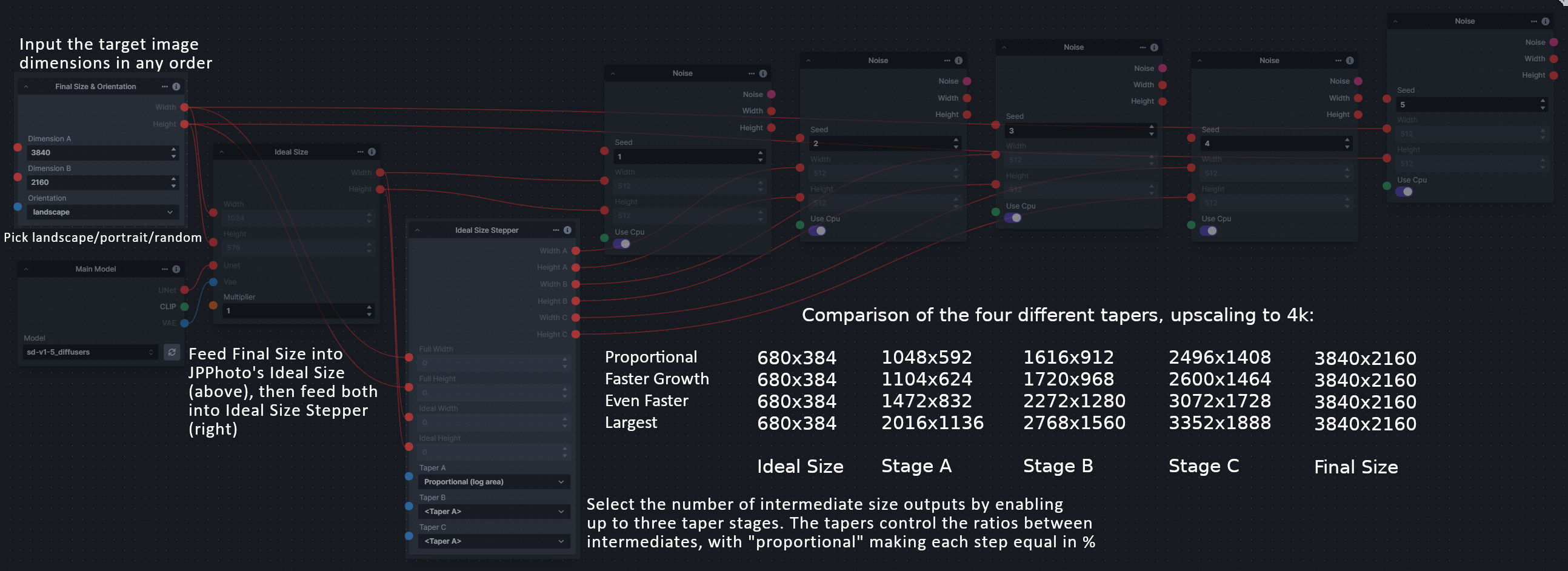

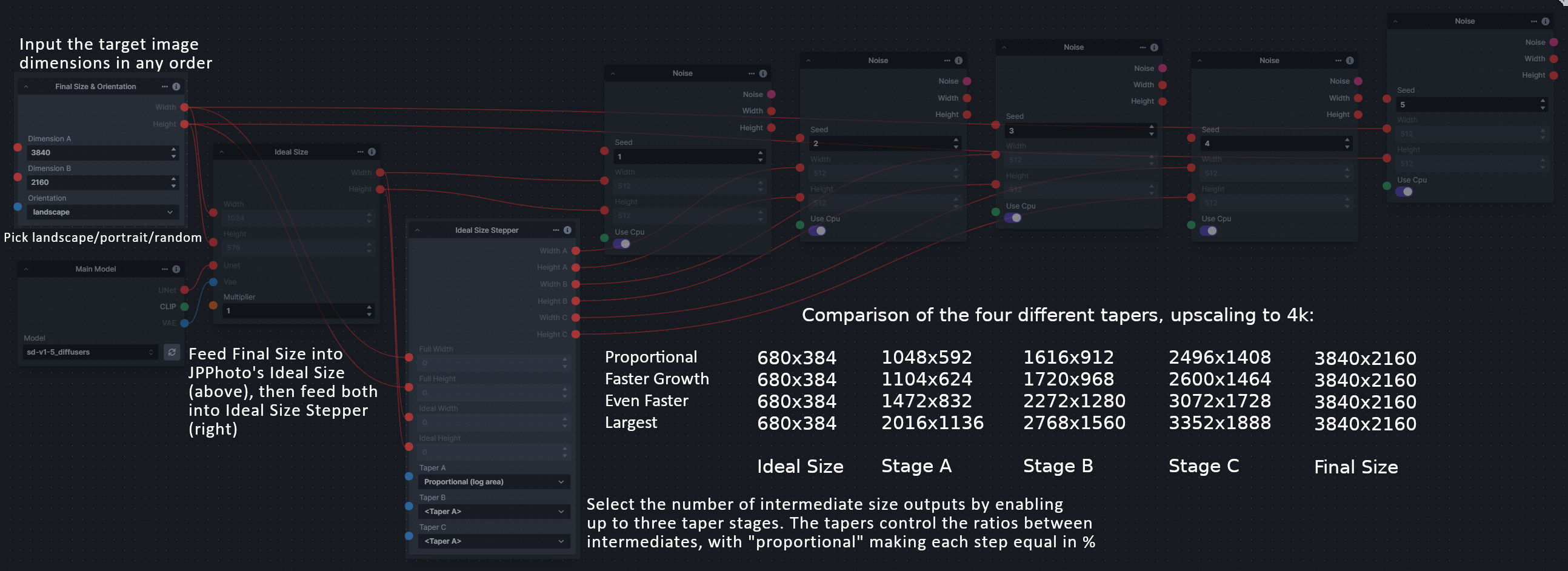

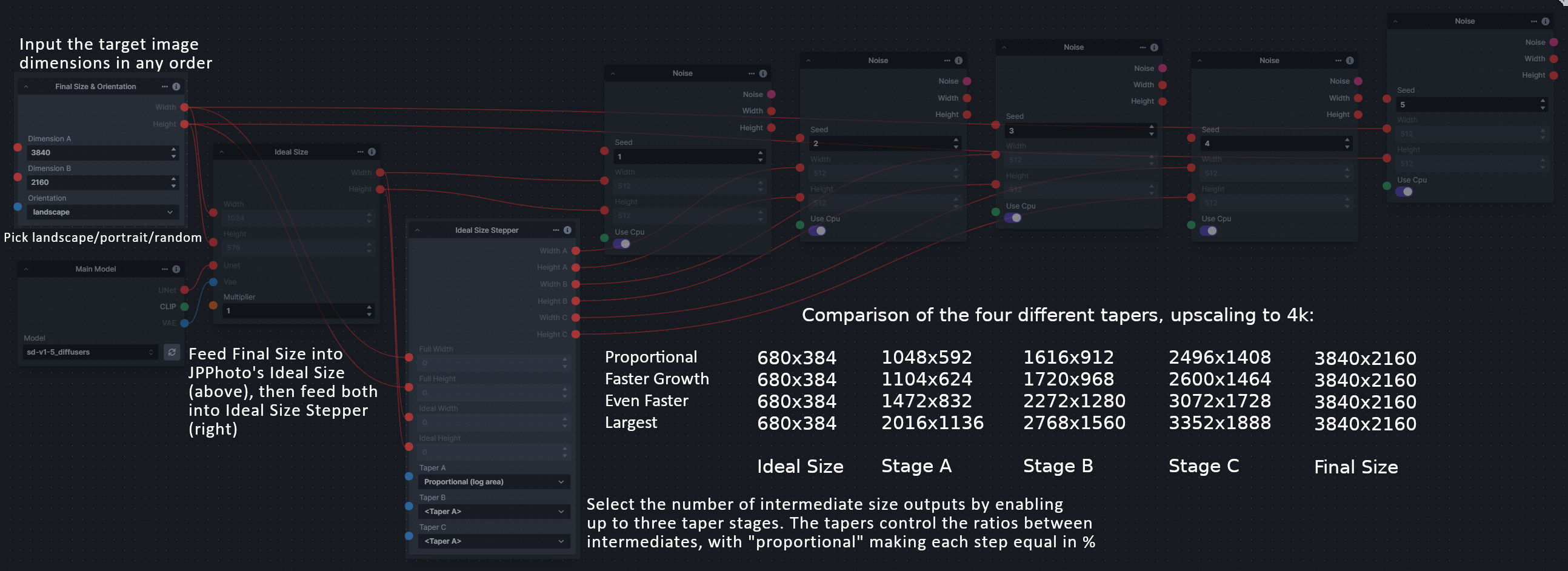

+### Size Stepper Nodes

+

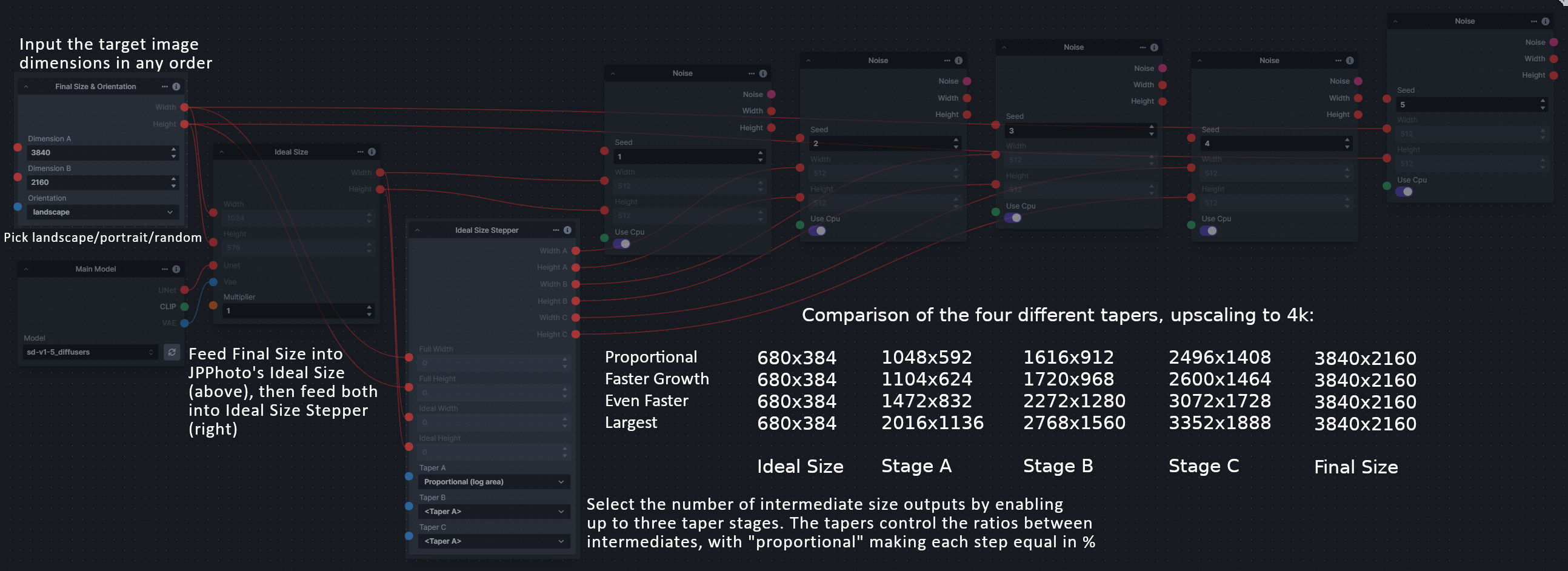

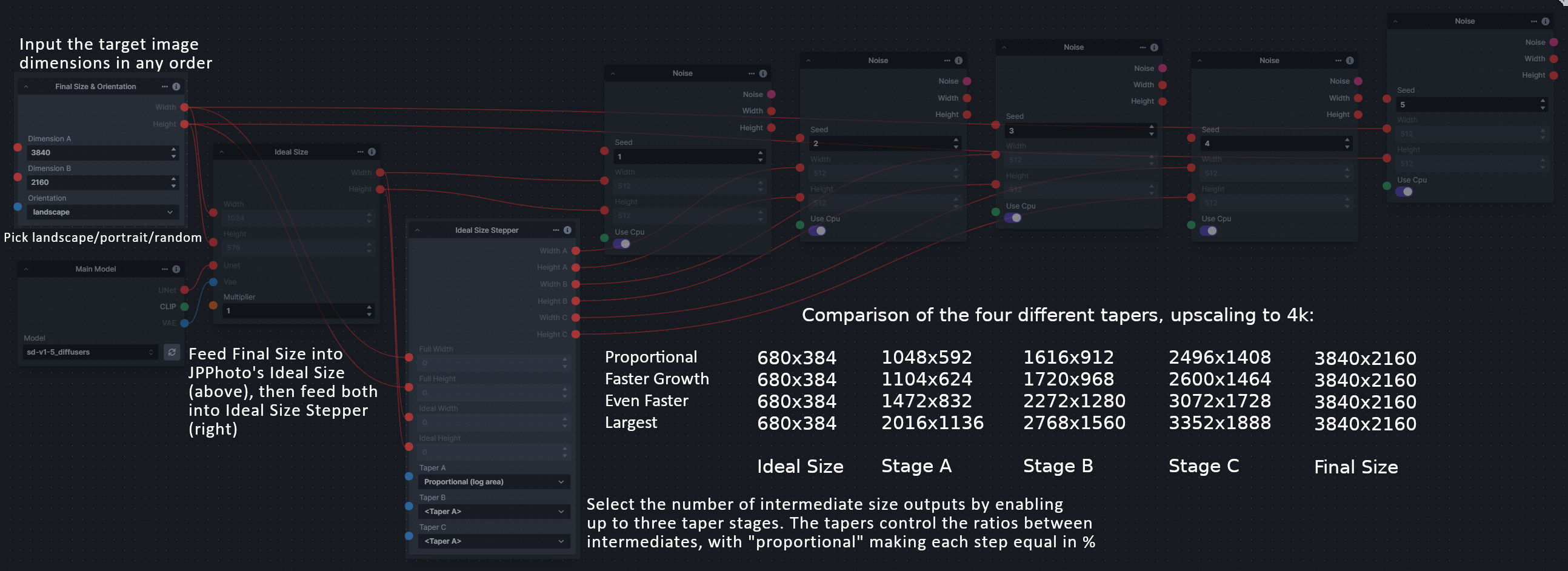

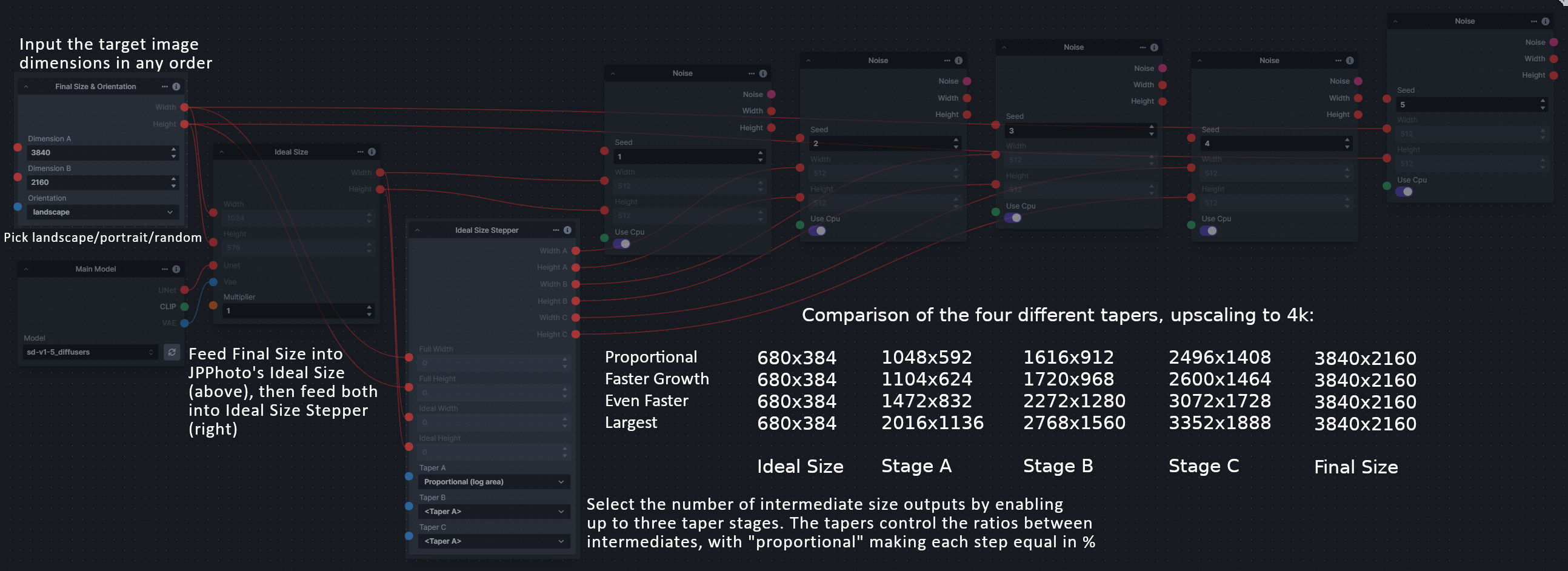

+**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

+

+A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.

+

+**Node Link:** https://github.com/dwringer/size-stepper-nodes

+

+**Example Usage:**

+

+

+--------------------------------

+### Text font to Image

+

+**Description:** text font to text image node for InvokeAI, download a font to use (or if in font cache uses it from there), the text is always resized to the image size, but can control that with padding, optional 2nd line

+

+**Node Link:** https://github.com/mickr777/textfontimage

+

+**Output Examples**

+

+

+

+Results after using the depth controlnet

+

+

+

+--------------------------------

+### Thresholding

+

+**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.

+

+**Node Link:** https://github.com/JPPhoto/thresholding-node

+

+**Examples**

+

+Input:

+

+

+

+Highlights/Midtones/Shadows:

+

+

+

+Highlights/Midtones/Shadows (with LUT blur enabled):

+

+

+

+--------------------------------

### XY Image to Grid and Images to Grids nodes

**Description:** Image to grid nodes and supporting tools.

-1. "Images To Grids" node - Takes a collection of images and creates a grid(s) of images. If there are more images than the size of a single grid then mutilple grids will be created until it runs out of images.

-2. "XYImage To Grid" node - Converts a collection of XYImages into a labeled Grid of images. The XYImages collection has to be built using the supporoting nodes. See example node setups for more details.

-

+1. "Images To Grids" node - Takes a collection of images and creates a grid(s) of images. If there are more images than the size of a single grid then multiple grids will be created until it runs out of images.

+2. "XYImage To Grid" node - Converts a collection of XYImages into a labeled Grid of images. The XYImages collection has to be built using the supporting nodes. See example node setups for more details.

See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/README.md

**Node Link:** https://github.com/skunkworxdark/XYGrid_nodes

--------------------------------

-

-### Image to Character Art Image Node's

-

-**Description:** Group of nodes to convert an input image into ascii/unicode art Image

-

-**Node Link:** https://github.com/mickr777/imagetoasciiimage

-

-**Output Examples**

-

-

+

---------------------------------

-

-### Grid to Gif

-

-**Description:** One node that turns a grid image into an image colletion, one node that turns an image collection into a gif

-

-**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

-

-**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

-

-**Output Examples**

-

-

-

---------------------------------

-

### Example Node Template

**Description:** This node allows you to do super cool things with InvokeAI.

@@ -318,7 +331,7 @@ See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/READ

**Output Examples**

-{: style="height:115px;width:240px"}

+

-

+ ## Disclaimer

diff --git a/installer/install.bat.in b/installer/install.bat.in

index ffe96d4355..5fa76471de 100644

--- a/installer/install.bat.in

+++ b/installer/install.bat.in

@@ -1,7 +1,7 @@

@echo off

setlocal EnableExtensions EnableDelayedExpansion

-@rem This script requires the user to install Python 3.9 or higher. All other

+@rem This script requires the user to install Python 3.10 or higher. All other

@rem requirements are downloaded as needed.

@rem change to the script's directory

@@ -19,7 +19,7 @@ set INVOKEAI_VERSION=latest

set INSTRUCTIONS=https://invoke-ai.github.io/InvokeAI/installation/INSTALL_AUTOMATED/

set TROUBLESHOOTING=https://invoke-ai.github.io/InvokeAI/installation/INSTALL_AUTOMATED/#troubleshooting

set PYTHON_URL=https://www.python.org/downloads/windows/

-set MINIMUM_PYTHON_VERSION=3.9.0

+set MINIMUM_PYTHON_VERSION=3.10.0

set PYTHON_URL=https://www.python.org/downloads/release/python-3109/

set err_msg=An error has occurred and the script could not continue.

@@ -28,8 +28,7 @@ set err_msg=An error has occurred and the script could not continue.

echo This script will install InvokeAI and its dependencies.

echo.

echo BEFORE YOU START PLEASE MAKE SURE TO DO THE FOLLOWING

-echo 1. Install python 3.9 or 3.10. Python version 3.11 and above are

-echo not supported at the moment.

+echo 1. Install python 3.10 or 3.11. Python version 3.9 is no longer supported.

echo 2. Double-click on the file WinLongPathsEnabled.reg in order to

echo enable long path support on your system.

echo 3. Install the Visual C++ core libraries.

@@ -46,19 +45,19 @@ echo ***** Checking and Updating Python *****

call python --version >.tmp1 2>.tmp2

if %errorlevel% == 1 (

- set err_msg=Please install Python 3.10. See %INSTRUCTIONS% for details.

+ set err_msg=Please install Python 3.10-11. See %INSTRUCTIONS% for details.

goto err_exit

)

for /f "tokens=2" %%i in (.tmp1) do set python_version=%%i

if "%python_version%" == "" (

- set err_msg=No python was detected on your system. Please install Python version %MINIMUM_PYTHON_VERSION% or higher. We recommend Python 3.10.9 from %PYTHON_URL%

+ set err_msg=No python was detected on your system. Please install Python version %MINIMUM_PYTHON_VERSION% or higher. We recommend Python 3.10.12 from %PYTHON_URL%

goto err_exit

)

call :compareVersions %MINIMUM_PYTHON_VERSION% %python_version%

if %errorlevel% == 1 (

- set err_msg=Your version of Python is too low. You need at least %MINIMUM_PYTHON_VERSION% but you have %python_version%. We recommend Python 3.10.9 from %PYTHON_URL%

+ set err_msg=Your version of Python is too low. You need at least %MINIMUM_PYTHON_VERSION% but you have %python_version%. We recommend Python 3.10.12 from %PYTHON_URL%

goto err_exit

)

diff --git a/installer/install.sh.in b/installer/install.sh.in

index 1b8ba92ea6..9cf41192bf 100755

--- a/installer/install.sh.in

+++ b/installer/install.sh.in

@@ -8,10 +8,10 @@ cd $scriptdir

function version { echo "$@" | awk -F. '{ printf("%d%03d%03d%03d\n", $1,$2,$3,$4); }'; }

-MINIMUM_PYTHON_VERSION=3.9.0

+MINIMUM_PYTHON_VERSION=3.10.0

MAXIMUM_PYTHON_VERSION=3.11.100

PYTHON=""

-for candidate in python3.11 python3.10 python3.9 python3 python ; do

+for candidate in python3.11 python3.10 python3 python ; do

if ppath=`which $candidate`; then

# when using `pyenv`, the executable for an inactive Python version will exist but will not be operational

# we check that this found executable can actually run
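
Reviewer note: the `version` helper in this hunk packs each dotted field into a zero-padded integer so that versions can be compared numerically rather than as strings. A minimal Python sketch of the same idea follows. The names `version_key` and `is_supported` are hypothetical, and the range check is an assumption about how the script presumably uses `MINIMUM_PYTHON_VERSION` and `MAXIMUM_PYTHON_VERSION`; the comparison itself is not visible in this hunk.

```python
MINIMUM_PYTHON_VERSION = "3.10.0"
MAXIMUM_PYTHON_VERSION = "3.11.100"


def version_key(version: str) -> int:
    """Pack up to four dot-separated numeric fields into one integer.

    Follows the "%d%03d%03d%03d" layout of the shell helper: missing
    fields count as 0 and every field after the first is assumed < 1000.
    """
    fields = [int(part) for part in version.split(".")[:4]]
    fields += [0] * (4 - len(fields))
    major, minor, patch, extra = fields
    return ((major * 1000 + minor) * 1000 + patch) * 1000 + extra


def is_supported(version: str) -> bool:
    """Hypothetical range check against the two bounds defined above."""
    low = version_key(MINIMUM_PYTHON_VERSION)
    high = version_key(MAXIMUM_PYTHON_VERSION)
    return low <= version_key(version) <= high
```

The packing matters because a plain string comparison would rank `"3.9.0"` above `"3.10.0"`; with the integer key, 3.9 falls below the new minimum as intended.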

diff --git a/installer/lib/installer.py b/installer/lib/installer.py

index 70ed4d4331..bf48e3b06d 100644

--- a/installer/lib/installer.py

+++ b/installer/lib/installer.py

@@ -13,7 +13,7 @@ from pathlib import Path

from tempfile import TemporaryDirectory

from typing import Union

-SUPPORTED_PYTHON = ">=3.9.0,<=3.11.100"

+SUPPORTED_PYTHON = ">=3.10.0,<=3.11.100"

INSTALLER_REQS = ["rich", "semver", "requests", "plumbum", "prompt-toolkit"]

BOOTSTRAP_VENV_PREFIX = "invokeai-installer-tmp"

@@ -67,7 +67,6 @@ class Installer:

# Cleaning up temporary directories on Windows results in a race condition

# and a stack trace.

# `ignore_cleanup_errors` was only added in Python 3.10

- # users of Python 3.9 will see a gnarly stack trace on installer exit

if OS == "Windows" and int(platform.python_version_tuple()[1]) >= 10:

venv_dir = TemporaryDirectory(prefix=BOOTSTRAP_VENV_PREFIX, ignore_cleanup_errors=True)

else:

@@ -139,13 +138,6 @@ class Installer:

except shutil.SameFileError:

venv.create(venv_dir, with_pip=True, symlinks=True)

- # upgrade pip in Python 3.9 environments

- if int(platform.python_version_tuple()[1]) == 9:

- from plumbum import FG, local

-

- pip = local[get_pip_from_venv(venv_dir)]

- pip["install", "--upgrade", "pip"] & FG

-

return venv_dir

def install(
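
Reviewer note: with Python 3.9 dropped, the version guard around `ignore_cleanup_errors` mostly documents why the flag exists. The sketch below shows the pattern in isolation using only the standard library. `make_bootstrap_dir` is a hypothetical helper, not a function in `installer.py`, and it checks `sys.version_info` instead of the `platform.python_version_tuple()` call used in the source.

```python
import sys
from pathlib import Path
from tempfile import TemporaryDirectory


def make_bootstrap_dir(prefix: str = "invokeai-installer-tmp") -> TemporaryDirectory:
    """Create a temporary directory that tolerates cleanup failures.

    `ignore_cleanup_errors` was added to TemporaryDirectory in Python 3.10,
    so older interpreters fall back to the default behaviour.
    """
    if sys.version_info >= (3, 10):
        return TemporaryDirectory(prefix=prefix, ignore_cleanup_errors=True)
    return TemporaryDirectory(prefix=prefix)


if __name__ == "__main__":
    # The context manager yields the directory path as a string.
    with make_bootstrap_dir() as venv_dir:
        print(Path(venv_dir).is_dir())
```

Now that 3.10 is the minimum, the fallback branch should be unreachable in practice and could probably be removed in a follow-up.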

diff --git a/installer/readme.txt b/installer/readme.txt

index b9a97e2093..ef040c3913 100644

--- a/installer/readme.txt

+++ b/installer/readme.txt

@@ -4,7 +4,7 @@ Project homepage: https://github.com/invoke-ai/InvokeAI

Preparations:

- You will need to install Python 3.9 or higher for this installer

+ You will need to install Python 3.10 or higher for this installer

to work. Instructions are given here:

https://invoke-ai.github.io/InvokeAI/installation/INSTALL_AUTOMATED/

@@ -14,15 +14,15 @@ Preparations:

python --version

If all is well, it will print "Python 3.X.X", where the version number

- is at least 3.9.*, and not higher than 3.11.*.

+ is at least 3.10.*, and not higher than 3.11.*.

If this works, check the version of the Python package manager, pip:

pip --version

You should get a message that indicates that the pip package

- installer was derived from Python 3.9 or 3.10. For example:

- "pip 22.3.1 from /usr/bin/pip (python 3.9)"

+ installer was derived from Python 3.10 or 3.11. For example:

+ "pip 22.0.1 from /usr/bin/pip (python 3.10)"

Long Paths on Windows:

diff --git a/invokeai/app/api/routers/images.py b/invokeai/app/api/routers/images.py

index 7b61887eb8..43a72943ee 100644

--- a/invokeai/app/api/routers/images.py

+++ b/invokeai/app/api/routers/images.py

@@ -42,7 +42,7 @@ async def upload_image(

crop_visible: Optional[bool] = Query(default=False, description="Whether to crop the image"),

) -> ImageDTO:

"""Uploads an image"""

- if not file.content_type.startswith("image"):

+ if not file.content_type or not file.content_type.startswith("image"):

raise HTTPException(status_code=415, detail="Not an image")

contents = await file.read()
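
Reviewer note: the added `not file.content_type or` guard matters because an upload may arrive without a content type, in which case calling `.startswith` on `None` would raise instead of returning the intended 415. A standalone sketch of the predicate, where `is_image_content_type` is a hypothetical name used only for illustration:

```python
from typing import Optional


def is_image_content_type(content_type: Optional[str]) -> bool:
    """Return True only for a non-empty content type that starts with "image"."""
    # bool(...) short-circuits on None and on the empty string, so
    # startswith is only called on a real, non-empty string.
    return bool(content_type) and content_type.startswith("image")
```

The route in this hunk would reject the request with status 415 whenever this predicate is false.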

diff --git a/invokeai/app/api/routers/models.py b/invokeai/app/api/routers/models.py

index ebc40f5ce5..018f3af02b 100644

--- a/invokeai/app/api/routers/models.py

+++ b/invokeai/app/api/routers/models.py

@@ -2,11 +2,11 @@

import pathlib

-from typing import List, Literal, Optional, Union

+from typing import Annotated, List, Literal, Optional, Union

from fastapi import Body, Path, Query, Response

from fastapi.routing import APIRouter

-from pydantic import BaseModel, parse_obj_as

+from pydantic import BaseModel, ConfigDict, Field, TypeAdapter

from starlette.exceptions import HTTPException

from invokeai.backend import BaseModelType, ModelType

@@ -23,8 +23,14 @@ from ..dependencies import ApiDependencies

models_router = APIRouter(prefix="/v1/models", tags=["models"])

UpdateModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

+update_models_response_adapter = TypeAdapter(UpdateModelResponse)

+

ImportModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

+import_models_response_adapter = TypeAdapter(ImportModelResponse)

+

ConvertModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

+convert_models_response_adapter = TypeAdapter(ConvertModelResponse)

+

MergeModelResponse = Union[tuple(OPENAPI_MODEL_CONFIGS)]

ImportModelAttributes = Union[tuple(OPENAPI_MODEL_CONFIGS)]

@@ -32,6 +38,11 @@ ImportModelAttributes = Union[tuple(OPENAPI_MODEL_CONFIGS)]

class ModelsList(BaseModel):

models: list[Union[tuple(OPENAPI_MODEL_CONFIGS)]]

+ model_config = ConfigDict(use_enum_values=True)

+

+

+models_list_adapter = TypeAdapter(ModelsList)

+

@models_router.get(

"/",

@@ -49,7 +60,7 @@ async def list_models(

models_raw.extend(ApiDependencies.invoker.services.model_manager.list_models(base_model, model_type))

else:

models_raw = ApiDependencies.invoker.services.model_manager.list_models(None, model_type)

- models = parse_obj_as(ModelsList, {"models": models_raw})

+ models = models_list_adapter.validate_python({"models": models_raw})

return models

@@ -105,11 +116,14 @@ async def update_model(

info.path = new_info.get("path")

# replace empty string values with None/null to avoid phenomenon of vae: ''

- info_dict = info.dict()

+ info_dict = info.model_dump()

info_dict = {x: info_dict[x] if info_dict[x] else None for x in info_dict.keys()}

ApiDependencies.invoker.services.model_manager.update_model(

- model_name=model_name, base_model=base_model, model_type=model_type, model_attributes=info_dict

+ model_name=model_name,

+ base_model=base_model,

+ model_type=model_type,

+ model_attributes=info_dict,

)

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

@@ -117,7 +131,7 @@ async def update_model(

base_model=base_model,

model_type=model_type,

)

- model_response = parse_obj_as(UpdateModelResponse, model_raw)

+ model_response = update_models_response_adapter.validate_python(model_raw)

except ModelNotFoundException as e:

raise HTTPException(status_code=404, detail=str(e))

except ValueError as e:

@@ -152,13 +166,15 @@ async def import_model(

) -> ImportModelResponse:

"""Add a model using its local path, repo_id, or remote URL. Model characteristics will be probed and configured automatically"""

+ location = location.strip("\"' ")

items_to_import = {location}

prediction_types = {x.value: x for x in SchedulerPredictionType}

logger = ApiDependencies.invoker.services.logger

try:

installed_models = ApiDependencies.invoker.services.model_manager.heuristic_import(

- items_to_import=items_to_import, prediction_type_helper=lambda x: prediction_types.get(prediction_type)

+ items_to_import=items_to_import,

+ prediction_type_helper=lambda x: prediction_types.get(prediction_type),

)

info = installed_models.get(location)
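
Reviewer note: the new `location.strip("\"' ")` call presumably targets paths pasted with surrounding quotes, for example from a file manager's "copy as path" feature. A small sketch of what that call does and does not remove; `clean_location` is a hypothetical wrapper and the sample values in the usage note are made up for illustration:

```python
def clean_location(location: str) -> str:
    """Strip double quotes, single quotes, and spaces from both ends.

    str.strip with an argument removes any run of the listed characters
    at either end; it does not require the quotes to be a matched pair,
    and it never touches characters in the middle of the string.
    """
    return location.strip("\"' ")
```

For instance, `clean_location(' "my models/sd 1.5.safetensors" ')` yields `my models/sd 1.5.safetensors`: the inner space survives, while the outer quotes and spaces are dropped. One consequence worth keeping in mind is that a location that legitimately ends in a quote character would also be trimmed.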

@@ -170,7 +186,7 @@ async def import_model(

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

model_name=info.name, base_model=info.base_model, model_type=info.model_type

)

- return parse_obj_as(ImportModelResponse, model_raw)

+ return import_models_response_adapter.validate_python(model_raw)

except ModelNotFoundException as e:

logger.error(str(e))

@@ -204,13 +220,18 @@ async def add_model(

try:

ApiDependencies.invoker.services.model_manager.add_model(

- info.model_name, info.base_model, info.model_type, model_attributes=info.dict()

+ info.model_name,

+ info.base_model,

+ info.model_type,

+ model_attributes=info.model_dump(),

)

logger.info(f"Successfully added {info.model_name}")

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

- model_name=info.model_name, base_model=info.base_model, model_type=info.model_type

+ model_name=info.model_name,

+ base_model=info.base_model,

+ model_type=info.model_type,

)

- return parse_obj_as(ImportModelResponse, model_raw)

+ return import_models_response_adapter.validate_python(model_raw)

except ModelNotFoundException as e:

logger.error(str(e))

raise HTTPException(status_code=404, detail=str(e))

@@ -222,7 +243,10 @@ async def add_model(

@models_router.delete(

"/{base_model}/{model_type}/{model_name}",

operation_id="del_model",

- responses={204: {"description": "Model deleted successfully"}, 404: {"description": "Model not found"}},

+ responses={

+ 204: {"description": "Model deleted successfully"},

+ 404: {"description": "Model not found"},

+ },

status_code=204,

response_model=None,

)

@@ -278,7 +302,7 @@ async def convert_model(

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

model_name, base_model=base_model, model_type=model_type

)

- response = parse_obj_as(ConvertModelResponse, model_raw)

+ response = convert_models_response_adapter.validate_python(model_raw)

except ModelNotFoundException as e:

raise HTTPException(status_code=404, detail=f"Model '{model_name}' not found: {str(e)}")

except ValueError as e:

@@ -301,7 +325,8 @@ async def search_for_models(

) -> List[pathlib.Path]:

if not search_path.is_dir():

raise HTTPException(

- status_code=404, detail=f"The search path '{search_path}' does not exist or is not directory"

+ status_code=404,

+ detail=f"The search path '{search_path}' does not exist or is not directory",

)

return ApiDependencies.invoker.services.model_manager.search_for_models(search_path)

@@ -336,6 +361,26 @@ async def sync_to_config() -> bool:

return True

+# There's some weird pydantic-fastapi behaviour that requires this to be a separate class

+# TODO: After a few updates, see if it works inside the route operation handler?

+class MergeModelsBody(BaseModel):

+ model_names: List[str] = Field(description="model name", min_length=2, max_length=3)

+ merged_model_name: Optional[str] = Field(description="Name of destination model")

+ alpha: Optional[float] = Field(description="Alpha weighting strength to apply to 2d and 3d models", default=0.5)

+ interp: Optional[MergeInterpolationMethod] = Field(description="Interpolation method")

+ force: Optional[bool] = Field(

+ description="Force merging of models created with different versions of diffusers",

+ default=False,

+ )

+

+ merge_dest_directory: Optional[str] = Field(

+ description="Save the merged model to the designated directory (with 'merged_model_name' appended)",

+ default=None,

+ )

+

+ model_config = ConfigDict(protected_namespaces=())

+

+

@models_router.put(

"/merge/{base_model}",

operation_id="merge_models",

@@ -348,31 +393,23 @@ async def sync_to_config() -> bool:

response_model=MergeModelResponse,

)

async def merge_models(

+ body: Annotated[MergeModelsBody, Body(description="Model configuration", embed=True)],

base_model: BaseModelType = Path(description="Base model"),

- model_names: List[str] = Body(description="model name", min_items=2, max_items=3),

- merged_model_name: Optional[str] = Body(description="Name of destination model"),

- alpha: Optional[float] = Body(description="Alpha weighting strength to apply to 2d and 3d models", default=0.5),

- interp: Optional[MergeInterpolationMethod] = Body(description="Interpolation method"),

- force: Optional[bool] = Body(

- description="Force merging of models created with different versions of diffusers", default=False

- ),

- merge_dest_directory: Optional[str] = Body(

- description="Save the merged model to the designated directory (with 'merged_model_name' appended)",

- default=None,

- ),

) -> MergeModelResponse:

"""Convert a checkpoint model into a diffusers model"""

logger = ApiDependencies.invoker.services.logger

try:

- logger.info(f"Merging models: {model_names} into {merge_dest_directory or ''}/{merged_model_name}")

- dest = pathlib.Path(merge_dest_directory) if merge_dest_directory else None

+ logger.info(

+ f"Merging models: {body.model_names} into {body.merge_dest_directory or ''}/{body.merged_model_name}"

+ )

+ dest = pathlib.Path(body.merge_dest_directory) if body.merge_dest_directory else None

result = ApiDependencies.invoker.services.model_manager.merge_models(

- model_names,

- base_model,

- merged_model_name=merged_model_name or "+".join(model_names),

- alpha=alpha,

- interp=interp,

- force=force,

+ model_names=body.model_names,

+ base_model=base_model,

+ merged_model_name=body.merged_model_name or "+".join(body.model_names),

+ alpha=body.alpha,

+ interp=body.interp,

+ force=body.force,

merge_dest_directory=dest,

)

model_raw = ApiDependencies.invoker.services.model_manager.list_model(

@@ -380,9 +417,12 @@ async def merge_models(

base_model=base_model,

model_type=ModelType.Main,

)

- response = parse_obj_as(ConvertModelResponse, model_raw)

+ response = convert_models_response_adapter.validate_python(model_raw)

except ModelNotFoundException:

- raise HTTPException(status_code=404, detail=f"One or more of the models '{model_names}' not found")

+ raise HTTPException(

+ status_code=404,

+ detail=f"One or more of the models '{body.model_names}' not found",

+ )

except ValueError as e:

raise HTTPException(status_code=400, detail=str(e))

return response

diff --git a/invokeai/app/api/routers/sessions.py b/invokeai/app/api/routers/sessions.py

index cd93a267ad..fb850d0b2b 100644

--- a/invokeai/app/api/routers/sessions.py

+++ b/invokeai/app/api/routers/sessions.py

@@ -1,57 +1,50 @@

# Copyright (c) 2022 Kyle Schouviller (https://github.com/kyle0654)

-from typing import Annotated, Optional, Union

-from fastapi import Body, HTTPException, Path, Query, Response

+from fastapi import HTTPException, Path

from fastapi.routing import APIRouter

-from pydantic.fields import Field

-from invokeai.app.services.shared.pagination import PaginatedResults

-

-# Importing * is bad karma but needed here for node detection

-from ...invocations import * # noqa: F401 F403

-from ...invocations.baseinvocation import BaseInvocation

-from ...services.shared.graph import Edge, EdgeConnection, Graph, GraphExecutionState, NodeAlreadyExecutedError

+from ...services.shared.graph import GraphExecutionState

from ..dependencies import ApiDependencies

session_router = APIRouter(prefix="/v1/sessions", tags=["sessions"])

-@session_router.post(

- "/",

- operation_id="create_session",

- responses={

- 200: {"model": GraphExecutionState},

- 400: {"description": "Invalid json"},

- },

- deprecated=True,

-)

-async def create_session(

- queue_id: str = Query(default="", description="The id of the queue to associate the session with"),

- graph: Optional[Graph] = Body(default=None, description="The graph to initialize the session with"),

-) -> GraphExecutionState:

- """Creates a new session, optionally initializing it with an invocation graph"""

- session = ApiDependencies.invoker.create_execution_state(queue_id=queue_id, graph=graph)

- return session

+# @session_router.post(

+# "/",

+# operation_id="create_session",

+# responses={

+# 200: {"model": GraphExecutionState},

+# 400: {"description": "Invalid json"},

+# },

+# deprecated=True,

+# )

+# async def create_session(

+# queue_id: str = Query(default="", description="The id of the queue to associate the session with"),

+# graph: Optional[Graph] = Body(default=None, description="The graph to initialize the session with"),

+# ) -> GraphExecutionState:

+# """Creates a new session, optionally initializing it with an invocation graph"""

+# session = ApiDependencies.invoker.create_execution_state(queue_id=queue_id, graph=graph)

+# return session

-@session_router.get(

- "/",

- operation_id="list_sessions",

- responses={200: {"model": PaginatedResults[GraphExecutionState]}},

- deprecated=True,

-)

-async def list_sessions(

- page: int = Query(default=0, description="The page of results to get"),

- per_page: int = Query(default=10, description="The number of results per page"),

- query: str = Query(default="", description="The query string to search for"),

-) -> PaginatedResults[GraphExecutionState]:

- """Gets a list of sessions, optionally searching"""

- if query == "":

- result = ApiDependencies.invoker.services.graph_execution_manager.list(page, per_page)

- else:

- result = ApiDependencies.invoker.services.graph_execution_manager.search(query, page, per_page)

- return result

+# @session_router.get(

+# "/",

+# operation_id="list_sessions",

+# responses={200: {"model": PaginatedResults[GraphExecutionState]}},

+# deprecated=True,

+# )

+# async def list_sessions(

+# page: int = Query(default=0, description="The page of results to get"),

+# per_page: int = Query(default=10, description="The number of results per page"),

+# query: str = Query(default="", description="The query string to search for"),

+# ) -> PaginatedResults[GraphExecutionState]:

+# """Gets a list of sessions, optionally searching"""

+# if query == "":

+# result = ApiDependencies.invoker.services.graph_execution_manager.list(page, per_page)

+# else:

+# result = ApiDependencies.invoker.services.graph_execution_manager.search(query, page, per_page)

+# return result

@session_router.get(

@@ -61,7 +54,6 @@ async def list_sessions(

200: {"model": GraphExecutionState},

404: {"description": "Session not found"},

},

- deprecated=True,

)

async def get_session(

session_id: str = Path(description="The id of the session to get"),

@@ -74,211 +66,211 @@ async def get_session(

return session

-@session_router.post(

- "/{session_id}/nodes",

- operation_id="add_node",

- responses={

- 200: {"model": str},

- 400: {"description": "Invalid node or link"},

- 404: {"description": "Session not found"},

- },

- deprecated=True,

-)

-async def add_node(

- session_id: str = Path(description="The id of the session"),

- node: Annotated[Union[BaseInvocation.get_invocations()], Field(discriminator="type")] = Body( # type: ignore

- description="The node to add"

- ),

-) -> str:

- """Adds a node to the graph"""

- session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

- if session is None:

- raise HTTPException(status_code=404)

+# @session_router.post(

+# "/{session_id}/nodes",

+# operation_id="add_node",

+# responses={

+# 200: {"model": str},

+# 400: {"description": "Invalid node or link"},

+# 404: {"description": "Session not found"},

+# },

+# deprecated=True,

+# )

+# async def add_node(

+# session_id: str = Path(description="The id of the session"),

+# node: Annotated[Union[BaseInvocation.get_invocations()], Field(discriminator="type")] = Body( # type: ignore

+# description="The node to add"

+# ),

+# ) -> str:

+# """Adds a node to the graph"""

+# session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

+# if session is None:

+# raise HTTPException(status_code=404)

- try:

- session.add_node(node)

- ApiDependencies.invoker.services.graph_execution_manager.set(

- session

- ) # TODO: can this be done automatically, or add node through an API?

- return session.id

- except NodeAlreadyExecutedError:

- raise HTTPException(status_code=400)

- except IndexError:

- raise HTTPException(status_code=400)

+# try:

+# session.add_node(node)

+# ApiDependencies.invoker.services.graph_execution_manager.set(

+# session

+# ) # TODO: can this be done automatically, or add node through an API?

+# return session.id

+# except NodeAlreadyExecutedError:

+# raise HTTPException(status_code=400)

+# except IndexError:

+# raise HTTPException(status_code=400)

-@session_router.put(

- "/{session_id}/nodes/{node_path}",

- operation_id="update_node",

- responses={

- 200: {"model": GraphExecutionState},

- 400: {"description": "Invalid node or link"},

- 404: {"description": "Session not found"},

- },

- deprecated=True,

-)

-async def update_node(

- session_id: str = Path(description="The id of the session"),

- node_path: str = Path(description="The path to the node in the graph"),

- node: Annotated[Union[BaseInvocation.get_invocations()], Field(discriminator="type")] = Body( # type: ignore

- description="The new node"

- ),

-) -> GraphExecutionState:

- """Updates a node in the graph and removes all linked edges"""

- session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

- if session is None:

- raise HTTPException(status_code=404)

+# @session_router.put(

+# "/{session_id}/nodes/{node_path}",

+# operation_id="update_node",

+# responses={

+# 200: {"model": GraphExecutionState},

+# 400: {"description": "Invalid node or link"},

+# 404: {"description": "Session not found"},

+# },

+# deprecated=True,

+# )

+# async def update_node(

+# session_id: str = Path(description="The id of the session"),

+# node_path: str = Path(description="The path to the node in the graph"),

+# node: Annotated[Union[BaseInvocation.get_invocations()], Field(discriminator="type")] = Body( # type: ignore

+# description="The new node"

+# ),

+# ) -> GraphExecutionState:

+# """Updates a node in the graph and removes all linked edges"""

+# session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

+# if session is None:

+# raise HTTPException(status_code=404)

- try:

- session.update_node(node_path, node)

- ApiDependencies.invoker.services.graph_execution_manager.set(

- session

- ) # TODO: can this be done automatically, or add node through an API?

- return session

- except NodeAlreadyExecutedError:

- raise HTTPException(status_code=400)

- except IndexError:

- raise HTTPException(status_code=400)

+# try:

+# session.update_node(node_path, node)

+# ApiDependencies.invoker.services.graph_execution_manager.set(

+# session

+# ) # TODO: can this be done automatically, or add node through an API?

+# return session

+# except NodeAlreadyExecutedError:

+# raise HTTPException(status_code=400)

+# except IndexError:

+# raise HTTPException(status_code=400)

-@session_router.delete(

- "/{session_id}/nodes/{node_path}",

- operation_id="delete_node",

- responses={

- 200: {"model": GraphExecutionState},

- 400: {"description": "Invalid node or link"},

- 404: {"description": "Session not found"},

- },

- deprecated=True,

-)

-async def delete_node(

- session_id: str = Path(description="The id of the session"),

- node_path: str = Path(description="The path to the node to delete"),

-) -> GraphExecutionState:

- """Deletes a node in the graph and removes all linked edges"""

- session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

- if session is None:

- raise HTTPException(status_code=404)

+# @session_router.delete(

+# "/{session_id}/nodes/{node_path}",

+# operation_id="delete_node",

+# responses={

+# 200: {"model": GraphExecutionState},

+# 400: {"description": "Invalid node or link"},

+# 404: {"description": "Session not found"},

+# },

+# deprecated=True,

+# )

+# async def delete_node(

+# session_id: str = Path(description="The id of the session"),

+# node_path: str = Path(description="The path to the node to delete"),

+# ) -> GraphExecutionState:

+# """Deletes a node in the graph and removes all linked edges"""

+# session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

+# if session is None:

+# raise HTTPException(status_code=404)

- try:

- session.delete_node(node_path)

- ApiDependencies.invoker.services.graph_execution_manager.set(

- session

- ) # TODO: can this be done automatically, or add node through an API?

- return session

- except NodeAlreadyExecutedError:

- raise HTTPException(status_code=400)

- except IndexError:

- raise HTTPException(status_code=400)

+# try:

+# session.delete_node(node_path)

+# ApiDependencies.invoker.services.graph_execution_manager.set(

+# session

+# ) # TODO: can this be done automatically, or add node through an API?

+# return session

+# except NodeAlreadyExecutedError:

+# raise HTTPException(status_code=400)

+# except IndexError:

+# raise HTTPException(status_code=400)

-@session_router.post(

- "/{session_id}/edges",

- operation_id="add_edge",

- responses={

- 200: {"model": GraphExecutionState},

- 400: {"description": "Invalid node or link"},

- 404: {"description": "Session not found"},

- },

- deprecated=True,

-)

-async def add_edge(

- session_id: str = Path(description="The id of the session"),

- edge: Edge = Body(description="The edge to add"),

-) -> GraphExecutionState:

- """Adds an edge to the graph"""

- session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

- if session is None:

- raise HTTPException(status_code=404)

+# @session_router.post(

+# "/{session_id}/edges",

+# operation_id="add_edge",

+# responses={

+# 200: {"model": GraphExecutionState},

+# 400: {"description": "Invalid node or link"},

+# 404: {"description": "Session not found"},

+# },

+# deprecated=True,

+# )

+# async def add_edge(

+# session_id: str = Path(description="The id of the session"),

+# edge: Edge = Body(description="The edge to add"),

+# ) -> GraphExecutionState:

+# """Adds an edge to the graph"""

+# session = ApiDependencies.invoker.services.graph_execution_manager.get(session_id)

+# if session is None:

+# raise HTTPException(status_code=404)

- try:

- session.add_edge(edge)

- ApiDependencies.invoker.services.graph_execution_manager.set(

- session

- ) # TODO: can this be done automatically, or add node through an API?

- return session

- except NodeAlreadyExecutedError:

- raise HTTPException(status_code=400)

- except IndexError: