Mirror of https://github.com/invoke-ai/InvokeAI
synced 2024-08-30 20:32:17 +00:00

Merge branch 'main' into bugfix/set-vram-on-macs

commit c54c1f603b

.github/CODEOWNERS (vendored, 36 lines changed)

@@ -1,34 +1,34 @@
# continuous integration
/.github/workflows/ @lstein @blessedcoolant
/.github/workflows/ @lstein @blessedcoolant @hipsterusername

# documentation
/docs/ @lstein @blessedcoolant @hipsterusername @Millu
/mkdocs.yml @lstein @blessedcoolant
/mkdocs.yml @lstein @blessedcoolant @hipsterusername @Millu

# nodes
/invokeai/app/ @Kyle0654 @blessedcoolant @psychedelicious @brandonrising
/invokeai/app/ @Kyle0654 @blessedcoolant @psychedelicious @brandonrising @hipsterusername

# installation and configuration
/pyproject.toml @lstein @blessedcoolant
/docker/ @lstein @blessedcoolant
/scripts/ @ebr @lstein
/installer/ @lstein @ebr
/invokeai/assets @lstein @ebr
/invokeai/configs @lstein
/invokeai/version @lstein @blessedcoolant
/pyproject.toml @lstein @blessedcoolant @hipsterusername
/docker/ @lstein @blessedcoolant @hipsterusername
/scripts/ @ebr @lstein @hipsterusername
/installer/ @lstein @ebr @hipsterusername
/invokeai/assets @lstein @ebr @hipsterusername
/invokeai/configs @lstein @hipsterusername
/invokeai/version @lstein @blessedcoolant @hipsterusername

# web ui
/invokeai/frontend @blessedcoolant @psychedelicious @lstein @maryhipp
/invokeai/backend @blessedcoolant @psychedelicious @lstein @maryhipp
/invokeai/frontend @blessedcoolant @psychedelicious @lstein @maryhipp @hipsterusername
/invokeai/backend @blessedcoolant @psychedelicious @lstein @maryhipp @hipsterusername

# generation, model management, postprocessing
/invokeai/backend @damian0815 @lstein @blessedcoolant @gregghelt2 @StAlKeR7779 @brandonrising @ryanjdick
/invokeai/backend @damian0815 @lstein @blessedcoolant @gregghelt2 @StAlKeR7779 @brandonrising @ryanjdick @hipsterusername

# front ends
/invokeai/frontend/CLI @lstein
/invokeai/frontend/install @lstein @ebr
/invokeai/frontend/merge @lstein @blessedcoolant
/invokeai/frontend/training @lstein @blessedcoolant
/invokeai/frontend/web @psychedelicious @blessedcoolant @maryhipp
/invokeai/frontend/CLI @lstein @hipsterusername
/invokeai/frontend/install @lstein @ebr @hipsterusername
/invokeai/frontend/merge @lstein @blessedcoolant @hipsterusername
/invokeai/frontend/training @lstein @blessedcoolant @hipsterusername
/invokeai/frontend/web @psychedelicious @blessedcoolant @maryhipp @hipsterusername
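
GitHub's CODEOWNERS documentation states that when several patterns match a path, the last matching pattern takes precedence. Ordering therefore matters in this file: `/invokeai/backend` appears under both "# web ui" and "# generation, model management, postprocessing", so the second entry is the one that applies. The sketch below illustrates that rule with a deliberately simplified matcher that only understands anchored directory prefixes (no `*` globs or other gitignore syntax); `parse_rules`, `matches` and `owners_for` are hypothetical helpers, not part of any GitHub tool.

```python
from typing import List, Optional, Tuple

# A few rules copied from the updated CODEOWNERS above, in file order.
RULES = """
/invokeai/frontend @blessedcoolant @psychedelicious @lstein @maryhipp @hipsterusername
/invokeai/backend @blessedcoolant @psychedelicious @lstein @maryhipp @hipsterusername
/invokeai/backend @damian0815 @lstein @blessedcoolant @gregghelt2 @StAlKeR7779 @brandonrising @ryanjdick @hipsterusername
/invokeai/frontend/web @psychedelicious @blessedcoolant @maryhipp @hipsterusername
"""

Rule = Tuple[str, List[str]]


def parse_rules(text: str) -> List[Rule]:
    """Parse 'pattern @owner @owner ...' lines, skipping blanks and comments."""
    rules: List[Rule] = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        pattern, *owners = line.split()
        rules.append((pattern, owners))
    return rules


def matches(pattern: str, path: str) -> bool:
    """Simplified: treat the pattern as an anchored file or directory prefix."""
    prefix = pattern.strip("/")
    path = path.lstrip("/")
    return path == prefix or path.startswith(prefix + "/")


def owners_for(path: str, rules: List[Rule]) -> Optional[List[str]]:
    """Return the owners of the LAST matching rule; later rules override."""
    owners = None
    for pattern, rule_owners in rules:
        if matches(pattern, path):
            owners = rule_owners
    return owners


rules = parse_rules(RULES)
print(owners_for("invokeai/backend/model_management/lora.py", rules)[0])  # @damian0815
```

Under this rule, the owners listed for `/invokeai/backend` in the "web ui" section are effectively overridden by the later entry.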

@@ -244,8 +244,12 @@ copy-paste the template above.
We can use the `@invocation` decorator to provide some additional info to the
UI, like a custom title, tags and category.

We also encourage providing a version. This must be a
[semver](https://semver.org/) version string ("$MAJOR.$MINOR.$PATCH"). The UI
will let users know if their workflow is using a mismatched version of the node.

```python
@invocation("resize", title="My Resizer", tags=["resize", "image"], category="My Invocations")
@invocation("resize", title="My Resizer", tags=["resize", "image"], category="My Invocations", version="1.0.0")
class ResizeInvocation(BaseInvocation):
    """Resizes an image"""

@@ -279,8 +283,6 @@ take a look at our [contributing nodes overview](contributingNodes).

## Advanced

-->

### Custom Output Types

Like with custom inputs, sometimes you might find yourself needing custom

@@ -22,12 +22,26 @@ To use a community node graph, download the `.json` node graph file and load

--------------------------------
### Ideal Size

**Description:** This node calculates an ideal image size for the first pass of a multi-pass upscaling workflow. The aim is to avoid the duplication that results from choosing a size larger than the model can handle.

**Node Link:** https://github.com/JPPhoto/ideal-size-node
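
How the node computes its result is not described here. One plausible approach, shown as a hypothetical sketch: keep the final aspect ratio but limit the first-pass pixel area to the model's native resolution (512x512 for SD 1.x, 1024x1024 for SDXL), snapping each side to a multiple of 8 because Stable Diffusion latents are downsampled by a factor of 8. `ideal_first_pass_size` and its parameters are assumptions, not the node's API.

```python
import math
from typing import Tuple


def ideal_first_pass_size(
    final_width: int, final_height: int, native_side: int = 512, multiple: int = 8
) -> Tuple[int, int]:
    """Hypothetical: same aspect ratio as the final image, pixel area capped at
    native_side**2, each side snapped to the nearest multiple of `multiple`."""
    aspect = final_width / final_height
    # Solve width * height == native_side**2 subject to width / height == aspect.
    height = math.sqrt(native_side * native_side / aspect)
    width = height * aspect
    # Never start larger than the requested final size.
    width, height = min(width, final_width), min(height, final_height)

    def snap(value: float) -> int:
        return max(multiple, round(value / multiple) * multiple)

    return snap(width), snap(height)


print(ideal_first_pass_size(1920, 1080))  # (680, 384)
```

For SDXL one would pass `native_side=1024`.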

--------------------------------
### Film Grain

**Description:** This node adds a film grain effect to the input image based on the weights, seeds, and blur radii parameters. It works with RGB input images only.

**Node Link:** https://github.com/JPPhoto/film-grain-node

--------------------------------
### Image Picker

**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

**Node Link:** https://github.com/JPPhoto/image-picker-node
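
A minimal sketch of the selection step, assuming a uniform random choice driven by an optional seed; whether the node exposes a seed input, and the name `pick_one`, are assumptions for illustration.

```python
import random
from typing import Optional, Sequence, TypeVar

T = TypeVar("T")


def pick_one(images: Sequence[T], seed: Optional[int] = None) -> T:
    """Return one item chosen uniformly at random. A fixed seed makes the choice
    reproducible; a private Random instance leaves the global RNG untouched."""
    if not images:
        raise ValueError("cannot pick from an empty collection")
    return random.Random(seed).choice(images)
```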

|

||||

|

||||

--------------------------------

|

||||

### Retroize

|

||||

@ -95,6 +109,91 @@ a Text-Generation-Webui instance (might work remotely too, but I never tried it)

|

||||

|

||||

This node works best with SDXL models, especially as the style can be described independantly of the LLM's output.

|

||||

|

||||

--------------------------------

|

||||

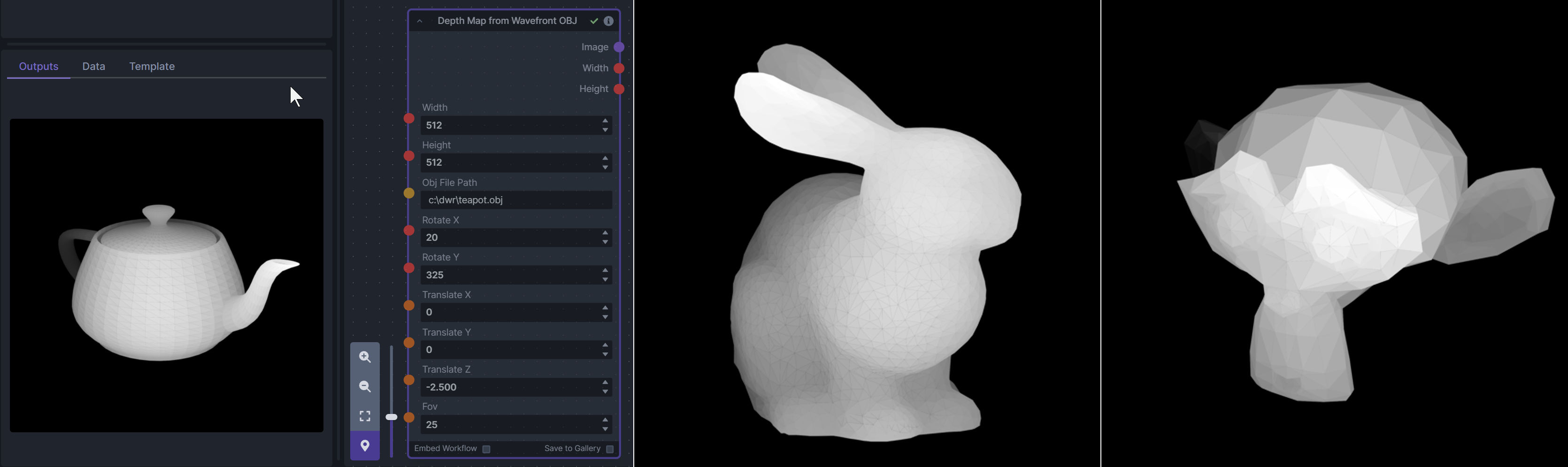

### Depth Map from Wavefront OBJ

|

||||

|

||||

**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

|

||||

|

||||

To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.
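
The per-triangle coloring rule described above can be sketched in a few lines. This hypothetical example only parses `v`/`f` records from a triangulated OBJ and computes one depth per face (the mean distance of its three vertices from a camera plane at `camera_z`, assuming the camera looks along the z axis); projection, FOV handling and rasterization, which the node does with numpy and matplotlib, are omitted. The function names are not taken from the node.

```python
from typing import List, Tuple

Vertex = Tuple[float, float, float]
Triangle = Tuple[Vertex, Vertex, Vertex]


def load_triangles(obj_text: str) -> List[Triangle]:
    """Collect triangles from Wavefront OBJ text ('v' and 'f' records only)."""
    vertices: List[Vertex] = []
    triangles: List[Triangle] = []
    for line in obj_text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "v":
            x, y, z = (float(p) for p in parts[1:4])
            vertices.append((x, y, z))
        elif parts[0] == "f":
            # Entries look like "i", "i/t", "i//n" or "i/t/n"; keep the vertex index.
            indices = [int(p.split("/")[0]) for p in parts[1:]]
            if len(indices) != 3:
                raise ValueError(f"face has {len(indices)} vertices; triangulate the mesh first")
            # OBJ indices are 1-based; negative ones count back from the latest vertex.
            a, b, c = (vertices[i - 1] if i > 0 else vertices[i] for i in indices)
            triangles.append((a, b, c))
    return triangles


def triangle_depths(triangles: List[Triangle], camera_z: float = 0.0) -> List[float]:
    """One depth per triangle: mean |z - camera_z| over its three vertices."""
    return [sum(abs(v[2] - camera_z) for v in tri) / 3 for tri in triangles]
```

Sorting faces by these depths, farthest first, gives a painter's-algorithm drawing order. Because each face receives a single depth, a large triangle spanning a wide depth range is shaded as if it were flat, which is the source of the anomalies mentioned above.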

|

||||

|

||||

**Node Link:** https://github.com/dwringer/depth-from-obj-node

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

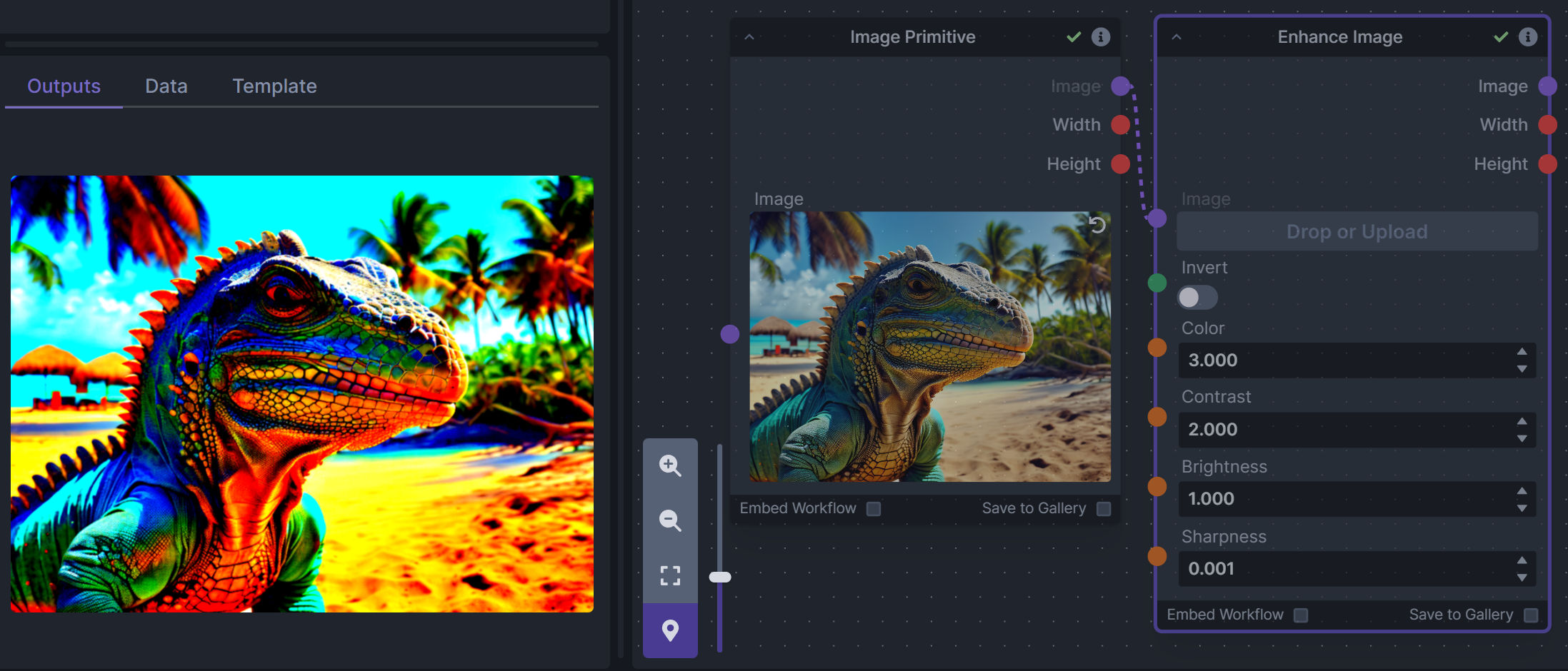

### Enhance Image (simple adjustments)

|

||||

|

||||

**Description:** Boost or reduce color saturation, contrast, brightness, sharpness, or invert colors of any image at any stage with this simple wrapper for pillow [PIL]'s ImageEnhance module.

|

||||

|

||||

Color inversion is toggled with a simple switch, while each of the four enhancer modes are activated by entering a value other than 1 in each corresponding input field. Values less than 1 will reduce the corresponding property, while values greater than 1 will enhance it.
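
Pillow's `ImageEnhance` classes work by blending the original image with a "degenerate" version of it: a factor of 0.0 returns the degenerate image, 1.0 returns the original, and larger values extrapolate beyond it. That is why a value of 1 leaves a property unchanged. For Brightness the degenerate image is black, for Contrast it is a uniform gray at the mean luminance, and for Color it is a grayscale copy (Sharpness uses a smoothed copy and is omitted here). The pure-Python sketch below reproduces that idea on lists of RGB tuples so it runs without Pillow; the helper names are hypothetical, and the rounding differs slightly from Pillow's.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]


def _blend(degenerate: RGB, original: RGB, factor: float) -> RGB:
    """Pillow-style blend: factor 0 -> degenerate, 1 -> original, above 1 extrapolates."""
    r, g, b = (
        max(0, min(255, round(d + (o - d) * factor))) for d, o in zip(degenerate, original)
    )
    return (r, g, b)


def _luma(p: RGB) -> float:
    # ITU-R 601-2 luma weights, as used for Pillow's RGB -> "L" conversion.
    return p[0] * 299 / 1000 + p[1] * 587 / 1000 + p[2] * 114 / 1000


def brightness(pixels: List[RGB], factor: float) -> List[RGB]:
    return [_blend((0, 0, 0), p, factor) for p in pixels]  # degenerate: black


def contrast(pixels: List[RGB], factor: float) -> List[RGB]:
    mean = round(sum(_luma(p) for p in pixels) / len(pixels))
    return [_blend((mean, mean, mean), p, factor) for p in pixels]  # degenerate: flat gray


def color(pixels: List[RGB], factor: float) -> List[RGB]:
    out = []
    for p in pixels:
        y = round(_luma(p))
        out.append(_blend((y, y, y), p, factor))  # degenerate: grayscale copy
    return out


def invert(pixels: List[RGB]) -> List[RGB]:
    return [(255 - r, 255 - g, 255 - b) for r, g, b in pixels]
```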

|

||||

|

||||

**Node Link:** https://github.com/dwringer/image-enhance-node

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

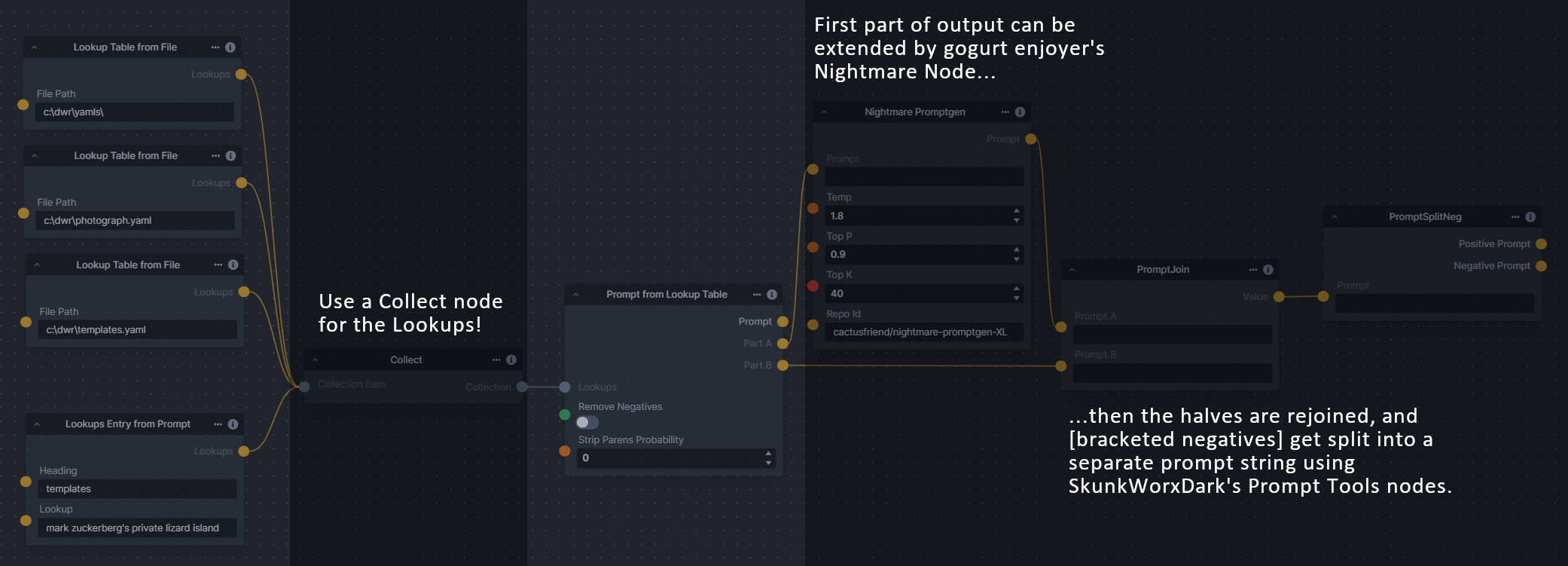

### Generative Grammar-Based Prompt Nodes

|

||||

|

||||

**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no more nonterminal terms remain in the string.

|

||||

|

||||

This includes 3 Nodes:

|

||||

- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

|

||||

- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

|

||||

- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.
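
A minimal sketch of the expansion loop described above, assuming `{name}` placeholders mark nonterminals and a lookup table maps each nonterminal to a list of alternatives (the real nodes load these rules from YAML and may use a different template syntax). The loop is iterative rather than recursive and stops after `max_steps` expansions so that a self-referencing rule cannot run forever. `GRAMMAR` and `expand` are hypothetical.

```python
import random
import re
from typing import Dict, List

# Hypothetical lookup table: nonterminal -> alternative expansions.
GRAMMAR: Dict[str, List[str]] = {
    "prompt": ["a {adjective} {subject} in {place}"],
    "adjective": ["misty", "golden", "ancient"],
    "subject": ["castle", "{adjective} lighthouse"],
    "place": ["the mountains", "a harbor town"],
}

_NONTERMINAL = re.compile(r"\{(\w+)\}")


def expand(template: str, grammar: Dict[str, List[str]], rng: random.Random, max_steps: int = 100) -> str:
    """Replace {nonterminal} tokens with random alternatives until none remain."""
    for _ in range(max_steps):
        match = _NONTERMINAL.search(template)
        if match is None:
            return template  # only terminal text is left
        # An unknown nonterminal raises KeyError here.
        choice = rng.choice(grammar[match.group(1)])
        # Splice in the chosen alternative; it may itself contain nonterminals.
        template = template[: match.start()] + choice + template[match.end():]
    raise RecursionError("grammar did not terminate within max_steps expansions")


print(expand("{prompt}", GRAMMAR, random.Random(0)))
```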

|

||||

|

||||

**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

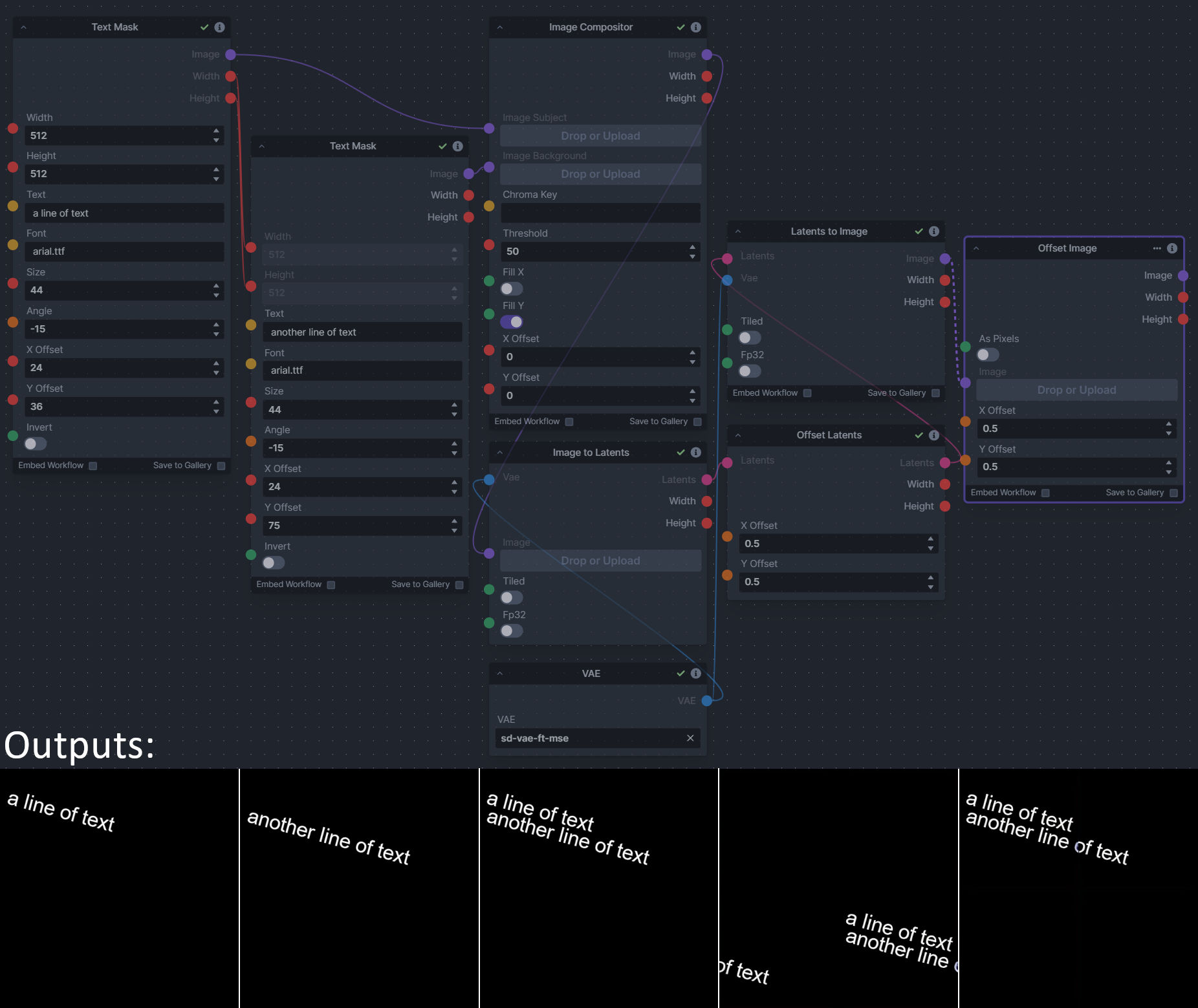

### Image and Mask Composition Pack

|

||||

|

||||

**Description:** This is a pack of nodes for composing masks and images, including a simple text mask creator and both image and latent offset nodes. The offsets wrap around, so these can be used in conjunction with the Seamless node to progressively generate centered on different parts of the seamless tiling.

|

||||

|

||||

This includes 4 Nodes:

|

||||

- *Text Mask (simple 2D)* - create and position a white on black (or black on white) line of text using any font locally available to Invoke.

|

||||

- *Image Compositor* - Take a subject from an image with a flat backdrop and layer it on another image using a chroma key or flood select background removal.

|

||||

- *Offset Latents* - Offset a latents tensor in the vertical and/or horizontal dimensions, wrapping it around.

|

||||

- *Offset Image* - Offset an image in the vertical and/or horizontal dimensions, wrapping it around.
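
The wrap-around offset used by *Offset Image* and *Offset Latents* is a cyclic shift: pixels (or latent cells) pushed past one edge re-enter on the opposite edge, which is what keeps a seamless tile seamless. A pure-Python sketch on a 2D grid follows; for arrays and tensors the same operation is available as `numpy.roll` and `torch.roll`, though whether these nodes use them is not stated here. `offset_wrap` is a hypothetical name.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def offset_wrap(grid: Sequence[Sequence[T]], dx: int, dy: int) -> List[List[T]]:
    """Shift a 2D grid right by dx and down by dy; cells pushed off one edge
    re-enter on the opposite edge (toroidal wrap-around)."""
    height = len(grid)
    width = len(grid[0]) if height else 0
    return [
        [grid[(y - dy) % height][(x - dx) % width] for x in range(width)]
        for y in range(height)
    ]
```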

|

||||

|

||||

**Node Link:** https://github.com/dwringer/composition-nodes

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

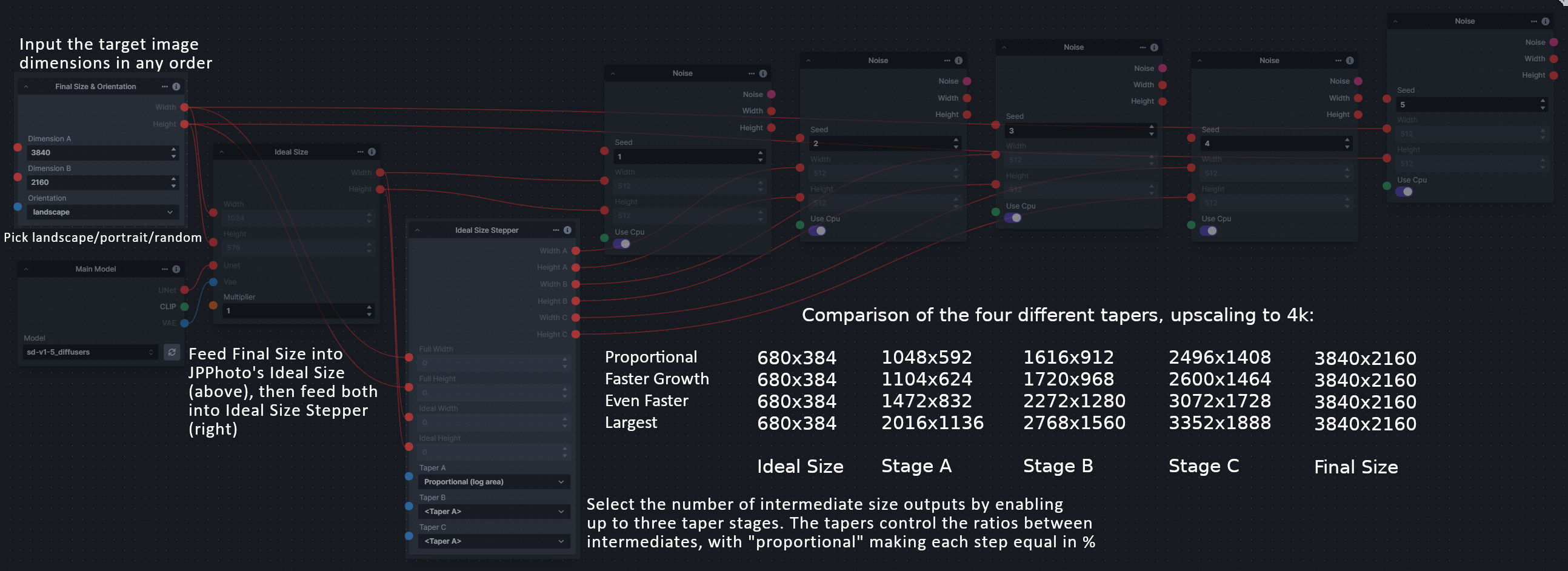

### Size Stepper Nodes

|

||||

|

||||

**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

|

||||

|

||||

A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.
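
Only the inputs and outputs of *Ideal Size Stepper* are described above. As a hypothetical illustration, intermediate sizes could be spaced by a constant scale ratio between the initial and final dimensions and snapped to multiples of 8; this is not necessarily the node's method, and `intermediate_sizes` is an assumed name. As in the description, neither endpoint is returned.

```python
from typing import List, Tuple

Size = Tuple[int, int]


def intermediate_sizes(initial: Size, final: Size, steps: int, multiple: int = 8) -> List[Size]:
    """Hypothetical stepper: `steps` sizes strictly between `initial` and `final`,
    separated by a constant scale ratio and snapped to multiples of `multiple`."""
    if steps not in (1, 2, 3):
        raise ValueError("steps must be 1, 2 or 3")
    (w0, h0), (w1, h1) = initial, final
    sizes = []
    for i in range(1, steps + 1):
        t = i / (steps + 1)  # position between the endpoints on a log scale
        w = w0 * (w1 / w0) ** t
        h = h0 * (h1 / h0) ** t
        sizes.append((round(w / multiple) * multiple, round(h / multiple) * multiple))
    return sizes


print(intermediate_sizes((512, 512), (2048, 2048), 1))  # [(1024, 1024)]
```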

**Node Link:** https://github.com/dwringer/size-stepper-nodes

**Example Usage:**

--------------------------------

### Text font to Image

**Description:** A text-to-image node for InvokeAI that renders text in a chosen font. Download a font to use (if the font is already in the font cache, it is used from there). The text is always resized to fit the image, but you can control this with padding. An optional second line of text is supported.

**Node Link:** https://github.com/mickr777/textfontimage

**Output Examples**

Results after using the depth ControlNet

--------------------------------

### Example Node Template

@@ -35,13 +35,13 @@ The table below contains a list of the default nodes shipped with InvokeAI and t
|Inverse Lerp Image | Inverse linear interpolation of all pixels of an image|
|Image Primitive | An image primitive value|
|Lerp Image | Linear interpolation of all pixels of an image|
|Image Luminosity Adjustment | Adjusts the Luminosity (Value) of an image.|
|Offset Image Channel | Add to or subtract from an image color channel by a uniform value.|
|Multiply Image Channel | Multiply or Invert an image color channel by a scalar value.|
|Multiply Images | Multiplies two images together using `PIL.ImageChops.multiply()`.|
|Blur NSFW Image | Add blur to NSFW-flagged images|
|Paste Image | Pastes an image into another image.|
|ImageProcessor | Base class for invocations that preprocess images for ControlNet|
|Resize Image | Resizes an image to specific dimensions|
|Image Saturation Adjustment | Adjusts the Saturation of an image.|
|Scale Image | Scales an image by a factor|
|Image to Latents | Encodes an image into latents.|
|Add Invisible Watermark | Add an invisible watermark to an image|

@@ -1,19 +1,19 @@
import typing
from enum import Enum
from pathlib import Path

from fastapi import Body
from fastapi.routing import APIRouter
from pathlib import Path
from pydantic import BaseModel, Field

from invokeai.app.invocations.upscale import ESRGAN_MODELS
from invokeai.backend.image_util.invisible_watermark import InvisibleWatermark
from invokeai.backend.image_util.patchmatch import PatchMatch
from invokeai.backend.image_util.safety_checker import SafetyChecker
from invokeai.backend.image_util.invisible_watermark import InvisibleWatermark
from invokeai.app.invocations.upscale import ESRGAN_MODELS

from invokeai.backend.util.logging import logging
from invokeai.version import __version__

from ..dependencies import ApiDependencies
from invokeai.backend.util.logging import logging


class LogLevel(int, Enum):
@@ -55,7 +55,7 @@ async def get_version() -> AppVersion:

@app_router.get("/config", operation_id="get_config", status_code=200, response_model=AppConfig)
async def get_config() -> AppConfig:
    infill_methods = ["tile", "lama"]
    infill_methods = ["tile", "lama", "cv2"]
    if PatchMatch.patchmatch_available():
        infill_methods.append("patchmatch")
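
The updated `get_config()` always advertises "tile", "lama" and the new "cv2" method, and adds "patchmatch" only when `PatchMatch.patchmatch_available()` returns true. A hypothetical refactor that injects the availability check makes this logic testable without the PatchMatch dependency installed; `available_infill_methods` does not exist in the codebase.

```python
from typing import Callable, List


def available_infill_methods(patchmatch_available: Callable[[], bool]) -> List[str]:
    """Same ordering as the updated get_config(): always-available methods first,
    then "patchmatch" only if the injected availability check succeeds."""
    methods = ["tile", "lama", "cv2"]
    if patchmatch_available():
        methods.append("patchmatch")
    return methods
```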

@@ -26,11 +26,16 @@ from typing import (
from pydantic import BaseModel, Field, validator
from pydantic.fields import Undefined, ModelField
from pydantic.typing import NoArgAnyCallable
import semver

if TYPE_CHECKING:
    from ..services.invocation_services import InvocationServices


class InvalidVersionError(ValueError):
    pass


class FieldDescriptions:
    denoising_start = "When to start denoising, expressed as a percentage of total steps"
    denoising_end = "When to stop denoising, expressed as a percentage of total steps"

@@ -105,24 +110,39 @@ class UIType(str, Enum):
    """

    # region Primitives
    Integer = "integer"
    Float = "float"
    Boolean = "boolean"
    String = "string"
    Array = "array"
    Image = "ImageField"
    Latents = "LatentsField"
    Color = "ColorField"
    Conditioning = "ConditioningField"
    Control = "ControlField"
    Color = "ColorField"
    ImageCollection = "ImageCollection"
    ConditioningCollection = "ConditioningCollection"
    ColorCollection = "ColorCollection"
    LatentsCollection = "LatentsCollection"
    IntegerCollection = "IntegerCollection"
    FloatCollection = "FloatCollection"
    StringCollection = "StringCollection"
    Float = "float"
    Image = "ImageField"
    Integer = "integer"
    Latents = "LatentsField"
    String = "string"
    # endregion

    # region Collection Primitives
    BooleanCollection = "BooleanCollection"
    ColorCollection = "ColorCollection"
    ConditioningCollection = "ConditioningCollection"
    ControlCollection = "ControlCollection"
    FloatCollection = "FloatCollection"
    ImageCollection = "ImageCollection"
    IntegerCollection = "IntegerCollection"
    LatentsCollection = "LatentsCollection"
    StringCollection = "StringCollection"
    # endregion

    # region Polymorphic Primitives
    BooleanPolymorphic = "BooleanPolymorphic"
    ColorPolymorphic = "ColorPolymorphic"
    ConditioningPolymorphic = "ConditioningPolymorphic"
    ControlPolymorphic = "ControlPolymorphic"
    FloatPolymorphic = "FloatPolymorphic"
    ImagePolymorphic = "ImagePolymorphic"
    IntegerPolymorphic = "IntegerPolymorphic"
    LatentsPolymorphic = "LatentsPolymorphic"
    StringPolymorphic = "StringPolymorphic"
    # endregion

    # region Models

@@ -176,6 +196,7 @@ class _InputField(BaseModel):
    ui_type: Optional[UIType]
    ui_component: Optional[UIComponent]
    ui_order: Optional[int]
    item_default: Optional[Any]


class _OutputField(BaseModel):
@@ -223,6 +244,7 @@ def InputField(
    ui_component: Optional[UIComponent] = None,
    ui_hidden: bool = False,
    ui_order: Optional[int] = None,
    item_default: Optional[Any] = None,
    **kwargs: Any,
) -> Any:
    """

@@ -249,6 +271,11 @@ def InputField(
    For this case, you could provide `UIComponent.Textarea`.

    : param bool ui_hidden: [False] Specifies whether or not this field should be hidden in the UI.

    : param int ui_order: [None] Specifies the order in which this field should be rendered in the UI. \

    : param bool item_default: [None] Specifies the default item value, if this is a collection input. \
    Ignored for non-collection fields.
    """
    return Field(
        *args,
@@ -282,6 +309,7 @@ def InputField(
        ui_component=ui_component,
        ui_hidden=ui_hidden,
        ui_order=ui_order,
        item_default=item_default,
        **kwargs,
    )

@@ -332,6 +360,8 @@ def OutputField(
    `UIType.SDXLMainModelField` to indicate that the field is an SDXL main model field.

    : param bool ui_hidden: [False] Specifies whether or not this field should be hidden in the UI. \

    : param int ui_order: [None] Specifies the order in which this field should be rendered in the UI. \
    """
    return Field(
        *args,

@@ -376,6 +406,9 @@ class UIConfigBase(BaseModel):
    tags: Optional[list[str]] = Field(default_factory=None, description="The node's tags")
    title: Optional[str] = Field(default=None, description="The node's display name")
    category: Optional[str] = Field(default=None, description="The node's category")
    version: Optional[str] = Field(
        default=None, description='The node\'s version. Should be a valid semver string e.g. "1.0.0" or "3.8.13".'
    )


class InvocationContext:
@@ -474,6 +507,8 @@ class BaseInvocation(ABC, BaseModel):
                schema["tags"] = uiconfig.tags
            if uiconfig and hasattr(uiconfig, "category"):
                schema["category"] = uiconfig.category
            if uiconfig and hasattr(uiconfig, "version"):
                schema["version"] = uiconfig.version
            if "required" not in schema or not isinstance(schema["required"], list):
                schema["required"] = list()
            schema["required"].extend(["type", "id"])

@@ -542,7 +577,11 @@ GenericBaseInvocation = TypeVar("GenericBaseInvocation", bound=BaseInvocation)


def invocation(
    invocation_type: str, title: Optional[str] = None, tags: Optional[list[str]] = None, category: Optional[str] = None
    invocation_type: str,
    title: Optional[str] = None,
    tags: Optional[list[str]] = None,
    category: Optional[str] = None,
    version: Optional[str] = None,
) -> Callable[[Type[GenericBaseInvocation]], Type[GenericBaseInvocation]]:
    """
    Adds metadata to an invocation.
@@ -569,6 +608,12 @@ def invocation(
            cls.UIConfig.tags = tags
        if category is not None:
            cls.UIConfig.category = category
        if version is not None:
            try:
                semver.Version.parse(version)
            except ValueError as e:
                raise InvalidVersionError(f'Invalid version string for node "{invocation_type}": "{version}"') from e
            cls.UIConfig.version = version

        # Add the invocation type to the pydantic model of the invocation
        invocation_type_annotation = Literal[invocation_type] # type: ignore

@@ -580,8 +625,9 @@ def invocation(
            config=cls.__config__,
        )
        cls.__fields__.update({"type": invocation_type_field})
        cls.__annotations__.update({"type": invocation_type_annotation})

        # to support 3.9, 3.10 and 3.11, as described in https://docs.python.org/3/howto/annotations.html
        if annotations := cls.__dict__.get("__annotations__", None):
            annotations.update({"type": invocation_type_annotation})
        return cls

    return wrapper
@@ -615,7 +661,10 @@ def invocation_output(
            config=cls.__config__,
        )
        cls.__fields__.update({"type": output_type_field})
        cls.__annotations__.update({"type": output_type_annotation})

        # to support 3.9, 3.10 and 3.11, as described in https://docs.python.org/3/howto/annotations.html
        if annotations := cls.__dict__.get("__annotations__", None):
            annotations.update({"type": output_type_annotation})

        return cls

@@ -10,7 +10,9 @@ from invokeai.app.util.misc import SEED_MAX, get_random_seed
from .baseinvocation import BaseInvocation, InputField, InvocationContext, invocation


@invocation("range", title="Integer Range", tags=["collection", "integer", "range"], category="collections")
@invocation(
    "range", title="Integer Range", tags=["collection", "integer", "range"], category="collections", version="1.0.0"
)
class RangeInvocation(BaseInvocation):
    """Creates a range of numbers from start to stop with step"""

@@ -33,6 +35,7 @@ class RangeInvocation(BaseInvocation):
    title="Integer Range of Size",
    tags=["collection", "integer", "size", "range"],
    category="collections",
    version="1.0.0",
)
class RangeOfSizeInvocation(BaseInvocation):
    """Creates a range from start to start + size with step"""
@@ -50,6 +53,7 @@ class RangeOfSizeInvocation(BaseInvocation):
    title="Random Range",
    tags=["range", "integer", "random", "collection"],
    category="collections",
    version="1.0.0",
)
class RandomRangeInvocation(BaseInvocation):
    """Creates a collection of random numbers"""
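
Read as Python `range` semantics (upper bound exclusive), the three node docstrings above correspond roughly to the following sketch. The functions are hypothetical stand-ins for the nodes' `invoke` methods, and `random_range` uses the standard-library `random.Random` as an assumed substitute for whatever generator the node actually uses.

```python
import random
from typing import List


def int_range(start: int, stop: int, step: int = 1) -> List[int]:
    """'Integer Range': start up to (not including) stop, in increments of step."""
    return list(range(start, stop, step))


def int_range_of_size(start: int, size: int, step: int = 1) -> List[int]:
    """'Integer Range of Size': start up to (not including) start + size."""
    return list(range(start, start + size, step))


def random_range(low: int, high: int, size: int, seed: int) -> List[int]:
    """'Random Range': `size` integers drawn from [low, high) with a seeded RNG."""
    rng = random.Random(seed)
    return [rng.randrange(low, high) for _ in range(size)]


print(int_range(0, 10, 3))  # [0, 3, 6, 9]
```

Note that with a `step` other than 1, "Integer Range of Size" yields fewer than `size` items under this reading, since `size` bounds the span rather than the count.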

@@ -44,7 +44,7 @@ class ConditioningFieldData:
# PerpNeg = "perp_neg"


@invocation("compel", title="Prompt", tags=["prompt", "compel"], category="conditioning")
@invocation("compel", title="Prompt", tags=["prompt", "compel"], category="conditioning", version="1.0.0")
class CompelInvocation(BaseInvocation):
    """Parse prompt using compel package to conditioning."""

@@ -267,6 +267,7 @@ class SDXLPromptInvocationBase:
    title="SDXL Prompt",
    tags=["sdxl", "compel", "prompt"],
    category="conditioning",
    version="1.0.0",
)
class SDXLCompelPromptInvocation(BaseInvocation, SDXLPromptInvocationBase):
    """Parse prompt using compel package to conditioning."""
@@ -351,6 +352,7 @@ class SDXLCompelPromptInvocation(BaseInvocation, SDXLPromptInvocationBase):
    title="SDXL Refiner Prompt",
    tags=["sdxl", "compel", "prompt"],
    category="conditioning",
    version="1.0.0",
)
class SDXLRefinerCompelPromptInvocation(BaseInvocation, SDXLPromptInvocationBase):
    """Parse prompt using compel package to conditioning."""
@@ -403,7 +405,7 @@ class ClipSkipInvocationOutput(BaseInvocationOutput):
    clip: ClipField = OutputField(default=None, description=FieldDescriptions.clip, title="CLIP")


@invocation("clip_skip", title="CLIP Skip", tags=["clipskip", "clip", "skip"], category="conditioning")
@invocation("clip_skip", title="CLIP Skip", tags=["clipskip", "clip", "skip"], category="conditioning", version="1.0.0")
class ClipSkipInvocation(BaseInvocation):
    """Skip layers in clip text_encoder model."""

@@ -95,14 +95,12 @@ class ControlOutput(BaseInvocationOutput):
    control: ControlField = OutputField(description=FieldDescriptions.control)


@invocation("controlnet", title="ControlNet", tags=["controlnet"], category="controlnet")
@invocation("controlnet", title="ControlNet", tags=["controlnet"], category="controlnet", version="1.0.0")
class ControlNetInvocation(BaseInvocation):
    """Collects ControlNet info to pass to other nodes"""

    image: ImageField = InputField(description="The control image")
    control_model: ControlNetModelField = InputField(
        default="lllyasviel/sd-controlnet-canny", description=FieldDescriptions.controlnet_model, input=Input.Direct
    )
    control_model: ControlNetModelField = InputField(description=FieldDescriptions.controlnet_model, input=Input.Direct)
    control_weight: Union[float, List[float]] = InputField(
        default=1.0, description="The weight given to the ControlNet", ui_type=UIType.Float
    )
@@ -129,7 +127,9 @@ class ControlNetInvocation(BaseInvocation):
        )


@invocation("image_processor", title="Base Image Processor", tags=["controlnet"], category="controlnet")
@invocation(
    "image_processor", title="Base Image Processor", tags=["controlnet"], category="controlnet", version="1.0.0"
)
class ImageProcessorInvocation(BaseInvocation):
    """Base class for invocations that preprocess images for ControlNet"""

@@ -173,6 +173,7 @@ class ImageProcessorInvocation(BaseInvocation):
    title="Canny Processor",
    tags=["controlnet", "canny"],
    category="controlnet",
    version="1.0.0",
)
class CannyImageProcessorInvocation(ImageProcessorInvocation):
    """Canny edge detection for ControlNet"""
@@ -195,6 +196,7 @@ class CannyImageProcessorInvocation(ImageProcessorInvocation):
    title="HED (softedge) Processor",
    tags=["controlnet", "hed", "softedge"],
    category="controlnet",
    version="1.0.0",
)
class HedImageProcessorInvocation(ImageProcessorInvocation):
    """Applies HED edge detection to image"""

@@ -223,6 +225,7 @@ class HedImageProcessorInvocation(ImageProcessorInvocation):
    title="Lineart Processor",
    tags=["controlnet", "lineart"],
    category="controlnet",
    version="1.0.0",
)
class LineartImageProcessorInvocation(ImageProcessorInvocation):
    """Applies line art processing to image"""
@@ -244,6 +247,7 @@ class LineartImageProcessorInvocation(ImageProcessorInvocation):
    title="Lineart Anime Processor",
    tags=["controlnet", "lineart", "anime"],
    category="controlnet",
    version="1.0.0",
)
class LineartAnimeImageProcessorInvocation(ImageProcessorInvocation):
    """Applies line art anime processing to image"""
@@ -266,6 +270,7 @@ class LineartAnimeImageProcessorInvocation(ImageProcessorInvocation):
    title="Openpose Processor",
    tags=["controlnet", "openpose", "pose"],
    category="controlnet",
    version="1.0.0",
)
class OpenposeImageProcessorInvocation(ImageProcessorInvocation):
    """Applies Openpose processing to image"""
@@ -290,6 +295,7 @@ class OpenposeImageProcessorInvocation(ImageProcessorInvocation):
    title="Midas Depth Processor",
    tags=["controlnet", "midas"],
    category="controlnet",
    version="1.0.0",
)
class MidasDepthImageProcessorInvocation(ImageProcessorInvocation):
    """Applies Midas depth processing to image"""
@@ -316,6 +322,7 @@ class MidasDepthImageProcessorInvocation(ImageProcessorInvocation):
    title="Normal BAE Processor",
    tags=["controlnet"],
    category="controlnet",
    version="1.0.0",
)
class NormalbaeImageProcessorInvocation(ImageProcessorInvocation):
    """Applies NormalBae processing to image"""
@@ -331,7 +338,9 @@ class NormalbaeImageProcessorInvocation(ImageProcessorInvocation):
        return processed_image


@invocation("mlsd_image_processor", title="MLSD Processor", tags=["controlnet", "mlsd"], category="controlnet")
@invocation(
    "mlsd_image_processor", title="MLSD Processor", tags=["controlnet", "mlsd"], category="controlnet", version="1.0.0"
)
class MlsdImageProcessorInvocation(ImageProcessorInvocation):
    """Applies MLSD processing to image"""

@@ -352,7 +361,9 @@ class MlsdImageProcessorInvocation(ImageProcessorInvocation):
        return processed_image


@invocation("pidi_image_processor", title="PIDI Processor", tags=["controlnet", "pidi"], category="controlnet")
@invocation(
    "pidi_image_processor", title="PIDI Processor", tags=["controlnet", "pidi"], category="controlnet", version="1.0.0"
)
class PidiImageProcessorInvocation(ImageProcessorInvocation):
    """Applies PIDI processing to image"""

@@ -378,6 +389,7 @@ class PidiImageProcessorInvocation(ImageProcessorInvocation):
    title="Content Shuffle Processor",
    tags=["controlnet", "contentshuffle"],
    category="controlnet",
    version="1.0.0",
)
class ContentShuffleImageProcessorInvocation(ImageProcessorInvocation):
    """Applies content shuffle processing to image"""
@@ -407,6 +419,7 @@ class ContentShuffleImageProcessorInvocation(ImageProcessorInvocation):
    title="Zoe (Depth) Processor",
    tags=["controlnet", "zoe", "depth"],
    category="controlnet",
    version="1.0.0",
)
class ZoeDepthImageProcessorInvocation(ImageProcessorInvocation):
    """Applies Zoe depth processing to image"""
@@ -422,6 +435,7 @@ class ZoeDepthImageProcessorInvocation(ImageProcessorInvocation):
    title="Mediapipe Face Processor",
    tags=["controlnet", "mediapipe", "face"],
    category="controlnet",
    version="1.0.0",
)
class MediapipeFaceProcessorInvocation(ImageProcessorInvocation):
    """Applies mediapipe face processing to image"""
@@ -444,6 +458,7 @@ class MediapipeFaceProcessorInvocation(ImageProcessorInvocation):
    title="Leres (Depth) Processor",
    tags=["controlnet", "leres", "depth"],
    category="controlnet",
    version="1.0.0",
)
class LeresImageProcessorInvocation(ImageProcessorInvocation):
    """Applies leres processing to image"""
@@ -472,6 +487,7 @@ class LeresImageProcessorInvocation(ImageProcessorInvocation):
    title="Tile Resample Processor",
    tags=["controlnet", "tile"],
    category="controlnet",
    version="1.0.0",
)
class TileResamplerProcessorInvocation(ImageProcessorInvocation):
    """Tile resampler processor"""
@@ -511,6 +527,7 @@ class TileResamplerProcessorInvocation(ImageProcessorInvocation):
    title="Segment Anything Processor",
    tags=["controlnet", "segmentanything"],
    category="controlnet",
    version="1.0.0",
)
class SegmentAnythingProcessorInvocation(ImageProcessorInvocation):
    """Applies segment anything processing to image"""

@@ -10,12 +10,7 @@ from invokeai.app.models.image import ImageCategory, ResourceOrigin
 from .baseinvocation import BaseInvocation, InputField, InvocationContext, invocation


-@invocation(
-    "cv_inpaint",
-    title="OpenCV Inpaint",
-    tags=["opencv", "inpaint"],
-    category="inpaint",
-)
+@invocation("cv_inpaint", title="OpenCV Inpaint", tags=["opencv", "inpaint"], category="inpaint", version="1.0.0")
 class CvInpaintInvocation(BaseInvocation):
     """Simple inpaint using opencv."""


@@ -16,7 +16,7 @@ from ..models.image import ImageCategory, ResourceOrigin
 from .baseinvocation import BaseInvocation, FieldDescriptions, InputField, InvocationContext, invocation


-@invocation("show_image", title="Show Image", tags=["image"], category="image")
+@invocation("show_image", title="Show Image", tags=["image"], category="image", version="1.0.0")
 class ShowImageInvocation(BaseInvocation):
     """Displays a provided image using the OS image viewer, and passes it forward in the pipeline."""

@@ -36,7 +36,7 @@ class ShowImageInvocation(BaseInvocation):
         )


-@invocation("blank_image", title="Blank Image", tags=["image"], category="image")
+@invocation("blank_image", title="Blank Image", tags=["image"], category="image", version="1.0.0")
 class BlankImageInvocation(BaseInvocation):
     """Creates a blank image and forwards it to the pipeline"""

@@ -65,7 +65,7 @@ class BlankImageInvocation(BaseInvocation):
         )


-@invocation("img_crop", title="Crop Image", tags=["image", "crop"], category="image")
+@invocation("img_crop", title="Crop Image", tags=["image", "crop"], category="image", version="1.0.0")
 class ImageCropInvocation(BaseInvocation):
     """Crops an image to a specified box. The box can be outside of the image."""

@@ -98,7 +98,7 @@ class ImageCropInvocation(BaseInvocation):
         )


-@invocation("img_paste", title="Paste Image", tags=["image", "paste"], category="image")
+@invocation("img_paste", title="Paste Image", tags=["image", "paste"], category="image", version="1.0.0")
 class ImagePasteInvocation(BaseInvocation):
     """Pastes an image into another image."""

@@ -146,7 +146,7 @@ class ImagePasteInvocation(BaseInvocation):
         )


-@invocation("tomask", title="Mask from Alpha", tags=["image", "mask"], category="image")
+@invocation("tomask", title="Mask from Alpha", tags=["image", "mask"], category="image", version="1.0.0")
 class MaskFromAlphaInvocation(BaseInvocation):
     """Extracts the alpha channel of an image as a mask."""

@@ -177,7 +177,7 @@ class MaskFromAlphaInvocation(BaseInvocation):
         )


-@invocation("img_mul", title="Multiply Images", tags=["image", "multiply"], category="image")
+@invocation("img_mul", title="Multiply Images", tags=["image", "multiply"], category="image", version="1.0.0")
 class ImageMultiplyInvocation(BaseInvocation):
     """Multiplies two images together using `PIL.ImageChops.multiply()`."""

@@ -210,7 +210,7 @@ class ImageMultiplyInvocation(BaseInvocation):
 IMAGE_CHANNELS = Literal["A", "R", "G", "B"]


-@invocation("img_chan", title="Extract Image Channel", tags=["image", "channel"], category="image")
+@invocation("img_chan", title="Extract Image Channel", tags=["image", "channel"], category="image", version="1.0.0")
 class ImageChannelInvocation(BaseInvocation):
     """Gets a channel from an image."""

@@ -242,7 +242,7 @@ class ImageChannelInvocation(BaseInvocation):
 IMAGE_MODES = Literal["L", "RGB", "RGBA", "CMYK", "YCbCr", "LAB", "HSV", "I", "F"]


-@invocation("img_conv", title="Convert Image Mode", tags=["image", "convert"], category="image")
+@invocation("img_conv", title="Convert Image Mode", tags=["image", "convert"], category="image", version="1.0.0")
 class ImageConvertInvocation(BaseInvocation):
     """Converts an image to a different mode."""


@@ -271,7 +271,7 @@ class ImageConvertInvocation(BaseInvocation):
         )


-@invocation("img_blur", title="Blur Image", tags=["image", "blur"], category="image")
+@invocation("img_blur", title="Blur Image", tags=["image", "blur"], category="image", version="1.0.0")
 class ImageBlurInvocation(BaseInvocation):
     """Blurs an image"""

@@ -325,7 +325,7 @@ PIL_RESAMPLING_MAP = {
 }


-@invocation("img_resize", title="Resize Image", tags=["image", "resize"], category="image")
+@invocation("img_resize", title="Resize Image", tags=["image", "resize"], category="image", version="1.0.0")
 class ImageResizeInvocation(BaseInvocation):
     """Resizes an image to specific dimensions"""

@@ -365,7 +365,7 @@ class ImageResizeInvocation(BaseInvocation):
         )


-@invocation("img_scale", title="Scale Image", tags=["image", "scale"], category="image")
+@invocation("img_scale", title="Scale Image", tags=["image", "scale"], category="image", version="1.0.0")
 class ImageScaleInvocation(BaseInvocation):
     """Scales an image by a factor"""

@@ -406,7 +406,7 @@ class ImageScaleInvocation(BaseInvocation):
         )


-@invocation("img_lerp", title="Lerp Image", tags=["image", "lerp"], category="image")
+@invocation("img_lerp", title="Lerp Image", tags=["image", "lerp"], category="image", version="1.0.0")
 class ImageLerpInvocation(BaseInvocation):
     """Linear interpolation of all pixels of an image"""

@@ -439,7 +439,7 @@ class ImageLerpInvocation(BaseInvocation):
         )


-@invocation("img_ilerp", title="Inverse Lerp Image", tags=["image", "ilerp"], category="image")
+@invocation("img_ilerp", title="Inverse Lerp Image", tags=["image", "ilerp"], category="image", version="1.0.0")
 class ImageInverseLerpInvocation(BaseInvocation):
     """Inverse linear interpolation of all pixels of an image"""

@@ -472,7 +472,7 @@ class ImageInverseLerpInvocation(BaseInvocation):
         )


-@invocation("img_nsfw", title="Blur NSFW Image", tags=["image", "nsfw"], category="image")
+@invocation("img_nsfw", title="Blur NSFW Image", tags=["image", "nsfw"], category="image", version="1.0.0")
 class ImageNSFWBlurInvocation(BaseInvocation):
     """Add blur to NSFW-flagged images"""

@@ -517,7 +517,9 @@ class ImageNSFWBlurInvocation(BaseInvocation):
         return caution.resize((caution.width // 2, caution.height // 2))


-@invocation("img_watermark", title="Add Invisible Watermark", tags=["image", "watermark"], category="image")
+@invocation(
+    "img_watermark", title="Add Invisible Watermark", tags=["image", "watermark"], category="image", version="1.0.0"
+)
 class ImageWatermarkInvocation(BaseInvocation):
     """Add an invisible watermark to an image"""


@@ -548,7 +550,7 @@ class ImageWatermarkInvocation(BaseInvocation):
         )


-@invocation("mask_edge", title="Mask Edge", tags=["image", "mask", "inpaint"], category="image")
+@invocation("mask_edge", title="Mask Edge", tags=["image", "mask", "inpaint"], category="image", version="1.0.0")
 class MaskEdgeInvocation(BaseInvocation):
     """Applies an edge mask to an image"""

@@ -561,7 +563,7 @@ class MaskEdgeInvocation(BaseInvocation):
     )

     def invoke(self, context: InvocationContext) -> ImageOutput:
-        mask = context.services.images.get_pil_image(self.image.image_name)
+        mask = context.services.images.get_pil_image(self.image.image_name).convert("L")

         npimg = numpy.asarray(mask, dtype=numpy.uint8)
         npgradient = numpy.uint8(255 * (1.0 - numpy.floor(numpy.abs(0.5 - numpy.float32(npimg) / 255.0) * 2.0)))
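The hunk above converts the mask to single-channel `"L"` mode before `numpy.asarray`. That makes `npimg` a 2-D array of 0..255 values whatever mode the stored image has. The unchanged `npgradient` expression evaluates to 255 for every partially set pixel and to 0 only where the mask is exactly 0 or exactly 255. The pure-Python restatement of the per-pixel formula below lets you check that arithmetic by hand; the helper name `edge_gradient` is made up for this example.

```python
import math


def edge_gradient(value: int) -> int:
    """Per-pixel form of the npgradient expression for a 0..255 mask value."""
    # abs(0.5 - v/255) * 2 lies in [0, 1] and reaches 1.0 only at v == 0 or v == 255,
    # so floor() is 1 at those two extremes and 0 for every value in between.
    return int(255 * (1.0 - math.floor(abs(0.5 - value / 255.0) * 2.0)))


row = [0, 1, 64, 128, 254, 255]
print([edge_gradient(v) for v in row])  # [0, 255, 255, 255, 255, 0]
```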

@@ -593,7 +595,9 @@ class MaskEdgeInvocation(BaseInvocation):
         )


-@invocation("mask_combine", title="Combine Masks", tags=["image", "mask", "multiply"], category="image")
+@invocation(
+    "mask_combine", title="Combine Masks", tags=["image", "mask", "multiply"], category="image", version="1.0.0"
+)
 class MaskCombineInvocation(BaseInvocation):
     """Combine two masks together by multiplying them using `PIL.ImageChops.multiply()`."""

@@ -623,7 +627,7 @@ class MaskCombineInvocation(BaseInvocation):
         )


-@invocation("color_correct", title="Color Correct", tags=["image", "color"], category="image")
+@invocation("color_correct", title="Color Correct", tags=["image", "color"], category="image", version="1.0.0")
 class ColorCorrectInvocation(BaseInvocation):
     """
     Shifts the colors of a target image to match the reference image, optionally
@@ -696,8 +700,13 @@ class ColorCorrectInvocation(BaseInvocation):
         # Blur the mask out (into init image) by specified amount
         if self.mask_blur_radius > 0:
             nm = numpy.asarray(pil_init_mask, dtype=numpy.uint8)
+            inverted_nm = 255 - nm
+            dilation_size = int(round(self.mask_blur_radius) + 20)
+            dilating_kernel = cv2.getStructuringElement(cv2.MORPH_ELLIPSE, (dilation_size, dilation_size))
+            inverted_dilated_nm = cv2.dilate(inverted_nm, dilating_kernel)
+            dilated_nm = 255 - inverted_dilated_nm
             nmd = cv2.erode(
-                nm,
+                dilated_nm,
                 kernel=numpy.ones((3, 3), dtype=numpy.uint8),
                 iterations=int(self.mask_blur_radius / 2),
             )
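In the color-correction hunk the mask is no longer eroded directly. The code first dilates its inverse with an elliptical kernel and inverts the result back into `dilated_nm`, and `cv2.erode` then runs on that array. For a symmetric structuring element, invert-dilate-invert is the same as an erosion. The new code therefore shrinks the white region by roughly half of `dilation_size` before the iterated 3x3 erosion runs.

The sketch below checks that identity in one dimension. It makes two simplifying assumptions:
- a flat window stands in for OpenCV's elliptical kernel;
- windows are clipped at the borders.

`dilate_1d` and `erode_1d` are illustrative helpers, not OpenCV functions.

```python
from typing import List


def dilate_1d(values: List[int], radius: int) -> List[int]:
    """Max over a window of +/- radius samples, clipped at the borders."""
    n = len(values)
    return [max(values[max(0, i - radius) : min(n, i + radius + 1)]) for i in range(n)]


def erode_1d(values: List[int], radius: int) -> List[int]:
    """Min over a window of +/- radius samples, clipped at the borders."""
    n = len(values)
    return [min(values[max(0, i - radius) : min(n, i + radius + 1)]) for i in range(n)]


mask = [0, 0, 255, 255, 255, 255, 255, 0, 0]
inverted = [255 - v for v in mask]
dilated_mask = [255 - v for v in dilate_1d(inverted, radius=1)]

# Inverting, dilating and inverting back gives the same result as eroding the original.
assert dilated_mask == erode_1d(mask, radius=1)
print(dilated_mask)  # [0, 0, 0, 255, 255, 255, 0, 0, 0]
```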

@@ -728,7 +737,7 @@ class ColorCorrectInvocation(BaseInvocation):
         )


-@invocation("img_hue_adjust", title="Adjust Image Hue", tags=["image", "hue"], category="image")
+@invocation("img_hue_adjust", title="Adjust Image Hue", tags=["image", "hue"], category="image", version="1.0.0")
 class ImageHueAdjustmentInvocation(BaseInvocation):
     """Adjusts the Hue of an image."""

@@ -769,38 +778,95 @@ class ImageHueAdjustmentInvocation(BaseInvocation):
         )


+COLOR_CHANNELS = Literal[
+    "Red (RGBA)",
+    "Green (RGBA)",
+    "Blue (RGBA)",
+    "Alpha (RGBA)",
+    "Cyan (CMYK)",
+    "Magenta (CMYK)",
+    "Yellow (CMYK)",
+    "Black (CMYK)",
+    "Hue (HSV)",
+    "Saturation (HSV)",
+    "Value (HSV)",
+    "Luminosity (LAB)",
+    "A (LAB)",
+    "B (LAB)",
+    "Y (YCbCr)",
+    "Cb (YCbCr)",
+    "Cr (YCbCr)",
+]
+
+CHANNEL_FORMATS = {
+    "Red (RGBA)": ("RGBA", 0),
+    "Green (RGBA)": ("RGBA", 1),
+    "Blue (RGBA)": ("RGBA", 2),
+    "Alpha (RGBA)": ("RGBA", 3),
+    "Cyan (CMYK)": ("CMYK", 0),
+    "Magenta (CMYK)": ("CMYK", 1),
+    "Yellow (CMYK)": ("CMYK", 2),
+    "Black (CMYK)": ("CMYK", 3),
+    "Hue (HSV)": ("HSV", 0),
+    "Saturation (HSV)": ("HSV", 1),
+    "Value (HSV)": ("HSV", 2),
+    "Luminosity (LAB)": ("LAB", 0),
+    "A (LAB)": ("LAB", 1),
+    "B (LAB)": ("LAB", 2),
+    "Y (YCbCr)": ("YCbCr", 0),
+    "Cb (YCbCr)": ("YCbCr", 1),
+    "Cr (YCbCr)": ("YCbCr", 2),
+}
+
+
 @invocation(
-    "img_luminosity_adjust",
-    title="Adjust Image Luminosity",
-    tags=["image", "luminosity", "hsl"],
+    "img_channel_offset",
+    title="Offset Image Channel",
+    tags=[
+        "image",
+        "offset",
+        "red",
+        "green",
+        "blue",
+        "alpha",
+        "cyan",
+        "magenta",
+        "yellow",
+        "black",
+        "hue",
+        "saturation",
+        "luminosity",
+        "value",
+    ],
     category="image",
+    version="1.0.0",
 )
-class ImageLuminosityAdjustmentInvocation(BaseInvocation):
-    """Adjusts the Luminosity (Value) of an image."""
+class ImageChannelOffsetInvocation(BaseInvocation):
+    """Add or subtract a value from a specific color channel of an image."""

     image: ImageField = InputField(description="The image to adjust")
-    luminosity: float = InputField(
-        default=1.0, ge=0, le=1, description="The factor by which to adjust the luminosity (value)"
-    )
+    channel: COLOR_CHANNELS = InputField(description="Which channel to adjust")
+    offset: int = InputField(default=0, ge=-255, le=255, description="The amount to adjust the channel by")

     def invoke(self, context: InvocationContext) -> ImageOutput:
         pil_image = context.services.images.get_pil_image(self.image.image_name)

-        # Convert PIL image to OpenCV format (numpy array), note color channel
-        # ordering is changed from RGB to BGR
-        image = numpy.array(pil_image.convert("RGB"))[:, :, ::-1]
+        # extract the channel and mode from the input and reference tuple
+        mode = CHANNEL_FORMATS[self.channel][0]
+        channel_number = CHANNEL_FORMATS[self.channel][1]

-        # Convert image to HSV color space
-        hsv_image = cv2.cvtColor(image, cv2.COLOR_BGR2HSV)
+        # Convert PIL image to new format
+        converted_image = numpy.array(pil_image.convert(mode)).astype(int)
+        image_channel = converted_image[:, :, channel_number]

-        # Adjust the luminosity (value)
-        hsv_image[:, :, 2] = numpy.clip(hsv_image[:, :, 2] * self.luminosity, 0, 255)
+        # Adjust the value, clipping to 0..255
+        image_channel = numpy.clip(image_channel + self.offset, 0, 255)

-        # Convert image back to BGR color space
-        image = cv2.cvtColor(hsv_image, cv2.COLOR_HSV2BGR)
+        # Put the channel back into the image
+        converted_image[:, :, channel_number] = image_channel

-        # Convert back to PIL format and to original color mode
-        pil_image = Image.fromarray(image[:, :, ::-1], "RGB").convert("RGBA")
+        # Convert back to RGBA format and output
+        pil_image = Image.fromarray(converted_image.astype(numpy.uint8), mode=mode).convert("RGBA")

         image_dto = context.services.images.create(
             image=pil_image,
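`ImageChannelOffsetInvocation` works in three steps:
1. It looks up the PIL mode and band index for the chosen channel in `CHANNEL_FORMATS`.
2. It converts the image to that mode and casts it with `.astype(int)`.
3. It adds the offset and clips the result to 0..255.

The cast matters: the sum may exceed 255 or drop below 0 before clipping, instead of overflowing `uint8`.

The sketch below restates the per-pixel logic in plain Python on a list of already-converted pixel tuples. The trimmed `CHANNEL_FORMATS_SUBSET` table and the `offset_channel` helper are illustrative and are not part of the diff.

```python
from typing import Dict, List, Tuple

Pixel = Tuple[int, ...]

# A subset of the diff's CHANNEL_FORMATS table: label -> (PIL mode, band index).
CHANNEL_FORMATS_SUBSET: Dict[str, Tuple[str, int]] = {
    "Red (RGBA)": ("RGBA", 0),
    "Alpha (RGBA)": ("RGBA", 3),
    "Value (HSV)": ("HSV", 2),
}


def offset_channel(pixels: List[Pixel], channel: str, offset: int) -> List[Pixel]:
    """Add `offset` to one band of pixels already in the channel's mode, clipping to 0..255."""
    _mode, band = CHANNEL_FORMATS_SUBSET[channel]
    result = []
    for px in pixels:
        values = list(px)
        # Python ints cannot overflow, which mirrors the astype(int) cast before numpy.clip.
        # Wrapping 8-bit arithmetic would instead turn 250 + 10 into (250 + 10) % 256 == 4.
        values[band] = min(255, max(0, values[band] + offset))
        result.append(tuple(values))
    return result


pixels = [(250, 10, 10, 255), (5, 10, 10, 128)]
print(offset_channel(pixels, "Red (RGBA)", 10))  # [(255, 10, 10, 255), (15, 10, 10, 128)]
print(offset_channel(pixels, "Red (RGBA)", -10))  # [(240, 10, 10, 255), (0, 10, 10, 128)]
```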

@@ -822,35 +888,60 @@ class ImageLuminosityAdjustmentInvocation(BaseInvocation):


 @invocation(
-    "img_saturation_adjust",
-    title="Adjust Image Saturation",
-    tags=["image", "saturation", "hsl"],
+    "img_channel_multiply",
+    title="Multiply Image Channel",
+    tags=[
+        "image",
+        "invert",
+        "scale",
+        "multiply",
+        "red",
+        "green",
+        "blue",
+        "alpha",
+        "cyan",
+        "magenta",
+        "yellow",
+        "black",
+        "hue",
+        "saturation",
+        "luminosity",
+        "value",
+    ],
     category="image",
+    version="1.0.0",
 )
-class ImageSaturationAdjustmentInvocation(BaseInvocation):
-    """Adjusts the Saturation of an image."""
+class ImageChannelMultiplyInvocation(BaseInvocation):
+    """Scale a specific color channel of an image."""

     image: ImageField = InputField(description="The image to adjust")
-    saturation: float = InputField(default=1.0, ge=0, le=1, description="The factor by which to adjust the saturation")
+    channel: COLOR_CHANNELS = InputField(description="Which channel to adjust")
+    scale: float = InputField(default=1.0, ge=0.0, description="The amount to scale the channel by.")
+    invert_channel: bool = InputField(default=False, description="Invert the channel after scaling")

     def invoke(self, context: InvocationContext) -> ImageOutput:
         pil_image = context.services.images.get_pil_image(self.image.image_name)

-        # Convert PIL image to OpenCV format (numpy array), note color channel
-        # ordering is changed from RGB to BGR
-        image = numpy.array(pil_image.convert("RGB"))[:, :, ::-1]
+        # extract the channel and mode from the input and reference tuple
+        mode = CHANNEL_FORMATS[self.channel][0]
+        channel_number = CHANNEL_FORMATS[self.channel][1]

-        # Convert image to HSV color space
-        hsv_image = cv2.cvtColor(image, cv2.COLOR_BGR2HSV)
+        # Convert PIL image to new format
+        converted_image = numpy.array(pil_image.convert(mode)).astype(float)
+        image_channel = converted_image[:, :, channel_number]

-        # Adjust the saturation
-        hsv_image[:, :, 1] = numpy.clip(hsv_image[:, :, 1] * self.saturation, 0, 255)
+        # Adjust the value, clipping to 0..255
+        image_channel = numpy.clip(image_channel * self.scale, 0, 255)

-        # Convert image back to BGR color space
-        image = cv2.cvtColor(hsv_image, cv2.COLOR_HSV2BGR)
+        # Invert the channel if requested
+        if self.invert_channel:
+            image_channel = 255 - image_channel

-        # Convert back to PIL format and to original color mode
-        pil_image = Image.fromarray(image[:, :, ::-1], "RGB").convert("RGBA")
+        # Put the channel back into the image
+        converted_image[:, :, channel_number] = image_channel
+
+        # Convert back to RGBA format and output
+        pil_image = Image.fromarray(converted_image.astype(numpy.uint8), mode=mode).convert("RGBA")

         image_dto = context.services.images.create(
             image=pil_image,
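`ImageChannelMultiplyInvocation` follows the same pattern with a different arithmetic step:
- it converts to `float`;
- it multiplies the band by `scale` and clips the result to 0..255;
- when `invert_channel` is set, it replaces the band with `255 - value`.

Inversion happens after clipping, so a band that saturates at 255 ends up at 0.

Below is a plain-Python sketch of that per-value step; the `scale_channel_value` name is hypothetical. The final `astype(numpy.uint8)` in the diff truncates non-negative fractional values toward zero, and the sketch mimics that with `int()`.

```python
def scale_channel_value(value: float, scale: float, invert: bool = False) -> int:
    """Scale one 0..255 band value, clip it, optionally invert it, then truncate."""
    scaled = min(255.0, max(0.0, value * scale))
    if invert:
        scaled = 255.0 - scaled
    # astype(numpy.uint8) truncates non-negative floats toward zero, as int() does here.
    return int(scaled)


print(scale_channel_value(200, 1.5))  # 255
print(scale_channel_value(200, 1.5, invert=True))  # 0
print(scale_channel_value(100, 0.5, invert=True))  # 205
```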

@@ -8,19 +8,17 @@ from PIL import Image, ImageOps

 from invokeai.app.invocations.primitives import ColorField, ImageField, ImageOutput
 from invokeai.app.util.misc import SEED_MAX, get_random_seed
+from invokeai.backend.image_util.cv2_inpaint import cv2_inpaint
 from invokeai.backend.image_util.lama import LaMA
 from invokeai.backend.image_util.patchmatch import PatchMatch

 from ..models.image import ImageCategory, ResourceOrigin
 from .baseinvocation import BaseInvocation, InputField, InvocationContext, invocation
+from .image import PIL_RESAMPLING_MAP, PIL_RESAMPLING_MODES


 def infill_methods() -> list[str]:
-    methods = [
-        "tile",
-        "solid",
-        "lama",
-    ]
+    methods = ["tile", "solid", "lama", "cv2"]
     if PatchMatch.patchmatch_available():
         methods.insert(0, "patchmatch")
     return methods
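After this hunk, `infill_methods()` always lists `"cv2"` alongside `"tile"`, `"solid"` and `"lama"`. It puts `"patchmatch"` at the front only when the PatchMatch extension is available. Below is a minimal sketch of that ordering. The availability check is passed in as a flag, an assumption that lets the example run without InvokeAI installed.

```python
from typing import List


def infill_methods_sketch(patchmatch_available: bool) -> List[str]:
    """Mirror the new infill_methods() ordering; the flag replaces PatchMatch.patchmatch_available()."""
    methods = ["tile", "solid", "lama", "cv2"]
    if patchmatch_available:
        # List PatchMatch first when it can be loaded.
        methods.insert(0, "patchmatch")
    return methods


print(infill_methods_sketch(True))  # ['patchmatch', 'tile', 'solid', 'lama', 'cv2']
print(infill_methods_sketch(False))  # ['tile', 'solid', 'lama', 'cv2']
```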

@@ -49,6 +47,10 @@ def infill_patchmatch(im: Image.Image) -> Image.Image:
     return im_patched


+def infill_cv2(im: Image.Image) -> Image.Image:
+    return cv2_inpaint(im)
+
+
 def get_tile_images(image: np.ndarray, width=8, height=8):
     _nrows, _ncols, depth = image.shape
     _strides = image.strides

@@ -116,7 +118,7 @@ def tile_fill_missing(im: Image.Image, tile_size: int = 16, seed: Optional[int]
     return si


-@invocation("infill_rgba", title="Solid Color Infill", tags=["image", "inpaint"], category="inpaint")
+@invocation("infill_rgba", title="Solid Color Infill", tags=["image", "inpaint"], category="inpaint", version="1.0.0")
 class InfillColorInvocation(BaseInvocation):
     """Infills transparent areas of an image with a solid color"""

@@ -151,7 +153,7 @@ class InfillColorInvocation(BaseInvocation):
         )


-@invocation("infill_tile", title="Tile Infill", tags=["image", "inpaint"], category="inpaint")
+@invocation("infill_tile", title="Tile Infill", tags=["image", "inpaint"], category="inpaint", version="1.0.0")
 class InfillTileInvocation(BaseInvocation):
     """Infills transparent areas of an image with tiles of the image"""

@@ -187,20 +189,42 @@ class InfillTileInvocation(BaseInvocation):
         )


-@invocation("infill_patchmatch", title="PatchMatch Infill", tags=["image", "inpaint"], category="inpaint")
+@invocation(
+    "infill_patchmatch", title="PatchMatch Infill", tags=["image", "inpaint"], category="inpaint", version="1.0.0"
+)
 class InfillPatchMatchInvocation(BaseInvocation):
     """Infills transparent areas of an image using the PatchMatch algorithm"""

     image: ImageField = InputField(description="The image to infill")
+    downscale: float = InputField(default=2.0, gt=0, description="Run patchmatch on downscaled image to speedup infill")
+    resample_mode: PIL_RESAMPLING_MODES = InputField(default="bicubic", description="The resampling mode")

     def invoke(self, context: InvocationContext) -> ImageOutput:
-        image = context.services.images.get_pil_image(self.image.image_name)
+        image = context.services.images.get_pil_image(self.image.image_name).convert("RGBA")
+
+        resample_mode = PIL_RESAMPLING_MAP[self.resample_mode]
+
+        infill_image = image.copy()
+        width = int(image.width / self.downscale)
+        height = int(image.height / self.downscale)
+        infill_image = infill_image.resize(
+            (width, height),
+            resample=resample_mode,
+        )

         if PatchMatch.patchmatch_available():
-            infilled = infill_patchmatch(image.copy())
+            infilled = infill_patchmatch(infill_image)
         else:
             raise ValueError("PatchMatch is not available on this system")

+        infilled = infilled.resize(
+            (image.width, image.height),
+            resample=resample_mode,
+        )
+
+        infilled.paste(image, (0, 0), mask=image.split()[-1])
+        # image.paste(infilled, (0, 0), mask=image.split()[-1])
+
         image_dto = context.services.images.create(
             image=infilled,
             image_origin=ResourceOrigin.INTERNAL,
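The reworked `InfillPatchMatchInvocation` proceeds in three steps:
1. It runs PatchMatch on a copy shrunk by `downscale`, to `int(image.width / self.downscale)` by `int(image.height / self.downscale)`.
2. It resizes the result back to the original size.
3. It pastes the original over that result, using the original's alpha band as the mask.

Because of the alpha mask in step 3, infilled content survives only where the original was transparent or semi-transparent.

The sketch below shows the size arithmetic and the one-channel blend that a `paste(..., mask=alpha)` performs. Both helper names are hypothetical, and the blend uses floating point rather than PIL's integer implementation.

```python
from typing import Tuple


def downscaled_size(width: int, height: int, downscale: float) -> Tuple[int, int]:
    """Size passed to resize() before PatchMatch runs; int() truncates."""
    if downscale <= 0:
        raise ValueError("downscale must be > 0")  # the InputField declares gt=0
    return int(width / downscale), int(height / downscale)


def paste_with_alpha(original: int, infilled: int, alpha: int) -> float:
    """One-channel blend: alpha 255 keeps the original, alpha 0 keeps the infill."""
    a = alpha / 255.0
    return original * a + infilled * (1.0 - a)


print(downscaled_size(1024, 768, 2.0))  # (512, 384)
print(downscaled_size(1000, 750, 3.0))  # (333, 250)
print(paste_with_alpha(200, 40, 255))  # 200.0
print(paste_with_alpha(200, 40, 0))  # 40.0
```

Running PatchMatch at reduced resolution trades some detail in the filled region for speed. The opaque pixels come back at full resolution because the original is pasted over the upscaled result.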

@@ -218,7 +242,7 @@ class InfillPatchMatchInvocation(BaseInvocation):
         )


-@invocation("infill_lama", title="LaMa Infill", tags=["image", "inpaint"], category="inpaint")
+@invocation("infill_lama", title="LaMa Infill", tags=["image", "inpaint"], category="inpaint", version="1.0.0")
 class LaMaInfillInvocation(BaseInvocation):
     """Infills transparent areas of an image using the LaMa model"""

@@ -243,3 +267,30 @@ class LaMaInfillInvocation(BaseInvocation):
             width=image_dto.width,
             height=image_dto.height,
         )
+
+
+@invocation("infill_cv2", title="CV2 Infill", tags=["image", "inpaint"], category="inpaint")
+class CV2InfillInvocation(BaseInvocation):
+    """Infills transparent areas of an image using OpenCV Inpainting"""
+
+    image: ImageField = InputField(description="The image to infill")
+
+    def invoke(self, context: InvocationContext) -> ImageOutput:
+        image = context.services.images.get_pil_image(self.image.image_name)
+
+        infilled = infill_cv2(image.copy())
+
+        image_dto = context.services.images.create(
+            image=infilled,
+            image_origin=ResourceOrigin.INTERNAL,
+            image_category=ImageCategory.GENERAL,
+            node_id=self.id,
+            session_id=context.graph_execution_state_id,
+            is_intermediate=self.is_intermediate,
+        )
+
+        return ImageOutput(
+            image=ImageField(image_name=image_dto.image_name),
+            width=image_dto.width,
+            height=image_dto.height,
+        )

@@ -74,7 +74,7 @@ class SchedulerOutput(BaseInvocationOutput):
     scheduler: SAMPLER_NAME_VALUES = OutputField(description=FieldDescriptions.scheduler, ui_type=UIType.Scheduler)


-@invocation("scheduler", title="Scheduler", tags=["scheduler"], category="latents")
+@invocation("scheduler", title="Scheduler", tags=["scheduler"], category="latents", version="1.0.0")
 class SchedulerInvocation(BaseInvocation):
     """Selects a scheduler."""

@@ -86,7 +86,9 @@ class SchedulerInvocation(BaseInvocation):
         return SchedulerOutput(scheduler=self.scheduler)


-@invocation("create_denoise_mask", title="Create Denoise Mask", tags=["mask", "denoise"], category="latents")
+@invocation(
+    "create_denoise_mask", title="Create Denoise Mask", tags=["mask", "denoise"], category="latents", version="1.0.0"
+)
 class CreateDenoiseMaskInvocation(BaseInvocation):
     """Creates mask for denoising model run."""

@@ -186,6 +188,7 @@ def get_scheduler(
     title="Denoise Latents",
     tags=["latents", "denoise", "txt2img", "t2i", "t2l", "img2img", "i2i", "l2l"],
     category="latents",
+    version="1.0.0",
 )
 class DenoiseLatentsInvocation(BaseInvocation):
     """Denoises noisy latents to decodable images"""
@@ -208,12 +211,14 @@ class DenoiseLatentsInvocation(BaseInvocation):
     )
     unet: UNetField = InputField(description=FieldDescriptions.unet, input=Input.Connection, title="UNet", ui_order=2)
     control: Union[ControlField, list[ControlField]] = InputField(
-        default=None, description=FieldDescriptions.control, input=Input.Connection, ui_order=5
+        default=None,
+        description=FieldDescriptions.control,
+        input=Input.Connection,
+        ui_order=5,
     )
     latents: Optional[LatentsField] = InputField(description=FieldDescriptions.latents, input=Input.Connection)
     denoise_mask: Optional[DenoiseMaskField] = InputField(
-        default=None,
-        description=FieldDescriptions.mask,
+        default=None, description=FieldDescriptions.mask, input=Input.Connection, ui_order=6
     )

     @validator("cfg_scale")
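The `control` input of `DenoiseLatentsInvocation` is declared as `Union[ControlField, list[ControlField]]` with `default=None`. The next hunk widens a helper method's `control_input` parameter the same way. A common way to handle such an input is to normalize it to a list before iterating. The sketch below does that with a hypothetical `ControlFieldStub` dataclass; the real `ControlField` has other and more attributes.

```python
from dataclasses import dataclass
from typing import List, Optional, Union


@dataclass
class ControlFieldStub:
    """Hypothetical stand-in for ControlField with two illustrative attributes."""

    control_model: str
    control_weight: float = 1.0


def as_control_list(
    control_input: Optional[Union[ControlFieldStub, List[ControlFieldStub]]],
) -> List[ControlFieldStub]:
    """Normalize None, a single field, or a list of fields to a list."""
    if control_input is None:
        return []
    if isinstance(control_input, list):
        return control_input
    return [control_input]


single = ControlFieldStub("canny")
print(len(as_control_list(None)))  # 0
print(len(as_control_list(single)))  # 1
print(len(as_control_list([single, ControlFieldStub("depth", 0.5)])))  # 2
```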

@@ -317,7 +322,7 @@ class DenoiseLatentsInvocation(BaseInvocation):
         context: InvocationContext,
         # really only need model for dtype and device
         model: StableDiffusionGeneratorPipeline,
-        control_input: List[ControlField],
+        control_input: Union[ControlField, List[ControlField]],
         latents_shape: List[int],