## What type of PR is this? (check all applicable)

- [ ] Refactor

- [x] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because: n/a

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No: n/a

## Description

Add a generation mode indicator to the canvas.

- Use the existing logic to determine whether generation is txt2img, img2img, inpaint or outpaint.

- Technically, `outpaint` and `inpaint` are the same, so just display "Inpaint" if it's either.

- Debounce this by 1s to prevent jank.
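The behaviour in the list above can be sketched in Python for illustration (the real code lives in the TypeScript frontend). The sketch assumes the existing logic reduces to three hypothetical inputs: whether the base layer is fully transparent, whether it is partially transparent, and whether the mask has any painted area. The function names and the `Debouncer` class are illustrative, not InvokeAI APIs; the debouncer takes explicit timestamps (e.g. from `time.monotonic()`) so the 1s delay is easy to follow.

```python
from typing import Literal, Optional

GenerationMode = Literal["txt2img", "img2img", "inpaint", "outpaint"]


def get_generation_mode(
    base_fully_transparent: bool,
    base_partially_transparent: bool,
    mask_has_content: bool,
) -> GenerationMode:
    # Hypothetical reduction of the existing canvas logic to three flags.
    if base_fully_transparent:
        return "txt2img"   # nothing on the canvas to start from
    if base_partially_transparent:
        return "outpaint"  # some empty pixels must be filled in
    if mask_has_content:
        return "inpaint"   # a mask was painted over existing pixels
    return "img2img"


def mode_label(mode: GenerationMode) -> str:
    # Outpaint and inpaint are technically the same, so both display as "Inpaint".
    return "Inpaint" if mode in ("inpaint", "outpaint") else mode


class Debouncer:
    """Trailing-edge debounce: publish the latest value once `wait` seconds pass with no new update."""

    def __init__(self, wait: float = 1.0) -> None:
        self.wait = wait
        self.current: Optional[str] = None
        self._pending: Optional[str] = None
        self._last_update: Optional[float] = None

    def update(self, value: str, now: float) -> None:
        # Every update restarts the wait, so rapid canvas edits don't make the indicator flicker.
        self._pending = value
        self._last_update = now

    def poll(self, now: float) -> Optional[str]:
        # Publish the pending value only after a full quiet period has elapsed.
        if self._last_update is not None and now - self._last_update >= self.wait:
            self.current = self._pending
        return self.current
```

In the UI, `update` would be called whenever the canvas changes and `poll` on each render tick, so the displayed label only changes after the canvas has been stable for a second.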

I was going to disable ControlNet conditionally when the mode is inpaint, but that involves a lot of fiddly changes to the ControlNet UI components. Instead, I'm hoping we can get inpaint moved over to latents by the next release, at which point ControlNet will work.

## Related Tickets & Documents

<!--
For pull requests that relate or close an issue, please include them below.

For example having the text: "closes #1234" would connect the current pull request to issue 1234. And when we merge the pull request, Github will automatically close the issue.
-->

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

<!--
Please provide steps on how to test changes, any hardware or software specifications as well as any other pertinent information.
-->

https://github.com/invoke-ai/InvokeAI/assets/4822129/87464ae9-4136-4367-b992-e243ff0d05b4

## Added/updated tests?

- [ ] Yes

- [x] No : n/a

## [optional] Are there any post deployment tasks we need to perform?

n/a

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No, n/a

## Description

When a queue item is popped for processing, we need to retrieve its session from the DB. Pydantic deserializes and validates the graph at this stage.

It's possible for a graph to have been made invalid during the graph preparation stage (e.g. an ancestor node executes, and its output is not valid for its successor node's input field).

When this occurs, the session in the DB will fail validation, but we don't find out until it is retrieved and parsed by pydantic.
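A stdlib-only sketch of why the failure only surfaces on retrieval, using a dataclass in place of a pydantic model. `NoiseNode`, its `width > 0` rule, and both helper functions are hypothetical stand-ins (loosely mirroring the `width` of `0` example in the QA section), not InvokeAI code.

```python
import json
from dataclasses import dataclass


@dataclass
class NoiseNode:
    width: int

    def __post_init__(self) -> None:
        # Hypothetical constraint standing in for the node's real field validation.
        if self.width <= 0:
            raise ValueError(f"width must be > 0, got {self.width}")


def store_prepared_node(upstream_output: int) -> str:
    # Preparation copies an ancestor's output into the successor's input and
    # persists it as JSON; nothing is validated at this point.
    return json.dumps({"width": upstream_output})


def retrieve_node(raw: str) -> NoiseNode:
    # Validation only runs here, when the stored data is parsed back.
    return NoiseNode(**json.loads(raw))
```

Storing `{"width": 0}` succeeds; only `retrieve_node` raises, which is the point at which the processor previously had no exception handling.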

This logic was previously not wrapped in any exception handling.

Just after retrieving a session, we retrieve the specific invocation to execute from the session. This could also fail with some sort of error, though it should be impossible for it to be a pydantic validation error (that would have been caught during session validation). There was also no exception handling here.

When either of these processes fails, the processor gets soft-locked because its cleanup logic never runs. (I didn't dig into exactly which cleanup is skipped, because the fix is simply to handle the exceptions.)

This PR adds exception handling to both session retrieval and node retrieval, plus an event for each: `session_retrieval_error` and `invocation_retrieval_error`.
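A minimal sketch of the pattern, assuming hypothetical `load_session` and `emit` callables in place of the real InvokeAI services. The class, field, and payload names are illustrative, not the actual processor code; the point is that both retrieval steps are wrapped, each reports its own event with the exception type, and cleanup runs on every path.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Event:
    name: str
    payload: Dict[str, Any]


@dataclass
class ProcessorSketch:
    # Hypothetical stand-ins: a loader that parses a stored session (and may
    # raise, e.g. on validation failure) and a sink the UI subscribes to.
    load_session: Callable[[str], Dict[str, Any]]
    emit: Callable[[Event], None]
    cleaned_up: List[str] = field(default_factory=list)

    def _report(self, name: str, session_id: str, error: Exception) -> None:
        # Include the exception type so the UI can show it in a toast.
        self.emit(Event(name, {
            "session_id": session_id,
            "error_type": type(error).__name__,
            "error": str(error),
        }))

    def process(self, session_id: str, node_id: str) -> Optional[Any]:
        try:
            try:
                session = self.load_session(session_id)
            except Exception as e:
                self._report("session_retrieval_error", session_id, e)
                return None
            try:
                invocation = session[node_id]
            except Exception as e:
                self._report("invocation_retrieval_error", session_id, e)
                return None
            return invocation
        finally:
            # Runs on success and on both error paths, so cleanup is never skipped.
            self.cleaned_up.append(session_id)
```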

These events are caught and displayed in the UI as toasts, along with the type of the Python exception (e.g. `ValidationError`). The events are also logged to the browser console.

## Related Tickets & Documents

<!--
For pull requests that relate or close an issue, please include them below.

For example having the text: "closes #1234" would connect the current pull request to issue 1234. And when we merge the pull request, Github will automatically close the issue.
-->

Closes #3860, closes #3412

## QA Instructions, Screenshots, Recordings

<!--
Please provide steps on how to test changes, any hardware or software specifications as well as any other pertinent information.
-->

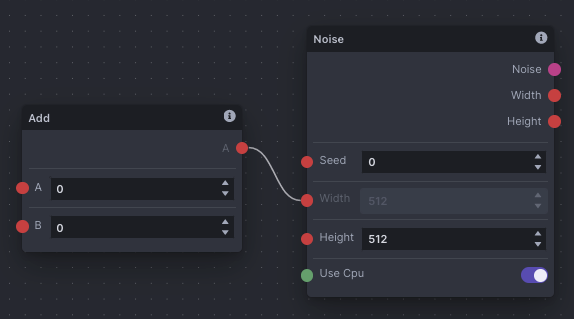

Create an valid graph that will become invalid during execution. Here's

an example:

This is valid before execution, but the `width` field of the `Noise`

node will end up with an invalid value (`0`). Previously, this would

soft-lock the app and you'd have to restart it.

Now, with this graph, you will get an error toast, and the app will not

get locked up.

## Added/updated tests?

- [x] Yes (ish)

- [ ] No

@Kyle0654 @brandonrising

It seems that, because the processor runs in its own thread, `pytest` cannot catch exceptions raised in the processor.

I added a test that does work, insofar as it recreates the issue. But because the exception occurs in a separate thread, the test doesn't see it. The result is that the test passes even without the fix.

So when running the test, we see the exception:

```py
Exception in thread invoker_processor:
Traceback (most recent call last):
  File "/usr/lib/python3.10/threading.py", line 1016, in _bootstrap_inner
    self.run()
  File "/usr/lib/python3.10/threading.py", line 953, in run
    self._target(*self._args, **self._kwargs)
  File "/home/bat/Documents/Code/InvokeAI/invokeai/app/services/processor.py", line 50, in __process
    self.__invoker.services.graph_execution_manager.get(
  File "/home/bat/Documents/Code/InvokeAI/invokeai/app/services/sqlite.py", line 79, in get
    return self._parse_item(result[0])
  File "/home/bat/Documents/Code/InvokeAI/invokeai/app/services/sqlite.py", line 52, in _parse_item
    return parse_raw_as(item_type, item)
  File "pydantic/tools.py", line 82, in pydantic.tools.parse_raw_as
  File "pydantic/tools.py", line 38, in pydantic.tools.parse_obj_as
  File "pydantic/main.py", line 341, in pydantic.main.BaseModel.__init__
```

But `pytest` doesn't actually see it as an exception. Not sure how to fix this; it's a bit beyond me.
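For context on the `pytest` problem: an uncaught exception in a `threading.Thread` is handed to `threading.excepthook` (Python 3.8+) instead of being re-raised in the thread that calls `join()`, so the test thread never sees it (pytest 6.2+ surfaces such exceptions as a `PytestUnhandledThreadExceptionWarning`). One possible approach, sketched below with a hypothetical helper name, is to temporarily install a hook that records the exception so the test can assert on it. The sketch runs a plain function in the thread; wiring it to the real processor thread is left as an assumption.

```python
import threading
from typing import Callable, List


def run_and_collect_thread_errors(target: Callable[[], None]) -> List[BaseException]:
    """Run `target` in a worker thread and return any exceptions it raised.

    Uncaught exceptions in a thread go to threading.excepthook rather than to
    the caller of join(), so we temporarily install a hook that records them.
    """
    errors: List[BaseException] = []
    original_hook = threading.excepthook

    def record(args) -> None:
        # args is a threading.ExceptHookArgs (exc_type, exc_value, exc_traceback, thread).
        if args.exc_value is not None:
            errors.append(args.exc_value)

    threading.excepthook = record
    try:
        worker = threading.Thread(target=target, name="invoker_processor")
        worker.start()
        worker.join()
    finally:
        # Always restore the global hook, even if starting the thread fails.
        threading.excepthook = original_hook
    return errors
```

A test would then `assert not run_and_collect_thread_errors(...)`. Because `threading.excepthook` is process-global, this is only safe while no other threads are raising concurrently.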

## [optional] Are there any post deployment tasks we need to perform?

Nope, don't think so.

## What type of PR is this? (check all applicable)

- [x] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Description

`search_for_models` is explicitly typed as taking a single `Path`, but was given a list because some later function in the stack expects a list. I fixed the call so that the types are compatible. This is the only use of that function.

The `list()` call is unrelated, but it removes a type warning, since the function is supposed to return a list, not a set. I can revert it if requested.
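To illustrate the type fix, here is a minimal sketch of a function with the annotated signature described above, assuming it walks one directory and collects model files into a set. The body and the `MODEL_SUFFIXES` set are hypothetical, not the actual InvokeAI implementation; the point is that callers pass a single `Path`, and the set is converted with `list()` so the return value matches `List[Path]`.

```python
import tempfile
from pathlib import Path
from typing import List, Set

# Hypothetical set of file extensions treated as model files.
MODEL_SUFFIXES = {".ckpt", ".safetensors", ".pt", ".bin"}


def search_for_models(directory: Path) -> List[Path]:
    """Return model files found anywhere under `directory` (a single Path, not a list)."""
    found: Set[Path] = set()
    for path in directory.rglob("*"):
        if path.is_file() and path.suffix in MODEL_SUFFIXES:
            found.add(path)
    # The set avoids duplicates while collecting; list() makes the result
    # match the declared return type instead of leaking a set to callers.
    return list(found)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "sub").mkdir()
        (root / "a.safetensors").touch()
        (root / "sub" / "b.ckpt").touch()
        (root / "notes.txt").touch()
        # Callers pass one Path, matching the annotation.
        print(sorted(p.name for p in search_for_models(root)))
```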

This was found through Pylance type errors. Go types!

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Description

This import was missing, but it is used later in the file.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [ ] No

## Description

## Related Tickets & Documents

<!--
For pull requests that relate or close an issue, please include them below.

For example having the text: "closes #1234" would connect the current pull request to issue 1234. And when we merge the pull request, Github will automatically close the issue.
-->

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

<!--
Please provide steps on how to test changes, any hardware or software specifications as well as any other pertinent information.
-->

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [x] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No: n/a

## Description

At some point I typo'd this and set the max seed to signed int32 max. It should be *un*signed int32 max.

This restores the seed range to what it was in v2.3.

I also fixed a bug in the Noise node that made the max valid seed one less than intended.
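The numbers involved: signed int32 max is 2^31 - 1 = 2147483647, while unsigned int32 max is 2^32 - 1 = 4294967295, which is also the largest seed NumPy's legacy `np.random.seed` / `RandomState` accepts. The constant and function names below are hypothetical, and the `randrange` call only illustrates one common way an exclusive upper bound leaves the maximum seed unreachable; it is not the actual Noise node code.

```python
import random

SIGNED_INT32_MAX = 2**31 - 1    # 2147483647: the value set by mistake
UNSIGNED_INT32_MAX = 2**32 - 1  # 4294967295: the intended maximum seed
SEED_MIN = 0
SEED_MAX = UNSIGNED_INT32_MAX


def is_valid_seed(seed: int) -> bool:
    # Both bounds are inclusive.
    return SEED_MIN <= seed <= SEED_MAX


def random_seed(rng: random.Random) -> int:
    # randrange excludes its stop value, so SEED_MAX + 1 is needed for
    # SEED_MAX itself to be reachable; passing SEED_MAX would cap it one short.
    return rng.randrange(SEED_MIN, SEED_MAX + 1)
```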

## Related Tickets & Documents

<!--
For pull requests that relate or close an issue, please include them below.

For example having the text: "closes #1234" would connect the current pull request to issue 1234. And when we merge the pull request, Github will automatically close the issue.
-->

- Related issue: #2843 is against v2.3 and substantially increases the range of valid seeds. Maybe we can explore this in the future, but as of v3.0 we use NumPy as an RNG in a few places, and it maxes out at the max `uint32`. I will close #2843, as this PR supersedes it.

- Closes #3866

## QA Instructions, Screenshots, Recordings

<!--
Please provide steps on how to test changes, any hardware or software specifications as well as any other pertinent information.
-->

You should be able to use seeds up to and including `4294967295`.

## Added/updated tests?

- [ ] Yes

- [x] No : don't think we have any relevant tests

## [optional] Are there any post deployment tasks we need to perform?

nope!


## What type of PR is this? (check all applicable)

- [x] Bug Fix

## Have you discussed this change with the InvokeAI team?

- [x] Yes, we feel very passionate about this.

## Description

Uploading an incorrect JSON file to the Node Editor would crash the app. This is part of a much larger problem that we will tackle while refining the Node Editor, but this fix should address 99% of the cases out there.

When saving an InvokeAI node graph, there are three primary keys:

1. `nodes`: all the node-related data.

2. `edges`: all the edge-related data.

3. `viewport`: all the viewport-related data.

So when we load the JSON back, we now check that all three of these keys exist in the retrieved JSON object. While `viewport` itself is not required to repopulate the graph, checking for it gives us an additional check that the graph was saved from InvokeAI.

As a result:

- If you upload an invalid JSON file, the app now warns you that the JSON is invalid.

- If you upload a JSON file from a graph editor other than InvokeAI, it simply warns you that you are uploading a non-InvokeAI graph.
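The load-time check above can be sketched in Python (the real implementation lives in the TypeScript frontend; the function name and warning messages here are hypothetical). It distinguishes unparseable JSON from well-formed JSON that lacks any of the three expected keys.

```python
import json
from typing import Any, Dict, Optional, Tuple

# The three top-level keys an InvokeAI node graph is saved with.
REQUIRED_KEYS = ("nodes", "edges", "viewport")


def load_graph_json(text: str) -> Tuple[Optional[Dict[str, Any]], Optional[str]]:
    """Return (graph, None) on success or (None, warning) on failure."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None, "Invalid JSON file"
    # A non-object, or an object missing any required key, was not saved by InvokeAI.
    if not isinstance(data, dict) or not all(key in data for key in REQUIRED_KEYS):
        return None, "Not an InvokeAI graph"
    return data, None
```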

So effectively, you should not be able to load any graph that is not generated by ReactFlow.

Here are the edge cases:

- What happens if a user keeps the above key structure but tampers with the data inside it? I tested this. It turns out that, because we validate and build the graph from the JSON data, if you tamper with any data needed to rebuild a node, that node is simply skipped and the rest of the graph loads with valid data.

- What happens if a user uploads a graph made by some other ReactFlow app? Same as above: anything we are not set up to parse is skipped, and only what we are set up to display is shown.
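The skip-on-invalid behaviour in both edge cases can be sketched the same way: a node is kept only if it has the fields needed to rebuild it and a type the editor knows. `REQUIRED_NODE_FIELDS`, `KNOWN_NODE_TYPES`, and `rebuild_nodes` are hypothetical names for illustration, not the actual InvokeAI validation.

```python
from typing import Any, Dict, List

# Hypothetical: fields a node needs in order to be rebuilt, and node types the editor knows.
REQUIRED_NODE_FIELDS = ("id", "type", "data")
KNOWN_NODE_TYPES = {"noise", "t2l", "l2i"}


def rebuild_nodes(raw_nodes: List[Any]) -> List[Dict[str, Any]]:
    """Keep only nodes that can be rebuilt; silently skip everything else."""
    rebuilt: List[Dict[str, Any]] = []
    for node in raw_nodes:
        if not isinstance(node, dict):
            continue  # not a node object at all
        if any(name not in node for name in REQUIRED_NODE_FIELDS):
            continue  # tampered node: missing data needed to rebuild it
        if node["type"] not in KNOWN_NODE_TYPES:
            continue  # node from another ReactFlow app that we don't parse
        rebuilt.append(node)
    return rebuilt
```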

I think that covers about 99% of the cases where this could go wrong. If there are any other edge cases, we can add checks if need be, but I can't think of any at the moment.

## Related Tickets & Documents

### Closes

- #3893

- #3881

## [optional] Are there any post deployment tasks we need to perform?

Yes. Making @psychedelicious a little bit happier. :P


## What type of PR is this? (check all applicable)

- [x] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because: n/a

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No: n/a

## Description

Big cleanup:

- improve & simplify the app logging

- resolve all TS issues

- resolve all circular dependencies

- fix all lint/format issues

## QA Instructions, Screenshots, Recordings

`yarn lint` passes:

<!--
Please provide steps on how to test changes, any hardware or software specifications as well as any other pertinent information.
-->

## Added/updated tests?

- [ ] Yes

- [x] No : n/a

## [optional] Are there any post deployment tasks we need to perform?

Bask in the glory of what *should* be a fully passing frontend lint on this PR.

Added the Ideal Size node

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [X] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because: It's a community node addition

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

Added a reference to my community node, which calculates the ideal size for initial latent generation to avoid duplication. This is the logic that was present in the first pass of 2.3.5's high-res optimization.
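As a rough illustration only: one way to compute such a size, assuming the goal is to keep the requested aspect ratio while shrinking the area to the model's native 512x512 pixels and rounding down to a multiple of 8. The function name, the 512 default, and the rounding rule are assumptions; this is not the node's actual code or the exact 2.3.5 logic.

```python
import math
from typing import Tuple


def ideal_initial_size(width: int, height: int, native: int = 512, multiple: int = 8) -> Tuple[int, int]:
    """Scale (width, height) down to roughly native*native pixels, keeping the aspect ratio."""
    target_area = native * native
    area = width * height
    if area <= target_area:
        # Already small enough: just snap each side down to the multiple.
        return width - width % multiple, height - height % multiple
    # Scaling both sides by sqrt(target/area) scales the area by target/area.
    scale = math.sqrt(target_area / area)
    w = int(width * scale) // multiple * multiple
    h = int(height * scale) // multiple * multiple
    # Guard against a side collapsing to zero for extreme aspect ratios.
    return max(w, multiple), max(h, multiple)
```

For a 1536x1024 request this yields a 624x416 first pass, which keeps the 3:2 ratio and stays under 512x512 pixels in area.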

## Related Tickets & Documents

<!--
For pull requests that relate or close an issue, please include them below.

For example having the text: "closes #1234" would connect the current pull request to issue 1234. And when we merge the pull request, Github will automatically close the issue.
-->

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

<!--
Please provide steps on how to test changes, any hardware or software specifications as well as any other pertinent information.
-->

## Added/updated tests?

- [ ] Yes

- [X] No : This is a documentation change that references my community node.

## [optional] Are there any post deployment tasks we need to perform?

Add Face Mask to communityNodes.md

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [x] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

Add Face Mask to the communityNodes.md list.