mirror of

https://github.com/invoke-ai/InvokeAI

synced 2024-08-30 20:32:17 +00:00

Merge remote-tracking branch 'upstream/development' into development

This commit is contained in:

@ -47,7 +47,7 @@ RUN git clone https://github.com/TencentARC/GFPGAN.git

|

||||

WORKDIR /GFPGAN

|

||||

RUN pip3 install -r requirements.txt \

|

||||

&& python3 setup.py develop \

|

||||

&& ln -s "/data/GFPGANv1.3.pth" experiments/pretrained_models/GFPGANv1.3.pth

|

||||

&& ln -s "/data/GFPGANv1.4.pth" experiments/pretrained_models/GFPGANv1.4.pth

|

||||

|

||||

WORKDIR /stable-diffusion

|

||||

RUN python3 scripts/preload_models.py

|

||||

|

||||

@ -8,20 +8,23 @@ hide:

|

||||

|

||||

## **Interactive Command Line Interface**

|

||||

|

||||

The `dream.py` script, located in `scripts/dream.py`, provides an interactive interface to image

|

||||

generation similar to the "dream mothership" bot that Stable AI provided on its Discord server.

|

||||

The `dream.py` script, located in `scripts/dream.py`, provides an interactive

|

||||

interface to image generation similar to the "dream mothership" bot that Stable

|

||||

AI provided on its Discord server.

|

||||

|

||||

Unlike the `txt2img.py` and `img2img.py` scripts provided in the original

|

||||

[CompVis/stable-diffusion](https://github.com/CompVis/stable-diffusion) source code repository, the

|

||||

time-consuming initialization of the AI model initialization only happens once. After that image

|

||||

generation from the command-line interface is very fast.

|

||||

[CompVis/stable-diffusion](https://github.com/CompVis/stable-diffusion) source

|

||||

code repository, the time-consuming initialization of the AI model

|

||||

initialization only happens once. After that image generation from the

|

||||

command-line interface is very fast.

|

||||

|

||||

The script uses the readline library to allow for in-line editing, command history (++up++ and

|

||||

++down++), autocompletion, and more. To help keep track of which prompts generated which images, the

|

||||

script writes a log file of image names and prompts to the selected output directory.

|

||||

The script uses the readline library to allow for in-line editing, command

|

||||

history (++up++ and ++down++), autocompletion, and more. To help keep track of

|

||||

which prompts generated which images, the script writes a log file of image

|

||||

names and prompts to the selected output directory.

|

||||

|

||||

In addition, as of version 1.02, it also writes the prompt into the PNG file's metadata where it can

|

||||

be retrieved using `scripts/images2prompt.py`

|

||||

In addition, as of version 1.02, it also writes the prompt into the PNG file's

|

||||

metadata where it can be retrieved using `scripts/images2prompt.py`

|

||||

|

||||

The script is confirmed to work on Linux, Windows and Mac systems.

|

||||

|

||||

@ -56,21 +59,24 @@ dream> q

|

||||

|

||||

|

||||

|

||||

The `dream>` prompt's arguments are pretty much identical to those used in the Discord bot, except

|

||||

you don't need to type "!dream" (it doesn't hurt if you do). A significant change is that creation

|

||||

of individual images is now the default unless `--grid` (`-g`) is given. A full list is given in

|

||||

The `dream>` prompt's arguments are pretty much identical to those used in the

|

||||

Discord bot, except you don't need to type "!dream" (it doesn't hurt if you do).

|

||||

A significant change is that creation of individual images is now the default

|

||||

unless `--grid` (`-g`) is given. A full list is given in

|

||||

[List of prompt arguments](#list-of-prompt-arguments).

|

||||

|

||||

## Arguments

|

||||

|

||||

The script itself also recognizes a series of command-line switches that will change important

|

||||

global defaults, such as the directory for image outputs and the location of the model weight files.

|

||||

The script itself also recognizes a series of command-line switches that will

|

||||

change important global defaults, such as the directory for image outputs and

|

||||

the location of the model weight files.

|

||||

|

||||

### List of arguments recognized at the command line

|

||||

|

||||

These command-line arguments can be passed to `dream.py` when you first run it from the Windows, Mac

|

||||

or Linux command line. Some set defaults that can be overridden on a per-prompt basis (see [List of

|

||||

prompt arguments] (#list-of-prompt-arguments). Others

|

||||

These command-line arguments can be passed to `dream.py` when you first run it

|

||||

from the Windows, Mac or Linux command line. Some set defaults that can be

|

||||

overridden on a per-prompt basis (see [List of prompt arguments]

|

||||

(#list-of-prompt-arguments). Others

|

||||

|

||||

| Argument <img width="240" align="right"/> | Shortcut <img width="100" align="right"/> | Default <img width="320" align="right"/> | Description |

|

||||

| ----------------------------------------- | ----------------------------------------- | ---------------------------------------------- | ---------------------------------------------------------------------------------------------------- |

|

||||

@ -90,7 +96,7 @@ prompt arguments] (#list-of-prompt-arguments). Others

|

||||

| `--seamless` | | `False` | Create interesting effects by tiling elements of the image. |

|

||||

| `--embedding_path <path>` | | `None` | Path to pre-trained embedding manager checkpoints, for custom models |

|

||||

| `--gfpgan_dir` | | `src/gfpgan` | Path to where GFPGAN is installed. |

|

||||

| `--gfpgan_model_path` | | `experiments/pretrained_models/GFPGANv1.3.pth` | Path to GFPGAN model file, relative to `--gfpgan_dir`. |

|

||||

| `--gfpgan_model_path` | | `experiments/pretrained_models/GFPGANv1.4.pth` | Path to GFPGAN model file, relative to `--gfpgan_dir`. |

|

||||

| `--device <device>` | `-d<device>` | `torch.cuda.current_device()` | Device to run SD on, e.g. "cuda:0" |

|

||||

|

||||

#### deprecated

|

||||

@ -115,9 +121,10 @@ These arguments are deprecated but still work:

|

||||

|

||||

### List of prompt arguments

|

||||

|

||||

After the `dream.py` script initializes, it will present you with a **`dream>`** prompt. Here you

|

||||

can enter information to generate images from text (txt2img), to embellish an existing image or

|

||||

sketch (img2img), or to selectively alter chosen regions of the image (inpainting).

|

||||

After the `dream.py` script initializes, it will present you with a **`dream>`**

|

||||

prompt. Here you can enter information to generate images from text (txt2img),

|

||||

to embellish an existing image or sketch (img2img), or to selectively alter

|

||||

chosen regions of the image (inpainting).

|

||||

|

||||

#### txt2img

|

||||

|

||||

@ -171,12 +178,13 @@ Those are the `dream` commands that apply to txt2img:

|

||||

than 640x480. Otherwise the image size will be identical to the provided photo and you may run out

|

||||

of memory if it is large.

|

||||

|

||||

Repeated chaining of img2img on an image can result in significant color shifts in the output,

|

||||

especially if run with lower strength. Color correction can be run against a reference image to fix

|

||||

this issue. Use the original input image to the chain as the the reference image for each step in

|

||||

the chain.

|

||||

Repeated chaining of img2img on an image can result in significant color shifts

|

||||

in the output, especially if run with lower strength. Color correction can be

|

||||

run against a reference image to fix this issue. Use the original input image to

|

||||

the chain as the the reference image for each step in the chain.

|

||||

|

||||

In addition to the command-line options recognized by txt2img, img2img accepts additional options:

|

||||

In addition to the command-line options recognized by txt2img, img2img accepts

|

||||

additional options:

|

||||

|

||||

| Argument <img width="160" align="right"/> | Shortcut | Default | Description |

|

||||

| ----------------------------------------- | ----------- | ------- | ------------------------------------------------------------------------------------------------------------------------------------------ |

|

||||

@ -198,8 +206,8 @@ In addition to the command-line options recognized by txt2img, img2img accepts a

|

||||

the areas to overpaint made transparent, but you must be careful not to destroy the pixels

|

||||

underneath when you create the transparent areas. See [Inpainting](./INPAINTING.md) for details.

|

||||

|

||||

Inpainting accepts all the arguments used for txt2img and img2img, as well as the `--mask` (`-M`)

|

||||

argument:

|

||||

Inpainting accepts all the arguments used for txt2img and img2img, as well as

|

||||

the `--mask` (`-M`) argument:

|

||||

|

||||

| Argument <img width="100" align="right"/> | Shortcut | Default | Description |

|

||||

| ----------------------------------------- | ---------- | ------- | ------------------------------------------------------------------------------------------------ |

|

||||

@ -207,37 +215,42 @@ argument:

|

||||

|

||||

## Command-line editing and completion

|

||||

|

||||

If you are on a Macintosh or Linux machine, the command-line offers convenient history tracking,

|

||||

editing, and command completion.

|

||||

If you are on a Macintosh or Linux machine, the command-line offers convenient

|

||||

history tracking, editing, and command completion.

|

||||

|

||||

- To scroll through previous commands and potentially edit/reuse them, use the ++up++ and ++down++

|

||||

cursor keys.

|

||||

- To edit the current command, use the ++left++ and ++right++ cursor keys to position the cursor,

|

||||

and then ++backspace++, ++delete++ or ++insert++ characters.

|

||||

- To move to the very beginning of the command, type ++ctrl+a++ (or ++command+a++ on the Mac)

|

||||

- To scroll through previous commands and potentially edit/reuse them, use the

|

||||

++up++ and ++down++ cursor keys.

|

||||

- To edit the current command, use the ++left++ and ++right++ cursor keys to

|

||||

position the cursor, and then ++backspace++, ++delete++ or ++insert++

|

||||

characters.

|

||||

- To move to the very beginning of the command, type ++ctrl+a++ (or

|

||||

++command+a++ on the Mac)

|

||||

- To move to the end of the command, type ++ctrl+e++.

|

||||

- To cut a section of the command, position the cursor where you want to start cutting and type

|

||||

++ctrl+k++.

|

||||

- To paste a cut section back in, position the cursor where you want to paste, and type ++ctrl+y++

|

||||

- To cut a section of the command, position the cursor where you want to start

|

||||

cutting and type ++ctrl+k++.

|

||||

- To paste a cut section back in, position the cursor where you want to paste,

|

||||

and type ++ctrl+y++

|

||||

|

||||

Windows users can get similar, but more limited, functionality if they launch `dream.py` with the

|

||||

"winpty" program:

|

||||

Windows users can get similar, but more limited, functionality if they launch

|

||||

`dream.py` with the "winpty" program:

|

||||

|

||||

```batch

|

||||

winpty python scripts\dream.py

|

||||

```

|

||||

|

||||

On the Mac and Linux platforms, when you exit `dream.py`, the last 1000 lines of your command-line

|

||||

history will be saved. When you restart `dream.py`, you can access the saved history using the

|

||||

++up++ key.

|

||||

On the Mac and Linux platforms, when you exit `dream.py`, the last 1000 lines of

|

||||

your command-line history will be saved. When you restart `dream.py`, you can

|

||||

access the saved history using the ++up++ key.

|

||||

|

||||

In addition, limited command-line completion is installed. In various contexts, you can start typing

|

||||

your command and press tab. A list of potential completions will be presented to you. You can then

|

||||

type a little more, hit tab again, and eventually autocomplete what you want.

|

||||

In addition, limited command-line completion is installed. In various contexts,

|

||||

you can start typing your command and press tab. A list of potential completions

|

||||

will be presented to you. You can then type a little more, hit tab again, and

|

||||

eventually autocomplete what you want.

|

||||

|

||||

When specifying file paths using the one-letter shortcuts, the CLI will attempt to complete

|

||||

pathnames for you. This is most handy for the `-I` (init image) and `-M` (init mask) paths. To

|

||||

initiate completion, start the path with a slash `/` or `./`, for example:

|

||||

When specifying file paths using the one-letter shortcuts, the CLI will attempt

|

||||

to complete pathnames for you. This is most handy for the `-I` (init image) and

|

||||

`-M` (init mask) paths. To initiate completion, start the path with a slash `/`

|

||||

or `./`, for example:

|

||||

|

||||

```bash

|

||||

dream> "zebra with a mustache" -I./test-pictures<TAB>

|

||||

|

||||

@ -4,37 +4,42 @@ title: Upscale

|

||||

|

||||

## Intro

|

||||

|

||||

The script provides the ability to restore faces and upscale. You can apply these operations

|

||||

at the time you generate the images, or at any time to a previously-generated PNG file, using

|

||||

the [!fix](#fixing-previously-generated-images) command.

|

||||

The script provides the ability to restore faces and upscale. You can apply

|

||||

these operations at the time you generate the images, or at any time to a

|

||||

previously-generated PNG file, using the

|

||||

[!fix](#fixing-previously-generated-images) command.

|

||||

|

||||

## Face Fixing

|

||||

|

||||

The default face restoration module is GFPGAN. The default upscale is Real-ESRGAN. For an alternative

|

||||

face restoration module, see [CodeFormer Support] below.

|

||||

The default face restoration module is GFPGAN. The default upscale is

|

||||

Real-ESRGAN. For an alternative face restoration module, see [CodeFormer

|

||||

Support] below.

|

||||

|

||||

As of version 1.14, environment.yaml will install the Real-ESRGAN package into the standard install

|

||||

location for python packages, and will put GFPGAN into a subdirectory of "src" in the

|

||||

stable-diffusion directory. (The reason for this is that the standard GFPGAN distribution has a

|

||||

minor bug that adversely affects image color.) Upscaling with Real-ESRGAN should "just work" without

|

||||

further intervention. Simply pass the --upscale (-U) option on the dream> command line, or indicate

|

||||

the desired scale on the popup in the Web GUI.

|

||||

As of version 1.14, environment.yaml will install the Real-ESRGAN package into

|

||||

the standard install location for python packages, and will put GFPGAN into a

|

||||

subdirectory of "src" in the stable-diffusion directory. (The reason for this is

|

||||

that the standard GFPGAN distribution has a minor bug that adversely affects

|

||||

image color.) Upscaling with Real-ESRGAN should "just work" without further

|

||||

intervention. Simply pass the --upscale (-U) option on the dream> command line,

|

||||

or indicate the desired scale on the popup in the Web GUI.

|

||||

|

||||

For **GFPGAN** to work, there is one additional step needed. You will need to download and copy the

|

||||

GFPGAN [models file](https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.3.pth)

|

||||

into **src/gfpgan/experiments/pretrained_models**. On Mac and Linux systems, here's how you'd do it

|

||||

using **wget**:

|

||||

For **GFPGAN** to work, there is one additional step needed. You will need to

|

||||

download and copy the GFPGAN

|

||||

[models file](https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.4.pth)

|

||||

into **src/gfpgan/experiments/pretrained_models**. On Mac and Linux systems,

|

||||

here's how you'd do it using **wget**:

|

||||

|

||||

```bash

|

||||

> wget https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.3.pth src/gfpgan/experiments/pretrained_models/

|

||||

> wget https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.4.pth src/gfpgan/experiments/pretrained_models/

|

||||

```

|

||||

|

||||

Make sure that you're in the stable-diffusion directory when you do this.

|

||||

|

||||

Alternatively, if you have GFPGAN installed elsewhere, or if you are using an earlier version of

|

||||

this package which asked you to install GFPGAN in a sibling directory, you may use the

|

||||

`--gfpgan_dir` argument with `dream.py` to set a custom path to your GFPGAN directory. _There are

|

||||

other GFPGAN related boot arguments if you wish to customize further._

|

||||

Alternatively, if you have GFPGAN installed elsewhere, or if you are using an

|

||||

earlier version of this package which asked you to install GFPGAN in a sibling

|

||||

directory, you may use the `--gfpgan_dir` argument with `dream.py` to set a

|

||||

custom path to your GFPGAN directory. _There are other GFPGAN related boot

|

||||

arguments if you wish to customize further._

|

||||

|

||||

!!! warning "Internet connection needed"

|

||||

|

||||

@ -52,13 +57,14 @@ You will now have access to two new prompt arguments.

|

||||

|

||||

`-U : <upscaling_factor> <upscaling_strength>`

|

||||

|

||||

The upscaling prompt argument takes two values. The first value is a scaling factor and should be

|

||||

set to either `2` or `4` only. This will either scale the image 2x or 4x respectively using

|

||||

different models.

|

||||

The upscaling prompt argument takes two values. The first value is a scaling

|

||||

factor and should be set to either `2` or `4` only. This will either scale the

|

||||

image 2x or 4x respectively using different models.

|

||||

|

||||

You can set the scaling stength between `0` and `1.0` to control intensity of the of the scaling.

|

||||

This is handy because AI upscalers generally tend to smooth out texture details. If you wish to

|

||||

retain some of those for natural looking results, we recommend using values between `0.5 to 0.8`.

|

||||

You can set the scaling stength between `0` and `1.0` to control intensity of

|

||||

the of the scaling. This is handy because AI upscalers generally tend to smooth

|

||||

out texture details. If you wish to retain some of those for natural looking

|

||||

results, we recommend using values between `0.5 to 0.8`.

|

||||

|

||||

If you do not explicitly specify an upscaling_strength, it will default to 0.75.

|

||||

|

||||

@ -66,18 +72,19 @@ If you do not explicitly specify an upscaling_strength, it will default to 0.75.

|

||||

|

||||

`-G : <gfpgan_strength>`

|

||||

|

||||

This prompt argument controls the strength of the face restoration that is being applied. Similar to

|

||||

upscaling, values between `0.5 to 0.8` are recommended.

|

||||

This prompt argument controls the strength of the face restoration that is being

|

||||

applied. Similar to upscaling, values between `0.5 to 0.8` are recommended.

|

||||

|

||||

You can use either one or both without any conflicts. In cases where you use both, the image will be

|

||||

first upscaled and then the face restoration process will be executed to ensure you get the highest

|

||||

quality facial features.

|

||||

You can use either one or both without any conflicts. In cases where you use

|

||||

both, the image will be first upscaled and then the face restoration process

|

||||

will be executed to ensure you get the highest quality facial features.

|

||||

|

||||

`--save_orig`

|

||||

|

||||

When you use either `-U` or `-G`, the final result you get is upscaled or face modified. If you want

|

||||

to save the original Stable Diffusion generation, you can use the `-save_orig` prompt argument to

|

||||

save the original unaffected version too.

|

||||

When you use either `-U` or `-G`, the final result you get is upscaled or face

|

||||

modified. If you want to save the original Stable Diffusion generation, you can

|

||||

use the `-save_orig` prompt argument to save the original unaffected version

|

||||

too.

|

||||

|

||||

### Example Usage

|

||||

|

||||

@ -102,60 +109,69 @@ dream> a man wearing a pineapple hat -I path/to/your/file.png -U 2 0.5 -G 0.6

|

||||

process is complete. While the image generation is taking place, you will still be able to preview

|

||||

the base images.

|

||||

|

||||

If you wish to stop during the image generation but want to upscale or face restore a particular

|

||||

generated image, pass it again with the same prompt and generated seed along with the `-U` and `-G`

|

||||

prompt arguments to perform those actions.

|

||||

If you wish to stop during the image generation but want to upscale or face

|

||||

restore a particular generated image, pass it again with the same prompt and

|

||||

generated seed along with the `-U` and `-G` prompt arguments to perform those

|

||||

actions.

|

||||

|

||||

## CodeFormer Support

|

||||

|

||||

This repo also allows you to perform face restoration using

|

||||

[CodeFormer](https://github.com/sczhou/CodeFormer).

|

||||

|

||||

In order to setup CodeFormer to work, you need to download the models like with GFPGAN. You can do

|

||||

this either by running `preload_models.py` or by manually downloading the

|

||||

[model file](https://github.com/sczhou/CodeFormer/releases/download/v0.1.0/codeformer.pth) and

|

||||

saving it to `ldm/restoration/codeformer/weights` folder.

|

||||

In order to setup CodeFormer to work, you need to download the models like with

|

||||

GFPGAN. You can do this either by running `preload_models.py` or by manually

|

||||

downloading the

|

||||

[model file](https://github.com/sczhou/CodeFormer/releases/download/v0.1.0/codeformer.pth)

|

||||

and saving it to `ldm/restoration/codeformer/weights` folder.

|

||||

|

||||

You can use `-ft` prompt argument to swap between CodeFormer and the default GFPGAN. The above

|

||||

mentioned `-G` prompt argument will allow you to control the strength of the restoration effect.

|

||||

You can use `-ft` prompt argument to swap between CodeFormer and the default

|

||||

GFPGAN. The above mentioned `-G` prompt argument will allow you to control the

|

||||

strength of the restoration effect.

|

||||

|

||||

### Usage:

|

||||

|

||||

The following command will perform face restoration with CodeFormer instead of the default gfpgan.

|

||||

The following command will perform face restoration with CodeFormer instead of

|

||||

the default gfpgan.

|

||||

|

||||

`<prompt> -G 0.8 -ft codeformer`

|

||||

|

||||

### Other Options:

|

||||

|

||||

- `-cf` - cf or CodeFormer Fidelity takes values between `0` and `1`. 0 produces high quality

|

||||

results but low accuracy and 1 produces lower quality results but higher accuacy to your original

|

||||

face.

|

||||

- `-cf` - cf or CodeFormer Fidelity takes values between `0` and `1`. 0 produces

|

||||

high quality results but low accuracy and 1 produces lower quality results but

|

||||

higher accuacy to your original face.

|

||||

|

||||

The following command will perform face restoration with CodeFormer. CodeFormer will output a result

|

||||

that is closely matching to the input face.

|

||||

The following command will perform face restoration with CodeFormer. CodeFormer

|

||||

will output a result that is closely matching to the input face.

|

||||

|

||||

`<prompt> -G 1.0 -ft codeformer -cf 0.9`

|

||||

|

||||

The following command will perform face restoration with CodeFormer. CodeFormer will output a result

|

||||

that is the best restoration possible. This may deviate slightly from the original face. This is an

|

||||

excellent option to use in situations when there is very little facial data to work with.

|

||||

The following command will perform face restoration with CodeFormer. CodeFormer

|

||||

will output a result that is the best restoration possible. This may deviate

|

||||

slightly from the original face. This is an excellent option to use in

|

||||

situations when there is very little facial data to work with.

|

||||

|

||||

`<prompt> -G 1.0 -ft codeformer -cf 0.1`

|

||||

|

||||

## Fixing Previously-Generated Images

|

||||

|

||||

It is easy to apply face restoration and/or upscaling to any previously-generated file. Just use the

|

||||

syntax `!fix path/to/file.png <options>`. For example, to apply GFPGAN at strength 0.8 and upscale 2X

|

||||

for a file named `./outputs/img-samples/000044.2945021133.png`, just run:

|

||||

It is easy to apply face restoration and/or upscaling to any

|

||||

previously-generated file. Just use the syntax

|

||||

`!fix path/to/file.png <options>`. For example, to apply GFPGAN at strength 0.8

|

||||

and upscale 2X for a file named `./outputs/img-samples/000044.2945021133.png`,

|

||||

just run:

|

||||

|

||||

~~~~

|

||||

```

|

||||

dream> !fix ./outputs/img-samples/000044.2945021133.png -G 0.8 -U 2

|

||||

~~~~

|

||||

```

|

||||

|

||||

A new file named `000044.2945021133.fixed.png` will be created in the output directory. Note that

|

||||

the `!fix` command does not replace the original file, unlike the behavior at generate time.

|

||||

A new file named `000044.2945021133.fixed.png` will be created in the output

|

||||

directory. Note that the `!fix` command does not replace the original file,

|

||||

unlike the behavior at generate time.

|

||||

|

||||

### Disabling:

|

||||

|

||||

If, for some reason, you do not wish to load the GFPGAN and/or ESRGAN libraries, you can disable them

|

||||

on the dream.py command line with the `--no_restore` and `--no_upscale` options, respectively.

|

||||

If, for some reason, you do not wish to load the GFPGAN and/or ESRGAN libraries,

|

||||

you can disable them on the dream.py command line with the `--no_restore` and

|

||||

`--no_upscale` options, respectively.

|

||||

|

||||

141

docs/help/SAMPLER_CONVERGENCE.md

Normal file

141

docs/help/SAMPLER_CONVERGENCE.md

Normal file

@ -0,0 +1,141 @@

|

||||

---

|

||||

title: SAMPLER CONVERGENCE

|

||||

---

|

||||

|

||||

## *Sampler Convergence*

|

||||

|

||||

As features keep increasing, making the right choices for your needs can become increasingly difficult. What sampler to use? And for how many steps? Do you change the CFG value? Do you use prompt weighting? Do you allow variations?

|

||||

|

||||

Even once you have a result, do you blend it with other images? Pass it through `img2img`? With what strength? Do you use inpainting to correct small details? Outpainting to extend cropped sections?

|

||||

|

||||

The purpose of this series of documents is to help you better understand these tools, so you can make the best out of them. Feel free to contribute with your own findings!

|

||||

|

||||

In this document, we will talk about sampler convergence.

|

||||

|

||||

Looking for a short version? Here's a TL;DR in 3 tables.

|

||||

|

||||

| Remember |

|

||||

|:---|

|

||||

| Results converge as steps (`-s`) are increased (except for `K_DPM_2_A` and `K_EULER_A`). Often at ≥ `-s100`, but may require ≥ `-s700`). |

|

||||

| Producing a batch of candidate images at low (`-s8` to `-s30`) step counts can save you hours of computation. |

|

||||

| `K_HEUN` and `K_DPM_2` converge in less steps (but are slower). |

|

||||

| `K_DPM_2_A` and `K_EULER_A` incorporate a lot of creativity/variability. |

|

||||

|

||||

| Sampler | (3 sample avg) it/s (M1 Max 64GB, 512x512) |

|

||||

|---|---|

|

||||

| `DDIM` | 1.89 |

|

||||

| `PLMS` | 1.86 |

|

||||

| `K_EULER` | 1.86 |

|

||||

| `K_LMS` | 1.91 |

|

||||

| `K_HEUN` | 0.95 (slower) |

|

||||

| `K_DPM_2` | 0.95 (slower) |

|

||||

| `K_DPM_2_A` | 0.95 (slower) |

|

||||

| `K_EULER_A` | 1.86 |

|

||||

|

||||

| Suggestions |

|

||||

|:---|

|

||||

| For most use cases, `K_LMS`, `K_HEUN` and `K_DPM_2` are the best choices (the latter 2 run 0.5x as quick, but tend to converge 2x as quick as `K_LMS`). At very low steps (≤ `-s8`), `K_HEUN` and `K_DPM_2` are not recommended. Use `K_LMS` instead.|

|

||||

| For variability, use `K_EULER_A` (runs 2x as quick as `K_DPM_2_A`). |

|

||||

|

||||

---

|

||||

|

||||

### *Sampler results*

|

||||

|

||||

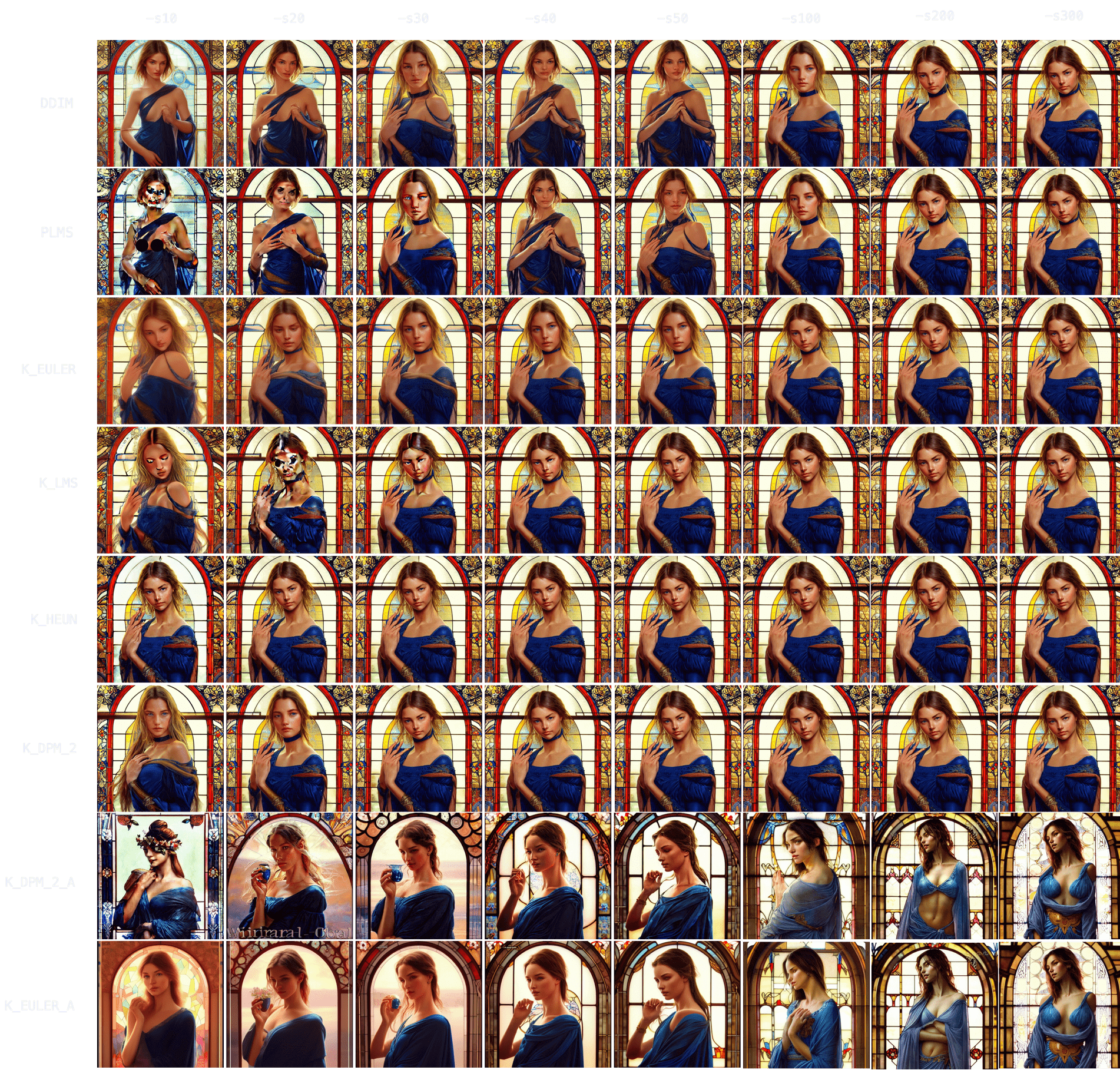

Let's start by choosing a prompt and using it with each of our 8 samplers, running it for 10, 20, 30, 40, 50 and 100 steps.

|

||||

|

||||

Anime. `"an anime girl" -W512 -H512 -C7.5 -S3031912972`

|

||||

|

||||

|

||||

|

||||

### *Sampler convergence*

|

||||

|

||||

Immediately, you can notice results tend to converge -that is, as `-s` (step) values increase, images look more and more similar until there comes a point where the image no longer changes.

|

||||

|

||||

You can also notice how `DDIM` and `PLMS` eventually tend to converge to K-sampler results as steps are increased.

|

||||

Among K-samplers, `K_HEUN` and `K_DPM_2` seem to require the fewest steps to converge, and even at low step counts they are good indicators of the final result. And finally, `K_DPM_2_A` and `K_EULER_A` seem to do a bit of their own thing and don't keep much similarity with the rest of the samplers.

|

||||

|

||||

### *Batch generation speedup*

|

||||

|

||||

This realization is very useful because it means you don't need to create a batch of 100 images (`-n100`) at `-s100` to choose your favorite 2 or 3 images.

|

||||

You can produce the same 100 images at `-s10` to `-s30` using a K-sampler (since they converge faster), get a rough idea of the final result, choose your 2 or 3 favorite ones, and then run `-s100` on those images to polish some details.

|

||||

The latter technique is 3-8x as quick.

|

||||

|

||||

Example:

|

||||

|

||||

At 60s per 100 steps.

|

||||

|

||||

(Option A) 60s * 100 images = 6000s (100 images at `-s100`, manually picking 3 favorites)

|

||||

|

||||

(Option B) 6s * 100 images + 60s * 3 images = 780s (100 images at `-s10`, manually picking 3 favorites, and running those 3 at `-s100` to polish details)

|

||||

|

||||

The result is 1 hour and 40 minutes (Option A) vs 13 minutes (Option B).

|

||||

|

||||

### *Topic convergance*

|

||||

|

||||

Now, these results seem interesting, but do they hold for other topics? How about nature? Food? People? Animals? Let's try!

|

||||

|

||||

Nature. `"valley landscape wallpaper, d&d art, fantasy, painted, 4k, high detail, sharp focus, washed colors, elaborate excellent painted illustration" -W512 -H512 -C7.5 -S1458228930`

|

||||

|

||||

|

||||

|

||||

With nature, you can see how initial results are even more indicative of final result -more so than with characters/people. `K_HEUN` and `K_DPM_2` are again the quickest indicators, almost right from the start. Results also converge faster (e.g. `K_HEUN` converged at `-s21`).

|

||||

|

||||

Food. `"a hamburger with a bowl of french fries" -W512 -H512 -C7.5 -S4053222918`

|

||||

|

||||

|

||||

|

||||

Again, `K_HEUN` and `K_DPM_2` take the fewest number of steps to be good indicators of the final result. `K_DPM_2_A` and `K_EULER_A` seem to incorporate a lot of creativity/variability, capable of producing rotten hamburgers, but also of adding lettuce to the mix. And they're the only samplers that produced an actual 'bowl of fries'!

|

||||

|

||||

Animals. `"grown tiger, full body" -W512 -H512 -C7.5 -S3721629802`

|

||||

|

||||

|

||||

|

||||

`K_HEUN` and `K_DPM_2` once again require the least number of steps to be indicative of the final result (around `-s30`), while other samplers are still struggling with several tails or malformed back legs.

|

||||

|

||||

It also takes longer to converge (for comparison, `K_HEUN` required around 150 steps to converge). This is normal, as producing human/animal faces/bodies is one of the things the model struggles the most with. For these topics, running for more steps will often increase coherence within the composition.

|

||||

|

||||

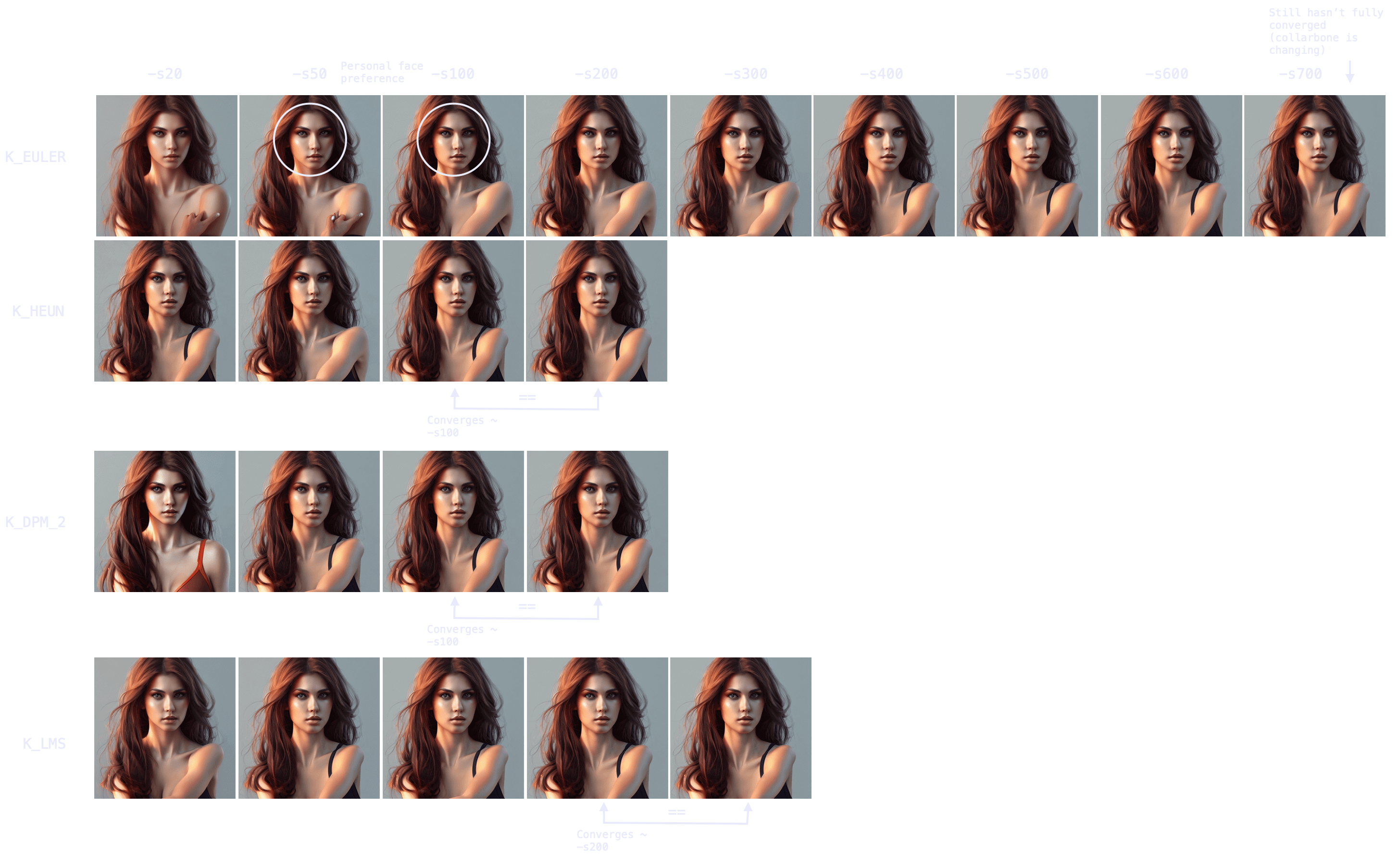

People. `"Ultra realistic photo, (Miranda Bloom-Kerr), young, stunning model, blue eyes, blond hair, beautiful face, intricate, highly detailed, smooth, art by artgerm and greg rutkowski and alphonse mucha, stained glass" -W512 -H512 -C7.5 -S2131956332`. This time, we will go up to 300 steps.

|

||||

|

||||

|

||||

|

||||

Observing the results, it again takes longer for all samplers to converge (`K_HEUN` took around 150 steps), but we can observe good indicative results much earlier (see: `K_HEUN`). Conversely, `DDIM` and `PLMS` are still undergoing moderate changes (see: lace around her neck), even at `-s300`.

|

||||

|

||||

In fact, as we can see in this other experiment, some samplers can take 700+ steps to converge when generating people.

|

||||

|

||||

|

||||

|

||||

Note also the point of convergence may not be the most desirable state (e.g. I prefer an earlier version of the face, more rounded), but it will probably be the most coherent arms/hands/face attributes-wise. You can always merge different images with a photo editing tool and pass it through `img2img` to smoothen the composition.

|

||||

|

||||

### *Sampler generation times*

|

||||

|

||||

Once we understand the concept of sampler convergence, we must look into the performance of each sampler in terms of steps (iterations) per second, as not all samplers run at the same speed.

|

||||

|

||||

On my M1 Max with 64GB of RAM, for a 512x512 image:

|

||||

| Sampler | (3 sample average) it/s |

|

||||

|---|---|

|

||||

| `DDIM` | 1.89 |

|

||||

| `PLMS` | 1.86 |

|

||||

| `K_EULER` | 1.86 |

|

||||

| `K_LMS` | 1.91 |

|

||||

| `K_HEUN` | 0.95 (slower) |

|

||||

| `K_DPM_2` | 0.95 (slower) |

|

||||

| `K_DPM_2_A` | 0.95 (slower) |

|

||||

| `K_EULER_A` | 1.86 |

|

||||

|

||||

Combining our results with the steps per second of each sampler, three choices come out on top: `K_LMS`, `K_HEUN` and `K_DPM_2` (where the latter two run 0.5x as quick but tend to converge 2x as quick as `K_LMS`). For creativity and a lot of variation between iterations, `K_EULER_A` can be a good choice (which runs 2x as quick as `K_DPM_2_A`).

|

||||

|

||||

Additionally, image generation at very low steps (≤ `-s8`) is not recommended for `K_HEUN` and `K_DPM_2`. Use `K_LMS` instead.

|

||||

|

||||

<img width="397" alt="192044949-67d5d441-a0d5-4d5a-be30-5dda4fc28a00-min" src="https://user-images.githubusercontent.com/50542132/192046823-2714cb29-bbf3-4eb1-9213-e27a0963905c.png">

|

||||

|

||||

### *Three key points*

|

||||

|

||||

Finally, it is relevant to mention that, in general, there are 3 important moments in the process of image formation as steps increase:

|

||||

|

||||

* The (earliest) point at which an image becomes a good indicator of the final result (useful for batch generation at low step values, to then improve the quality/coherence of the chosen images via running the same prompt and seed for more steps).

|

||||

|

||||

* The (earliest) point at which an image becomes coherent, even if different from the result if steps are increased (useful for batch generation at low step values, where quality/coherence is improved via techniques other than increasing the steps -e.g. via inpainting).

|

||||

|

||||

* The point at which an image fully converges.

|

||||

|

||||

Hence, remember that your workflow/strategy should define your optimal number of steps, even for the same prompt and seed (for example, if you seek full convergence, you may run `K_LMS` for `-s200` in the case of the red-haired girl, but `K_LMS` and `-s20`-taking one tenth the time- may do as well if your workflow includes adding small details, such as the missing shoulder strap, via `img2img`).

|

||||

@ -1,36 +1,60 @@

|

||||

# Before you begin

|

||||

|

||||

- For end users: Install Stable Diffusion locally using the instructions for your OS.

|

||||

- For developers: For container-related development tasks or for enabling easy deployment to other environments (on-premises or cloud), follow these instructions. For general use, install locally to leverage your machine's GPU.

|

||||

- For end users: Install Stable Diffusion locally using the instructions for

|

||||

your OS.

|

||||

- For developers: For container-related development tasks or for enabling easy

|

||||

deployment to other environments (on-premises or cloud), follow these

|

||||

instructions. For general use, install locally to leverage your machine's GPU.

|

||||

|

||||

# Why containers?

|

||||

|

||||

They provide a flexible, reliable way to build and deploy Stable Diffusion. You'll also use a Docker volume to store the largest model files and image outputs as a first step in decoupling storage and compute. Future enhancements can do this for other assets. See [Processes](https://12factor.net/processes) under the Twelve-Factor App methodology for details on why running applications in such a stateless fashion is important.

|

||||

They provide a flexible, reliable way to build and deploy Stable Diffusion.

|

||||

You'll also use a Docker volume to store the largest model files and image

|

||||

outputs as a first step in decoupling storage and compute. Future enhancements

|

||||

can do this for other assets. See [Processes](https://12factor.net/processes)

|

||||

under the Twelve-Factor App methodology for details on why running applications

|

||||

in such a stateless fashion is important.

|

||||

|

||||

You can specify the target platform when building the image and running the container. You'll also need to specify the Stable Diffusion requirements file that matches the container's OS and the architecture it will run on.

|

||||

You can specify the target platform when building the image and running the

|

||||

container. You'll also need to specify the Stable Diffusion requirements file

|

||||

that matches the container's OS and the architecture it will run on.

|

||||

|

||||

Developers on Apple silicon (M1/M2): You [can't access your GPU cores from Docker containers](https://github.com/pytorch/pytorch/issues/81224) and performance is reduced compared with running it directly on macOS but for development purposes it's fine. Once you're done with development tasks on your laptop you can build for the target platform and architecture and deploy to another environment with NVIDIA GPUs on-premises or in the cloud.

|

||||

Developers on Apple silicon (M1/M2): You

|

||||

[can't access your GPU cores from Docker containers](https://github.com/pytorch/pytorch/issues/81224)

|

||||

and performance is reduced compared with running it directly on macOS but for

|

||||

development purposes it's fine. Once you're done with development tasks on your

|

||||

laptop you can build for the target platform and architecture and deploy to

|

||||

another environment with NVIDIA GPUs on-premises or in the cloud.

|

||||

|

||||

# Installation on a Linux container

|

||||

# Installation on a Linux container

|

||||

|

||||

## Prerequisites

|

||||

|

||||

### Get the data files

|

||||

### Get the data files

|

||||

|

||||

Go to [Hugging Face](https://huggingface.co/CompVis/stable-diffusion-v-1-4-original), and click "Access repository" to Download the model file ```sd-v1-4.ckpt``` (~4 GB) to ```~/Downloads```. You'll need to create an account but it's quick and free.

|

||||

Go to

|

||||

[Hugging Face](https://huggingface.co/CompVis/stable-diffusion-v-1-4-original),

|

||||

and click "Access repository" to Download the model file `sd-v1-4.ckpt` (~4 GB)

|

||||

to `~/Downloads`. You'll need to create an account but it's quick and free.

|

||||

|

||||

Also download the face restoration model.

|

||||

|

||||

```Shell

|

||||

cd ~/Downloads

|

||||

wget https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.3.pth

|

||||

wget https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.4.pth

|

||||

```

|

||||

|

||||

### Install [Docker](https://github.com/santisbon/guides#docker)

|

||||

On the Docker Desktop app, go to Preferences, Resources, Advanced. Increase the CPUs and Memory to avoid this [Issue](https://github.com/invoke-ai/InvokeAI/issues/342). You may need to increase Swap and Disk image size too.

|

||||

### Install [Docker](https://github.com/santisbon/guides#docker)

|

||||

|

||||

On the Docker Desktop app, go to Preferences, Resources, Advanced. Increase the

|

||||

CPUs and Memory to avoid this

|

||||

[Issue](https://github.com/invoke-ai/InvokeAI/issues/342). You may need to

|

||||

increase Swap and Disk image size too.

|

||||

|

||||

## Setup

|

||||

|

||||

Set the fork you want to use and other variables.

|

||||

Set the fork you want to use and other variables.

|

||||

|

||||

```Shell

|

||||

TAG_STABLE_DIFFUSION="santisbon/stable-diffusion"

|

||||

PLATFORM="linux/arm64"

|

||||

@ -46,21 +70,28 @@ echo $CONDA_SUBDIR

|

||||

```

|

||||

|

||||

Create a Docker volume for the downloaded model files.

|

||||

|

||||

```Shell

|

||||

docker volume create my-vol

|

||||

```

|

||||

|

||||

Copy the data files to the Docker volume using a lightweight Linux container. We'll need the models at run time. You just need to create the container with the mountpoint; no need to run this dummy container.

|

||||

Copy the data files to the Docker volume using a lightweight Linux container.

|

||||

We'll need the models at run time. You just need to create the container with

|

||||

the mountpoint; no need to run this dummy container.

|

||||

|

||||

```Shell

|

||||

cd ~/Downloads # or wherever you saved the files

|

||||

|

||||

docker create --platform $PLATFORM --name dummy --mount source=my-vol,target=/data alpine

|

||||

docker create --platform $PLATFORM --name dummy --mount source=my-vol,target=/data alpine

|

||||

|

||||

docker cp sd-v1-4.ckpt dummy:/data

|

||||

docker cp GFPGANv1.3.pth dummy:/data

|

||||

docker cp GFPGANv1.4.pth dummy:/data

|

||||

```

|

||||

|

||||

Get the repo and download the Miniconda installer (we'll need it at build time). Replace the URL with the version matching your container OS and the architecture it will run on.

|

||||

Get the repo and download the Miniconda installer (we'll need it at build time).

|

||||

Replace the URL with the version matching your container OS and the architecture

|

||||

it will run on.

|

||||

|

||||

```Shell

|

||||

cd ~

|

||||

git clone $GITHUB_STABLE_DIFFUSION

|

||||

@ -70,10 +101,15 @@ chmod +x entrypoint.sh

|

||||

wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-aarch64.sh -O anaconda.sh && chmod +x anaconda.sh

|

||||

```

|

||||

|

||||

Build the Docker image. Give it any tag ```-t``` that you want.

|

||||

Choose the Linux container's host platform: x86-64/Intel is ```amd64```. Apple silicon is ```arm64```. If deploying the container to the cloud to leverage powerful GPU instances you'll be on amd64 hardware but if you're just trying this out locally on Apple silicon choose arm64.

|

||||

The application uses libraries that need to match the host environment so use the appropriate requirements file.

|

||||

Tip: Check that your shell session has the env variables set above.

|

||||

Build the Docker image. Give it any tag `-t` that you want.

|

||||

Choose the Linux container's host platform: x86-64/Intel is `amd64`. Apple

|

||||

silicon is `arm64`. If deploying the container to the cloud to leverage powerful

|

||||

GPU instances you'll be on amd64 hardware but if you're just trying this out

|

||||

locally on Apple silicon choose arm64.

|

||||

The application uses libraries that need to match the host environment so use

|

||||

the appropriate requirements file.

|

||||

Tip: Check that your shell session has the env variables set above.

|

||||

|

||||

```Shell

|

||||

docker build -t $TAG_STABLE_DIFFUSION \

|

||||

--platform $PLATFORM \

|

||||

@ -85,6 +121,7 @@ docker build -t $TAG_STABLE_DIFFUSION \

|

||||

|

||||

Run a container using your built image.

|

||||

Tip: Make sure you've created and populated the Docker volume (above).

|

||||

|

||||

```Shell

|

||||

docker run -it \

|

||||

--rm \

|

||||

@ -98,86 +135,121 @@ $TAG_STABLE_DIFFUSION

|

||||

# Usage (time to have fun)

|

||||

|

||||

## Startup

|

||||

If you're on a **Linux container** the ```dream``` script is **automatically started** and the output dir set to the Docker volume you created earlier.

|

||||

|

||||

If you're on a **Linux container** the `dream` script is **automatically

|

||||

started** and the output dir set to the Docker volume you created earlier.

|

||||

|

||||

If you're **directly on macOS follow these startup instructions**.

|

||||

With the Conda environment activated (```conda activate ldm```), run the interactive interface that combines the functionality of the original scripts ```txt2img``` and ```img2img```:

|

||||

Use the more accurate but VRAM-intensive full precision math because half-precision requires autocast and won't work.

|

||||

By default the images are saved in ```outputs/img-samples/```.

|

||||

With the Conda environment activated (`conda activate ldm`), run the interactive

|

||||

interface that combines the functionality of the original scripts `txt2img` and

|

||||

`img2img`:

|

||||

Use the more accurate but VRAM-intensive full precision math because

|

||||

half-precision requires autocast and won't work.

|

||||

By default the images are saved in `outputs/img-samples/`.

|

||||

|

||||

```Shell

|

||||

python3 scripts/dream.py --full_precision

|

||||

python3 scripts/dream.py --full_precision

|

||||

```

|

||||

|

||||

You'll get the script's prompt. You can see available options or quit.

|

||||

|

||||

```Shell

|

||||

dream> -h

|

||||

dream> q

|

||||

```

|

||||

|

||||

## Text to Image

|

||||

For quick (but bad) image results test with 5 steps (default 50) and 1 sample image. This will let you know that everything is set up correctly.

|

||||

|

||||

For quick (but bad) image results test with 5 steps (default 50) and 1 sample

|

||||

image. This will let you know that everything is set up correctly.

|

||||

Then increase steps to 100 or more for good (but slower) results.

|

||||

The prompt can be in quotes or not.

|

||||

|

||||

```Shell

|

||||

dream> The hulk fighting with sheldon cooper -s5 -n1

|

||||

dream> The hulk fighting with sheldon cooper -s5 -n1

|

||||

dream> "woman closeup highly detailed" -s 150

|

||||

# Reuse previous seed and apply face restoration

|

||||

dream> "woman closeup highly detailed" --steps 150 --seed -1 -G 0.75

|

||||

dream> "woman closeup highly detailed" --steps 150 --seed -1 -G 0.75

|

||||

```

|

||||

|

||||

You'll need to experiment to see if face restoration is making it better or worse for your specific prompt.

|

||||

You'll need to experiment to see if face restoration is making it better or

|

||||

worse for your specific prompt.

|

||||

|

||||

If you're on a container the output is set to the Docker volume. You can copy it wherever you want.

|

||||

If you're on a container the output is set to the Docker volume. You can copy it

|

||||

wherever you want.

|

||||

You can download it from the Docker Desktop app, Volumes, my-vol, data.

|

||||

Or you can copy it from your Mac terminal. Keep in mind ```docker cp``` can't expand ```*.png``` so you'll need to specify the image file name.

|

||||

Or you can copy it from your Mac terminal. Keep in mind `docker cp` can't expand

|

||||

`*.png` so you'll need to specify the image file name.

|

||||

|

||||

On your host Mac (you can use the name of any container that mounted the

|

||||

volume):

|

||||

|

||||

On your host Mac (you can use the name of any container that mounted the volume):

|

||||

```Shell

|

||||

docker cp dummy:/data/000001.928403745.png /Users/<your-user>/Pictures

|

||||

docker cp dummy:/data/000001.928403745.png /Users/<your-user>/Pictures

|

||||

```

|

||||

|

||||

## Image to Image

|

||||

You can also do text-guided image-to-image translation. For example, turning a sketch into a detailed drawing.

|

||||

|

||||

```strength``` is a value between 0.0 and 1.0 that controls the amount of noise that is added to the input image. Values that approach 1.0 allow for lots of variations but will also produce images that are not semantically consistent with the input. 0.0 preserves image exactly, 1.0 replaces it completely.

|

||||

You can also do text-guided image-to-image translation. For example, turning a

|

||||

sketch into a detailed drawing.

|

||||

|

||||

Make sure your input image size dimensions are multiples of 64 e.g. 512x512. Otherwise you'll get ```Error: product of dimension sizes > 2**31'```. If you still get the error [try a different size](https://support.apple.com/guide/preview/resize-rotate-or-flip-an-image-prvw2015/mac#:~:text=image's%20file%20size-,In%20the%20Preview%20app%20on%20your%20Mac%2C%20open%20the%20file,is%20shown%20at%20the%20bottom.) like 512x256.

|

||||

`strength` is a value between 0.0 and 1.0 that controls the amount of noise that

|

||||

is added to the input image. Values that approach 1.0 allow for lots of

|

||||

variations but will also produce images that are not semantically consistent

|

||||

with the input. 0.0 preserves image exactly, 1.0 replaces it completely.

|

||||

|

||||

Make sure your input image size dimensions are multiples of 64 e.g. 512x512.

|

||||

Otherwise you'll get `Error: product of dimension sizes > 2**31'`. If you still

|

||||

get the error

|

||||

[try a different size](https://support.apple.com/guide/preview/resize-rotate-or-flip-an-image-prvw2015/mac#:~:text=image's%20file%20size-,In%20the%20Preview%20app%20on%20your%20Mac%2C%20open%20the%20file,is%20shown%20at%20the%20bottom.)

|

||||

like 512x256.

|

||||

|

||||

If you're on a Docker container, copy your input image into the Docker volume

|

||||

|

||||

```Shell

|

||||

docker cp /Users/<your-user>/Pictures/sketch-mountains-input.jpg dummy:/data/

|

||||

```

|

||||

|

||||

Try it out generating an image (or more). The ```dream``` script needs absolute paths to find the image so don't use ```~```.

|

||||

Try it out generating an image (or more). The `dream` script needs absolute

|

||||

paths to find the image so don't use `~`.

|

||||

|

||||

If you're on your Mac

|

||||

```Shell

|

||||

|

||||

```Shell

|

||||

dream> "A fantasy landscape, trending on artstation" -I /Users/<your-user>/Pictures/sketch-mountains-input.jpg --strength 0.75 --steps 100 -n4

|

||||

```

|

||||

|

||||

If you're on a Linux container on your Mac

|

||||

|

||||

```Shell

|

||||

dream> "A fantasy landscape, trending on artstation" -I /data/sketch-mountains-input.jpg --strength 0.75 --steps 50 -n1

|

||||

```

|

||||

|

||||

## Web Interface

|

||||

You can use the ```dream``` script with a graphical web interface. Start the web server with:

|

||||

|

||||

You can use the `dream` script with a graphical web interface. Start the web

|

||||

server with:

|

||||

|

||||

```Shell

|

||||

python3 scripts/dream.py --full_precision --web

|

||||

```

|

||||

If it's running on your Mac point your Mac web browser to http://127.0.0.1:9090

|

||||

|

||||

If it's running on your Mac point your Mac web browser to http://127.0.0.1:9090

|

||||

|

||||

Press Control-C at the command line to stop the web server.

|

||||

|

||||

## Notes

|

||||

|

||||

Some text you can add at the end of the prompt to make it very pretty:

|

||||

|

||||

```Shell

|

||||

cinematic photo, highly detailed, cinematic lighting, ultra-detailed, ultrarealistic, photorealism, Octane Rendering, cyberpunk lights, Hyper Detail, 8K, HD, Unreal Engine, V-Ray, full hd, cyberpunk, abstract, 3d octane render + 4k UHD + immense detail + dramatic lighting + well lit + black, purple, blue, pink, cerulean, teal, metallic colours, + fine details, ultra photoreal, photographic, concept art, cinematic composition, rule of thirds, mysterious, eerie, photorealism, breathtaking detailed, painting art deco pattern, by hsiao, ron cheng, john james audubon, bizarre compositions, exquisite detail, extremely moody lighting, painted by greg rutkowski makoto shinkai takashi takeuchi studio ghibli, akihiko yoshida

|

||||

```

|

||||

|

||||

The original scripts should work as well.

|

||||

|

||||

```Shell

|

||||

python3 scripts/orig_scripts/txt2img.py --help

|

||||

python3 scripts/orig_scripts/txt2img.py --ddim_steps 100 --n_iter 1 --n_samples 1 --plms --prompt "new born baby kitten. Hyper Detail, Octane Rendering, Unreal Engine, V-Ray"

|

||||

python3 scripts/orig_scripts/txt2img.py --ddim_steps 5 --n_iter 1 --n_samples 1 --plms --prompt "ocean" # or --klms

|

||||

```

|

||||

```

|

||||

|

||||

@ -24,40 +24,40 @@ title: Linux

|

||||

the installation worked, your command prompt will be prefixed by the name of the

|

||||

current anaconda environment - `(base)`.

|

||||

|

||||

3. Copy the stable-diffusion source code from GitHub:

|

||||

3. Copy the InvokeAI source code from GitHub:

|

||||

|

||||

```bash

|

||||

(base) ~$ git clone https://github.com/invoke-ai/InvokeAI.git

|

||||

```

|

||||

```

|

||||

(base) ~$ git clone https://github.com/invoke-ai/InvokeAI.git

|

||||

```

|

||||

|

||||

This will create stable-diffusion folder where you will follow the rest of the

|

||||

steps.

|

||||

This will create InvokeAI folder where you will follow the rest of the steps.

|

||||

|

||||

4. Enter the newly-created stable-diffusion folder. From this step forward make

|

||||

sure that you are working in the stable-diffusion directory!

|

||||

4. Enter the newly-created InvokeAI folder. From this step forward make sure that you are working in the InvokeAI directory!

|

||||

|

||||

```bash

|

||||

(base) ~$ cd stable-diffusion

|

||||

(base) ~/stable-diffusion$

|

||||

```

|

||||

```

|

||||

(base) ~$ cd InvokeAI

|

||||

(base) ~/InvokeAI$

|

||||

```

|

||||

|

||||

5. Use anaconda to copy necessary python packages, create a new python

|

||||

environment named `ldm` and activate the environment.

|

||||

|

||||

```bash

|

||||

(base) ~/stable-diffusion$ conda env create -f environment.yaml

|

||||

(base) ~/stable-diffusion$ conda activate ldm

|

||||

(ldm) ~/stable-diffusion$

|

||||

```

|

||||

|

||||

```

|

||||

(base) ~/InvokeAI$ conda env create -f environment.yaml

|

||||

(base) ~/InvokeAI$ conda activate ldm

|

||||

(ldm) ~/InvokeAI$

|

||||

```

|

||||

|

||||

After these steps, your command prompt will be prefixed by `(ldm)` as shown

|

||||

above.

|

||||

|

||||

6. Load a couple of small machine-learning models required by stable diffusion:

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ python3 scripts/preload_models.py

|

||||

```

|

||||

|

||||

```

|

||||

(ldm) ~/InvokeAI$ python3 scripts/preload_models.py

|

||||

```

|

||||

|

||||

!!! note

|

||||

|

||||

@ -79,38 +79,34 @@ title: Linux

|

||||

This will create a symbolic link from the stable-diffusion model.ckpt file, to

|

||||

the true location of the `sd-v1-4.ckpt` file.

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ mkdir -p models/ldm/stable-diffusion-v1

|

||||

(ldm) ~/stable-diffusion$ ln -sf /path/to/sd-v1-4.ckpt models/ldm/stable-diffusion-v1/model.ckpt

|

||||

```

|

||||

|

||||

```

|

||||

(ldm) ~/InvokeAI$ mkdir -p models/ldm/stable-diffusion-v1

|

||||

(ldm) ~/InvokeAI$ ln -sf /path/to/sd-v1-4.ckpt models/ldm/stable-diffusion-v1/model.ckpt

|

||||

```

|

||||

|

||||

8. Start generating images!

|

||||

|

||||

```bash

|

||||

# for the pre-release weights use the -l or --liaon400m switch

|

||||

(ldm) ~/stable-diffusion$ python3 scripts/dream.py -l

|

||||

```

|

||||

# for the pre-release weights use the -l or --liaon400m switch

|

||||

(ldm) ~/InvokeAI$ python3 scripts/dream.py -l

|

||||

|

||||

# for the post-release weights do not use the switch

|

||||

(ldm) ~/stable-diffusion$ python3 scripts/dream.py

|

||||

# for the post-release weights do not use the switch

|

||||

(ldm) ~/InvokeAI$ python3 scripts/dream.py

|

||||

|

||||

# for additional configuration switches and arguments, use -h or --help

|

||||

(ldm) ~/stable-diffusion$ python3 scripts/dream.py -h

|

||||

```

|

||||

# for additional configuration switches and arguments, use -h or --help

|

||||

(ldm) ~/InvokeAI$ python3 scripts/dream.py -h

|

||||

```

|

||||

|

||||

9. Subsequently, to relaunch the script, be sure to run "conda activate ldm"

|

||||

(step 5, second command), enter the `stable-diffusion` directory, and then

|

||||

launch the dream script (step 8). If you forget to activate the ldm

|

||||

environment, the script will fail with multiple `ModuleNotFound` errors.

|

||||

9. Subsequently, to relaunch the script, be sure to run "conda activate ldm" (step 5, second command), enter the `InvokeAI` directory, and then launch the dream script (step 8). If you forget to activate the ldm environment, the script will fail with multiple `ModuleNotFound` errors.

|

||||

|

||||

## Updating to newer versions of the script

|

||||

|

||||

This distribution is changing rapidly. If you used the `git clone` method

|

||||

(step 5) to download the stable-diffusion directory, then to update to the

|

||||

latest and greatest version, launch the Anaconda window, enter

|

||||

`stable-diffusion` and type:

|

||||

|

||||

```bash

|

||||

(ldm) ~/stable-diffusion$ git pull

|

||||

This distribution is changing rapidly. If you used the `git clone` method (step 5) to download the InvokeAI directory, then to update to the latest and greatest version, launch the Anaconda window, enter `InvokeAI` and type:

|

||||

|

||||

```

|

||||

(ldm) ~/InvokeAI$ git pull

|

||||

```

|

||||

|

||||

This will bring your local copy into sync with the remote one.

|

||||

|

||||

59

frontend/src/app/features.ts

Normal file

59

frontend/src/app/features.ts

Normal file

@ -0,0 +1,59 @@

|

||||

type FeatureHelpInfo = {

|

||||

text: string;

|

||||

href: string;

|

||||

guideImage: string;

|

||||

};

|

||||

|

||||

export enum Feature {

|

||||

PROMPT,

|

||||

GALLERY,

|

||||

OUTPUT,

|

||||

SEED_AND_VARIATION,

|

||||

ESRGAN,

|

||||

FACE_CORRECTION,

|

||||

IMAGE_TO_IMAGE,

|

||||

SAMPLER,

|

||||

}

|

||||

|

||||

export const FEATURES: Record<Feature, FeatureHelpInfo> = {

|

||||

[Feature.PROMPT]: {

|

||||

text: 'This field will take all prompt text, including both content and stylistic terms. CLI Commands will not work in the prompt.',

|

||||

href: 'link/to/docs/feature3.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.GALLERY]: {

|

||||

text: 'As new invocations are generated, files from the output directory will be displayed here. Generations have additional options to configure new generations.',

|

||||

href: 'link/to/docs/feature3.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.OUTPUT]: {

|

||||

text: 'The Height and Width of generations can be controlled here. If you experience errors, you may be generating an image too large for your system. The seamless option will more often result in repeating patterns in outputs.',

|

||||

href: 'link/to/docs/feature3.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.SEED_AND_VARIATION]: {

|

||||

text: 'Seed values provide an initial set of noise which guide the denoising process. Try a variation with an amount of between 0 and 1 to change the output image for that seed.',

|

||||

href: 'link/to/docs/feature3.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.ESRGAN]: {

|

||||

text: 'The ESRGAN setting can be used to increase the output resolution without requiring a higher width/height in the initial generation.',

|

||||

href: 'link/to/docs/feature1.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.FACE_CORRECTION]: {

|

||||

text: 'Using GFPGAN or CodeFormer, Face Correction will attempt to identify faces in outputs, and correct any defects/abnormalities. Higher values will apply a stronger corrective pressure on outputs.',

|

||||

href: 'link/to/docs/feature2.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.IMAGE_TO_IMAGE]: {

|

||||

text: 'ImageToImage allows the upload of an initial image, which InvokeAI will use to guide the generation process, along with a prompt. A lower value for this setting will more closely resemble the original image. Values between 0-1 are accepted, and a range of .25-.75 is recommended ',

|

||||

href: 'link/to/docs/feature3.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

[Feature.SAMPLER]: {

|

||||

text: 'This setting allows for different denoising samplers to be used, as well as the number of denoising steps used, which will change the resulting output.',

|

||||

href: 'link/to/docs/feature3.html',

|

||||

guideImage: 'asset/path.gif',

|

||||

},

|

||||

};

|

||||

22

frontend/src/common/components/GuideIcon.tsx

Normal file

22

frontend/src/common/components/GuideIcon.tsx

Normal file

@ -0,0 +1,22 @@

|

||||

import { Box, forwardRef, Icon } from '@chakra-ui/react';

|

||||

import { IconType } from 'react-icons';

|

||||

import { MdHelp } from 'react-icons/md';

|

||||

import { Feature } from '../../app/features';

|

||||

import GuidePopover from './GuidePopover';

|

||||

|

||||

type GuideIconProps = {

|

||||

feature: Feature;

|

||||

icon?: IconType;

|

||||

};

|

||||

|

||||

const GuideIcon = forwardRef(

|

||||

({ feature, icon = MdHelp }: GuideIconProps, ref) => (

|

||||

<GuidePopover feature={feature}>

|

||||

<Box ref={ref}>

|

||||

<Icon as={icon} />

|

||||

</Box>

|

||||

</GuidePopover>

|

||||

)

|

||||

);

|

||||

|

||||

export default GuideIcon;

|

||||

51

frontend/src/common/components/GuidePopover.tsx

Normal file

51

frontend/src/common/components/GuidePopover.tsx

Normal file

@ -0,0 +1,51 @@

|

||||

import {

|

||||

Popover,

|

||||

PopoverArrow,

|

||||

PopoverContent,

|

||||

PopoverTrigger,

|

||||

PopoverHeader,

|

||||

Flex,

|

||||

Box,

|

||||

} from '@chakra-ui/react';

|

||||

import { SystemState } from '../../features/system/systemSlice';

|

||||

import { useAppSelector } from '../../app/store';

|

||||

import { RootState } from '../../app/store';

|