Mirror of https://github.com/invoke-ai/InvokeAI, synced 2024-08-30 20:32:17 +00:00

Merge branch 'main' into ryan/multi-image-ip

2

.github/workflows/pypi-release.yml

vendored

@@ -28,7 +28,7 @@ jobs:
         run: twine check dist/*

       - name: check PyPI versions
-        if: github.ref == 'refs/heads/main' || github.ref == 'refs/heads/v2.3' || github.ref == 'refs/heads/v3.3.0post1'
+        if: github.ref == 'refs/heads/main' || startsWith(github.ref, 'refs/heads/release/')
         run: |
           pip install --upgrade requests
           python -c "\
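The updated `if:` condition runs the step on `main` and on any branch under `release/`, instead of a hard-coded list of version branches. A minimal Python sketch of the same predicate, for illustration only: `should_check_pypi` is a hypothetical name, and the lower-casing reflects the case-insensitive string comparison documented for GitHub Actions expressions rather than anything stated in this commit.

```python
def should_check_pypi(ref: str) -> bool:
    """Mirror of: github.ref == 'refs/heads/main' || startsWith(github.ref, 'refs/heads/release/')."""
    # Assumption: GitHub expression comparisons and startsWith ignore case.
    ref = ref.lower()
    return ref == "refs/heads/main" or ref.startswith("refs/heads/release/")


if __name__ == "__main__":
    for ref in ("refs/heads/main", "refs/heads/release/v3.4.0", "refs/heads/v2.3"):
        print(ref, should_check_pypi(ref))
```

Under this sketch the previously listed `refs/heads/v2.3` and `refs/heads/v3.3.0post1` branches no longer match.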

@@ -8,28 +8,42 @@ To download a node, simply download the `.py` node file from the link and add it

 To use a community workflow, download the the `.json` node graph file and load it into Invoke AI via the **Load Workflow** button in the Workflow Editor.

 --------------------------------
+- Community Nodes
+    + [Depth Map from Wavefront OBJ](#depth-map-from-wavefront-obj)
+    + [Film Grain](#film-grain)
+    + [Generative Grammar-Based Prompt Nodes](#generative-grammar-based-prompt-nodes)
+    + [GPT2RandomPromptMaker](#gpt2randompromptmaker)
+    + [Grid to Gif](#grid-to-gif)
+    + [Halftone](#halftone)
+    + [Ideal Size](#ideal-size)
+    + [Image and Mask Composition Pack](#image-and-mask-composition-pack)
+    + [Image to Character Art Image Nodes](#image-to-character-art-image-nodes)
+    + [Image Picker](#image-picker)
+    + [Load Video Frame](#load-video-frame)
+    + [Make 3D](#make-3d)
+    + [Oobabooga](#oobabooga)
+    + [Prompt Tools](#prompt-tools)
+    + [Retroize](#retroize)
+    + [Size Stepper Nodes](#size-stepper-nodes)
+    + [Text font to Image](#text-font-to-image)
+    + [Thresholding](#thresholding)
+    + [XY Image to Grid and Images to Grids nodes](#xy-image-to-grid-and-images-to-grids-nodes)
+- [Example Node Template](#example-node-template)
+- [Disclaimer](#disclaimer)
+- [Help](#help)

|

||||

|

||||

--------------------------------

|

||||

### Make 3D

|

||||

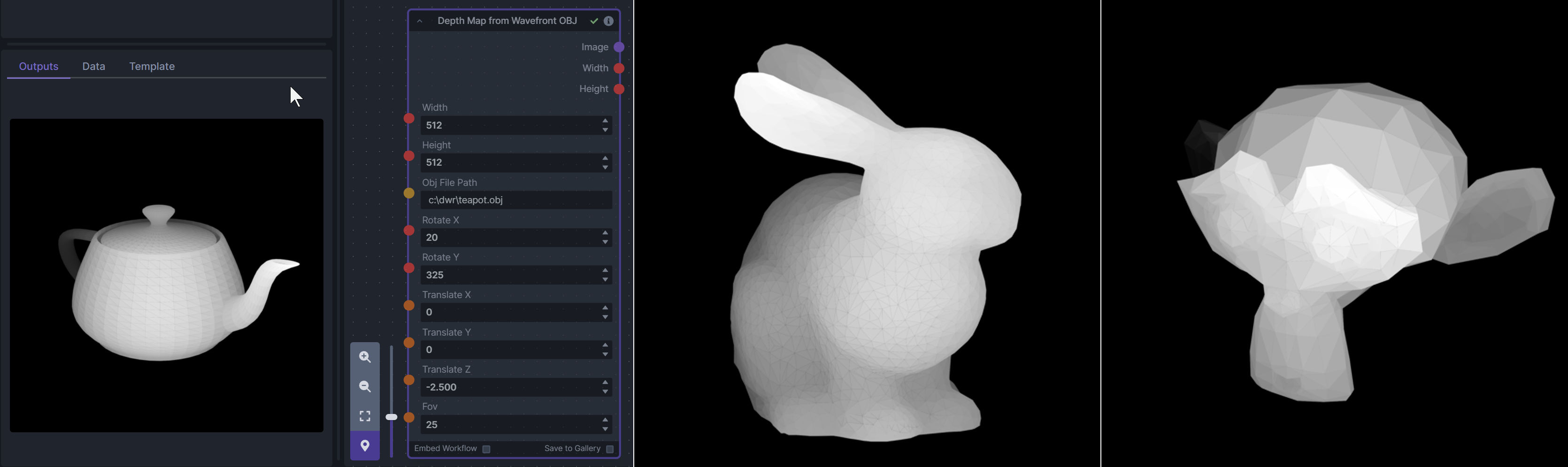

### Depth Map from Wavefront OBJ

|

||||

|

||||

**Description:** Create compelling 3D stereo images from 2D originals.

|

||||

**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

|

||||

|

||||

**Node Link:** [https://gitlab.com/srcrr/shift3d/-/raw/main/make3d.py](https://gitlab.com/srcrr/shift3d)

|

||||

To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.

|

||||

|

||||

**Example Node Graph:** https://gitlab.com/srcrr/shift3d/-/raw/main/example-workflow.json?ref_type=heads&inline=false

|

||||

**Node Link:** https://github.com/dwringer/depth-from-obj-node

|

||||

|

||||

**Output Examples**

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

--------------------------------

|

||||

### Ideal Size

|

||||

|

||||

**Description:** This node calculates an ideal image size for a first pass of a multi-pass upscaling. The aim is to avoid duplication that results from choosing a size larger than the model is capable of.

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/ideal-size-node

|

||||

**Example Usage:**

|

||||

</br><img src="https://raw.githubusercontent.com/dwringer/depth-from-obj-node/main/depth_from_obj_usage.jpg" width="500" />

|

||||

|

||||

--------------------------------

|

||||

### Film Grain

|

||||

@@ -39,68 +53,19 @@ To use a community workflow, download the the `.json` node graph file and load i

|

||||

**Node Link:** https://github.com/JPPhoto/film-grain-node

|

||||

|

||||

--------------------------------

|

||||

### Image Picker

|

||||

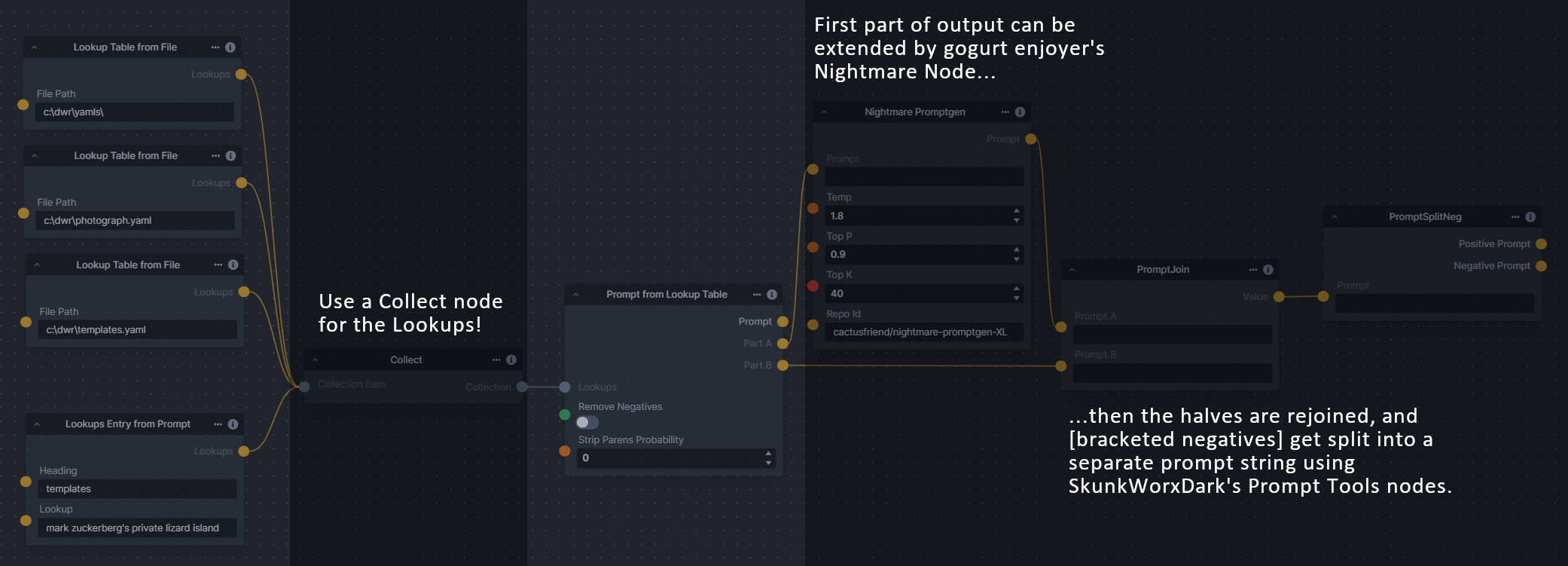

### Generative Grammar-Based Prompt Nodes

|

||||

|

||||

**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

|

||||

**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no nonterminal terms remain in the string.

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/image-picker-node

|

||||

This includes 3 Nodes:

|

||||

- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

|

||||

- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

|

||||

- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.
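The recursive expansion described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the nodes' actual implementation: the `{name}` placeholder syntax, the `expand` function, and the `rules` dictionary are all hypothetical stand-ins for the template format and lookup tables the nodes load from YAML.

```python
import random
import re

# Hypothetical placeholder syntax: a nonterminal is written as {name}.
NONTERMINAL = re.compile(r"\{(\w+)\}")


def expand(template: str, rules: dict[str, list[str]], rng: random.Random, max_depth: int = 20) -> str:
    """Recursively replace {name} placeholders until no nonterminals remain."""
    if max_depth == 0:
        raise RecursionError("grammar did not terminate")

    def pick(match: re.Match) -> str:
        # Choose one production at random for the matched nonterminal.
        return rng.choice(rules[match.group(1)])

    expanded = NONTERMINAL.sub(pick, template)
    if NONTERMINAL.search(expanded):
        return expand(expanded, rules, rng, max_depth - 1)
    return expanded


rules = {
    "subject": ["a {adjective} fox", "a {adjective} owl"],
    "adjective": ["sleepy", "curious"],
    "scene": ["in a misty forest"],
}

if __name__ == "__main__":
    print(expand("{subject} {scene}", rules, random.Random(0)))
```

The depth limit guards against grammars whose rules refer to each other without ever reaching a terminal string.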

|

||||

|

||||

--------------------------------

|

||||

### Thresholding

|

||||

**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

|

||||

|

||||

**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/thresholding-node

|

||||

|

||||

**Examples**

|

||||

|

||||

Input:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

Highlights/Midtones/Shadows:

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/727021c1-36ff-4ec8-90c8-105e00de986d" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0b721bfc-f051-404e-b905-2f16b824ddfe" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/04c1297f-1c88-42b6-a7df-dd090b976286" style="width: 30%" />

|

||||

|

||||

Highlights/Midtones/Shadows (with LUT blur enabled):

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/19aa718a-70c1-4668-8169-d68f4bd13771" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0a440e43-697f-4d17-82ee-f287467df0a5" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0701fd0f-2ca7-4fe2-8613-2b52547bafce" style="width: 30%" />

|

||||

|

||||

--------------------------------

|

||||

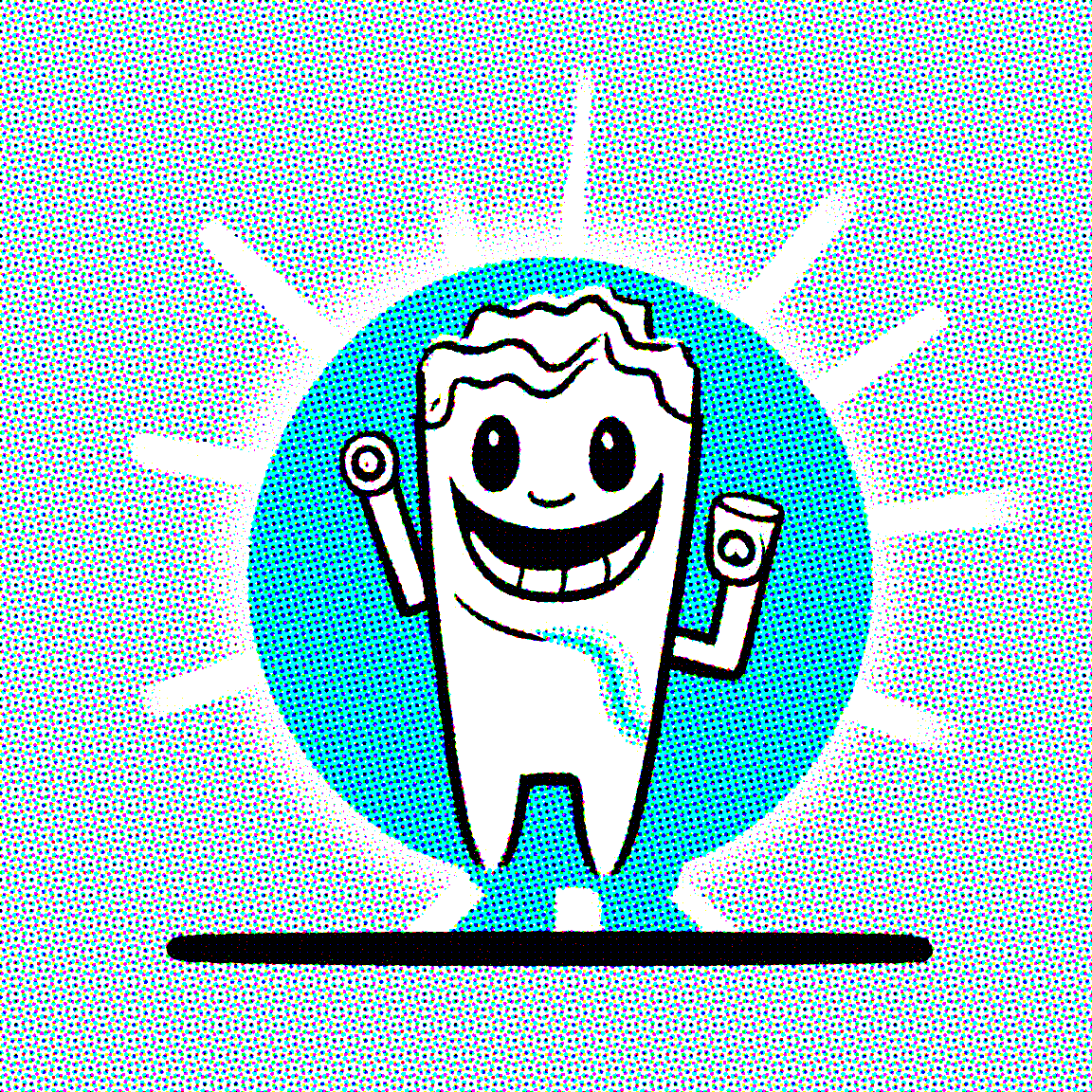

### Halftone

|

||||

|

||||

**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/halftone-node

|

||||

|

||||

**Example**

|

||||

|

||||

Input:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

Halftone Output:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

CMYK Halftone Output:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

--------------------------------

|

||||

### Retroize

|

||||

|

||||

**Description:** Retroize is a collection of nodes for InvokeAI to "Retroize" images. Any image can be given a fresh coat of retro paint with these nodes, either from your gallery or from within the graph itself. It includes nodes to pixelize, quantize, palettize, and ditherize images; as well as to retrieve palettes from existing images.

|

||||

|

||||

**Node Link:** https://github.com/Ar7ific1al/invokeai-retroizeinode/

|

||||

|

||||

**Retroize Output Examples**

|

||||

|

||||

|

||||

**Example Usage:**

|

||||

</br><img src="https://raw.githubusercontent.com/dwringer/generative-grammar-prompt-nodes/main/lookuptables_usage.jpg" width="500" />

|

||||

|

||||

--------------------------------

|

||||

### GPT2RandomPromptMaker

|

||||

@@ -113,76 +78,49 @@ CMYK Halftone Output:

|

||||

|

||||

Generated Prompt: An enchanted weapon will be usable by any character regardless of their alignment.

|

||||

|

||||

|

||||

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/8496ba09-bcdd-4ff7-8076-ff213b6a1e4c" width="200" />

|

||||

|

||||

--------------------------------

|

||||

### Load Video Frame

|

||||

### Grid to Gif

|

||||

|

||||

**Description:** This is a video frame image provider + indexer/video creation nodes for hooking up to iterators and ranges and ControlNets and such for invokeAI node experimentation. Think animation + ControlNet outputs.

|

||||

**Description:** One node that turns a grid image into an image collection, one node that turns an image collection into a gif.

|

||||

|

||||

**Node Link:** https://github.com/helix4u/load_video_frame

|

||||

**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

|

||||

|

||||

**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

|

||||

**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

|

||||

|

||||

**Output Example:**

|

||||

**Output Examples**

|

||||

|

||||

|

||||

[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

|

||||

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/input.png" width="300" />

|

||||

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/output.gif" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### Halftone

|

||||

|

||||

### Oobabooga

|

||||

**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.

|

||||

|

||||

**Description:** asks a local LLM running in Oobabooga's Text-Generation-Webui to write a prompt based on the user input.

|

||||

**Node Link:** https://github.com/JPPhoto/halftone-node

|

||||

|

||||

**Link:** https://github.com/sammyf/oobabooga-node

|

||||

**Example**

|

||||

|

||||

Input:

|

||||

|

||||

**Example:**

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/fd5efb9f-4355-4409-a1c2-c1ca99e0cab4" width="300" />

|

||||

|

||||

"describe a new mystical creature in its natural environment"

|

||||

Halftone Output:

|

||||

|

||||

*can return*

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/7e606f29-e68f-4d46-b3d5-97f799a4ec2f" width="300" />

|

||||

|

||||

"The mystical creature I am describing to you is called the "Glimmerwing". It is a majestic, iridescent being that inhabits the depths of the most enchanted forests and glimmering lakes. Its body is covered in shimmering scales that reflect every color of the rainbow, and it has delicate, translucent wings that sparkle like diamonds in the sunlight. The Glimmerwing's home is a crystal-clear lake, surrounded by towering trees with leaves that shimmer like jewels. In this serene environment, the Glimmerwing spends its days swimming gracefully through the water, chasing schools of glittering fish and playing with the gentle ripples of the lake's surface.

|

||||

As the sun sets, the Glimmerwing perches on a branch of one of the trees, spreading its wings to catch the last rays of light. The creature's scales glow softly, casting a rainbow of colors across the forest floor. The Glimmerwing sings a haunting melody, its voice echoing through the stillness of the night air. Its song is said to have the power to heal the sick and bring peace to troubled souls. Those who are lucky enough to hear the Glimmerwing's song are forever changed by its beauty and grace."

|

||||

CMYK Halftone Output:

|

||||

|

||||

|

||||

|

||||

**Requirement**

|

||||

|

||||

a Text-Generation-Webui instance (might work remotely too, but I never tried it) and obviously InvokeAI 3.x

|

||||

|

||||

**Note**

|

||||

|

||||

This node works best with SDXL models, especially as the style can be described independantly of the LLM's output.

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/c59c578f-db8e-4d66-8c66-2851752d75ea" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### Depth Map from Wavefront OBJ

|

||||

### Ideal Size

|

||||

|

||||

**Description:** Render depth maps from Wavefront .obj files (triangulated) using this simple 3D renderer utilizing numpy and matplotlib to compute and color the scene. There are simple parameters to change the FOV, camera position, and model orientation.

|

||||

**Description:** This node calculates an ideal image size for a first pass of a multi-pass upscaling. The aim is to avoid duplication that results from choosing a size larger than the model is capable of.

|

||||

|

||||

To be imported, an .obj must use triangulated meshes, so make sure to enable that option if exporting from a 3D modeling program. This renderer makes each triangle a solid color based on its average depth, so it will cause anomalies if your .obj has large triangles. In Blender, the Remesh modifier can be helpful to subdivide a mesh into small pieces that work well given these limitations.

|

||||

|

||||

**Node Link:** https://github.com/dwringer/depth-from-obj-node

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

### Generative Grammar-Based Prompt Nodes

|

||||

|

||||

**Description:** This set of 3 nodes generates prompts from simple user-defined grammar rules (loaded from custom files - examples provided below). The prompts are made by recursively expanding a special template string, replacing nonterminal "parts-of-speech" until no more nonterminal terms remain in the string.

|

||||

|

||||

This includes 3 Nodes:

|

||||

- *Lookup Table from File* - loads a YAML file "prompt" section (or of a whole folder of YAML's) into a JSON-ified dictionary (Lookups output)

|

||||

- *Lookups Entry from Prompt* - places a single entry in a new Lookups output under the specified heading

|

||||

- *Prompt from Lookup Table* - uses a Collection of Lookups as grammar rules from which to randomly generate prompts.

|

||||

|

||||

**Node Link:** https://github.com/dwringer/generative-grammar-prompt-nodes

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/ideal-size-node

|

||||

|

||||

--------------------------------

|

||||

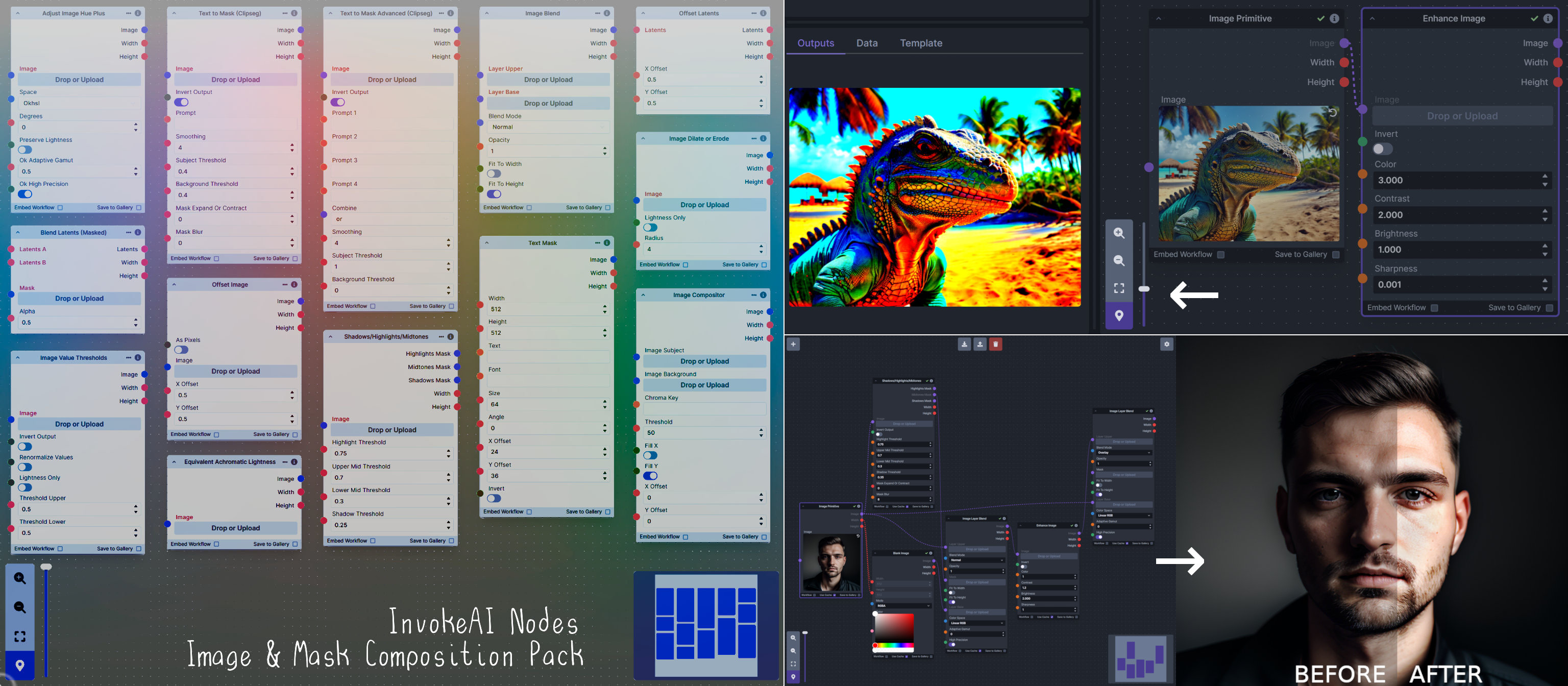

### Image and Mask Composition Pack

|

||||

@@ -208,45 +146,88 @@ This includes 15 Nodes:

|

||||

- *Text Mask (simple 2D)* - create and position a white on black (or black on white) line of text using any font locally available to Invoke.

|

||||

|

||||

**Node Link:** https://github.com/dwringer/composition-nodes

|

||||

|

||||

**Nodes and Output Examples:**

|

||||

|

||||

|

||||

</br><img src="https://raw.githubusercontent.com/dwringer/composition-nodes/main/composition_pack_overview.jpg" width="500" />

|

||||

|

||||

--------------------------------

|

||||

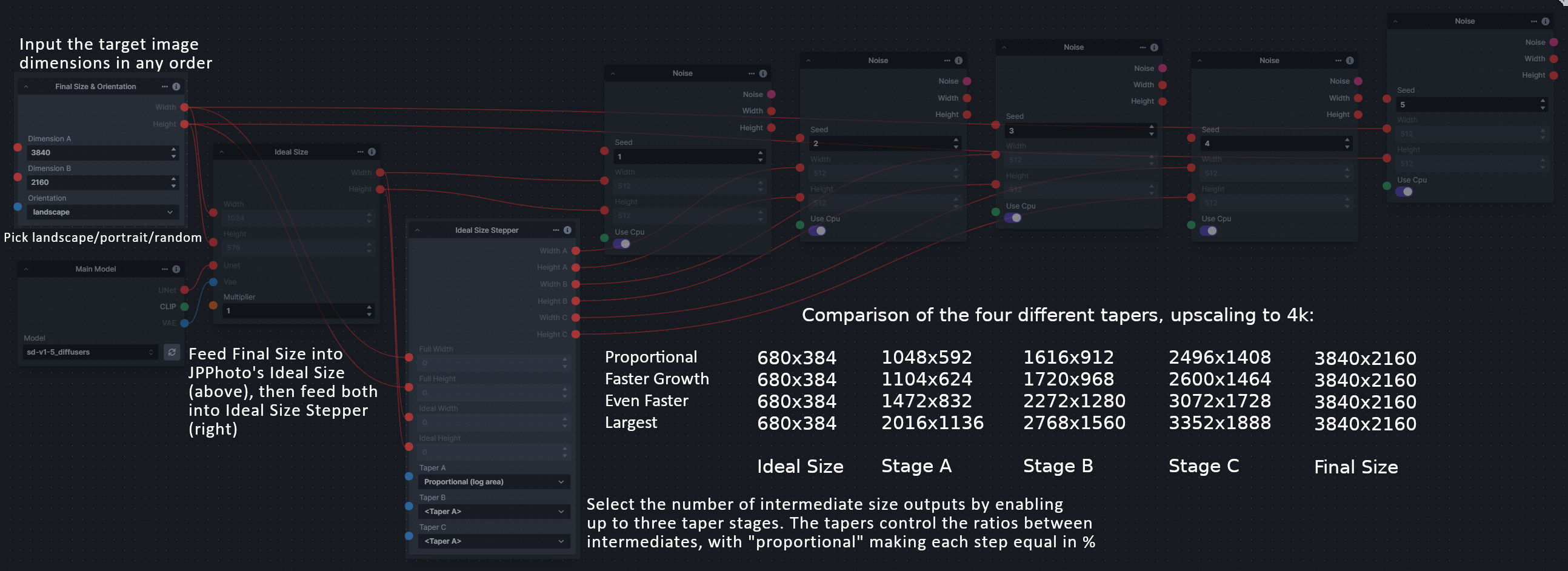

### Size Stepper Nodes

|

||||

### Image to Character Art Image Nodes

|

||||

|

||||

**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

|

||||

**Description:** Group of nodes to convert an input image into ascii/unicode art Image

|

||||

|

||||

A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.

|

||||

|

||||

**Node Link:** https://github.com/dwringer/size-stepper-nodes

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

|

||||

### Text font to Image

|

||||

|

||||

**Description:** text font to text image node for InvokeAI, download a font to use (or if in font cache uses it from there), the text is always resized to the image size, but can control that with padding, optional 2nd line

|

||||

|

||||

**Node Link:** https://github.com/mickr777/textfontimage

|

||||

**Node Link:** https://github.com/mickr777/imagetoasciiimage

|

||||

|

||||

**Output Examples**

|

||||

|

||||

|

||||

|

||||

Results after using the depth controlnet

|

||||

|

||||

|

||||

|

||||

|

||||

<img src="https://user-images.githubusercontent.com/115216705/271817646-8e061fcc-9a2c-4fa9-bcc7-c0f7b01e9056.png" width="300" /><img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/3c4990eb-2f42-46b9-90f9-0088b939dc6a" width="300" /></br>

|

||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/fee7f800-a4a8-41e2-a66b-c66e4343307e" width="300" />

|

||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/1d9c1003-a45f-45c2-aac7-46470bb89330" width="300" />

|

||||

|

||||

--------------------------------

|

||||

|

||||

### Image Picker

|

||||

|

||||

**Description:** This InvokeAI node takes in a collection of images and randomly chooses one. This can be useful when you have a number of poses to choose from for a ControlNet node, or a number of input images for another purpose.

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/image-picker-node

|

||||

|

||||

--------------------------------

|

||||

### Load Video Frame

|

||||

|

||||

**Description:** This is a video frame image provider + indexer/video creation nodes for hooking up to iterators and ranges and ControlNets and such for invokeAI node experimentation. Think animation + ControlNet outputs.

|

||||

|

||||

**Node Link:** https://github.com/helix4u/load_video_frame

|

||||

|

||||

**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

|

||||

|

||||

**Output Example:**

|

||||

|

||||

<img src="https://github.com/helix4u/load_video_frame/blob/main/testmp4_embed_converted.gif" width="500" />

|

||||

[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

|

||||

|

||||

--------------------------------

|

||||

### Make 3D

|

||||

|

||||

**Description:** Create compelling 3D stereo images from 2D originals.

|

||||

|

||||

**Node Link:** [https://gitlab.com/srcrr/shift3d/-/raw/main/make3d.py](https://gitlab.com/srcrr/shift3d)

|

||||

|

||||

**Example Node Graph:** https://gitlab.com/srcrr/shift3d/-/raw/main/example-workflow.json?ref_type=heads&inline=false

|

||||

|

||||

**Output Examples**

|

||||

|

||||

<img src="https://gitlab.com/srcrr/shift3d/-/raw/main/example-1.png" width="300" />

|

||||

<img src="https://gitlab.com/srcrr/shift3d/-/raw/main/example-2.png" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### Oobabooga

|

||||

|

||||

**Description:** asks a local LLM running in Oobabooga's Text-Generation-Webui to write a prompt based on the user input.

|

||||

|

||||

**Link:** https://github.com/sammyf/oobabooga-node

|

||||

|

||||

**Example:**

|

||||

|

||||

"describe a new mystical creature in its natural environment"

|

||||

|

||||

*can return*

|

||||

|

||||

"The mystical creature I am describing to you is called the "Glimmerwing". It is a majestic, iridescent being that inhabits the depths of the most enchanted forests and glimmering lakes. Its body is covered in shimmering scales that reflect every color of the rainbow, and it has delicate, translucent wings that sparkle like diamonds in the sunlight. The Glimmerwing's home is a crystal-clear lake, surrounded by towering trees with leaves that shimmer like jewels. In this serene environment, the Glimmerwing spends its days swimming gracefully through the water, chasing schools of glittering fish and playing with the gentle ripples of the lake's surface.

|

||||

As the sun sets, the Glimmerwing perches on a branch of one of the trees, spreading its wings to catch the last rays of light. The creature's scales glow softly, casting a rainbow of colors across the forest floor. The Glimmerwing sings a haunting melody, its voice echoing through the stillness of the night air. Its song is said to have the power to heal the sick and bring peace to troubled souls. Those who are lucky enough to hear the Glimmerwing's song are forever changed by its beauty and grace."

|

||||

|

||||

<img src="https://github.com/sammyf/oobabooga-node/assets/42468608/cecdd820-93dd-4c35-abbf-607e001fb2ed" width="300" />

|

||||

|

||||

**Requirement**

|

||||

|

||||

a Text-Generation-Webui instance (might work remotely too, but I never tried it) and obviously InvokeAI 3.x

|

||||

|

||||

**Note**

|

||||

|

||||

This node works best with SDXL models, especially as the style can be described independently of the LLM's output.

|

||||

|

||||

--------------------------------

|

||||

### Prompt Tools

|

||||

|

||||

**Description:** A set of InvokeAI nodes that add general prompt manipulation tools. These where written to accompany the PromptsFromFile node and other prompt generation nodes.

|

||||

**Description:** A set of InvokeAI nodes that add general prompt manipulation tools. These were written to accompany the PromptsFromFile node and other prompt generation nodes.

|

||||

|

||||

1. PromptJoin - Joins to prompts into one.

|

||||

2. PromptReplace - performs a search and replace on a prompt. With the option of using regex.

|

||||

@@ -263,51 +244,83 @@ See full docs here: https://github.com/skunkworxdark/Prompt-tools-nodes/edit/mai

|

||||

**Node Link:** https://github.com/skunkworxdark/Prompt-tools-nodes
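As a rough illustration of what the PromptJoin and PromptReplace descriptions above amount to, here is a minimal Python sketch. The function names and parameters are hypothetical and are not taken from the Prompt-tools-nodes source; the only behavior assumed is what the descriptions state (joining two prompts, and search-and-replace with an optional regex mode).

```python
import re


def prompt_join(first: str, second: str) -> str:
    """Join two prompts into one by simple concatenation."""
    return first + second


def prompt_replace(prompt: str, search: str, replace: str, use_regex: bool = False) -> str:
    """Search and replace on a prompt, treating `search` as a regex only when asked to."""
    if use_regex:
        return re.sub(search, replace, prompt)
    return prompt.replace(search, replace)


if __name__ == "__main__":
    joined = prompt_join("a red car, ", "oil painting")
    print(prompt_replace(joined, "red", "blue"))
    print(prompt_replace("photo 123 of 45", r"\d+", "N", use_regex=True))
```

Keeping the literal and regex modes separate means characters such as `.` are replaced literally unless regex mode is switched on.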

|

||||

|

||||

--------------------------------

|

||||

### Retroize

|

||||

|

||||

**Description:** Retroize is a collection of nodes for InvokeAI to "Retroize" images. Any image can be given a fresh coat of retro paint with these nodes, either from your gallery or from within the graph itself. It includes nodes to pixelize, quantize, palettize, and ditherize images; as well as to retrieve palettes from existing images.

|

||||

|

||||

**Node Link:** https://github.com/Ar7ific1al/invokeai-retroizeinode/

|

||||

|

||||

**Retroize Output Examples**

|

||||

|

||||

<img src="https://github.com/Ar7ific1al/InvokeAI_nodes_retroize/assets/2306586/de8b4fa6-324c-4c2d-b36c-297600c73974" width="500" />

|

||||

|

||||

--------------------------------

|

||||

### Size Stepper Nodes

|

||||

|

||||

**Description:** This is a set of nodes for calculating the necessary size increments for doing upscaling workflows. Use the *Final Size & Orientation* node to enter your full size dimensions and orientation (portrait/landscape/random), then plug that and your initial generation dimensions into the *Ideal Size Stepper* and get 1, 2, or 3 intermediate pairs of dimensions for upscaling. Note this does not output the initial size or full size dimensions: the 1, 2, or 3 outputs of this node are only the intermediate sizes.

|

||||

|

||||

A third node is included, *Random Switch (Integers)*, which is just a generic version of Final Size with no orientation selection.

|

||||

|

||||

**Node Link:** https://github.com/dwringer/size-stepper-nodes

|

||||

|

||||

**Example Usage:**

|

||||

</br><img src="https://raw.githubusercontent.com/dwringer/size-stepper-nodes/main/size_nodes_usage.jpg" width="500" />

|

||||

|

||||

--------------------------------

|

||||

### Text font to Image

|

||||

|

||||

**Description:** text font to text image node for InvokeAI, download a font to use (or if in font cache uses it from there), the text is always resized to the image size, but can control that with padding, optional 2nd line

|

||||

|

||||

**Node Link:** https://github.com/mickr777/textfontimage

|

||||

|

||||

**Output Examples**

|

||||

|

||||

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/c21b0af3-d9c6-4c16-9152-846a23effd36" width="300" />

|

||||

|

||||

Results after using the depth controlnet

|

||||

|

||||

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/915f1a53-968e-43eb-aa61-07cd8f1a733a" width="300" />

|

||||

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/821ef89e-8a60-44f5-b94e-471a9d8690cc" width="300" />

|

||||

<img src="https://github.com/mickr777/InvokeAI/assets/115216705/2befcb6d-49f4-4bfd-b5fc-1fee19274f89" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### Thresholding

|

||||

|

||||

**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/thresholding-node

|

||||

|

||||

**Examples**

|

||||

|

||||

Input:

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/c88ada13-fb3d-484c-a4fe-947b44712632" width="300" />

|

||||

|

||||

Highlights/Midtones/Shadows:

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/727021c1-36ff-4ec8-90c8-105e00de986d" width="300" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0b721bfc-f051-404e-b905-2f16b824ddfe" width="300" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/04c1297f-1c88-42b6-a7df-dd090b976286" width="300" />

|

||||

|

||||

Highlights/Midtones/Shadows (with LUT blur enabled):

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/19aa718a-70c1-4668-8169-d68f4bd13771" width="300" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0a440e43-697f-4d17-82ee-f287467df0a5" width="300" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0701fd0f-2ca7-4fe2-8613-2b52547bafce" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### XY Image to Grid and Images to Grids nodes

|

||||

|

||||

**Description:** Image to grid nodes and supporting tools.

|

||||

|

||||

1. "Images To Grids" node - Takes a collection of images and creates a grid(s) of images. If there are more images than the size of a single grid then mutilple grids will be created until it runs out of images.

|

||||

2. "XYImage To Grid" node - Converts a collection of XYImages into a labeled Grid of images. The XYImages collection has to be built using the supporoting nodes. See example node setups for more details.

|

||||

|

||||

1. "Images To Grids" node - Takes a collection of images and creates a grid(s) of images. If there are more images than the size of a single grid then multiple grids will be created until it runs out of images.

|

||||

2. "XYImage To Grid" node - Converts a collection of XYImages into a labeled Grid of images. The XYImages collection has to be built using the supporting nodes. See example node setups for more details.
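The overflow behavior described for "Images To Grids" (start a new grid once the current one is full) is essentially chunking. A minimal sketch, assuming a fixed number of columns and rows per grid; `split_into_grids` and its parameters are hypothetical names, and plain list items stand in for images:

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_grids(images: Sequence[T], columns: int, rows: int) -> List[List[List[T]]]:
    """Return a list of grids; each grid is a list of rows holding at most `columns` items."""
    if columns < 1 or rows < 1:
        raise ValueError("columns and rows must be positive")
    per_grid = columns * rows
    grids = []
    for start in range(0, len(images), per_grid):
        # Take the next grid's worth of images, then cut it into rows.
        chunk = list(images[start:start + per_grid])
        grids.append([chunk[i:i + columns] for i in range(0, len(chunk), columns)])
    return grids


if __name__ == "__main__":
    # Seven images in 2x2 grids: one full grid and one partial grid.
    print(split_into_grids(list(range(7)), columns=2, rows=2))
```

The last grid is simply left partially filled when the images run out.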

|

||||

|

||||

See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/README.md

|

||||

|

||||

**Node Link:** https://github.com/skunkworxdark/XYGrid_nodes

|

||||

|

||||

--------------------------------

|

||||

|

||||

### Image to Character Art Image Node's

|

||||

|

||||

**Description:** Group of nodes to convert an input image into ascii/unicode art Image

|

||||

|

||||

**Node Link:** https://github.com/mickr777/imagetoasciiimage

|

||||

|

||||

**Output Examples**

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/115216705/8e061fcc-9a2c-4fa9-bcc7-c0f7b01e9056" width="300" />

|

||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/3c4990eb-2f42-46b9-90f9-0088b939dc6a" width="300" /></br>

|

||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/fee7f800-a4a8-41e2-a66b-c66e4343307e" width="300" />

|

||||

<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/1d9c1003-a45f-45c2-aac7-46470bb89330" width="300" />

|

||||

|

||||

--------------------------------

|

||||

|

||||

### Grid to Gif

|

||||

|

||||

**Description:** One node that turns a grid image into an image colletion, one node that turns an image collection into a gif

|

||||

|

||||

**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

|

||||

|

||||

**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

|

||||

|

||||

**Output Examples**

|

||||

|

||||

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/input.png" width="300" />

|

||||

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/output.gif" width="300" />

|

||||

|

||||

--------------------------------

|

||||

|

||||

### Example Node Template

|

||||

|

||||

**Description:** This node allows you to do super cool things with InvokeAI.

|

||||

@@ -318,7 +331,7 @@ See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/READ

|

||||

|

||||

**Output Examples**

|

||||

|

||||

{: style="height:115px;width:240px"}

|

||||

</br><img src="https://invoke-ai.github.io/InvokeAI/assets/invoke_ai_banner.png" width="500" />

|

||||

|

||||

|

||||

## Disclaimer

|

||||

|

||||

@@ -152,6 +152,7 @@ async def import_model(
 ) -> ImportModelResponse:
     """Add a model using its local path, repo_id, or remote URL. Model characteristics will be probed and configured automatically"""

+    location = location.strip("\"' ")
     items_to_import = {location}
     prediction_types = {x.value: x for x in SchedulerPredictionType}
     logger = ApiDependencies.invoker.services.logger
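The added `location.strip("\"' ")` call removes any run of double quotes, single quotes, and spaces from both ends of the string, which helps when a path is pasted with surrounding quotes. A small sketch of that behavior; `normalize_location` is a hypothetical wrapper name used only for this example:

```python
def normalize_location(location: str) -> str:
    """Trim surrounding double quotes, single quotes, and spaces."""
    # str.strip with an argument treats it as a set of characters, not a prefix or suffix.
    return location.strip("\"' ")


if __name__ == "__main__":
    print(normalize_location('  "C:\\models\\foo.safetensors" '))
    print(normalize_location("'stabilityai/sdxl-turbo'"))
```

Characters inside the string are untouched, so a path containing an apostrophe or a space in the middle is preserved.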

@@ -30,8 +30,8 @@ class SocketIO:

     async def _handle_sub_queue(self, sid, data, *args, **kwargs):
         if "queue_id" in data:
-            self.__sio.enter_room(sid, data["queue_id"])
+            await self.__sio.enter_room(sid, data["queue_id"])

     async def _handle_unsub_queue(self, sid, data, *args, **kwargs):
         if "queue_id" in data:
-            self.__sio.enter_room(sid, data["queue_id"])
+            await self.__sio.enter_room(sid, data["queue_id"])
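The change adds `await` in front of `enter_room`, presumably because the method is a coroutine on the async Socket.IO server used here. The sketch below shows why the `await` matters, using a hypothetical `FakeAsyncServer` stand-in rather than the real python-socketio API: calling a coroutine function without awaiting it only creates a coroutine object, and the body never runs.

```python
import asyncio


class FakeAsyncServer:
    """Hypothetical stand-in for an async Socket.IO server; not the real API."""

    def __init__(self) -> None:
        self.rooms: dict[str, set[str]] = {}

    async def enter_room(self, sid: str, room: str) -> None:
        self.rooms.setdefault(room, set()).add(sid)

    async def leave_room(self, sid: str, room: str) -> None:
        self.rooms.get(room, set()).discard(sid)


async def main() -> dict[str, set[str]]:
    sio = FakeAsyncServer()

    # Without `await`, only a coroutine object is created; nothing is added to the room.
    pending = sio.enter_room("client-1", "default")
    assert sio.rooms == {}
    pending.close()  # discard it explicitly to avoid a "never awaited" warning

    # With `await`, the room membership is actually updated.
    await sio.enter_room("client-1", "default")
    await sio.enter_room("client-2", "default")
    await sio.leave_room("client-2", "default")
    return sio.rooms


if __name__ == "__main__":
    print(asyncio.run(main()))
```

Note that in the diff `_handle_unsub_queue` also calls `enter_room`; the sketch uses a `leave_room` counterpart for unsubscribing only as an illustration, and whether the original call is intentional is not stated in this commit.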

@@ -46,6 +46,8 @@ class FaceResultData(TypedDict):
     y_center: float
     mesh_width: int
     mesh_height: int
+    chunk_x_offset: int
+    chunk_y_offset: int


 class FaceResultDataWithId(FaceResultData):

@ -78,6 +80,48 @@ FONT_SIZE = 32

|

||||

FONT_STROKE_WIDTH = 4

|

||||

|

||||

|

||||

def coalesce_faces(face1: FaceResultData, face2: FaceResultData) -> FaceResultData:
    # Paste positions of each face image relative to the top-left-most chunk
    face1_x_offset = face1["chunk_x_offset"] - min(face1["chunk_x_offset"], face2["chunk_x_offset"])
    face2_x_offset = face2["chunk_x_offset"] - min(face1["chunk_x_offset"], face2["chunk_x_offset"])
    face1_y_offset = face1["chunk_y_offset"] - min(face1["chunk_y_offset"], face2["chunk_y_offset"])
    face2_y_offset = face2["chunk_y_offset"] - min(face1["chunk_y_offset"], face2["chunk_y_offset"])

    # Canvas large enough to hold both chunks at their relative positions
    new_im_width = (
        max(face1["image"].width, face2["image"].width)
        + max(face1["chunk_x_offset"], face2["chunk_x_offset"])
        - min(face1["chunk_x_offset"], face2["chunk_x_offset"])
    )
    new_im_height = (
        max(face1["image"].height, face2["image"].height)
        + max(face1["chunk_y_offset"], face2["chunk_y_offset"])
        - min(face1["chunk_y_offset"], face2["chunk_y_offset"])
    )
    pil_image = Image.new(mode=face1["image"].mode, size=(new_im_width, new_im_height))
    pil_image.paste(face1["image"], (face1_x_offset, face1_y_offset))
    pil_image.paste(face2["image"], (face2_x_offset, face2_y_offset))

    # Mask images are always from the origin
    new_mask_im_width = max(face1["mask"].width, face2["mask"].width)
    new_mask_im_height = max(face1["mask"].height, face2["mask"].height)
    mask_pil = create_white_image(new_mask_im_width, new_mask_im_height)
    black_image = create_black_image(face1["mask"].width, face1["mask"].height)
    mask_pil.paste(black_image, (0, 0), ImageOps.invert(face1["mask"]))
    black_image = create_black_image(face2["mask"].width, face2["mask"].height)
    mask_pil.paste(black_image, (0, 0), ImageOps.invert(face2["mask"]))

    new_face = FaceResultData(
        image=pil_image,
        mask=mask_pil,
        x_center=max(face1["x_center"], face2["x_center"]),
        y_center=max(face1["y_center"], face2["y_center"]),
        mesh_width=max(face1["mesh_width"], face2["mesh_width"]),
        mesh_height=max(face1["mesh_height"], face2["mesh_height"]),
        chunk_x_offset=max(face1["chunk_x_offset"], face2["chunk_x_offset"]),
        # Compare both faces here (the original compared face2 with itself)
        chunk_y_offset=max(face1["chunk_y_offset"], face2["chunk_y_offset"]),
    )
    return new_face
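The canvas arithmetic in `coalesce_faces` can be checked without Pillow. The sketch below mirrors the same formulas on plain integers; `Chunk` and `merged_canvas` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """Position and size of one cropped chunk within the full image."""
    x_offset: int
    y_offset: int
    width: int
    height: int


def merged_canvas(a: Chunk, b: Chunk):
    """Return (canvas_size, paste_pos_a, paste_pos_b) for two chunks.

    Mirrors the diff: the canvas is the larger chunk dimension plus the
    distance between the two offsets, and each chunk is pasted relative
    to the smaller offset on each axis.
    """
    min_x = min(a.x_offset, b.x_offset)
    min_y = min(a.y_offset, b.y_offset)
    width = max(a.width, b.width) + max(a.x_offset, b.x_offset) - min_x
    height = max(a.height, b.height) + max(a.y_offset, b.y_offset) - min_y
    paste_a = (a.x_offset - min_x, a.y_offset - min_y)
    paste_b = (b.x_offset - min_x, b.y_offset - min_y)
    return (width, height), paste_a, paste_b


if __name__ == "__main__":
    left = Chunk(x_offset=0, y_offset=0, width=512, height=512)
    right = Chunk(x_offset=256, y_offset=0, width=512, height=512)
    print(merged_canvas(left, right))
```

For two 512x512 chunks whose origins are 256 pixels apart horizontally, this gives a 768x512 canvas with the second chunk pasted at x = 256. When the chunks differ in size the formula can overestimate the union, which appears harmless here since it only pads the canvas.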


def prepare_faces_list(

|

||||

face_result_list: list[FaceResultData],

|

||||

) -> list[FaceResultDataWithId]:

|

||||

@ -91,7 +135,7 @@ def prepare_faces_list(

|

||||

should_add = True

|

||||

candidate_x_center = candidate["x_center"]

|

||||

candidate_y_center = candidate["y_center"]

|

||||

for face in deduped_faces:

|

||||

for idx, face in enumerate(deduped_faces):

|

||||

face_center_x = face["x_center"]

|

||||

face_center_y = face["y_center"]

|

||||

face_radius_w = face["mesh_width"] / 2

|

||||

@ -105,6 +149,7 @@ def prepare_faces_list(

|

||||

)

|

||||

|

||||

if p < 1: # Inside of the already-added face's radius

|

||||

deduped_faces[idx] = coalesce_faces(face, candidate)

|

||||

should_add = False

|

||||

break

|

||||

|

||||

@ -138,7 +183,6 @@ def generate_face_box_mask(

|

||||

chunk_x_offset: int = 0,

|

||||

chunk_y_offset: int = 0,

|

||||

draw_mesh: bool = True,

|

||||

check_bounds: bool = True,

|

||||

) -> list[FaceResultData]:

|

||||

result = []

|

||||

mask_pil = None

|

||||

@ -211,33 +255,20 @@ def generate_face_box_mask(

|

||||

mask_pil = create_white_image(w + chunk_x_offset, h + chunk_y_offset)

|

||||

mask_pil.paste(init_mask_pil, (chunk_x_offset, chunk_y_offset))

|

||||

|

||||

left_side = x_center - mesh_width

|

||||

right_side = x_center + mesh_width

|

||||

top_side = y_center - mesh_height

|

||||

bottom_side = y_center + mesh_height

|

||||

im_width, im_height = pil_image.size

|

||||

over_w = im_width * 0.1

|

||||

over_h = im_height * 0.1

|

||||

if not check_bounds or (

|

||||

(left_side >= -over_w)

|

||||

and (right_side < im_width + over_w)

|

||||

and (top_side >= -over_h)

|

||||

and (bottom_side < im_height + over_h)

|

||||

):

|

||||

x_center = float(x_center)

|

||||

y_center = float(y_center)

|

||||

face = FaceResultData(

|

||||

image=pil_image,

|

||||

mask=mask_pil or create_white_image(*pil_image.size),

|

||||

x_center=x_center + chunk_x_offset,

|

||||

y_center=y_center + chunk_y_offset,

|

||||

mesh_width=mesh_width,

|

||||

mesh_height=mesh_height,

|

||||

)

|

||||

x_center = float(x_center)

|

||||

y_center = float(y_center)

|

||||

face = FaceResultData(

|

||||

image=pil_image,

|

||||

mask=mask_pil or create_white_image(*pil_image.size),

|

||||

x_center=x_center + chunk_x_offset,

|

||||

y_center=y_center + chunk_y_offset,

|

||||

mesh_width=mesh_width,

|

||||

mesh_height=mesh_height,

|

||||

chunk_x_offset=chunk_x_offset,

|

||||

chunk_y_offset=chunk_y_offset,

|

||||

)

|

||||

|

||||

result.append(face)

|

||||

else:

|

||||

context.services.logger.info("FaceTools --> Face out of bounds, ignoring.")

|

||||

result.append(face)

|

||||

|

||||

return result

|

||||

|

||||

@ -346,7 +377,6 @@ def get_faces_list(

|

||||

chunk_x_offset=0,

|

||||

chunk_y_offset=0,

|

||||

draw_mesh=draw_mesh,

|

||||

check_bounds=False,

|

||||

)

|

||||

if should_chunk or len(result) == 0:

|

||||

context.services.logger.info("FaceTools --> Chunking image (chunk toggled on, or no face found in full image).")

|

||||

@ -360,24 +390,26 @@ def get_faces_list(

|

||||

if width > height:

|

||||

# Landscape - slice the image horizontally

|

||||

fx = 0.0

|

||||

steps = int(width * 2 / height)

|

||||

steps = int(width * 2 / height) + 1

|

||||

increment = (width - height) / (steps - 1)

|

||||

while fx <= (width - height):

|

||||

x = int(fx)

|

||||

image_chunks.append(image.crop((x, 0, x + height - 1, height - 1)))

|

||||

image_chunks.append(image.crop((x, 0, x + height, height)))

|

||||

x_offsets.append(x)

|

||||

y_offsets.append(0)

|

||||

fx += (width - height) / steps

|

||||

fx += increment

|

||||

context.services.logger.info(f"FaceTools --> Chunk starting at x = {x}")

|

||||

elif height > width:

|

||||

# Portrait - slice the image vertically

|

||||

fy = 0.0

|

||||

steps = int(height * 2 / width)

|

||||

steps = int(height * 2 / width) + 1

|

||||

increment = (height - width) / (steps - 1)

|

||||

while fy <= (height - width):

|

||||

y = int(fy)

|

||||

image_chunks.append(image.crop((0, y, width - 1, y + width - 1)))

|

||||

image_chunks.append(image.crop((0, y, width, y + width)))

|

||||

x_offsets.append(0)

|

||||

y_offsets.append(y)

|

||||

fy += (height - width) / steps

|

||||

fy += increment

|

||||

context.services.logger.info(f"FaceTools --> Chunk starting at y = {y}")
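The revised chunking computes a fixed `increment` so that the last square chunk ends at the far edge of the image. A minimal sketch of the offset calculation alone, assuming the long side is strictly larger than the short side as the `if`/`elif` branches require (`chunk_offsets` is a hypothetical helper, not a function in the commit):

```python
def chunk_offsets(long_side: int, short_side: int) -> list[int]:
    """Start offsets of square chunks slid along the long side of an image."""
    if long_side <= short_side:
        # The diff only chunks when one side is strictly longer.
        raise ValueError("long_side must be greater than short_side")
    steps = int(long_side * 2 / short_side) + 1
    increment = (long_side - short_side) / (steps - 1)
    offsets = []
    pos = 0.0
    while pos <= (long_side - short_side):
        offsets.append(int(pos))
        pos += increment
    return offsets


if __name__ == "__main__":
    print(chunk_offsets(1024, 512))
```

With a 1024x512 image the offsets come out as 0, 128, 256, 384 and 512, so the final chunk covers pixels 512 to 1023. The position is accumulated in floating point, so for sizes where `increment` is not exactly representable the last offset may be subject to rounding.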

|

||||

|

||||

for idx in range(len(image_chunks)):

|

||||

@ -404,7 +436,7 @@ def get_faces_list(

|

||||

return all_faces

|

||||

|

||||

|

||||

@invocation("face_off", title="FaceOff", tags=["image", "faceoff", "face", "mask"], category="image", version="1.0.1")

|

||||

@invocation("face_off", title="FaceOff", tags=["image", "faceoff", "face", "mask"], category="image", version="1.0.2")

|

||||

class FaceOffInvocation(BaseInvocation):

|

||||

"""Bound, extract, and mask a face from an image using MediaPipe detection"""

|

||||

|

||||

@ -498,7 +530,7 @@ class FaceOffInvocation(BaseInvocation):

|

||||

return output

|

||||

|

||||

|

||||

@invocation("face_mask_detection", title="FaceMask", tags=["image", "face", "mask"], category="image", version="1.0.1")

|

||||

@invocation("face_mask_detection", title="FaceMask", tags=["image", "face", "mask"], category="image", version="1.0.2")

|

||||

class FaceMaskInvocation(BaseInvocation):

|

||||

"""Face mask creation using mediapipe face detection"""

|

||||

|

||||

@ -616,7 +648,7 @@ class FaceMaskInvocation(BaseInvocation):

|

||||

|

||||

|

||||

@invocation(

|

||||

"face_identifier", title="FaceIdentifier", tags=["image", "face", "identifier"], category="image", version="1.0.1"

|

||||

"face_identifier", title="FaceIdentifier", tags=["image", "face", "identifier"], category="image", version="1.0.2"

|

||||

)

|

||||

class FaceIdentifierInvocation(BaseInvocation):

|

||||

"""Outputs an image with detected face IDs printed on each face. For use with other FaceTools."""

|

||||

|

||||

@ -44,28 +44,31 @@ class CoreMetadata(BaseModelExcludeNull):

|

||||

"""Core generation metadata for an image generated in InvokeAI."""

|

||||

|

||||

app_version: str = Field(default=__version__, description="The version of InvokeAI used to generate this image")

|

||||

generation_mode: str = Field(

|

||||

generation_mode: Optional[str] = Field(

|

||||

default=None,

|

||||

description="The generation mode that output this image",

|

||||

)

|

||||

created_by: Optional[str] = Field(description="The name of the creator of the image")

|

||||

positive_prompt: str = Field(description="The positive prompt parameter")

|

||||

negative_prompt: str = Field(description="The negative prompt parameter")

|

||||

width: int = Field(description="The width parameter")

|

||||

height: int = Field(description="The height parameter")

|

||||

seed: int = Field(description="The seed used for noise generation")

|

||||

rand_device: str = Field(description="The device used for random number generation")

|

||||

cfg_scale: float = Field(description="The classifier-free guidance scale parameter")

|

||||

steps: int = Field(description="The number of steps used for inference")

|

||||

scheduler: str = Field(description="The scheduler used for inference")

|

||||

positive_prompt: Optional[str] = Field(default=None, description="The positive prompt parameter")

|

||||

negative_prompt: Optional[str] = Field(default=None, description="The negative prompt parameter")

|

||||

width: Optional[int] = Field(default=None, description="The width parameter")

|

||||

height: Optional[int] = Field(default=None, description="The height parameter")

|

||||

seed: Optional[int] = Field(default=None, description="The seed used for noise generation")

|

||||

rand_device: Optional[str] = Field(default=None, description="The device used for random number generation")

|

||||

cfg_scale: Optional[float] = Field(default=None, description="The classifier-free guidance scale parameter")

|

||||

steps: Optional[int] = Field(default=None, description="The number of steps used for inference")

|

||||

scheduler: Optional[str] = Field(default=None, description="The scheduler used for inference")

|

||||

clip_skip: Optional[int] = Field(

|

||||

default=None,

|

||||

description="The number of skipped CLIP layers",

|

||||

)

|

||||

model: MainModelField = Field(description="The main model used for inference")

|

||||

controlnets: list[ControlField] = Field(description="The ControlNets used for inference")

|

||||

ipAdapters: list[IPAdapterMetadataField] = Field(description="The IP Adapters used for inference")

|

||||

t2iAdapters: list[T2IAdapterField] = Field(description="The IP Adapters used for inference")

|

||||

loras: list[LoRAMetadataField] = Field(description="The LoRAs used for inference")

|

||||

model: Optional[MainModelField] = Field(default=None, description="The main model used for inference")

|

||||

controlnets: Optional[list[ControlField]] = Field(default=None, description="The ControlNets used for inference")

|

||||

ipAdapters: Optional[list[IPAdapterMetadataField]] = Field(

|

||||

default=None, description="The IP Adapters used for inference"

|

||||

)

|

||||

t2iAdapters: Optional[list[T2IAdapterField]] = Field(default=None, description="The T2I Adapters used for inference")

|

||||

loras: Optional[list[LoRAMetadataField]] = Field(default=None, description="The LoRAs used for inference")

|

||||

vae: Optional[VAEModelField] = Field(

|

||||

default=None,

|

||||

description="The VAE used for decoding, if the main model's default was not used",

|

||||

@ -122,27 +125,34 @@ class MetadataAccumulatorOutput(BaseInvocationOutput):

|

||||

class MetadataAccumulatorInvocation(BaseInvocation):

|

||||

"""Outputs a Core Metadata Object"""

|

||||

|

||||

generation_mode: str = InputField(

|

||||

generation_mode: Optional[str] = InputField(

|

||||

default=None,

|

||||

description="The generation mode that output this image",

|

||||

)

|

||||

positive_prompt: str = InputField(description="The positive prompt parameter")

|

||||

negative_prompt: str = InputField(description="The negative prompt parameter")

|

||||

width: int = InputField(description="The width parameter")

|

||||

height: int = InputField(description="The height parameter")

|

||||

seed: int = InputField(description="The seed used for noise generation")

|

||||

rand_device: str = InputField(description="The device used for random number generation")

|

||||

cfg_scale: float = InputField(description="The classifier-free guidance scale parameter")

|

||||

steps: int = InputField(description="The number of steps used for inference")

|

||||

scheduler: str = InputField(description="The scheduler used for inference")

|

||||

clip_skip: Optional[int] = Field(

|

||||

positive_prompt: Optional[str] = InputField(default=None, description="The positive prompt parameter")

|

||||

negative_prompt: Optional[str] = InputField(default=None, description="The negative prompt parameter")

|

||||

width: Optional[int] = InputField(default=None, description="The width parameter")

|

||||

height: Optional[int] = InputField(default=None, description="The height parameter")

|

||||

seed: Optional[int] = InputField(default=None, description="The seed used for noise generation")

|

||||

rand_device: Optional[str] = InputField(default=None, description="The device used for random number generation")

|

||||

cfg_scale: Optional[float] = InputField(default=None, description="The classifier-free guidance scale parameter")

|

||||

steps: Optional[int] = InputField(default=None, description="The number of steps used for inference")

|

||||

scheduler: Optional[str] = InputField(default=None, description="The scheduler used for inference")

|

||||

clip_skip: Optional[int] = InputField(

|

||||

default=None,

|

||||

description="The number of skipped CLIP layers",

|

||||

)

|

||||

model: MainModelField = InputField(description="The main model used for inference")

|

||||

controlnets: list[ControlField] = InputField(description="The ControlNets used for inference")

|

||||

ipAdapters: list[IPAdapterMetadataField] = InputField(description="The IP Adapters used for inference")

|

||||

t2iAdapters: list[T2IAdapterField] = Field(description="The IP Adapters used for inference")

|

||||

loras: list[LoRAMetadataField] = InputField(description="The LoRAs used for inference")

|

||||

model: Optional[MainModelField] = InputField(default=None, description="The main model used for inference")

|

||||

controlnets: Optional[list[ControlField]] = InputField(

|

||||

default=None, description="The ControlNets used for inference"

|

||||

)

|

||||

ipAdapters: Optional[list[IPAdapterMetadataField]] = InputField(

|

||||

default=None, description="The IP Adapters used for inference"

|

||||

)

|

||||

t2iAdapters: Optional[list[T2IAdapterField]] = InputField(

|

||||

default=None, description="The T2I Adapters used for inference"

|

||||

)

|

||||

loras: Optional[list[LoRAMetadataField]] = InputField(default=None, description="The LoRAs used for inference")

|

||||

strength: Optional[float] = InputField(

|

||||

default=None,

|

||||

description="The strength used for latents-to-latents",

|

||||

@ -156,6 +166,20 @@ class MetadataAccumulatorInvocation(BaseInvocation):

|

||||

description="The VAE used for decoding, if the main model's default was not used",

|

||||

)

|

||||

|

||||

# High resolution fix metadata.

|

||||

hrf_width: Optional[int] = InputField(

|

||||

default=None,

|

||||

description="The high resolution fix height and width multiplier.",

|

||||

)

|

||||

hrf_height: Optional[int] = InputField(

|

||||

default=None,

|

||||

description="The high resolution fix height and width multiplier.",

|

||||

)

|

||||

hrf_strength: Optional[float] = InputField(

|

||||

default=None,

|

||||

description="The high resolution fix img2img strength used in the upscale pass.",

|

||||

)

|

||||

|

||||

# SDXL

|

||||

positive_style_prompt: Optional[str] = InputField(

|

||||

default=None,

|

||||

|

||||

@ -12,7 +12,7 @@ from .board_image_records_base import BoardImageRecordStorageBase

|

||||

class SqliteBoardImageRecordStorage(BoardImageRecordStorageBase):

|

||||

_conn: sqlite3.Connection

|

||||

_cursor: sqlite3.Cursor

|

||||

_lock: threading.Lock

|

||||

_lock: threading.RLock

|

||||

|

||||

def __init__(self, db: SqliteDatabase) -> None:

|

||||

super().__init__()

|

||||

|

||||

@ -20,7 +20,7 @@ from .board_records_common import (

|

||||

class SqliteBoardRecordStorage(BoardRecordStorageBase):

|

||||

_conn: sqlite3.Connection

|

||||

_cursor: sqlite3.Cursor

|

||||

_lock: threading.Lock

|

||||

_lock: threading.RLock

|

||||

|

||||

def __init__(self, db: SqliteDatabase) -> None:

|

||||

super().__init__()

|

||||

|

||||

@ -24,7 +24,7 @@ from .image_records_common import (

|

||||

class SqliteImageRecordStorage(ImageRecordStorageBase):

|

||||

_conn: sqlite3.Connection

|

||||

_cursor: sqlite3.Cursor

|

||||

_lock: threading.Lock

|

||||

_lock: threading.RLock

|

||||

|

||||

def __init__(self, db: SqliteDatabase) -> None:

|

||||

super().__init__()

|

||||

|

||||

@ -17,7 +17,7 @@ class SqliteItemStorage(ItemStorageABC, Generic[T]):

|

||||

_conn: sqlite3.Connection

|

||||

_cursor: sqlite3.Cursor

|

||||

_id_field: str

|

||||

_lock: threading.Lock

|

||||

_lock: threading.RLock

|

||||

|

||||

def __init__(self, db: SqliteDatabase, table_name: str, id_field: str = "id"):

|

||||

super().__init__()

|

||||

|

||||

@ -37,7 +37,7 @@ class SqliteSessionQueue(SessionQueueBase):

|

||||

__invoker: Invoker

|

||||

__conn: sqlite3.Connection

|

||||

__cursor: sqlite3.Cursor

|

||||

__lock: threading.Lock

|

||||

__lock: threading.RLock

|

||||

|

||||

def start(self, invoker: Invoker) -> None:

|

||||

self.__invoker = invoker

|

||||

|

||||

@ -9,7 +9,7 @@ sqlite_memory = ":memory:"

|

||||

|

||||

class SqliteDatabase:

|

||||

conn: sqlite3.Connection

|

||||

lock: threading.Lock

|

||||

lock: threading.RLock

|

||||

_logger: Logger

|

||||

_config: InvokeAIAppConfig

|

||||

|

||||

@ -27,7 +27,7 @@ class SqliteDatabase:

|

||||

self._logger.info(f"Using database at {location}")

|

||||

|

||||

self.conn = sqlite3.connect(location, check_same_thread=False)

|

||||

self.lock = threading.Lock()

|

||||

self.lock = threading.RLock()

|

||||

self.conn.row_factory = sqlite3.Row

|

||||

|

||||

if self._config.log_sql:

|

||||

|

||||
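The hunks above swap `threading.Lock` for `threading.RLock` across the SQLite storage classes. The diff does not state the motivation; one plausible reason is that a method holding the shared lock may call another method that takes the same lock. The sketch below illustrates that pattern with a hypothetical `Store` class that is not part of InvokeAI.

```python
import threading


class Store:
    """Toy key-value store guarded by a reentrant lock."""

    def __init__(self) -> None:
        self._lock = threading.RLock()
        self._items: dict[str, int] = {}

    def set(self, key: str, value: int) -> None:
        with self._lock:
            self._items[key] = value

    def set_many(self, pairs: dict[str, int]) -> None:
        # Holds the lock and then calls set(), which acquires it again.
        # An RLock allows this in the same thread; a plain Lock would block forever.
        with self._lock:
            for key, value in pairs.items():
                self.set(key, value)

    def snapshot(self) -> dict[str, int]:
        with self._lock:
            return dict(self._items)


if __name__ == "__main__":
    store = Store()
    store.set_many({"a": 1, "b": 2})
    print(store.snapshot())
```

An `RLock` tracks its owning thread and a recursion count, so it is released for other threads only after the outermost `with` block exits.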

@ -236,9 +236,16 @@ class ModelInstall(object):

|

||||

if not models_installed:

|

||||

models_installed = dict()

|

||||

|

||||

model_path_id_or_url = str(model_path_id_or_url).strip("\"' ")

|

||||

|

||||

# A little hack to allow nested routines to retrieve info on the requested ID

|

||||

self.current_id = model_path_id_or_url

|

||||

path = Path(model_path_id_or_url)

|

||||

|

||||

# fix relative paths

|

||||

if path.exists() and not path.is_absolute():

|

||||

path = path.absolute() # make relative to current WD

|

||||

|

||||

# checkpoint file, or similar

|

||||

if path.is_file():

|

||||

models_installed.update({str(path): self._install_path(path)})

|

||||

|

||||

@ -55,8 +55,10 @@ class MemorySnapshot:

|

||||

|

||||

try:

|

||||

malloc_info = LibcUtil().mallinfo2()

|

||||

except OSError:

|

||||

# This is expected in environments that do not have the 'libc.so.6' shared library.

|

||||

except (OSError, AttributeError):

|

||||

# OSError: This is expected in environments that do not have the 'libc.so.6' shared library.

|

||||

# AttributeError: This is expected in environments that have `libc.so.6` but do not provide `mallinfo2` (e.g. glibc < 2.33)

|

||||

# TODO: Does `mallinfo` work?

|

||||

malloc_info = None

|

||||

|

||||

return cls(process_ram, vram, malloc_info)
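The widened `except` clause covers two distinct failures: the shared library cannot be loaded (`OSError`), or it loads but lacks the symbol (`AttributeError`). A minimal `ctypes` sketch of that pattern follows; `find_libc_symbol` is a hypothetical helper, and InvokeAI's `LibcUtil` wrapper is not reproduced here.

```python
import ctypes


def find_libc_symbol(name: str, lib_name: str = "libc.so.6"):
    """Return the named function from a shared library, or None if unavailable.

    ctypes.CDLL raises OSError when the library cannot be loaded, and
    attribute access on the loaded library raises AttributeError when the
    symbol is missing.
    """
    try:
        lib = ctypes.CDLL(lib_name)
        return getattr(lib, name)
    except (OSError, AttributeError):
        return None


if __name__ == "__main__":
    fn = find_libc_symbol("mallinfo2")
    print("mallinfo2 available" if fn is not None else "mallinfo2 unavailable")
```

Returning `None` in both cases lets the caller skip the malloc statistics, as the snapshot code does by setting `malloc_info = None`.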

|

||||

|

||||

@ -986,6 +986,8 @@ class ModelManager(object):

|

||||

|

||||

for model_path in models_dir.iterdir():

|

||||

if model_path not in loaded_files: # TODO: check

|

||||

if model_path.name.startswith("."):

|

||||

continue

|

||||

model_name = model_path.name if model_path.is_dir() else model_path.stem

|

||||

model_key = self.create_key(model_name, cur_base_model, cur_model_type)

|

||||

|

||||

|

||||
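The added `startswith(".")` check makes the models-directory scan skip hidden entries such as `.DS_Store`. A small standalone sketch of the same filter and naming rule, where `visible_entries` and `model_name_for` are hypothetical helpers:

```python
from pathlib import Path


def visible_entries(models_dir: Path) -> list[Path]:
    """Directory entries whose names do not start with a dot, sorted by path."""
    return sorted(p for p in models_dir.iterdir() if not p.name.startswith("."))


def model_name_for(path: Path) -> str:
    """Directories keep their full name; files drop their extension."""
    return path.name if path.is_dir() else path.stem


if __name__ == "__main__":
    for entry in visible_entries(Path(".")):
        print(model_name_for(entry))
```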

@ -54,42 +54,42 @@

|

||||

]

|

||||

},

|

||||

"dependencies": {

|

||||

"@chakra-ui/anatomy": "^2.2.0",

|

||||

"@chakra-ui/icons": "^2.1.0",

|

||||

"@chakra-ui/react": "^2.8.0",

|

||||

"@chakra-ui/anatomy": "^2.2.1",

|

||||

"@chakra-ui/icons": "^2.1.1",

|

||||

"@chakra-ui/react": "^2.8.1",

|

||||

"@chakra-ui/styled-system": "^2.9.1",

|

||||

"@chakra-ui/theme-tools": "^2.1.0",

|

||||

"@chakra-ui/theme-tools": "^2.1.1",

|

||||

"@dagrejs/graphlib": "^2.1.13",

|

||||

"@dnd-kit/core": "^6.0.8",

|

||||

"@dnd-kit/modifiers": "^6.0.1",

|

||||

"@dnd-kit/utilities": "^3.2.1",

|

||||

"@emotion/react": "^11.11.1",

|

||||

"@emotion/styled": "^11.11.0",

|

||||

"@floating-ui/react-dom": "^2.0.1",

|

||||

"@fontsource-variable/inter": "^5.0.8",

|

||||

"@fontsource/inter": "^5.0.8",

|

||||

"@floating-ui/react-dom": "^2.0.2",

|

||||

"@fontsource-variable/inter": "^5.0.13",

|

||||

"@fontsource/inter": "^5.0.13",

|

||||

"@mantine/core": "^6.0.19",

|

||||

"@mantine/form": "^6.0.19",

|

||||

"@mantine/hooks": "^6.0.19",

|

||||

"@nanostores/react": "^0.7.1",

|

||||

"@reduxjs/toolkit": "^1.9.5",

|

||||

"@roarr/browser-log-writer": "^1.1.5",

|

||||

"@reduxjs/toolkit": "^1.9.7",

|

||||

"@roarr/browser-log-writer": "^1.3.0",

|

||||

"@stevebel/png": "^1.5.1",

|

||||

"compare-versions": "^6.1.0",

|

||||

"dateformat": "^5.0.3",

|

||||

"formik": "^2.4.3",

|

||||

"framer-motion": "^10.16.1",

|

||||

"formik": "^2.4.5",

|

||||

"framer-motion": "^10.16.4",

|

||||

"fuse.js": "^6.6.2",

|

||||

"i18next": "^23.4.4",

|

||||

"i18next": "^23.5.1",

|

||||

"i18next-browser-languagedetector": "^7.0.2",

|

||||

"i18next-http-backend": "^2.2.1",

|

||||

"konva": "^9.2.0",

|

||||

"i18next-http-backend": "^2.2.2",

|

||||

"konva": "^9.2.2",

|

||||

"lodash-es": "^4.17.21",

|

||||

"nanostores": "^0.9.2",

|

||||

"new-github-issue-url": "^1.0.0",

|

||||

"openapi-fetch": "^0.7.4",

|

||||

"overlayscrollbars": "^2.2.0",

|

||||

"overlayscrollbars-react": "^0.5.0",

|

||||

"openapi-fetch": "^0.7.10",

|

||||

"overlayscrollbars": "^2.3.2",

|

||||

"overlayscrollbars-react": "^0.5.2",

|

||||

"patch-package": "^8.0.0",

|

||||

"query-string": "^8.1.0",

|

||||

"react": "^18.2.0",

|

||||

@ -98,25 +98,25 @@

|

||||

"react-dropzone": "^14.2.3",

|

||||

"react-error-boundary": "^4.0.11",

|

||||

"react-hotkeys-hook": "4.4.1",

|

||||

"react-i18next": "^13.1.2",

|

||||

"react-icons": "^4.10.1",

|

||||

"react-i18next": "^13.3.0",

|

||||

"react-icons": "^4.11.0",

|

||||

"react-konva": "^18.2.10",

|

||||

"react-redux": "^8.1.2",

|

||||

"react-redux": "^8.1.3",

|

||||

"react-resizable-panels": "^0.0.55",

|

||||

"react-use": "^17.4.0",

|

||||

"react-virtuoso": "^4.5.0",

|

||||

"react-zoom-pan-pinch": "^3.0.8",

|

||||

"reactflow": "^11.8.3",

|

||||

"react-virtuoso": "^4.6.1",

|

||||

"react-zoom-pan-pinch": "^3.2.0",

|

||||

"reactflow": "^11.9.3",

|

||||

"redux-dynamic-middlewares": "^2.2.0",

|

||||

"redux-remember": "^4.0.1",

|

||||

"redux-remember": "^4.0.4",

|

||||

"roarr": "^7.15.1",

|

||||

"serialize-error": "^11.0.1",

|

||||

"serialize-error": "^11.0.2",

|

||||

"socket.io-client": "^4.7.2",

|

||||

"type-fest": "^4.2.0",

|

||||

"type-fest": "^4.4.0",

|

||||

"use-debounce": "^9.0.4",

|

||||

"use-image": "^1.1.1",

|

||||

"uuid": "^9.0.0",

|

||||

"zod": "^3.22.2",

|

||||

"uuid": "^9.0.1",

|

||||

"zod": "^3.22.4",

|

||||

"zod-validation-error": "^1.5.0"

|

||||

},

|

||||

"peerDependencies": {

|

||||

@ -129,40 +129,40 @@

|

||||

"devDependencies": {

|

||||

"@chakra-ui/cli": "^2.4.1",

|

||||

"@types/dateformat": "^5.0.0",

|

||||

"@types/lodash-es": "^4.14.194",

|

||||

"@types/node": "^20.5.1",

|

||||

"@types/react": "^18.2.20",

|

||||

"@types/react-dom": "^18.2.6",

|

||||

"@types/react-redux": "^7.1.25",

|

||||

"@types/react-transition-group": "^4.4.6",

|

||||

"@types/uuid": "^9.0.2",

|

||||

"@typescript-eslint/eslint-plugin": "^6.4.1",

|

||||

"@typescript-eslint/parser": "^6.4.1",

|

||||

"@vitejs/plugin-react-swc": "^3.3.2",

|

||||

"axios": "^1.4.0",

|

||||

"@types/lodash-es": "^4.17.9",

|

||||

"@types/node": "^20.8.6",

|

||||

"@types/react": "^18.2.28",

|

||||

"@types/react-dom": "^18.2.13",

|

||||

"@types/react-redux": "^7.1.27",

|

||||

"@types/react-transition-group": "^4.4.7",

|

||||

"@types/uuid": "^9.0.5",

|

||||

"@typescript-eslint/eslint-plugin": "^6.7.5",

|

||||

"@typescript-eslint/parser": "^6.7.5",

|

||||

"@vitejs/plugin-react-swc": "^3.4.0",

|

||||

"axios": "^1.5.1",

|

||||

"babel-plugin-transform-imports": "^2.0.0",

|

||||

"concurrently": "^8.2.0",

|

||||

"eslint": "^8.47.0",

|

||||

"concurrently": "^8.2.1",

|

||||

"eslint": "^8.51.0",

|

||||

"eslint-config-prettier": "^9.0.0",

|

||||

"eslint-plugin-prettier": "^5.0.0",

|

||||

"eslint-plugin-prettier": "^5.0.1",

|

||||

"eslint-plugin-react": "^7.33.2",

|

||||

"eslint-plugin-react-hooks": "^4.6.0",

|

||||

"form-data": "^4.0.0",

|

||||

"husky": "^8.0.3",

|

||||

"lint-staged": "^14.0.1",

|

||||

"lint-staged": "^15.0.1",

|

||||

"madge": "^6.1.0",

|

||||

"openapi-types": "^12.1.3",

|

||||

"openapi-typescript": "^6.5.2",

|

||||

"openapi-typescript": "^6.7.0",

|

||||

"postinstall-postinstall": "^2.1.0",

|

||||

"prettier": "^3.0.2",

|

||||

"prettier": "^3.0.3",

|

||||

"rollup-plugin-visualizer": "^5.9.2",

|

||||

"ts-toolbelt": "^9.6.0",

|

||||

"typescript": "^5.2.2",

|

||||

"vite": "^4.4.9",

|

||||

"vite": "^4.4.11",

|

||||

"vite-plugin-css-injected-by-js": "^3.3.0",

|

||||

"vite-plugin-dts": "^3.5.2",

|

||||

"vite-plugin-dts": "^3.6.0",

|

||||

"vite-plugin-eslint": "^1.8.1",

|

||||

"vite-tsconfig-paths": "^4.2.0",

|

||||

"vite-tsconfig-paths": "^4.2.1",

|

||||

"yarn": "^1.22.19"

|

||||

}

|

||||

}

|

||||

|

||||

@ -137,9 +137,9 @@

|

||||

"controlnet": {

|

||||

"controlAdapter_one": "Control Adapter",

|

||||

"controlAdapter_other": "Control Adapters",

|

||||

"controlnet": "$t(controlnet.controlAdapter) #{{number}} ($t(common.controlNet))",

|

||||

"ip_adapter": "$t(controlnet.controlAdapter) #{{number}} ($t(common.ipAdapter))",

|

||||

"t2i_adapter": "$t(controlnet.controlAdapter) #{{number}} ($t(common.t2iAdapter))",

|

||||

"controlnet": "$t(controlnet.controlAdapter_one) #{{number}} ($t(common.controlNet))",

|

||||

"ip_adapter": "$t(controlnet.controlAdapter_one) #{{number}} ($t(common.ipAdapter))",

|

||||

"t2i_adapter": "$t(controlnet.controlAdapter_one) #{{number}} ($t(common.t2iAdapter))",

|

||||

"addControlNet": "Add $t(common.controlNet)",

|

||||

"addIPAdapter": "Add $t(common.ipAdapter)",

|

||||

"addT2IAdapter": "Add $t(common.t2iAdapter)",

|

||||

@ -559,8 +559,10 @@

|

||||

"negativePrompt": "Negative Prompt",

|

||||

"noImageDetails": "No image details found",

|

||||

"noMetaData": "No metadata found",

|

||||

"noRecallParameters": "No parameters to recall found",

|

||||

"perlin": "Perlin Noise",

|

||||

"positivePrompt": "Positive Prompt",

|

||||

"recallParameters": "Recall Parameters",

|

||||

"scheduler": "Scheduler",

|

||||

"seamless": "Seamless",

|

||||

"seed": "Seed",

|

||||

@ -1113,6 +1115,7 @@

|

||||

"showProgressInViewer": "Show Progress Images in Viewer",

|

||||

"ui": "User Interface",

|

||||

"useSlidersForAll": "Use Sliders For All Options",

|

||||

"clearIntermediatesDisabled": "Queue must be empty to clear intermediates",

|

||||

"clearIntermediatesDesc1": "Clearing intermediates will reset your Canvas and ControlNet state.",

|

||||

"clearIntermediatesDesc2": "Intermediate images are byproducts of generation, different from the result images in the gallery. Clearing intermediates will free disk space.",

|

||||

"clearIntermediatesDesc3": "Your gallery images will not be deleted.",

|

||||

|

||||

@ -87,7 +87,9 @@

|

||||

"learnMore": "Per saperne di più",

|

||||

"ipAdapter": "Adattatore IP",

|

||||

"t2iAdapter": "Adattatore T2I",

|

||||

"controlAdapter": "Adattatore di Controllo"

|

||||

"controlAdapter": "Adattatore di Controllo",

|

||||

"controlNet": "ControlNet",

|

||||

"auto": "Automatico"

|

||||

},

|

||||

"gallery": {

|

||||

"generations": "Generazioni",

|

||||

@ -115,7 +117,10 @@

|

||||

"currentlyInUse": "Questa immagine è attualmente utilizzata nelle seguenti funzionalità:",