Added the Ideal Size node

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [X] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because: It's a community node addition

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

Added a reference to my community node, which calculates the ideal size for the initial latent generation so that duplication is avoided. This is the logic that was present in 2.3.5's first pass of high-res optimization.
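
To illustrate the kind of calculation described above, here is a minimal sketch. It is not the node's actual code: the function name `ideal_size`, the 512-pixel base dimension, the half-dimension floor, and the rounding to a multiple of 8 are all assumptions made for illustration.

```python
import math


def ideal_size(width, height, model_dimension=512, multiple_of=8):
    """Pick an initial size with the target's aspect ratio and roughly the
    area the model was trained on (hypothetical helper, assumed defaults)."""
    aspect = width / height
    model_area = model_dimension * model_dimension
    # Assumed lower bound so that extreme aspect ratios do not collapse one side.
    min_dimension = model_dimension // 2

    if aspect > 1.0:
        # Landscape: derive the height first, then scale the width by the aspect.
        init_height = max(min_dimension, math.sqrt(model_area / aspect))
        init_width = init_height * aspect
    else:
        # Portrait or square: derive the width first.
        init_width = max(min_dimension, math.sqrt(model_area * aspect))
        init_height = init_width / aspect

    def trim(value):
        # Round down to the nearest multiple (assumed latent-friendly step).
        return int(value) // multiple_of * multiple_of

    return trim(init_width), trim(init_height)
```

For a square target such as 1024x1024 this sketch returns the base dimension on both sides, and the result would then be upscaled in a second pass.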

## Related Tickets & Documents

<!--

For pull requests that relate or close an issue, please include them

below.

For example, having the text "closes #1234" would connect the current pull request to issue 1234. When we merge the pull request, GitHub will automatically close the issue.

-->

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

<!--

Please provide steps on how to test changes, any hardware or

software specifications as well as any other pertinent information.

-->

## Added/updated tests?

- [ ] Yes

- [X] No : This is a documentation change that references my community node.

## [optional] Are there any post deployment tasks we need to perform?

Add Face Mask to communityNodes.md

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [x] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

Add Face Mask to the communityNodes.md list.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [x] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because: I just updated the docs to help lead new users to installs a little more easily

## Have you updated relevant documentation?

- [x] Yes

- [ ] No

## Description

Some minor docs tweaks

## Related Tickets & Documents


- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings


## Added/updated tests?

- [ ] Yes

- [x] No : _please replace this line with details on why tests have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [x] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Description

Revised boards logic and UI

## Related Tickets & Documents


- Related Issue # Discord conversations

- Closes #

## QA Instructions, Screenshots, Recordings


## Added/updated tests?

- [ ] Yes

- [x] No : n/a

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

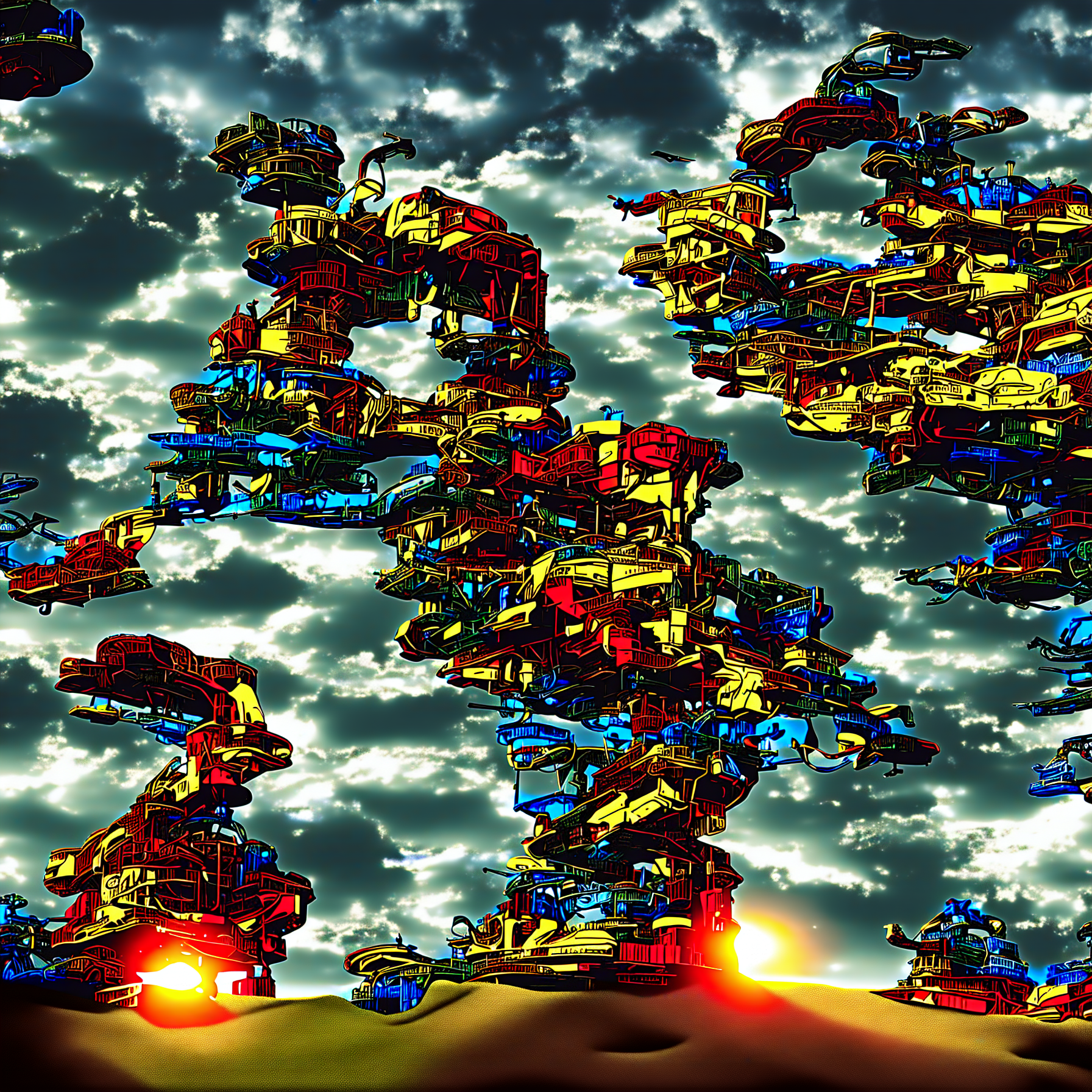

## Description

On MPS, generating images at resolutions above ~1536x1536 results in "fried" output. The main problem is that such resolutions produce tensors larger than 4 GB. It looks like some MPS internals can't handle this properly, so to mitigate it I break the attention calculation into chunks.
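
The idea of breaking the attention calculation into chunks can be sketched as follows. This is a pure-Python illustration, not the code in this PR: the function names and the `chunk_size` parameter are hypothetical, and a real implementation would operate on tensors rather than lists.

```python
import math


def attention(q, k, v):
    """Plain softmax attention over lists of row vectors."""
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for q_row in q:
        # Scores of this query row against every key row.
        scores = [scale * sum(a * b for a, b in zip(q_row, k_row)) for k_row in k]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([
            sum(w * v_row[j] for w, v_row in zip(weights, v)) / total
            for j in range(len(v[0]))
        ])
    return out


def chunked_attention(q, k, v, chunk_size):
    """Same result as attention(), but only chunk_size query rows are
    processed per step, so the score matrix is chunk_size x len(k)
    instead of len(q) x len(k)."""
    out = []
    for start in range(0, len(q), chunk_size):
        out.extend(attention(q[start:start + chunk_size], k, v))
    return out
```

Because the softmax is computed per query row, splitting along the query dimension does not change the output; it only bounds the size of the intermediate score matrix held at any one time.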

## QA Instructions, Screenshots, Recordings

Example of bad output:

## What type of PR is this? (check all applicable)

- [x] Documentation Update

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Description

This is a WIP to collect documentation enhancements and other polish prior to the final 3.0.0 release. Minor bug fixes may go in here if they are non-controversial. It should be merged into main prior to the final release.