This is a fork of CompVis/stable-diffusion, the open source text-to-image generator. It streamlines the image generation process and adds various new features and options. It runs on Windows, macOS and Linux machines with GPU cards that have as little as 4 GB of RAM, and it offers both a polished Web interface (see below) and an easy-to-use command-line interface.

Quick links: [How to Install] [Discord Server] [Documentation and Tutorials] [Code and Downloads] [Bug Reports] [Discussion, Ideas & Q&A]

Note: InvokeAI is rapidly evolving. Please use the Issues tab to report bugs and make feature requests. Be sure to use the provided templates. They will help us diagnose issues faster.

Getting Started with InvokeAI

For full installation and upgrade instructions, please see: InvokeAI Installation Overview

- Go to the bottom of the Latest Release Page.

- Download the .zip file for your OS (Windows/macOS/Linux).

- Unzip the file.

- If you are on Windows, double-click on the install.bat script. On macOS, open a Terminal window, drag the file install.sh from Finder into the Terminal, and press return. On Linux, run install.sh.

- Wait a while, until it is done.

- The folder where you ran the installer from will now be filled with lots of files. If you are on Windows, double-click on the

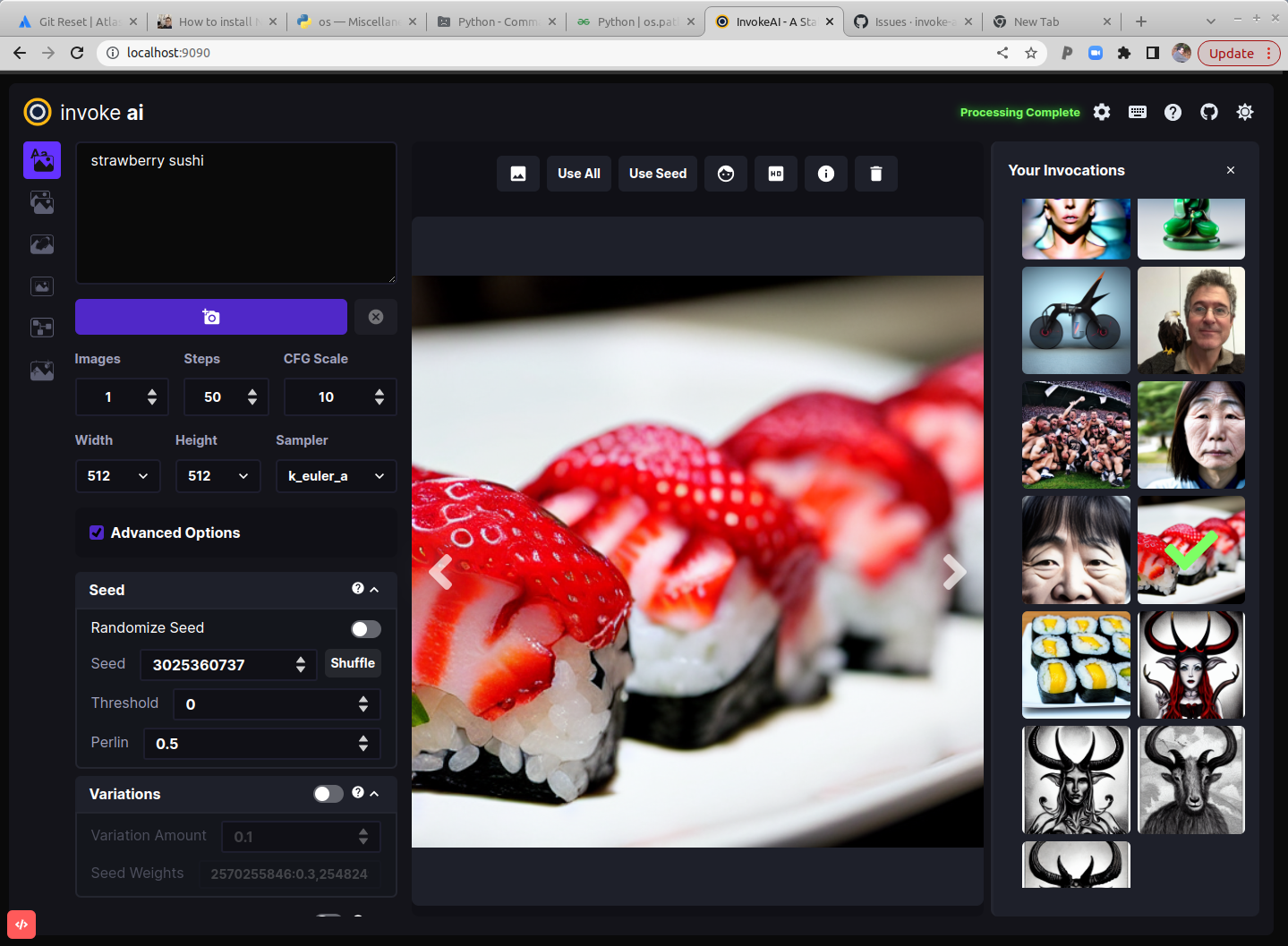

invoke.batfile. On macOS, open a Terminal window, draginvoke.shfrom the folder into the Terminal, and press return. On Linux, runinvoke.sh - Press 2 to open the "browser-based UI", press enter/return, wait a minute or two for Stable Diffusion to start up, then open your browser and go to http://localhost:9090.

- Type

banana sushiin the box on the top left and clickInvoke:
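If the browser reports a connection error at first, the server may simply still be loading. The following is a minimal sketch, not part of InvokeAI, that polls the address from the steps above until it answers. The helper name wait_for_server and the timeout values are illustrative assumptions.

```python
import time
import urllib.error
import urllib.request


def wait_for_server(url: str, timeout: float = 180.0, interval: float = 2.0) -> bool:
    """Poll `url` until it answers an HTTP request or `timeout` seconds pass.

    Hypothetical helper for illustration; it is not an InvokeAI API.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=interval):
                return True  # a successful response means the server is up
        except urllib.error.HTTPError:
            return True  # the server answered, even if with an error status
        except (urllib.error.URLError, OSError):
            time.sleep(interval)  # nothing is listening yet; wait and retry
    return False


# Example call (address taken from the steps above):
#   wait_for_server("http://localhost:9090")
```

The function returns True as soon as anything responds on the port, so it only confirms that the server is listening, not that a model has finished loading.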

Table of Contents

- Installation

- Hardware Requirements

- Features

- Latest Changes

- Troubleshooting

- Contributing

- Contributors

- Support

- Further Reading

Installation

InvokeAI is supported on Linux, Windows and macOS. For full installation and upgrade instructions, please see: InvokeAI Installation Overview

Hardware Requirements

InvokeAI is supported across Linux, Windows and macOS. Linux users can use either an Nvidia-based card (with CUDA support) or an AMD card (using the ROCm driver).

System

You will need one of the following:

- An NVIDIA-based graphics card with 4 GB or more of VRAM.

- An Apple computer with an M1 chip.

We do not recommend the GTX 1650 or 1660 series video cards. They are unable to run in half-precision mode and do not have sufficient VRAM to render 512x512 images.

Memory

- At least 12 GB of main memory (RAM).

Disk

- At least 12 GB of free disk space for the machine learning model, Python, and all its dependencies.
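
Before installing, the disk requirement can be checked with a few lines of standard-library Python. This is a rough sketch rather than an official InvokeAI tool: the function names are made up for illustration, and it assumes the "12 GB" above can be treated as 12 GiB.

```python
import shutil

GIB = 1024 ** 3
REQUIRED_DISK_GB = 12  # taken from the Disk requirement above


def free_disk_gb(path: str = ".") -> float:
    """Return the free space, in GiB, on the filesystem containing `path`."""
    return shutil.disk_usage(path).free / GIB


def has_enough_disk(path: str = ".", required_gb: float = REQUIRED_DISK_GB) -> bool:
    """True if the filesystem containing `path` has at least `required_gb` GiB free."""
    return free_disk_gb(path) >= required_gb


# Check the current directory; pass the intended install folder instead if it differs.
print(f"Free disk space: {free_disk_gb():.1f} GiB")
print("Meets the 12 GB requirement:", has_enough_disk())
```

Run it from the folder where you plan to unzip the installer so that the correct drive is measured.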

Note

If you have an Nvidia 10xx series card (e.g. the 1080ti), please run the invoke script in full-precision mode as shown below.

Similarly, specify full-precision mode on Apple M1 hardware.

Precision is configured automatically based on the device. If, however, you encounter errors like 'expected type Float but found Half' or 'not implemented for Half', try adding the --precision=float32 flag to your initialization command when starting invoke.py:

(invokeai) ~/InvokeAI$ python scripts/invoke.py --precision=float32

Alternatively, add this argument to your InvokeAI configuration file.
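
The guidance in this note can be summarized as a small decision rule. The sketch below is only an illustration of that rule and is not how InvokeAI detects precision internally. The function names and the device-name patterns are assumptions; the command and flag are the ones shown above.

```python
import re


def needs_full_precision(device_name: str) -> bool:
    """Guess whether a device should be run with --precision=float32.

    Assumption: matching on the device name is good enough for illustration.
    Covers Apple M1 plus the GTX 10xx and 16xx cards mentioned in this section.
    """
    name = device_name.lower()
    if re.search(r"\bm1\b", name):
        return True
    # "gtx 1080", "gtx1080ti", "gtx 1660 super", ...
    return re.search(r"gtx\s*1[06]\d{2}(?!\d)", name) is not None


def build_command(device_name: str) -> list[str]:
    """Build the invoke.py command line, adding the flag only when needed."""
    command = ["python", "scripts/invoke.py"]
    if needs_full_precision(device_name):
        command.append("--precision=float32")
    return command


for device in ("NVIDIA GeForce GTX 1080 Ti", "Apple M1", "NVIDIA GeForce RTX 3060"):
    print(device, "->", " ".join(build_command(device)))
```

When the flag is left out, precision selection stays automatic, which matches the default behavior described above.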

Features

Major Features

- Web Server

- Interactive Command Line Interface

- Image To Image

- Inpainting Support

- Outpainting Support

- Upscaling, face-restoration and outpainting

- Reading Prompts From File

- Prompt Blending

- Thresholding and Perlin Noise Initialization Options

- Negative/Unconditioned Prompts

- Variations

- Personalizing Text-to-Image Generation

- Simplified API for text to image generation

Other Features

Latest Changes

For our latest changes, view our Release Notes

Troubleshooting

Please check out our Q&A to get solutions for common installation problems and other issues.

Contributing

Anyone who wishes to contribute to this project, whether documentation, features, bug fixes, code cleanup, testing, or code reviews, is very much encouraged to do so.

To join, just raise your hand on the InvokeAI Discord server (#dev-chat) or the GitHub discussion board.

If you are unfamiliar with how to contribute to GitHub projects, here is a Getting Started Guide. A full set of contribution guidelines, along with templates, is in progress. For now, the most important thing is to make your pull request against the "development" branch, and not against "main". This will help keep public breakage to a minimum and will allow you to propose more radical changes.

We hope you enjoy using our software as much as we enjoy creating it, and we hope that some of those of you who are reading this will elect to become part of our community.

Welcome to InvokeAI!

Contributors

This fork is a combined effort of various people from across the world. Check out the list of all these amazing people. We thank them for their time, hard work and effort.

Support

For support, please use this repository's GitHub Issues tracking service. Feel free to send me an email if you use and like the script.

Original portions of the software are Copyright (c) 2020 Lincoln D. Stein

Further Reading

Please see the original README, located in the file README-CompViz.md, for more information on this software and the underlying algorithm.