* Fixes inpainting + code cleanup

* Disable stage info in Inpainting Tab

* Mask Brush Preview now always at 0.5 opacity

The new mask is only properly visible at max opacity, but at max opacity the brush preview becomes fully opaque and blocks the view. So the mask brush preview now remains at 0.5 no matter what the Brush opacity is.

* Remove save button from Canvas Controls (cleanup)

* Implements invert mask

* Changes "Invert Mask" to "Preserve Masked Areas"

* Fixes (?) spacebar issues

* Patches redux-persist and redux-deep-persist with debounced persists

Our app changes redux state very, very often. As our undo/redo history grows, the calls to persist state start to take around 100ms each, due to the deep cloning of the history. This causes very noticeable performance lag.

The deep cloning is required because we need to blacklist certain items in redux from being persisted (e.g. the app's connection status).

Debouncing the whole process of persistence is a simple and effective solution. Unfortunately, `redux-persist` dropped `debounce` between v4 and v5, replacing it with `throttle`. Instead of delaying the expensive action until a period of X ms of inactivity, `throttle` simply ensures the action is executed at most once every X ms. Of course, this does not fix our performance issue.

The patch is very simple. It adds a `debounce` argument - a number of milliseconds - and debounces `redux-persist`'s `update()` method (provided by `createPersistoid`) by that many ms.
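
To make the difference concrete, here is a minimal Python sketch that replays a list of event timestamps and reports when a throttled and a debounced handler would fire. The function names and the simplified timing model are assumptions made for illustration; this is not the code from `redux-persist` or from the patch.

```python
def throttle_fire_times(event_times_ms, interval_ms):
    """Leading-edge throttle: fire on an event only if at least
    interval_ms has passed since the previous firing."""
    fired = []
    last = None
    for t in event_times_ms:
        if last is None or t - last >= interval_ms:
            fired.append(t)
            last = t
    return fired


def debounce_fire_times(event_times_ms, wait_ms):
    """Trailing-edge debounce: fire wait_ms after an event, but only
    if no newer event arrived in the meantime."""
    fired = []
    for i, t in enumerate(event_times_ms):
        is_last = i == len(event_times_ms) - 1
        if is_last or event_times_ms[i + 1] - t >= wait_ms:
            fired.append(t + wait_ms)
    return fired


# A burst of state changes every 50 ms for one second, then silence.
burst = list(range(0, 1000, 50))

throttled = throttle_fire_times(burst, 300)
debounced = debounce_fire_times(burst, 300)
print(throttled)  # [0, 300, 600, 900]
print(debounced)  # [1250]
```

In this model the throttled handler still runs several times during the burst, while the debounced handler runs once, after the burst has ended.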

Before this, I also tried writing a custom storage adapter for `redux-persist` to debounce the calls to `localStorage.setItem()`. While this worked and was far less invasive, it doesn't actually address the issue. It turns out `setItem()` is a very fast part of the process.

We use `redux-deep-persist` to simplify the `redux-persist` configuration, which can get complicated when you need to blacklist or whitelist deeply nested state. There is also a patch here for that library because it uses the same types as `redux-persist`.

Unfortunately, the last release of `redux-persist` used a package `flat-stream` which was malicious and has been removed from npm. The latest commits to `redux-persist` (about 1 year ago) do not build; we cannot use the master branch. And between the last release and last commit, the changes have all been breaking.

Patching this last release (about 3 years old at this point) directly is far simpler than attempting to fix the upstream library's master branch or figuring out an alternative to the malicious and now non-existent dependency.

* Adds debouncing

* Fixes AttributeError: 'dict' object has no attribute 'invert_mask'

* Updates package.json to use redux-persist patches

* Attempts to fix redux-persist debounce patch

* Fixes undo/redo

* Fixes invert mask

* Debounce > 300ms

* Limits history to 256 for each of undo and redo

* Canvas styling

* Hotkeys improvement

* Add Metadata To Viewer

* Increases CFG Scale max to 200

* Fix gallery width size for Outpainting

Also fixes the canvas resizing failing on fast pushes

* Fixes disappearing canvas grid lines

* Adds staging area

* Fixes "use all" not setting variationAmount

Now sets to 0 when the image had variations.

* Builds fresh bundle

* Outpainting tab loads to empty canvas instead of upload

* Fixes wonky canvas layer ordering & compositing

* Fixes error on inpainting paste back

`TypeError: 'float' object cannot be interpreted as an integer`

* Hides staging area outline on mouseover prev/next

* Fixes inpainting not doing img2img when no mask

* Fixes bbox not resizing in outpainting if partially off screen

* Fixes crashes during iterative outpaint. Still doesn't work correctly though.

* Fix iterative outpainting by restoring original images

* Moves image uploading to HTTP

- It all seems to work fine

- A lot of cleanup is still needed

- Logging needs to be added

- May need types to be reviewed

* Fixes: outpainting temp images show in gallery

* WIP refactor to unified canvas

* Removes console.log from redux-persist patch

* Initial unification of canvas

* Removes all references to split inpainting/outpainting canvas

* Add patchmatch and infill_method parameter to prompt2image (options are 'patchmatch' or 'tile').

* Fixes app after removing in/out-painting refs

* Rebases on dev, updates new env files w/ patchmatch

* Organises features/canvas

* Fixes bounding box ending up offscreen

* Organises features/canvas

* Stops unnecessary canvas rescales on gallery state change

* Fixes 2px layout shift on toggle canvas lock

* Clips lines drawn while canvas locked

When drawing with the locked canvas, if a brush stroke gets too close to the edge of the canvas and its stroke would extend past the edge of the canvas, the edge of that stroke will be seen after unlocking the canvas.

This could cause a problem if you unlock the canvas and now have a bunch of strokes just outside the init image area, which are far back in undo history and you cannot easily erase.

With this change, lines drawn while the canvas is locked get clipped to the initial image bbox, fixing this issue.

Additionally, the merge and save to gallery functions have been updated to respect the initial image bbox so they function how you'd expect.

* Fixes reset canvas view when locked

* Fixes send to buttons

* Fixes bounding box not being rounded to 64

* Abandons "inpainting" canvas lock

* Fixes save to gallery including empty area, adds download and copy image

* Fix Current Image display background going over image bounds

* Sets status immediately when clicking Invoke

* Adds hotkeys and refactors sharing of konva instances

Adds hotkeys to canvas. As part of this change, the access to konva instance objects was refactored:

Previously closure'd refs were used to indirectly get access to the konva instances outside of react components.

Now, a getter and setter function are used to provide access directly to the konva objects.

* Updates hotkeys

* Fixes canvas showing spinner on first load

Also adds good default canvas scale and positioning when no image is on it

* Fixes possible hang on MaskCompositer

* Improves behaviour when setting init canvas image/reset view

* Resets bounding box coords/dims when no image present

* Disables canvas actions which cannot be done during processing

* Adds useToastWatcher hook

- Dispatch an `addToast` action with standard Chakra toast options object to add a toast to the toastQueue

- The hook is called in App.tsx and just useEffect's w/ toastQueue as dependency to create the toasts

- So now you can add toasts anywhere you have access to `dispatch`, which includes middleware and thunks

- Adds first usage of this for the save image buttons in canvas

* Update Hotkey Info

Add missing tooltip hotkeys and update the hotkeys modal to reflect the new hotkeys for the Unified Canvas.

* Fix theme changer not displaying current theme on page refresh

* Fix tab count in hotkeys panel

* Unify Brush and Eraser Sizes

* Fix staging area display toggle not working

* Staging Area delete button is now red

So it doesn't blend in with the rest of them.

* Revert "Fix theme changer not displaying current theme on page refresh"

This reverts commit 903edfb803e743500242589ff093a8a8a0912726.

* Add arguments to use SSL to webserver

* Integrates #1487 - touch events

Need to add:

- Pinch zoom

- Touch-specific handling (some things aren't quite right)

* Refactors upload-related async thunks

- Now standard thunks instead of RTK createAsyncThunk()

- Adds toasts for all canvas upload-related actions

* Reorganises app file structure

* Fixes Canvas Auto Save to Gallery

* Fixes staging area outline

* Adds staging area hotkeys, disables gallery left/right when staging

* Fixes Use All Parameters

* Fix metadata viewer image url length when viewing intermediate

* Fixes intermediate images being tiny in txt2img/img2img

* Removes stale code

* Improves canvas status text and adds option to toggle debug info

* Fixes paste image to upload

* Adds model drop-down to site header

* Adds theme changer popover

* Fix missing key on ThemeChanger map

* Fixes stage position changing on zoom

* Hotkey Cleanup

- Viewer is now Z

- Canvas Move tool is V - sync with PS

- Removed some unused hotkeys

* Fix canvas resizing when both options and gallery are unpinned

* Implements thumbnails for gallery

- Thumbnails are saved whenever an image is saved, and when gallery requests images from server

- Thumbnails saved at original image aspect ratio with width of 128px as WEBP

- If the thumbnail property of an image is unavailable for whatever reason, the image's full size URL is used instead

* Saves thumbnails to separate thumbnails directory

* Thumbnail size = 256px
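
A minimal Python sketch of the thumbnail sizing and fallback behaviour described in the thumbnail commits above. The function names and the `thumbnail`/`url` dictionary keys are hypothetical, and the sketch always scales to the target width; the actual server code may differ.

```python
def thumbnail_size(width, height, target_width=256):
    """Return (w, h) scaled to target_width while keeping the aspect ratio."""
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    scaled_height = max(1, round(height * target_width / width))
    return target_width, scaled_height


def display_url(image):
    """Prefer the thumbnail URL; fall back to the full-size URL."""
    return image.get("thumbnail") or image["url"]


print(thumbnail_size(512, 768))  # (256, 384)
# Hypothetical image record with no thumbnail available.
print(display_url({"url": "outputs/000001.png"}))  # outputs/000001.png
```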

* Fix Lightbox Issues

* Disables canvas image saving functions when processing

* Fix index error on going past last image in Gallery

* WIP - Lightbox Fixes

Still need to fix the images not being centered on load when the image res changes

* Fixes another similar index error, simplifies logic

* Reworks canvas toolbar

* Fixes canvas toolbar upload button

* Cleans up IAICanvasStatusText

* Improves metadata handling, fixes #1450

- Removes model list from metadata

- Adds generation's specific model to metadata

- Displays full metadata in JSON viewer

* Gracefully handles corrupted images; fixes #1486

- App does not crash if corrupted image loaded

- Error is displayed in the UI console and CLI output if an image cannot be loaded

* Adds hotkey to reset canvas interaction state

If the canvas' interaction state (e.g. isMovingBoundingBox, isDrawing, etc.) gets stuck somehow, the user can press Escape to reset the state.

* Removes stray console.log()

* Fixes bug causing gallery to close on context menu open

* Minor bugfixes

- When doing long-running canvas image exporting actions, display indeterminate progress bar

- Fix staging area image outline not displaying after committing/discarding results

* Removes unused imports

* Fixes repo root .gitignore ignoring frontend things

* Builds fresh bundle

* Styling updates

* Removes reasonsWhyNotReady

The popover doesn't play well with the button being disabled, and I don't think it adds any value.

* Image gallery resize/style tweaks

* Styles buttons for clearing canvas history and mask

* First pass on Canvas options panel

* Fixes bug where discarding staged images results in loss of history

* Adds Save to Gallery button to staging toolbar

* Rearrange some canvas toolbar icons

Put brush stuff together and canvas movement stuff together

* Fix gallery maxwidth on unified canvas

* Update Layer hotkey display to UI

* Adds option to crop to bounding box on save

* Masking option tweaks

* Crop to Bounding Box > Save Box Region Only

* Adds clear temp folder

* Updates mask options popover behavior

* Builds fresh bundle

* Fix styling on alert modals

* Fix input checkbox styling being incorrect on light theme

* Styling fixes

* Improves gallery resize behaviour

* Cap gallery size on canvas tab so it doesn't overflow

* Fixes bug when postprocessing image with no metadata

* Adds IAIAlertDialog component

* Moves Loopback to app settings

* Fixes metadata viewer not showing metadata after refresh

Also adds Dream-style prompt to metadata

* Adds outpainting specific options

* Linting

* Fixes gallery width on lightbox, fixes gallery button expansion

* Builds fresh bundle

* Fix Lightbox images of different res not centering

* Update feature tooltip text

* Highlight mask icon when on mask layer

* Fix gallery not resizing correctly on open and close

* Add loopback to just img2img. Remove from settings.

* Fix to gallery resizing

* Removes Advanced checkbox, cleans up options panel for unified canvas

* Minor styling fixes to new options panel layout

* Styling Updates

* Adds infill method

* Tab Styling Fixes

* memoize outpainting options

* Fix unnecessary gallery re-renders

* Isolate Cursor Pos debug text on canvas to prevent rerenders

* Fixes missing postprocessed image metadata before refresh

* Builds fresh bundle

* Fix rerenders on model select

* Floating panel re-render fix

* Simplify fullscreen hotkey selector

* Add Training WIP Tab

* Adds Training icon

* Move full screen hotkey to floating to prevent tab rerenders

* Adds single-column gallery layout

* Fixes crash on cancel with intermediates enabled, fixes #1416

* Updates npm dependencies

* Fixes img2img attempting inpaint when init image has transparency

* Fixes missing threshold and perlin parameters in metadata viewer

* Renames "Threshold" > "Noise Threshold"

* Fixes postprocessing not being disabled when clicking use all

* Builds fresh bundle

* Adds color picker

* Lints & builds fresh bundle

* Fixes iterations being disabled when seed random & variations are off

* Un-floors cursor position

* Changes color picker preview to circles

* Fixes variation params not set correctly when recalled

* Fixes invoke hotkey not working in input fields

* Simplifies Accordion

Prep for adding reset buttons for each section

* Fixes mask brush preview color

* Committing color picker color changes tool to brush

* Color picker does not overwrite user-selected alpha

* Adds brush color alpha hotkey

* Lints

* Removes force_outpaint param

* Add inpaint size options to inpaint at a larger size than the actual inpaint image, then scale back down for recombination

* Bug fix for inpaint size

* Adds inpaint size (as scale bounding box) to UI

* Adds auto-scaling for inpaint size

* Improves scaled bbox display logic

* Fixes bug with clear mask and history

* Fixes shouldShowStagingImage not resetting to true on commit

* Builds fresh bundle

* Fixes canvas failing to scale on first run

* Builds fresh bundle

* Fixes unnecessary canvas scaling

* Adds gallery drag and drop to img2img/canvas

* Builds fresh bundle

* Fix desktop mode being broken with new versions of flaskwebgui

* Fixes canvas dimensions not setting on first load

* Builds fresh bundle

* stop crash on !import_models call on model inside rootdir

- addresses bug report #1546

* prevent "!switch state gets confused if model switching fails"

- If !switch were to fail on a particular model, then generate got confused and wouldn't try again until you switched to a different working model and back again.

- This commit fixes and closes #1547

* Revert "make the docstring more readable and improve the list_models logic"

This reverts commit 248068fe5d.

* fix model cache path

* also set fail-fast to its default (true)

in this way the whole action fails if one job fails

this should unblock the runners!!!

* fix output path for Archive results

* disable checks for python 3.9

* Update-requirements and test-invoke-pip workflow (#1574)

* update requirements files

* update test-invoke-pip workflow

* move requirements-mkdocs.txt to docs folder (#1575)

* move requirements-mkdocs.txt to docs folder

* update copyright

* Fixes outpainting with resized inpaint size

* Interactive configuration (#1517)

* Update scripts/configure_invokeai.py

prevent crash if output exists

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

* implement changes requested by reviews

* default to correct root and output directory on Windows systems

- Previously the script was relying on the readline buffer editing

feature to set up the correct default. But this feature doesn't

exist on windows.

- This commit detects when user typed return with an empty directory

value and replaces with the default directory.
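
The empty-input fallback described above can be sketched in Python as follows. The function name and the example paths are hypothetical; this is not the code from `scripts/configure_invokeai.py`.

```python
def resolve_directory(user_input, default):
    """Return the typed directory, or the default if the user just pressed Return."""
    cleaned = user_input.strip()
    return cleaned if cleaned else default


# Pressing Return on an empty prompt yields the default (hypothetical path).
print(resolve_directory("", "/home/user/invokeai"))
print(resolve_directory("  /data/outputs ", "/home/user/invokeai"))
```

Handling the empty string explicitly avoids relying on readline pre-filling the input buffer, which is the feature the commit reports as missing on Windows.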

* improved readability of directory choices

* Update scripts/configure_invokeai.py

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

* better error reporting at startup

- If user tries to run the script outside of the repo or runtime directory,

a more informative message will appear explaining the problem.

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

* Embedding merging (#1526)

* add whole <style token> to vocab for concept library embeddings

* add ability to load multiple concept .bin files

* make --log_tokenization respect custom tokens

* start working on concept downloading system

* preliminary support for dynamic loading and merging of multiple embedded models

- The embedding_manager is now enhanced with ldm.invoke.concepts_lib,

which handles dynamic downloading and caching of embedded models from

the Hugging Face concepts library (https://huggingface.co/sd-concepts-library)

- Downloading of an embedded model is triggered by the presence of one or more

<concept> tags in the prompt.

- Once the embedded model is downloaded, its trigger phrase will be loaded

into the embedding manager and the prompt's <concept> tag will be replaced

with the <trigger_phrase>

- The downloaded model stays on disk for fast loading later.

- The CLI autocomplete will complete partial <concept> tags for you. Type a

'<' and hit tab to get all ~700 concepts.
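
The tag replacement step described above can be sketched in Python as follows. The `TRIGGERS` dictionary stands in for whatever the concepts library has downloaded and cached, and the regex and function name are assumptions for illustration; this is not the `ldm.invoke.concepts_lib` implementation.

```python
import re

# Hypothetical local cache mapping concept (model) names to trigger phrases.
# The "hoi4-leaders" entry is the example given in this commit message.
TRIGGERS = {"hoi4-leaders": "<HOI4-Leader>"}

CONCEPT_TAG = re.compile(r"<([^<>]+)>")


def replace_concepts(prompt, triggers=TRIGGERS):
    """Swap each known <concept> tag for its trigger phrase; leave unknown tags alone."""
    def substitute(match):
        name = match.group(1)
        return triggers.get(name, match.group(0))
    return CONCEPT_TAG.sub(substitute, prompt)


print(replace_concepts("a portrait in the style of <hoi4-leaders>"))
# a portrait in the style of <HOI4-Leader>
```

Leaving unknown tags untouched is a choice made for this sketch only.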

BUGS AND LIMITATIONS:

- MODEL NAME VS TRIGGER PHRASE

You must use the name of the concept embed model from the SD

library, and not the trigger phrase itself. Usually these are the

same, but not always. For example, the model named "hoi4-leaders"

corresponds to the trigger "<HOI4-Leader>"

One reason for this design choice is that there is no apparent

constraint on the uniqueness of the trigger phrases and one trigger

phrase may map onto multiple models. So we use the model name

instead.

The second reason is that there is no way I know of to search

Hugging Face for models with certain trigger phrases. So we'd have

to download all 700 models to index the phrases.

The problem this presents is that this may confuse users, who will

want to reuse prompts from distributions that use the trigger phrase

directly. Usually this will work, but not always.

- WON'T WORK ON A FIREWALLED SYSTEM

If the host running IAI has no internet connection, it can't

download the concept libraries. I will add a script that allows

users to preload a list of concept models.

- BUG IN PROMPT REPLACEMENT WHEN MODEL NOT FOUND

There's a small bug that occurs when the user provides an invalid

model name. The <concept> gets replaced with <None> in the prompt.

* fix loading .pt embeddings; allow multi-vector embeddings; warn on dupes

* simplify replacement logic and remove cuda assumption

* download list of concepts from hugging face

* remove misleading customization of '*' placeholder

the existing code as-is did not do anything; unclear what it was supposed to do.

the obvious alternative -- setting using 'placeholder_strings' instead of

'placeholder_tokens' to match model.params.personalization_config.params.placeholder_strings --

caused a crash. i think this is because the passed string also needed to be handed over

on init of the PersonalizedBase as the 'placeholder_token' argument.

this is weird config dict magic and i don't want to touch it. put a

breakpoint in personalized.py line 116 (top of PersonalizedBase.__init__) if

you want to have a crack at it yourself.

* address all the issues raised by damian0815 in review of PR #1526

* actually resize the token_embeddings

* multiple improvements to the concept loader based on code reviews

1. Activated the --embedding_directory option (alias --embedding_path)

to load a single embedding or an entire directory of embeddings at

startup time.

2. Can turn off automatic loading of embeddings using --no-embeddings.

3. Embedding checkpoints are scanned with the pickle scanner.

4. More informative error messages when a concept can't be loaded due

either to a 404 not found error or a network error.

* autocomplete terms end with ">" now

* fix startup error and network unreachable

1. If the .invokeai file does not contain the --root and --outdir options,

invoke.py will now fix it.

2. Catch and handle network problems when downloading hugging face textual

inversion concepts.

* fix misformatted error string

Co-authored-by: Damian Stewart <d@damianstewart.com>

* model_cache.py: fix list_models

Signed-off-by: devops117 <55235206+devops117@users.noreply.github.com>

* add statement of values (#1584)

* this adds the Statement of Values

Google doc source = https://docs.google.com/document/d/1-PrUKDJcxy8OyNGc8CyiHhv2VgLvjt7LRGlEpbg1nmQ/edit?usp=sharing

* Fix heading

* Update InvokeAI_Statement_of_Values.md

* Update InvokeAI_Statement_of_Values.md

* Update InvokeAI_Statement_of_Values.md

* Update InvokeAI_Statement_of_Values.md

* Update InvokeAI_Statement_of_Values.md

* add keturn and mauwii to the team member list

* Fix punctuation

* this adds the Statement of Values

Google doc source = https://docs.google.com/document/d/1-PrUKDJcxy8OyNGc8CyiHhv2VgLvjt7LRGlEpbg1nmQ/edit?usp=sharing

* add keturn and mauwii to the team member list

* fix formatting

- make sub bullets use * (decide to all use - or *)

- indent sub bullets

Sorry, first only looked at the code version and found this only after

looking at the markdown rendered version

* use multiparagraph numbered sections

* Break up Statement Of Values as per comments on #1584

* remove duplicated word, reduce vagueness

it's important not to overstate how many artists we are consulting.

* fix typo (sorry blessedcoolant)

Co-authored-by: mauwii <Mauwii@outlook.de>

Co-authored-by: damian <git@damianstewart.com>

* update dockerfile (#1551)

* update dockerfile

* remove not existing file from .dockerignore

* remove bloat and unnecessary step

also use --no-cache-dir for pip install

image is now close to 2GB

* make Dockerfile a variable

* set base image to `ubuntu:22.10`

* add build-essential

* link outputs folder for persistence

* update tag variable

* update docs

* fix build args not being customizable, add reqs output

* !model_import autocompletes in ROOTDIR

* Adds psychedelicious to statement of values signature (#1602)

* add a --no-patchmatch option to disable patchmatch loading (#1598)

This feature was added to prevent the CI Macintosh tests from erroring

out when patchmatch is unable to retrieve its shared library from

github assets.

* Fix #1599 by relaxing the `match_trigger` regex (#1601)

* Fix #1599 by relaxing the `match_trigger` regex

Also simplify logic and reduce duplication.

* restrict trigger regex again (but not so far)

* make concepts library work with Web UI

This PR makes it possible to include a Hugging Face concepts library

<style-or-subject-trigger> in the WebUI prompt. The metadata seems

to be correctly handled.

* documentation enhancements (#1603)

- Add documentation for the Hugging Face concepts library and TI embedding.

- Fixup index.md to point to each of the feature documentation files,

including ones that are pending.

* tweak setup and environment files for linux & pypatchmatch (#1580)

* tweak setup and environment files for linux & pypatchmatch

- Downgrade python requirements to 3.9 because 3.10 is not supported

on Ubuntu 20.04 LTS (widely-used distro)

- Use our github pypatchmatch 0.1.3 in order to install Makefile

where it needs to be.

- Restored "-e ." as the last install step on pip installs. Hopefully

this will not trigger the high-CPU hang we've previously experienced.

* keep windows on basicsr 1.4.1

* keep windows on basicsr 1.4.1

* bump pypatchmatch requirement to 0.1.4

- This brings in a version of pypatchmatch that will gracefully

handle internet connection not available at startup time.

- Also refactors and simplifies the handling of gfpgan's basicsr requirement

across various platforms.

* revert to older version of list_models() (#1611)

This restores the correct behavior of list_models() and quenches

the bug of list_models() returning a single model entry named "name".

I have not investigated what was wrong with the new version, but I

think it may have to do with changes to the behavior in dict.update()

* Fixes for #1604 (#1605)

* Converts ESRGAN image input to RGB

- Also adds typing for image input.

- Partially resolves #1604

* ensure there are unmasked pixels before color matching

Co-authored-by: Kyle Schouviller <kyle0654@hotmail.com>

* update index.md (#1609)

- comment out non-existent link

- fix indentation

- add separator between feature categories

* Debloat-docker (#1612)

* debloat Dockerfile

- fewer options, but more user-friendly

- better Entrypoint to simulate CLI usage

- without command the container still starts the web-host

* debloat build.sh

* better syntax in run.sh

* update Docker docs

- fix description of VOLUMENAME

- update run script example to reflect new entrypoint

* Test installer (#1618)

* test linux install

* try removing http from parsed requirements

* pip install confirmed working on linux

* ready for linux testing

- rebuilt py3.10-linux-x86_64-cuda-reqs.txt to include pypatchmatch

dependency.

- point install.sh and install.bat to test-installer branch.

* Updates MPS reqs

* detect broken readline history files

* fix download.pytorch.org URL

* Test installer (Win 11) (#1620)

Co-authored-by: Cyrus Chan <cyruswkc@hku.hk>

* Test installer (MacOS 13.0.1 w/ torch==1.12.0) (#1621)

* Test installer (Win 11)

* Test installer (MacOS 13.0.1 w/ torch==1.12.0)

Co-authored-by: Cyrus Chan <cyruswkc@hku.hk>

* change sourceball to development for testing

* Test installer (MacOS 13.0.1 w/ torch==1.12.1 & torchvision==1.13.1) (#1622)

* Test installer (Win 11)

* Test installer (MacOS 13.0.1 w/ torch==1.12.0)

* Test installer (MacOS 13.0.1 w/ torch==1.12.1 & torchvision==1.13.1)

Co-authored-by: Cyrus Chan <cyruswkc@hku.hk>

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

Co-authored-by: Cyrus Chan <82143712+cyruschan360@users.noreply.github.com>

Co-authored-by: Cyrus Chan <cyruswkc@hku.hk>

* 2.2 Doc Updates (#1589)

* Unified Canvas Docs & Assets

Unified Canvas draft

Advanced Tools Updates

Doc Updates (lstein feedback)

* copy edits to Unified Canvas docs

- consistent capitalisation and feature naming

- more intimate address (replace "the user" with "you") for improved User

Engagement(tm)

- grammatical massaging and *poesie*

Co-authored-by: Lincoln Stein <lincoln.stein@gmail.com>

Co-authored-by: damian <git@damianstewart.com>

* include a step after config to `cat ~/.invokeai` (#1629)

* disable patchmatch in CI actions (#1626)

* disable patchmatch in CI actions

* fix indentation

* replace tab with spaces

Co-authored-by: Matthias Wild <40327258+mauwii@users.noreply.github.com>

Co-authored-by: mauwii <Mauwii@outlook.de>

* Fix installer script for macOS. (#1630)

* refer to the platform as 'osx' instead of 'mac', otherwise the

composed URL to micromamba is wrong.

* move the `-O` option to `tar` to be grouped with the other tar flags

to avoid the `-O` being interpreted as something to unarchive.

* Removes symlinked environment.yaml (#1631)

Was unintentionally added in #1621

* Fix inpainting with iterations (#1635)

* fix error when inpainting using runwayml inpainting model (#1634)

- error was "Omnibus object has no attribute pil_image"

- closes #1596

* add k_dpmpp_2_a and k_dpmpp_2 solvers options (#1389)

* add k_dpmpp_2_a and k_dpmpp_2 solvers options

* update frontend

Co-authored-by: Victor <victorca25@users.noreply.github.com>

Co-authored-by: Lincoln Stein <lincoln.stein@gmail.com>

* add .editorconfig (#1636)

* Web UI 2.2 bugfixes (#1572)

* Fixes bug preventing multiple images from being generated

* Fixes valid seam strength value range

* Update Delete Alert Text

Indicates to the user that images are not permanently deleted.

* Fixes left/right arrows not working on gallery

* Fixes initial image on load erroneously set to a user uploaded image

Should be a result gallery image.

* Lightbox Fixes

- Lightbox is now a button in the current image buttons

- Lightbox is also now available in the gallery context menu

- Lightbox zoom issues fixed

- Lightbox has a fade in animation.

* Fix image display wrapper in current preview not overflow bounds

* Revert "Fix image display wrapper in current preview not overflow bounds"

This reverts commit 5511c82714dbf1d1999d64e8bc357bafa34ddf37.

* Change Staging Area discard icon from Bin to X

* Expose Snap Threshold and Move Snap Settings to BBox Panel

* Changes img2img strength default to 0.75

* Fixes drawing triggering when mouse enters canvas w/ button down

When we only supported inpainting and no zoom, this was useful. It allowed the cursor to leave the canvas (which was easy to do given the limited canvas dimensions) without losing the "I am drawing" state.

With a zoomable canvas this is no longer as useful.

Additionally, we have more popovers and tools (like the color pickers) which result in unexpected brush strokes. This fixes that issue.

* Revert "Expose Snap Threshold and Move Snap Settings to BBox Panel"

We will handle this a bit differently - by allowing the grid origin to be moved. I will dig in at some point.

This reverts commit 33c92ecf4da724c2f17d9d91c7ea31a43a2f6deb.

* Adds Limit Strokes to Box

* Adds fill bounding box button

* Adds erase bounding box button

* Changes Staging area discard icon to match others

* Fixes right click breaking move tool

* Fixes brush preview visibility issue with "darken outside box"

* Fixes history bugs with addFillRect, addEraseRect, and other actions

* Adds missing `key`

* Fixes postprocessing being applied to canvas generations

* Fixes bbox not getting scaled in various situations

* Fixes staging area show image toggle not resetting on accept/discard

* Locks down canvas while generating/staging

* Fixes move tool breaking when canvas loses focus during move/transform

* Hides cursor when restrict strokes is on and mouse outside bbox

* Lints

* Builds fresh bundle

* Fix overlapping hotkey for Fill Bounding Box

* Build Fresh Bundle

* Fixes bug with mask and bbox overlay

* Builds fresh bundle

Co-authored-by: blessedcoolant <54517381+blessedcoolant@users.noreply.github.com>

Co-authored-by: Lincoln Stein <lincoln.stein@gmail.com>

* disable NSFW checker loading during the CI tests (#1641)

* disable NSFW checker loading during the CI tests

The NSFW filter apparently causes invoke.py to crash during CI testing,

possibly due to out of memory errors. This workaround disables NSFW

model loading.

* doc change

* fix formatting errors in yml files

* Configure the NSFW checker at install time with default on (#1624)

* configure the NSFW checker at install time with default on

1. Changes the --safety_checker argument to --nsfw_checker and

--no-nsfw_checker. The original argument is recognized for backward

compatibility.

2. The configure script asks users whether to enable the checker

(default yes). Also offers users ability to select default sampler and

number of generation steps.

3. Enables the pasting of the caution icon on blurred images when

InvokeAI is installed into the package directory.

4. Adds documentation for the NSFW checker, including caveats about

accuracy, memory requirements, and intermediate image display.
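
Item 1 above can be sketched in Python with `argparse` as follows. The flag names come from this commit message; how they are wired up here, and the hard-coded default, are assumptions for illustration rather than the project's actual argument-parsing code.

```python
import argparse


def build_parser():
    parser = argparse.ArgumentParser()
    # --safety_checker is accepted as an alias for backward compatibility.
    # The default of True is an assumption for this sketch.
    parser.add_argument(
        "--nsfw_checker", "--safety_checker",
        dest="nsfw_checker", action="store_true", default=True,
    )
    parser.add_argument(
        "--no-nsfw_checker",
        dest="nsfw_checker", action="store_false",
    )
    return parser


parser = build_parser()
print(parser.parse_args([]).nsfw_checker)                     # True
print(parser.parse_args(["--safety_checker"]).nsfw_checker)   # True
print(parser.parse_args(["--no-nsfw_checker"]).nsfw_checker)  # False
```

Pointing all three option strings at the same `dest` means the rest of the program only has to check one attribute.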

* use better fitting icon

* NSFW defaults false for testing

* set default back to nsfw active

Co-authored-by: Matthias Wild <40327258+mauwii@users.noreply.github.com>

Co-authored-by: mauwii <Mauwii@outlook.de>

Signed-off-by: devops117 <55235206+devops117@users.noreply.github.com>

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

Co-authored-by: blessedcoolant <54517381+blessedcoolant@users.noreply.github.com>

Co-authored-by: Kyle Schouviller <kyle0654@hotmail.com>

Co-authored-by: javl <mail@jaspervanloenen.com>

Co-authored-by: Kent Keirsey <31807370+hipsterusername@users.noreply.github.com>

Co-authored-by: mauwii <Mauwii@outlook.de>

Co-authored-by: Matthias Wild <40327258+mauwii@users.noreply.github.com>

Co-authored-by: Damian Stewart <d@damianstewart.com>

Co-authored-by: DevOps117 <55235206+devops117@users.noreply.github.com>

Co-authored-by: damian <git@damianstewart.com>

Co-authored-by: Damian Stewart <null@damianstewart.com>

Co-authored-by: Cyrus Chan <82143712+cyruschan360@users.noreply.github.com>

Co-authored-by: Cyrus Chan <cyruswkc@hku.hk>

Co-authored-by: Andre LaBranche <dre@mac.com>

Co-authored-by: victorca25 <41912303+victorca25@users.noreply.github.com>

Co-authored-by: Victor <victorca25@users.noreply.github.com>


:material-bash: CLI

Interactive Command Line Interface

The invoke.py script, located in scripts/, provides an interactive interface

to image generation similar to the "invoke mothership" bot that Stability AI

provided on its Discord server.

Unlike the txt2img.py and img2img.py scripts provided in the original

CompVis/stable-diffusion source

code repository, the time-consuming initialization of the AI model

happens only once. After that, image generation from the

command-line interface is very fast.

The script uses the readline library to allow for in-line editing, command history (++up++ and ++down++), autocompletion, and more. To help keep track of which prompts generated which images, the script writes a log file of image names and prompts to the selected output directory.

In addition, as of version 1.02, it also writes the prompt into the PNG file's

metadata, where it can be retrieved using scripts/images2prompt.py.
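To make the idea of prompt metadata concrete, here is a minimal sketch that lists the text chunks stored in a PNG file using only the Python standard library. It is not the code of scripts/images2prompt.py, and the function name `read_png_text_chunks` is hypothetical. The sketch makes no assumption about which key invoke.py stores the prompt under; it simply returns every `tEXt` key/value pair it finds.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict[str, str]:
    """Return the tEXt key/value pairs stored in a PNG byte string."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    found = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk is: 4-byte length, 4-byte type, payload, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        payload = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            # A tEXt payload is "keyword NUL text", encoded as Latin-1.
            key, _, value = payload.partition(b"\x00")
            found[key.decode("latin-1")] = value.decode("latin-1")
        pos += 12 + length  # header (8) + payload + CRC (4)
        if chunk_type == b"IEND":
            break
    return found
```

To try it on a generated image, read the file with `pathlib.Path(...).read_bytes()` and pass the result to the function. The sketch does not verify chunk CRCs and ignores compressed (`zTXt`) and international (`iTXt`) text chunks.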

The script is confirmed to work on Linux, Windows and Mac systems.

!!! note

This script runs from the command-line or can be used as a Web application. The Web GUI is

currently rudimentary, but a much better replacement is on its way.

(invokeai) ~/stable-diffusion$ python3 ./scripts/invoke.py

* Initializing, be patient...

Loading model from models/ldm/text2img-large/model.ckpt

(...more initialization messages...)

* Initialization done! Awaiting your command...

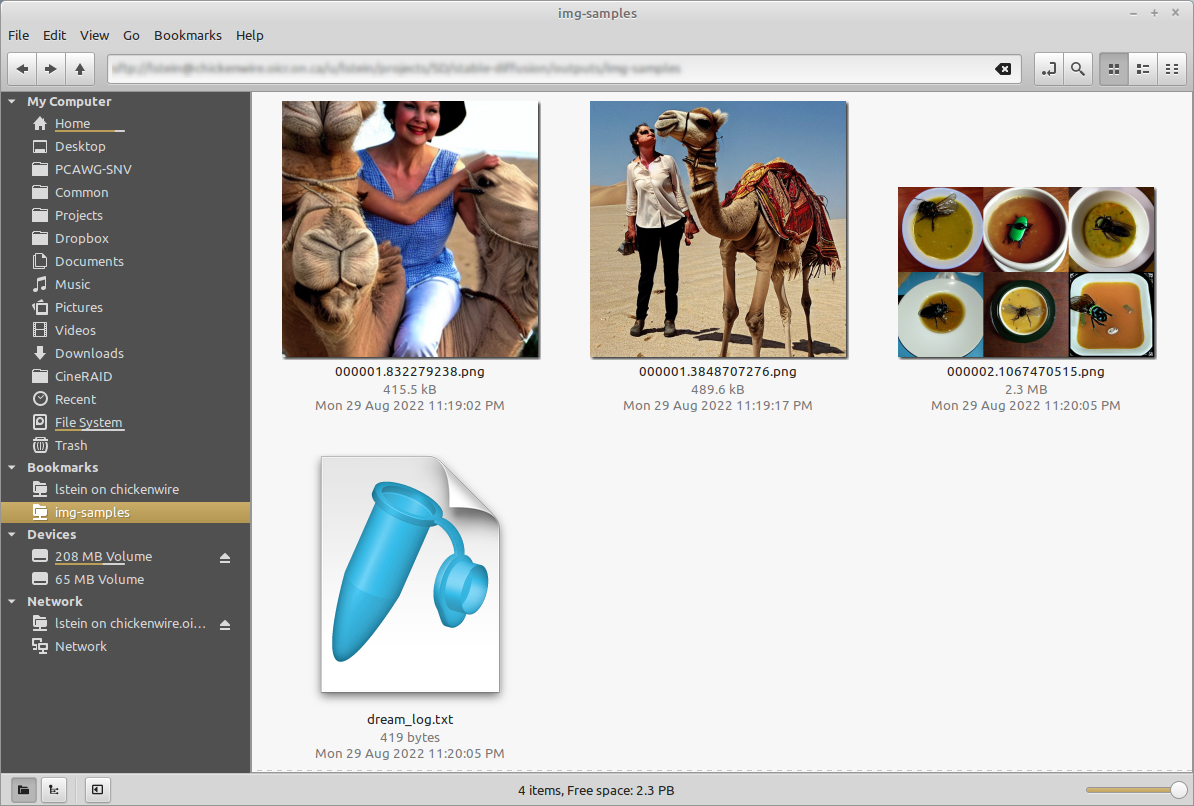

invoke> ashley judd riding a camel -n2 -s150

Outputs:

outputs/img-samples/00009.png: "ashley judd riding a camel" -n2 -s150 -S 416354203

outputs/img-samples/00010.png: "ashley judd riding a camel" -n2 -s150 -S 1362479620

invoke> "there's a fly in my soup" -n6 -g

outputs/img-samples/00011.png: "there's a fly in my soup" -n6 -g -S 2685670268

seeds for individual rows: [2685670268, 1216708065, 2335773498, 822223658, 714542046, 3395302430]

invoke> q

# this shows how to retrieve the prompt stored in the saved image's metadata

(invokeai) ~/stable-diffusion$ python ./scripts/images2prompt.py outputs/img_samples/*.png

00009.png: "ashley judd riding a camel" -s150 -S 416354203

00010.png: "ashley judd riding a camel" -s150 -S 1362479620

00011.png: "there's a fly in my soup" -n6 -g -S 2685670268

The invoke> prompt's arguments are pretty much identical to those used in the

Discord bot, except you don't need to type !invoke (it doesn't hurt if you

do). A significant change is that creation of individual images is now the

default unless --grid (-g) is given. A full list is given in

List of prompt arguments.

Arguments

The script itself also recognizes a series of command-line switches that will change important global defaults, such as the directory for image outputs and the location of the model weight files.

List of arguments recognized at the command line

These command-line arguments can be passed to invoke.py when you first run it

from the Windows, Mac or Linux command line. Some set defaults that can be

overridden on a per-prompt basis (see

List of prompt arguments).

| Argument | Shortcut | Default | Description |
|---|---|---|---|
| `--help` | `-h` | | Print a concise help message. |
| `--outdir <path>` | `-o<path>` | `outputs/img_samples` | Location for generated images. |
| `--prompt_as_dir` | `-p` | `False` | Name output directories using the prompt text. |
| `--from_file <path>` | | `None` | Read list of prompts from a file. Use `-` to read from standard input. |
| `--model <modelname>` | | `stable-diffusion-1.4` | Loads model specified in `configs/models.yaml`. Currently one of "stable-diffusion-1.4" or "laion400m". |
| `--full_precision` | `-F` | `False` | Run in slower full-precision mode. Needed for Macintosh M1/M2 hardware and some older video cards. |
| `--png_compression <0-9>` | `-z<0-9>` | `6` | Select level of compression for output files, from 0 (no compression) to 9 (max compression). |
| `--safety-checker` | | `False` | Activate safety checker for NSFW and other potentially disturbing imagery. |
| `--web` | | `False` | Start in web server mode. |
| `--host <ip addr>` | | `localhost` | Which network interface web server should listen on. Set to 0.0.0.0 to listen on any. |
| `--port <port>` | | `9090` | Which port web server should listen for requests on. |
| `--config <path>` | | `configs/models.yaml` | Configuration file for models and their weights. |
| `--iterations <int>` | `-n<int>` | `1` | How many images to generate per prompt. |
| `--width <int>` | `-W<int>` | `512` | Width of generated image. |
| `--height <int>` | `-H<int>` | `512` | Height of generated image. |
| `--strength <float>` | `-f<float>` | `0.75` | For img2img: how hard to try to match the prompt to the initial image. Ranges from 0.0-0.99, with higher values replacing the initial image completely. |
| `--fit` | `-F` | `False` | For img2img: scale the init image to fit into the specified -H and -W dimensions. |
| `--grid` | `-g` | `False` | Save all image series as a grid rather than individually. |
| `--sampler <sampler>` | `-A<sampler>` | `k_lms` | Sampler to use. Use `-h` to get list of available samplers. |
| `--seamless` | | `False` | Create interesting effects by tiling elements of the image. |
| `--embedding_path <path>` | | `None` | Path to pre-trained embedding manager checkpoints, for custom models. |
| `--gfpgan_model_path` | | `experiments/pretrained_models/GFPGANv1.4.pth` | Path to GFPGAN model file. |
| `--free_gpu_mem` | | `False` | Free GPU memory after sampling, to allow image decoding and saving in low VRAM conditions. |
| `--precision` | | `auto` | Set model precision, default is selected by device. Options: auto, float32, float16, autocast. |

!!! warning "These arguments are deprecated but still work"

<div align="center" markdown>

| Argument | Shortcut | Default | Description |

|--------------------|------------|---------------------|--------------|

| `--weights <path>` | | `None` | Path to weights file; use `--model stable-diffusion-1.4` instead |

| `--laion400m` | `-l` | `False` | Use older LAION400m weights; use `--model=laion400m` instead |

</div>

!!! tip

On Windows systems, you may run into

problems when passing the invoke script standard backslashed path

names because the Python interpreter treats "\" as an escape.

You can either double your slashes (ick): `C:\\path\\to\\my\\file`, or

use Linux/Mac style forward slashes (better): `C:/path/to/my/file`.
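As a small illustration of the tip above, the following sketch converts a backslashed Windows path to the forward-slash form with Python's standard `pathlib` module. The helper name `to_forward_slashes` is hypothetical and is not part of the invoke script.

```python
from pathlib import PureWindowsPath


def to_forward_slashes(windows_path: str) -> str:
    """Convert a backslashed Windows path to its forward-slash form."""
    return PureWindowsPath(windows_path).as_posix()


# A raw string (r"...") keeps Python from treating "\" as an escape here.
print(to_forward_slashes(r"C:\path\to\my\file"))  # C:/path/to/my/file
```

`PureWindowsPath` only manipulates the text of the path, so this works on any operating system and does not require the file to exist.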

The .invokeai initialization file

To start up invoke.py with your preferred settings, place your desired

startup options in a file in your home directory named .invokeai. The

file should contain the startup options as you would type them on the

command line (--steps=10 --grid), one argument per line, or a

mixture of both using any of the accepted command switch formats:

!!! example ""

```bash

--web

--steps=28

--grid

-f 0.6 -C 11.0 -A k_euler_a

```

Note that the initialization file only accepts the command line arguments.

There are additional arguments that you can provide on the invoke> command

line (such as -n or --iterations) that cannot be entered into this file.

Also watch for blank lines at the end of the file, which will cause

an arguments error at startup time.
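The file format described above (one argument per line, several per line, or a mixture) can be pictured as a flat argument list. The sketch below is an illustration only, not the loader that invoke.py uses; the function name `read_startup_options` is hypothetical. It shows how such a file flattens into arguments, and it skips blank lines, which makes it a convenient way to check a file before handing it to the script.

```python
import shlex


def read_startup_options(text: str) -> list[str]:
    """Flatten an options file into an argv-style list, ignoring blank lines."""
    args = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines rather than passing on empty arguments
        args.extend(shlex.split(line))
    return args


example = "--web\n--steps=28\n--grid\n-f 0.6 -C 11.0 -A k_euler_a\n\n"
print(read_startup_options(example))
# ['--web', '--steps=28', '--grid', '-f', '0.6', '-C', '11.0', '-A', 'k_euler_a']
```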

List of prompt arguments

After the invoke.py script initializes, it will present you with an invoke>

prompt. Here you can enter information to generate images from text

(txt2img), to embellish an existing image or sketch

(img2img), or to selectively alter chosen regions of the image

(inpainting).

txt2img

!!! example ""

```bash

invoke> waterfall and rainbow -W640 -H480

```

This will create the requested image with the dimensions 640 (width)

and 480 (height).

Here are the invoke> commands that apply to txt2img:

| Argument | Shortcut | Default | Description |
|---|---|---|---|
| "my prompt" | | | Text prompt to use. The quotation marks are optional. |
| `--width <int>` | `-W<int>` | `512` | Width of generated image. |
| `--height <int>` | `-H<int>` | `512` | Height of generated image. |
| `--iterations <int>` | `-n<int>` | `1` | How many images to generate from this prompt. |
| `--steps <int>` | `-s<int>` | `50` | How many steps of refinement to apply. |
| `--cfg_scale <float>` | `-C<float>` | `7.5` | How hard to try to match the prompt to the generated image; any number greater than 1.0 works, but the useful range is roughly 5.0 to 20.0. |
| `--seed <int>` | `-S<int>` | `None` | Set the random seed for the next series of images. This can be used to recreate an image generated previously. |
| `--sampler <sampler>` | `-A<sampler>` | `k_lms` | Sampler to use. Use `-h` to get list of available samplers. |
| `--karras_max <int>` | | `29` | When using k_* samplers, set the maximum number of steps before shifting from using the Karras noise schedule (good for low step counts) to the LatentDiffusion noise schedule (good for high step counts). This value is sticky. [29] |
| `--hires_fix` | | | Larger images often have duplication artefacts. This option suppresses duplicates by generating the image at low res, and then using img2img to increase the resolution. |
| `--png_compression <0-9>` | `-z<0-9>` | `6` | Select level of compression for output files, from 0 (no compression) to 9 (max compression). |
| `--grid` | `-g` | `False` | Turn on grid mode to return a single image combining all the images generated by this prompt. |
| `--individual` | `-i` | `True` | Turn off grid mode (deprecated; leave off `--grid` instead). |
| `--outdir <path>` | `-o<path>` | `outputs/img_samples` | Temporarily change the location of these images. |
| `--seamless` | | `False` | Activate seamless tiling for interesting effects. |
| `--seamless_axes` | | `x,y` | Specify which axes to use circular convolution on. |
| `--log_tokenization` | `-t` | `False` | Display a color-coded list of the parsed tokens derived from the prompt. |
| `--skip_normalization` | `-x` | `False` | Weighted subprompts will not be normalized. See Weighted Prompts. |
| `--upscale <int> <float>` | `-U <int> <float>` | `-U 1 0.75` | Upscale image by magnification factor (2, 4), and set strength of upscaling (0.0-1.0). If strength not set, will default to 0.75. |
| `--facetool_strength <float>` | `-G <float>` | `-G0` | Fix faces (defaults to using the GFPGAN algorithm); argument indicates how hard the algorithm should try (0.0-1.0). |
| `--facetool <name>` | `-ft <name>` | `-ft gfpgan` | Select face restoration algorithm to use: gfpgan, codeformer. |
| `--codeformer_fidelity` | `-cf <float>` | `0.75` | Used along with CodeFormer. Takes values between 0 and 1. 0 produces high quality but low accuracy. 1 produces high accuracy but low quality. |
| `--save_original` | `-save_orig` | `False` | When upscaling or fixing faces, this will cause the original image to be saved rather than replaced. |
| `--variation <float>` | `-v<float>` | `0.0` | Add a bit of noise (0.0=none, 1.0=high) to the image in order to generate a series of variations. Usually used in combination with `-S<seed>` and `-n<int>` to generate a series of riffs on a starting image. See Variations. |
| `--with_variations <pattern>` | | `None` | Combine two or more variations. See Variations for how to use this. |
| `--save_intermediates <n>` | | `None` | Save the image from every nth step into an "intermediates" folder inside the output directory. |

Note that the width and height of the image must be multiples of 64. You can provide different values, but they will be rounded down to the nearest multiple of 64.
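The rounding rule in the note above is plain integer arithmetic. As a quick sketch (the helper name `round_down_to_multiple` is hypothetical, not a function from the script):

```python
def round_down_to_multiple(value: int, multiple: int = 64) -> int:
    """Round value down to the nearest multiple of `multiple`."""
    return (value // multiple) * multiple


for requested in (640, 500, 700):
    print(requested, "->", round_down_to_multiple(requested))
# 640 -> 640
# 500 -> 448
# 700 -> 640
```

So a request such as -W500 would be expected to produce a 448-pixel-wide image.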

img2img

This is an example of img2img:

invoke> waterfall and rainbow -I./vacation-photo.png -W640 -H480 --fit

This will modify the indicated vacation photograph by making it more like the prompt. Results will vary greatly depending on what is in the image. We also ask to --fit the image into a box no bigger than 640x480. Otherwise the image size will be identical to the provided photo and you may run out of memory if it is large.

In addition to the command-line options recognized by txt2img, img2img accepts additional options:

| Argument | Shortcut | Default | Description |
|---|---|---|---|
| `--init_img <path>` | `-I<path>` | `None` | Path to the initialization image. |
| `--fit` | `-F` | `False` | Scale the image to fit into the specified -H and -W dimensions. |
| `--strength <float>` | `-f<float>` | `0.75` | How hard to try to match the prompt to the initial image. Ranges from 0.0-0.99, with higher values replacing the initial image completely. |

inpainting

!!! example ""

```bash

invoke> waterfall and rainbow -I./vacation-photo.png -M./vacation-mask.png -W640 -H480 --fit

```

This will do the same thing as img2img, but image alterations will

only occur within transparent areas defined by the mask file specified

by `-M`. You may also supply just a single initial image with the areas

to overpaint made transparent, but you must be careful not to destroy

the pixels underneath when you create the transparent areas. See

[Inpainting](./INPAINTING.md) for details.

inpainting accepts all the arguments used for txt2img and img2img, as well as the --init_mask (-M), --invert_mask and --text_mask (-tm) arguments:

| Argument | Shortcut | Default | Description |
|---|---|---|---|
| `--init_mask <path>` | `-M<path>` | `None` | Path to an image the same size as the initial_image, with areas for inpainting made transparent. |
| `--invert_mask` | | `False` | If true, invert the mask so that transparent areas are opaque and vice versa. |
| `--text_mask <prompt> [<float>]` | `-tm <prompt> [<float>]` | | Create a mask from a text prompt describing part of the image. |

The mask may either be an image with transparent areas, in which case the inpainting will occur in the transparent areas only, or a black and white image, in which case all black areas will be painted into.
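The two mask conventions can be summarized per pixel. The sketch below is an illustration under stated assumptions, not the script's implementation: it treats "transparent" as an alpha value of exactly 0 and "black" as a grayscale value of exactly 0, and it applies `--invert_mask` to both kinds of mask, which the text only states for transparency masks. The function name `is_repainted` is hypothetical.

```python
def is_repainted(pixel, invert_mask: bool = False) -> bool:
    """Decide whether a mask pixel marks a region to be painted into.

    `pixel` is either an (R, G, B, A) tuple or a single grayscale value 0-255.
    Assumption: "transparent" means alpha == 0 and "black" means value == 0.
    """
    if isinstance(pixel, tuple):
        selected = pixel[3] == 0   # transparency mask: transparent areas are painted
    else:
        selected = pixel == 0      # black and white mask: black areas are painted
    # Inverting the mask flips which areas are selected.
    return selected != invert_mask


print(is_repainted((10, 20, 30, 0)))        # True: transparent pixel
print(is_repainted((10, 20, 30, 255)))      # False: opaque pixel
print(is_repainted(0))                      # True: black pixel
print(is_repainted((10, 20, 30, 0), True))  # False: mask inverted
```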

--text_mask (short form -tm) is a way to generate a mask using a text

description of the part of the image to replace. For example, if you have an

image of a breakfast plate with a bagel, toast and scrambled eggs, you can

selectively mask the bagel and replace it with a piece of cake this way:

invoke> a piece of cake -I /path/to/breakfast.png -tm bagel

The algorithm uses clipseg to classify different

regions of the image. The classifier puts out a confidence score for each region

it identifies. Generally regions that score above 0.5 are reliable, but if you

are getting too much or too little masking you can adjust the threshold down (to

get more mask), or up (to get less). In this example, by passing -tm a higher

value, we are insisting on a more stringent classification.

invoke> a piece of cake -I /path/to/breakfast.png -tm bagel 0.6
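The effect of the threshold can be sketched with a small grid of made-up confidence scores. This is not clipseg code; the function name `mask_from_scores` and the score values are hypothetical, and the 0.5 default simply mirrors the rule of thumb quoted above.

```python
def mask_from_scores(scores, threshold=0.5):
    """Return a boolean mask: True where the confidence exceeds the threshold."""
    return [[score > threshold for score in row] for row in scores]


# Made-up per-region confidence scores for the prompt "bagel".
scores = [
    [0.10, 0.55, 0.70],
    [0.05, 0.58, 0.90],
]
loose = mask_from_scores(scores, threshold=0.5)
strict = mask_from_scores(scores, threshold=0.6)

# Count masked regions: raising the threshold masks less of the image.
print(sum(map(sum, loose)), sum(map(sum, strict)))  # 4 2
```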

Custom Styles and Subjects

You can load and use hundreds of community-contributed Textual Inversion models just by typing the appropriate trigger phrase. Please see Concepts Library for more details.

Other Commands

The CLI offers a number of commands that begin with "!".

Postprocessing images

To postprocess a file using face restoration or upscaling, use the !fix

command.

!fix

This command runs a post-processor on a previously-generated image. It takes a

PNG filename or path and applies your choice of the -U, -G, or --embiggen

switches in order to fix faces or upscale. If you provide a filename, the script

will look for it in the current output directory. Otherwise you can provide a

full or partial path to the desired file.

Some examples:

!!! example "Upscale to 4X its original size and fix faces using codeformer"

```bash

invoke> !fix 0000045.4829112.png -G1 -U4 -ft codeformer

```

!!! example "Use the GFPGAN algorithm to fix faces, then upscale to 3X using --embiggen"

```bash

invoke> !fix 0000045.4829112.png -G0.8 -ft gfpgan

>> fixing outputs/img-samples/0000045.4829112.png

>> retrieved seed 4829112 and prompt "boy enjoying a banana split"

>> GFPGAN - Restoring Faces for image seed:4829112

Outputs:

[1] outputs/img-samples/000017.4829112.gfpgan-00.png: !fix "outputs/img-samples/0000045.4829112.png" -s 50 -S -W 512 -H 512 -C 7.5 -A k_lms -G 0.8

```

!mask

This command takes an image, a text prompt, and uses the clipseg algorithm to

automatically generate a mask of the area that matches the text prompt. It is

useful for debugging the text masking process prior to inpainting with the

--text_mask argument. See [Inpainting](./INPAINTING.md) for details.

Model selection and importation

The CLI allows you to add new models on the fly, as well as to switch among them rapidly without leaving the script.

!models

This prints out a list of the models defined in `configs/models.yaml`. The active model is bold-faced.

Example:

laion400m             not loaded

stable-diffusion-1.4  active      Stable Diffusion v1.4

waifu-diffusion       not loaded  Waifu Diffusion v1.3

!switch

This quickly switches from one model to another without leaving the CLI script.

invoke.py uses a memory caching system; once a model has been loaded,

switching back and forth is quick. The following example shows this in action.

Note how the second column of the !models table changes to cached after a

model is first loaded, and that the long initialization step is not needed when

loading a cached model.

invoke> !models

laion400m             not loaded

stable-diffusion-1.4  cached      Stable Diffusion v1.4

waifu-diffusion       active      Waifu Diffusion v1.3

invoke> !switch waifu-diffusion

>> Caching model stable-diffusion-1.4 in system RAM

>> Loading waifu-diffusion from models/ldm/stable-diffusion-v1/model-epoch08-float16.ckpt

| LatentDiffusion: Running in eps-prediction mode

| DiffusionWrapper has 859.52 M params.

| Making attention of type 'vanilla' with 512 in_channels

| Working with z of shape (1, 4, 32, 32) = 4096 dimensions.

| Making attention of type 'vanilla' with 512 in_channels

| Using faster float16 precision

>> Model loaded in 18.24s

>> Max VRAM used to load the model: 2.17G

>> Current VRAM usage:2.17G

>> Setting Sampler to k_lms

invoke> !models

laion400m             not loaded

stable-diffusion-1.4  cached      Stable Diffusion v1.4

waifu-diffusion       active      Waifu Diffusion v1.3

invoke> !switch stable-diffusion-1.4

>> Caching model waifu-diffusion in system RAM

>> Retrieving model stable-diffusion-1.4 from system RAM cache

>> Setting Sampler to k_lms

invoke> !models

laion400m             not loaded

stable-diffusion-1.4  active      Stable Diffusion v1.4

waifu-diffusion       cached      Waifu Diffusion v1.3
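The caching behaviour shown in this session can be modelled in a few lines. The class below is a toy sketch, not InvokeAI's model cache: the names `ModelCache`, `switch` and `status` are hypothetical, and it ignores details such as moving weights between VRAM and system RAM. It only shows why a second switch to the same model skips the slow loading step.

```python
class ModelCache:
    """Toy model switcher that keeps previously loaded models in memory."""

    def __init__(self, loader):
        self._loader = loader  # slow function: model name -> model object
        self._cached = {}      # every model loaded so far, by name
        self.active = None     # name of the model currently in use

    def switch(self, name):
        if name not in self._cached:
            # Slow path: only taken the first time a model is requested.
            self._cached[name] = self._loader(name)
        self.active = name
        return self._cached[name]

    def status(self, name):
        if name == self.active:
            return "active"
        return "cached" if name in self._cached else "not loaded"


load_calls = []
cache = ModelCache(lambda name: load_calls.append(name) or f"<{name} weights>")
cache.switch("stable-diffusion-1.4")
cache.switch("waifu-diffusion")
cache.switch("stable-diffusion-1.4")  # served from the cache, no reload
print(load_calls)                     # ['stable-diffusion-1.4', 'waifu-diffusion']
print(cache.status("waifu-diffusion"), "/", cache.status("laion400m"))  # cached / not loaded
```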

!import_model <path/to/model/weights>

This command imports a new model weights file into InvokeAI, makes it available

for image generation within the script, and writes out the configuration for the

model into configs/models.yaml for use in subsequent sessions.

Provide !import_model with the path to a weights file ending in .ckpt. If

you type a partial path and press tab, the CLI will autocomplete. Although it

will also autocomplete to .vae files, these are not currently supported (but

will be soon).

When you hit return, the CLI will prompt you to fill in additional information

about the model, including the short name you wish to use for it with the

!switch command, a brief description of the model, the default image width and

height to use with this model, and the model's configuration file. The latter

three fields are automatically filled with reasonable defaults. In the example

below, the bold-faced text shows what the user typed in with the exception of

the width, height and configuration file paths, which were filled in

automatically.

Example:

invoke> !import_model models/ldm/stable-diffusion-v1/model-epoch08-float16.ckpt

>> Model import in process. Please enter the values needed to configure this model:

Name for this model: waifu-diffusion

Description of this model: Waifu Diffusion v1.3

Configuration file for this model: configs/stable-diffusion/v1-inference.yaml

Default image width: 512

Default image height: 512

>> New configuration:

waifu-diffusion:

  config: configs/stable-diffusion/v1-inference.yaml

  description: Waifu Diffusion v1.3

  height: 512

  weights: models/ldm/stable-diffusion-v1/model-epoch08-float16.ckpt

  width: 512

OK to import [n]? y

>> Caching model stable-diffusion-1.4 in system RAM

>> Loading waifu-diffusion from models/ldm/stable-diffusion-v1/model-epoch08-float16.ckpt

| LatentDiffusion: Running in eps-prediction mode

| DiffusionWrapper has 859.52 M params.

| Making attention of type 'vanilla' with 512 in_channels

| Working with z of shape (1, 4, 32, 32) = 4096 dimensions.

| Making attention of type 'vanilla' with 512 in_channels

| Using faster float16 precision

invoke>

!edit_model <name_of_model>

The !edit_model command can be used to modify a model that is already defined

in configs/models.yaml. Call it with the short name of the model you wish to

modify, and it will allow you to modify the model's description, weights and

other fields.

Example:

invoke> !edit_model waifu-diffusion

>> Editing model waifu-diffusion from configuration file ./configs/models.yaml

description: Waifu diffusion v1.4beta

weights: models/ldm/stable-diffusion-v1/model-epoch10-float16.ckpt

config: configs/stable-diffusion/v1-inference.yaml

width: 512

height: 512

>> New configuration:

waifu-diffusion:

  config: configs/stable-diffusion/v1-inference.yaml

  description: Waifu diffusion v1.4beta

  weights: models/ldm/stable-diffusion-v1/model-epoch10-float16.ckpt

  height: 512

  width: 512

OK to import [n]? y

>> Caching model stable-diffusion-1.4 in system RAM

>> Loading waifu-diffusion from models/ldm/stable-diffusion-v1/model-epoch10-float16.ckpt

...

Continuing the earlier !fix example, the face-restored image can then be upscaled to 3X using --embiggen:

invoke> !fix 000017.4829112.gfpgan-00.png --embiggen 3

...lots of text...

Outputs:

[2] outputs/img-samples/000018.2273800735.embiggen-00.png: !fix "outputs/img-samples/000017.243781548.gfpgan-00.png" -s 50 -S 2273800735 -W 512 -H 512 -C 7.5 -A k_lms --embiggen 3.0 0.75 0.25

History processing

The CLI provides a series of convenient commands for reviewing previous actions, retrieving them, modifying them, and re-running them.

!history

The invoke script keeps track of all the commands you issue during a session, allowing you to re-run them. On Mac and Linux systems, it also writes the command-line history out to disk, giving you access to the most recent 1000 commands issued.

The !history command will return a numbered list of all the commands issued

during the session (Windows), or the most recent 1000 commands (Mac|Linux). You

can then repeat a command by using the command !NNN, where "NNN" is the

history line number. For example:

invoke> !history

...

[14] happy woman sitting under tree wearing broad hat and flowing garment

[15] beautiful woman sitting under tree wearing broad hat and flowing garment

[18] beautiful woman sitting under tree wearing broad hat and flowing garment -v0.2 -n6

[20] watercolor of beautiful woman sitting under tree wearing broad hat and flowing garment -v0.2 -n6 -S2878767194

[21] surrealist painting of beautiful woman sitting under tree wearing broad hat and flowing garment -v0.2 -n6 -S2878767194

...

invoke> !20

invoke> watercolor of beautiful woman sitting under tree wearing broad hat and flowing garment -v0.2 -n6 -S2878767194
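The !NNN recall step amounts to a lookup in the numbered history. The following sketch illustrates that idea only; it is not the script's history code, and the function name `expand_history_reference` and the dictionary layout are assumptions made for the example.

```python
import re


def expand_history_reference(command: str, history: dict[int, str]) -> str:
    """Replace a '!NNN' command with the history entry numbered NNN."""
    match = re.fullmatch(r"!(\d+)", command.strip())
    if match is None:
        return command  # not a history reference; leave it untouched
    number = int(match.group(1))
    if number not in history:
        raise KeyError(f"no history entry numbered {number}")
    return history[number]


history = {
    20: "watercolor of beautiful woman sitting under tree -v0.2 -n6 -S2878767194",
    21: "surrealist painting of beautiful woman sitting under tree -v0.2 -n6 -S2878767194",
}
print(expand_history_reference("!20", history))
# watercolor of beautiful woman sitting under tree -v0.2 -n6 -S2878767194
```

Commands that merely start with "!" followed by a word, such as !history or !fix, do not match the numeric pattern and pass through unchanged.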

!fetch

This command retrieves the generation parameters from a previously generated image and either loads them into the command line (Linux|Mac), or prints them out in a comment for copy-and-paste (Windows). You may provide either the name of a file in the current output directory, or a full file path. To process a batch, give the path to a folder of PNG files with the wildcard *.png, followed by the name of a text file (for example, commands.txt); the invoke commands used to generate the images are saved to that file for further processing.

This example loads the generation command for a single png file:

invoke> !fetch 0000015.8929913.png

# the script returns the next line, ready for editing and running:

invoke> a fantastic alien landscape -W 576 -H 512 -s 60 -A plms -C 7.5

This one fetches the generation commands from a batch of files and stores them

into selected.txt:

invoke> !fetch outputs\selected-imgs\*.png selected.txt

!replay

This command replays a text file generated by !fetch or created manually:

invoke> !replay outputs\selected-imgs\selected.txt

Note that these commands may behave unexpectedly if given a PNG file that was not generated by InvokeAI.

!search

This is similar to !history but it only returns lines that contain

the search string. For example:

invoke> !search surreal

[21] surrealist painting of beautiful woman sitting under tree wearing broad hat and flowing garment -v0.2 -n6 -S2878767194

!clear

This clears the search history from memory and disk. Be advised that this operation is irreversible and does not issue any warnings!

Command-line editing and completion

The command-line offers convenient history tracking, editing, and command completion.

- To scroll through previous commands and potentially edit/reuse them, use the ++up++ and ++down++ keys.

- To edit the current command, use the ++left++ and ++right++ keys to position the cursor, and then ++backspace++, ++delete++ or insert characters.

- To move to the very beginning of the command, type ++ctrl+a++ (or ++command+a++ on the Mac).

- To move to the end of the command, type ++ctrl+e++.

- To cut a section of the command, position the cursor where you want to start cutting and type ++ctrl+k++.

- To paste a cut section back in, position the cursor where you want to paste, and type ++ctrl+y++.

Windows users can get similar, but more limited, functionality if they launch

invoke.py with the winpty program and have the pyreadline3 library

installed:

> winpty python scripts\invoke.py

On the Mac and Linux platforms, when you exit invoke.py, the last 1000 lines of

your command-line history will be saved. When you restart invoke.py, you can

access the saved history using the ++up++ key.

In addition, limited command-line completion is installed. In various contexts, you can start typing your command and press ++tab++. A list of potential completions will be presented to you. You can then type a little more, hit ++tab++ again, and eventually autocomplete what you want.

When specifying file paths using the one-letter shortcuts, the CLI will attempt

to complete pathnames for you. This is most handy for the -I (init image) and

-M (init mask) paths. To initiate completion, start the path with a slash

(/) or ./. For example:

invoke> zebra with a mustache -I./test-pictures<TAB>

-I./test-pictures/Lincoln-and-Parrot.png -I./test-pictures/zebra.jpg -I./test-pictures/madonna.png

-I./test-pictures/bad-sketch.png -I./test-pictures/man_with_eagle/

You can then type ++z++, hit ++tab++ again, and it will autofill to zebra.jpg.
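Prefix completion of this kind can be sketched as follows. This is a simplified illustration, not the readline completer the CLI installs; the function name `complete` is hypothetical and the candidate list is copied from the example above rather than read from disk.

```python
import os


def complete(prefix: str, candidates: list[str]) -> tuple[str, list[str]]:
    """Return (autofill text, matching candidates) for a typed prefix."""
    matches = sorted(c for c in candidates if c.startswith(prefix))
    if not matches:
        return prefix, []
    # Extend the typed text as far as all matches agree.
    return os.path.commonprefix(matches), matches


candidates = [
    "-I./test-pictures/Lincoln-and-Parrot.png",
    "-I./test-pictures/zebra.jpg",
    "-I./test-pictures/madonna.png",
    "-I./test-pictures/bad-sketch.png",
    "-I./test-pictures/man_with_eagle/",
]
filled, matches = complete("-I./test-pictures/z", candidates)
print(filled)  # -I./test-pictures/zebra.jpg
```

With a single match the typed text is filled in completely; with several matches (for example after typing `ma`) only the shared part is filled in and the remaining candidates are listed.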

More text completion features (such as autocompleting seeds) are on their way.