# Weblate Translation

After doing a full integration test of 3 translation service providers
on my fork of InvokeAI, we have chosen
[Weblate](https://hosted.weblate.org). The other two viable options were
[Crowdin](https://crowdin.com/) and
[Transifex](https://www.transifex.com/).

Weblate was the choice because its hosted service provides a very solid
UX / DX, can scale as much as we may ever need, is FOSS itself, and
generously offers free hosted service to other libre projects like ours.

## How it works

Weblate hosts its own fork of our repo and establishes a kind of
unidirectional relationship between our repo and its fork.

### InvokeAI --> Weblate

The `invoke-ai/InvokeAI` repo has had the Weblate GitHub app added to
it. This app watches for changes to our translation source
(`invokeai/frontend/public/locales/en.json`) and then updates the
Weblate fork. The Weblate UI then knows there are new strings to be
translated, or changes to be made.

### Translation

Our translators can then update the translations on the Weblate UI. The
plan now is to invite individual community members who have expressed
interest in maintaining a language or two and give them access to the
app. We can also open the doors to the general public if desired.

### Weblate --> InvokeAI

When a translation is ready or changed, the system will make a PR to
`main`. We have a substantial degree of control over this and will
likely manually trigger these PRs instead of letting them fire off
automatically.

Once a PR is merged, we will still need to rebuild the web UI. I think
we can set things up so that we only need the rebuild when a totally new
language is added, but for now, we will stick to this relatively simple
setup.

## This PR

This PR sets up the web UI's translation stuff to work with Weblate:

- merged each locale into a single file
- updated the i18next config and UI to work with this simpler file
  structure
- updated our eslint and prettier rules to ensure the locale files have
  the same format as what Weblate outputs (`tabWidth: 4`)
- added a thank you to Weblate in our README
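
The locale merge described above can be sketched roughly as follows. This is only an illustration, not the script used in this PR: it assumes each locale was previously split into per-namespace JSON files and that the merged file keys each namespace at the top level. The function names `merge_locale` and `dump_locale` are hypothetical. The 4-space indent mirrors the `tabWidth: 4` setting mentioned in the list.

```python
import json
from pathlib import Path


def merge_locale(namespace_files: dict[str, Path]) -> dict:
    """Combine per-namespace JSON files into one dict keyed by namespace.

    The per-namespace layout is an assumption made for this sketch.
    """
    merged = {}
    for namespace, path in sorted(namespace_files.items()):
        merged[namespace] = json.loads(path.read_text(encoding="utf-8"))
    return merged


def dump_locale(data: dict) -> str:
    """Serialize with 4-space indentation and keep non-ASCII text readable."""
    return json.dumps(data, indent=4, ensure_ascii=False) + "\n"
```

Writing the result of `dump_locale(merge_locale(...))` to a single `<lang>.json` file would give one file per locale with consistent indentation.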

Once this is merged, I'll link Weblate to `main` and do a couple of
tests to ensure it is all working as expected.

This fixes a few cosmetic bugs in the merge models console GUI:

1) Fix the minimum and maximum ranges on alpha. Was 0.05 to 0.95. Now
   0.01 to 0.99.
2) Don't show the 'add_difference' interpolation method when two models
   are selected, or the other three methods when three models are
   selected.
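
The two rules above can be expressed as a small sketch. It is not the console GUI code itself: the function names are hypothetical, and the method identifiers are taken from the names used in these notes (`add_difference`, `weighted_sum`, `sigmoid`, `inverse_sigmoid`), which may differ from the identifiers in the actual implementation.

```python
# Alpha range after the fix described above.
ALPHA_MIN, ALPHA_MAX = 0.01, 0.99


def allowed_interp_methods(n_models: int) -> list[str]:
    """Return the interpolation methods to offer for a given model count."""
    if n_models == 2:
        return ["weighted_sum", "sigmoid", "inverse_sigmoid"]
    if n_models == 3:
        return ["add_difference"]
    raise ValueError("merging is sketched here for 2 or 3 models only")


def clamp_alpha(alpha: float) -> float:
    """Keep alpha inside the range the slider is meant to expose."""
    return min(max(alpha, ALPHA_MIN), ALPHA_MAX)
```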

## Convert v2 models in CLI

- This PR introduces a CLI prompt for the proper configuration file to
  use when converting a ckpt file, in order to support both inpainting
  and v2 model files.
- When a user tries to import a v2 model directly with `!import_model`,
  the CLI prints a warning that v2 ckpts are not directly supported and
  automatically converts the model into a diffusers model.

The user interaction looks like this:

```
(stable-diffusion-1.5) invoke> !import_model /home/lstein/graphic-art.ckpt
Short name for this model [graphic-art]: graphic-art-test
Description for this model [Imported model graphic-art]: Imported model graphic-art
What type of model is this?:
[1] A model based on Stable Diffusion 1.X
[2] A model based on Stable Diffusion 2.X
[3] An inpainting model based on Stable Diffusion 1.X
[4] Something else
Your choice: [1] 2
```

In addition, this PR enhances the bulk checkpoint import function. If a
directory path is passed to `!import_model` then it will be scanned for
`.ckpt` and `.safetensors` files. The user will be prompted to import
all the files found, or select which ones to import.
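
A minimal sketch of the scan-and-select flow described above, under stated assumptions: the scan here is non-recursive, and the answer format accepted by `pick_files` (`all` or comma-separated 1-based indices) is invented for illustration. Neither function name comes from the InvokeAI code base.

```python
from pathlib import Path

# Extensions named in the description above.
CHECKPOINT_SUFFIXES = {".ckpt", ".safetensors"}


def find_checkpoints(directory: Path) -> list[Path]:
    """Return checkpoint files directly inside `directory`, sorted by path."""
    return sorted(
        p for p in directory.iterdir()
        if p.is_file() and p.suffix.lower() in CHECKPOINT_SUFFIXES
    )


def pick_files(found: list[Path], answer: str) -> list[Path]:
    """Interpret a hypothetical prompt answer: 'all' or indices like '1,3'."""
    if answer.strip().lower() == "all":
        return list(found)
    picked = []
    for token in answer.split(","):
        if not token.strip():
            continue
        index = int(token)
        if not 1 <= index <= len(found):
            raise ValueError(f"no file numbered {index}")
        picked.append(found[index - 1])
    return picked
```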

Addresses
https://discord.com/channels/1020123559063990373/1073730061380894740/1073954728544845855

- fix alpha slider to show values from 0.01 to 0.99
- fix interpolation list to show 'difference' method for 3 models,
  and weighted_sum, sigmoid and inverse_sigmoid methods for 2

Porting over as many usable options to sliders as possible.

- Ported Face Restoration settings to Sliders.
- Ported Upscale Settings to Sliders.
- Ported Variation Amount to Sliders.
- Ported Noise Threshold to Sliders <-- Optimized slider so the values
  actually make sense.
- Ported Perlin Noise to Sliders.
- Added a suboption hook for the High Res Strength Slider.
- Fixed a couple of small issues with the Slider component.
- Ported Main Options to Sliders.

* new OffloadingDevice loads one model at a time, on demand
* fixup! new OffloadingDevice loads one model at a time, on demand
* fix(prompt_to_embeddings): call the text encoder directly instead of its forward method
  allowing any associated hooks to run with it.
* more attempts to get things on the right device from the offloader
* more attempts to get things on the right device from the offloader
* make offloading methods an explicit part of the pipeline interface
* inlining some calls where device is only used once
* ensure model group is ready after pipeline.to is called
* fixup! Strategize slicing based on free [V]RAM (#2572)
* doc(offloading): docstrings for offloading.ModelGroup
* doc(offloading): docstrings for offloading-related pipeline methods
* refactor(offloading): s/SimpleModelGroup/FullyLoadedModelGroup
* refactor(offloading): s/HotSeatModelGroup/LazilyLoadedModelGroup
  to frame it in the same terms as "FullyLoadedModelGroup"

---------

Co-authored-by: Damian Stewart <null@damianstewart.com>

- filter paths for `build-container.yml` and `test-invoke-pip.yml`
- add workflow to pass required checks on PRs with `paths-ignore`
  - this triggers if `test-invoke-pip.yml` does not
- fix "CI checks on main link" in `/README.md`

Assuming that mixing `"literal strings"` and `{'JSX expressions'}`
throughout the code is not for an explicit reason but just a result of
IDE autocompletion, I changed all props to be consistent with the
conventional style of using simple string literals where they are
sufficient.

This is a somewhat trivial change, but it makes the code a little more
readable and uniform.

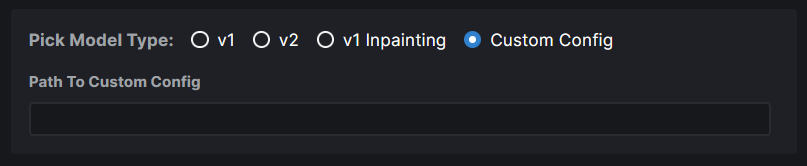

### WebUI Model Conversion

**Model Search Updates**

- Model Search now has a radio group that allows users to pick the type
  of model they are importing. If they know their model has a custom
  config file, they can assign it right here. Based on their pick, the
  model config data is automatically populated, and this same information
  is used when converting the model to `diffusers`.
- Files named `model.safetensors` and
  `diffusion_pytorch_model.safetensors` are excluded from the search
  because these are naming conventions used by diffusers models, and they
  would otherwise show up on the list because our conversion saves
  safetensors and not bin files.
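
The exclusion rule above amounts to a filename filter. The following is a sketch of that idea only, not the search code from this PR; `is_importable_checkpoint` is a hypothetical name, and the set of accepted extensions is an assumption.

```python
from pathlib import Path

# File names used by diffusers models, as listed in the notes above.
DIFFUSERS_WEIGHT_NAMES = {
    "model.safetensors",
    "diffusion_pytorch_model.safetensors",
}

# Assumed set of extensions the search looks for.
CHECKPOINT_SUFFIXES = {".ckpt", ".safetensors"}


def is_importable_checkpoint(path: Path) -> bool:
    """True for checkpoint-like files that are not diffusers weight files."""
    if path.name in DIFFUSERS_WEIGHT_NAMES:
        return False
    return path.suffix.lower() in CHECKPOINT_SUFFIXES
```

Without the name check, every converted diffusers folder would contribute several `.safetensors` files to the search results.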

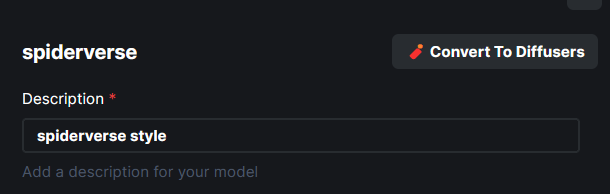

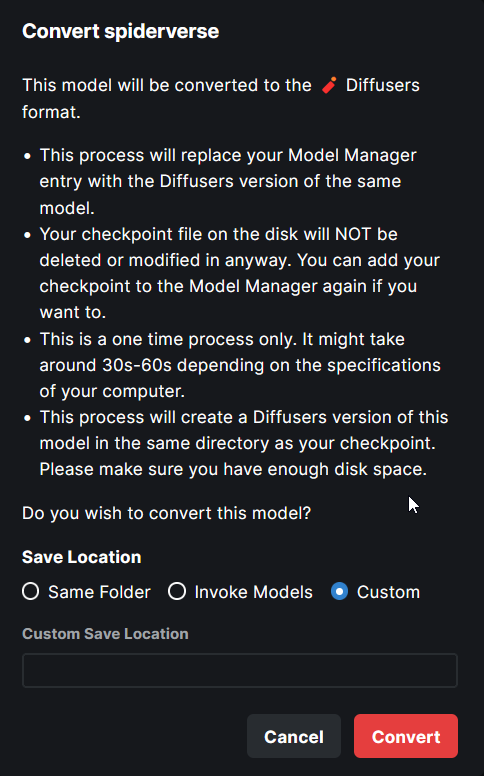

**Model Conversion UI**

- The **Convert To Diffusers** button can be found on the Edit page of
  any **Checkpoint Model**.
- When converting the model, the entire process is handled
  automatically. The config that was assigned when the ckpt was added
  is used in the process.
- Users can choose where to save the converted diffusers model: the
  same location as the ckpt, the InvokeAI models root folder, or a
  completely custom location.
- When the model is converted, the checkpoint entry is replaced with the
  diffusers model entry. A user can re-add the ckpt if they wish to.
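
The three save-location choices above could be resolved along these lines. This is a hedged sketch: the `SaveLocation` enum, the `resolve_output_dir` function, and the convention of naming the output folder after the checkpoint file are all assumptions for illustration, not the web UI's actual behavior.

```python
from enum import Enum
from pathlib import Path
from typing import Optional


class SaveLocation(Enum):
    SAME = "same"      # next to the ckpt file
    ROOT = "root"      # InvokeAI models root folder
    CUSTOM = "custom"  # user-supplied folder


def resolve_output_dir(
    choice: SaveLocation,
    ckpt_path: Path,
    models_root: Path,
    custom_dir: Optional[Path] = None,
) -> Path:
    """Pick the folder for the converted model (folder naming is assumed)."""
    model_name = ckpt_path.stem
    if choice is SaveLocation.SAME:
        return ckpt_path.parent / model_name
    if choice is SaveLocation.ROOT:
        return models_root / model_name
    if custom_dir is None:
        raise ValueError("a custom location must be provided")
    return custom_dir / model_name
```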

---

More or less done. Might make some minor UX improvements as I refine
things.