- The previous PR to truncate long filenames won't work on Windows due to
  lack of support for `os.pathconf()`. This works around the limitation by
  hardcoding the value for `PC_NAME_MAX` when `pathconf` is unavailable.
- The `multiprocessing` `send()` and `recv()` methods weren't working
  properly on Windows due to issues involving `utf-8` encoding and
  pickling/unpickling. Changed these calls to `send_bytes()` and
  `recv_bytes()`, which seems to fix the issue.

  Not fully tested on Windows since I lack a GPU machine to test on, but
  it is working on CPU.
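A minimal sketch of the `send_bytes()`/`recv_bytes()` approach, assuming the payload is text: the string is encoded to UTF-8 explicitly instead of being pickled by `send()`/`recv()`. `send_text()` and `recv_text()` are hypothetical helper names, not functions from the PR.

```python
from multiprocessing import Pipe
from multiprocessing.connection import Connection


def send_text(conn: Connection, text: str) -> None:
    # Encode explicitly so the bytes on the wire do not depend on pickling
    # or on the platform's default encoding.
    conn.send_bytes(text.encode("utf-8"))


def recv_text(conn: Connection) -> str:
    # recv_bytes() returns exactly one message sent with send_bytes().
    return conn.recv_bytes().decode("utf-8")


if __name__ == "__main__":
    parent_end, child_end = Pipe()
    send_text(parent_end, "a café at dusk")
    print(recv_text(child_end))
```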

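The `pathconf` fallback can be sketched as follows. The value 255 is an assumption (a common filename limit on NTFS and ext4); the value actually hardcoded in the PR is not stated, and `max_filename_length()` / `truncate_filename()` are hypothetical names.

```python
import os

# Assumed fallback; the PR's exact hardcoded value is not given here.
FALLBACK_NAME_MAX = 255


def max_filename_length(directory: str) -> int:
    """Maximum filename length for `directory`, with a Windows-safe fallback."""
    try:
        # os.pathconf() only exists on Unix; on Windows this raises AttributeError.
        limit = os.pathconf(directory, "PC_NAME_MAX")
    except (AttributeError, ValueError, OSError):
        return FALLBACK_NAME_MAX
    # pathconf can report -1 when the limit is indeterminate.
    return limit if limit > 0 else FALLBACK_NAME_MAX


def truncate_filename(name: str, directory: str) -> str:
    """Shorten `name` to fit the limit, preserving its extension.

    Note: the limit is in bytes on most filesystems; this sketch counts
    characters, which only matches for ASCII names.
    """
    limit = max_filename_length(directory)
    if len(name) <= limit:
        return name
    stem, ext = os.path.splitext(name)
    return stem[: limit - len(ext)] + ext
```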

Some quick bug fixes related to the UI for the 2.3.4 release.

**Features:**

- Added the ability to add Textual Inversions to the Negative Prompt
  using the UI.
- Added the ability to clear Textual Inversions and LoRAs from the Prompt
  and Negative Prompt with a single click.
- Textual Inversions now have status pips indicating whether they are
  used in the Main Prompt, the Negative Prompt, or both.

**Fixes:**

- Fixes #3138
- Fixes #3144
- Fixed `usePrompt` not updating the LoRA and TI count in the prompt /
  negative prompt.
- Fixed the TI regex not respecting names in substrings.
- Fixed trailing spaces when adding and removing LoRAs and TIs.
- Fixed an issue with the TI regex not respecting the `<` and `>` used
  by HuggingFace concepts.
- Some other minor bug fixes.
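The UI code for these fixes is not shown here. As a rough Python illustration of the two regex behaviours (whole-name matching, and HuggingFace-style `<name>` tokens), assuming a TI can appear either bare or wrapped in angle brackets; `ti_pattern()` and `remove_ti()` are hypothetical.

```python
import re


def ti_pattern(name: str) -> "re.Pattern[str]":
    """Match a TI name as a whole token, either bare or as a <name> concept."""
    bare = re.escape(name.strip("<>"))
    # The bracketed form is tried first; the bare form must not touch word
    # characters on either side, so "sushi" does not match inside "sushiroll".
    return re.compile(rf"<{bare}>|(?<!\w){bare}(?!\w)")


def remove_ti(prompt: str, name: str) -> str:
    """Remove every occurrence of the TI and collapse leftover whitespace."""
    without = ti_pattern(name).sub("", prompt)
    return re.sub(r"\s{2,}", " ", without).strip()
```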

NOTE: This PR works with `diffusers` models **only**. As a result,
InvokeAI now converts all legacy checkpoint/safetensors files into
diffusers models on the fly. This introduces a bit of extra delay when
loading legacy models. You can avoid this by converting the files to
diffusers either at import time or after the fact.

# Instructions:

1. Download LoRA .safetensors files of your choice and place them in
   `INVOKEAIROOT/loras`. Unlike the draft version of this PR, the file
   names can now contain underscores and hyphens. Names with arbitrary
   unicode characters are not supported.

2. Add `withLora(lora-file-basename,weight)` to your prompt. The weight
   is optional and will default to 1.0. A few examples, assuming that a
   LoRA file named `loras/sushi.safetensors` is present:

```
family sitting at dinner table eating sushi withLora(sushi,0.9)
family sitting at dinner table eating sushi withLora(sushi, 0.75)
family sitting at dinner table eating sushi withLora(sushi)
```

Multiple `withLora()` prompt fragments are allowed. The weight can be
arbitrarily large, but the useful range is roughly 0.5 to 1.0. Higher
weights make the LoRA's influence stronger. The last form of the
syntax, which relies on the default weight of 1.0, depends on the next
release of the Compel library and may not work at this time.

In my limited testing, I found it useful to reduce the CFG to avoid
oversharpening. I also got better results when running the LoRA on top
of the model it was trained against.

Don't try to load an SD 1.x-trained LoRA into an SD 2.x model, or vice
versa. You will get a nasty stack trace. This needs to be cleaned up.

3. You can change the location of the `loras` directory by passing the
   `--lora_directory` option to `invokeai`.

Documentation can be found in `docs/features/LORAS.md`.
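In InvokeAI the prompt syntax appears to be handled through the Compel library; the following is only a standalone sketch of how `withLora(name[, weight])` fragments could be pulled out of a prompt. The regex, `extract_loras()` and `lora_file()` are hypothetical; the name pattern is restricted to ASCII letters, digits, underscores and hyphens to mirror the note above.

```python
import re
from pathlib import Path
from typing import List, Tuple

# Name: ASCII letters, digits, "_" and "-"; weight: optional decimal number.
WITH_LORA = re.compile(
    r"withLora\(\s*([A-Za-z0-9_-]+)\s*(?:,\s*([0-9]*\.?[0-9]+)\s*)?\)"
)


def extract_loras(
    prompt: str, default_weight: float = 1.0
) -> Tuple[str, List[Tuple[str, float]]]:
    """Split a prompt into its remaining text and (lora_name, weight) pairs."""
    loras = [
        (m.group(1), float(m.group(2)) if m.group(2) else default_weight)
        for m in WITH_LORA.finditer(prompt)
    ]
    text = re.sub(r"\s{2,}", " ", WITH_LORA.sub("", prompt)).strip()
    return text, loras


def lora_file(root: Path, name: str, lora_dir: str = "loras") -> Path:
    """Where a LoRA named `name` would be looked up under INVOKEAIROOT."""
    return Path(root) / lora_dir / f"{name}.safetensors"
```

For example, `extract_loras("... eating sushi withLora(sushi, 0.75)")` yields the prompt text without the fragment plus `[("sushi", 0.75)]`.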

Note that this PR incorporates the unmerged 2.3.3 PR code (#3058) and
bumps the version number up to 2.3.4a0.

A zillion thanks to @felorhik, @neecapp and many others for this
implementation. @blessedcoolant and I just did a little tidying up.

- Remove unused (and probably dangerous) `unload_applied_loras()` method
- Remove unused `LoraManager.loras_to_load` attribute
- Change default LoRA weight to 0.75 when using the WebUI to add a LoRA to the prompt.

Currently, the Python 3.9 installer upgrades pip with this command:

`pip install --upgrade pip`

Because the pip executable is locked while it is running, this leads to
an error (at least on Windows):

```
ERROR: Could not install packages due to an OSError: [WinError 5] Access is denied: 'e:\invokeai\.venv\scripts\pip.exe'
Check the permissions.
```

To prevent this, the recommended command for upgrading pip is:

`python -m pip install --upgrade pip`

which does not lock the pip executable.
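A sketch of how an installer script could issue the recommended command, assuming it drives pip through `subprocess`; the helper names are hypothetical and not taken from the installer.

```python
import subprocess
import sys
from typing import List


def pip_upgrade_command(python: str = sys.executable) -> List[str]:
    # Running pip as a module means the interpreter (python.exe) is the
    # running process, so pip.exe itself is not locked and can be replaced.
    return [python, "-m", "pip", "install", "--upgrade", "pip"]


def upgrade_pip(python: str = sys.executable) -> None:
    # check=True raises CalledProcessError if the upgrade fails.
    subprocess.run(pip_upgrade_command(python), check=True)
```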

- removed app directory (a 3.0 feature), so app tests had to go too

- fixed regular expression in the concepts lib which was causing deprecation warnings

(note that this is actually release candidate 7, but I made the mistake

of including an old rc number in the branch and can't easily change it)

## Updating the root directory

- Introduced a new mechanism for updating the root directory when
  necessary. Currently it is only used to update the `invoke.sh` script
  with new dialog colors.

- Fixed ROCm torch module version number

## Loading legacy 2.0/2.1 models

- Due to not converting the `torch.dtype` precision correctly,
  `load_pipeline_from_original_stable_diffusion_ckpt()` was returning
  models of dtype float32 regardless of the precision setting. This caused
  a precision mismatch crash.
- Problem now fixed (also see #3057 for the same fix to `main`).

## Support for a fourth textual inversion embedding file format

- This variant, exemplified by "easynegative.safetensors", has a single
  'embparam' key containing a Tensor.
- Also refactored code to make it easier to read.
- Handle both pickle and safetensors formats.
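A minimal sketch of the dispatch described above, assuming loaders are chosen by file extension. The loaders are passed in so the sketch runs without torch; in real use one might pass `safetensors.torch.load_file` and `torch.load` (after pickle-scanning the file). Only the single-`embparam` layout is handled; the other supported formats are not reproduced here, and both function names are hypothetical.

```python
from pathlib import Path
from typing import Any, Callable, Dict

Loader = Callable[[Path], Dict[str, Any]]


def load_embedding_file(path: Path, load_safetensors: Loader, load_pickle: Loader) -> Dict[str, Any]:
    """Pick a loader by extension; pickle files should be scanned before loading."""
    if Path(path).suffix == ".safetensors":
        return load_safetensors(path)
    return load_pickle(path)


def embedding_from_state(state: Dict[str, Any]) -> Any:
    """Return the tensor for the variant with a single 'embparam' key."""
    if list(state) == ["embparam"]:
        return state["embparam"]
    # Other layouts are handled elsewhere and are not sketched here.
    raise ValueError(f"unrecognized embedding layout: {sorted(state)}")
```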

## Persistent model selection

- To be consistent with WebUI parameter behavior, the currently selected
  model is saved on exit and restored on restart for both the WebUI and the CLI.

## Bug fixes

- Name of VAE cache directory was "hug", not "hub". This is fixed.

## VAE fixes

- Allow custom VAEs to be assigned to a legacy model by placing a
  like-named VAE file adjacent to the checkpoint file.
- The custom VAE will be picked up and incorporated into the diffusers
  model if the user chooses to convert/optimize.

## Custom config file loading

- Some of the civitai models instruct users to place a custom .yaml file
  adjacent to the checkpoint file. This generally wasn't working because
  some of the .yaml files use FrozenCLIPEmbedder rather than
  WeightedFrozenCLIPEmbedder, and our FrozenCLIPEmbedder class doesn't
  handle the `personalization_config` section used by the textual
  inversion manager. Other .yaml files don't have the
  `personalization_config` section at all. Both these issues are
  fixed (#1685).

## Consistent pytorch version

- There was an inconsistency between the pytorch version requirement in
  `pyproject.toml` and the requirement in the installer (which does a
  little jiggery-pokery to load torch with the right CUDA/ROCm version
  prior to the main pip install). This was causing torch to be installed,
  then uninstalled, and reinstalled with a different version number. This
  is now fixed.


- Allow invokeai-update to update using a release, tag or branch.

- Allow CLI's root directory update routine to update directory

contents regardless of whether current version is released.

- In the model import routine, clarify the wording of the instructions shown
  when the user is asked to choose the type of model being imported.


This commit fixes bugs related to the on-the-fly conversion and loading of
legacy checkpoint models built on the SD-2.0 base.

- When legacy checkpoints built on SD-2.0 models were converted
  on-the-fly using `--ckpt_convert`, generation would crash with a
  precision incompatibility error.
- In addition, broken logic was causing some 2.0-derived ckpt files to
  be converted into diffusers and then processed through the legacy
  generation routines, which is not good.

- The installer now installs the pretty dialog-based console launcher.
- Added a dialogrc for custom colors.
- Added an updater to download the new launcher when users do an update.


- Imported V2 legacy models will now autoconvert into diffusers at load
  time regardless of the setting of `--ckpt_convert`.
- The model manager `heuristic_import()` function now looks for side-by-side
  yaml and vae files for custom configuration and VAE respectively.

Example of this:

- `illuminati-v1.1.safetensors`
- `illuminati-v1.1.vae.safetensors`
- `illuminati-v1.1.yaml`

When the user tries to import `illuminati-v1.1.safetensors`, the yaml
file will be used for its configuration, and the VAE will be used for
its VAE. Conversion to diffusers will happen if needed, and the yaml
file will be used to determine which V2 format (if any) to apply.
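A sketch of the side-by-side lookup for the example above. `find_companions()` is hypothetical, and the list of VAE extensions is an assumption: `.vae.safetensors` comes from the example, the others are illustrative. Note that `Path.with_suffix(".yaml")` would turn `illuminati-v1.1` into `illuminati-v1.yaml`, so the names are built by string concatenation instead.

```python
from pathlib import Path
from typing import Dict, Optional

# Assumption: which VAE extensions are probed is not stated in these notes.
VAE_SUFFIXES = (".vae.safetensors", ".vae.pt", ".vae.ckpt")


def find_companions(checkpoint: Path) -> Dict[str, Optional[Path]]:
    """Look for like-named .yaml and .vae.* files next to a checkpoint."""
    base = Path(checkpoint).with_suffix("")  # drop only the final extension
    config = base.parent / (base.name + ".yaml")
    vae = None
    for suffix in VAE_SUFFIXES:
        candidate = base.parent / (base.name + suffix)
        if candidate.exists():
            vae = candidate
            break
    return {"config": config if config.exists() else None, "vae": vae}
```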

NOTE that the changes to `ckpt_to_diffusers.py` were previously reviewed

by @JPPhoto on the `main` branch and approved.


Several related security fixes:

1. Port #2946 from main to the 2.3.2 branch. This closes a hole that allows
   a pickle checkpoint file to masquerade as a safetensors file.
2. Add pickle scanning to the checkpoint to diffusers conversion script.
3. Pickle-scan VAE files that are not in safetensors format.
4. Avoid running the scanner twice on the same file during the probing and
   conversion process.
5. Clean up diagnostic messages.
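One way to catch a pickle file that merely carries a `.safetensors` extension is to check the safetensors layout itself: an 8-byte little-endian header length followed by a JSON header. Whether #2946 uses this exact check is not stated; `looks_like_safetensors()` and the size cap are illustrative. A file that fails the check should be treated as a pickle and scanned (or rejected) rather than loaded.

```python
import json
import struct

# Illustrative sanity cap on the declared header size.
MAX_HEADER_BYTES = 100_000_000


def looks_like_safetensors(path: str) -> bool:
    """True if the file starts with a plausible safetensors header."""
    with open(path, "rb") as f:
        prefix = f.read(8)
        if len(prefix) < 8:
            return False
        (header_len,) = struct.unpack("<Q", prefix)
        if header_len == 0 or header_len > MAX_HEADER_BYTES:
            return False
        header = f.read(header_len)
    if len(header) != header_len:
        return False  # file is shorter than its declared header
    try:
        parsed = json.loads(header.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError):
        return False
    return isinstance(parsed, dict)
```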

- The command `invokeai-batch --invoke` was creating a time-stamped
  logfile with colons in its name, which is a Windows no-no. This corrects
  the problem by writing the timestamp out as "13-06-2023_8-35-10".
- Closes #3005
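A sketch that reproduces the example timestamp. The field order (day-month-year, then hour-minute-second with an unpadded hour) is inferred from "13-06-2023_8-35-10"; the exact format used by the PR is not shown, and `log_timestamp()` is a hypothetical name.

```python
from datetime import datetime


def log_timestamp(when: datetime) -> str:
    """Colon-free timestamp such as '13-06-2023_8-35-10'."""
    return (
        f"{when.day:02d}-{when.month:02d}-{when.year}_"
        f"{when.hour}-{when.minute:02d}-{when.second:02d}"
    )
```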

- Since 2.3.2, InvokeAI stores the next PNG file's numeric prefix in a
  file named `.next_prefix` in the outputs directory. This avoids the
  overhead of doing a directory listing to find out what file number comes
  next.
- The code uses advisory locking to prevent corruption of this file in
  the event that multiple InvokeAI processes try to access it simultaneously,
  but some users have experienced corruption of the file nevertheless.
- This PR addresses the problem by detecting a potentially corrupted
  `.next_prefix` file and falling back to the directory listing method. A
  fixed version of the file is then written out.
- Closes #3001
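A minimal sketch of the fallback, assuming output files are named with a numeric prefix followed by a dot (e.g. `000042.123456789.png`). Advisory locking is omitted, the function names are hypothetical, and "corrupted" here simply means the file cannot be parsed as a non-negative integer.

```python
import re
from pathlib import Path

PREFIX_FILE = ".next_prefix"
# Assumed naming scheme: "<digits>.<anything>.png".
NUMBERED_PNG = re.compile(r"^(\d+)\..*\.png$")


def scan_next_prefix(outdir: Path) -> int:
    """Slow path: one past the highest numeric prefix in the directory."""
    prefixes = [
        int(m.group(1))
        for p in outdir.iterdir()
        if (m := NUMBERED_PNG.match(p.name))
    ]
    return max(prefixes, default=-1) + 1


def claim_next_prefix(outdir: Path) -> int:
    """Return the next prefix and record its successor in .next_prefix."""
    outdir = Path(outdir)
    prefix_file = outdir / PREFIX_FILE
    try:
        value = int(prefix_file.read_text().strip())
        if value < 0:
            raise ValueError("negative prefix")
    except (OSError, ValueError):
        # Missing or corrupted file (UnicodeDecodeError is a ValueError too):
        # fall back to listing the directory.
        value = scan_next_prefix(outdir)
    prefix_file.write_text(f"{value + 1}\n")  # also repairs a corrupted file
    return value
```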

This PR addresses issues raised by #3008.

1. Update documentation to indicate the correct maximum batch size for
   TI training when xformers is and isn't used.
2. Update textual inversion code so that the default batch size is
   aware of xformers availability.
3. Add documentation for how to launch TI with distributed learning.

Lots of little bugs have been squashed since 2.3.2 and a new minor point
release is imminent. This PR updates the version number in preparation
for an RC.


Two related security fixes:

1. Port #2946 from main to 2.3.2 branch - this closes a hole that

allows a pickle checkpoint file to masquerade as a safetensors

file.

2. Add pickle scanning to the checkpoint to diffusers conversion

script. This will be ported to main in a separate PR.

This commit enhances support for V2 variant (epsilon and v-predict)

import and conversion to diffusers, by prompting the user to select the

proper config file during startup time autoimport as well as in the

invokeai installer script. Previously the user was only prompted when

doing an `!import` from the command line or when using the WebUI Model

Manager.


At some point `pyproject.toml` was modified to remove the

invokeai-update and invokeai-model-install scripts. This PR fixes the

issue.

If this was an intentional change, let me know and we'll discuss.

# Support SD version 2 "epsilon" and "v-predict" inference configurations in v2.3

This is a port of the `main` PR #2870 back into V2.3. It allows both

"epsilon" inference V2 models (e.g. "v2-base") and "v-predict" models

(e.g. "V2-768") to be imported and converted into correct diffusers

models. This depends on picking the right configuration file to use, and

since there is no intrinsic difference between the two types of models,

when we detect that a V2 model is being imported, we fall back to asking

the user to select the model type.
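A sketch of that flow. Detecting a V2 checkpoint by the width of one cross-attention key projection (1024 for SD 2.x's OpenCLIP text context, 768 for SD 1.x) is a widely used heuristic; whether InvokeAI uses this exact key is an assumption. The config names follow Stability AI's `v2-inference.yaml` / `v2-inference-v.yaml` and should be treated as placeholders. `shapes` maps parameter names to tensor shapes, e.g. `{k: tuple(v.shape) for k, v in state_dict.items()}`.

```python
from typing import Callable, Mapping, Sequence

# Cross-attention key projection in the second UNet input block.
V2_PROBE_KEY = "model.diffusion_model.input_blocks.2.1.transformer_blocks.0.attn2.to_k.weight"


def is_sd2_checkpoint(shapes: Mapping[str, Sequence[int]]) -> bool:
    """True if the probe weight's last dimension is 1024 (OpenCLIP context width)."""
    shape = shapes.get(V2_PROBE_KEY)
    return shape is not None and shape[-1] == 1024


def choose_v2_config(ask: Callable[[str], str] = input) -> str:
    """Epsilon and v-predict weights look alike, so ask the user."""
    answer = ask("Is this a 768-pixel v-prediction model (e.g. V2-768)? [y/N] ")
    if answer.strip().lower().startswith("y"):
        return "v2-inference-v.yaml"
    return "v2-inference.yaml"
```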

This PR ports the `main` PR #2871 to the v2.3 branch. This adjusts the

global diffusers model cache to work with the 0.14 diffusers layout of

placing models in HF_HOME/hub rather than HF_HOME/diffusers. It also

implements the one-time migration action to the new layout.
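A hypothetical sketch of such a one-time migration: model folders are moved from `HF_HOME/diffusers` into `HF_HOME/hub`, skipping anything already present, so a second run does nothing. Whether the real migration moves, copies or links entries, and what it does with the old directory, is not stated here.

```python
import shutil
from pathlib import Path
from typing import List


def migrate_diffusers_cache(hf_home: Path) -> List[str]:
    """Move entries from HF_HOME/diffusers to HF_HOME/hub; return what moved."""
    legacy = Path(hf_home) / "diffusers"
    hub = Path(hf_home) / "hub"
    if not legacy.is_dir():
        return []
    hub.mkdir(parents=True, exist_ok=True)
    moved = []
    for entry in sorted(legacy.iterdir()):
        target = hub / entry.name
        if not target.exists():  # never overwrite the new layout
            shutil.move(str(entry), str(target))
            moved.append(entry.name)
    return moved
```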


# Programmatically generate a large number of images varying by prompt and other image generation parameters

This is a little standalone script named `dynamic_prompts.py` that
enables the generation of dynamic prompts. Using YAML syntax, you
specify a template of prompt phrases and lists of generation parameters,
and the script will generate a cross product of prompts and generation
settings for you. You can save these prompts to disk for later use, or
pipe them to the invokeai CLI to generate the images on the fly.

Typical uses are testing step and CFG values systematically while
holding the seed and prompt constant, testing out various artists'
styles, and comparing the results of the same prompt across different
models.

A typical template will look like this:

```
model: stable-diffusion-1.5
steps: 30;50;10
seed: 50
dimensions: 512x512
cfg:
  - 7
  - 12
sampler:
  - k_euler_a
  - k_lms
prompt:
  style:
    - greg rutkowski
    - gustav klimt
  location:
    - the mountains
    - a desert
  object:
    - luxurious dwelling
    - crude tent
  template: a {object} in {location}, in the style of {style}
```

This will generate 96 different images (3 step values × 2 CFG values ×
2 samplers × 8 prompts), one for each combination of the values specified
in the template. For example, the prompt axis will generate a cross
product list like:

```
a luxurious dwelling in the mountains, in the style of greg rutkowski
a luxurious dwelling in the mountains, in the style of gustav klimt
a luxurious dwelling in a desert, in the style of greg rutkowski
... etc
```
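The expansion can be sketched with `itertools.product`. This is not the script's code: the template is written as the dict a YAML loader would return, `30;50;10` is read as an inclusive start;stop;step range (consistent with the count of 96), and the ordering of the output is not claimed to match the script's.

```python
from itertools import product
from typing import Any, Dict, List

TEMPLATE: Dict[str, Any] = {
    "model": "stable-diffusion-1.5",
    "steps": "30;50;10",
    "seed": 50,
    "dimensions": "512x512",
    "cfg": [7, 12],
    "sampler": ["k_euler_a", "k_lms"],
    "prompt": {
        "style": ["greg rutkowski", "gustav klimt"],
        "location": ["the mountains", "a desert"],
        "object": ["luxurious dwelling", "crude tent"],
        "template": "a {object} in {location}, in the style of {style}",
    },
}


def as_list(value: Any) -> List[Any]:
    """Expand 'start;stop;step' (stop inclusive) or wrap a scalar in a list."""
    if isinstance(value, list):
        return value
    if isinstance(value, str) and value.count(";") == 2:
        start, stop, step = (int(v) for v in value.split(";"))
        return list(range(start, stop + 1, step))
    return [value]


def expand_prompts(section: Dict[str, Any]) -> List[str]:
    """Fill the template with every combination of the phrase lists."""
    axes = {k: v for k, v in section.items() if k != "template"}
    return [
        section["template"].format(**dict(zip(axes, combo)))
        for combo in product(*axes.values())
    ]


def expand(template: Dict[str, Any]) -> List[Dict[str, Any]]:
    """One settings dict per combination of every field's values."""
    fields = {k: as_list(v) for k, v in template.items() if k != "prompt"}
    fields["prompt"] = expand_prompts(template["prompt"])
    return [dict(zip(fields, combo)) for combo in product(*fields.values())]
```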

A typical usage would be:

```
python scripts/dynamic_prompts.py --invoke --outdir=/tmp/scanning my_template.yaml
```

This will populate `/tmp/scanning` with each of the requested images,
and also generate a `log.md` file which you can open with an e-book
reader to view the results.

Full instructions can be obtained using the `--instructions` switch, and
an example template can be printed out using `--example`:

```
python scripts/dynamic_prompts.py --instructions
python scripts/dynamic_prompts.py --example > my_first_template.yaml
```

When a legacy ckpt model was converted into diffusers in RAM, the
built-in NSFW checker was not being disabled, in contrast to models
converted and saved to disk. Because InvokeAI does its NSFW checking as
a separate post-processing step (in order to generate blurred images
rather than black ones), this defeated the `--nsfw` and `--no-nsfw`
switches.

This closes #2836 and #2580.

Note: this fix will be applied to `main` as a separate PR.

At some point `pyproject.toml` was modified to remove the

invokeai-update script, which in turn breaks the update

function in the launcher scripts. This PR fixes the

issue.

If this was an intentional change, let me know and we'll discuss.


This is a different source/base branch from
https://github.com/invoke-ai/InvokeAI/pull/2823 but is otherwise the
same content. `yarn build` was run on this clean branch.

## What was the problem/requirement? (What/Why)

As part of a [change in 2.3.0](d74c4009cb),
the high resolution fix was no longer being applied when 'Use all' was
selected. This effectively meant that users had to manually analyze
images to ensure that the parameters were set to match.

~~Additionally, and never actually working, Upscaling and Face

Restoration parameters were also not pulling through with the action,

causing a similar usability issue.~~ See:

https://github.com/invoke-ai/InvokeAI/pull/2823#issuecomment-1445530362

## What was the solution? (How)

This change adds a new reducer to the `postprocessingSlice` file,

mimicking the `generationSlice` reducer to assign all parameters

appropriate for the post processing options. This reducer assigns:

* Hi-res's toggle button only if the type is `txt2img`, since `img2img`

hi-res was removed previously

* ~~Upscaling's toggle button, scale, denoising strength, and upscale

strength~~

* ~~Face Restoration's toggle button, type, strength, and fidelity (if

present/applicable)~~

### Minor

* Added `endOfLine: 'crlf'` to prettier's config to prevent all files

from being checked out on Windows due to difference of line endings (and

git not picking up those changes as modifications, causing ghost

modified files from Git)

### Revision 2:

* Removed the pulling of upscaling and face restoration parameters

### Revision 3:

* More defensive coding for when `hires_fix` is not present (assume false)

### Out of Scope

* Hi-res strength (applied as img2img strength in the initial image that

is generated) is not in the metadata of the final image and can't be

reconstructed easily

* Upscaling and face restoration have some peculiarities for multi-post

processing outside of the UI, which complicates it enough to scope out

of this PR.

## How were these changes tested?

* `yarn dev` => Server started successfully

* Manual testing on the development server to ensure parameters pulled

correctly

* `yarn build` => Success

## Notes

As with `generationSlice`, this code assumes `action.payload.image` is

valid and doesn't do a formal check on it to ensure it is valid.

- Crash would occur at the end of this sequence:
  - launch CLI
  - `!convert <URL pointing to a legacy ckpt file>`
  - Answer "Y" when asked to delete original .ckpt file
- This commit modifies `model_manager.heuristic_import()` to silently
  delete the downloaded legacy file after it has been converted into a
  diffusers model. The user is no longer asked to approve deletion.

NB: This should be cherry-picked into main once the refactor is done.

For your consideration, here is a revised set of codeowners for the v2.3

branch. The previous set had the bad property that both @blessedcoolant

and @lstein were codeowners of everything, meaning that we had the

superpower of being able to put in a PR and get full approval if any

other member of the team (not a codeowner) approved.

The proposed file is a bit more sensible but needs many eyes on it.
Please take a look and make improvements. I wasn't sure where to put
some people, such as @netsvetaev or @GreggHelt2.

I don't think it makes sense to tinker with the `main` CODEOWNERS until
the "Big Freeze" code reorganization happens.

I subscribed everyone to this PR. Apologies.


Simple script to generate a file of InvokeAI prompts and settings

that scan across steps and other parameters.

To use, create a file named "template.yaml" (or similar) formatted like this:

>>> cut here <<<

steps: "30:50:1"

seed: 50

cfg:

- 7

- 8

- 12

sampler:

- ddim

- k_lms

prompt:

- a sunny meadow in the mountains

- a gathering storm in the mountains

>>> cut here <<<

Create sections named "steps", "seed", "cfg", "sampler" and "prompt".

- Each section can have a constant value such as this:

steps: 50

- Or a range of numeric values in the format:

steps: "<start>:<stop>:<step>"

- Or a list of values in the format:

- value1

- value2

- value3

Be careful to: 1) put quotation marks around numeric ranges; 2) put a

space between the "-" and the value in a list of values; and 3) use spaces,

not tabs, at the beginnings of indented lines.

When you run this script, capture the output into a text file like this:

python generate_param_scan.py template.yaml > output_prompts.txt

"output_prompts.txt" will now contain an expansion of all the list

values you provided. You can examine it in a text editor such as

Notepad.

Now start the CLI, and feed the expanded prompt file to it using the

"!replay" command:

!replay output_prompts.txt

Alternatively, you can directly feed the output of this script

by issuing a command like this from the developer's console:

python generate_param_scan.py template.yaml | invokeai

You can use the web interface to view the resulting images and their

metadata.

- stable-diffusion-2.1-base base model from

stabilityai/stable-diffusion-2-1-base

- stable-diffusion-2.1-768 768 pixel model from

stabilityai/stable-diffusion-2-1-768

- sd-inpainting-2.0 512 pixel inpainting model from

runwayml/stable-diffusion-inpainting

This PR also bumps the version number up to v2.3.1.post2


author Kyle Schouviller <kyle0654@hotmail.com> 1669872800 -0800

committer Kyle Schouviller <kyle0654@hotmail.com> 1676240900 -0800

Adding base node architecture

Fix type annotation errors

Runs and generates, but breaks in saving session

Fix default model value setting. Fix deprecation warning.

Fixed node api

Adding markdown docs

Simplifying Generate construction in apps

[nodes] A few minor changes (#2510)

* Pin api-related requirements

* Remove confusing extra CORS origins list

* Adds response models for HTTP 200

[nodes] Adding graph_execution_state to soon replace session. Adding tests with pytest.

Minor typing fixes

[nodes] Fix some small output query hookups

[node] Fixing some additional typing issues

[nodes] Move and expand graph code. Add base item storage and sqlite implementation.

Update startup to match new code

[nodes] Add callbacks to item storage

[nodes] Adding an InvocationContext object to use for invocations to provide easier extensibility

[nodes] New execution model that handles iteration

[nodes] Fixing the CLI

[nodes] Adding a note to the CLI

[nodes] Split processing thread into separate service

[node] Add error message on node processing failure

Removing old files and duplicated packages

Adding python-multipart

black:

- extend-exclude legacy scripts
- config for python 3.9 as long as we support it

isort:

- set atomic to true to only apply if no syntax errors are introduced
- config for python 3.9 as long as we support it
- extend_skip_glob legacy scripts
- filter_files
- match line_length with black
- remove_redundant_aliases
- skip_gitignore
- set src paths
- include virtual_env to detect third party modules
- better order of hooks
- add flake8-comprehensions and flake8-simplify
- remove unnecessary hooks which are covered by previous hooks
- add hooks
  - check-executables-have-shebangs
  - check-shebang-scripts-are-executable

- Updated Spanish translation

- Updated Portuguese (Brazil) translation

- Fix a number of translation issues and add missing strings

- Fix vertical symmetry and symmetry steps issue when generation steps
  are adjusted

This PR adds the core of the node-based invocation system first

discussed in https://github.com/invoke-ai/InvokeAI/discussions/597 and

implements it through a basic CLI and API. This supersedes #1047, which

was too far behind to rebase.

## Architecture

### Invocations

The core of the new system is **invocations**, found in

`/ldm/invoke/app/invocations`. These represent individual nodes of

execution, each with inputs and outputs. Core invocations are already

implemented (`txt2img`, `img2img`, `upscale`, `face_restore`) as well as

a debug invocation (`show_image`). To implement a new invocation, all

that is required is to add a new implementation in this folder (there is

a markdown document describing the specifics, though it is slightly

out-of-date).

### Sessions

Invocations and links between them are maintained in a **session**.

These can be queued for invocation (either the next ready node, or all

nodes). Some notes:

* Sessions may be added to at any time (including after invocation), but

may not be modified.

* Links are always added with a node, and are always links from existing

nodes to the new node. These links can be relative "history" links, e.g.

`-1` to link from a previously executed node, and can link either

specific outputs, or can opportunistically link all matching outputs by

name and type by using `*`.

* There are no iteration/looping constructs. Most needs for this could

be solved by either duplicating nodes or cloning sessions. This is open

for discussion, but is a difficult problem to solve in a way that

doesn't make the code even more complex/confusing (especially regarding

node ids and history).
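To make the linking rules concrete, here is a toy append-only session in plain Python. It is not the InvokeAI API: `Node`, `Link` and `Session` are hypothetical, nodes run as soon as they are added instead of being queued, and `*` links match outputs to inputs by name only (the real system also matches by type).

```python
import inspect
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Sequence, Union


@dataclass
class Node:
    id: str
    fn: Callable[..., Dict[str, Any]]  # inputs as keyword args -> named outputs
    inputs: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Link:
    source: Union[str, int]       # node id, or negative history index (-1 = last run)
    output: str = "*"             # output name, or "*" for all name-matching outputs
    target: Optional[str] = None  # input that receives a single named output


class Session:
    """Nodes can be added (and run) at any time but never modified."""

    def __init__(self) -> None:
        self.history: List[str] = []  # executed node ids, oldest first
        self.results: Dict[str, Dict[str, Any]] = {}

    def _resolve(self, ref: Union[str, int]) -> str:
        return self.history[ref] if isinstance(ref, int) else ref

    def invoke(self, node: Node, links: Sequence[Link] = ()) -> Dict[str, Any]:
        if node.id in self.results:
            raise ValueError(f"node {node.id!r} already exists; sessions are append-only")
        kwargs = dict(node.inputs)
        accepted = inspect.signature(node.fn).parameters
        for link in links:
            # Links always come from nodes that already exist.
            outputs = self.results[self._resolve(link.source)]
            if link.output == "*":
                kwargs.update({k: v for k, v in outputs.items() if k in accepted})
            else:
                kwargs[link.target or link.output] = outputs[link.output]
        self.results[node.id] = node.fn(**kwargs)
        self.history.append(node.id)
        return self.results[node.id]
```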

### Services

These make up the core of the invocation system, found in
`/ldm/invoke/app/services`. One of the key design philosophies here is
that most components should be replaceable when possible. For example,
if someone wants to use cloud storage for their images, they should be
able to replace the image storage service easily.

The services are broken down as follows (several of these are

intentionally implemented with an initial simple/naïve approach):

* Invoker: Responsible for creating and executing **sessions** and

managing services used to do so.

* Session Manager: Manages session history. An on-disk implementation is

provided, which stores sessions as json files on disk, and caches

recently used sessions for quick access.

* Image Storage: Stores images of multiple types. An on-disk

implementation is provided, which stores images on disk and retains

recently used images in an in-memory cache.

* Invocation Queue: Used to queue invocations for execution. An

in-memory implementation is provided.

* Events: An event system, primarily used with socket.io to support

future web UI integration.
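A sketch of the "replaceable service" idea using the image storage example: callers depend on an abstract interface, and an on-disk implementation keeps recently used images in a small LRU cache. The class names and methods are hypothetical, not the classes in `/ldm/invoke/app/services`; a cloud-backed class would implement the same two methods.

```python
from abc import ABC, abstractmethod
from collections import OrderedDict
from pathlib import Path


class ImageStorageBase(ABC):
    """Interface the rest of the system depends on."""

    @abstractmethod
    def save(self, name: str, data: bytes) -> None: ...

    @abstractmethod
    def get(self, name: str) -> bytes: ...


class DiskImageStorage(ImageStorageBase):
    """Stores images on disk and keeps recently used ones in memory."""

    def __init__(self, root: str, cache_size: int = 8) -> None:
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)
        self._cache: "OrderedDict[str, bytes]" = OrderedDict()
        self._cache_size = cache_size

    def _remember(self, name: str, data: bytes) -> None:
        self._cache[name] = data
        self._cache.move_to_end(name)  # mark as most recently used
        while len(self._cache) > self._cache_size:
            self._cache.popitem(last=False)  # evict least recently used

    def save(self, name: str, data: bytes) -> None:
        (self.root / name).write_bytes(data)
        self._remember(name, data)

    def get(self, name: str) -> bytes:
        if name in self._cache:
            self._cache.move_to_end(name)
            return self._cache[name]
        data = (self.root / name).read_bytes()
        self._remember(name, data)
        return data
```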

## Apps

Apps are available through the `/scripts/invoke-new.py` script (to be
integrated/renamed).

### CLI

```
python scripts/invoke-new.py
```

Implements a simple CLI. The CLI creates a single session, and
automatically links all inputs to the previous node's output. Commands
are automatically generated from all invocations, with command options
being automatically generated from invocation inputs. Help is also
available for the CLI and for each command, and is very verbose.

Additionally, the CLI supports command piping for single-line entry of

multiple commands. Example:

```
> txt2img --prompt "a cat eating sushi" --steps 20 --seed 1234 | upscale | show_image
```
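A sketch of how such a line could be split into per-command argument lists with the standard `shlex` module (the leading `>` is the CLI prompt, not part of the input). `split_pipeline()` is hypothetical and assumes each `|` is surrounded by whitespace; a quoted lone `"|"` would be misread as a separator. Each resulting command would then be linked to the previous node's output.

```python
import shlex
from typing import List


def split_pipeline(line: str) -> List[List[str]]:
    """Split 'cmd args | cmd args | ...' into one argument list per command."""
    commands: List[List[str]] = [[]]
    for token in shlex.split(line):  # honours quotes: "a cat eating sushi" stays one token
        if token == "|":
            commands.append([])
        else:
            commands[-1].append(token)
    return [cmd for cmd in commands if cmd]
```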

### API

```
python scripts/invoke-new.py --api --host 0.0.0.0
```

Implements an API using FastAPI with Socket.io support for signaling.

API documentation is available at `http://localhost:9090/docs` or

`http://localhost:9090/redoc`. This includes OpenAPI schema for all

available invocations, session interaction APIs, and image APIs.

Socket.io signals are per-session, and can be subscribed to by session

id. These aren't currently auto-documented, though the code for event

emission is centralized in `/ldm/invoke/app/services/events.py`.

A very simple test html and script are available at
`http://localhost:9090/static/test.html`. This demonstrates creating a
session from a graph, invoking it, and receiving signals from Socket.io.

## What's left?

* There are a number of features not currently covered by invocations. I kept the set of invocations small during core development in order to simplify refactoring as I went. Now that the invocation code has stabilized, I'd love some help filling those out!

* There's no image metadata generated. It would be fairly straightforward (and would make good sense) to serialize either a session and node reference into an image, or the entire node into the image. There are a lot of questions to answer around source images, linked images, etc., though. This history is all stored in the session as well, and with complex sessions, the metadata in an image may lose its value. This needs some further discussion.

* We need a list of features (both current and future) that would be difficult to implement without looping constructs so we can have a good conversation around it. I'm really hoping we can avoid needing looping/iteration in the graph execution, since it'll necessitate separating an execution of a graph into its own concept/system, and will further complicate the system.

* The API likely needs further filling out to support the UI. I think using the new API for the current UI is possible, and potentially interesting, since it could work like the new/demo CLI in a "single operation at a time" workflow. I don't know how compatible that will be with our UI goals, but it would be nice to support only a single API.

* Deeper separation of systems. I intentionally tried not to touch Generate or other systems too much, but a lot could be gained by breaking those apart. Even breaking Args apart into two pieces (command line arguments and the parser for the current CLI) would make it easier to maintain. This is probably in the future, though.

author Kyle Schouviller <kyle0654@hotmail.com> 1669872800 -0800

committer Kyle Schouviller <kyle0654@hotmail.com> 1676240900 -0800

Adding base node architecture

Fix type annotation errors

Runs and generates, but breaks in saving session

Fix default model value setting. Fix deprecation warning.

Fixed node api

Adding markdown docs

Simplifying Generate construction in apps

[nodes] A few minor changes (#2510)

* Pin api-related requirements

* Remove confusing extra CORS origins list

* Adds response models for HTTP 200

[nodes] Adding graph_execution_state to soon replace session. Adding tests with pytest.

Minor typing fixes

[nodes] Fix some small output query hookups

[node] Fixing some additional typing issues

[nodes] Move and expand graph code. Add base item storage and sqlite implementation.

Update startup to match new code

[nodes] Add callbacks to item storage

[nodes] Adding an InvocationContext object to use for invocations to provide easier extensibility

[nodes] New execution model that handles iteration

[nodes] Fixing the CLI

[nodes] Adding a note to the CLI

[nodes] Split processing thread into separate service

[node] Add error message on node processing failure

Removing old files and duplicated packages

Adding python-multipart

I had inadvertently removed the type safety of our translation types when migrating to Weblate.

This PR fixes that, along with a number of translation string bugs that went unnoticed due to the lack of type safety.

- Add curated set of starter models based on team discussion. The final list of starter models can be found in `invokeai/configs/INITIAL_MODELS.yaml`

- To test model installation, I selected and installed all the models on the list. This led me to discover that the console front end crashes when there are no more starter models to display. I fixed this so that the starter model selection is no longer shown at all in that case.

- Update model table in 050_INSTALL_MODELS.md

- Add guide to dealing with low-memory situations

- Version is now `v2.3.1`

- add new script `scripts/make_models_markdown_table.py` that parses INITIAL_MODELS.yaml and creates a markdown table for the model installation documentation file

- update 050_INSTALLING_MODELS.md with the above table, and add a warning about additional license terms that apply to some of the models.

- Final list can be found in invokeai/configs/INITIAL_MODELS.yaml

- After installing all the models, I discovered a bug in the file selection form that caused a crash when no uninstalled models remained. This is now fixed.

The `sample_to_image` method in `ldm.invoke.generator.base` was still using ckpt-era code. As a result, when the WebUI was set to show "accurate" intermediate images, there'd be a crash. This PR corrects the problem.

- Closes #2784

- Closes #2775

- Discord member @marcus.llewellyn reported that some civitai 2.1-derived checkpoints were not converting properly (probably dreambooth-generated): https://discord.com/channels/1020123559063990373/1078386197589655582/1078387806122025070

- @blessedcoolant tracked this down to a missing key that was used to derive the vector length of the CLIP model by fetching the second dimension of the tensor at "cond_stage_model.model.text_projection".

- On inspection, I found that the same width can be recovered from the key 'cond_stage_model.model.ln_final.bias', and used that instead. I hope this is correct; I tested it on multiple v1, v2 and inpainting models, and they converted correctly.

- While debugging this, I found and fixed several other issues:

  - The model download script was not pre-downloading the OpenCLIP text_encoder or text_tokenizer. This is fixed.

  - Got rid of legacy code in `ckpt_to_diffuser.py` and replaced it with calls into `model_manager`.

  - More consistent status reporting in the CLI.

Without this change, the project can be installed on Python 3.9 but not used. This also fixes the container images.

Maybe we should re-enable Python 3.9 checks, which would have prevented this.

- Discord member @marcus.llewellyn reported that some civitai 2.1-derived checkpoints were not converting properly (probably dreambooth-generated): https://discord.com/channels/1020123559063990373/1078386197589655582/1078387806122025070

- @blessedcoolant tracked this down to a missing key that was used to derive the vector length of the CLIP model by fetching the second dimension of the tensor at "cond_stage_model.model.text_projection". His proposed solution was to hardcode a value of 1024.

- On inspection, I found that the same width can be recovered from the key 'cond_stage_model.model.ln_final.bias', and used that instead. I hope this is correct; I tested it on multiple v1, v2 and inpainting models, and they converted correctly.

- While debugging this, I found and fixed several other issues:

  - The model download script was not pre-downloading the OpenCLIP text_encoder or text_tokenizer. This is fixed.

  - Got rid of legacy code in `ckpt_to_diffuser.py` and replaced it with calls into `model_manager`.

  - More consistent status reporting in the CLI.
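The key lookup described above can be sketched without loading any weights by working on tensor shapes only. `clip_embedding_width` is a hypothetical helper; it tries `text_projection` first and falls back to `ln_final.bias`, whereas the PR text describes switching to `ln_final.bias`, so the real converter may consult only that key. With real tensors, `shapes` would be `{k: tuple(v.shape) for k, v in state_dict.items()}`.

```python
TEXT_PROJECTION = "cond_stage_model.model.text_projection"
LN_FINAL_BIAS = "cond_stage_model.model.ln_final.bias"


def clip_embedding_width(shapes: dict) -> int:
    """Return the OpenCLIP text embedding width from a checkpoint's tensor shapes.

    `shapes` maps state-dict keys to shape tuples (a stand-in for real tensors).
    """
    if TEXT_PROJECTION in shapes:
        # original approach: the second dimension of the projection matrix
        return shapes[TEXT_PROJECTION][1]
    if LN_FINAL_BIAS in shapes:
        # fallback: the final LayerNorm bias is a 1-D vector of the same width
        return shapes[LN_FINAL_BIAS][0]
    raise KeyError("no OpenCLIP text encoder keys found; not a v2-style checkpoint?")
```

A checkpoint that lacks `text_projection`, like the ones reported above, still resolves to a width through the LayerNorm bias instead of a hardcoded 1024.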

Root directory finding algorithm is:

1) use --root argument

2) use INVOKEAI_ROOT environment variable

3) use VIRTUAL_ENV environment variable

4) use ~/invokeai

Since developers are liable to put virtual environments in their favorite places, not necessarily in the invokeai root directory, this PR adds a sanity check that looks for the existence of `VIRTUAL_ENV/invokeai.init`, and moves on to (4) if not found.
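The search order above, as a small Python sketch. `find_invokeai_root` is a hypothetical name, and the sanity check follows the text literally by looking for `invokeai.init` directly inside `VIRTUAL_ENV`.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def find_invokeai_root(root_arg: Optional[str] = None,
                       environ: Mapping[str, str] = os.environ) -> Path:
    """Hypothetical sketch of the root-directory search order."""
    # 1) an explicit --root argument wins
    if root_arg:
        return Path(root_arg).expanduser().resolve()
    # 2) then the INVOKEAI_ROOT environment variable
    if environ.get("INVOKEAI_ROOT"):
        return Path(environ["INVOKEAI_ROOT"]).expanduser().resolve()
    # 3) then VIRTUAL_ENV, but only if it passes the sanity check
    venv = environ.get("VIRTUAL_ENV")
    if venv and (Path(venv) / "invokeai.init").exists():
        return Path(venv).resolve()
    # 4) otherwise fall back to ~/invokeai
    return Path.home() / "invokeai"
```

Passing `environ` explicitly keeps the function easy to test without touching the real process environment.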

# This will constitute v2.3.1+rc2

## Windows installer enhancements

1. resize installer window to give more room for configure and download forms

2. replace '\' with '/' in directory names to allow user to drag-and-drop folders into the dialogue boxes that accept directories.

3. similar change in CLI for the !import_model and !convert_model commands

4. better error reporting when a model download fails due to network errors

5. put the launcher scripts into a loop so that the menu reappears after invokeai, the merge script, etc. exit. User can quit with "Q".

6. do not try to download fp16 of sd-ft-mse-vae, since it doesn't exist.

7. cleaned up status reporting when installing models

8. Detect when install failed for some reason and print a helpful error message rather than a stack trace.

9. Detect window size and resize to minimum acceptable values to provide better display of configure and install forms.

10. Fix a bug in the CLI which prevented diffusers imported by their repo_ids from being correctly registered in the current session (though they install correctly)

11. Capitalize the "i" in Imported in the autogenerated descriptions.



Currently translated at 81.4% (382 of 469 strings)

translationBot(ui): update translation (Russian)

Currently translated at 81.6% (382 of 468 strings)

Co-authored-by: Sergey Krashevich <svk@svk.su>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/ru/

Translation: InvokeAI/Web UI

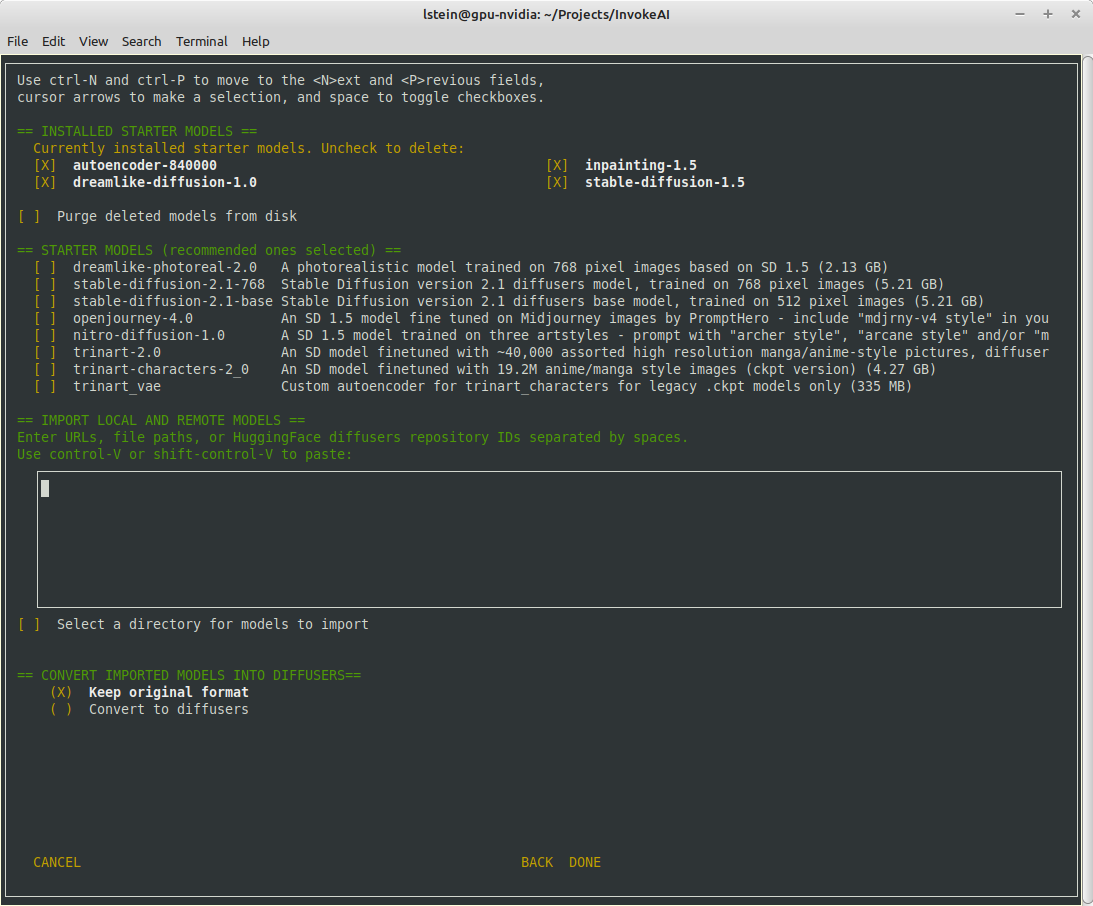

## Major Changes

The invokeai-configure script has now been refactored. The work of selecting and downloading initial models at install time is now done by a script named `invokeai-model-install` (module name is `ldm.invoke.config.model_install`).

Screen 1 - adjust startup options:

Screen 2 - select SD models:

The calling arguments for `invokeai-configure` have not changed, so nothing should break. After initializing the root directory, the script calls `invokeai-model-install` to let the user select the starting models to install.

`invokeai-model-install` puts up a console GUI with checkboxes to indicate which models to install. It respects the `--default_only` and `--yes` arguments so that CI will continue to work. Here are the various effects you can achieve:

`invokeai-configure`

This will use a console-based UI to initialize invokeai.init, download support models, and choose and download SD models

`invokeai-configure --yes`

Without activating the GUI, populate invokeai.init with default values, download support models and download the "recommended" SD models

`invokeai-configure --default_only`

Activate the GUI for changing init options, but don't show the SD download form, and automatically download the default SD model (currently SD-1.5)

`invokeai-model-install`

Select and install models. This can be used to download arbitrary models from the Internet, install HuggingFace models using their repo_id, or watch a directory for models to load at startup time

`invokeai-model-install --yes`

Import the recommended SD models without a GUI

`invokeai-model-install --default_only`

As above, but only import the default model

## Flexible Model Imports

The console GUI allows the user to import arbitrary models into InvokeAI using:

1. A HuggingFace Repo_id

2. A URL (http/https/ftp) that points to a checkpoint or safetensors file

3. A local path on disk pointing to a checkpoint/safetensors file or diffusers directory

4. A directory to be scanned for all checkpoint/safetensors files to be imported

The UI allows the user to specify multiple models to bulk import. The user can specify whether to import the ckpt/safetensors as-is, or convert to `diffusers`. The user can also designate a directory to be scanned at startup time for checkpoint/safetensors files.

## Backend Changes

To support the model selection GUI, this PR introduces a new method in `ldm.invoke.model_manager` called `heuristic_import()`. This accepts a string-like object which can be a repo_id, URL, local path or directory. It will figure out what the object is and import it. It interrogates the contents of checkpoint and safetensors files to determine what type of SD model they are: v1.x, v2.x or v1.x inpainting.
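One way `heuristic_import()` might decide what kind of string it was handed is sketched below. `classify_model_source` is a hypothetical helper that only classifies the source (it imports nothing), and it assumes the diffusers convention of a `model_index.json` file at the top of a diffusers model folder.

```python
import re
from pathlib import Path
from urllib.parse import urlparse

# a HuggingFace repo_id looks like "owner/name"
REPO_ID = re.compile(r"^[\w.-]+/[\w.-]+$")


def classify_model_source(source: str) -> str:
    """Guess what kind of model reference `source` is (hypothetical helper)."""
    if urlparse(source).scheme in ("http", "https", "ftp"):
        return "url"
    path = Path(source).expanduser()
    if path.is_dir():
        # a diffusers model folder has model_index.json at its top level;
        # any other directory would be scanned for checkpoint/safetensors files
        return "diffusers" if (path / "model_index.json").exists() else "directory"
    if path.is_file():
        return "checkpoint"  # a .ckpt or .safetensors file to be probed further
    if REPO_ID.match(source):
        return "repo_id"
    raise ValueError(f"don't know how to import {source!r}")
```

Order matters here: local paths are checked before the repo_id pattern, so a nonexistent relative path such as `models/foo` would be treated as a repo_id.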

## Installer

I am attaching a zip file of the installer if you would like to try the process from end to end.

[InvokeAI-installer-v2.3.0.zip](https://github.com/invoke-ai/InvokeAI/files/10785474/InvokeAI-installer-v2.3.0.zip)

Motivation: I want to be doing future prompting development work in the `compel` lib (https://github.com/damian0815/compel), which is currently pip-installable with `pip install compel`.

- At some point pathlib was added to the list of imported modules, and this broke the os.path code that assembled the sample data set.

- Now fixed by replacing os.path calls with Path methods


- Disable responsive resizing below starting dimensions (you can make the form larger, but not smaller than it was at startup)

- Fix bug that caused multiple --ckpt_convert entries (and similar) to be written to the init file.

This bug is related to the format in which we stored prompts for some time: an array of weighted subprompts.

This caused some strife when recalling a prompt if the prompt had colons in it, due to our recently introduced handling of negative prompts.

Currently there is no need to store a prompt as anything other than a string, so we revert to doing that.

Compatibility with structured prompts is maintained via a helper hook.

Many earlier embeddings use a common trigger token such as * or the Hebrew letter shin. Previously, the textual inversion manager would refuse to load the second and subsequent embeddings that used a previously claimed trigger. Now, when this case is encountered, the trigger token is replaced by `<filename>` and the user is informed of the fact.
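The trigger-collision rule can be sketched as a small registry. `TriggerRegistry` is hypothetical, and it assumes that `<filename>` means the embedding's file name without its extension; a second collision on the generated token is not handled here.

```python
from pathlib import Path
from typing import Dict, List


class TriggerRegistry:
    """Hypothetical sketch: map trigger tokens to embedding files, renaming on collision."""

    def __init__(self) -> None:
        self.triggers: Dict[str, str] = {}  # trigger token -> embedding file
        self.messages: List[str] = []       # notices shown to the user

    def register(self, trigger: str, embedding_file: str) -> str:
        if trigger in self.triggers:
            # token already claimed: fall back to "<filename>" (assumed: name without extension)
            new_trigger = f"<{Path(embedding_file).stem}>"
            self.messages.append(
                f"Trigger {trigger!r} is already in use; {embedding_file} will use {new_trigger}"
            )
            trigger = new_trigger
        self.triggers[trigger] = embedding_file
        return trigger
```

The first embedding keeps `*`; a later one that also uses `*` is loaded under its own file-derived token instead of being refused.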

1. Fixed display crash when the number of installed models is less than the number of desired columns to display them.

2. Added --ckpt_convert option to init file.

Enhancements:

1. Directory-based imports will not attempt to import components of diffusers models.

2. Diffusers directory imports are now supported.

3. Files that end with .ckpt that are not Stable Diffusion models (such as VAEs) are skipped during import.

Bugs identified in Psychedelicious's review:

1. The invokeai-configure form now tracks the current contents of `invokeai.init` correctly.

2. The autoencoders are no longer treated like installable models, but are instead mandatory support models. They will no longer appear in `models.yaml`.

Bugs identified in Damian's review:

1. If invokeai-model-install is started before the root directory is initialized, it will call invokeai-configure to fix the matter.

2. Fix bug that was causing empty `models.yaml` under certain conditions.

3. Made import textbox smaller

4. Hide the "convert to diffusers" options if nothing to import.

In theory, this reduces peak memory consumption by doing the conditioned and unconditioned predictions one after the other instead of in a single mini-batch.

In practice, it doesn't reduce the reported "Max VRAM used for this generation" for me, even without xformers. (But it does slow things down by a good 18%.)

That suggests to me that the peak memory usage is during VAE decoding, not the diffusion UNet, but YMMV. It does [improve things for gogurt's 16 GB M1](https://github.com/invoke-ai/InvokeAI/pull/2732#issuecomment-1436187407), so it seems worthwhile.

To try it out, use the `--sequential_guidance` option:

2dded68267/ldm/invoke/args.py (L487-L492)
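To make the difference concrete, here is a toy sketch of batched versus sequential classifier-free guidance using plain Python lists instead of tensors. The `toy_model` stand-in and the empty-string unconditional prompt are assumptions; the point is that both paths combine the predictions as `uncond + scale * (cond - uncond)` and give the same result, while the sequential path runs two mini-batches of size 1 instead of one of size 2.

```python
from typing import Callable, List, Sequence

Vector = List[float]
# a model maps a batch of latents plus a batch of conditionings to a batch of predictions
Model = Callable[[Sequence[Vector], Sequence[str]], List[Vector]]


def combine(uncond: Vector, cond: Vector, scale: float) -> Vector:
    """Classifier-free guidance: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


def guidance_batched(model: Model, x: Vector, scale: float) -> Vector:
    # both predictions in one mini-batch of size 2 (higher peak memory, one call)
    uncond, cond = model([x, x], ["", "prompt"])
    return combine(uncond, cond, scale)


def guidance_sequential(model: Model, x: Vector, scale: float) -> Vector:
    # two mini-batches of size 1, one after the other (lower peak memory, slower)
    (uncond,) = model([x], [""])
    (cond,) = model([x], ["prompt"])
    return combine(uncond, cond, scale)


def toy_model(latents: Sequence[Vector], conditionings: Sequence[str]) -> List[Vector]:
    # stand-in for the UNet: a non-empty conditioning doubles the prediction
    return [[v * (2.0 if c else 1.0) for v in lat] for lat, c in zip(latents, conditionings)]
```

The trade-off in the text follows from this structure: the model runs twice per step in the sequential path, which explains the slowdown, while each call only holds activations for one sample.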

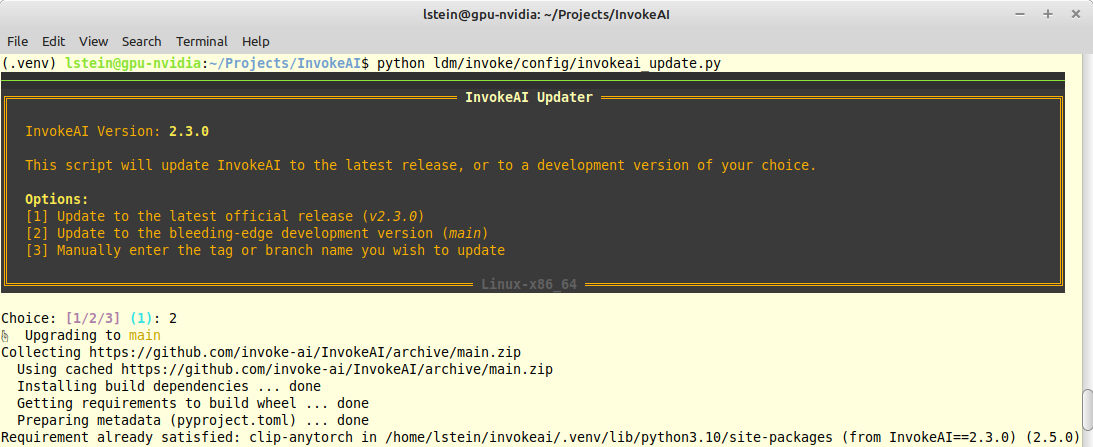

- Adds an update action to the launcher script

- This action calls a new python script, `invokeai-update`, which prompts the user to update to the latest release version, the main development version, or an arbitrary git tag or branch name.

- It then uses `pip` to update to whatever tag was specified.

The user interface (such as it is) looks like this:

- The TI script was looping over all files in the training image directory, regardless of whether they were image files or not. This PR adds a check for image file extensions.

- Closes #2715

- Fixes a longstanding bug in the token vector size code which caused .pt files to be assigned the wrong token vector length. These were then tossed out during directory scanning.


- Fixed the test for token length; tested on several .pt and .bin files

- Also added a `__main__` entrypoint for CLI.py, to make pdb debugging a bit more convenient.

When selecting the last model of the third model list in the model-merging TUI, it crashed because the code forgot about the "None" element.

Additionally, it seems that it always took the wrong model as the third model if one was selected.

This simple fix resolves both issues.

Added symmetry to Invoke based on discussions with @damian0815. This can currently only be activated via the CLI with the `--h_symmetry_time_pct` and `--v_symmetry_time_pct` options. Those take values from 0.0-1.0, exclusive, indicating the percentage through generation at which symmetry is applied as a one-time operation. To have symmetry in either axis applied after the first step, use a very low value like 0.001.
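A sketch of the two pieces involved: deciding at which step the one-time operation fires, and mirroring a 2-D grid. It assumes the step is found by truncating `time_pct * total_steps` and that horizontal symmetry copies the mirrored left half onto the right half; Invoke applies the operation to latent tensors, and its exact rounding and mirroring may differ.

```python
from typing import List

Grid = List[List[float]]  # rows x columns, standing in for one latent channel


def symmetry_step(time_pct: float, total_steps: int) -> int:
    """Step index at which the one-time symmetry operation fires (assumed rounding)."""
    if not 0.0 < time_pct < 1.0:
        raise ValueError("symmetry time percentage must be strictly between 0.0 and 1.0")
    return int(total_steps * time_pct)


def mirror_horizontally(grid: Grid) -> Grid:
    """Keep the left half of each row and mirror it onto the right half."""
    result = []
    for row in grid:
        half = len(row) // 2
        mirrored = row[::-1]
        result.append(list(row[:half]) + list(mirrored[half:]))
    return result


def mirror_vertically(grid: Grid) -> Grid:
    """Keep the top half of the rows and mirror it onto the bottom half."""
    half = len(grid) // 2
    mirrored = grid[::-1]
    return [list(r) for r in grid[:half] + mirrored[half:]]
```

With 50 steps, `--h_symmetry_time_pct 0.001` gives step 0, which matches the advice above to use a very low value to apply symmetry after the first step.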

- Not sure why, but at some point --ckpt_convert (which converts legacy checkpoints into diffusers in memory) stopped working due to float16/float32 issues.

- This commit repairs the problem.

- Also removed some debugging messages I found in passing.


- You can now achieve several effects:

`invokeai-configure`

This will use console-based UI to initialize invokeai.init,

download support models, and choose and download SD models

`invokeai-configure --yes`

Without activating the GUI, populate invokeai.init with default values,

download support models and download the "recommended" SD models

`invokeai-configure --default_only`

As above, but only download the default SD model (currently SD-1.5)

`invokeai-model-install`

Select and install models. This can be used to download arbitrary models from the Internet, install HuggingFace models using their repo_id, or watch a directory for models to load at startup time

`invokeai-model-install --yes`

Import the recommended SD models without a GUI

`invokeai-model-install --default_only`

As above, but only import the default model

A few bugs fixed.

- After the recent update to the Cancel Button, it was no longer respecting sizing in Floating Mode and the Beta Canvas. Fixed that.

- After the recent dependency update, useHotkeys was bugging out for the fullscreen hotkey `f`. I realized this was happening because the hotkey was initialized in two places: in both the gallery and the parameter floating button. Removed it from both those places and moved it to the InvokeTabs component. It makes sense for it to live there because it is a global hotkey.

- Also added index `0` to the default Accordion index in state in order to ensure that the main accordions stay open. Conveniently, this works great on all tabs. We have all the primary options in accordions, so they stay open. As for advanced settings, the first one is always Seed, which is an important accordion, so it opens by default.

I think there may be some more bugs. Looking into them.

After upgrading the deps, the full screen hotkey started to bug out. I believe this was happening because it was triggered in two different components causing it to run twice. Removed it from both floating buttons and moved it to the Invoke tab. Makes sense to keep it there as it is a global hotkey.

After the recent changes the Cancel button wasn't maintaining min height in floating mode. Also the new button group was not scaling in width correctly on the Canvas Beta UI. Fixed both.

- Adds a translation status badge

- Adds a blurb about contributing a translation (we want Weblate to be the source of truth for translations, and to avoid updating translations directly here)

- Upgraded all dependencies

- Removed beta TS 5.0 as it conflicted with some packages

- Added types for `Array.prototype.findLast` and `Array.prototype.findLastIndex` (these definitions are provided in TS 5.0)

- Fixed type import syntax in a few components

- Re-patched `redux-deep-persist` and tested to ensure the patch still works

The husky pre-commit command was `npx run lint`, but it should run `lint-staged`. Also, `npx` wasn't working for me. Changed the command to `npm run lint-staged` and it all works. Extended the `lint-staged` triggers to hit `json`, `scss` and `html`.

When encountering a bad embedding, InvokeAI was asking about reconfiguring models. This is because the embedding load error was never handled - it now is.


Model Manager lags a bit if you have a lot of models.

Basically added a fake delay to rendering the model list so the modal has time to load first. Hacky, but if it works, it works.

## What was the problem/requirement? (What/Why)

Frequently, I wish to cancel the processing of images but also want the current image to finalize before I do. To work around this, I need to wait until the current one finishes before pressing cancel.

## What was the solution? (How)

* Implemented a button that allows the user to "Cancel after current iteration," which stores a state in the UI that will attempt to cancel the processing after the current image finishes

* If the button is pressed again while it is spinning and before the next iteration happens, this will stop the scheduling of the cancel and behave as if the button was never pressed.
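The scheduling behavior described above amounts to a small state machine. The real change lives in the web UI's system state (TypeScript/Redux); this Python sketch with a hypothetical `CancelScheduler` class only models the transitions.

```python
from dataclasses import dataclass


@dataclass
class CancelScheduler:
    """Hypothetical sketch of the 'cancel after current iteration' toggle."""
    scheduled: bool = False
    cancelled: bool = False

    def toggle(self) -> None:
        # pressing the button again before the iteration ends un-schedules the cancel
        self.scheduled = not self.scheduled

    def on_iteration_complete(self) -> None:
        # called when the current image finishes; fires a pending cancel, if any
        if self.scheduled:
            self.cancelled = True
            self.scheduled = False
```

Pressing twice before the image finishes leaves `scheduled` false, so the completion handler does nothing, matching "behave as if the button was never pressed."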

### Minor

* Added `.yarn` to `.gitignore` as this was an output folder produced by following the frontend's README

### Revision 2

#### Major

* Changed from a standalone button to a context menu next to the original cancel button. Pressing the context menu gives a drop-down option to select which type of cancel the user prefers, and they can then press that button to cancel in the specified way

* Moved states to system state for cross-screen management and for toggling cancel types

* Added the distribution for the target yarn version (allowing any version of yarn to compile successfully), and updated the README to ensure `--immutable` is passed for onboarding developers

#### Minor

* Updated `.gitignore` to ignore specific yarn folders, as specified by their team: https://yarnpkg.com/getting-started/qa#which-files-should-be-gitignored

## How were these changes tested?

* `yarn dev` => Server started successfully

* Manual testing on the development server to ensure the button behaved as expected

* `yarn run build` => Success

### Artifacts

#### Revision 1

* Video showing the UI changes in action

https://user-images.githubusercontent.com/89283782/218347722-3a15ce61-2d8c-4c38-b681-e7a3e79dd595.mov

* Images showing the basic UI changes

#### Revision 2

* Video showing the UI changes in action

https://user-images.githubusercontent.com/89283782/219901217-048d2912-9b61-4415-85fd-9e8fedb00c79.mov

* Images showing the basic UI changes

(Default state)

(Drop-down context menu active)

(Scheduled cancel selected and running)

(Scheduled cancel started)

## Notes

* Using `SystemState`'s `currentStatus` variable (checking for the value `common:statusIterationComplete`) is an alternative to this approach, and would be more optimal since it should prevent the next iteration from even starting. However, since the status names are translation keys rather than an enum or other type, this method of tracking the current iteration was used instead.

* `isLoading` on `IAIIconButton` caused the icon button to also be disabled, so the current solution works around that by conditionally rendering the button's icon instead of passing that value.

* I don't have context on the development expectations for `dist` folder interactions (and couldn't find any documentation outside of the `.gitignore` mentioning that the folder should remain). Let me know if it needs to be modified a certain way.

- The checkpoint conversion script was generating diffusers models with the safety checker set to null. This resulted in models that could not be merged with ones that have the safety checker activated.

- This PR fixes the issue by incorporating the safety checker into all 1.x-derived checkpoints, regardless of the user's nsfw_checker setting.


Also tighten up the typing of `device` attributes in general.

Fixes

> ValueError: Expected a torch.device with a specified index or an integer, but got:cuda

Weblate's first PR was an attempt to fix some translation issues we had overlooked!

It wanted to remove some keys which it did not see in our translation source due to typos.

This PR instead corrects the key names to resolve the issues.

# Weblate Translation

After doing a full integration test of 3 translation service providers on my fork of InvokeAI, we have chosen [Weblate](https://hosted.weblate.org). The other two viable options were [Crowdin](https://crowdin.com/) and [Transifex](https://www.transifex.com/).

Weblate was the choice because its hosted service provides a very solid UX / DX, can scale as much as we may ever need, is FOSS itself, and generously offers free hosted service to other libre projects like ours.

## How it works

Weblate hosts its own fork of our repo and establishes a kind of unidirectional relationship between our repo and its fork.

### InvokeAI --> Weblate

The `invoke-ai/InvokeAI` repo has had the Weblate GitHub app added to it. This app watches for changes to our translation source (`invokeai/frontend/public/locales/en.json`) and then updates the Weblate fork. The Weblate UI then knows there are new strings to be translated, or changes to be made.

### Translation

Our translators can then update the translations on the Weblate UI. The plan now is to invite individual community members who have expressed interest in maintaining a language or two and give them access to the app. We can also open the doors to the general public if desired.

### Weblate --> InvokeAI

When a translation is ready or changed, the system will make a PR to `main`. We have a substantial degree of control over this and will likely manually trigger these PRs instead of letting them fire off automatically.

Once a PR is merged, we will still need to rebuild the web UI. I think we can set things up so that we only need the rebuild when a totally new language is added, but for now, we will stick to this relatively simple setup.

## This PR

This PR sets up the web UI's translation stuff to work with Weblate:

- merged each locale into a single file

- updated the i18next config and UI to work with this simpler file structure

- update our eslint and prettier rules to ensure the locale files have the same format as what Weblate outputs (`tabWidth: 4`)

- added a thank you to Weblate in our README

Once this is merged, I'll link Weblate to `main` and do a couple tests to ensure it is all working as expected.

This fixes a few cosmetic bugs in the merge models console GUI:

1) Fix the minimum and maximum ranges on alpha. Was 0.05 to 0.95; now 0.01 to 0.99.

2) Don't show the 'add_difference' interpolation method when 2 models are selected, or the other three methods when three models are selected
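A sketch of the two fixes: the alpha range, and which interpolation methods are offered for a given number of models. The method names come from the text; `weighted_sum` and `add_difference` use common textbook formulations, shown only to illustrate why `add_difference` needs a third model, and InvokeAI's merge code (which delegates to diffusers) may weight them differently. `sigmoid` and `inverse_sigmoid` are listed but not implemented.

```python
from typing import Dict, List

ALPHA_MIN, ALPHA_MAX = 0.01, 0.99  # slider range after this fix

# which interpolation methods are offered for how many selected models
METHODS_BY_MODEL_COUNT: Dict[int, List[str]] = {
    2: ["weighted_sum", "sigmoid", "inverse_sigmoid"],
    3: ["add_difference"],
}


def available_methods(num_models: int) -> List[str]:
    return METHODS_BY_MODEL_COUNT.get(num_models, [])


def clamp_alpha(alpha: float) -> float:
    return min(max(alpha, ALPHA_MIN), ALPHA_MAX)


def weighted_sum(a: float, b: float, alpha: float) -> float:
    # linear interpolation between two models' weights (illustrative formulation)
    return (1.0 - alpha) * a + alpha * b


def add_difference(a: float, b: float, c: float, alpha: float) -> float:
    # add the scaled difference between models B and C to model A (illustrative formulation)
    return a + alpha * (b - c)
```

Because `add_difference` takes three weights while the other methods take two, filtering the list by model count prevents the GUI from offering a method that cannot run.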

## Convert v2 models in CLI

- This PR introduces a CLI prompt for the proper configuration file to use when converting a ckpt file, in order to support both inpainting and v2 model files.

- When the user tries to directly !import a v2 model, it prints a warning that v2 ckpts are not directly supported and automatically converts the model into a diffusers model.

The user interaction looks like this:

```
(stable-diffusion-1.5) invoke> !import_model /home/lstein/graphic-art.ckpt
Short name for this model [graphic-art]: graphic-art-test
Description for this model [Imported model graphic-art]: Imported model graphic-art
What type of model is this?:
[1] A model based on Stable Diffusion 1.X
[2] A model based on Stable Diffusion 2.X
[3] An inpainting model based on Stable Diffusion 1.X
[4] Something else
Your choice: [1] 2
```

In addition, this PR enhances the bulk checkpoint import function. If a directory path is passed to `!import_model`, it will be scanned for `.ckpt` and `.safetensors` files. The user will be prompted to import all the files found, or to select which ones to import.
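The directory scan and the import prompt could be sketched as follows. `find_checkpoints` and `select_files` are hypothetical, the scan is assumed to be non-recursive, and the reply format ('a' for all, or 1-based numbers such as `1,3`) is an assumption rather than the CLI's actual prompt.

```python
from pathlib import Path
from typing import List

CHECKPOINT_SUFFIXES = {".ckpt", ".safetensors"}


def find_checkpoints(directory: Path) -> List[Path]:
    """Return checkpoint/safetensors files directly inside `directory`, sorted by name."""
    return sorted(
        p for p in Path(directory).iterdir()
        if p.is_file() and p.suffix.lower() in CHECKPOINT_SUFFIXES
    )


def select_files(found: List[Path], answer: str) -> List[Path]:
    """Interpret the user's reply: empty/'a'/'all' imports everything,
    otherwise 1-based numbers separated by commas or spaces (out-of-range numbers are ignored)."""
    answer = answer.strip().lower()
    if answer in ("", "a", "all"):
        return list(found)
    picks = [int(tok) for tok in answer.replace(",", " ").split()]
    return [found[i - 1] for i in picks if 1 <= i <= len(found)]
```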

Addresses https://discord.com/channels/1020123559063990373/1073730061380894740/1073954728544845855

- fix alpha slider to show values from 0.01 to 0.99

- fix interpolation list to show the 'difference' method for 3 models, and the weighted_sum, sigmoid and inverse_sigmoid methods for 2

Ported over as many usable options to sliders as possible.

- Ported Face Restoration settings to Sliders.

- Ported Upscale Settings to Sliders.

- Ported Variation Amount to Sliders.

- Ported Noise Threshold to Sliders <-- Optimized slider so the values actually make sense.

- Ported Perlin Noise to Sliders.

- Added a suboption hook for the High Res Strength Slider.

- Fixed a couple of small issues with the Slider component.

- Ported Main Options to Sliders.

- Corrected an error that caused the --full-precision argument to be ignored when models were downloaded using the --yes argument.

- Improved autodetection of v1 inpainting files; detection no longer relies on the file having 'inpaint' in the name.

* new OffloadingDevice loads one model at a time, on demand

* fixup! new OffloadingDevice loads one model at a time, on demand

* fix(prompt_to_embeddings): call the text encoder directly instead of its forward method, allowing any associated hooks to run with it.

* more attempts to get things on the right device from the offloader

* more attempts to get things on the right device from the offloader

* make offloading methods an explicit part of the pipeline interface

* inlining some calls where device is only used once

* ensure model group is ready after pipeline.to is called

* fixup! Strategize slicing based on free [V]RAM (#2572)

* doc(offloading): docstrings for offloading.ModelGroup

* doc(offloading): docstrings for offloading-related pipeline methods

* refactor(offloading): s/SimpleModelGroup/FullyLoadedModelGroup

* refactor(offloading): s/HotSeatModelGroup/LazilyLoadedModelGroup to frame it in the same terms as "FullyLoadedModelGroup"

---------

Co-authored-by: Damian Stewart <null@damianstewart.com>
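The idea behind a lazily loaded model group, and why the text encoder must be called directly rather than through `forward()`, can be sketched in plain Python. `Module` below is a simplified stand-in for `torch.nn.Module` (whose `__call__` runs forward pre-hooks while a direct `forward()` call skips them), and `LazilyLoadedGroup` is a hypothetical illustration, not the PR's `LazilyLoadedModelGroup`. Device names are plain strings.

```python
from typing import Any, Callable, List, Optional


class Module:
    """Stand-in for torch.nn.Module: __call__ runs forward pre-hooks, forward() does not."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.device = "cpu"
        self._pre_hooks: List[Callable[["Module", tuple], None]] = []

    def register_forward_pre_hook(self, hook: Callable[["Module", tuple], None]) -> None:
        self._pre_hooks.append(hook)

    def to(self, device: str) -> "Module":
        self.device = device
        return self

    def forward(self, x: Any) -> str:
        return f"{self.name}({x}) on {self.device}"

    def __call__(self, *args: Any) -> str:
        for hook in self._pre_hooks:
            hook(self, args)
        return self.forward(*args)


class LazilyLoadedGroup:
    """Keeps at most one member on the execution device; the rest stay offloaded."""

    def __init__(self, execution_device: str, offload_device: str = "cpu") -> None:
        self.execution_device = execution_device
        self.offload_device = offload_device
        self._hot: Optional[Module] = None

    def install(self, *models: Module) -> None:
        for model in models:
            model.to(self.offload_device)
            model.register_forward_pre_hook(self._load)

    def _load(self, module: Module, _args: tuple) -> None:
        if module is self._hot:
            return
        if self._hot is not None:
            self._hot.to(self.offload_device)  # evict the previously active model
        module.to(self.execution_device)
        self._hot = module
```

Calling `text_encoder(prompt)` triggers the pre-hook and moves the encoder onto the execution device first; calling `text_encoder.forward(prompt)` bypasses the hook and would run wherever the model happens to be.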

- filter paths for `build-container.yml` and `test-invoke-pip.yml`

- add workflow to pass required checks on PRs with `paths-ignore`

  - this workflow runs when `test-invoke-pip.yml` is skipped

- fix the "CI checks on main" link in `/README.md`


Assuming that mixing `"literal strings"` and `{'JSX expressions'}` throughout the code is not for an explicit reason but just a result of IDE autocompletion, I changed all props to be consistent with the conventional style of using simple string literals where they are sufficient.

This is a somewhat trivial change, but it makes the code a little more readable and uniform.

- quashed multiple bugs in model conversion and importing

- found an old issue in the handling of resumed interrupted downloads

- will require extensive testing

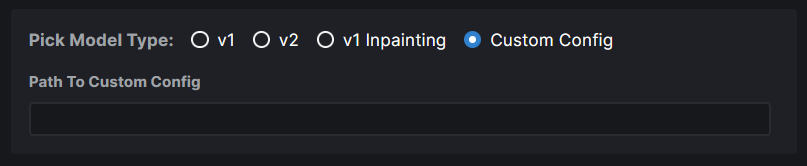

### WebUI Model Conversion

**Model Search Updates**

- Model Search now has a radio group that allows users to pick the type of model they are importing. If they know their model has a custom config file, they can assign it right here. Based on their pick, the model config data is automatically populated, and the same information is used when converting the model to `diffusers`.

- Files named `model.safetensors` and `diffusion_pytorch_model.safetensors` are excluded from the search. These names are conventions used by diffusers models, and because our conversion saves `.safetensors` rather than `.bin` files, they would otherwise show up in the list.
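A minimal sketch of the two search behaviours above: skipping diffusers weight files, and mapping the radio-group choice to a config file. The `v1` and `v2` config names come from elsewhere in these notes; the inpainting config name, the type keys, and both helper functions are assumptions for illustration.

```python
from pathlib import Path
from typing import Optional

# Weight filenames written inside diffusers-format model folders.
DIFFUSERS_WEIGHT_NAMES = {"model.safetensors", "diffusion_pytorch_model.safetensors"}

# Hypothetical mapping from the radio-group choice to a legacy config file.
CONFIG_FOR_MODEL_TYPE = {
    "v1": "v1-inference.yaml",
    "v2": "v2-inference-v.yaml",
    "inpainting": "v1-inpainting-inference.yaml",  # assumed name
}


def is_search_candidate(path: str) -> bool:
    """Legacy checkpoint files only; diffusers weight files are skipped."""
    p = Path(path)
    return p.suffix in {".ckpt", ".safetensors"} and p.name not in DIFFUSERS_WEIGHT_NAMES


def config_for(model_type: str, custom_config: Optional[str] = None) -> str:
    """A user-supplied custom config wins; otherwise use the type's default."""
    if custom_config:
        return custom_config
    return CONFIG_FOR_MODEL_TYPE[model_type]
```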

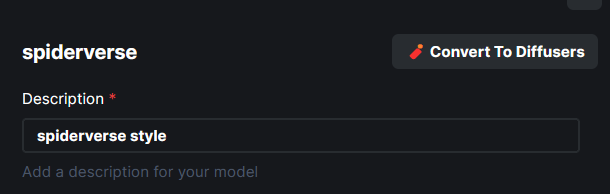

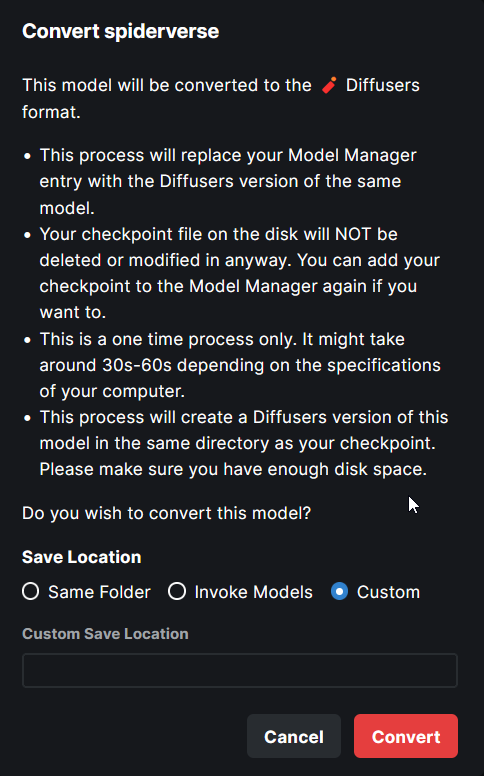

**Model Conversion UI**

- The **Convert To Diffusers** button can be found on the Edit page of any **Checkpoint Model**.

- When converting the model, the entire process is handled automatically. The config file that was assigned when the ckpt was added is used in the process.

- Users are given a choice of where to save the converted diffusers model: the same location as the ckpt, the InvokeAI models root folder, or a completely custom location.

- When the model is converted, the checkpoint entry is replaced with the diffusers model entry. Users can re-add the ckpt if they wish.
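The three save-location choices can be sketched as a small path resolver. The choice keys, the argument names, and the convention of naming the output folder after the model are all hypothetical; the point is that building paths with `pathlib` keeps separators correct on every platform.

```python
from pathlib import Path
from typing import Optional


def converted_model_dir(choice: str, ckpt_path: str, models_root: str,
                        model_name: str, custom_dir: Optional[str] = None) -> Path:
    """Hypothetical helper: decide where the converted diffusers folder is written."""
    if choice == "same_as_ckpt":
        base = Path(ckpt_path).parent
    elif choice == "models_root":
        base = Path(models_root)
    elif choice == "custom":
        if not custom_dir:
            raise ValueError("a custom location was chosen but none was given")
        base = Path(custom_dir)
    else:
        raise ValueError(f"unknown save location {choice!r}")
    # pathlib joins with the platform's separator, so no hard-coded slashes.
    return base / model_name
```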

---

More or less done. Might make some minor UX improvements as I refine

things.

Tensors with diffusers no longer have to be multiples of 8. This broke Perlin noise generation. We now generate noise for the next multiple of 8 at or above the requested size and return a cropped result. Fixes #2674.
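The pad-then-crop fix can be sketched as follows. `uniform_noise` is only a placeholder for a Perlin generator that requires dimensions divisible by 8, and nested lists stand in for tensors; the helper names are hypothetical.

```python
import random
from typing import Callable, List

Grid = List[List[float]]


def round_up_to_multiple(n: int, multiple: int = 8) -> int:
    """Smallest multiple of `multiple` that is >= n."""
    return ((n + multiple - 1) // multiple) * multiple


def cropped_noise(width: int, height: int,
                  make_noise: Callable[[int, int], Grid], multiple: int = 8) -> Grid:
    """Generate noise on a grid padded up to multiples of 8, then crop to width x height."""
    padded_w = round_up_to_multiple(width, multiple)
    padded_h = round_up_to_multiple(height, multiple)
    grid = make_noise(padded_w, padded_h)  # padded_h rows of padded_w values
    return [row[:width] for row in grid[:height]]


def uniform_noise(width: int, height: int, seed: int = 0) -> Grid:
    """Placeholder for a Perlin generator that only accepts multiples of 8."""
    if width % 8 or height % 8:
        raise ValueError("noise generator requires dimensions divisible by 8")
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(width)] for _ in range(height)]
```

For a 100x75 request, noise is generated at 104x80 and the extra border is discarded.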

`generator` now asks `InvokeAIDiffuserComponent` to do postprocessing work on latents after every step. Thresholding (now implemented as replacing latents outside the threshold with random noise) is called at this point. This postprocessing step is also where we can hook up symmetry and other image latent manipulations in the future.

Note: code at this layer doesn't need to worry about MPS as relevant torch functions are wrapped and made MPS-safe by `generator.py`.
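A dependency-free sketch of the thresholding rule described above: values outside `[-threshold, threshold]` are replaced with fresh random samples. It assumes standard normal noise and uses a flat list in place of a latent tensor; with torch the same idea would be a single `torch.where` over the latents. `threshold_latents` is a hypothetical name.

```python
import random
from typing import List, Optional


def threshold_latents(latents: List[float], threshold: float,
                      rng: Optional[random.Random] = None) -> List[float]:
    """Replace values outside [-threshold, threshold] with N(0, 1) samples (assumed distribution)."""
    rng = rng or random.Random()
    return [v if -threshold <= v <= threshold else rng.gauss(0.0, 1.0) for v in latents]
```

Running this after every denoising step, rather than once at the end, is what lets the postprocessing hook keep extreme values from compounding across steps.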

1. Now works with sites that produce lots of redirects, such as CIVITAI

2. Derive the name of the destination model file from the HTTP Content-Disposition header, if present.

3. Swap \\ for / in file paths provided by users, to hopefully fix issues with Windows.
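Steps 2 and 3 can be sketched with the standard library alone. `email.message.Message.get_filename()` parses the `filename` parameter of a Content-Disposition header (including RFC 2231 encoded forms). The fallback to the URL's last path segment and the basename stripping are assumptions added for illustration; `urllib.request` already follows HTTP redirects by default.

```python
import os
from email.message import Message
from typing import Optional
from urllib.parse import unquote, urlparse


def destination_filename(url: str, content_disposition: Optional[str] = None) -> str:
    """Prefer the server-supplied filename, else fall back to the URL path."""
    if content_disposition:
        msg = Message()
        msg["content-disposition"] = content_disposition
        name = msg.get_filename()
        if name:
            # Never trust directory components supplied by the server.
            return os.path.basename(name.replace("\\", "/"))
    return os.path.basename(unquote(urlparse(url).path))


def normalize_user_path(path: str) -> str:
    """Swap backslashes for forward slashes in user-supplied paths."""
    return path.replace("\\", "/")
```

Sites like CIVITAI often serve downloads from URLs such as `/api/download/123`, so without the header the saved file would get an unhelpful name.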

This PR adds a new attribute to `ldm.generate.Generate`, `embedding_trigger_strings`:

```python
from ldm.generate import Generate

gen = Generate(...)

# trigger strings compatible with the currently loaded model
strings = gen.embedding_trigger_strings
```

The trigger strings change when the model is updated, so that only those strings compatible with the current model are shown. Dynamically downloaded triggers from the HF Concepts Library will only show up after they are used for the first time. However, the full list of concepts available for download can be retrieved programmatically like this:

```python
from ldm.invoke.concepts_lib import HuggingFaceConceptsLibrary

concepts = HuggingFaceConceptsLibrary()
trigger_strings = concepts.list_concepts()
```

I have added the Arabic locale files. Some modifications to the code are needed to detect the language direction and apply it to the current document body.

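For example, we can use this:

```jsx
import { appWithTranslation, useTranslation } from 'next-i18next';
import React, { useEffect } from 'react';

// Hooks must run inside a component, so this lives in the top-level App.
function App({ Component, pageProps }) {
  const { i18n } = useTranslation();
  const direction = i18n.dir(); // 'rtl' for Arabic, 'ltr' otherwise

  useEffect(() => {
    document.body.dir = direction;
  }, [direction]);

  return <Component {...pageProps} />;
}

export default appWithTranslation(App);
```

This should be added to the app file. It uses next-i18next to automatically get the current language and set the body text direction (ltr or rtl) depending on the selected language.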

## Provide informative error messages when TI and Merge scripts have insufficient space for console UI

- The invokeai-ti and invokeai-merge scripts will crash if there is not enough space in the console to fit the user interface (even after responsive formatting).

- This PR intercepts the errors and prints a useful error message advising the user to make the window larger.
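A minimal sketch of the interception pattern, assuming the failure surfaces as a `curses.error` (the console UI libraries build on curses); the scripts may catch a more specific exception. `run_console_ui` is a hypothetical wrapper around the UI's entry point.

```python
import curses
import sys
from typing import Callable


def run_console_ui(app_main: Callable[[], None]) -> int:
    """Run the console UI; turn a too-small-window crash into a readable message."""
    try:
        app_main()
    except curses.error:
        print(
            "The console window is too small to display the interface. "
            "Please make the window larger and try again.",
            file=sys.stderr,
        )
        return 1
    return 0
```

Returning an exit code instead of re-raising keeps the user from seeing a traceback for what is really a layout problem.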

1. The invokeai-configure script has now been refactored. The work of selecting and downloading initial models at install time is now done by a script named invokeai-initial-models (module name: ldm.invoke.config.initial_model_select).

   The calling arguments for invokeai-configure have not changed, so nothing should break. After initializing the root directory, the script calls invokeai-initial-models to let the user select the starting models to install.

2. invokeai-initial-models puts up a console GUI with checkboxes to indicate which models to install. It respects the --default_only and --yes arguments so that CI will continue to work.

3. User can now edit the VAE assigned to diffusers models in the CLI.

4. Fixed a bug that caused a crash during model loading when the VAE is set to None rather than being empty.
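How the two flags from item 2 might bypass the checkbox GUI so CI can run unattended is sketched below. The flag names come from the notes; their exact semantics here (`--default_only` installs only the default model, `--yes` accepts the recommended set) and the catalog schema are assumptions, and `choose_models` is hypothetical.

```python
import argparse
from typing import Callable, Dict, List, Optional

Catalog = Dict[str, Dict[str, bool]]  # name -> {"recommended": ..., "default": ...}


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    parser = argparse.ArgumentParser(description="Select starting models (sketch)")
    parser.add_argument("--yes", action="store_true",
                        help="accept the recommended models without prompting")
    parser.add_argument("--default_only", action="store_true",
                        help="install only the default model")
    return parser.parse_args(argv)


def choose_models(args: argparse.Namespace, catalog: Catalog,
                  run_gui: Callable[[Catalog], List[str]]) -> List[str]:
    if args.default_only:
        return [name for name, info in catalog.items() if info.get("default")]
    if args.yes:
        return [name for name, info in catalog.items() if info.get("recommended")]
    return run_gui(catalog)  # interactive checkbox UI
```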


- fix unused variables and f-strings found by pyflakes

- use global_converted_ckpts_dir() to find the location of converted diffusers models

- fixed a bug in model_manager that was causing the description of converted models to read `Optimized version of {model_name}`

Strategize slicing based on free [V]RAM when not using xformers. Free [V]RAM is evaluated at every generation. When there's enough memory, the entire generation occurs without slicing. If there is not enough free memory, we use diffusers' sliced attention.
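A rough sketch of the decision. The memory estimate (a full self-attention score matrix of `batch * heads * (h*w)^2` elements at the latent resolution), the head count, the element size, and the safety factor are all assumptions, not the values InvokeAI uses. In a real pipeline, free CUDA memory could come from `torch.cuda.mem_get_info()`, and the result would toggle `enable_attention_slicing()` / `disable_attention_slicing()` on the diffusers pipeline.

```python
def attention_bytes(batch: int, latent_h: int, latent_w: int,
                    heads: int = 8, bytes_per_element: int = 2) -> int:
    """Approximate size of one full self-attention score matrix."""
    tokens = latent_h * latent_w
    return batch * heads * tokens * tokens * bytes_per_element


def should_slice(free_bytes: int, batch: int, latent_h: int, latent_w: int,
                 using_xformers: bool = False, safety_factor: float = 2.0) -> bool:
    """Slice attention only when xformers is off and memory looks too tight."""
    if using_xformers:
        return False
    needed = attention_bytes(batch, latent_h, latent_w) * safety_factor
    return needed > free_bytes
```

Because the check runs at every generation, a large image can fall back to slicing while smaller ones keep the faster unsliced path.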

- Adds an update action to launcher script

- This action calls a new Python script, `invokeai-update`, which prompts the user to update to the latest release version, the main development version, or an arbitrary git tag or branch name.

- It then uses `pip` to update to whatever tag was specified.
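A sketch of the kind of `pip` command such an update could run for a chosen tag or branch. It assumes installation from GitHub's source archive via a PEP 508 direct reference; the real `invokeai-update` script may build its command differently, and `update_command` is a hypothetical name.

```python
import sys
from typing import List

REPO_ARCHIVE = "https://github.com/invoke-ai/InvokeAI/archive/{ref}.zip"


def update_command(ref: str) -> List[str]:
    """Build (but do not run) a pip command installing InvokeAI at a tag or branch."""
    url = REPO_ARCHIVE.format(ref=ref)
    return [sys.executable, "-m", "pip", "install", "--upgrade", f"invokeai @ {url}"]
```

Using `sys.executable -m pip` targets the same virtual environment the launcher is running in; the list could then be passed to `subprocess.run(..., check=True)`.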

Some of the core features of this PR include:

- optionally push the image to Docker Hub (skipped in repos that haven't set it up)

- stop using the root user at runtime

- also trigger builds for update/docker/* and update/ci/docker/*

- always cache image from current branch and main branch

- separate caches for container flavors

- updated comments with instructions in build.sh and run.sh

This commit cleans up the code that did bulk imports of legacy model files. The code has been refactored, and the user is now offered the option of importing all the model files found in the directory, or selecting which ones to import.

Users can now pick the folder in which to save their converted diffusers model. It can be the same folder as the ckpt, the InvokeAI root models folder, or a totally custom location.

Fixed a couple of bugs:

1. The original config file for the ckpt file is derived from its entry in `models.yaml` rather than relying on the user to select one. This implies that V2 ckpt models need to be assigned `v2-inference-v.yaml` when they are first imported; otherwise they won't convert correctly. Note that V2 ckpts are currently imported with `v1-inference.yaml`, which isn't right either.

2. Fixed a backslash in the output diffusers path, which was causing load failures on Linux.

Remaining issues:

1. The radio buttons for selecting the model type are nonfunctional. It feels to me like these should be moved into the dialogue for importing ckpt/safetensors files, because this is where the algorithm needs help from the user.

2. The output diffusers model is written into the same directory as

the input ckpt file. The CLI does it differently and stores the