# Application-wide configuration service

This PR creates a new `InvokeAIAppConfig` object that reads

application-wide settings from an init file, the environment, and the

command line.

Arguments and fields are taken from the pydantic definition of the

model. Defaults can be set by creating a yaml configuration file that

has a top-level key of "InvokeAI" and subheadings for each of the

categories returned by `invokeai --help`.

The file looks like this:

[file: invokeai.yaml]

```
InvokeAI:
  Paths:
    root: /home/lstein/invokeai-main
    conf_path: configs/models.yaml
    legacy_conf_dir: configs/stable-diffusion
    outdir: outputs
    embedding_dir: embeddings
    lora_dir: loras
    autoconvert_dir: null
    gfpgan_model_dir: models/gfpgan/GFPGANv1.4.pth
  Models:
    model: stable-diffusion-1.5
    embeddings: true
  Memory/Performance:
    xformers_enabled: false
    sequential_guidance: false
    precision: float16
    max_loaded_models: 4
    always_use_cpu: false
    free_gpu_mem: false
  Features:
    nsfw_checker: true
    restore: true
    esrgan: true
    patchmatch: true
    internet_available: true
    log_tokenization: false
  Cross-Origin Resource Sharing:
    allow_origins: []
    allow_credentials: true
    allow_methods:
      - '*'
    allow_headers:
      - '*'
  Web Server:
    host: 127.0.0.1
    port: 8081
```

The default name of the configuration file is `invokeai.yaml`, located

in INVOKEAI_ROOT. You can use any OmegaConf dictionary by passing it to

the config object at initialization time:

```
from omegaconf import OmegaConf

omegaconf = OmegaConf.load('/tmp/init.yaml')
conf = InvokeAIAppConfig(conf=omegaconf)
```

By default, InvokeAIAppConfig will parse the contents of `sys.argv` at

initialization time. You may pass a list of strings in the optional

`argv` argument to use instead of the system argv:

```

conf = InvokeAIAppConfig(argv=['--xformers_enabled'])

```

It is also possible to set a value at initialization time. This value

has highest priority.

```

conf = InvokeAIAppConfig(xformers_enabled=True)

```

Any setting can be overridden by setting an environment variable of
the form `INVOKEAI_<setting>`, as in:

```

export INVOKEAI_port=8080

```

Order of precedence (from highest):

1) initialization options

2) command line options

3) environment variable options

4) config file options

5) pydantic defaults
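
The order of precedence above amounts to a layered lookup in which the first layer that defines a setting wins. The following is a minimal sketch using only the standard library; it is not how `InvokeAIAppConfig` is implemented, and `resolve_settings` and its parameter names are hypothetical, chosen for illustration.

```python
from collections import ChainMap


def resolve_settings(init_kwargs, cli_args, env_vars, file_values, defaults):
    """Combine five setting layers; earlier layers win over later ones.

    Hypothetical helper: each argument is a plain dict of setting -> value.
    """
    # ChainMap searches its maps left to right, so the argument order
    # below mirrors the order of precedence (highest first).
    return ChainMap(init_kwargs, cli_args, env_vars, file_values, defaults)


settings = resolve_settings(
    init_kwargs={"outdir": "/tmp"},
    cli_args={"port": 9000},
    env_vars={"port": 8080, "precision": "float32"},
    file_values={"port": 8081, "precision": "float16", "outdir": "outputs"},
    defaults={"port": 9090, "max_loaded_models": 2},
)

print(settings["port"])  # the command-line value beats environment, file and default
```

A setting that appears only in a lower layer, such as `max_loaded_models` here, falls through to that layer unchanged.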

Typical usage:

```

from invokeai.app.services.config import InvokeAIAppConfig

# get global configuration and print its nsfw_checker value

conf = InvokeAIAppConfig()

print(conf.nsfw_checker)

```

Finally, the configuration object is able to recreate its (modified)

yaml file, by calling its `to_yaml()` method:

```

conf = InvokeAIAppConfig(outdir='/tmp', port=8080)

print(conf.to_yaml())

```

# Legacy code removal and porting

This PR replaces Globals with the InvokeAIAppConfig system throughout,

and therefore removes the `globals.py` and `args.py` modules. It also

removes `generate` and the legacy CLI. ***The old CLI and web servers

are now gone.***

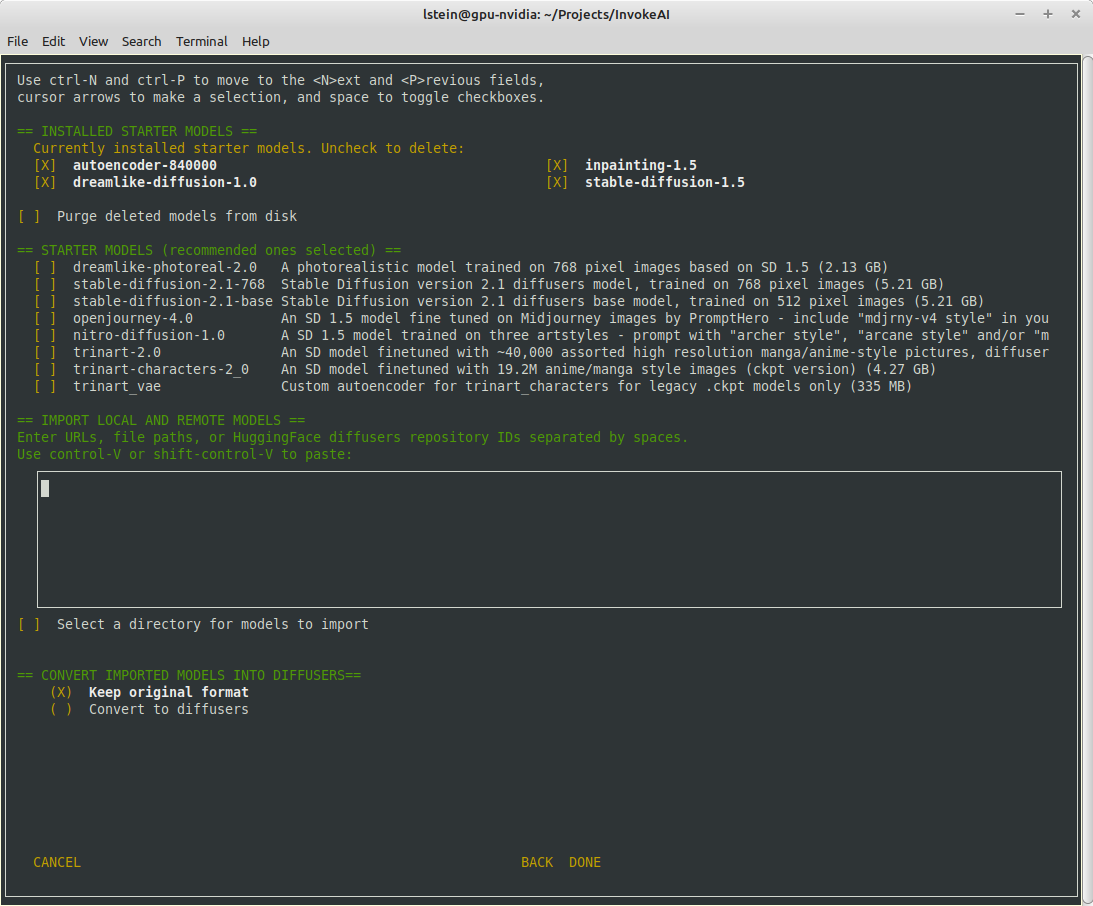

I have ported the functionality of the configuration script, the model

installer, and the merge and textual inversion scripts. The `invokeai`

command will now launch `invokeai-node-cli`, and `invokeai-web` will

launch the web server.

I have changed the continuous invocation tests to accommodate the new
command syntax in `invokeai-node-cli`. As a convenience, you can also
pass invocations to `invokeai-node-cli` (or its alias `invokeai`) on
the command line or as standard input:

```

invokeai-node-cli "t2i --positive_prompt 'banana sushi' --seed 42"

invokeai < invocation_commands.txt

```

- Make environment variable settings case InSenSiTive: the `INVOKEAI_MAX_LOADED_MODELS` and `InvokeAI_Max_Loaded_Models` environment variables will both set `max_loaded_models`.

- Updated realesrgan to use new config system.

- Updated textual_inversion_training to use new config system.

- Discovered a race condition when InvokeAIAppConfig is created at module load time, which makes it impossible to customize or replace the help message produced with `--help` on the command line. To fix this, moved all instances of `get_invokeai_config()` from module load time to object initialization time. Makes code cleaner, too.

- Added `--from_file` argument to `invokeai-node-cli` and changed github action to match. CI tests will hopefully work now.

- invokeai-configure updated to work with new config system

- migrate invokeai.init to invokeai.yaml during configure

- replace legacy invokeai with invokeai-node-cli

- add ability to run an invocation directly from invokeai-node-cli command line

- update CI tests to work with new invokeai syntax
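
The case-insensitive environment lookup mentioned in the list above can be sketched as follows. This is an illustration under stated assumptions rather than the PR's code: the helper name `settings_from_env` is hypothetical, and values are left as raw strings with no type conversion.

```python
import os


def settings_from_env(environ=None, prefix="INVOKEAI_"):
    """Collect settings from environment variables, ignoring case.

    Hypothetical helper: returns a dict of lower-cased setting names.
    """
    environ = os.environ if environ is None else environ
    settings = {}
    for name, value in environ.items():
        if name.upper().startswith(prefix):
            # Lower-case the remainder so INVOKEAI_MAX_LOADED_MODELS and
            # InvokeAI_Max_Loaded_Models map to the same setting name.
            settings[name[len(prefix):].lower()] = value
    return settings


print(settings_from_env({"InvokeAI_Max_Loaded_Models": "4", "PATH": "/usr/bin"}))
```

Variables without the prefix, such as `PATH` in the example, are ignored.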

* refetch images list if error loading

* tell user to refresh instead of refetching

* unused import

* feat(ui): use `useAppToaster` to make toast

* fix(ui): clear selected/initial image on error

---------

Co-authored-by: Mary Hipp <maryhipp@Marys-MacBook-Air.local>

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

This PR improves the logging module a tad bit along with the

documentation.

**New Look:**

## Usage

**General Logger**

InvokeAI has a module-level logger. The example below uses the default
logger `InvokeAI`, and all your messages will be logged under that name.

```python
from invokeai.backend.util.logging import logger

logger.critical("CriticalMessage")  # In Bold Red
logger.error("Info Message")  # In Red
logger.warning("Info Message")  # In Yellow
logger.info("Info Message")  # In Grey
logger.debug("Debug Message")  # In Grey
```

Results:

```

[12-05-2023 20]::[InvokeAI]::CRITICAL --> This is an info message [In Bold Red]

[12-05-2023 20]::[InvokeAI]::ERROR --> This is an info message [In Red]

[12-05-2023 20]::[InvokeAI]::WARNING --> This is an info message [In Yellow]

[12-05-2023 20]::[InvokeAI]::INFO --> This is an info message [In Grey]

[12-05-2023 20]::[InvokeAI]::DEBUG --> This is an info message [In Grey]

```

**Custom Logger**

If you want to use a custom logger for your module, you can import it

the following way.

```python
from invokeai.backend.util.logging import logging

logger = logging.getLogger(name='Model Manager')

logger.critical("CriticalMessage")  # In Bold Red
logger.error("Info Message")  # In Red
logger.warning("Info Message")  # In Yellow
logger.info("Info Message")  # In Grey
logger.debug("Debug Message")  # In Grey
```

Results:

```

[12-05-2023 20]::[Model Manager]::CRITICAL --> This is an info message [In Bold Red]

[12-05-2023 20]::[Model Manager]::ERROR --> This is an info message [In Red]

[12-05-2023 20]::[Model Manager]::WARNING --> This is an info message [In Yellow]

[12-05-2023 20]::[Model Manager]::INFO --> This is an info message [In Grey]

[12-05-2023 20]::[Model Manager]::DEBUG --> This is an info message [In Grey]

```

**When to use custom logger?**

It is recommended to use a custom logger if your module is not a part of

base InvokeAI. For example: custom extensions / nodes.

1. if retrieving an item from the queue raises an exception, the

InvocationProcessor thread crashes, but the API continues running in

a non-functional state. This fixes the issue

2. when there are no items in the queue, sleep 1 second before checking

again.

3. Also ensures the thread isn't crashed if an exception is raised from

invoker, and emits the error event

Intentionally using base Exceptions because for now we don't know which

specific exception to expect.

Fixes (sort of)? #3222
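
The three points above can be sketched as a single worker loop. This is a minimal illustration, not the InvocationProcessor code: `process_queue`, `invoke`, `emit_error` and `stop_event` are hypothetical names, and the queue is a plain `queue.Queue`.

```python
import queue
import threading


def process_queue(items, invoke, emit_error, stop_event, idle_sleep=1.0):
    """Run queue items until stop_event is set, surviving any exception."""
    while not stop_event.is_set():
        try:
            item = items.get_nowait()
        except queue.Empty:
            # Nothing queued: pause before checking again instead of spinning.
            # Event.wait() sleeps up to idle_sleep but returns early on stop.
            stop_event.wait(idle_sleep)
            continue
        except Exception as exc:
            # Retrieval itself failed; report it and keep the thread alive.
            emit_error(None, exc)
            continue
        try:
            invoke(item)
        except Exception as exc:
            # A failing invocation emits an error event, not a thread crash.
            emit_error(item, exc)
```

Catching the base `Exception` mirrors the choice described above; narrower exception types could replace it once the expected failures are known.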

- do not show canvas intermediates in gallery

- do not show progress image in uploads gallery category

- use custom dark mode `localStorage` key (prevents collision with

commercial)

- use variable font (reduce bundle size by factor of 10)

- change how custom headers are used

- use style injection for building package

- fix tab icon sizes

when building for package, CSS is all in JS files. when used as a package, it is then injected into the page. a bit of a hack to work around missing CSS in the commercial product

**Features:**

- Add UniPC Scheduler

- Add Euler Karras Scheduler

- Add DPMPP_2 Karras Scheduler

- Add DEIS Scheduler

- Add DDPM Scheduler

**Other:**

- Renamed schedulers to their accurate names: `_a` = Ancestral, `_k` = Karras

- Fix scheduler not defaulting correctly to DDIM.

- Code split SCHEDULER_MAP so it's consistently loaded from the same place.

**Known Bugs:**

- dpmpp_2s not working in img2img for denoising values < 0.8. This seems to be an upstream bug. I've disabled it in img2img and canvas until the upstream bug is fixed: https://github.com/huggingface/diffusers/issues/1866

This PR updates to `xformers ~= 0.0.19` and `torch ~= 2.0.0`, which

together seem to solve the non-deterministic image generation issue that

was previously seen with earlier versions of `xformers`.

Update the push trigger with the branch which should deploy the docs,

also bring over the updates to the workflow from the v2.3 branch and:

- remove main and development branch from trigger

- they would fail without the updated toml

- cache pip environment

- update install method (`pip install ".[docs]"`)

hi there, love the project! i noticed a small typo when going over the

install process.

when copying the automated install instructions from the docs into a

terminal, the line to install the python packages failed as it was

missing the `-y` flag.

Seems like this is the only change needed for the existing inpaint code

to work as a node. Kyle said on Discord that inpaint shouldn't be a

node, so feel free to just reject this if this code is going to be gone

soon.

# Intro

This commit adds invokeai.backend.util.logging, which provides support

for formatted console and logfile messages that follow the status

reporting conventions of earlier InvokeAI versions:

```

### A critical error

*** A non-fatal error

** A warning

>> Informational message

| Debugging message

```

Internally, the invokeai logging module creates a new default logger
named "invokeai" so that its logging does not interfere with other
modules' use of the vanilla logging module. So `logging.error("foo")`
will go through the regular logging path and not add InvokeAI's
informational message decorations, while `ialog.error("foo")` will add
the decorations.

# Usage:

This is a thin wrapper around the standard Python logging module. It can

be used in several ways:

## Module-level logging style

This style logs everything through a single default logging object and

is identical to using Python's `logging` module. The commonly-used

module-level logging functions are implemented as simple pass-thrus to

logging:

```
import logging
import invokeai.backend.util.logging as logger

logger.debug('this is a debugging message')
logger.info('this is an informational message')
logger.log(logging.CRITICAL, 'get out of dodge')
logger.disable(level=logging.INFO)
logger.basicConfig(filename='/var/log/invokeai.log')
logger.error('this will be logged to console and to invokeai.log')
```

Internally these functions all go through a custom logging object named

"invokeai". You can access it to perform additional customization in

either of these ways:

```

logger = logger.getLogger()

logger = logger.getLogger('invokeai')

```

## Object-oriented style

For more control, the logging module's object-oriented logging style is

also supported. The API is identical to the vanilla logging usage. In

fact, the only thing that has changed is that the getLogger() method

adds a custom formatter to the log messages.

```

import logging

from invokeai.backend.util.logging import InvokeAILogger

logger = InvokeAILogger.getLogger(__name__)

fh = logging.FileHandler('/var/invokeai.log')

logger.addHandler(fh)

logger.critical('this will be logged to both the console and the log file')

```
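
To make the "custom formatter" idea concrete, here is a minimal sketch built on the standard `logging` module only. It is not the `InvokeAILogger` implementation: `LegacyPrefixFormatter`, `LEVEL_PREFIXES` and `get_logger` are hypothetical names, and the prefixes are copied from the status-reporting conventions listed in the intro.

```python
import logging

# Per-level prefixes, following the legacy status-reporting conventions.
LEVEL_PREFIXES = {
    logging.CRITICAL: "###",
    logging.ERROR: "***",
    logging.WARNING: "**",
    logging.INFO: ">>",
    logging.DEBUG: "|",
}


class LegacyPrefixFormatter(logging.Formatter):
    """Hypothetical formatter that prepends a per-level prefix."""

    def format(self, record):
        prefix = LEVEL_PREFIXES.get(record.levelno, "")
        return f"{prefix} {record.getMessage()}"


def get_logger(name="invokeai"):
    """Return a named logger with the prefix formatter attached once."""
    logger = logging.getLogger(name)
    if not logger.handlers:
        handler = logging.StreamHandler()
        handler.setFormatter(LegacyPrefixFormatter())
        logger.addHandler(handler)
        logger.setLevel(logging.DEBUG)
    return logger
```

Because the formatter is attached to a named logger rather than the root logger, plain `logging.error("foo")` calls elsewhere are unaffected, which matches the behavior described in the intro.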

## Within the nodes API

From within the nodes API, the logger module is stored in the `logger`

slot of InvocationServices during dependency initialization. For

example, in a router, the idiom is:

```

from ..dependencies import ApiDependencies

logger = ApiDependencies.invoker.services.logger

logger.warning('uh oh')

```

Currently, to change the logger used by the API, one must change the

logging module passed to `ApiDependencies.initialize()` in `api_app.py`.

However, this will eventually be replaced with a method to select the

preferred logging module using the configuration file (dependent on

merging of PR #3221)

- I've sorted out the issues that make *not* persisting troublesome, these will be rolled out with canvas

- Also realized that persisting gallery images very quickly fills up localStorage, so we can't really do it anyways

vastly improves the gallery performance when many images are loaded.

- `react-virtuoso` to do the virtualized list

- `overlayscrollbars` for a scrollbar

On hyperthreaded CPUs we get two threads operating on the queue by
default on each core. This causes two threads to process queue items,
which results in pytorch errors and sometimes generates garbage.

Locking this to a single thread makes sense because we are bound by the
number of GPUs in the system, not by CPU cores. And to parallelize
across GPUs we should just start multiple processors (and use async
instead of threading).

Fixes #3289

- `disabledParametersPanels` -> `disabledFeatures`

- handle disabling `faceRestore`, `upscaling`, `lightbox`, `modelManager` and OSS header links/buttons

- wait until models are loaded to hide loading screen

- also wait until schema is parsed if `nodes` is an enabled tab

When gallery was empty (and there is therefore no selected image), no

progress images were displayed.

- fix by correcting the logic in CurrentImageDisplay

- also fix app crash introduced by fixing the first bug

Prevent legacy CLI crash caused by removal of convert option

- Compensatory change to the CLI that prevents it from crashing when it

tries to import a model.

- Bug introduced when the "convert" option removed from the model

manager.

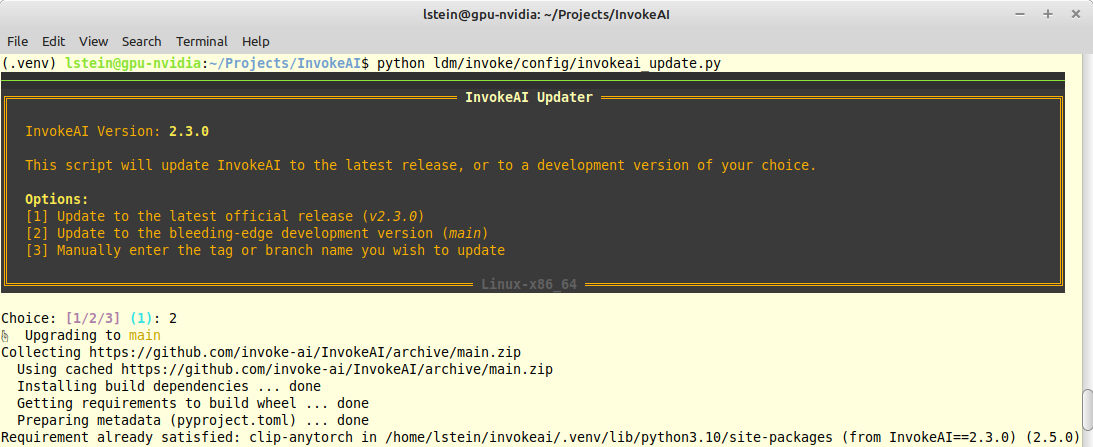

- Fix the update script to work again and fixes the ambiguity between

when a user wants to update to a tag vs updating to a branch, by making

these two operations explicitly separate.

- Remove dangling functions and arguments related to legacy checkpoint

conversion. These are no longer needed now that all legacy models are

either converted at import time, or on-the-fly in RAM.

I noticed that the current invokeai-new.py was using almost all of a CPU
core. After a bit of profiling I noticed that there were many thousands
of calls to epoll(), which suggested to me that something wasn't sleeping
properly in asyncio's loop.

A bit of further investigation with Python profiling revealed that the

__dispatch_from_queue() method in FastAPIEventService

(app/api/events.py:33) was also being called thousands of times.

I believe the asyncio.sleep(0.001) in that method is too aggressive (it

means that the queue will be polled every 1ms) and that 0.1 (100ms) is

still entirely reasonable.
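
The effect of the polling interval can be seen in a small stand-alone sketch. This is not the `FastAPIEventService` code: `dispatch_from_queue`, `handle` and `stop` are hypothetical names, and the queue is assumed to be a thread-safe `queue.Queue` drained from the event loop.

```python
import asyncio
import queue


async def dispatch_from_queue(events, handle, stop, poll_interval=0.1):
    """Drain a thread-safe queue from the event loop, polling at an interval."""
    while not stop.is_set():
        try:
            event = events.get_nowait()
        except queue.Empty:
            # Yield to the loop while idle. At 0.1 s this wakes about 10
            # times per second; at 0.001 s it would wake about 1000 times.
            await asyncio.sleep(poll_interval)
            continue
        await handle(event)
```

The interval only bounds the delay before an idle loop notices a new event; events that are already queued are handled back to back without sleeping.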

Currently translated at 100.0% (512 of 512 strings)

translationBot(ui): update translation (Russian)

Currently translated at 100.0% (512 of 512 strings)

translationBot(ui): update translation (English)

Currently translated at 100.0% (512 of 512 strings)

translationBot(ui): update translation (Ukrainian)

Currently translated at 100.0% (506 of 506 strings)

translationBot(ui): update translation (Russian)

Currently translated at 100.0% (506 of 506 strings)

translationBot(ui): update translation (Russian)

Currently translated at 100.0% (506 of 506 strings)

Co-authored-by: System X - Files <vasyasos@gmail.com>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/en/

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/ru/

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/uk/

Translation: InvokeAI/Web UI

Currently translated at 100.0% (512 of 512 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (511 of 511 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (506 of 506 strings)

Co-authored-by: Riccardo Giovanetti <riccardo.giovanetti@gmail.com>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/it/

Translation: InvokeAI/Web UI

Currently translated at 100.0% (512 of 512 strings)

translationBot(ui): update translation (Spanish)

Currently translated at 100.0% (511 of 511 strings)

translationBot(ui): update translation (Spanish)

Currently translated at 100.0% (506 of 506 strings)

Co-authored-by: gallegonovato <fran-carro@hotmail.es>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/es/

Translation: InvokeAI/Web UI

* if `shouldFetchImages` is passed in, UI will make an additional

request to get valid image URL when an invocation is complete

* this is necessary in order to have optional authorization for images

- Style the Minimap

- Made the Node UI Legend Responsive

- Set Min Width for nodes on Spawn so resize doesn't snap.

- Initial Implementation of Node Search

- Added FuseJS to handle the node filtering

The first draft for a Responsive Mobile Layout for InvokeAI. Some basic

documentation to help contributors. // Notes from: @blessedcoolant

---

The whole rework needs to be done using the `mobile first` concept where

the base design will be catered to mobile and we add responsive changes

as we grow to larger screens.

**Added**

- Basic breakpoints have been added to the `theme.ts` file that indicate

at which values Chakra makes the responsive changes.

- A basic `useResolution` hook has been added that either returns

`mobile`, `tablet` or `desktop` based on the breakpoint. We can

customize this hook further to do more complex checks for us if need be.

**Syntax**

- Any Chakra component is directly capable of taking different values

for the different breakpoints set in our `theme.ts` file. These can be

passed in a few ways with the most descriptive being an object. For

example:

`flexDir={{ base: 'column', xl: 'row' }}` - This would set the `0em and

above` to be column for the flex direction but change to row

automatically when we hit `xl` and above resolutions which in our case

is `80em or 1280px`. This same format is applicable for any element in

Chakra.

`flexDir={['column', null, null, 'row', null]}` - The above syntax can

also be passed as an array to the property with each value in the array

corresponding to each breakpoint we have. Setting `null` just bypasses

it. This is a good short hand but I think we stick to the above syntax

for readability.

**Note**: I've modified a few elements here and there to give an idea on

how the responsive syntax works for reference.

---

**Problems to be solved** @SammCheese

- Some issues you might run into are with the Resizable components.
We've decided we will not use resizable components for smaller
resolutions; it doesn't make sense there. So you'll need to add
conditional rendering around these.

- Some components that need custom layouts for different screens might

be better if ported over to `Grid` and use `gridTemplateAreas` to swap

out the design layout. I've demonstrated an example of this in a commit

I've made. I'll let you be the judge of where we might need this.

- The header will probably need to be converted to a burger menu of some

sort with the model changing being handled correctly UX wise. We'll

discuss this on discord.

---

Anyone willing to contribute to this PR can feel free to join the

discussion on discord.

https://discord.com/channels/1020123559063990373/1020839344170348605/threads/1097323866780606615

* feat(ui): add axios client generator and simple example

* fix(ui): update client & nodes test code w/ new Edge type

* chore(ui): organize generated files

* chore(ui): update .eslintignore, .prettierignore

* chore(ui): update openapi.json

* feat(backend): fixes for nodes/generator

* feat(ui): generate object args for api client

* feat(ui): more nodes api prototyping

* feat(ui): nodes cancel

* chore(ui): regenerate api client

* fix(ui): disable OG web server socket connection

* fix(ui): fix scrollbar styles typing and prop

just noticed the typo, and made the types stronger.

* feat(ui): add socketio types

* feat(ui): wip nodes

- extract api client method arg types instead of manually declaring them

- update example to display images

- general tidy up

* start building out node translations from frontend state and add notes about missing features

* use reference to sampler_name

* use reference to sampler_name

* add optional apiUrl prop

* feat(ui): start hooking up dynamic txt2img node generation, create middleware for session invocation

* feat(ui): write separate nodes socket layer, txt2img generating and rendering w single node

* feat(ui): img2img implementation

* feat(ui): get intermediate images working but types are stubbed out

* chore(ui): add support for package mode

* feat(ui): add nodes mode script

* feat(ui): handle random seeds

* fix(ui): fix middleware types

* feat(ui): add rtk action type guard

* feat(ui): disable NodeAPITest

This was polluting the network/socket logs.

* feat(ui): fix parameters panel border color

This commit should be elsewhere but I don't want to break my flow

* feat(ui): make thunk types more consistent

* feat(ui): add type guards for outputs

* feat(ui): load images on socket connect

Rudimentary

* chore(ui): bump redux-toolkit

* docs(ui): update readme

* chore(ui): regenerate api client

* chore(ui): add typescript as dev dependency

I am having trouble with TS versions after vscode updated and now uses TS 5. `madge` has installed 3.9.10 and for whatever reason my vscode wants to use that. Manually specifying 4.9.5 and then setting vscode to use that as the workspace TS fixes the issue.

* feat(ui): begin migrating gallery to nodes

Along the way, migrate to use RTK `createEntityAdapter` for gallery images, and separate `results` and `uploads` into separate slices. Much cleaner this way.

* feat(ui): clean up & comment results slice

* fix(ui): separate thunk for initial gallery load so it properly gets index 0

* feat(ui): POST upload working

* fix(ui): restore removed type

* feat(ui): patch api generation for headers access

* chore(ui): regenerate api

* feat(ui): wip gallery migration

* feat(ui): wip gallery migration

* chore(ui): regenerate api

* feat(ui): wip refactor socket events

* feat(ui): disable panels based on app props

* feat(ui): invert logic to be disabled

* disable panels when app mounts

* feat(ui): add support to disableTabs

* docs(ui): organise and update docs

* lang(ui): add toast strings

* feat(ui): wip events, comments, and general refactoring

* feat(ui): add optional token for auth

* feat(ui): export StatusIndicator and ModelSelect for header use

* feat(ui): working on making socket URL dynamic

* feat(ui): dynamic middleware loading

* feat(ui): prep for socket jwt

* feat(ui): migrate cancelation

also updated action names to be event-like instead of declaration-like

sorry, i was scattered and this commit has a lot of unrelated stuff in it.

* fix(ui): fix img2img type

* chore(ui): regenerate api client

* feat(ui): improve InvocationCompleteEvent types

* feat(ui): increase StatusIndicator font size

* fix(ui): fix middleware order for multi-node graphs

* feat(ui): add exampleGraphs object w/ iterations example

* feat(ui): generate iterations graph

* feat(ui): update ModelSelect for nodes API

* feat(ui): add hi-res functionality for txt2img generations

* feat(ui): "subscribe" to particular nodes

feels like a dirty hack but oh well it works

* feat(ui): first steps to node editor ui

* fix(ui): disable event subscription

it is not fully baked just yet

* feat(ui): wip node editor

* feat(ui): remove extraneous field types

* feat(ui): nodes before deleting stuff

* feat(ui): cleanup nodes ui stuff

* feat(ui): hook up nodes to redux

* fix(ui): fix handle

* fix(ui): add basic node edges & connection validation

* feat(ui): add connection validation styling

* feat(ui): increase edge width

* feat(ui): it blends

* feat(ui): wip model handling and graph topology validation

* feat(ui): validation connections w/ graphlib

* docs(ui): update nodes doc

* feat(ui): wip node editor

* chore(ui): rebuild api, update types

* add redux-dynamic-middlewares as a dependency

* feat(ui): add url host transformation

* feat(ui): handle already-connected fields

* feat(ui): rewrite SqliteItemStore in sqlalchemy

* fix(ui): fix sqlalchemy dynamic model instantiation

* feat(ui, nodes): metadata wip

* feat(ui, nodes): models

* feat(ui, nodes): more metadata wip

* feat(ui): wip range/iterate

* fix(nodes): fix sqlite typing

* feat(ui): export new type for invoke component

* tests(nodes): fix test instantiation of ImageField

* feat(nodes): fix LoadImageInvocation

* feat(nodes): add `title` ui hint

* feat(nodes): make ImageField attrs optional

* feat(ui): wip nodes etc

* feat(nodes): roll back sqlalchemy

* fix(nodes): partially address feedback

* fix(backend): roll back changes to pngwriter

* feat(nodes): wip address metadata feedback

* feat(nodes): add seeded rng to RandomRange

* feat(nodes): address feedback

* feat(nodes): move GET images error handling to DiskImageStorage

* feat(nodes): move GET images error handling to DiskImageStorage

* fix(nodes): fix image output schema customization

* feat(ui): img2img/txt2img -> linear

- remove txt2img and img2img tabs

- add linear tab

- add initial image selection to linear parameters accordion

* feat(ui): tidy graph builders

* feat(ui): tidy misc

* feat(ui): improve invocation union types

* feat(ui): wip metadata viewer recall

* feat(ui): move fonts to normal deps

* feat(nodes): fix broken upload

* feat(nodes): add metadata module + tests, thumbnails

- `MetadataModule` is stateless and needed in places where the `InvocationContext` is not available, so have not made it a `service`

- Handles loading/parsing/building metadata, and creating png info objects

- added tests for MetadataModule

- Lifted thumbnail stuff to util

* fix(nodes): revert change to RandomRangeInvocation

* feat(nodes): address feedback

- make metadata a service

- rip out pydantic validation, implement metadata parsing as simple functions

- update tests

- address other minor feedback items

* fix(nodes): fix other tests

* fix(nodes): add metadata service to cli

* fix(nodes): fix latents/image field parsing

* feat(nodes): customise LatentsField schema

* feat(nodes): move metadata parsing to frontend

* fix(nodes): fix metadata test

---------

Co-authored-by: maryhipp <maryhipp@gmail.com>

Co-authored-by: Mary Hipp <maryhipp@Marys-MacBook-Air.local>

Since the change itself is quite straight-forward, I'll just describe

the context. Tried using automatic installer on my laptop, kept erroring

out on line 140-something of installer.py, "ERROR: Can not perform a

'--user' install. User site-packages are not visible in this

virtualenv."

Got tired of fighting with pip so moved on to command line install.

Worked immediately, but at the time lacked instruction for CPU, so

instead of opening any helpful hyperlinks in the readme, took a few

minutes to grab the link from installer.py - thus this pr.

- Fixed a bunch of padding and margin issues across the app

- Fixed the Invoke logo compressing

- Disabled the visibility of the options panel pin button in tablet and mobile views

- Refined the header menu options in mobile and tablet views

- Refined other site header elements in mobile and tablet views

- Aligned Tab Icons to center in mobile and tablet views

Made some basic responsive changes to demonstrate how to go about making changes.

There are a bunch of problems not addressed yet. Like dealing with the resizeable component and etc.

This component just classifies `base` and `sm` as mobile, `md` and `lg` as tablet and `xl` and `2xl` as desktop.

This is a basic hook for quicker work with resolutions. Can be modified and adjusted to our needs. All resolution related work can go into this hook.

This commit adds invokeai.backend.util.logging, which provides support

for formatted console and logfile messages that follow the status

reporting conventions of earlier InvokeAI versions.

Examples:

    ### A critical error (logging.CRITICAL)
    *** A non-fatal error (logging.ERROR)
    ** A warning (logging.WARNING)
    >> Informational message (logging.INFO)
    | Debugging message (logging.DEBUG)

This style logs everything through a single logging object and is

identical to using Python's `logging` module. The commonly-used

module-level logging functions are implemented as simple pass-thrus

to logging:

    import logging
    import invokeai.backend.util.logging as ialog

    ialog.debug('this is a debugging message')
    ialog.info('this is an informational message')
    ialog.log(logging.CRITICAL, 'get out of dodge')
    ialog.disable(level=logging.INFO)
    ialog.basicConfig(filename='/var/log/invokeai.log')

Internally, the invokeai logging module creates a new default logger
named "invokeai" so that its logging does not interfere with other
modules' use of the vanilla logging module. So `logging.error("foo")`
will go through the regular logging path and not add the additional
message decorations.

For more control, the logging module's object-oriented logging style

is also supported. The API is identical to the vanilla logging

usage. In fact, the only thing that has changed is that the

getLogger() method adds a custom formatter to the log messages.

    import logging
    from invokeai.backend.util.logging import InvokeAILogger

    logger = InvokeAILogger.getLogger(__name__)
    fh = logging.FileHandler('/var/invokeai.log')
    logger.addHandler(fh)
    logger.critical('this will be logged to both the console and the log file')

- add invocation schema customisation

done via pydantic's `Config` class and `schema_extra`. when using `Config`, inherit from `InvocationConfig` to get type hints.

where it makes sense - like for all math invocations - define a `MathInvocationConfig` class and have all invocations inherit from it.

this customisation can provide any arbitrary additional data to the UI. currently it provides tags and field type hints.

this is necessary for `model` type fields, which are actually string fields. without something like this, we can't reliably differentiate `model` fields from normal `string` fields.

can also be used for future field types.

all invocations now have tags, and all `model` fields have ui type hints.

- fix model handling for invocations

added a helper to fall back to the default model if an invalid model name is chosen. model names in graphs now work.

- fix latents progress callback

noticed this wasn't correct while working on everything else.

When running this app for the first time in a WSL2 environment, which is
notoriously slow when it comes to IO, computing the SHAs of the models
takes an eternity.

Computing SHAs for sd2.1:

```

| Calculating sha256 hash of model files

| sha256 = 1e4ce085102fe6590d41ec1ab6623a18c07127e2eca3e94a34736b36b57b9c5e (49 files hashed in 510.87s)

```

I increased the chunk size to 16MB to reduce the number of round trips for

loading the data. New results:

```

| Calculating sha256 hash of model files

| sha256 = 1e4ce085102fe6590d41ec1ab6623a18c07127e2eca3e94a34736b36b57b9c5e (49 files hashed in 59.89s)

```

Higher values don't seem to make an impact.
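
A minimal sketch of chunked hashing with a 16MB read size, assuming the digest is accumulated over all model files in sorted order. The function name `sha256_of_files` and the ordering are assumptions for illustration, not the code in this PR.

```python
import hashlib
from pathlib import Path

CHUNK_SIZE = 16 * 1024 * 1024  # 16MB, the value reported above


def sha256_of_files(paths, chunk_size=CHUNK_SIZE):
    """Hash the contents of several files into one digest, reading in large chunks."""
    sha = hashlib.sha256()
    for path in sorted(Path(p) for p in paths):
        with open(path, "rb") as f:
            # Each read() is one round trip to the filesystem; larger chunks mean fewer trips.
            while chunk := f.read(chunk_size):
                sha.update(chunk)
    return sha.hexdigest()
```

The digest does not depend on the chunk size, only the number of reads does.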

- add `list_images` endpoint at `GET api/v1/images`

- extend `ImageStorageBase` with `list()` method, implemented it for `DiskImageStorage`

- add `ImageResponse` class for image responses, which includes urls, metadata

- add `ImageMetadata` class (basically a stub at the moment)

- uploaded images now named `"{uuid}_{timestamp}.png"`

- add `models` modules. besides separating concerns more clearly, this helps to mitigate circular dependencies

- improve thumbnail handling

- the functionality to automatically import and run legacy checkpoint

files in a designated folder has been removed from the backend but there

are vestiges of the code remaining in the frontend that are causing

crashes.

- This fixes the problem.

- Closes #3075

This PR introduces a new set of ModelManager methods that enables you to

retrieve the individual parts of a stable diffusion pipeline model,

including the vae, text_encoder, unet, tokenizer, etc.

To use:

```

from invokeai.backend import ModelManager

manager = ModelManager('/path/to/models.yaml')

# get the VAE

vae = manager.get_model_vae('stable-diffusion-1.5')

# get the unet

unet = manager.get_model_unet('stable-diffusion-1.5')

# get the tokenizer

tokenizer = manager.get_model_tokenizer('stable-diffusion-1.5')

# etc etc

feature_extractor = manager.get_model_feature_extractor('stable-diffusion-1.5')

scheduler = manager.get_model_scheduler('stable-diffusion-1.5')

text_encoder = manager.get_model_text_encoder('stable-diffusion-1.5')

# if no model provided, then defaults to the one currently in GPU, if any

vae = manager.get_model_vae()

```

- Compensatory change to the CLI that prevents it from crashing

when it tries to import a model.

- Bug introduced when the "convert" option was removed from the model

manager.

* Add latents nodes.

* Fix iteration expansion.

* Add collection generator nodes, math nodes.

* Add noise node.

* Add some graph debug commands to the CLI.

* Fix negative id linking in CLI.

* Fix a CLI bug with multiple links per node.

- New method is ModelManager.get_sub_model(model_name:str,model_part:SDModelComponent)

To use:

```

from invokeai.backend import ModelManager, SDModelComponent as sdmc

manager = ModelManager('/path/to/models.yaml')

vae = manager.get_sub_model('stable-diffusion-1.5', sdmc.vae)

```

The typo accidentally did not affect functionality; when `query==""`, it

`search()`ed but found everything due to empty query, then paginated

results, so it worked the same as `list()`.

Still fix it

currently if users input eg `happy (camper:0.3)` it gets parsed

incorrectly, which causes crashes if it's in the negative prompt. bump

to compel 1.0.5 fixes the parser to avoid this (note the weight is

parsed as plain text, it's not converted to proper invoke syntax)

- This PR adds support for embedding files that contain a single key

"emb_params". The only example I know of this format is the

"EasyNegative" embedding on HuggingFace, but there are certainly others.

- This PR also adds support for loading embedding files that have been

saved in safetensors format.

- It also cleans up the code so that the logic of probing for and

selecting the right format parser is clear.

- This is the same as #3045, which is on the 2.3 branch.

- Commands, invocations and their parameters will now autocomplete using

introspection.

- Two types of parameter *arguments* will also autocomplete:

- --sampler_name will autocomplete the scheduler name

- --model will autocomplete the model name

- There don't seem to be commands for reading/writing image files yet,

so path autocompletion is not implemented
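
A rough sketch of parameter completion by introspection, assuming each invocation is a class whose fields are declared as annotations. `TextToImage` and `complete_parameters` are hypothetical stand-ins, not the CLI's actual completer.

```python
class TextToImage:
    """Hypothetical invocation; only the field names matter here."""

    prompt: str
    steps: int
    sampler_name: str


def complete_parameters(cls, text):
    """Return the --flags of cls whose names start with the typed text."""
    fields = getattr(cls, "__annotations__", {})
    flags = (f"--{name}" for name in fields)
    return sorted(flag for flag in flags if flag.startswith(text))
```

For example, `complete_parameters(TextToImage, "--s")` offers the `--sampler_name` and `--steps` flags.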

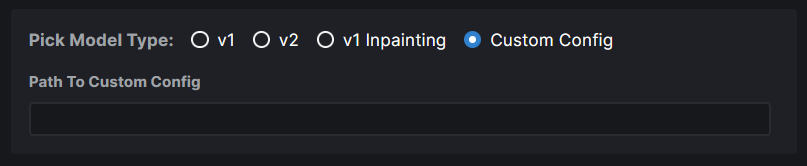

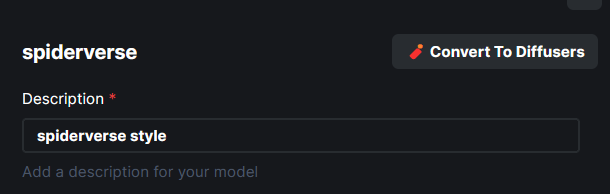

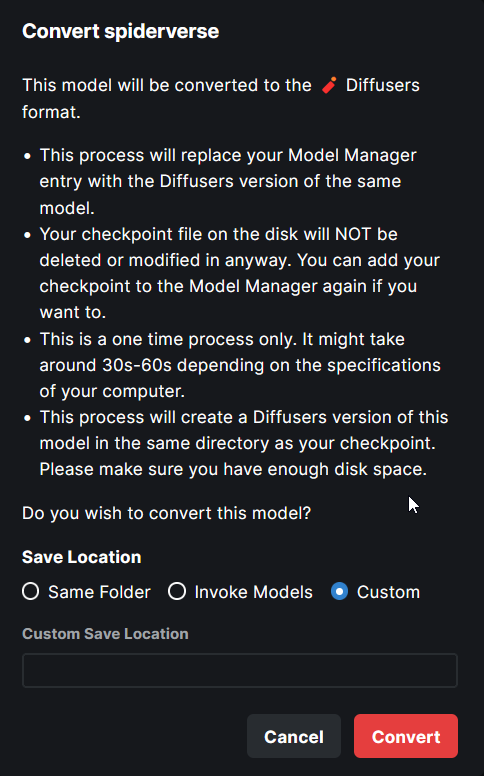

A long-standing issue with importing legacy checkpoints (both ckpt and

safetensors) is that the user has to identify the correct config file,

either by providing its path or by selecting which type of model the

checkpoint is (e.g. "v1 inpainting"). In addition, some users wish to

provide custom VAEs for use with the model. Currently this is done in

the WebUI by importing the model, editing it, and then typing in the

path to the VAE.

## Model configuration file selection

To improve the user experience, the model manager's `heuristic_import()`

method has been enhanced as follows:

1. When initially called, the caller can pass a config file path, in

which case it will be used.

2. If no config file is provided, the method looks for a .yaml file in the

same directory as the model which bears the same basename. e.g.

```

my-new-model.safetensors

my-new-model.yaml

```

The yaml file is then used as the configuration file for importation and

conversion.

3. If no such file is found, then the method opens up the checkpoint and

probes it to determine whether it is V1, V1-inpaint or V2. If it is a V1

format, then the appropriate v1-inference.yaml config file is used.

Unfortunately there are two V2 variants that cannot be distinguished by

introspection.

4. If the probe algorithm is unable to determine the model type, then

its last-ditch effort is to execute an optional callback function that

can be provided by the caller. This callback, named

`config_file_callback` receives the path to the legacy checkpoint and

returns the path to the config file to use. The CLI uses this to put up a

multiple choice prompt to the user. The WebUI **could** use this to

prompt the user to choose from a radio-button selection.

5. If the config file cannot be determined, then the import is

abandoned.
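
The resolution order above can be sketched roughly as follows. `resolve_config` and its `probe` argument are hypothetical stand-ins for the logic inside `heuristic_import()`; only `config_file_callback` is a name taken from the description.

```python
from pathlib import Path
from typing import Callable, Optional

Resolver = Callable[[Path], Optional[Path]]


def resolve_config(
    model_path: Path,
    config: Optional[Path] = None,
    probe: Optional[Resolver] = None,
    config_file_callback: Optional[Resolver] = None,
) -> Optional[Path]:
    """Return the config file to use, or None if the import should be abandoned."""
    # 1. An explicit config path always wins.
    if config is not None:
        return config
    # 2. Look for a .yaml file with the same basename next to the model.
    sidecar = model_path.with_suffix(".yaml")
    if sidecar.exists():
        return sidecar
    # 3. Probe the checkpoint (stand-in for the real V1/V1-inpaint/V2 detection).
    if probe is not None:
        found = probe(model_path)
        if found is not None:
            return found
    # 4. Last resort: ask the caller, e.g. via a multiple-choice prompt.
    if config_file_callback is not None:
        return config_file_callback(model_path)
    # 5. Nothing worked; the caller abandons the import.
    return None
```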

## Custom VAE Selection

The user can attach a custom VAE to the imported and converted model by

copying the desired VAE into the same directory as the file to be

imported, and giving it the same basename. E.g.:

```

my-new-model.safetensors

my-new-model.vae.pt

```

For this to work, the VAE must end with ".vae.pt", ".vae.ckpt", or

".vae.safetensors". The indicated VAE will be converted into diffusers

format and stored with the converted models file, so the ".pt" file can

be deleted after conversion.

No facility is currently provided to swap a diffusers VAE at import

time, but this can be done after the fact using the WebUI and CLI's

model editing functions.
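
A small sketch of how the matching VAE file could be located, assuming the three suffixes named above are tried in that order. `find_sidecar_vae` is a hypothetical helper, not the model manager's actual code.

```python
from pathlib import Path
from typing import Optional

# Suffixes taken from the description above.
VAE_SUFFIXES = (".vae.pt", ".vae.ckpt", ".vae.safetensors")


def find_sidecar_vae(model_path: Path) -> Optional[Path]:
    """Return a VAE file sharing the model's basename, or None if there is none."""
    base = model_path.with_suffix("")  # drop ".safetensors" or ".ckpt"
    for suffix in VAE_SUFFIXES:
        candidate = base.parent / (base.name + suffix)
        if candidate.exists():
            return candidate
    return None
```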

Note that this is the same fix that was applied to the 2.3 branch in

#3043 . This applies to `main`.

## Enable the on-the-fly conversion of models based on SD 2.0/2.1 into diffusers

This commit fixes bugs related to the on-the-fly conversion and loading

of legacy checkpoint models built on SD-2.0 base.

- When legacy checkpoints built on SD-2.0 models were converted

on-the-fly using --ckpt_convert, generation would crash with a precision

incompatibility error. This problem has been found and fixed.

The Pytorch ROCm version in the documentation is outdated (`rocm5.2`)

which leads to errors during the installation of InvokeAI.

This PR updates the documentation with the latest Pytorch ROCm `5.4.2`

version.

keeping `main` up to date with my api nodes branch:

- bd7e515290: [nodes] Add cancelation to

the API @Kyle0654

- 5fe38f7: fix(backend): simple typing fixes

- just picking some low-hanging fruit to improve IDE hinting

- c34ac91: fix(nodes): fix cancel; fix callback for img2img, inpaint

- makes node cancellation immediate, fixes progress images on nodes, fixes

callbacks for img2img/inpaint

- 4221cf7: fix(nodes): fix schema generation for output classes

- did this previously for some other class; needed to not have node

outputs be optional

Some schedulers report not only the noisy latents at the current

timestep, but also their estimate so far of what the de-noised latents

will be.

It makes for a more legible preview than the noisy latents do.

I think this is a huge improvement, but there are a few considerations:

- Need to not spook @JPPhoto by changing how previews look.

- Some schedulers (most notably **DPM Solver++**) don't provide this

data, and it falls back to the current behavior there. That's not

terrible, but seeing such a big difference in how _previews_ look from

one scheduler to the next might mislead people into thinking there's a

bigger difference in their overall effectiveness than there really is.

My fear of configuration-option-overwhelm leaves me inclined to _not_

add a configuration option for this, but we could.

- resolve conflicts with generate.py invocation

- remove unused symbols that pyflakes complains about

- add **untested** code for passing intermediate latent image to the

step callback in the format expected.

This PR fixes #2951 and restores the step_callback argument in the

refactored generate() method. Note that this issue states that

"something is still wrong because steps and step are zero." However,

I think this is confusion over the call signature of the callback, which

since the diffusers merge has been `callback(state:PipelineIntermediateState)`

This is the test script that I used to determine that `step` is being passed

correctly:

```
from pathlib import Path

from invokeai.backend import ModelManager, PipelineIntermediateState
from invokeai.backend.globals import global_config_dir
from invokeai.backend.generator import Txt2Img


def my_callback(state: PipelineIntermediateState, total_steps: int):
    print(f'callback(step={state.step}/{total_steps})')


def main():
    manager = ModelManager(Path(global_config_dir()) / "models.yaml")
    model = manager.get_model('stable-diffusion-1.5')
    print('=== TXT2IMG TEST ===')
    steps = 30
    output = next(
        Txt2Img(model).generate(
            prompt='banana sushi',
            iterations=None,
            steps=steps,
            step_callback=lambda x: my_callback(x, steps),
        )
    )
    print(f'image={output.image}, seed={output.seed}, steps={output.params.steps}')


if __name__ == '__main__':
    main()
```

- When a legacy checkpoint model is loaded via --convert_ckpt and its

models.yaml stanza refers to a custom VAE path (using the 'vae:' key),

the custom VAE will be converted and used within the diffusers model.

Otherwise the VAE contained within the legacy model will be used.

- Note that the checkpoint import functions in the CLI or Web UIs

continue to default to the standard stabilityai/sd-vae-ft-mse VAE. This

can be fixed after the fact by editing the VAE key using either the CLI or

Web UI.

- Fixes issue #2917

The mkdocs-workflow has been failing over the past week due to

permission denied errors. I *think* this is the result of not passing

the GitHub API token to the workflow, and this is a speculative fix for

the issue.

- This PR turns on pickle scanning before a legacy checkpoint file is

loaded from disk within the checkpoint_to_diffusers module.

- Also miscellaneous diagnostic message cleanup.

- See also #3011 for a similar patch to the 2.3 branch.

Currently translated at 100.0% (504 of 504 strings)

translationBot(ui): update translation (Spanish)

Currently translated at 100.0% (501 of 501 strings)

Co-authored-by: gallegonovato <fran-carro@hotmail.es>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/es/

Translation: InvokeAI/Web UI

Currently translated at 100.0% (504 of 504 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (501 of 501 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (500 of 500 strings)

Co-authored-by: Riccardo Giovanetti <riccardo.giovanetti@gmail.com>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/it/

Translation: InvokeAI/Web UI

This PR corrects a bug in which embeddings were not being applied when a

non-diffusers model was loaded.

- Fixes #2954

- Also improves diagnostic reporting during embedding loading.

- This PR turns on pickle scanning before a legacy checkpoint file

is loaded from disk within the checkpoint_to_diffusers module.

- Also miscellaneous diagnostic message cleanup.

- When a legacy checkpoint model is loaded via --convert_ckpt and its

models.yaml stanza refers to a custom VAE path (using the 'vae:'

key), the custom VAE will be converted and used within the diffusers

model. Otherwise the VAE contained within the legacy model will be

used.

- Note that the heuristic_import() method, which imports arbitrary

legacy files on disk and URLs, will continue to default to the

standard stabilityai/sd-vae-ft-mse VAE. This can be fixed after

the fact by editing the models.yaml stanza using the Web or CLI

UIs.

- Fixes issue #2917

- 86932469e76f1315ee18bfa2fc52b588241dace1 add image_to_dataURL util

- 0c2611059711b45bb6142d30b1d1343ac24268f3 make fast latents method

static

- this method doesn't really need `self` and should be able to be called

without instantiating `Generator`

- 2360bfb6558ea511e9c9576f3d4b5535870d84b4 fix schema gen for

GraphExecutionState

- `GraphExecutionState` uses `default_factory` in its fields; the result

is the OpenAPI schema marks those fields as optional, which propagates

to the generated API client, which means we need a lot of unnecessary

type guards to use this data type. the [simple

fix](https://github.com/pydantic/pydantic/discussions/4577) is to add

config to explicitly say all class properties are required. looks like

this will be resolved in a future pydantic release

- 3cd7319cfdb0f07c6bb12d62d7d02efe1ab12675 fix step callback and fast

latent generation on nodes. have this working in UI. depends on the

small change in #2957

Update `compel` to 1.0.0.

This fixes #2832.

It also changes the way downweighting is applied. In particular,

downweighting should now be much better and more controllable.

From the [compel

changelog](https://github.com/damian0815/compel#changelog):

> Downweighting now works by applying an attention mask to remove the

downweighted tokens, rather than literally removing them from the

sequence. This behaviour is the default, but the old behaviour can be

re-enabled by passing `downweight_mode=DownweightMode.REMOVE` on init of

the `Compel` instance.

>

> Formerly, downweighting a token worked by both multiplying the

weighting of the token's embedding, and doing an inverse-weighted blend

with a copy of the token sequence that had the downweighted tokens

removed. The intuition is that as weight approaches zero, the tokens

being downweighted should be actually removed from the sequence.

However, removing the tokens resulted in the positioning of all

downstream tokens becoming messed up. The blend ended up blending a lot

more than just the tokens in question.

>

> As of v1.0.0, taking advice from @keturn and @bonlime

(https://github.com/damian0815/compel/issues/7) the procedure is by

default different. Downweighting still involves a blend but what is

blended is a version of the token sequence with the downweighted tokens

masked out, rather than removed. This correctly preserves positioning

embeddings of the other tokens.

* Update root component to allow optional children that will render as

dynamic header of UI

* Export additional components (logo & themeChanger) for use in said

dynamic header (more to come here)

# The Problem

Pickle files (.pkl, .ckpt, etc) are extremely unsafe as they can be

trivially crafted to execute arbitrary code when parsed using

`torch.load`

Right now the conventional wisdom among ML researchers and users is to

simply `not run untrusted pickle files ever` and instead only use

Safetensor files, which cannot be injected with arbitrary code. This is

very good advice.

Unfortunately, **I have discovered a vulnerability inside of InvokeAI

that allows an attacker to disguise a pickle file as a safetensor and

have the payload execute within InvokeAI.**

# How It Works

Within `model_manager.py` and `convert_ckpt_to_diffusers.py` there are

if-statements that decide which `load` method to use based on the file

extension of the model file. The logic (written in a slightly more

readable format than it exists in the codebase) is as follows:

```
if Path(file).suffix == '.safetensors':
    safetensor_load(file)
else:
    unsafe_pickle_load(file)
```

A malicious actor would only need to create an infected .ckpt file, and

then rename the extension to something that does not pass the `==

'.safetensors'` check, but still appears to a user to be a safetensors

file.

For example, this might be something like `.Safetensors`,

`.SAFETENSORS`, `.SafeTensors`, etc.

InvokeAI will happily import the file in the Model Manager and execute

the payload.

# Proof of Concept

1. Create a malicious pickle file.

(https://gist.github.com/CodeZombie/27baa20710d976f45fb93928cbcfe368)

2. Rename the `.ckpt` extension to some variation of `.Safetensors`,

ensuring there is a capital letter anywhere in the extension (eg.

`malicious_pickle.SAFETENSORS`)

3. Import the 'model' like you would normally with any other safetensors

file with the Model Manager.

4. Upon trying to select the model in the web ui, it will be loaded (or

attempt to be converted to a Diffuser) with `torch.load` and the payload

will execute.

# The Fix

This pull request changes the logic InvokeAI uses to decide which model

loader to use so that the safe behavior is the default. Instead of

loading as a pickle if the extension is not exactly `.safetensors`, it

will now **always** load as a safetensors file unless the extension is

**exactly** `.ckpt`.
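
A minimal sketch of the reversed decision, returning the name of the loader rather than calling it so that it runs without `torch` or `safetensors` installed. `choose_loader` and its return values are hypothetical and only illustrate the rule described above.

```python
from pathlib import Path


def choose_loader(path: str) -> str:
    """Pick the loader by extension; pickle loading needs an exact '.ckpt' suffix."""
    if Path(path).suffix == ".ckpt":
        return "torch.load"  # unsafe pickle path, opted into explicitly
    return "safetensors"  # safe default for everything else
```

With this rule a pickle renamed to `malicious_pickle.SAFETENSORS` is handed to the safetensors loader, where it should fail to parse instead of executing.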

# Notes:

I think support for pickle files should be totally dropped ASAP as a

matter of security, but I understand that there are reasons this would

be difficult.

In the meantime, I think `RestrictedUnpickler` or something similar

should be implemented as a replacement for `torch.load`, as this

significantly reduces the number of Python methods that an attacker has

to work with when crafting malicious payloads

inside a pickle file.

Automatic1111 already uses this with some success.

(https://github.com/AUTOMATIC1111/stable-diffusion-webui/blob/master/modules/safe.py)
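
For reference, a restricted unpickler can be sketched with the standard library by overriding `pickle.Unpickler.find_class`. The allow-list below is a hypothetical placeholder; a real one would have to name the specific classes a checkpoint legitimately needs, and this sketch is not a replacement for `torch.load`.

```python
import io
import pickle

# Hypothetical allow-list of (module, name) pairs that may be resolved.
ALLOWED_GLOBALS = {("collections", "OrderedDict")}


class RestrictedUnpickler(pickle.Unpickler):
    """Refuse to resolve any global that is not explicitly allowed."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Like pickle.loads(), but limited to the allow-listed globals."""
    return RestrictedUnpickler(io.BytesIO(data)).load()
```

A payload that references something like `os.system` is rejected while being parsed, before anything is called.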

- The value of png_compression was always 6, despite the value provided

to the --png_compression argument. This fixes the bug.

- It also fixes an inconsistency between the maximum range of

png_compression and the help text.

- Closes #2945

Prior to this commit, all models would be loaded with the extremely unsafe `torch.load` method, except those with the exact extension `.safetensors`. Even a change in casing (eg. `saFetensors`, `Safetensors`, etc) would cause the file to be loaded with `torch.load` instead of the much safer `safetensors.torch.load_file`.

If a malicious actor renamed an infected `.ckpt` to something like `.SafeTensors` or `.SAFETENSORS`, an unsuspecting user would think they are loading a safe `.safetensors` file, but would in fact be parsing an unsafe pickle file and executing an attacker's payload. This commit fixes this vulnerability by reversing the loading-method decision logic to only use the unsafe `torch.load` when the file extension is exactly `.ckpt`.

#2931 was caused by new code that held onto the PRNG in `get_make_image`

and used it in `make_image` for img2img and inpainting. This

functionality has been moved elsewhere so that we can generate multiple

images again.

fix(ui): remove old scrollbar css

fix(ui): make guidepopover lazy

feat(ui): wip resizable drawer

feat(ui): wip resizable drawer

feat(ui): add scroll-linked shadow

feat(ui): organize files

Align Scrollbar next to content

Move resizable drawer underneath the progress bar

Add InvokeLogo to unpinned & align

Adds Invoke Logo to Unpinned Parameters panel and aligns to make it feel seamless.

# Remove node dependencies on generate.py

This is a draft PR in which I am replacing `generate.py` with a cleaner,

more structured interface to the underlying image generation routines.

The basic code pattern to generate an image using the new API is this:

```
from invokeai.backend import ModelManager, Txt2Img, Img2Img

manager = ModelManager('/data/lstein/invokeai-main/configs/models.yaml')
model = manager.get_model('stable-diffusion-1.5')
txt2img = Txt2Img(model)
outputs = txt2img.generate(prompt='banana sushi', steps=12, scheduler='k_euler_a', iterations=5)

# generate() returns an iterator
for next_output in outputs:
    print(next_output.image, next_output.seed)

outputs = Img2Img(model).generate(prompt='strawberry sushi', init_img='./banana_sushi.png')
output = next(outputs)
output.image.save('strawberries.png')
```

### model management

The `ModelManager` handles model selection and initialization. Its

`get_model()` method will return a `dict` with the following keys:

`model`, `model_name`, `hash`, `width`, and `height`, where `model` is

the actual StableDiffusionGeneratorPipeline. If `get_model()` is called

without a model name, it will return whatever is defined as the default

in `models.yaml`, or the first entry if no default is designated.

### InvokeAIGenerator

The abstract base class `InvokeAIGenerator` is subclassed into

`Txt2Img`, `Img2Img`, `Inpaint` and `Embiggen`. The constructor for

these classes takes the model dict returned by

`model_manager.get_model()` and optionally an

`InvokeAIGeneratorBasicParams` object, which encapsulates all the

parameters in common among `Txt2Img`, `Img2Img` etc. If you don't

provide the basic params, a reasonable set of defaults will be chosen.

Any of these parameters can be overridden at `generate()` time.

These classes are defined in `invokeai.backend.generator`, but they are

also exported by `invokeai.backend` as shown in the example below.

```
from invokeai.backend import InvokeAIGeneratorBasicParams, Img2Img

params = InvokeAIGeneratorBasicParams(
    perlin=0.15,
    steps=30,
    scheduler='k_lms',
)
img2img = Img2Img(model, params)
# arguments passed to generate() override the basic params
outputs = img2img.generate(scheduler='k_heun')
```

Note that we were able to override the basic params in the call to

`generate()`

The `generate()` method returns an iterator over a series of

`InvokeAIGeneratorOutput` objects. These objects contain the PIL image,

the seed, the model name and hash, and attributes for all the parameters

used to generate the object (you can also get these as a dict). The

`iterations` argument controls how many objects will be returned,

defaulting to 1. Pass `None` to get an infinite iterator.

Given the proposed use of `compel` to generate a templated series of

prompts, I thought the API would benefit from a style that lets you loop

over the output results indefinitely. I did consider returning a single

`InvokeAIGeneratorOutput` object in the event that `iterations=1`, but I

think it's dangerous for a method to return different types of result

under different circumstances.
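
The iterator behaviour described above can be sketched with a plain Python generator. `fake_generate` and the dict it yields are hypothetical stand-ins for `generate()` and `InvokeAIGeneratorOutput`; no image is produced.

```python
import itertools
from typing import Iterator, Optional


def fake_generate(prompt: str, iterations: Optional[int] = 1) -> Iterator[dict]:
    """Yield one output per iteration; iterations=None yields indefinitely."""
    counter = itertools.count() if iterations is None else range(iterations)
    for index in counter:
        # Stand-in for an output object carrying the image, seed and parameters.
        yield {"prompt": prompt, "index": index}
```

Because the return type is always an iterator, callers use `next(...)` for a single result and `itertools.islice(...)` to take a bounded number from an infinite run.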

Changing the model is as easy as this:

```
model = manager.get_model('inkspot-2.0')
txt2img = Txt2Img(model)
```

### Node and legacy support

With respect to `Nodes`, I have written `model_manager_initializer` and

`restoration_services` modules that return `model_manager` and

`restoration` services respectively. The latter is used by the face

reconstruction and upscaling nodes. There is no longer any reference to

`Generate` in the `app` tree.

I have confirmed that `txt2img` and `img2img` work in the nodes client.

I have not tested `embiggen` or `inpaint` yet. pytests are passing, with

some warnings that I don't think are related to what I did.

The legacy WebUI and CLI are still working off `Generate` (which has not

yet been removed from the source tree) and fully functional.

I've finished all the tasks on my TODO list:

- [x] Update the pytests, which are failing due to dangling references

to `generate`

- [x] Rewrite the `reconstruct.py` and `upscale.py` nodes to call

directly into the postprocessing modules rather than going through

`Generate`

Prior to the folder restructure, the `paths` for `test-invoke-pip` did

not include the UI's path `invokeai/frontend/`:

```yaml

paths:

- 'pyproject.toml'

- 'ldm/**'

- 'invokeai/backend/**'

- 'invokeai/configs/**'

- 'invokeai/frontend/dist/**'

```

After the restructure, more code was moved into the `invokeai/frontend/`

folder, and `paths` was updated:

```yaml

paths:

- 'pyproject.toml'

- 'invokeai/**'

- 'invokeai/backend/**'

- 'invokeai/configs/**'

- 'invokeai/frontend/web/dist/**'

```

Now, the second path includes the UI. The UI now needs to be excluded,

and must be excluded prior to `invokeai/frontend/web/dist/**` being

included.

On `test-invoke-pip-skip`, we need to do a bit of logic juggling to

invert the folder selection. First, include the web folder, then exclude

everything around it, and finally exclude the `dist/` folder.

Currently translated at 100.0% (500 of 500 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (500 of 500 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (482 of 482 strings)

translationBot(ui): update translation (Italian)

Currently translated at 100.0% (480 of 480 strings)

Co-authored-by: Riccardo Giovanetti <riccardo.giovanetti@gmail.com>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/it/

Translation: InvokeAI/Web UI

Currently translated at 100.0% (500 of 500 strings)

translationBot(ui): update translation (Spanish)

Currently translated at 100.0% (482 of 482 strings)

translationBot(ui): update translation (Spanish)

Currently translated at 100.0% (480 of 480 strings)

Co-authored-by: gallegonovato <fran-carro@hotmail.es>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/es/

Translation: InvokeAI/Web UI

The cause of the problem was inadvertent activation of the safety checker.

When conversion occurs on disk, the safety checker is disabled during loading.

However, when converting in RAM, the safety checker was not removed, resulting

in it activating even when the user specified `--no-nsfw_checker`.

This PR fixes the problem by detecting when the caller has requested the InvokeAI

StableDiffusionGeneratorPipeline class to be returned and setting safety checker

to None. Do not do this with diffusers models destined for disk because then they

will be incompatible with the merge script!!

Closes #2836

Reverts invoke-ai/InvokeAI#2903

@mauwii has a point here. It looks like triggering on a comment results

in an action for each of the stale issues, even ones that have been

previously dealt with. I'd like to revert this back to the original

behavior of running once every time the cron job executes.

What's the original motivation for having more frequent labeling of the

issues?

I found it to be a chore to remove labels manually in order to

"un-stale" issues. This is contrary to the bot message which says

commenting should remove "stale" status. On the current `cron` schedule,

there may be a delay of up to 24 hours before the label is removed. This

PR will trigger the workflow on issue comments in addition to the

schedule.

Also adds a condition to not run this job on PRs (GitHub treats issues

and PRs equivalently in this respect), and rewords the messages for

clarity.

This ought to be working, but I don't know how it's supposed to behave, so

I haven't been able to verify it. At least, I know the numbers are getting

pushed all the way to the SD unet; I just have been unable to verify whether

what's coming out is what is expected. Please test.

You'll need to `pip install -e .` after switching to the branch, because

it's currently pulling from a non-main `compel` branch. Once it's

verified as working as intended, I'll promote the compel branch to PyPI.

# Overview

Adding a few accessibility items (I think 9 total items). Mostly

`aria-label`, but also a `<VisuallyHidden>` to the left-side nav tab

icons. Tried to match existing copy that was being used. Feedback

welcome.

* Fix img2img and inpainting code so a strength of 1 behaves the same as txt2img.

* Make generated images identical to their txt2img counterparts when strength is 1.
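The notes above do not say how the fix is implemented. As background only, the sketch below shows the strength-to-steps arithmetic commonly used by img2img pipelines: at a strength of 1 no scheduler steps are skipped, so the run covers the same steps as txt2img. The function name `first_denoising_step` is hypothetical, and it is an assumption that InvokeAI's code follows this arithmetic.

```python
def first_denoising_step(num_steps: int, strength: float) -> int:
    """Index of the first scheduler step that img2img runs for a given strength."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    # Higher strength means more of the schedule is run on the init image.
    steps_to_run = min(int(num_steps * strength), num_steps)
    return num_steps - steps_to_run
```

With 50 steps, a strength of 0.5 starts at step 25, and a strength of 1.0 starts at step 0, which is where txt2img starts.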

Updates the CLI to define CLI commands as Pydantic objects, similar to

how Invocations (nodes) work. For example:

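```py
from typing import Literal

# BaseCommand and CliContext are defined elsewhere in the InvokeAI CLI code.
class HelpCommand(BaseCommand):
    """Shows help"""

    type: Literal['help'] = 'help'

    def run(self, context: CliContext) -> None:
        context.parser.print_help()
```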

*Looks like #2814 was reverted accidentally. Instead of trying to

revert the revert, this PR can simply be re-accepted and will fix the

UI.*

- Migrate UI from SCSS to Chakra's CSS-in-JS system

- better DX

- more capable theming

- full RTL language support (we now have Arabic and Hebrew)

- general cleanup of the whole UI's styling

- Tidy npm packages and update scripts, which necessitates an update to the GitHub actions

To test this PR in dev mode, you will need to do a `yarn install` as a

lot has changed.

Thanks to @blessedcoolant for helping out on this, it was a big effort.

There are actually two Stable Diffusion v2 legacy checkpoint

configurations:

1. "epsilon" prediction type for Stable Diffusion v2 Base

2. "v-prediction" type for Stable Diffusion v2-768

This commit adds the configuration file needed for epsilon prediction

type models as well as the UI that prompts the user to select the

appropriate configuration file when the code can't do so automatically.

To avoid `git blame` recording all the autoformatting changes under the

name 'lstein', this PR adds a `.git-blame-ignore-revs` that will ignore

any provenance changes that occurred during the recent refactor merge.

This fixes the crash that was occurring when trying to load a legacy

checkpoint file.

Note that this PR includes commits from #2867 to prevent diffusers files

from re-downloading at startup time.

# Migrate to new HF diffusers cache location

This PR adjusts the model cache directory to use the layout of

`diffusers 0.14`. This will automatically migrate any diffusers models

located in `INVOKEAI_ROOT/models/diffusers` to

`INVOKEAI_ROOT/models/hub`, and cache newly downloaded diffusers files

into the same location.

As before, if the environment variable `HF_HOME` is set, then both

HuggingFace `from_pretrained()` calls and all InvokeAI methods

will use `HF_HOME/hub` as their cache.
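The lookup order described here can be sketched as below. This is an illustration under stated assumptions rather than the PR's code: the function name `diffusers_cache_dir` and its `env` parameter are hypothetical, and only the two paths named in the text (`HF_HOME/hub` and `INVOKEAI_ROOT/models/hub`) come from the source.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def diffusers_cache_dir(invokeai_root: Path, env: Optional[Mapping[str, str]] = None) -> Path:
    """Return the directory used to cache diffusers models.

    If HF_HOME is set, use HF_HOME/hub; otherwise fall back to
    INVOKEAI_ROOT/models/hub.
    """
    env = os.environ if env is None else env
    hf_home = env.get("HF_HOME")
    if hf_home:
        return Path(hf_home) / "hub"
    return invokeai_root / "models" / "hub"
```

Passing `env` explicitly makes the behavior easy to check without touching the real environment, for example `diffusers_cache_dir(Path("/opt/invokeai"), {"HF_HOME": "/data/hf"})`.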

This removes modules that appear to be no longer used by any code under

the `invokeai` package now that the `ckpt_generator` is gone.

There are a few small changes in here to code that was referencing code

in a conditional branch for ckpt, or to swap out a ⚡ function for a

🤗 one, but only as much as was strictly necessary to get things to

run. We'll follow with more clean-up to get lingering `if isinstance` or

`except AttributeError` branches later.

build(ui): fix husky path

build(ui): fix hmr issue, remove emotion cache

build(ui): clean up package.json

build(ui): update gh action and npm scripts

feat(ui): wip port lightbox to chakra theme

feat(ui): wip use chakra theme tokens

feat(ui): Add status text to main loading spinner

feat(ui): wip chakra theme tweaking

feat(ui): simply iaisimplemenu button

feat(ui): wip chakra theming

feat(ui): Theme Management

feat(ui): Add Ocean Blue Theme

feat(ui): wip lightbox

fix(ui): fix lightbox mouse

feat(ui): set default theme variants

feat(ui): model manager chakra theme

chore(ui): lint

feat(ui): remove last scss

feat(ui): fix switch theme

feat(ui): Theme Cleanup

feat(ui): Stylize Search Models Found List

feat(ui): hide scrollbars

feat(ui): fix floating button position

feat(ui): Scrollbar Styling

fix broken scripts

This PR fixes the following scripts:

1) Scripts that can be executed within the repo's scripts directory.

Note that these are for development testing and are not intended

to be exposed to the user.

configure_invokeai.py - configuration

dream.py - the legacy CLI

images2prompt.py - legacy "dream prompt" retriever

invoke-new.py - new nodes-based CLI

invoke.py - the legacy CLI under another name

make_models_markdown_table.py - a utility used during the release/doc process

pypi_helper.py - another utility used during the release process

sd-metadata.py - retrieve JSON-formatted metadata from a PNG file

2) Scripts that are installed by pip install. They get placed into the venv's

PATH and are intended to be the official entry points:

invokeai-node-cli - new nodes-based CLI

invokeai-node-web - new nodes-based web server

invokeai - legacy CLI

invokeai-configure - install time configuration script

invokeai-merge - model merging script

invokeai-ti - textual inversion script

invokeai-model-install - model installer

invokeai-update - update script

invokeai-metadata - retrieve JSON-formatted metadata from PNG files

protect invocations against black autoformatting

deps: upgrade to diffusers 0.14, safetensors 0.3, transformers 4.26, accelerate 0.16

Things to check for in this version:

- `diffusers` cache location is now more consistent with other

huggingface-hub using code (i.e. `transformers`) as of

https://github.com/huggingface/diffusers/pull/2005. I think ultimately

this should make @damian0815 (and other folks with multiple

diffusers-using projects) happier, but it's worth taking a look to make

sure the way @lstein set things up to respect `HF_HOME` is still

functioning as intended.

- I've gone ahead and updated `transformers` to the current version

(4.26), but I have a vague memory that we were holding it back at some

point? Need to look that up and see if that's the case and why.

This PR fixes the following scripts:

1) Scripts that can be executed within the repo's scripts directory.

Note that these are for development testing and are not intended

to be exposed to the user.

```

configure_invokeai.py - configuration

dream.py - the legacy CLI

images2prompt.py - legacy "dream prompt" retriever

invoke-new.py - new nodes-based CLI

invoke.py - the legacy CLI under another name

make_models_markdown_table.py - a utility used during the release/doc process

pypi_helper.py - another utility used during the release process

sd-metadata.py - retrieve JSON-formatted metadata from a PNG file

```