It was happening before pruning queue items - should happen afterwards, else you have to restart the app again to free disk space made available by the pruning.

Adds logic to `DiskLatentsStorage.start()` to empty the latents folder on startup.

Adds start and stop methods to `ForwardCacheLatentsStorage`. This is required for `DiskLatentsStorage.start()` to be called, due to how this particular service breaks the direct DI pattern, wrapping the underlying storage with a cache.
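A minimal sketch of why the wrapper needs its own lifecycle methods. The class names below are hypothetical stand-ins rather than the real services, and the `start()`/`stop()` signatures are simplified to take no arguments.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Dict


class DiskStorageSketch:
    """Hypothetical stand-in for a disk-backed latents store."""

    def __init__(self, folder: Path) -> None:
        self.folder = folder
        self.folder.mkdir(parents=True, exist_ok=True)

    def start(self) -> None:
        # Empty the folder on startup so stale latents do not accumulate.
        for entry in self.folder.iterdir():
            if entry.is_dir():
                shutil.rmtree(entry)
            else:
                entry.unlink()

    def stop(self) -> None:
        pass


class ForwardCacheSketch:
    """Hypothetical cache wrapper around the disk store."""

    def __init__(self, underlying: DiskStorageSketch) -> None:
        self._underlying = underlying
        self._cache: Dict[str, bytes] = {}

    def start(self) -> None:
        # Only the wrapper is registered as a service in this sketch, so it
        # has to forward the call or the wrapped start() never runs.
        self._underlying.start()

    def stop(self) -> None:
        self._underlying.stop()


def demo_startup_cleanup() -> int:
    """Create a stale file, start the wrapper, and count what is left."""
    with tempfile.TemporaryDirectory() as tmp:
        folder = Path(tmp) / "latents"
        storage = ForwardCacheSketch(DiskStorageSketch(folder))
        (folder / "stale.pt").write_bytes(b"old")
        storage.start()
        return len(list(folder.iterdir()))
```

Calling `demo_startup_cleanup()` exercises the forwarding path: the stale file is removed only because the wrapper passes `start()` through.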

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

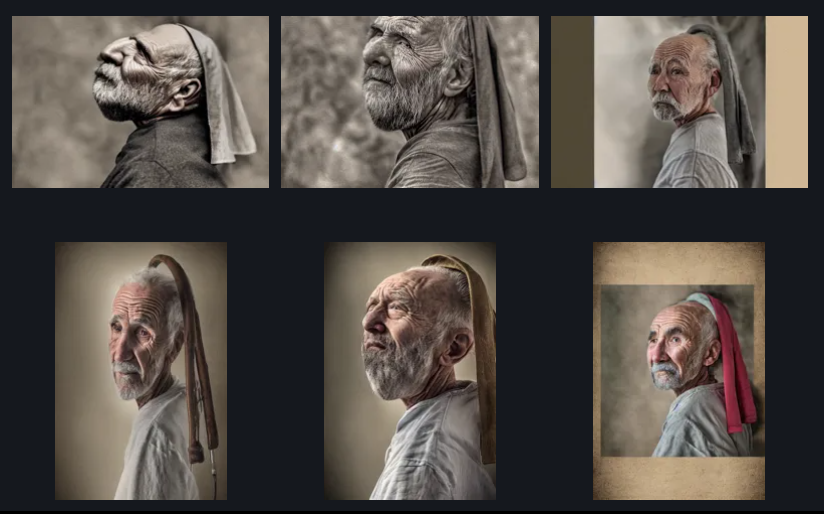

This adds support for at least some of the SDXL embeddings currently

available on Civitai. The embeddings I have tested include:

- https://civitai.com/models/154898/marblingtixl?modelVersionId=173668

- https://civitai.com/models/148131?modelVersionId=167640

- https://civitai.com/models/123485/hannah-ferguson-or-sdxl-or-comfyui-only-or-embedding?modelVersionId=134674 (said to be "comfyui only")

- https://civitai.com/models/185938/kendall-jenner-sdxl-embedding?modelVersionId=208785

I am _not entirely sure_ that I have implemented support in the most

elegant way. The issue is that these embeddings have two weight tensors,

`clip_g` and `clip_l`, which correspond to `text_encoder` and

`text_encoder_2` in the main model. When the patcher calls the

ModelPatcher's `apply_ti()` method, I simply check the dimensions of the

incoming text encoder and choose the weights that match the dimensions

of the encoder.
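The dimension check described above can be sketched roughly as follows. This is an illustration only: `select_ti_weights` is a hypothetical helper, tensors are modelled as plain nested lists, and the toy widths stand in for the real encoder hidden sizes.

```python
from typing import Dict, List

Matrix = List[List[float]]  # each row is one token vector


def select_ti_weights(embedding: Dict[str, Matrix], encoder_dim: int) -> Matrix:
    """Return the weight tensor whose vector width matches the encoder."""
    for key in ("clip_l", "clip_g"):
        weights = embedding.get(key)
        if weights and len(weights[0]) == encoder_dim:
            return weights
    raise ValueError(f"no embedding weights with dimension {encoder_dim}")


# Toy embedding: widths 4 and 6 are placeholders, not real model sizes.
toy_embedding = {
    "clip_l": [[0.0] * 4, [0.1] * 4],
    "clip_g": [[0.0] * 6, [0.1] * 6],
}
```

With this shape, the same embedding file can be applied to either text encoder, and a mismatch fails loudly instead of patching the wrong weights.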

While writing this, I also ran into a possible issue with the Compel

library's `get_pooled_embeddings()` call. It pads the input token list

to the model's max token length and then calls the TI manager to add the

additional tokens from the embedding. However, this ends up making the

input token list longer than the max length, and CLIPTextEncoder crashes

with a tensor size mismatch. I worked around this behavior by making the

TI manager's `expand_textual_inversion_token_ids_if_necessary()` method

remove the excess pads at the end of the token list.
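A rough sketch of the workaround, assuming the token list is a plain list of ints and that the excess consists of trailing pad tokens. `trim_excess_pads` is a hypothetical name, not the TI manager's method.

```python
from typing import List


def trim_excess_pads(token_ids: List[int], pad_token_id: int, max_length: int) -> List[int]:
    """Drop trailing pad tokens until the list fits within max_length."""
    trimmed = list(token_ids)
    # Only pads are removed; real tokens are never dropped by this helper.
    while len(trimmed) > max_length and trimmed[-1] == pad_token_id:
        trimmed.pop()
    return trimmed
```

If the list is still too long after all trailing pads are gone, it is returned unchanged, so a caller would still need to handle genuine over-length prompts separately.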

Also note that I have made similar changes to `apply_ti()` in the

ONNXModelPatcher, but haven't tested them yet.

## Related Tickets & Documents

<!--

For pull requests that relate or close an issue, please include them

below.

For example having the text: "closes #1234" would connect the current

pull

request to issue 1234. And when we merge the pull request, Github will

automatically close the issue.

-->

- Related Issue #

- Closes #4401

## QA Instructions, Screenshots, Recordings

<!--

Please provide steps on how to test changes, any hardware or

software specifications as well as any other pertinent information.

-->

## Added/updated tests?

- [ ] Yes

- [X] No : We need to create tests for model patching...

## [optional] Are there any post deployment tasks we need to perform?

IndexedDB has a much larger storage limit than LocalStorage, and is widely supported.

Implemented as a custom storage driver for `redux-remember` via `idb-keyval`. `idb-keyval` is a simple wrapper for IndexedDB that allows it to be used easily as a key-value store.

The logic to clear persisted storage has been updated throughout the app.

- Reset init image, control adapter images, and node image fields when their selected image fails to load

- Only do this if the app is connected via socket (this indicates that the image is "really" gone, and there isn't just a transient network issue)

It's possible for image parameters/nodes/states to reference a deleted image. For example, a resize image node might have an image set on it, and the workflow saved. The workflow contains a hard reference to that image.

The image is deleted and the workflow loaded again later. The deleted image is still in that workflow, but the app doesn't detect that. The result is that the workflow/graph appears to be valid, but will fail on invoke.

This creates a really confusing user experience, where when somebody shares a workflow with an image baked into it, and another person opens it, everything *looks* ok, but the workflow fails with a mysterious error about a missing image.

The problem affects node images, control adapter images and the img2img init image. Resetting the image when it fails to load *and* socket is connected resolves this in a simple way.

The problem also affects canvas images, but we handle that by displaying an error fallback image, so no change is made there.

Closes #5121

- Parse `anyOf` for enums (present when they are optional)

- Consolidate `FieldTypeParseError` and `UnsupportedFieldTypeError` into `FieldParseError` (there was no difference in handling and it simplifies things a bit)
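The UI parser itself is TypeScript; the following Python sketch only illustrates the shape of the problem. It assumes an optional enum appears in the schema as an `anyOf` whose variants include one with an `enum` key plus a null variant. `extract_enum_options` is a hypothetical helper.

```python
from typing import Any, Dict, List, Optional


def extract_enum_options(field_schema: Dict[str, Any]) -> Optional[List[Any]]:
    """Return enum options, also looking inside `anyOf` for optional enums."""
    if "enum" in field_schema:
        return list(field_schema["enum"])
    for variant in field_schema.get("anyOf", []):
        if "enum" in variant:
            return list(variant["enum"])
    return None  # not an enum field


required_enum = {"type": "string", "enum": ["a", "b"]}
optional_enum = {"anyOf": [{"type": "string", "enum": ["a", "b"]}, {"type": "null"}]}
```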

* add centerpadcrop node

- Allows users to add padding to or crop images from the center

- Also outputs a white mask with the dimensions of the output image for use with outpainting

* add CenterPadCrop to NODES.md

Updates NODES.md with CenterPadCrop entry.

* remove mask & output class

- Remove "ImageMaskOutput" where both image and mask are output

- Remove ability to output mask from node
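As an illustration of the pad/crop arithmetic only, here is a sketch that computes the crop box for a given image size. The sign convention (positive values pad, negative values crop) and the function name are assumptions, not taken from the node's code.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]


def center_pad_crop_box(
    width: int, height: int, left: int, right: int, top: int, bottom: int
) -> Tuple[Box, Tuple[int, int]]:
    """Return the (x0, y0, x1, y1) box and the resulting (width, height)."""
    # Positive values push the box outside the image (padding);
    # negative values pull it inside (cropping).
    box = (-left, -top, width + right, height + bottom)
    out_w = box[2] - box[0]
    out_h = box[3] - box[1]
    if out_w <= 0 or out_h <= 0:
        raise ValueError("crop removes the whole image")
    return box, (out_w, out_h)
```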

---------

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

Use UTF-8 encoding on reading prompts from files to allow Unicode characters to load correctly.

The following examples currently will not load correctly from a file:

Hello, 世界!

😭🤮💔
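A minimal sketch of the fix, assuming prompts are stored one per line. `read_prompts` is a hypothetical helper, not the function changed in this commit.

```python
import tempfile
from pathlib import Path
from typing import List


def read_prompts(path: Path) -> List[str]:
    """Read one prompt per line, decoding explicitly as UTF-8."""
    # Without encoding=..., open() falls back to the platform default,
    # which may not be UTF-8 and can mangle or reject non-ASCII text.
    with open(path, "r", encoding="utf-8") as f:
        return [line.rstrip("\n") for line in f if line.strip()]


def demo_read_prompts() -> List[str]:
    """Round-trip the two example prompts through a temporary file."""
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "prompts.txt"
        path.write_text("Hello, 世界!\n😭🤮💔\n", encoding="utf-8")
        return read_prompts(path)
```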

Added New Match Histogram node

Updated XYGrid nodes and Prompt Tools nodes

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [x] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

This class is deprecated and no longer needed. Set its workflow attr value to None (meaning it is now a no-op), and issue a warning when an invocation subclasses it.
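One way to warn on subclassing is Python's `__init_subclass__` hook. The sketch below assumes that approach and uses a hypothetical class name; the real implementation may hook in elsewhere.

```python
import warnings
from typing import List, Optional


class DeprecatedWorkflowMixin:
    """Hypothetical stand-in for the deprecated class."""

    # The attribute stays for compatibility but is now always None (a no-op).
    workflow: Optional[dict] = None

    def __init_subclass__(cls, **kwargs) -> None:
        super().__init_subclass__(**kwargs)
        warnings.warn(
            f"{cls.__name__} subclasses a deprecated class; the base can be removed.",
            DeprecationWarning,
            stacklevel=2,
        )


def demo_subclass_warning() -> List[str]:
    """Define a subclass and collect the deprecation messages it triggers."""
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")

        class MyInvocation(DeprecatedWorkflowMixin):
            pass

    return [str(w.message) for w in caught if issubclass(w.category, DeprecationWarning)]
```

Existing node packs keep importing and running; authors just see a warning at class definition time.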

Update workflows handling for Workflow Library.

**Updated Workflow Storage**

"Embedded Workflows" are workflows associated with images, and are now only stored in the image files. "Library Workflows" are not associated with images, and are stored only in DB.

This works out nicely. We have always saved workflows to files, but recently began saving them to the DB in addition to in image files. When that happened, we stopped reading workflows from files, so all the workflows that only existed in images were inaccessible. With this change, access to those workflows is restored, and no workflows are lost.

**Updated Workflow Handling in Nodes**

Prior to this change, workflows were embedded in images by passing the whole workflow JSON to a special workflow field on a node. In the node's `invoke()` function, the node was able to access this workflow and save it with the image. This (inaccurately) models workflows as a property of an image and is rather awkward technically.

A workflow is now a property of a batch/session queue item. It is available in the InvocationContext and therefore available to all nodes during `invoke()`.

**Database Migrations**

Added a `SQLiteMigrator` class to handle database migrations. Migrations were needed to accommodate the DB-related changes in this PR. See the code for details.

The `images`, `workflows` and `session_queue` tables required migrations for this PR, and are using the new migrator. Other tables/services are still creating tables themselves. A followup PR will adapt them to use the migrator.
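To give a feel for the idea, here is a deliberately small migrator sketch. It is not the `SQLiteMigrator` from this PR: the class name, the use of `PRAGMA user_version` for version tracking, and the `image_name` column are all assumptions made for illustration.

```python
import sqlite3
from typing import Callable, Dict, List, Tuple

Migration = Callable[[sqlite3.Cursor], None]


class MigratorSketch:
    """Hypothetical migrator that tracks the schema version in user_version."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._migrations: Dict[int, Migration] = {}

    def register(self, to_version: int, migration: Migration) -> None:
        self._migrations[to_version] = migration

    def current_version(self) -> int:
        return self._conn.execute("PRAGMA user_version").fetchone()[0]

    def run(self) -> int:
        # Apply pending migrations in ascending version order.
        for version in sorted(self._migrations):
            if version <= self.current_version():
                continue  # already applied
            cursor = self._conn.cursor()
            self._migrations[version](cursor)
            cursor.execute(f"PRAGMA user_version = {int(version)}")
            self._conn.commit()
        return self.current_version()


def add_has_workflow(cursor: sqlite3.Cursor) -> None:
    cursor.execute("ALTER TABLE images ADD COLUMN has_workflow BOOLEAN DEFAULT 0")


def demo_migration() -> Tuple[int, int, List[str]]:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE images (image_name TEXT PRIMARY KEY)")
    migrator = MigratorSketch(conn)
    migrator.register(1, add_has_workflow)
    first = migrator.run()
    second = migrator.run()  # second run finds nothing pending
    columns = [row[1] for row in conn.execute("PRAGMA table_info(images)")]
    conn.close()
    return first, second, columns
```

Running the migrator twice is safe in this sketch because each migration is skipped once the stored version has caught up.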

**Other/Support Changes**

- Add a `has_workflow` column to `images` table to indicate that the image has an embedded workflow.

- Add handling for retrieving the workflow from an image in python. The image file must be fetched, the workflow extracted, and then sent to client, avoiding needing the browser to parse the image file. With the `has_workflow` column, the UI knows if there is a workflow to be fetched, and only fetches when the user requests to load the workflow.

- Add route to get the workflow from an image

- Add CRUD service/routes for the library workflows

- `workflow_images` table and services removed (no longer needed now that embedded workflows are not in the DB)

This new name more accurately represents that these are fields with a type of `T | T[]`, where the "base" type must be the same on both sides of the union.

Custom nodes have a new attribute `node_pack` indicating the node pack they came from.

- This is displayed in the UI in the icon tooltip.

- If a workflow is loaded and a node is unavailable, its node pack will be displayed (if it is known).

- If a workflow is migrated from v1 to v2, and the node is unknown, it falls back to "Unknown". If the missing node pack is installed and the node is updated, the node pack will be updated as expected.

Node authors may now create their own arbitrary/custom field types. Any pydantic model is supported.

Two notes:

1. Your field type's class name must be unique.

Suggest prefixing fields with something related to the node pack as a kind of namespace.

2. Custom field types function as connection-only fields.

For example, if your custom field has string attributes, you will not get a text input for that attribute when you give a node a field with your custom type.

This is the same behaviour as other complex fields that don't have custom UIs in the workflow editor - like, say, a string collection.

feat(ui): fix tooltips for custom types

We need to hold onto the original type of the field so they don't all just show up as "Unknown".

fix(ui): fix ts error with custom fields

feat(ui): custom field types connection validation

In the initial commit, a custom field's original type was added to the *field templates* only as `originalType`. Custom fields' `type` property was `"Custom"`*. This allowed for type safety throughout the UI logic.

*Actually, it was `"Unknown"`, but I changed it to custom for clarity.

Connection validation logic, however, uses the *field instance* of the node/field. Like the templates, *field instances* with custom types have their `type` set to `"Custom"`, but they didn't have an `originalType` property. As a result, all custom fields could be connected to all other custom fields.

To resolve this, we need to add `originalType` to the *field instances*, then switch the validation logic to use this instead of `type`.

This ended up needing a bit of finagling:

- If we make `originalType` a required property on field instances, existing workflows will break during connection validation, because they won't have this property. We'd need a new layer of logic to migrate the workflows, adding the new `originalType` property.

While this layer is probably needed anyways, typing `originalType` as optional is much simpler. Workflow migration logic can come later.

(Technically, we could remove all references to field types from the workflow files, and let the templates hold all this information. This feels like a significant change and I'm reluctant to do it now.)

- Because `originalType` is optional, anywhere we care about the type of a field, we need to use it over `type`. So there are a number of `field.originalType ?? field.type` expressions. This is a bit of a gotcha, we'll need to remember this in the future.

- We use `Array.prototype.includes()` often in the workflow editor, e.g. `COLLECTION_TYPES.includes(type)`. In these cases, the const array is of type `FieldType[]`, and `type` is `FieldType`.

Because we now support custom types, the arg `type` is now widened from `FieldType` to `string`.

This causes a TS error. This behaviour is somewhat controversial (see https://github.com/microsoft/TypeScript/issues/14520). These expressions are now rewritten as `COLLECTION_TYPES.some((t) => t === type)` to satisfy TS. It's logically equivalent.

fix(ui): typo

feat(ui): add CustomCollection and CustomPolymorphic field types

feat(ui): add validation for CustomCollection & CustomPolymorphic types

- Update connection validation for custom types

- Use simple string parsing to determine if a field is a collection or polymorphic type.

- No longer need to keep a list of collection and polymorphic types.

- Added runtime checks in `baseinvocation.py` to ensure no fields are named in such a way that it could mess up the new parsing
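A runtime check of this kind might look like the sketch below. The reserved set uses the three names this changelog lists as reserved ("Custom", "CustomCollection", "CustomPolymorphic"); the function name and error format are hypothetical and not taken from `baseinvocation.py`.

```python
from typing import Iterable

RESERVED_FIELD_NAMES = {"Custom", "CustomCollection", "CustomPolymorphic"}


def validate_field_names(node_name: str, field_names: Iterable[str]) -> None:
    """Raise if a node declares a field whose name collides with a reserved one."""
    clashes = sorted(set(field_names) & RESERVED_FIELD_NAMES)
    if clashes:
        raise ValueError(
            f"{node_name}: reserved field name(s) used: {', '.join(clashes)}"
        )
```

Failing at registration time keeps the string-based type parsing unambiguous, at the cost of rejecting a few otherwise valid names.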

chore(ui): remove errant console.log

fix(ui): rename 'nodes.currentConnectionFieldType' -> 'nodes.connectionStartFieldType'

This was confusingly named and kept tripping me up. Renamed to be consistent with the `reactflow` `ConnectionStartParams` type.

fix(ui): fix ts error

feat(nodes): add runtime check for custom field names

"Custom", "CustomCollection" and "CustomPolymorphic" are reserved field names.

chore(ui): add TODO for revising field type names

wip refactor fieldtype structured

wip refactor field types

wip refactor types

wip refactor types

fix node layout

refactor field types

chore: mypy

organisation

organisation

organisation

fix(nodes): fix field orig_required, field_kind and input statuses

feat(nodes): remove broken implementation of default_factory on InputField

Use of this could break connection validation due to the difference in node schemas required fields and invoke() required args.

Removed entirely for now. It wasn't ever actually used by the system, because all graphs always had values provided for fields where default_factory was used.

Also, pydantic is smart enough to not reuse the same object when specifying a default value - it clones the object first. So, the common pattern of `default_factory=list` is extraneous. It can just be `default=[]`.

fix(nodes): fix InputField name validation

workflow validation

validation

chore: ruff

feat(nodes): fix up baseinvocation comments

fix(ui): improve typing & logic of buildFieldInputTemplate

improved error handling in parseFieldType

fix: back compat for deprecated default_factory and UIType

feat(nodes): do not show node packs loaded log if none loaded

chore(ui): typegen

We used the `RealESRGANer` utility class from the repo. It handled model loading and tiled upscaling logic.

Unfortunately, it hasn't been updated in over a year, had no types, and annoyingly printed to console.

I've adapted the class, cleaning it up a bit and removing the bits that are not relevant for us.

Upscaling functionality is identical.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [x] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No

## Description

Fixes wrong Q&A Troubleshooting link (original leads to 404)

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [x] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

* working on recall height/width

* working on adding resize

* working on feature

* fix(ui): move added translation from dist/ to public/

* fix(ui): use `metadata` as hotkey cb dependency

Using `imageDTO` may result in stale data being used

---------

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

* eslint added and new string added

* strings and translation hook added

* more changes made

* missing translation added

* final errors resolve in progress

* all errors resolved

* fix(ui): fix missing import of `t()`

* fix(ui): use plurals for moving images to board translation

* fix(ui): fix typo in translation key

* fix(ui): do not use translation for "invoke ai"

* chore(ui): lint

---------

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [X] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because: Small obvious fix

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

This one-line patch adds support for LCM models such as

`SimianLuo/LCM_Dreamshaper_v7`

## Related Tickets & Documents

- Closes #4951

## QA Instructions, Screenshots, Recordings

Try installing `SimianLuo/LCM_Dreamshaper_v7` and using with CFG 2.5 and

the LCM scheduler.

## Added/updated tests?

- [ ] Yes

- [X] Not needed

This PR adds a link and description to the Remote Image node.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [x] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

Adds a description and link to a new community node

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [x] No : This is only a documentation change

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [x] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because: community nodes already use these import paths

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

The example custom node code in the docs uses old (?) import paths for

invokeai modules. These paths cause the module to fail to load. This PR

updates them.

## QA Instructions, Screenshots, Recordings

- [x] verified that example code is loaded successfully when copied to

custom nodes directory

- [x] verified that custom node works as expected in workflows

## Added/updated tests?

- [ ] Yes

- [x] No : documentation update

## What type of PR is this? (check all applicable)

3.4.0post3

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

N/A

## Description

3.4.0post2 release - mainly fixes duplicate LoRA patching

* first string only to test

* more strings changed

* almost half strings added in json file

* more strings added

* more changes

* few strings and t function changed

* resolved

* errors resolved

* chore(ui): fmt en.json

---------

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

## What type of PR is this? (check all applicable)

3.4 Release Updates

## Have you discussed this change with the InvokeAI team?

- [X] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

## Related Tickets & Documents

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Description

Resolves two bugs introduced in #5106:

1. Linear UI images sometimes didn't make it to the gallery.

This was a race condition. The VAE decode nodes were handled by the

socketInvocationComplete listener. At that moment, the image was marked

as intermediate. Immediately after this node was handled, a

LinearUIOutputInvocation, introduced in #5106, was handled by

socketInvocationComplete. This node internally changes the image to

not intermediate.

During the handling of that socketInvocationComplete, RTK Query would

sometimes use its cache instead of retrieving the image DTO again. The

result is that the UI never got the message that the image was not

intermediate, so it wasn't added to the gallery.

This is resolved by refactoring the socketInvocationComplete listener.

We now skip the gallery processing for linear UI events, except for the

LinearUIOutputInvocation. Images now always make it to the gallery, and

network requests to get image DTOs are substantially reduced.

2. Canvas temp images always went into the gallery

The LinearUIOutputInvocation was always setting its image's

is_intermediate to false. This included all canvas images and resulted

in all canvas temp images going to gallery.

This is resolved by making LinearUIOutputInvocation set is_intermediate

based on `self.is_intermediate`. The behaviour now more or less

mirrors the behaviour of is_intermediate on other image-outputting

nodes, except it doesn't save the image again - only changes it.

One extra minor change - LinearUIOutputInvocation only changes

is_intermediate if it differs from the image's current setting. Very

minor optimisation.
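The "only change it if it differs" behaviour can be sketched as follows. The store and record classes are hypothetical stand-ins for the image service, and `finalize_linear_output` is an illustrative name rather than the node's `invoke()`.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ImageRecordSketch:
    image_name: str
    is_intermediate: bool


class ImageStoreSketch:
    """Hypothetical in-memory image service that counts update calls."""

    def __init__(self) -> None:
        self.records: Dict[str, ImageRecordSketch] = {}
        self.update_calls = 0

    def add(self, record: ImageRecordSketch) -> None:
        self.records[record.image_name] = record

    def get(self, image_name: str) -> ImageRecordSketch:
        return self.records[image_name]

    def update(self, image_name: str, is_intermediate: bool) -> None:
        self.update_calls += 1
        self.records[image_name].is_intermediate = is_intermediate


def finalize_linear_output(
    store: ImageStoreSketch, image_name: str, node_is_intermediate: bool
) -> ImageRecordSketch:
    """Align the image's flag with the node's flag without re-saving the image."""
    record = store.get(image_name)
    if record.is_intermediate != node_is_intermediate:
        # Skip the write entirely when nothing would change.
        store.update(image_name, node_is_intermediate)
    return store.get(image_name)
```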

## Related Tickets & Documents

- Related Issue

https://discord.com/channels/1020123559063990373/1149513625321603162/1174721072826945638

## QA Instructions, Screenshots, Recordings

Try to reproduce the issues described in the Discord thread:

- Images should always go to the gallery from txt2img and img2img

- Canvas temp images should not go to the gallery unless auto-save is

enabled

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [X] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [X] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

Resolves two bugs introduced in #5106:

1. Linear UI images sometimes didn't make it to the gallery.

This was a race condition. The VAE decode nodes were handled by the socketInvocationComplete listener. At that moment, the image was marked as intermediate. Immediately after this node was handled, a LinearUIOutputInvocation, introduced in #5106, was handled by socketInvocationComplete. This node internally changes the image to not intermediate.

During the handling of that socketInvocationComplete, RTK Query would sometimes use its cache instead of retrieving the image DTO again. The result is that the UI never got the message that the image was not intermediate, so it wasn't added to the gallery.

This is resolved by refactoring the socketInvocationComplete listener. We now skip the gallery processing for linear UI events, except for the LinearUIOutputInvocation. Images now always make it to the gallery, and network requests to get image DTOs are substantially reduced.

2. Canvas temp images always went into the gallery

The LinearUIOutputInvocation was always setting its image's is_intermediate to false. This included all canvas images and resulted in all canvas temp images going to gallery.

This is resolved by making LinearUIOutputInvocation set is_intermediate based on `self.is_intermediate`. The behaviour now more or less mirrors the behaviour of is_intermediate on other image-outputting nodes, except it doesn't save the image again - only changes it.

One extra minor change - LinearUIOutputInvocation only changes is_intermediate if it differs from the image's current setting. Very minor optimisation.

## What type of PR is this? (check all applicable)

- [x] Refactor

- [ ] Feature

- [ ] Bug Fix

- [x] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Description

[feat: add private node for linear UI image outputting](4599517c6c)

Add a LinearUIOutputInvocation node to be the new terminal node for

Linear UI graphs. This node is private and hidden from the Workflow

Editor, as it is an implementation detail.

The Linear UI was using the Save Image node for this purpose. It allowed

every linear graph to end with a single node type, which handled saving

metadata and board. This substantially reduced the complexity of the

linear graphs.

This caused two related issues:

- Images were saved to disk twice

- Noticeable delay between when an image was decoded and showed up in

the UI

To resolve this, the new LinearUIOutputInvocation node will handle

adding an image to a board if one is provided.

Metadata is no longer provided in this unified node. Instead, the

metadata graph helpers now need to know the node to add metadata to and

provide it to the last node that actually outputs an image. This is a

`l2i` node for txt2img & img2img graphs, and a different

image-outputting node for canvas graphs.

HRF poses another complication, in that it changes the terminal node. To

handle this, a new metadata util is added called

`setMetadataReceivingNode()`. HRF calls this to change the node that

should receive the graph's metadata.

This resolves the duplicate images issue and improves perf without

otherwise changing the user experience.

---

Also fixed an issue with HRF metadata.

## Related Tickets & Documents

- Closes #4688

- Closes #4645

## QA Instructions, Screenshots, Recordings

Generate some images with and without a board selected. Images should

end up in the right board per usual, but a bit quicker. Metadata should

still work.

Add a LinearUIOutputInvocation node to be the new terminal node for Linear UI graphs. This node is private and hidden from the Workflow Editor, as it is an implementation detail.

The Linear UI was using the Save Image node for this purpose. It allowed every linear graph to end with a single node type, which handled saving metadata and board. This substantially reduced the complexity of the linear graphs.

This caused two related issues:

- Images were saved to disk twice

- Noticeable delay between when an image was decoded and showed up in the UI

To resolve this, the new LinearUIOutputInvocation node will handle adding an image to a board if one is provided.

Metadata is no longer provided in this unified node. Instead, the metadata graph helpers now need to know the node to add metadata to and provide it to the last node that actually outputs an image. This is a `l2i` node for txt2img & img2img graphs, and a different image-outputting node for canvas graphs.

HRF poses another complication, in that it changes the terminal node. To handle this, a new metadata util is added called `setMetadataReceivingNode()`. HRF calls this to change the node that should receive the graph's metadata.

This resolves the duplicate images issue and improves perf without otherwise changing the user experience.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [X] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [x] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No

## Description

[fix(nodes): bump version of nodes post-pydantic v2](5cb3fdb64c)

This was not done, despite new metadata fields being added to many

nodes.

[feat(ui): add update node functionality](3f6e8e9d6b)

A node in a workflow may be updated in place if its major version matches the template's major version.

If the major versions do not match, the user will need to delete and re-add the node (current behaviour).

The update functionality is not automatic (for now). The logic to update the node is pretty simple, but I want to ensure it works well first before doing it automatically when a workflow is loaded.

- New `Details` tab on Workflow Inspector, displays node title, type, version, and notes

- Button to update the node is displayed on the `Details` tab

- Add hook to determine if a node needs an update, may be updated (i.e. major versions match), and the callback to update the node in state

- Remove the notes modal from the little info icon

- Modularize the node building logic
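
The update rule described above can be sketched as follows. This is an illustration in Python only (the UI itself is TypeScript); `UpdateStatus`, `parse_major`, and `node_update_status` are hypothetical names, and the sketch assumes plain `major.minor.patch` version strings. It is not the hook added by this PR.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UpdateStatus:
    needs_update: bool  # the node's version differs from the template's version
    may_update: bool    # the node can be updated in place (major versions match)


def parse_major(version: str) -> int:
    """Return the major component of a 'major.minor.patch' version string."""
    return int(version.split(".")[0])


def node_update_status(node_version: str, template_version: str) -> UpdateStatus:
    """Decide whether a node needs an update and whether it may be updated in place."""
    needs_update = node_version != template_version
    same_major = parse_major(node_version) == parse_major(template_version)
    return UpdateStatus(needs_update=needs_update, may_update=needs_update and same_major)
```

With this rule, a node at `1.0.0` against a template at `1.1.0` may be updated in place, while a node at `1.0.0` against a template at `2.0.0` has to be deleted and re-added.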

## Related Tickets & Documents

Probably exist but not sure where.

## QA Instructions, Screenshots, Recordings

Load an old workflow with nodes that need to be updated. Click on each

node that needs updating and click the update button. Workflow should

work.

## Description

Pin torch==2.1.0 and torchvision==0.16.0.

This prevents an accidental upgrade to unreleased torch 2.1.1, which breaks stuff.

## Related Tickets & Documents

- Related Issue #5065

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [X] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because: it is trivial

## Have you updated all relevant documentation?

- [ ] Yes

- [X] No

## Description

After the switch to the "ruff" linter, I noticed that the stylecheck

workflow is still described as "black" in the action logs. This small PR

should fix the issue.

No breaking changes for us.

Pydantic is working on its own faster JSON parser, `jiter`, and 2.5.0 starts bringing this in. See https://github.com/pydantic/jiter

There are a number of other bugfixes and minor changes in this version of pydantic.

The FastAPI update is mostly internal but let's stay up to date.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [X] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [x] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because:

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [x] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [X] Refactor

## Have you discussed this change with the InvokeAI team?

- [X] Extensively

- [ ] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

As discussed with @psychedelicious and @RyanJDick, this is the first phase of the model manager refactor. In this phase, I've added support for storing model configuration information in the `invokeai.db` SQLite3 database. All the code is separate from the original model manager, and for the time being the frontend still uses the original YAML-based configuration, so the web app still works.

To keep things clean, I've added a new FastAPI route called

`model_records` which can add, update, retrieve and delete model

records.
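
As a rough illustration of the four record operations, here is a minimal sketch using Python's built-in `sqlite3` module. The `ModelRecordStore` class, the two-column table layout, and the example key and config are assumptions made for this sketch; they are not the schema or API added in this PR (only the `model_config` table name comes from the description).

```python
import json
import sqlite3
from typing import Any, Optional


class ModelRecordStore:
    """Tiny CRUD wrapper. The table layout is an assumption, not InvokeAI's schema."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS model_config (id TEXT PRIMARY KEY, config TEXT NOT NULL)"
        )

    def add(self, key: str, config: dict[str, Any]) -> None:
        # The connection context manager commits on success and rolls back on error.
        with self._conn:
            self._conn.execute(
                "INSERT INTO model_config (id, config) VALUES (?, ?)", (key, json.dumps(config))
            )

    def update(self, key: str, config: dict[str, Any]) -> None:
        with self._conn:
            cursor = self._conn.execute(
                "UPDATE model_config SET config = ? WHERE id = ?", (json.dumps(config), key)
            )
        if cursor.rowcount == 0:
            raise KeyError(key)

    def get(self, key: str) -> Optional[dict[str, Any]]:
        row = self._conn.execute(
            "SELECT config FROM model_config WHERE id = ?", (key,)
        ).fetchone()
        return json.loads(row[0]) if row else None

    def delete(self, key: str) -> None:
        with self._conn:
            self._conn.execute("DELETE FROM model_config WHERE id = ?", (key,))


# Usage with an in-memory database and a made-up record.
store = ModelRecordStore(sqlite3.connect(":memory:"))
store.add("example-model", {"base": "sd-1", "path": "models/example.safetensors"})
store.update("example-model", {"base": "sd-1", "path": "models/renamed.safetensors"})
record = store.get("example-model")
```

Storing the configuration as a JSON blob keeps the sketch short; a real schema would likely use dedicated columns for the fields that need to be queried.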

The architecture is described in the first section of

`docs/contributing/MODEL_MANAGER.md`.

## QA Instructions, Screenshots, Recordings

There is a pytest for the model sql storage backend in

`tests/backend/model_manager_2/test_model_storage_sql.py`.

To populate `invokeai.db` with models from your current `models.yaml`,

do the following:

1. Stop the running server

2. Back up `invokeai.db`

3. Run `pip install -e .` to install the command used in the next step.

4. Run `invokeai-migrate-models-to-db`

This will iterate through `models.yaml` and create equivalent database

entries in the `model_config` table of `invokeai.db`. Only the models

named in the yaml file will be migrated, so anything that is autoloaded

will be ignored.

Note that in order to get the `model_records` router to be recognized by

the swagger API, I had to rebuild the frontend. Not sure why this was

necessary and would appreciate a pointer on a less radical way to do

this.

## Added/updated tests?

- [X] Yes

- [ ] No

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [X] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because it's required

## Have you updated all relevant documentation?

- [ ] Yes

- [X] No, not necessary

## Description

InvokeAI depends on PyTorch ~2.1.0, but the installer still installs 2.0.1 first. Once InvokeAI's own dependencies are resolved, that version is removed again and replaced with 2.1.0. This is unnecessary and probably not wanted.

This PR fixes the dependencies in the installation script so that PyTorch ~2.1.0 is installed to begin with.

P.S. Is there any reason why `torchmetrics==0.11.4` is pinned? Does that change with PyTorch 2.1? It seems to work, since we use it already, but it would be nice to know the reason.

Greetings

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [x] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Description

Bit of a cleanup.

[chore(ui): delete unused files](5eaea9dd64)

[feat(ui): add eslint rule react/jsx-no-bind](3a0ec635c9)

This rule enforces no arrow functions in component props. In practice,

it means all functions passed as component props must be wrapped in

`useCallback()`.

This is a performance optimization to prevent unnecessary rerenders.

The rule is added and all violations have been fixed, whew!

[chore(ui): move useCopyImageToClipboard to common/hooks/](f2d26a3a3c)

[chore(ui): move MM components & store to features/](bb52861896)

Somehow they had ended up in `features/ui/tabs` which isn't right

## QA Instructions, Screenshots, Recordings

UI should still work.

It builds successfully, and I tested things out - looks good to me.

Do not use `strict=True` when scaling controlnet conditioning.

When using `guess_mode` (e.g. `more_control` or `more_prompt`), `down_block_res_samples` and `scales` are zipped.

These two objects are of different lengths, so using zip's strict mode raises an error.

In testing, `len(scales) == len(down_block_res_samples) + 1`.

It appears this behaviour is intentional, as the final "extra" item in `scales` is used immediately afterwards.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [X] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because: This is just housekeeping

## Have you updated all relevant documentation?

- [ ] Yes

- [X] No, not needed

## Description

Update Accelerate to the most recent version. No breaking changes.

Tested in production use for one week now.

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [x] Feature

- [x] Bug Fix

- [x] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Description

This PR introduces [`ruff`](https://github.com/astral-sh/ruff) as the

only linter and formatter needed for the project. It is really fast.

Like, alarmingly fast.

It is a drop-in replacement for flake8, isort, black, and much more.

I've configured it similarly to our existing config.

Note: we had enabled a number of flake8 plugins but didn't have the

packages themselves installed, so they did nothing. Ruff used the

existing config, and found a good number of changes needed to adhere to

those flake8 plugins. I've resolved all violations.

### Code changes

- many [flake8-comprehensions](https://docs.astral.sh/ruff/rules/#flake8-comprehensions-c4) violations, almost all auto-fixed

- a good handful of [flake8-bugbear](https://docs.astral.sh/ruff/rules/#flake8-bugbear-b) violations

- handful of [pycodestyle](https://docs.astral.sh/ruff/rules/#pycodestyle-e-w) violations

- some formatting

### Developer Experience

[Ruff integrates with most editors](https://docs.astral.sh/ruff/integrations/):

- Official VSCode extension

- `ruff-lsp` python package allows it to integrate with any LSP-capable editor (vim, emacs, etc)

- Can be configured as an external tool in PyCharm

### Github Actions

I've updated the `style-checks` action to use ruff, and deleted the

`pyflakes` action.

## Related Tickets & Documents

- Closes #5066

## QA Instructions, Screenshots, Recordings

Have a poke around, and run the app. There were some logic changes but

it was all pretty straightforward.

~~Not sure how to best test the changed github action.~~ Looks like it

just used the action from this PR, that's kinda unexpected but OK.

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

* adding VAE recall when using all parameters

* adding VAE to the RecallParameters tab in ImageMetadataActions

* checking for nil vae and casting to null if undefined

* adding default VAE to recall actions list if VAE is nullish

* fix(ui): use `lodash-es` for tree-shakeable imports

---------

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

* working

* added selector for method

* refactoring graph

* added esrgan method

* fixing yarn build

* add tooltips

* a conjunction

* rephrase

* removed manual sliders, set HRF to calculate dimensions automatically to match 512^2 pixels

* working

* working

* working

* fixed tooltip

* add hrf to use all parameters

* adding hrf method to parameters

* working on parameter recall

* working on parameter recall

* cleaning

* fix(ui): fix unnecessary casts in addHrfToGraph

* chore(ui): use camelCase in addHrfToGraph

* fix(ui): do not add HRF metadata unless HRF is added to graph

* fix(ui): remove unused imports in addHrfToGraph

* feat(ui): do not hide HRF params when disabled, only disable them

* fix(ui): remove unused vars in addHrfToGraph

* feat(ui): default HRF str to 0.35, method ESRGAN

* fix(ui): use isValidBoolean to check hrfEnabled param

* fix(nodes): update CoreMetadataInvocation fields for HRF

* feat(ui): set hrf strength default to 0.45

* fix(ui): set default hrf strength in configSlice

* feat(ui): use translations for HRF features

---------

Co-authored-by: psychedelicious <4822129+psychedelicious@users.noreply.github.com>

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [X] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes, with @blessedcoolant

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [ ] No

## Description

This PR updates Transformers to the most recent version and sets a value for the `pad_to_multiple_of` parameter of `text_encoder.resize_token_embeddings`, which was introduced with https://github.com/huggingface/transformers/pull/25088 in Transformers 4.32.0.

According to the [Nvidia Documentation](https://docs.nvidia.com/deeplearning/performance/dl-performance-matrix-multiplication/index.html#requirements-tc), performance is better when the equivalent matrix dimensions M, N, and K are aligned to multiples of 8 elements (or 64 elements on A100) for FP16.

This fixes the following warning, which was popping up before every invocation starting with Transformers 4.32.0:

`You are resizing the embedding layer without providing a pad_to_multiple_of parameter. This means that the new embedding dimension will be None. This might induce some performance reduction as Tensor Cores will not be available. For more details about this, or help on choosing the correct value for resizing, refer to this guide: https://docs.nvidia.com/deeplearning/performance/dl-performance-matrix-multiplication/index.html#requirements-tc`
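
For illustration, the rounding implied by a `pad_to_multiple_of` setting can be sketched as below. `padded_size` is a hypothetical helper and the token counts are made-up examples; this is only the round-up arithmetic, not the Transformers implementation.

```python
def padded_size(num_tokens: int, pad_to_multiple_of: int) -> int:
    """Round num_tokens up to the nearest multiple of pad_to_multiple_of."""
    if pad_to_multiple_of <= 0:
        raise ValueError("pad_to_multiple_of must be a positive integer")
    # Ceiling division written with integer arithmetic only.
    return -(-num_tokens // pad_to_multiple_of) * pad_to_multiple_of


# A size that is already aligned stays unchanged.
aligned = padded_size(49408, 8)

# Adding a single extra token (for example, one embedding trigger) breaks alignment,
# so the size is rounded up to the next multiple of 8.
rounded = padded_size(49409, 8)
```

The padded rows are unused entries in the embedding matrix; the trade-off is a few extra rows of memory in exchange for dimensions that match the alignment recommended in the guide linked above.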

This is my first "real" fix PR, so I hope this is fine. Please inform me

if there is anything wrong with this. I am glad to help.

Have a nice day and thank you!

## Related Tickets & Documents

- Related Issue:

https://github.com/huggingface/transformers/issues/26303

- Related Discord discussion:

https://discord.com/channels/1020123559063990373/1154152783579197571

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [X] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [x] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

We have a number of shared classes, objects, and functions that are used in multiple places. This causes circular import issues.

This commit creates a new `app/shared/` module to hold these shared classes, objects, and functions.

Initially, only `FreeUConfig` and `FieldDescriptions` are moved here. This resolves a circular import issue with custom nodes.

Other shared classes, objects, and functions will be moved here in future commits.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes: @psychedelicious told me to do this :)

- [ ] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [ ] No

## Description

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

I'm not sure this is the correct way of handling things, but changing this string to `==0.0.20` fixes the xformers install for me, and maybe it will for others too. Apologies if this PR is not done properly.

Please see [this thread](https://github.com/facebookresearch/xformers/issues/740); it describes the issue I had when trying to install InvokeAI via the Automatic, Manual, and StableMatrix methods.

With ~=0.0.19 (0.0.22):

```
(InvokeAI) pip install torch torchvision xformers~=0.0.19
Collecting torch
Obtaining dependency information for torch from edce54779f/torch-2.1.0-cp311-cp311-win_amd64.whl.metadata
Using cached torch-2.1.0-cp311-cp311-win_amd64.whl.metadata (25 kB)
Collecting torchvision
Obtaining dependency information for torchvision from ab6f42af83/torchvision-0.16.0-cp311-cp311-win_amd64.whl.metadata
Using cached torchvision-0.16.0-cp311-cp311-win_amd64.whl.metadata (6.6 kB)
Collecting xformers
Using cached xformers-0.0.22.post3.tar.gz (3.9 MB)
Installing build dependencies ... done
Getting requirements to build wheel ... error
error: subprocess-exited-with-error
× Getting requirements to build wheel did not run successfully.
│ exit code: 1
╰─> [20 lines of output]
Traceback (most recent call last):
File "C:\Users\Drun\invokeai\.venv\Lib\site-packages\pip\_vendor\pyproject_hooks\_in_process\_in_process.py", line 353, in <module>
main()
File "C:\Users\Drun\invokeai\.venv\Lib\site-packages\pip\_vendor\pyproject_hooks\_in_process\_in_process.py", line 335, in main
json_out['return_val'] = hook(**hook_input['kwargs'])
^^^^^^^^^^^^^^^^^^^^^^^^^^^^
File "C:\Users\Drun\invokeai\.venv\Lib\site-packages\pip\_vendor\pyproject_hooks\_in_process\_in_process.py", line 118, in get_requires_for_build_wheel
return hook(config_settings)
^^^^^^^^^^^^^^^^^^^^^
File "C:\Users\Drun\AppData\Local\Temp\pip-build-env-rmhvraqj\overlay\Lib\site-packages\setuptools\build_meta.py", line 355, in get_requires_for_build_wheel
return self._get_build_requires(config_settings, requirements=['wheel'])
^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
File "C:\Users\Drun\AppData\Local\Temp\pip-build-env-rmhvraqj\overlay\Lib\site-packages\setuptools\build_meta.py", line 325, in _get_build_requires
self.run_setup()
File "C:\Users\Drun\AppData\Local\Temp\pip-build-env-rmhvraqj\overlay\Lib\site-packages\setuptools\build_meta.py", line 507, in run_setup
super(_BuildMetaLegacyBackend, self).run_setup(setup_script=setup_script)
File "C:\Users\Drun\AppData\Local\Temp\pip-build-env-rmhvraqj\overlay\Lib\site-packages\setuptools\build_meta.py", line 341, in run_setup
exec(code, locals())
File "<string>", line 23, in <module>
ModuleNotFoundError: No module named 'torch'
```

With 0.0.20:

```
(InvokeAI) pip install torch torchvision xformers==0.0.20
Collecting torch
Obtaining dependency information for torch from edce54779f/torch-2.1.0-cp311-cp311-win_amd64.whl.metadata
Using cached torch-2.1.0-cp311-cp311-win_amd64.whl.metadata (25 kB)
Collecting torchvision
Obtaining dependency information for torchvision from ab6f42af83/torchvision-0.16.0-cp311-cp311-win_amd64.whl.metadata
Using cached torchvision-0.16.0-cp311-cp311-win_amd64.whl.metadata (6.6 kB)
Collecting xformers==0.0.20
Obtaining dependency information for xformers==0.0.20 from d4a42f582a/xformers-0.0.20-cp311-cp311-win_amd64.whl.metadata
Using cached xformers-0.0.20-cp311-cp311-win_amd64.whl.metadata (1.1 kB)
Collecting numpy (from xformers==0.0.20)
Obtaining dependency information for numpy from 3f826c6d15/numpy-1.26.0-cp311-cp311-win_amd64.whl.metadata
Using cached numpy-1.26.0-cp311-cp311-win_amd64.whl.metadata (61 kB)
Collecting pyre-extensions==0.0.29 (from xformers==0.0.20)
Using cached pyre_extensions-0.0.29-py3-none-any.whl (12 kB)
Collecting torch
Using cached torch-2.0.1-cp311-cp311-win_amd64.whl (172.3 MB)
Collecting filelock (from torch)
Obtaining dependency information for filelock from 97afbafd9d/filelock-3.12.4-py3-none-any.whl.metadata
Using cached filelock-3.12.4-py3-none-any.whl.metadata (2.8 kB)
Requirement already satisfied: typing-extensions in c:\users\drun\invokeai\.venv\lib\site-packages (from torch) (4.8.0)
Requirement already satisfied: sympy in c:\users\drun\invokeai\.venv\lib\site-packages (from torch) (1.12)
Collecting networkx (from torch)
Using cached networkx-3.1-py3-none-any.whl (2.1 MB)
Collecting jinja2 (from torch)
Using cached Jinja2-3.1.2-py3-none-any.whl (133 kB)
Collecting typing-inspect (from pyre-extensions==0.0.29->xformers==0.0.20)
Obtaining dependency information for typing-inspect from 107a22063b/typing_inspect-0.9.0-py3-none-any.whl.metadata
Using cached typing_inspect-0.9.0-py3-none-any.whl.metadata (1.5 kB)
Collecting requests (from torchvision)
Obtaining dependency information for requests from 0e2d847013/requests-2.31.0-py3-none-any.whl.metadata
Using cached requests-2.31.0-py3-none-any.whl.metadata (4.6 kB)
INFO: pip is looking at multiple versions of torchvision to determine which version is compatible with other requirements. This could take a while.
Collecting torchvision
Using cached torchvision-0.15.2-cp311-cp311-win_amd64.whl (1.2 MB)
Collecting pillow!=8.3.*,>=5.3.0 (from torchvision)
Obtaining dependency information for pillow!=8.3.*,>=5.3.0 from debe992677/Pillow-10.0.1-cp311-cp311-win_amd64.whl.metadata
Using cached Pillow-10.0.1-cp311-cp311-win_amd64.whl.metadata (9.6 kB)
Collecting MarkupSafe>=2.0 (from jinja2->torch)
Obtaining dependency information for MarkupSafe>=2.0 from 08b85bc194/MarkupSafe-2.1.3-cp311-cp311-win_amd64.whl.metadata
Using cached MarkupSafe-2.1.3-cp311-cp311-win_amd64.whl.metadata (3.1 kB)
Collecting charset-normalizer<4,>=2 (from requests->torchvision)
Obtaining dependency information for charset-normalizer<4,>=2 from 50028bbb26/charset_normalizer-3.3.0-cp311-cp311-win_amd64.whl.metadata
Using cached charset_normalizer-3.3.0-cp311-cp311-win_amd64.whl.metadata (33 kB)
Collecting idna<4,>=2.5 (from requests->torchvision)
Using cached idna-3.4-py3-none-any.whl (61 kB)
Collecting urllib3<3,>=1.21.1 (from requests->torchvision)
Obtaining dependency information for urllib3<3,>=1.21.1 from 9957270221/urllib3-2.0.6-py3-none-any.whl.metadata
Using cached urllib3-2.0.6-py3-none-any.whl.metadata (6.6 kB)
Collecting certifi>=2017.4.17 (from requests->torchvision)
Obtaining dependency information for certifi>=2017.4.17 from 2234eab223/certifi-2023.7.22-py3-none-any.whl.metadata
Using cached certifi-2023.7.22-py3-none-any.whl.metadata (2.2 kB)
Requirement already satisfied: mpmath>=0.19 in c:\users\drun\invokeai\.venv\lib\site-packages (from sympy->torch) (1.3.0)
Collecting mypy-extensions>=0.3.0 (from typing-inspect->pyre-extensions==0.0.29->xformers==0.0.20)
Using cached mypy_extensions-1.0.0-py3-none-any.whl (4.7 kB)
Using cached xformers-0.0.20-cp311-cp311-win_amd64.whl (97.6 MB)
Using cached Pillow-10.0.1-cp311-cp311-win_amd64.whl (2.5 MB)
Using cached filelock-3.12.4-py3-none-any.whl (11 kB)
Using cached numpy-1.26.0-cp311-cp311-win_amd64.whl (15.8 MB)
Using cached requests-2.31.0-py3-none-any.whl (62 kB)
Using cached certifi-2023.7.22-py3-none-any.whl (158 kB)
Using cached charset_normalizer-3.3.0-cp311-cp311-win_amd64.whl (97 kB)
Using cached MarkupSafe-2.1.3-cp311-cp311-win_amd64.whl (17 kB)
Using cached urllib3-2.0.6-py3-none-any.whl (123 kB)
Using cached typing_inspect-0.9.0-py3-none-any.whl (8.8 kB)
Installing collected packages: urllib3, pillow, numpy, networkx, mypy-extensions, MarkupSafe, idna, filelock, charset-normalizer, certifi, typing-inspect, requests, jinja2, torch, pyre-extensions, xformers, torchvision
Successfully installed MarkupSafe-2.1.3 certifi-2023.7.22 charset-normalizer-3.3.0 filelock-3.12.4 idna-3.4 jinja2-3.1.2 mypy-extensions-1.0.0 networkx-3.1 numpy-1.26.0 pillow-10.0.1 pyre-extensions-0.0.29 requests-2.31.0 torch-2.0.1 torchvision-0.15.2 typing-inspect-0.9.0 urllib3-2.0.6 xformers-0.0.20
```
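
For context on why `~=0.0.19` resolved to a 0.0.22 release in the first log: under PEP 440, the compatible-release operator `~=0.0.19` is equivalent to `>=0.0.19, ==0.0.*`, so any later `0.0.x` release qualifies. The sketch below approximates that rule for purely numeric versions; `release_tuple` and `matches_compatible_release` are hypothetical helpers, and pre-, post-, and dev-release tags (such as the `.post3` suffix seen above) are deliberately not handled.

```python
def release_tuple(version: str) -> tuple[int, ...]:
    """Split a purely numeric version such as '0.0.22' into (0, 0, 22)."""
    return tuple(int(part) for part in version.split("."))


def matches_compatible_release(candidate: str, spec_version: str) -> bool:
    """Approximate `~=spec_version` for numeric versions only (an illustrative sketch)."""
    spec = release_tuple(spec_version)
    cand = release_tuple(candidate)
    if len(spec) < 2:
        raise ValueError("~= requires at least two release segments")
    prefix = spec[:-1]  # every segment except the last must match exactly
    return cand >= spec and cand[: len(prefix)] == prefix


# ~=0.0.19 admits both 0.0.20 and 0.0.22, which is why pip picked the newest one.
allows_0_0_20 = matches_compatible_release("0.0.20", "0.0.19")
allows_0_0_22 = matches_compatible_release("0.0.22", "0.0.19")
allows_0_1_0 = matches_compatible_release("0.1.0", "0.0.19")
```

Pinning with `==0.0.20` removes that freedom, so pip installs exactly the version for which a prebuilt wheel was found in the second log.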

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [x] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because: I'm no-brainer. It fixed issue for me, so I did PR.

Who knows?

## Technical details:

Windows 11, Standalone clean and freshly-installed Python 3.11

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because:

## Have you updated all relevant documentation?

- [ ] Yes

- [X] No

## Description

Removing LowRA from the initial models as it's been deleted from

CivitAI.

## Related Tickets & Documents

https://discord.com/channels/1020123559063990373/1168415065205112872

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [x] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [x] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [x] Yes

- [ ] No

## Description

Improve LoRA patching speed with the following changes:

- Calculate LoRA layer weights on the same device as the target model.

Prior to this change, weights were always calculated on the CPU. If the

target model is on the GPU, this significantly improves performance.

- Move models to their target devices _before_ applying LoRA patches.

- Improve the ordering of Tensor copy / cast operations.

## QA Instructions, Screenshots, Recordings

Tests:

- [x] Tested with a CUDA GPU; saw savings of ~10 seconds with 1 LoRA applied

to an SDXL model.

- [x] No regression in CPU-only environment

- [ ] No regression (and possible improvement?) on Mac with MPS.

- [x] Weights get restored correctly after using a LoRA

- [x] Stacking multiple LoRAs

Please hammer away with a variety of LoRAs in case there is some edge

case that I've missed.
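
For anyone reviewing the "weights get restored" and "stacking multiple LoRAs" checks above, here is a minimal pure-Python sketch of the patch-and-restore idea. It is not the code in this PR: `lora_delta`, `apply_loras`, and the nested-list "tensors" are hypothetical stand-ins, and device placement (the actual optimization here) is not modeled at all. It only assumes the usual LoRA update, where the delta is `scale * (up @ down)`.

```python
from contextlib import contextmanager


def lora_delta(up, down, scale):
    """Return scale * (up @ down) for small nested-list matrices."""
    rows, inner, cols = len(up), len(down), len(down[0])
    return [
        [scale * sum(up[i][k] * down[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


@contextmanager
def apply_loras(weights, loras):
    """Temporarily add each LoRA's delta to weights[name]; restore on exit.

    `weights` maps layer name -> matrix; each LoRA maps layer name -> (up, down, scale).
    Both layouts are assumptions made for this sketch.
    """
    originals = {}
    try:
        for lora in loras:
            for name, (up, down, scale) in lora.items():
                # Save the pristine weight once, before the first patch touches it.
                if name not in originals:
                    originals[name] = [row[:] for row in weights[name]]
                delta = lora_delta(up, down, scale)
                weights[name] = [
                    [w + d for w, d in zip(w_row, d_row)]
                    for w_row, d_row in zip(weights[name], delta)
                ]
        yield weights
    finally:
        # Put every patched layer back, even if the body raised.
        for name, saved in originals.items():
            weights[name] = saved


weights = {"layer": [[1.0, 0.0], [0.0, 1.0]]}
lora_a = {"layer": ([[1.0], [0.0]], [[0.0, 2.0]], 0.5)}
lora_b = {"layer": ([[0.0], [1.0]], [[4.0, 0.0]], 0.25)}

with apply_loras(weights, [lora_a, lora_b]) as patched:
    stacked = [row[:] for row in patched["layer"]]
```

Saving the original only on first touch is what keeps stacking safe: a second LoRA on the same layer must not overwrite the saved copy with already-patched values.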

## Added/updated tests?

- [x] Yes (Added some minimal unit tests. Definitely would benefit from

more, but it's a step in the right direction.)

- [ ] No

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [X] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

This PR gives the user the option of upgrading to the latest PRE-RELEASE

in addition to the default of updating to the latest release. This will

allow users to conveniently try out the latest pre-release for a while

and then back out to the official release if it doesn't work for them.
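
As a rough illustration of the selection logic, here is a minimal sketch. It is not the updater's actual code: `pick_release`, `SAMPLE_RELEASES`, and the tag names are hypothetical. It assumes a newest-first list of release records carrying the `tag_name`, `prerelease`, and `draft` fields that the GitHub releases API returns.

```python
from typing import Optional


def pick_release(releases: list, allow_prerelease: bool = False) -> Optional[str]:
    """Return the tag of the newest eligible release, or None if there is none.

    Assumes `releases` is ordered newest first.
    """
    for release in releases:
        if release.get("draft"):
            continue  # drafts are never offered
        if release.get("prerelease") and not allow_prerelease:
            continue  # skip pre-releases unless the user opted in
        return release["tag_name"]
    return None


# Hypothetical data for illustration only.
SAMPLE_RELEASES = [
    {"tag_name": "v9.9.0rc1", "prerelease": True, "draft": False},
    {"tag_name": "v9.8.0", "prerelease": False, "draft": False},
]

latest_stable = pick_release(SAMPLE_RELEASES)
latest_any = pick_release(SAMPLE_RELEASES, allow_prerelease=True)
```

Defaulting `allow_prerelease` to `False` keeps the existing behavior for users who never choose the pre-release option, and backing out is just a matter of running the update again without it.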

Added Average Images node

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [X] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

Added a new community node that averages input images.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [X] Yes

- [ ] No, because:

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

This PR prevents the invokeai update script from offering prereleases.

Currently translated at 37.7% (460 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 36.4% (444 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 36.0% (439 of 1217 strings)

Co-authored-by: Alexander Eichhorn <pfannkuchensack@einfach-doof.de>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/de/

Translation: InvokeAI/Web UI

Currently translated at 37.7% (460 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 36.4% (444 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 36.4% (443 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 36.0% (439 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 35.5% (433 of 1217 strings)

Co-authored-by: Fabian Bahl <fabian98@bahl-netz.de>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/de/

Translation: InvokeAI/Web UI

Currently translated at 36.0% (439 of 1217 strings)

translationBot(ui): update translation (German)

Currently translated at 35.5% (433 of 1217 strings)

Co-authored-by: Jaulustus <jaulustus@gmail.com>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/de/

Translation: InvokeAI/Web UI

Currently translated at 56.1% (683 of 1217 strings)

translationBot(ui): update translation (Japanese)

Currently translated at 40.3% (491 of 1217 strings)

Co-authored-by: Gohsuke Shimada <ghoskay@gmail.com>

Translate-URL: https://hosted.weblate.org/projects/invokeai/web-ui/ja/

Translation: InvokeAI/Web UI

Update to Load Video Frame node to reflect changes made in link

locations... a.k.a. fixing broken links.

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [ ] Bug Fix

- [ ] Optimization

- [x] Documentation Update

- [x] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [x] No, because: It's just a doc change to fix links to resources on my

GitHub that the page depends on.

## Have you updated all relevant documentation?

- [? ] Yes

- [ ] No

## Description

Layout and link changes for the Load Video Frame community node.

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

## Added/updated tests?

- [ ] Yes

- [ ] No : _please replace this line with details on why tests

have not been included_

## [optional] Are there any post deployment tasks we need to perform?

## What type of PR is this? (check all applicable)

- [ ] Refactor

- [ ] Feature

- [X] Bug Fix

- [ ] Optimization

- [ ] Documentation Update

- [ ] Community Node Submission

## Have you discussed this change with the InvokeAI team?

- [ ] Yes

- [X] No, because n/a

## Have you updated all relevant documentation?

- [X] Yes

- [ ] No

## Description

The introduction of `BaseModelType.Any` broke the code in the merge

script which relied on sd-1 coming first in the BaseModelType enum. This

assumption has been removed and the code should be less brittle now.
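
To make the failure mode concrete, here is a small sketch of why relying on enum position is fragile. The member names and values below are assumptions for illustration (only `BaseModelType.Any` and an sd-1 member are named above), and the helper functions are hypothetical, not the merge script's code.

```python
from enum import Enum


class BaseModelType(str, Enum):
    # Assumed members; iteration follows definition order.
    Any = "any"
    StableDiffusion1 = "sd-1"
    StableDiffusion2 = "sd-2"
    StableDiffusionXL = "sdxl"


def default_base_brittle() -> BaseModelType:
    # Brittle: "first member" silently changes meaning when a member is added on top.
    return list(BaseModelType)[0]


def default_base_explicit() -> BaseModelType:
    # Robust: name the member you mean.
    return BaseModelType.StableDiffusion1


def selectable_bases() -> list:
    # Robust: filter out the wildcard instead of assuming where it sits.
    return [base for base in BaseModelType if base is not BaseModelType.Any]
```

With `Any` defined first, `default_base_brittle()` returns `BaseModelType.Any` rather than the sd-1 member, which is the kind of breakage described above.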

## Related Tickets & Documents

- Related Issue #

- Closes #

## QA Instructions, Screenshots, Recordings

<!--

Please provide steps on how to test changes, any hardware or